A First Look at Scrapy: Crawling Novels

阿新 • Published: 2018-06-04

I. Introduction

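The previous article covered the basics of the Scrapy framework. This one implements a crawler that downloads the free novels on 第九中文網 (book9.net).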

II. Creating the Scrapy Project

1. Create the project

C:\Users\LENOVO\PycharmProjects\fullstack\book9>scrapy startproject book9

2. Define the fields to scrape (items.py)

    import scrapy


    class Book9Item(scrapy.Item):
        # define the fields for your item here like:
        # name = scrapy.Field()
        book_name = scrapy.Field()        # book title
        chapter_name = scrapy.Field()     # chapter title
        chapter_content = scrapy.Field()  # chapter text

3. Write the spider (spiders/book.py)

Create a book.py file in the spiders directory:

    import scrapy
    from scrapy.http import Request

    from book9.items import Book9Item


    class Book9Spider(scrapy.Spider):
        name = "book9"
        allowed_domains = ['book9.net']
        start_urls = ['https://www.book9.net/xuanhuanxiaoshuo/']

        # Collect the URL of every book on the category page
        def parse(self, response):
            book_urls = response.xpath('//div[@class="r"]/ul/li/span[@class="s2"]/a/@href').extract()
            for book_url in book_urls:
                yield Request(book_url, callback=self.parse_read)

        # Open each book's table of contents
        def parse_read(self, response):
            read_url = response.xpath('//div[@class="box_con"]/div/dl/dd/a/@href').extract()
            for i in read_url:
                # Chapter links are site-relative; resolve them against the page URL
                read_url_path = response.urljoin(i)
                yield Request(read_url_path, callback=self.parse_content)

        # Scrape the book title, chapter title, and chapter text
        def parse_content(self, response):
            # Book title: text of the third link inside the con_top div
            book_name = response.xpath('//div[@class="con_top"]/a/text()').extract()[2]
            # Chapter title
            chapter_name = response.xpath('//div[@class="bookname"]/h1/text()').extract_first()
            # Chapter text: join the fragments, then remove spaces
            chapter_content_2 = response.xpath('//div[@class="box_con"]/div/text()').extract()
            chapter_content_1 = ''.join(chapter_content_2)
            chapter_content = chapter_content_1.replace(' ', '')

            item = Book9Item()
            item['book_name'] = book_name
            item['chapter_name'] = chapter_name
            item['chapter_content'] = chapter_content
            yield item
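Two small steps in the spider are easy to check outside Scrapy: turning the site-relative chapter links into absolute URLs, and joining the extracted text fragments into one cleaned string. The sketch below uses only the standard library. The helper names `absolute_urls` and `clean_text` and the sample hrefs are made up for illustration and are not part of the original project.

```python
from urllib.parse import urljoin

BASE_URL = "https://www.book9.net"  # site root used by the spider


def absolute_urls(base, hrefs):
    """Resolve each (possibly relative) href against the base URL."""
    return [urljoin(base, href) for href in hrefs]


def clean_text(fragments):
    """Join text fragments and drop plain spaces, as parse_content does."""
    return "".join(fragments).replace(" ", "")


if __name__ == "__main__":
    # Hypothetical hrefs, shaped like the links on a chapter index page
    print(absolute_urls(BASE_URL, ["/0_1/100.html", "/0_1/101.html"]))
    print(clean_text(["  First line.", "\n", "  Second line."]))
```

Unlike plain string concatenation, `urljoin` follows URL semantics, so an href that is already absolute is returned unchanged.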

4. Process the data returned by the spider (pipelines.py)

    import os


    class Book9Pipeline(object):
        def process_item(self, item, spider):
            # Create a directory for the book
            file_path = os.path.join("D:\\Temp", item['book_name'])
            print(file_path)
            if not os.path.exists(file_path):
                os.makedirs(file_path)
            # Write each chapter to its own file
            chapter_path = os.path.join(file_path, item['chapter_name'] + '.txt')
            print(chapter_path)
            with open(chapter_path, 'w', encoding='utf-8') as f:
                f.write(item['chapter_content'])
            return item
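Because the pipeline uses the chapter title as a file name, a title containing a character that Windows forbids in file names (such as `?` or `:`) would make `open` fail. Below is a minimal sketch of one way to guard against that, assuming it is acceptable to replace such characters with an underscore. `safe_filename` is a hypothetical helper, not part of the original pipeline.

```python
import re

# Characters Windows does not allow in file names, plus ASCII control characters
_INVALID_CHARS = re.compile(r'[<>:"/\\|?*\x00-\x1f]')


def safe_filename(name, replacement="_"):
    """Replace forbidden characters and trim surrounding dots and spaces."""
    cleaned = _INVALID_CHARS.sub(replacement, name)
    # Trailing dots and spaces are also problematic on Windows
    cleaned = cleaned.strip(" .")
    # Fall back to a placeholder if nothing usable is left
    return cleaned or "untitled"
```

In `process_item`, such a helper could wrap `item['book_name']` and `item['chapter_name']` before they are passed to `os.path.join`.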

5. Configuration (settings.py)

    BOT_NAME = 'book9'

    SPIDER_MODULES = ['book9.spiders']
    NEWSPIDER_MODULE = 'book9.spiders'

    # Obey robots.txt rules
    ROBOTSTXT_OBEY = False

    # Set the request headers
    DEFAULT_REQUEST_HEADERS = {
        "User-Agent": "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Trident/5.0;",
        'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8'
    }

    # Configure item pipelines
    # See https://doc.scrapy.org/en/latest/topics/item-pipeline.html
    ITEM_PIPELINES = {
        'book9.pipelines.Book9Pipeline': 300,
    }

6. Run the spider

C:\Users\LENOVO\PycharmProjects\fullstack\book9>scrapy crawl book9 --nolog

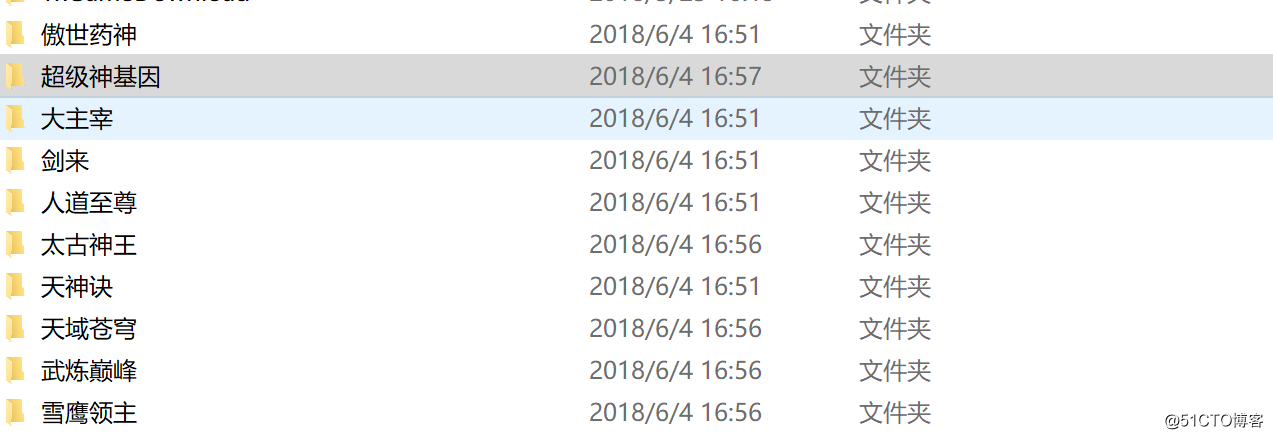

7. Result
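With the pipeline above, each book gets its own directory and each chapter its own .txt file. To check what a crawl produced, a short script can count the chapter files per book. This is a sketch that assumes that layout. `count_chapters` is a hypothetical helper, and the root path should be whatever the pipeline writes to (`D:\Temp` in this article).

```python
from pathlib import Path


def count_chapters(root):
    """Map each book directory under root to the number of .txt chapter files in it."""
    counts = {}
    for book_dir in sorted(Path(root).iterdir()):
        if book_dir.is_dir():
            counts[book_dir.name] = sum(1 for _ in book_dir.glob("*.txt"))
    return counts


if __name__ == "__main__":
    root = Path("D:\\Temp")  # output root used by the pipeline
    if root.is_dir():
        for book, chapters in count_chapters(root).items():
            print(f"{book}: {chapters} chapters")
```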
