小專案(文字資料分析)--新聞分類任務

阿新 • • 發佈:2018-11-04

1.資料

import pandas as pd

import jieba

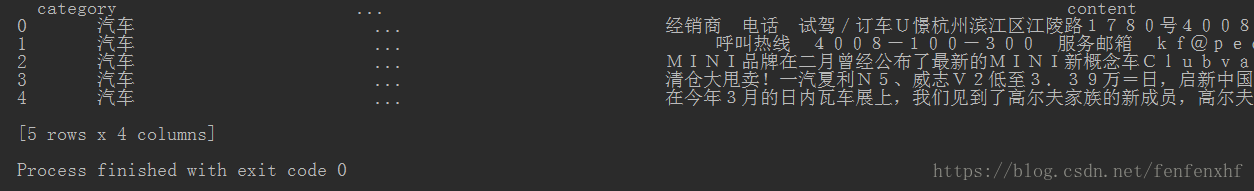

#資料(一小部分的新聞資料)

df_news = pd.read_table('val.txt',names=['category','theme','URL','content'],encoding='utf-8')

df_news = df_news.dropna() #直接丟棄包括NAN的整條資料

print(df_news.head())

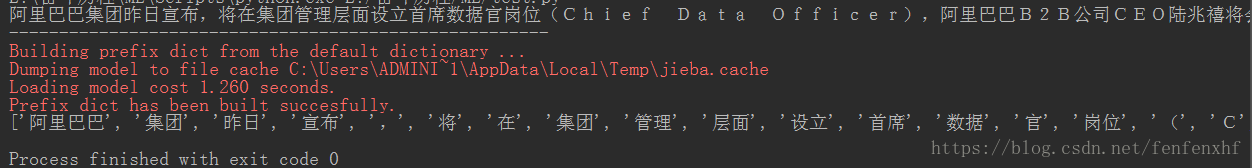

2.分詞:使用jieba庫

import pandas as pd import jieba # #資料(一小部分的新聞資料) df_news = pd.read_table('val.txt',names=['category','theme','URL','content'],encoding='utf-8') df_news = df_news.dropna() #直接丟棄包括NAN的整條資料 #print(df_news.head()) #分詞 content = df_news.content.values.tolist() #因為jieba要列表格式 print(content[1000]) print("------------------------------------------------------") content_S = [] #儲存分完詞之後結果 for line in content: current_segment = jieba.lcut(line) #jieba分詞 if len(current_segment) > 1 and current_segment != "\r\n": content_S.append(current_segment) print(content_S[1000])

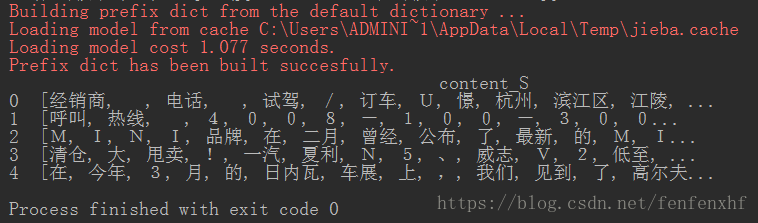

3.#將分完詞的結果轉化成DataFrame格式

import pandas as pd import jieba # #資料(一小部分的新聞資料) df_news = pd.read_table('val.txt',names=['category','theme','URL','content'],encoding='utf-8') df_news = df_news.dropna() #直接丟棄包括NAN的整條資料 #print(df_news.head()) #分詞 content = df_news.content.values.tolist() #因為jieba要列表格式 #print(content[1000]) #print("------------------------------------------------------") content_S = [] #儲存分完詞之後結果 for line in content: current_segment = jieba.lcut(line) #jieba分詞 if len(current_segment) > 1 and current_segment != "\r\n": content_S.append(current_segment) #print(content_S[1000]) #將分完詞的結果轉化成DataFrame格式 df_content = pd.DataFrame({"content_S":content_S}) print(df_content.head())

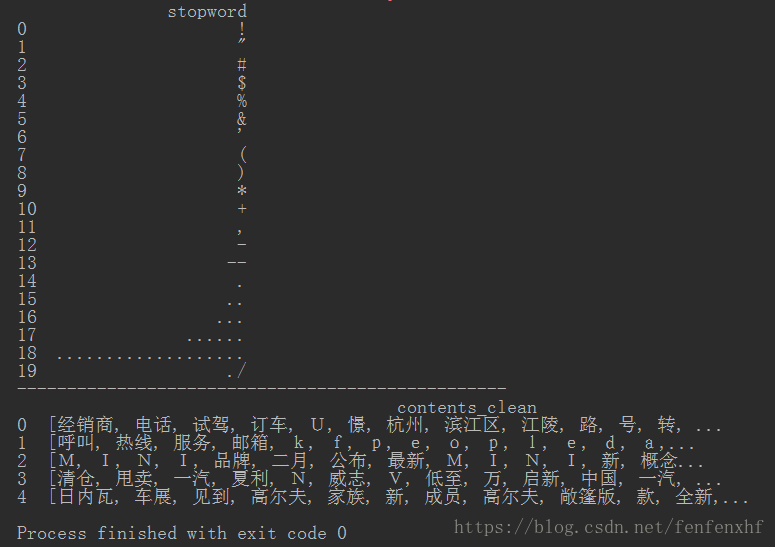

4.清洗資料(上面資料可以看到很亂),用停用詞表清洗停用詞

注:停用詞(語料庫中大量出現但是沒什麼用的詞,比如“的”)

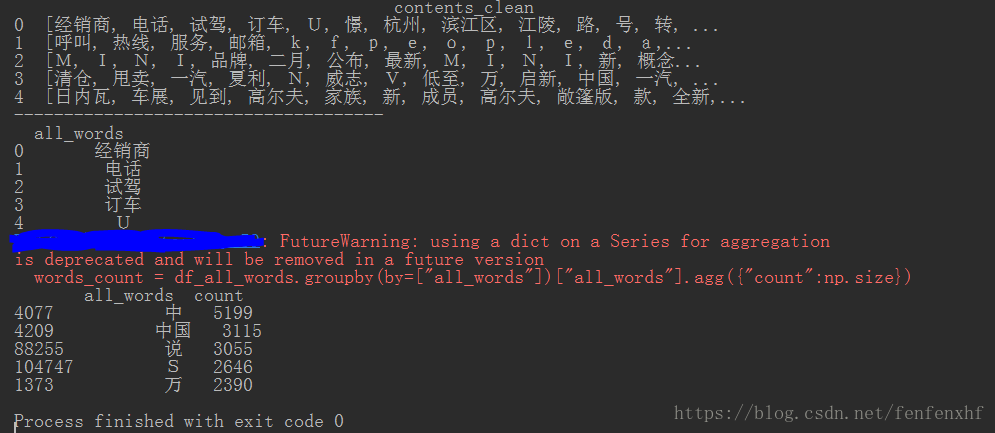

import pandas as pd import jieba #分詞 #去除停用詞函式 def drop_stopwords(contents,stopwords): contents_clean = [] all_words = [] for line in contents: line_clean = [] for word in line: if word in stopwords: continue line_clean.append(word) all_words.append(str(word)) #所有的片語成一個列表 contents_clean.append(line_clean) return contents_clean,all_words #資料(一小部分的新聞資料) df_news = pd.read_table('val.txt',names=['category','theme','URL','content'],encoding='utf-8') df_news = df_news.dropna() #直接丟棄包括NAN的整條資料 #print(df_news.head()) #分詞 content = df_news.content.values.tolist() #因為jieba要列表格式 #print(content[1000]) #print("------------------------------------------------------") content_S = [] #儲存分完詞之後結果 for line in content: current_segment = jieba.lcut(line) #jieba分詞 if len(current_segment) > 1 and current_segment != "\r\n": content_S.append(current_segment) #print(content_S[1000]) #將分完詞的結果轉化成DataFrame格式 df_content = pd.DataFrame({"content_S":content_S}) #print(df_content.head()) #清洗亂的資料,用停用詞表去除停用詞 stopwords = pd.read_csv('stopwords.txt',index_col=False,sep='\t',quoting=3,names=["stopword"],encoding="utf-8") #讀入停用詞 print(stopwords.head(20)) print("-------------------------------------------------") #呼叫去除停用詞函式 contents = df_content.content_S.values.tolist() stopwords = stopwords.stopword.values.tolist() contents_clean,all_words = drop_stopwords(contents,stopwords) #將清洗完的資料結果轉化成DataFrame格式 df_content = pd.DataFrame({"contents_clean":contents_clean}) print(df_content.head())

5.用all_words統計詞頻

import numpy as np

import pandas as pd

import jieba #分詞

#去除停用詞函式

def drop_stopwords(contents,stopwords):

contents_clean = []

all_words = []

for line in contents:

line_clean = []

for word in line:

if word in stopwords:

continue

line_clean.append(word)

all_words.append(str(word))

contents_clean.append(line_clean)

return contents_clean,all_words

#資料(一小部分的新聞資料)

df_news = pd.read_table('val.txt',names=['category','theme','URL','content'],encoding='utf-8')

df_news = df_news.dropna() #直接丟棄包括NAN的整條資料

#print(df_news.head())

#分詞

content = df_news.content.values.tolist() #因為jieba要列表格式

#print(content[1000])

#print("------------------------------------------------------")

content_S = [] #儲存分完詞之後結果

for line in content:

current_segment = jieba.lcut(line) #jieba分詞

if len(current_segment) > 1 and current_segment != "\r\n":

content_S.append(current_segment)

#print(content_S[1000])

#將分完詞的結果轉化成DataFrame格式

df_content = pd.DataFrame({"content_S":content_S})

#print(df_content.head())

#清洗亂的資料,用停用詞表去除停用詞

stopwords = pd.read_csv('stopwords.txt',index_col=False,sep='\t',quoting=3,names=["stopword"],encoding="utf-8") #讀入停用詞

#print(stopwords.head(20))

#print("-------------------------------------------------")

#呼叫去除停用詞函式

contents = df_content.content_S.values.tolist()

stopwords = stopwords.stopword.values.tolist()

contents_clean,all_words = drop_stopwords(contents,stopwords)

#將清洗完的資料結果轉化成DataFrame格式

df_content = pd.DataFrame({"contents_clean":contents_clean})

df_all_words = pd.DataFrame({"all_words":all_words})

print(df_content.head())

print("-------------------------------------")

print(df_all_words.head())

#用儲存的all_word統計一下詞頻

words_count = df_all_words.groupby(by=["all_words"])["all_words"].agg({"count":np.size}) #groupby就是按詞分類

words_count = words_count.reset_index().sort_values(by=["count"],ascending=False) #降序

print(words_count.head())

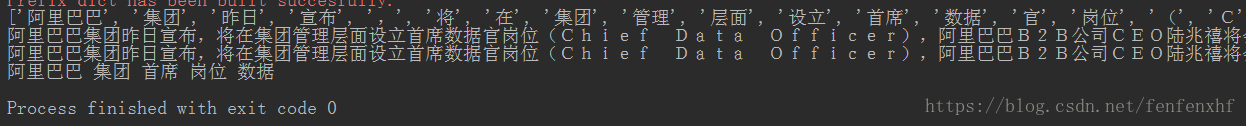

6.#用jieba.analyse提取關鍵詞(TF-IDF)

注:什麼是TF-IDF(詞頻-逆文件頻率)?

TF = 某個詞在文章中出現的次數 / 文章的總詞數

IDF = log (語料庫中文件總數 / 包含該詞的文件數+1)

import numpy as np

import pandas as pd

import jieba #分詞

from jieba import analyse

#資料(一小部分的新聞資料)

df_news = pd.read_table('val.txt',names=['category','theme','URL','content'],encoding='utf-8')

df_news = df_news.dropna() #直接丟棄包括NAN的整條資料

#print(df_news.head())

#分詞

content = df_news.content.values.tolist() #因為jieba要列表格式

#print(content[1000])

#print("------------------------------------------------------")

content_S = [] #儲存分完詞之後結果

for line in content:

current_segment = jieba.lcut(line) #jieba分詞

if len(current_segment) > 1 and current_segment != "\r\n":

content_S.append(current_segment)

print(content_S[1000])

#用jieba.analyse提取關鍵詞

index = 1000

print(df_news["content"][index]) #列印第1000資料的content

content_S_str = "".join(content_S[index]) #將單個詞列表連線在一起

print(content_S_str)

print(" ".join(analyse.extract_tags(content_S_str,topK=5)))

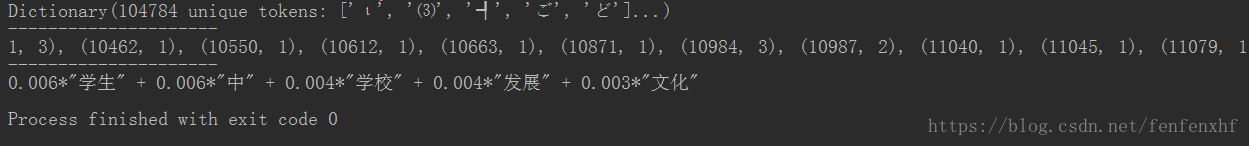

7.LDA:主題模型

#要求格式:list of list格式,是將整個語料庫分詞好的list of list

import numpy as np

import pandas as pd

import jieba #分詞

from jieba import analyse

import gensim #自然語言處理庫

from gensim import corpora,models,similarities

#去除停用詞函式

def drop_stopwords(contents,stopwords):

contents_clean = []

all_words = []

for line in contents:

line_clean = []

for word in line:

if word in stopwords:

continue

line_clean.append(word)

all_words.append(str(word))

contents_clean.append(line_clean)

return contents_clean,all_words

#資料(一小部分的新聞資料)

df_news = pd.read_table('val.txt',names=['category','theme','URL','content'],encoding='utf-8')

df_news = df_news.dropna() #直接丟棄包括NAN的整條資料

#print(df_news.head())

#分詞

content = df_news.content.values.tolist() #因為jieba要列表格式

#print(content[1000])

#print("------------------------------------------------------")

content_S = [] #儲存分完詞之後結果

for line in content:

current_segment = jieba.lcut(line) #jieba分詞

if len(current_segment) > 1 and current_segment != "\r\n":

content_S.append(current_segment)

#print(content_S[1000])

#將分完詞的結果轉化成DataFrame格式

df_content = pd.DataFrame({"content_S":content_S})

#print(df_content.head())

#清洗亂的資料,用停用詞表去除停用詞

stopwords = pd.read_csv('stopwords.txt',index_col=False,sep='\t',quoting=3,names=["stopword"],encoding="utf-8") #讀入停用詞

#print(stopwords.head(20))

#print("-------------------------------------------------")

#呼叫去除停用詞函式

contents = df_content.content_S.values.tolist()

stopwords = stopwords.stopword.values.tolist()

contents_clean,all_words = drop_stopwords(contents,stopwords)

#將清洗完的資料結果轉化成DataFrame格式

df_content = pd.DataFrame({"contents_clean":contents_clean})

df_all_words = pd.DataFrame({"all_words":all_words})

#print(df_content.head())

#print("-------------------------------------")

#print(df_all_words.head())

#LDA:主題模型

#要求格式:list of list格式,是將整個語料庫分詞好的list of list

#做對映,相當於詞袋

dictionary = corpora.Dictionary(contents_clean) #將清洗完的資料生成字典形式

corpus = [dictionary.doc2bow(sentence) for sentence in contents_clean]

print(dictionary)

print("---------------------")

print(corpus)

print("---------------------")

lda = gensim.models.LdaModel(corpus=corpus,id2word=dictionary,num_topics=20)

#列印1號分類結果

print(lda.print_topic(1,topn=5))

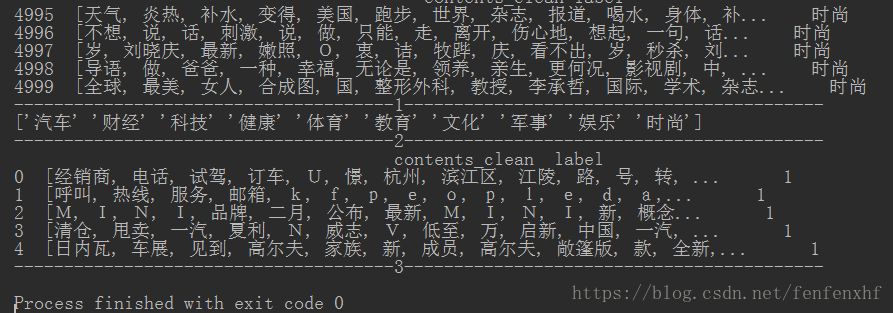

8.新聞分類

第一步:現將資料集的標籤轉換成sklearn可以識別的數值型格式

import numpy as np

import pandas as pd

import jieba #分詞

from jieba import analyse

import gensim #自然語言處理庫

from gensim import corpora,models,similarities

#去除停用詞函式

def drop_stopwords(contents,stopwords):

contents_clean = []

all_words = []

for line in contents:

line_clean = []

for word in line:

if word in stopwords:

continue

line_clean.append(word)

all_words.append(str(word))

contents_clean.append(line_clean)

return contents_clean,all_words

#資料(一小部分的新聞資料)

df_news = pd.read_table('val.txt',names=['category','theme','URL','content'],encoding='utf-8')

df_news = df_news.dropna() #直接丟棄包括NAN的整條資料

#print(df_news.head())

#分詞

content = df_news.content.values.tolist() #因為jieba要列表格式

#print(content[1000])

#print("------------------------------------------------------")

content_S = [] #儲存分完詞之後結果

for line in content:

current_segment = jieba.lcut(line) #jieba分詞

if len(current_segment) > 1 and current_segment != "\r\n":

content_S.append(current_segment)

#print(content_S[1000])

#將分完詞的結果轉化成DataFrame格式

df_content = pd.DataFrame({"content_S":content_S})

#print(df_content.head())

#清洗亂的資料,用停用詞表去除停用詞

stopwords = pd.read_csv('stopwords.txt',index_col=False,sep='\t',quoting=3,names=["stopword"],encoding="utf-8") #讀入停用詞

#print(stopwords.head(20))

#print("-------------------------------------------------")

#呼叫去除停用詞函式

contents = df_content.content_S.values.tolist()

stopwords = stopwords.stopword.values.tolist()

contents_clean,all_words = drop_stopwords(contents,stopwords)

#將清洗完的資料結果轉化成DataFrame格式

df_content = pd.DataFrame({"contents_clean":contents_clean})

df_all_words = pd.DataFrame({"all_words":all_words})

#print(df_content.head())

#print("-------------------------------------")

#print(df_all_words.head())

#新聞分類

#列印DataFreme格式的內容和標籤

df_train = pd.DataFrame({"contents_clean":contents_clean,"label":df_news["category"]})

print(df_train.tail()) #列印最後幾個資料

print("--------------------------------------1------------------------------------------")

print(df_train.label.unique()) #列印標籤的種類

print("--------------------------------------2------------------------------------------")

#因為sklearn只識別數值型標籤,所以將字元型標籤轉換成數值型

label_mappping = {'汽車':1,'財經':2, '科技':3, '健康':4, '體育':5, '教育':6, '文化':7, '軍事':8, '娛樂':9, '時尚':0}

df_train["label"] = df_train["label"].map(label_mappping)

print(df_train.head())

print("--------------------------------------3------------------------------------------")

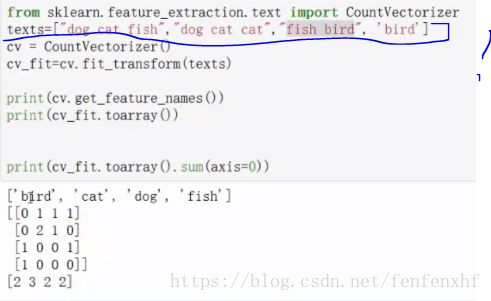

第二步:將清洗過的文章分詞轉化成樸素貝葉斯的矩陣形式[[],[],[],[]…]

如:注意標註的格式,下面需要將資料轉化成這種格式

import numpy as np

import pandas as pd

import jieba #分詞

from jieba import analyse

import gensim #自然語言處理庫

from gensim import corpora,models,similarities

from sklearn.feature_extraction.text import CountVectorizer #詞集轉換成向量

from sklearn.model_selection import train_test_split

#去除停用詞函式

def drop_stopwords(contents,stopwords):

contents_clean = []

all_words = []

for line in contents:

line_clean = []

for word in line:

if word in stopwords:

continue

line_clean.append(word)

all_words.append(str(word))

contents_clean.append(line_clean)

return contents_clean,all_words

def format_transform(x): #x是資料集(訓練集或者測試集)

words =[]

for line_index in range(len(x)):

try:

words.append(" ".join(x[line_index]))

except:

print("資料格式有問題")

return words

def vec_transform(words):

vec = CountVectorizer(analyzer="word",max_features=4000,lowercase=False)

return vec.fit(words)

#資料(一小部分的新聞資料)

df_news = pd.read_table('val.txt',names=['category','theme','URL','content'],encoding='utf-8')

df_news = df_news.dropna() #直接丟棄包括NAN的整條資料

#print(df_news.head())

#分詞

content = df_news.content.values.tolist() #因為jieba要列表格式

#print(content[1000])

#print("------------------------------------------------------")

content_S = [] #儲存分完詞之後結果

for line in content:

current_segment = jieba.lcut(line) #jieba分詞

if len(current_segment) > 1 and current_segment != "\r\n":

content_S.append(current_segment)

#print(content_S[1000])

#將分完詞的結果轉化成DataFrame格式

df_content = pd.DataFrame({"content_S":content_S})

#print(df_content.head())

#清洗亂的資料,用停用詞表去除停用詞

stopwords = pd.read_csv('stopwords.txt',index_col=False,sep='\t',quoting=3,names=["stopword"],encoding="utf-8") #讀入停用詞

#print(stopwords.head(20))

#print("-------------------------------------------------")

#呼叫去除停用詞函式

contents = df_content.content_S.values.tolist()

stopwords = stopwords.stopword.values.tolist()

contents_clean,all_words = drop_stopwords(contents,stopwords)

#將清洗完的資料結果轉化成DataFrame格式

df_content = pd.DataFrame({"contents_clean":contents_clean})

df_all_words = pd.DataFrame({"all_words":all_words})

#print(df_content.head())

#print("-------------------------------------")

#print(df_all_words.head())

#新聞分類

#列印DataFreme格式的內容和標籤

df_train = pd.DataFrame({"contents_clean":contents_clean,"label":df_news["category"]})

#print(df_train.tail()) #列印最後幾個資料

#print("--------------------------------------1------------------------------------------")

#print(df_train.label.unique()) #列印標籤的種類

#print("--------------------------------------2------------------------------------------")

#因為sklearn只識別數值型標籤,所以將字元型標籤轉換成數值型

label_mappping = {'汽車':1,'財經':2, '科技':3, '健康':4, '體育':5, '教育':6, '文化':7, '軍事':8, '娛樂':9, '時尚':0}

df_train["label"] = df_train["label"].map(label_mappping)

#print(df_train.head())

#print("--------------------------------------3------------------------------------------")

#切分資料集

x_train,x_test,y_train,y_test = train_test_split(df_train["contents_clean"].values,df_train["label"].values)

#將清洗過的文章分詞轉化成樸素貝葉斯的矩陣形式[[],[],[],[]...]

#首先將資料的分詞(list of list)轉換成["a b c","a b c",...]這種格式,因為呼叫的包只識別這種格式

#呼叫函式format_transform()函式

words_train = format_transform(x_train)

words_test = format_transform(x_test)

#轉化成向量格式,呼叫函式vec_transform()

vec_trian = vec_transform(words_train)

vec_test = vec_transform(words_test)

print(vec_trian.transform(words_train))

print("------------------------------------------------")

print(vec_test)

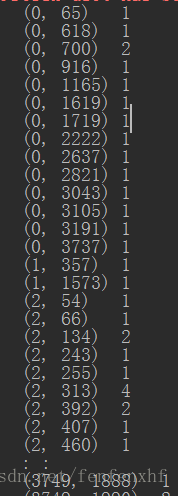

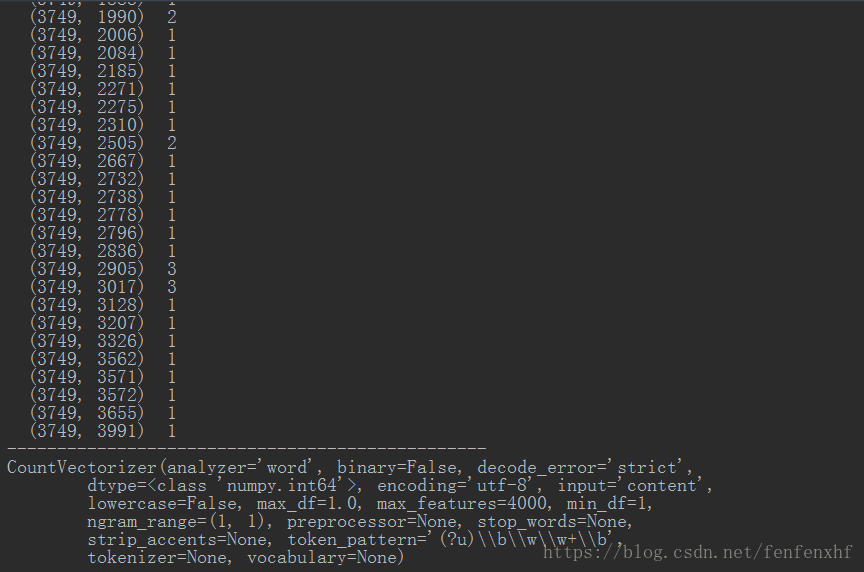

第三步:訓練資料,並給出測試的效果

import numpy as np

import pandas as pd

import jieba #分詞

from jieba import analyse

import gensim #自然語言處理庫

from gensim import corpora,models,similarities

from sklearn.feature_extraction.text import CountVectorizer #詞集轉換成向量

from sklearn.feature_extraction.text import TfidfVectorizer #另一個轉換成向量的庫

from sklearn.model_selection import train_test_split

from sklearn.naive_bayes import MultinomialNB #樸素貝葉斯多分類

#去除停用詞函式

def drop_stopwords(contents,stopwords):

contents_clean = []

all_words = []

for line in contents:

line_clean = []

for word in line:

if word in stopwords:

continue

line_clean.append(word)

all_words.append(str(word))

contents_clean.append(line_clean)

return contents_clean,all_words

def format_transform(x): #x是資料集(訓練集或者測試集)

words =[]

for line_index in range(len(x)):

try:

words.append(" ".join(x[line_index]))

except:

print("資料格式有問題")

return words

def vec_transform(words):

vec = CountVectorizer(analyzer="word",max_features=4000,lowercase=False)

return vec.fit(words)

#資料(一小部分的新聞資料)

df_news = pd.read_table('val.txt',names=['category','theme','URL','content'],encoding='utf-8')

df_news = df_news.dropna() #直接丟棄包括NAN的整條資料

#print(df_news.head())

#分詞

content = df_news.content.values.tolist() #因為jieba要列表格式

#print(content[1000])

#print("------------------------------------------------------")

content_S = [] #儲存分完詞之後結果

for line in content:

current_segment = jieba.lcut(line) #jieba分詞

if len(current_segment) > 1 and current_segment != "\r\n":

content_S.append(current_segment)

#print(content_S[1000])

#將分完詞的結果轉化成DataFrame格式

df_content = pd.DataFrame({"content_S":content_S})

#print(df_content.head())

#清洗亂的資料,用停用詞表去除停用詞

stopwords = pd.read_csv('stopwords.txt',index_col=False,sep='\t',quoting=3,names=["stopword"],encoding="utf-8") #讀入停用詞

#print(stopwords.head(20))

#print("-------------------------------------------------")

#呼叫去除停用詞函式

contents = df_content.content_S.values.tolist()

stopwords = stopwords.stopword.values.tolist()

contents_clean,all_words = drop_stopwords(contents,stopwords)

#將清洗完的資料結果轉化成DataFrame格式

df_content = pd.DataFrame({"contents_clean":contents_clean})

df_all_words = pd.DataFrame({"all_words":all_words})

#print(df_content.head())

#print("-------------------------------------")

#print(df_all_words.head())

#新聞分類

#列印DataFreme格式的內容和標籤

df_train = pd.DataFrame({"contents_clean":contents_clean,"label":df_news["category"]})

#print(df_train.tail()) #列印最後幾個資料

#print("--------------------------------------1------------------------------------------")

#print(df_train.label.unique()) #列印標籤的種類

#print("--------------------------------------2------------------------------------------")

#因為sklearn只識別數值型標籤,所以將字元型標籤轉換成數值型

label_mappping = {'汽車':1,'財經':2, '科技':3, '健康':4, '體育':5, '教育':6, '文化':7, '軍事':8, '娛樂':9, '時尚':0}

df_train["label"] = df_train["label"].map(label_mappping)

#print(df_train.head())

#print("--------------------------------------3------------------------------------------")

#切分資料集

x_train,x_test,y_train,y_test = train_test_split(df_train["contents_clean"].values,df_train["label"].values)

#將清洗過的文章分詞轉化成樸素貝葉斯的矩陣形式[[],[],[],[]...]

#首先將資料的分詞(list of list)轉換成["a b c","a b c",...]這種格式,因為呼叫的包只識別這種格式

#呼叫函式format_transform()函式

words_train = format_transform(x_train)

words_test = format_transform(x_test)

#轉化成向量格式,呼叫函式vec_transform()

vec_trian = vec_transform(words_train)

#vec_test = vec_transform(words_test)

#print(vec_trian.transform(words_train))

#print("------------------------------------------------")

#print(vec_test)

#訓練

nbm = MultinomialNB()

nbm.fit(vec_trian.transform(words_train),y_train)

#測試

score = nbm.score(vec_trian.transform(words_test),y_test)

print(score) #0.8016