《用Python玩轉資料》專案—線性迴歸分析入門之波士頓房價預測(二)

阿新 • • 發佈:2018-11-25

接上一部分,此篇將用tensorflow建立神經網路,對波士頓房價資料進行簡單建模預測。

二、使用tensorflow擬合boston房價datasets

1、資料處理依然利用sklearn來分訓練集和測試集。

2、使用一層隱藏層的簡單網路,試下來用當前這組超引數收斂較快,準確率也可以。

3、啟用函式使用relu來引入非線性因子。

4、原本想使用如下方式來動態更新lr,但是嘗試下來效果不明顯,就索性不要了。

def learning_rate(epoch):

if epoch < 200:

return 0.01

if epoch < 400:

return 0.001

if epoch < 800:

return 1e-4

好了,廢話不多說了,看程式碼如下:

from sklearn import datasets

from sklearn.model_selection import train_test_split

import os

import matplotlib.pyplot as plt

import numpy as np

import tensorflow as tf

dataset = datasets.load_boston()

x = dataset.data

target = dataset.target

y = np.reshape(target,(len(target), 1))

x_train, x_verify, y_train, y_verify = train_test_split(x, y, random_state=1)

y_train = y_train.reshape(-1)

train_data = np.insert(x_train, 0, values=y_train, axis=1)

def r_square(y_verify, y_pred):

var = np.var(y_verify)

mse = np.sum(np.power((y_verify-y_pred.reshape(-1,1)), 2))/len(y_verify)

res = 1 - mse/var

print('var:', var)

print('MSE-ljj:', mse)

print('R2-ljj:', res)

EPOCH = 3000

lr = tf.placeholder(tf.float32, [], 'lr')

x = tf.placeholder(tf.float32, shape=[None, 13], name='input_feature_x')

y = tf.placeholder(tf.float32, shape=[None, 1], name='input_feature_y')

W = tf.Variable(tf.truncated_normal(shape=[13, 10], stddev=0.1))

b = tf.Variable(tf.constant(0., shape=[10]))

W2 = tf.Variable(tf.truncated_normal(shape=[10, 1], stddev=0.1))

b2 = tf.Variable(tf.constant(0., shape=[1]))

with tf.Session() as sess:

hidden1 = tf.nn.relu(tf.add(tf.matmul(x, W), b))

y_predict = tf.add(tf.matmul(hidden1, W2), b2)

loss = tf.reduce_mean(tf.reduce_sum(tf.pow(y-y_predict,2), reduction_indices=[1]))

print(loss.shape)

train = tf.train.AdamOptimizer(learning_rate=lr).minimize(loss)

sess.run(tf.global_variables_initializer())

saver = tf.train.Saver()

W_res = 0

b_res = 0

try:

last_chk_path = tf.train.latest_checkpoint(checkpoint_dir='/home/ljj/PycharmProjects/mooc/train_record')

saver.restore(sess, save_path=last_chk_path)

except:

print('no save file to recover-----------start new train instead--------')

loss_list = []

over_flag = 0

for i in range(EPOCH):

if over_flag ==1:

break

y_t = train_data[:, 0].reshape(-1, 1)

_, W_res, b_res, loss_train = sess.run([train, W, b, loss],

feed_dict={x: train_data[:, 1:],

y: y_t,

lr: 0.01})

checkpoint_file = os.path.join('/home/ljj/PycharmProjects/mooc/train_record', 'checkpoint')

saver.save(sess, checkpoint_file, global_step=i)

loss_list.append(loss_train)

if loss_train < 0.2:

over_flag = 1

break

if i %500 == 0:

print('EPOCH = {:}, train_loss ={:}'.format(i, loss_train))

if i % 500 == 0:

r = loss.eval(session=sess, feed_dict={x: x_verify,

y: y_verify,

lr: 0.01})

print('verify_loss = ',r)

np.random.shuffle(train_data)

plt.plot(range(len(loss_list)-1), loss_list[1:], 'r')

plt.show()

print('final loss = ',loss.eval(session=sess, feed_dict={x: x_verify,

y: y_verify,

lr: 0.01}))

y_pred = sess.run(y_predict, feed_dict={x: x_verify,

y: y_verify,

lr: 0.01})

plt.subplot(2,1,1)

plt.xlim([0,50])

plt.plot(range(len(y_verify)), y_pred,'b--')

plt.plot(range(len(y_verify)), y_verify,'r')

plt.title('validation')

y_ss = sess.run(y_predict, feed_dict={x: x_train,

y: y_train.reshape(-1, 1),

lr: 0.01})

plt.subplot(2,1,2)

plt.xlim([0,50])

plt.plot(range(len(y_train)), y_ss,'r--')

plt.plot(range(len(y_train)), y_train,'b')

plt.title('train')

plt.savefig('tf.png')

plt.show()

r_square(y_verify, y_pred)

訓練了大概3000個epoch後,儲存模型,之後可以多次訓練,但是loss基本收斂了,沒有太大變化。

輸出結果如下:

final loss = 15.117827

var: 99.0584735569471

MSE-ljj: 15.11782691349897

R2-ljj: 0.8473848185757882

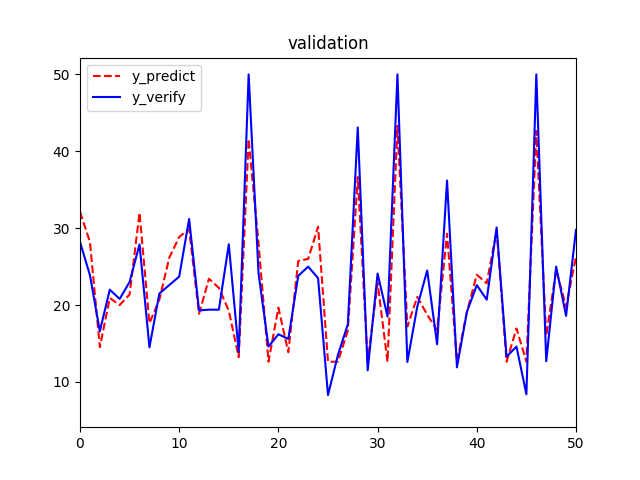

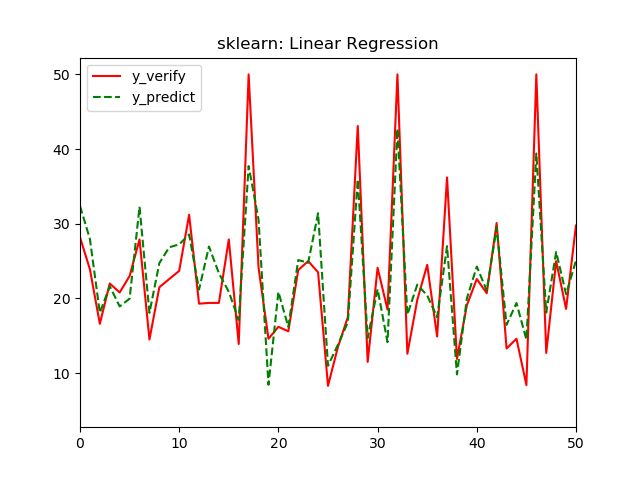

從影象上看,擬合效果也是一般,再拿一個放大版本的validation圖,同樣取前50個樣本,這樣方便和之前的線性迴歸模型對比。

最後我們還是用資料來說明:

tf模型結果中,

R2:0.847 > 0. 779

MSE:15.1 < 21.8

都比sklearn的線性迴歸結果要好。所以,此tf模型對波士頓房價資料的可解釋性更強。

def learning_rate(epoch):

if epoch < 200:

return 0.01

if epoch < 400:

return 0.001

if epoch < 800:

return 1e-4