[AI Tutorial] Getting Started with TensorFlow: Training a Convolutional Network Model to Solve a Classification Problem

Introduction

1. Importing the required packages

import math
import numpy as np
import h5py
import matplotlib.pyplot as plt
import scipy
from PIL import Image
from scipy import ndimage
import tensorflow as tf
from tensorflow.python.framework import ops
from cnn_utils import *

%matplotlib inline
np.random.seed(1)

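2. Preparing the data

This experiment uses the SIGNS dataset, a collection of six hand signs that represent the digits 0 to 5.

Training set: 1080 images (64 by 64 pixels) of signs representing the digits 0 to 5 (180 images per digit).

Test set: 120 images (64 by 64 pixels) of signs representing the digits 0 to 5 (20 images per digit).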

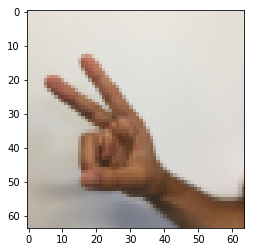

2.1 A data example

The code below displays one labeled image from the dataset. Feel free to change the value of index and re-run it to see other examples.

index = 6
plt.imshow(X_train_orig[index])
print("y = " + str(np.squeeze(Y_train_orig[:, index])))

y = 2

2.2 Checking the data

X_train =

Output:

number of training examples = 1080

number of test examples = 120

X_train shape: (1080, 64, 64, 3)

Y_train shape: (1080, 6)

X_test shape: (120, 64, 64, 3)

Y_test shape: (120, 6)
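The code for this step is cut off in the source, so the following is only a sketch of preprocessing that would be consistent with the shapes printed above: pixel values scaled into [0, 1] and integer labels turned into one-hot rows with 6 columns. The helper names `one_hot` and `scale_pixels` and the toy inputs are assumptions made for illustration, not the tutorial's own code.

```python
def one_hot(labels, num_classes):
    """Return one row per label, with a 1.0 in the label's column."""
    rows = []
    for label in labels:
        row = [0.0] * num_classes
        row[label] = 1.0
        rows.append(row)
    return rows


def scale_pixels(pixels, max_value=255.0):
    """Map raw 0..255 intensities to the range [0, 1]."""
    return [p / max_value for p in pixels]


toy_labels = [2, 0, 5]  # illustrative labels, not taken from the dataset
encoded = one_hot(toy_labels, num_classes=6)
print(encoded[0])
print(scale_pixels([0, 255]))
```

Applied to 1080 labels, an encoding like this gives a label array with 1080 rows and 6 columns, which matches the printed Y_train shape.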

3. Initializing the parameters

# GRADED FUNCTION: initialize_parameters

def initialize_parameters():
    """
    Initializes weight parameters to build a neural network with tensorflow. The shapes are:
        W1 : [4, 4, 3, 8]
        W2 : [2, 2, 8, 16]

    Returns:
    parameters -- a dictionary of tensors containing W1, W2
    """
    tf.set_random_seed(1)  # so that your "random" numbers match ours

    ### START CODE HERE ### (approx. 2 lines of code)
    W1 = tf.get_variable("W1", [4, 4, 3, 8], initializer=tf.contrib.layers.xavier_initializer(seed=0))
    W2 = tf.get_variable("W2", [2, 2, 8, 16], initializer=tf.contrib.layers.xavier_initializer(seed=0))
    ### END CODE HERE ###

    parameters = {"W1": W1,
                  "W2": W2}

    return parameters

tf.reset_default_graph()

with tf.Session() as sess_test:
    parameters = initialize_parameters()
    init = tf.global_variables_initializer()
    sess_test.run(init)
    print("W1 = " + str(parameters["W1"].eval()[1, 1, 1]))
    print("W2 = " + str(parameters["W2"].eval()[1, 1, 1]))

Output:

W1 = [ 0.00131723 0.1417614 -0.04434952 0.09197326 0.14984085 -0.03514394

-0.06847463 0.05245192]

W2 = [-0.08566415 0.17750949 0.11974221 0.16773748 -0.0830943 -0.08058

-0.00577033 -0.14643836 0.24162132 -0.05857408 -0.19055021 0.1345228

-0.22779644 -0.1601823 -0.16117483 -0.10286498]
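To see why the printed weights are small, here is a sketch of the range a Xavier (Glorot) uniform initializer samples from. It assumes the common formula limit = sqrt(6 / (fan_in + fan_out)), with fan-in and fan-out counted over the filter's receptive field. The function name `xavier_uniform_limit` is hypothetical and is not part of TensorFlow.

```python
import math


def xavier_uniform_limit(filter_shape):
    """Bound of the uniform range for a conv filter shaped [f_h, f_w, c_in, c_out]."""
    f_h, f_w, c_in, c_out = filter_shape
    receptive_field = f_h * f_w
    fan_in = receptive_field * c_in
    fan_out = receptive_field * c_out
    return math.sqrt(6.0 / (fan_in + fan_out))


for name, shape in [("W1", [4, 4, 3, 8]), ("W2", [2, 2, 8, 16])]:
    limit = xavier_uniform_limit(shape)
    print(name, "values lie within +/-", round(limit, 4))
```

Under this assumption the bounds are roughly 0.185 for W1 and 0.25 for W2, and every value printed above falls inside them.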

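4. Forward propagation

TensorFlow provides built-in functions that carry out the convolution steps.

- tf.nn.conv2d(X, W1, strides = [1,s,s,1], padding = 'SAME'): given an input X and a set of filters W1, this function convolves the filters of W1 over X. The third argument ([1,s,s,1]) is the stride of the filter along each dimension of the input (m, n_H_prev, n_W_prev, n_C_prev). 'SAME' pads the input with zeros so that, at stride 1, the output keeps the input's height and width.

- tf.nn.max_pool(A, ksize = [1,f,f,1], strides = [1,s,s,1], padding = 'SAME'): given an input A, this function slides a window of size (f, f) with stride (s, s) and takes the maximum over each window.

- tf.nn.relu(Z1): computes the element-wise ReLU activation of Z1, which can have any shape.

- tf.contrib.layers.flatten(P): given an input P, this function flattens each example into a 1-D vector while keeping the batch size. It returns a tensor of shape [batch_size, k].

- tf.contrib.layers.fully_connected(F, num_outputs): given a flattened input F, it returns the output computed by a fully connected layer.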
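As a rough illustration of what the ReLU and max-pooling steps in the list above compute, the following sketch applies them to a single 4x4 channel in plain Python. It assumes the window divides the input evenly, so no padding is involved. The function names are illustrative and are not TensorFlow APIs.

```python
def relu(grid):
    """Replace negative entries with zero, element by element."""
    return [[max(0.0, v) for v in row] for row in grid]


def max_pool(grid, f, s):
    """Slide an f x f window with stride s and keep the maximum of each window."""
    n_h, n_w = len(grid), len(grid[0])
    pooled = []
    for top in range(0, n_h - f + 1, s):
        row = []
        for left in range(0, n_w - f + 1, s):
            window = [grid[top + i][left + j] for i in range(f) for j in range(f)]
            row.append(max(window))
        pooled.append(row)
    return pooled


channel = [
    [-1.0, 2.0, 0.5, -3.0],
    [4.0, -2.0, 1.5, 0.0],
    [0.0, -1.0, -4.0, -2.0],
    [3.0, 1.0, -0.5, 2.5],
]
activated = relu(channel)
pooled = max_pool(activated, f=2, s=2)
print(pooled)  # [[4.0, 1.5], [3.0, 2.5]]
```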

We implement forward propagation in the following order: CONV2D -> RELU -> MAXPOOL -> CONV2D -> RELU -> MAXPOOL -> FLATTEN -> FULLYCONNECTED.
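The layer order can be traced shape by shape. The sketch below assumes the usual rule for 'SAME' padding, where each spatial size becomes ceil(n / stride), and uses the filter counts, pooling windows, and strides from this tutorial's code. The helper `same_output_size` is a hypothetical name.

```python
import math


def same_output_size(n, stride):
    """Spatial size after a 'SAME'-padded convolution or pooling step."""
    return math.ceil(n / stride)


height, width, channels = 64, 64, 3
layers = [
    ("CONV2D, 8 filters, stride 1", 1, 8),
    ("MAXPOOL 8x8, stride 8", 8, None),
    ("CONV2D, 16 filters, stride 1", 1, 16),
    ("MAXPOOL 4x4, stride 4", 4, None),
]
for name, stride, filters in layers:
    height = same_output_size(height, stride)
    width = same_output_size(width, stride)
    if filters is not None:
        channels = filters  # a convolution sets the channel count
    print(name, "->", (height, width, channels))

flattened = height * width * channels
print("FLATTEN ->", flattened, "features per example")
```

The fully connected layer then maps these 64 features to the 6 class scores.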

# GRADED FUNCTION: forward_propagation

def forward_propagation(X, parameters):
    """
    Implements the forward propagation for the model:
    CONV2D -> RELU -> MAXPOOL -> CONV2D -> RELU -> MAXPOOL -> FLATTEN -> FULLYCONNECTED

    Arguments:
    X -- input dataset placeholder, of shape (number of examples, 64, 64, 3)
    parameters -- python dictionary containing your parameters "W1", "W2"
                  the shapes are given in initialize_parameters

    Returns:
    Z3 -- the output of the last LINEAR unit
    """
    # Retrieve the parameters from the dictionary "parameters"
    W1 = parameters['W1']
    W2 = parameters['W2']

    ### START CODE HERE ###
    # CONV2D: stride of 1, padding 'SAME'
    Z1 = tf.nn.conv2d(X, W1, strides=[1, 1, 1, 1], padding='SAME')
    # RELU
    A1 = tf.nn.relu(Z1)
    # MAXPOOL: window 8x8, stride 8, padding 'SAME'
    P1 = tf.nn.max_pool(A1, ksize=[1, 8, 8, 1], strides=[1, 8, 8, 1], padding='SAME')
    # CONV2D: filters W2, stride 1, padding 'SAME'
    Z2 = tf.nn.conv2d(P1, W2, strides=[1, 1, 1, 1], padding='SAME')
    # RELU
    A2 = tf.nn.relu(Z2)
    # MAXPOOL: window 4x4, stride 4, padding 'SAME'
    P2 = tf.nn.max_pool(A2, ksize=[1, 4, 4, 1], strides=[1, 4, 4, 1], padding='SAME')
    # FLATTEN
    P2 = tf.contrib.layers.flatten(P2)
    # FULLY-CONNECTED without non-linear activation function (do not call softmax).
    # 6 neurons in output layer. Hint: one of the arguments should be "activation_fn=None"
    Z3 = tf.contrib.layers.fully_connected(P2, 6, activation_fn=None)
    ### END CODE HERE ###

    return Z3

tf.reset_default_graph()

with tf.Session() as sess:
    np.random.seed(1)
    X, Y = create_placeholders(64, 64, 3, 6)
    parameters = initialize_parameters()
    Z3 = forward_propagation(X, parameters)
    init = tf.global_variables_initializer()
    sess.run(init)
    a = sess.run(Z3, {X: np.random.randn(2, 64, 64, 3), Y: np.random.randn(2, 6)})
    print("Z3 = " + str(a))

Output:

Z3 = [[ 1.4416984 -0.24909666 5.450499 -0.2618962 -0.20669907 1.3654671 ]

[ 1.4070846 -0.02573211 5.08928 -0.48669922 -0.40940708 1.2624859 ]]

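5. Cost function

The loss can be computed with the following function:

- tf.nn.softmax_cross_entropy_with_logits(logits = Z3, labels = Y): computes the softmax cross-entropy loss. The function applies the softmax activation to the logits and computes the resulting loss in a single step.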
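To make the "softmax plus loss in one step" idea concrete, here is a small plain-Python sketch of softmax cross-entropy computed directly from logits, using the standard max-subtraction trick for numerical stability. The function name and the toy logits and labels are illustrative assumptions. This is not TensorFlow's implementation.

```python
import math


def softmax_cross_entropy(logits, labels):
    """Cross-entropy between a label distribution and softmax(logits) for one example."""
    shift = max(logits)  # subtracting the max keeps exp() from overflowing
    log_sum = math.log(sum(math.exp(z - shift) for z in logits))
    log_probs = [z - shift - log_sum for z in logits]
    return -sum(y * lp for y, lp in zip(labels, log_probs))


batch_logits = [[2.0, 1.0, 0.1], [0.0, 0.0, 0.0]]
batch_labels = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
losses = [softmax_cross_entropy(z, y) for z, y in zip(batch_logits, batch_labels)]
cost = sum(losses) / len(losses)  # the mean plays the role of tf.reduce_mean
print(losses, cost)
```

For the second example all logits are equal, so the softmax is uniform and the loss is log(3).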

# GRADED FUNCTION: compute_cost

def compute_cost(Z3, Y):
    """
    Computes the cost

    Arguments:
    Z3 -- output of forward propagation (output of the last LINEAR unit), of shape (number of examples, 6)
    Y -- "true" labels vector placeholder, same shape as Z3

    Returns:
    cost - Tensor of the cost function
    """
    ### START CODE HERE ### (1 line of code)
    cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=Z3, labels=Y))
    ### END CODE HERE ###

    return cost

tf.reset_default_graph()

with tf.Session() as sess:
    np.random.seed(1)
    X, Y = create_placeholders(64, 64, 3, 6)
    parameters = initialize_parameters()
    Z3 = forward_propagation(X, parameters)
    cost = compute_cost(Z3, Y)
    init = tf.global_variables_initializer()
    sess.run(init)
    a = sess.run(cost, {X: np.random.randn(4, 64, 64, 3), Y: np.random.randn(4, 6)})
    print("cost = " + str(a))

Output:

cost = 4.66487

6. Building the model

# GRADED FUNCTION: model

def model(X_train, Y_train, X_test, Y_test, learning_rate=0.009,
          num_epochs=100, minibatch_size=64, print_cost=True):
    """
    Implements a three-layer ConvNet in Tensorflow:
    CONV2D -> RELU -> MAXPOOL -> CONV2D -> RELU -> MAXPOOL -> FLATTEN -> FULLYCONNECTED

    Arguments:
    X_train -- training set, of shape (None, 64, 64, 3)
    Y_train -- training labels, of shape (None, n_y = 6)
    X_test -- test set, of shape (None, 64, 64, 3)
    Y_test -- test labels, of shape (None, n_y = 6)
    learning_rate -- learning rate of the optimization
    num_epochs -- number of epochs of the optimization loop
    minibatch_size -- size of a minibatch
    print_cost -- True to print the cost every 5 epochs

    Returns:
    train_accuracy -- real number, accuracy on the train set (X_train)
    test_accuracy -- real number, testing accuracy on the test set (X_test)
    parameters -- parameters learnt by the model. They can then be used to predict.
    """
    ops.reset_default_graph()  # to be able to rerun the model without overwriting tf variables
    tf.set_random_seed(1)      # to keep results consistent (tensorflow seed)
    seed = 3                   # to keep results consistent (numpy seed)
    (m, n_H0, n_W0, n_C0) = X_train.shape
    n_y = Y_train.shape[1]
    costs = []                 # To keep track of the cost

    # Create Placeholders of the correct shape
    ### START CODE HERE ### (1 line)
    X, Y = create_placeholders(n_H0, n_W0, n_C0, n_y)
    ### END CODE HERE ###

    # Initialize parameters
    ### START CODE HERE ### (1 line)
    parameters = initialize_parameters()
    ### END CODE HERE ###

    # Forward propagation: Build the forward propagation in the tensorflow graph
    ### START CODE HERE ### (1 line)
    Z3 = forward_propagation(X, parameters)
    ### END CODE HERE ###

    # Cost function: Add cost function to tensorflow graph
    ### START CODE HERE ### (1 line)
    cost = compute_cost(Z3, Y)
    ### END CODE HERE ###

    # Backpropagation: Define the tensorflow optimizer. Use an AdamOptimizer that minimizes the cost.
    ### START CODE HERE ### (1 line)
    optimizer = tf.train.AdamOptimizer(learning_rate).minimize(cost)
    ### END CODE HERE ###

    # Initialize all the variables globally
    init = tf.global_variables_initializer()

    # Start the session to compute the tensorflow graph
    with tf.Session() as sess:

        # Run the initialization
        sess.run(init)

        # Do the training loop
        for epoch in range(num_epochs):

            minibatch_cost = 0.
            num_minibatches = int(m / minibatch_size)  # number of minibatches of size minibatch_size in the train set
            seed = seed + 1
            minibatches = random_mini_batches(X_train, Y_train, minibatch_size, seed)

            for minibatch in minibatches:

                # Select a minibatch
                (minibatch_X, minibatch_Y) = minibatch
                # IMPORTANT: The line that runs the graph on a minibatch.
                # Run the session to execute the optimizer and the cost, the feed_dict should contain a minibatch for (X,Y).
                ### START CODE HERE ### (1 line)
                _, temp_cost = sess.run([optimizer, cost], feed_dict={X: minibatch_X, Y: minibatch_Y})
                ### END CODE HERE ###

                minibatch_cost += temp_cost / num_minibatches

            # Print the cost every 5 epochs
            if print_cost and epoch % 5 == 0:
                print("Cost after epoch %i: %f" % (epoch, minibatch_cost))
            # Record the cost every epoch
            if print_cost and epoch % 1 == 0:
                costs.append(minibatch_cost)

        # plot the cost (one point per epoch)
        plt.plot(np.squeeze(costs))
        plt.ylabel('cost')
        plt.xlabel('epochs')
        plt.title("Learning rate =" + str(learning_rate))
        plt.show()

        # Calculate the correct predictions
        predict_op = tf.argmax(Z3, 1)
        correct_prediction = tf.equal(predict_op, tf.argmax(Y, 1))

        # Calculate accuracy on the train and test sets
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))
        print(accuracy)
        train_accuracy = accuracy.eval({X: X_train, Y: Y_train})
        test_accuracy = accuracy.eval({X: X_test, Y: Y_test})
        print("Train Accuracy:", train_accuracy)
        print("Test Accuracy:", test_accuracy)

        return train_accuracy, test_accuracy, parameters

_, _, parameters = model(X_train, Y_train, X_test, Y_test)
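`random_mini_batches` comes from `cnn_utils` and is not shown in this tutorial. The sketch below is one plausible way to build shuffled mini-batches, written over example indices only. It is an assumption for illustration, not the helper's actual implementation, and `mini_batch_indices` is a hypothetical name.

```python
import random


def mini_batch_indices(num_examples, batch_size, seed):
    """Shuffle example indices and cut them into consecutive batches."""
    rng = random.Random(seed)
    indices = list(range(num_examples))
    rng.shuffle(indices)
    return [indices[start:start + batch_size]
            for start in range(0, num_examples, batch_size)]


batches = mini_batch_indices(num_examples=1080, batch_size=64, seed=4)
print(len(batches), "batches; last batch has", len(batches[-1]), "examples")
print("int(m / minibatch_size) =", int(1080 / 64))
```

With 1080 examples and a batch size of 64, keeping the final partial batch yields 17 batches, while `int(m / minibatch_size)` is 16. If the real helper also returns the partial batch, dividing each batch cost by 16 would make the reported epoch cost slightly larger than a true average over batches.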

Output:

Cost after epoch 0: 1.921332

Cost after epoch 5: 1.904156

Cost after epoch 10: 1.904309

Cost after epoch 15: 1.904477

Cost after epoch 20: 1.901876

Cost after epoch 25: 1.784078

Cost after epoch 30: 1.681051

Cost after epoch 35: 1.618207

Cost after epoch 40: 1.597971

Cost after epoch 45: 1.566707

Cost after epoch 50: 1.554487

Cost after epoch 55: 1.502188

Cost after epoch 60: 1.461036

Cost after epoch 65: 1.304479

Cost after epoch 70: 1.201502

Cost after epoch 75: 1.144233

Cost after epoch 80: 1.096785

Cost after epoch 85: 1.081992

Cost after epoch 90: 1.054077

Cost after epoch 95: 1.025999
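The accuracy values are not included in the output above. As a sketch of what the `tf.argmax`, `tf.equal`, and `tf.reduce_mean` chain in `model` computes, here is the same calculation in plain Python on made-up scores and labels. The numbers are illustrative and are not results from this model.

```python
def argmax(values):
    """Index of the largest entry (the first one wins on ties)."""
    return max(range(len(values)), key=lambda i: values[i])


def accuracy(logits, one_hot_labels):
    """Fraction of rows whose highest score matches the labeled class."""
    correct = [argmax(z) == argmax(y) for z, y in zip(logits, one_hot_labels)]
    return sum(correct) / len(correct)


toy_logits = [[1.4, -0.2, 5.4], [0.3, 2.0, -1.0], [0.9, 0.1, 0.2], [-0.5, 0.4, 0.1]]
toy_labels = [[0, 0, 1], [0, 1, 0], [0, 0, 1], [0, 1, 0]]
result = accuracy(toy_logits, toy_labels)
print(result)  # 0.75: three of the four toy rows are classified correctly
```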

Content editor: 陳鑫