Working with the HDFS Cluster API from Eclipse

阿新 • Published: 2018-12-22


Setting up the environment on Windows

1. Configure HADOOP_HOME

Download hadoop-common-2.2.0-bin-master

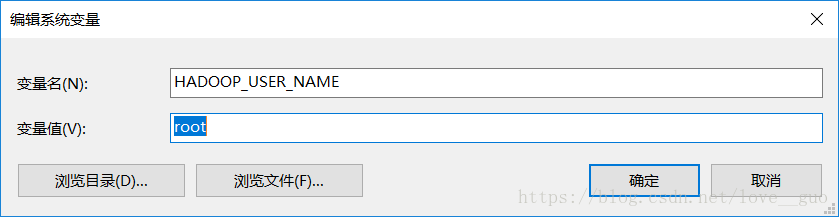

2. Configure HADOOP_USER_NAME

This prevents permission errors when accessing HDFS.

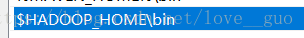

3. Edit Path
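Before moving on to Eclipse, it can help to confirm that the variables from the steps above are actually visible to a Java process. The following is only a minimal sketch: `HadoopEnvCheck` and `findProblems` are hypothetical names, not part of Hadoop, and the check for a `bin` subfolder assumes that the downloaded package unpacks with one.

```java
import java.io.File;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

/** Illustrative helper (not part of Hadoop) that checks the variables set in the steps above. */
final class HadoopEnvCheck {

    /** Returns a list of problems; an empty list means the basics look fine. */
    static List<String> findProblems(Map<String, String> env) {
        List<String> problems = new ArrayList<>();

        String home = env.get("HADOOP_HOME");
        if (home == null || home.trim().isEmpty()) {
            problems.add("HADOOP_HOME is not set");
        } else if (!new File(home, "bin").isDirectory()) {
            // Assumption: the unpacked package contains a bin subfolder.
            problems.add("HADOOP_HOME has no bin folder: " + home);
        }

        String user = env.get("HADOOP_USER_NAME");
        if (user == null || user.trim().isEmpty()) {
            problems.add("HADOOP_USER_NAME is not set");
        }
        return problems;
    }

    public static void main(String[] args) {
        List<String> problems = findProblems(System.getenv());
        if (problems.isEmpty()) {
            System.out.println("Environment looks OK");
        } else {
            problems.forEach(System.out::println);
        }
    }
}
```

A process only sees the environment it was started with, so if Eclipse was already open when the variables were changed, restart it before running the check from inside Eclipse.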

Configuring Eclipse

1. Add the plugin

Place the plugin jar here: Eclipse folder --> dropins folder --> plugins --> hadoop-eclipse-plugin-2.6.0.jar

Download hadoop-eclipse-plugin-2.6.0.jar (Baidu Cloud)

Start Eclipse

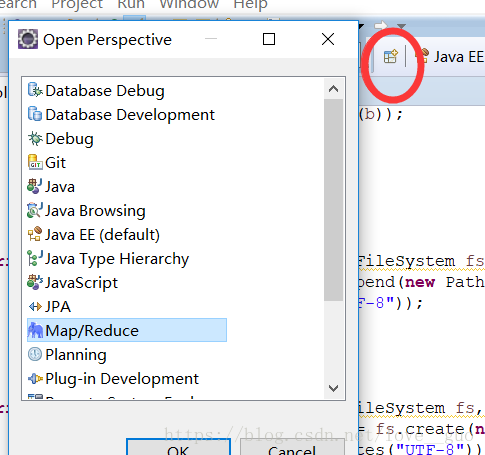

1. Open the Map/Reduce view

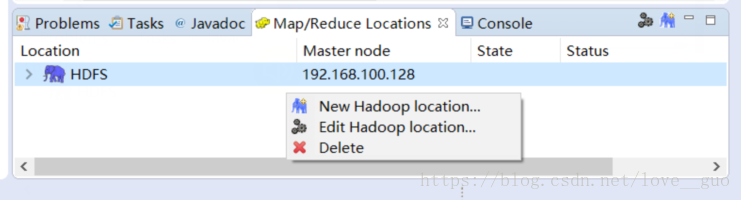

2. Create a connection

1. Right-click in the empty area and choose New Hadoop location

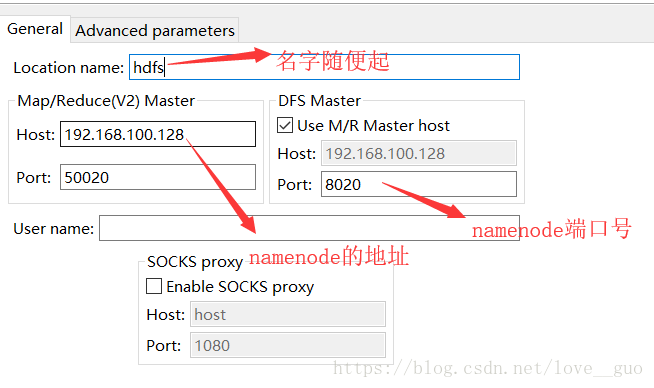

2. Edit the connection settings

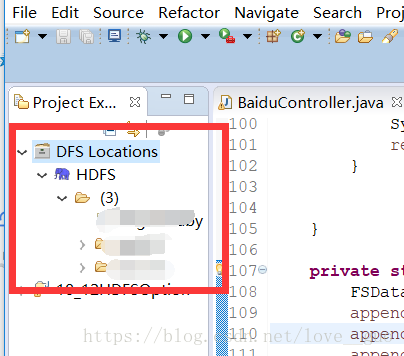

3. Browse the HDFS files

3. Create a Java project

1. Import the jars

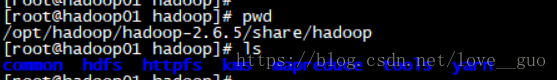

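Import the jars from the common, hdfs, and tools folders under share/hadoop in the Hadoop directory, together with the jars in each folder's lib subfolder.

Remember to add them to the build path.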
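To see which jars that step refers to, the folders can be listed with a few lines of plain Java. This is a rough sketch, assuming the usual share/hadoop/<module> layout with an optional lib subfolder; `HadoopJarLister` is a hypothetical helper, and `createDemoLayout` only builds a fake folder tree so the sketch runs without a Hadoop installation.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

final class HadoopJarLister {

    // Module folders named in the step above.
    static final List<String> MODULES = Arrays.asList("common", "hdfs", "tools");

    /** Collects the jars directly inside share/hadoop/<module> and its lib subfolder. */
    static List<Path> listJars(Path hadoopDir) {
        List<Path> jars = new ArrayList<>();
        for (String module : MODULES) {
            Path moduleDir = hadoopDir.resolve("share").resolve("hadoop").resolve(module);
            jars.addAll(jarsIn(moduleDir));
            jars.addAll(jarsIn(moduleDir.resolve("lib")));
        }
        return jars;
    }

    /** Lists *.jar files in one folder; a missing folder yields an empty list. */
    private static List<Path> jarsIn(Path dir) {
        if (!Files.isDirectory(dir)) {
            return new ArrayList<>();
        }
        try (Stream<Path> entries = Files.list(dir)) {
            return entries
                    .filter(p -> Files.isRegularFile(p))
                    .filter(p -> p.getFileName().toString().endsWith(".jar"))
                    .sorted()
                    .collect(Collectors.toList());
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Builds a tiny fake layout in a temp folder (demo data only). */
    static Path createDemoLayout() {
        try {
            Path root = Files.createTempDirectory("hadoop-demo");
            Path common = root.resolve("share").resolve("hadoop").resolve("common");
            Path lib = Files.createDirectories(common.resolve("lib"));
            Files.createFile(common.resolve("demo-common.jar"));
            Files.createFile(lib.resolve("demo-dependency.jar"));
            Files.createFile(lib.resolve("notes.txt")); // ignored: not a jar
            return root;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        // Pass the Hadoop directory as the first argument, or run without arguments for the demo tree.
        Path root = args.length > 0 ? Paths.get(args[0]) : createDemoLayout();
        listJars(root).forEach(System.out::println);
    }
}
```

Running it against a real Hadoop directory prints the candidate jars, which can then be compared with what ended up on the project's build path.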

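2. Import the XML configuration files

Import hdfs-site.xml and core-site.xml from etc/hadoop in the Hadoop directory. They need to be on the project's classpath so that the Hadoop client can find them.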
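These files matter because `new Configuration(true)` loads core-site.xml from the classpath, and `FileSystem.get(conf)` uses its `fs.defaultFS` entry to decide which file system to connect to. If the file is not found, the client falls back to the local file system. The sketch below parses the same name/value layout with the JDK's XML parser only, to show what such a file holds; `SiteXmlReader` is a hypothetical helper and the NameNode address is made up.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

final class SiteXmlReader {

    /** Reads the name/value pairs from Hadoop-style configuration XML. */
    static Map<String, String> readProperties(String xml) {
        try {
            Element root = DocumentBuilderFactory.newInstance()
                    .newDocumentBuilder()
                    .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)))
                    .getDocumentElement();
            Map<String, String> result = new LinkedHashMap<>();
            NodeList properties = root.getElementsByTagName("property");
            for (int i = 0; i < properties.getLength(); i++) {
                Element property = (Element) properties.item(i);
                String name = property.getElementsByTagName("name").item(0).getTextContent().trim();
                String value = property.getElementsByTagName("value").item(0).getTextContent().trim();
                result.put(name, value);
            }
            return result;
        } catch (Exception e) {
            throw new IllegalArgumentException("Not a readable site XML", e);
        }
    }

    public static void main(String[] args) {
        // Hypothetical NameNode address; the real value comes from your cluster's core-site.xml.
        String coreSite =
                "<configuration>"
              + "<property><name>fs.defaultFS</name><value>hdfs://namenode.example:8020</value></property>"
              + "</configuration>";
        System.out.println(readProperties(coreSite).get("fs.defaultFS"));
    }
}
```

If listing "/" later prints local folders instead of HDFS content, a missing or misplaced core-site.xml is a likely cause.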

3. Create the log4j.properties file

log4j.rootLogger=INFO, stdout

log4j.appender.stdout=org.apache.log4j.ConsoleAppender

log4j.appender.stdout.layout=org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n

log4j.appender.logfile=org.apache.log4j.FileAppender

log4j.appender.logfile.File=target/spring.log

log4j.appender.logfile.layout=org.apache.log4j.PatternLayout

log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%n
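Each setting in log4j.properties has to sit on its own line. The sketch below uses only `java.util.Properties` (no log4j classes) to show what goes wrong when the settings are pasted as a single line: everything after the first `=` becomes the value of one key. `Log4jPropsCheck` is a hypothetical helper name.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

final class Log4jPropsCheck {

    /** Parses properties-file text the same way a .properties file is read. */
    static Properties parse(String text) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(text));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props;
    }

    public static void main(String[] args) {
        String onePerLine =
                "log4j.rootLogger=INFO, stdout\n"
              + "log4j.appender.stdout=org.apache.log4j.ConsoleAppender\n"
              + "log4j.appender.stdout.layout=org.apache.log4j.PatternLayout\n"
              + "log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n\n";
        // The same text collapsed into one line, as can happen when copying from a web page.
        String allOnOneLine = onePerLine.trim().replace('\n', ' ');

        System.out.println("keys, one per line: " + parse(onePerLine).size());
        System.out.println("keys, single line:  " + parse(allOnOneLine).size());
    }
}
```

Note also that the root logger in this configuration lists only `stdout`, so the `logfile` appender is defined but receives nothing unless it is added to `log4j.rootLogger`.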

4. Create the Java file

import java.io.FileNotFoundException;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.BlockLocation;

import org.apache.hadoop.fs.FSDataInputStream;

import org.apache.hadoop.fs.FSDataOutputStream;

import org.apache.hadoop.fs.FileStatus;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.FileUtil;

import org.apache.hadoop.fs.HdfsBlockLocation;

import org.apache.hadoop.fs.Path;

import java.util.List;

public class HDFSTest{

public static void main(String[] args) throws IOException {

// Create the configuration object first; it loads the XML files from the classpath

Configuration conf = new Configuration(true);

// Create the object used to operate on HDFS

FileSystem fs = FileSystem.get(conf);

// List the contents of the file system

List list = listFileSystem(fs,"/");

// Create a directory

createDir(fs,"/test/abc");

// Upload a file

uploadFileToHDFS(fs,"D:\\hadoop\\VMware.txt","/test/abc/");

// Download a file

downLoadFileFromHDFS(fs,"/test/abc/VMware.txt","d:/");

// Delete ...

// Rename

// renameFile(fs,"/test/abc/VMware.txt","/test/abc/Angelababy");

// Move or copy within HDFS

// innerCopyAndMoveFile(fs,conf,"/test/abc/Angelababy","/");

// Create a new empty file

// createNewFile(fs,"/test/abc/hanhong");

// Write to a file

// writeToHDFSFile(fs,"/test/abc/hanhong","hello world");

// Append to a file

// appendToHDFSFile(fs,"/test/abc/hanhong","\nhello world");

// Read the file contents

// readFromHDFSFile(fs,"/test/abc/hanhong");

// Get the location of the data blocks

// getFileLocation(fs,"/Angelababy");

}

private static void getFileLocation(FileSystem fs, String string) throws IOException {

FileStatus fileStatus = fs.getFileStatus(new Path(string));

long len = fileStatus.getLen();

BlockLocation[] fileBlockLocations = fs.getFileBlockLocations(fileStatus, 0, len);

// An empty file has no blocks, so there is nothing to report

if (fileBlockLocations.length == 0) {

return;

}

// Hosts that hold a replica of the first block

String[] hosts = fileBlockLocations[0].getHosts();

for (String string2 : hosts) {

System.out.println(string2);

}

// This cast assumes fs is an HDFS file system; other implementations may return plain BlockLocation objects

HdfsBlockLocation blockLocation = (HdfsBlockLocation)fileBlockLocations[0];

long blockId = blockLocation.getLocatedBlock().getBlock().getBlockId();

System.out.println(blockId);

}

private static void readFromHDFSFile(FileSystem fs, String string) throws IllegalArgumentException, IOException {

// try-with-resources closes the stream even if reading fails

try (FSDataInputStream inputStream = fs.open(new Path(string))) {

// A fixed-size buffer avoids loading the whole file into memory and also handles empty files

byte[] b = new byte[4096];

int read;

while ((read = inputStream.read(b)) != -1) {

// Only the first "read" bytes of the buffer are valid in each pass

System.out.write(b, 0, read);

}

System.out.flush();

}

}

private static void appendToHDFSFile(FileSystem fs, String filePath, String content) throws IllegalArgumentException, IOException {

FSDataOutputStream append = fs.append(new Path(filePath));

append.write(content.getBytes("UTF-8"));

append.flush();

append.close();

}

private static void writeToHDFSFile(FileSystem fs, String filePath, String content) throws IllegalArgumentException, IOException {

FSDataOutputStream outputStream = fs.create(new Path(filePath));

outputStream.write(content.getBytes("UTF-8"));

outputStream.flush();

outputStream.close();

}

private static void createNewFile(FileSystem fs, String string) throws IllegalArgumentException, IOException {

fs.createNewFile(new Path(string));

}

private static void innerCopyAndMoveFile(FileSystem fs, Configuration conf,String src, String dest) throws IOException {

Path srcPath = new Path(src);

Path destPath = new Path(dest);

// Copy within HDFS (deleteSource = false)

// FileUtil.copy(srcPath.getFileSystem(conf), srcPath, destPath.getFileSystem(conf), destPath,false, conf);

// Move within HDFS (deleteSource = true)

FileUtil.copy(srcPath.getFileSystem(conf), srcPath, destPath.getFileSystem(conf), destPath,true, conf);

}

private static void renameFile(FileSystem fs, String src, String dest) throws IOException {

Path srcPath = new Path(src);

Path destPath = new Path(dest);

fs.rename(srcPath, destPath);

}

private static void downLoadFileFromHDFS(FileSystem fs, String src, String dest) throws IOException {

Path srcPath = new Path(src);

Path destPath = new Path(dest);

//copyToLocal

fs.copyToLocalFile(srcPath, destPath);

//moveToLocal

// Download the file and delete the source on HDFS

// fs.copyToLocalFile(true,srcPath, destPath);

}

private static void uploadFileToHDFS(FileSystem fs, String src, String dest) throws IOException {

Path srcPath = new Path(src);

Path destPath = new Path(dest);

//copyFromLocal

fs.copyFromLocalFile(srcPath, destPath);

//moveFromLocal

// Upload the file and delete the local copy (cut and paste)

// fs.copyFromLocalFile(true,srcPath, destPath);

}

private static void createDir(FileSystem fs, String string) throws IllegalArgumentException, IOException {

Path path = new Path(string);

// Caution: an existing directory is deleted recursively before it is recreated

if(fs.exists(path)){

fs.delete(path, true);

}

fs.mkdirs(path);

}

private static List listFileSystem(FileSystem fs, String path) throws FileNotFoundException, IOException {

Path ppath = new Path(path);

FileStatus[] listStatus = fs.listStatus(ppath);

for (FileStatus fileStatus : listStatus) {

System.out.println(fileStatus);

}

return null;

}

}
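Since `FSDataInputStream` is an ordinary `java.io.InputStream`, reading an HDFS file follows the usual chunked-read pattern. The sketch below demonstrates that pattern on an in-memory stream so it runs without a cluster; `StreamReadSketch` and `readAll` are hypothetical names, and with a real cluster the stream would come from `fs.open(...)`.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;

final class StreamReadSketch {

    /** Reads the stream to the end with a fixed-size buffer, closes it, and decodes the bytes as UTF-8. */
    static String readAll(InputStream in, int bufferSize) {
        if (bufferSize <= 0) {
            // A zero-length buffer would make read() return 0 forever.
            throw new IllegalArgumentException("bufferSize must be positive");
        }
        ByteArrayOutputStream collected = new ByteArrayOutputStream();
        byte[] buffer = new byte[bufferSize];
        try (InputStream stream = in) {
            int read;
            while ((read = stream.read(buffer)) != -1) {
                // Only the first `read` bytes are valid; the rest is left over from earlier passes.
                collected.write(buffer, 0, read);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        // Decode once at the end so multi-byte characters split across reads stay intact.
        return new String(collected.toByteArray(), StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        byte[] data = "hello world\nhello world".getBytes(StandardCharsets.UTF_8);
        System.out.println(readAll(new ByteArrayInputStream(data), 4));
    }
}
```

Collecting everything in memory is fine for small test files like the ones used here; for large files it is usually better to process or print each chunk as it arrives.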

Possible problems:

WARN [org.apache.hadoop.util.NativeCodeLoader] - Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

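This warning can be ignored. As the message says, Hadoop falls back to its built-in Java classes, so the operations above still work.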