SIFT Feature Extraction and RANSAC Mismatch Removal with OpenCV 3.0

Development environment: VS2013 + OpenCV 3.0

I. Preparation

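Feature detection and matching for image recognition often relies on OpenCV's SIFT and SURF algorithms, for example through SiftFeatureDetector or SiftDescriptorExtractor. In OpenCV 2 both algorithms are declared in the header included with #include <opencv2/nonfree/features2d.hpp>. In OpenCV 3.0 they were moved to the opencv_contrib repository, so to use them you have to rebuild OpenCV yourself with the opencv_contrib modules enabled.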

For configuring and building OpenCV 3.0 + opencv_contrib on Windows, see:

Note: the opencv and opencv_contrib versions must match, otherwise the build will fail with errors later on.

II. RANSAC Algorithm

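RANSAC (RANdom SAmple Consensus) iteratively estimates the best model parameters from a set of observations that contains outliers. Observations that do not fit the best model are classified as outliers.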
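To make the idea concrete, here is a minimal sketch of RANSAC on the simplest possible model, a 2D line, using only the C++ standard library. It is not OpenCV's implementation: the names `Point2`, `LineModel`, and `ransacLine`, the fixed seed, and the "largest inlier count wins" scoring are all assumptions chosen for illustration.

```cpp
#include <cmath>
#include <cstddef>
#include <random>
#include <vector>

// Hypothetical 2D point type used only in this sketch.
struct Point2 { double x, y; };

// Line a*x + b*y + c = 0 with (a, b) normalised to unit length,
// plus the consensus set that supports it.
struct LineModel {
    double a = 0, b = 0, c = 0;
    std::vector<unsigned char> inlierMask;  // 1 = inlier, 0 = outlier
    std::size_t inlierCount = 0;
};

// Repeatedly fit a line to two random points and keep the line
// that has the most points within `threshold` of it.
LineModel ransacLine(const std::vector<Point2>& pts, int iterations,
                     double threshold, unsigned seed = 42) {
    LineModel best;
    best.inlierMask.assign(pts.size(), 0);
    if (pts.size() < 2) return best;

    std::mt19937 rng(seed);
    std::uniform_int_distribution<std::size_t> pick(0, pts.size() - 1);

    for (int it = 0; it < iterations; ++it) {
        // 1. Draw a minimal sample (two points define a line).
        std::size_t i = pick(rng), j = pick(rng);
        if (i == j) continue;

        // 2. Fit the model to the sample.
        double a = pts[j].y - pts[i].y;
        double b = pts[i].x - pts[j].x;
        double norm = std::hypot(a, b);
        if (norm == 0.0) continue;  // coincident points, no line
        a /= norm;
        b /= norm;
        double c = -(a * pts[i].x + b * pts[i].y);

        // 3. Count the points that agree with the model.
        std::vector<unsigned char> mask(pts.size(), 0);
        std::size_t count = 0;
        for (std::size_t k = 0; k < pts.size(); ++k) {
            double dist = std::fabs(a * pts[k].x + b * pts[k].y + c);
            if (dist < threshold) {
                mask[k] = 1;
                ++count;
            }
        }

        // 4. Keep the model with the largest consensus set.
        if (count > best.inlierCount) {
            best.a = a;
            best.b = b;
            best.c = c;
            best.inlierMask = mask;
            best.inlierCount = count;
        }
    }
    return best;
}
```

The returned `inlierMask` plays the same role as the status vector that OpenCV's RANSAC-based estimators fill in: one entry per input, non-zero for inliers.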

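1. Removing mismatched points with RANSAC takes three steps:

(1) Use matches to align the keypoints of the two images pairwise, and convert their coordinates to float.

(2) Estimate the fundamental matrix with findFundamentalMat, which also fills in RansacStatus.

(3) Use RansacStatus to delete the mismatched points, that is, the points for which RansacStatus[i] == 0.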
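Step (3) can be sketched independently of OpenCV. The helper below, `keepInliers`, is a hypothetical name introduced for illustration; it assumes the status vector has one entry per item and that a non-zero entry marks an inlier, which is how the listing later in this post treats RansacStatus.

```cpp
#include <cstddef>
#include <stdexcept>
#include <vector>

// Return only the items whose status entry is non-zero.
// `status` is expected to have exactly one entry per item.
template <typename T>
std::vector<T> keepInliers(const std::vector<T>& items,
                           const std::vector<unsigned char>& status) {
    if (items.size() != status.size()) {
        throw std::invalid_argument("items and status must have the same length");
    }
    std::vector<T> kept;
    for (std::size_t i = 0; i < items.size(); ++i) {
        if (status[i] != 0) {
            kept.push_back(items[i]);
        }
    }
    return kept;
}
```

Because the helper is a template, the same function could be applied to a vector of matches or a vector of keypoints, as long as both were built in the same order as the status vector.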

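2. Function notes

findFundamentalMat() returns the fundamental matrix, which describes the relationship between the image points of the same 3D scene point in two views.

findHomography() returns the homography (perspective transformation) matrix between two planes.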
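For reference, the two matrices can be characterised by the standard constraints they impose on a pair of corresponding points. The notation is introduced here and does not appear in the code: $\mathbf{x} = (x, y, 1)^{\top}$ is a point in the first image in homogeneous coordinates, $\mathbf{x}'$ is its match in the second image, and $\simeq$ means equality up to a non-zero scale factor.

$$
\mathbf{x}'^{\top} F \, \mathbf{x} = 0, \qquad \mathbf{x}' \simeq H \, \mathbf{x}
$$

The fundamental matrix $F$ (3×3, rank 2) only restricts $\mathbf{x}'$ to a line in the second image, the epipolar line $F\mathbf{x}$, and it applies to general 3D scenes. The homography $H$ (3×3) maps each point directly to its match, but it is exact only when the scene points lie on a plane or the camera purely rotates between the two views.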

3. Advantages and disadvantages

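The advantage is that the parameter estimate is robust: RANSAC can recover accurate model parameters from observations that contain a large share of outliers. The drawback is that there is no upper bound on the number of iterations it may need. If the iteration count is capped, the result may not be the optimal model, and may even be a wrong one. RANSAC yields a trustworthy model only with a certain probability. That probability grows with the number of iterations and approaches 1, but the relationship is not linear.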
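The link between iteration count and success probability can be made explicit with the textbook RANSAC estimate. If $w$ is the fraction of inliers and $n$ the number of points in a minimal sample, the probability that at least one of $k$ samples is outlier-free is $1 - (1 - w^{n})^{k}$, so reaching a target probability $p$ needs $k \ge \log(1 - p) / \log(1 - w^{n})$. The sketch below only evaluates these formulas. The function names are hypothetical, and it assumes samples are drawn independently; it does not describe how OpenCV chooses its iteration count.

```cpp
#include <cmath>
#include <limits>
#include <stdexcept>

// Probability that at least one of k random minimal samples contains
// only inliers, given inlier ratio w and sample size n.
double successProbability(double w, int n, long k) {
    return 1.0 - std::pow(1.0 - std::pow(w, n), static_cast<double>(k));
}

// Smallest k for which successProbability(w, n, k) reaches p.
long requiredIterations(double p, double w, int n) {
    if (!(p > 0.0 && p < 1.0) || !(w > 0.0 && w <= 1.0) || n < 1) {
        throw std::invalid_argument("need 0 < p < 1, 0 < w <= 1, n >= 1");
    }
    const double goodSample = std::pow(w, n);  // one sample is all inliers
    if (goodSample >= 1.0) return 1;           // no outliers at all
    if (goodSample <= 0.0) return std::numeric_limits<long>::max();

    // log1p(-x) computes log(1 - x) accurately for small x.
    const double k = std::ceil(std::log(1.0 - p) / std::log1p(-goodSample));
    if (k >= static_cast<double>(std::numeric_limits<long>::max())) {
        return std::numeric_limits<long>::max();
    }
    return static_cast<long>(k);
}
```

As an illustration, with half of the matches being inliers and an assumed sample size of 8 points, `requiredIterations(0.99, 0.5, 8)` comes out at about 1,177 iterations, and the required count rises steeply as the inlier ratio drops.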

RANSAC can extract only one model from a data set. If the data contain several models, the algorithm cannot find them all.

III. Code Implementation and Results

    #include <iostream>
    #include <vector>
    #include <opencv2/opencv.hpp>
    #include <opencv2/core/core.hpp>
    #include <opencv2/highgui/highgui.hpp>
    #include <opencv2/imgproc/imgproc.hpp>
    #include <opencv2/xfeatures2d.hpp>

    using namespace cv;
    using namespace std;
    using namespace cv::xfeatures2d; // needed to use names such as SiftFeatureDetector without the xfeatures2d:: prefix

    int main()
    {
        // Create SIFT class pointer
        Ptr<Feature2D> f2d = xfeatures2d::SIFT::create();
        //SiftFeatureDetector siftDetector;

        // Load the images
        Mat img_1 = imread("101200.jpg");
        Mat img_2 = imread("101201.jpg");
        if (img_1.empty() || img_2.empty())
        {
            cout << "Reading picture error!" << endl;
            return -1;
        }

        // Detect the keypoints
        double t0 = (double)getTickCount(); // current tick count
        vector<KeyPoint> keypoints_1, keypoints_2;
        f2d->detect(img_1, keypoints_1);
        f2d->detect(img_2, keypoints_2);
        cout << "The keypoints number of img1 is:" << keypoints_1.size() << endl;
        cout << "The keypoints number of img2 is:" << keypoints_2.size() << endl;

        // Calculate descriptors (feature vectors)
        Mat descriptors_1, descriptors_2;
        f2d->compute(img_1, keypoints_1, descriptors_1);
        f2d->compute(img_2, keypoints_2, descriptors_2);
        double freq = getTickFrequency();
        double tt = ((double)getTickCount() - t0) / freq; // elapsed time in seconds
        cout << "Extract SIFT Time:" << tt << "s" << endl;

        // Draw the keypoints
        Mat img_keypoints_1, img_keypoints_2;
        drawKeypoints(img_1, keypoints_1, img_keypoints_1, Scalar::all(-1), DrawMatchesFlags::DEFAULT);
        drawKeypoints(img_2, keypoints_2, img_keypoints_2, Scalar::all(-1), DrawMatchesFlags::DEFAULT);
        //imshow("img_keypoints_1", img_keypoints_1);
        //imshow("img_keypoints_2", img_keypoints_2);

        // Match the descriptor vectors using BFMatcher
        BFMatcher matcher;
        vector<DMatch> matches;
        matcher.match(descriptors_1, descriptors_2, matches);
        cout << "The number of match:" << matches.size() << endl;
        if (matches.empty())
        {
            cout << "No matches found" << endl;
            return -1;
        }

        // Draw the matched keypoints
        Mat img_matches;
        drawMatches(img_1, keypoints_1, img_2, keypoints_2, matches, img_matches);
        //imshow("Match image", img_matches);

        // Find the largest and smallest distance among the matches
        double min_dist = matches[0].distance, max_dist = matches[0].distance;
        for (size_t m = 0; m < matches.size(); m++)
        {
            if (matches[m].distance < min_dist)
            {
                min_dist = matches[m].distance;
            }
            if (matches[m].distance > max_dist)
            {
                max_dist = matches[m].distance;
            }
        }
        cout << "min dist=" << min_dist << endl;
        cout << "max dist=" << max_dist << endl;

        // Keep only the better matches
        vector<DMatch> goodMatches;
        for (size_t m = 0; m < matches.size(); m++)
        {
            if (matches[m].distance < 0.6 * max_dist)
            {
                goodMatches.push_back(matches[m]);
            }
        }
        cout << "The number of good matches:" << goodMatches.size() << endl;

        // Draw the filtered matches.
        // matchColor: color of matches (lines and connected keypoints). If matchColor == Scalar::all(-1), the color is generated randomly.
        // singlePointColor: color of single keypoints (circles), i.e. keypoints without a match. If singlePointColor == Scalar::all(-1), the color is generated randomly.
        // CV_RGB takes its arguments in R, G, B order, so CV_RGB(0, 0, 255) is blue.
        // NOT_DRAW_SINGLE_POINTS (value 2) hides unmatched keypoints, so singlePointColor has no visible effect here.
        Mat img_out;
        drawMatches(img_1, keypoints_1, img_2, keypoints_2, goodMatches, img_out,
                    Scalar::all(-1), CV_RGB(0, 0, 255), vector<char>(),
                    DrawMatchesFlags::NOT_DRAW_SINGLE_POINTS);
        imshow("good Matches", img_out);

        // RANSAC matching
        vector<DMatch> m_Matches = goodMatches;
        int ptCount = (int)goodMatches.size();
        if (ptCount < 100)
        {
            cout << "Not enough match points found" << endl;
            return 0;
        }

        // Collect the matched keypoints in matching order.
        // RAN_KP1 holds the img_1 keypoints that have a match in img_2;
        // goodMatches stores the img_1 and img_2 keypoint indices of each matched pair.
        // size_t is an unsigned integer type from the standard library whose width
        // depends on the platform; using it for indices keeps the code portable.
        vector<KeyPoint> RAN_KP1, RAN_KP2;
        for (size_t i = 0; i < m_Matches.size(); i++)
        {
            RAN_KP1.push_back(keypoints_1[goodMatches[i].queryIdx]);
            RAN_KP2.push_back(keypoints_2[goodMatches[i].trainIdx]);
        }

        // Convert the keypoints to float coordinates
        vector<Point2f> p01, p02;
        for (size_t i = 0; i < m_Matches.size(); i++)
        {
            p01.push_back(RAN_KP1[i].pt);
            p02.push_back(RAN_KP2[i].pt);
        }

        /*vector<Point2f> img1_corners(4);
        img1_corners[0] = Point(0, 0);
        img1_corners[1] = Point(img_1.cols, 0);
        img1_corners[2] = Point(img_1.cols, img_1.rows);
        img1_corners[3] = Point(0, img_1.rows);
        vector<Point2f> img2_corners(4);*/

        //// Estimate the homography instead
        //Mat m_homography;
        //vector<uchar> m;
        //m_homography = findHomography(p01, p02, RANSAC); // find the matching image

        // Estimate the 3x3 fundamental matrix
        vector<uchar> RansacStatus;
        Mat Fundamental = findFundamentalMat(p01, p02, RansacStatus, FM_RANSAC);

        // Store the surviving keypoints and matches in RR_KP1, RR_KP2 and RR_matches,
        // using RansacStatus to drop the mismatched points
        vector<KeyPoint> RR_KP1, RR_KP2;
        vector<DMatch> RR_matches;
        int index = 0;
        for (size_t i = 0; i < m_Matches.size(); i++)
        {
            if (RansacStatus[i] != 0)
            {
                RR_KP1.push_back(RAN_KP1[i]);
                RR_KP2.push_back(RAN_KP2[i]);
                // Re-index the match so it points into RR_KP1 / RR_KP2
                m_Matches[i].queryIdx = index;
                m_Matches[i].trainIdx = index;
                RR_matches.push_back(m_Matches[i]);
                index++;
            }
        }
        cout << "Number of matches after RANSAC: " << RR_matches.size() << endl;

        Mat img_RR_matches;
        drawMatches(img_1, RR_KP1, img_2, RR_KP2, RR_matches, img_RR_matches);
        imshow("After RANSAC", img_RR_matches);

        // Wait for any key press
        waitKey(0);
        return 0;
    }

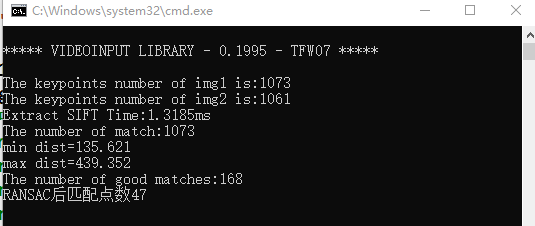

Result: