xgboost 自定義評價函數(metric)與目標函數

阿新 • • 發佈:2017-05-28

binary ret and 參數 cnblogs from valid ges zed

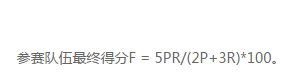

比賽得分公式如下:

其中,P為Precision , R為 Recall。

GBDT訓練基於驗證集評價,此時會調用評價函數,XGBoost的best_iteration和best_score均是基於評價函數得出。

評價函數:

input: preds和dvalid,即為驗證集和驗證集上的預測值,

return string 類型的名稱 和一個flaot類型的fevalerror值表示評價值的大小,其是以error的形式定義,即當此值越大是認為模型效果越差。

1 from sklearn.metrics import confusion_matrix 2 def customedscore(preds, dtrain):3 label = dtrain.get_label() 4 pred = [int(i>=0.5) for i in preds] 5 confusion_matrixs = confusion_matrix(label, pred) 6 recall =float(confusion_matrixs[0][0]) / float(confusion_matrixs[0][1]+confusion_matrixs[0][0]) 7 precision = float(confusion_matrixs[0][0]) / float(confusion_matrixs[1][0]+confusion_matrixs[0][0])8 F = 5*precision* recall/(2*precision+3*recall)*100 9 return ‘FSCORE‘,float(F)

應用:

訓練時要傳入參數:feval = customedscore,

1 params = { ‘silent‘: 1, ‘objective‘: ‘binary:logistic‘ , ‘gamma‘:0.1, 2 ‘min_child_weight‘:5, 3 ‘max_depth‘:5, 4 ‘lambda‘:10, 5 ‘subsample‘:0.7, 6 ‘colsample_bytree‘:0.7, 7 ‘colsample_bylevel‘:0.7, 8 ‘eta‘: 0.01, 9 ‘tree_method‘:‘exact‘} 10 model = xgb.train(params, trainsetall, num_round,verbose_eval=10, feval = customedscore,maximize=False)

自定義 目標函數,這個我沒有具體使用

1 # user define objective function, given prediction, return gradient and second order gradient 2 # this is log likelihood loss 3 def logregobj(preds, dtrain): 4 labels = dtrain.get_label() 5 preds = 1.0 / (1.0 + np.exp(-preds)) 6 grad = preds - labels 7 hess = preds * (1.0-preds) 8 return grad, hess

# training with customized objective, we can also do step by step training # simply look at xgboost.py‘s implementation of train bst = xgb.train(param, dtrain, num_round, watchlist, logregobj, evalerror)

參考:

https://github.com/dmlc/xgboost/blob/master/demo/guide-python/custom_objective.py

http://blog.csdn.net/lujiandong1/article/details/52791117

xgboost 自定義評價函數(metric)與目標函數