Big Data (1): MapReduce Program Design Based on the sogou.500w.utf8 Data

阿新 • Published: 2017-11-18

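1. Use Eclipse to package and run the WordCount example, counting the occurrences of each word in the collected works of Shakespeare (file shakespeare.txt).

① Modify the main function in WordCount.java as follows: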

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        // This constructor is deprecated in Hadoop 2.x;
        // Job.getInstance(conf, "word count") is the replacement there.
        Job job = new Job(conf, "word count");
        job.setJarByClass(WordCount.class);
        job.setMapperClass(TokenizerMapper.class);
        // The reducer doubles as a combiner because summing is associative.
        job.setCombinerClass(IntSumReducer.class);
        job.setReducerClass(IntSumReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);
        // Set the input text path
        FileInputFormat.addInputPath(job, new Path("/input"));
        // Set the MapReduce result output path
        FileOutputFormat.setOutputPath(job, new Path("/output/wordcount"));
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
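The main function wires in TokenizerMapper and IntSumReducer, which this post does not list. As a rough, Hadoop-free sketch of what that pair computes, assuming whitespace tokenization as in the stock Hadoop WordCount example (the class and method names below are illustrative and not from the original):

```java
import java.util.Arrays;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

// Illustrative only: mimics map (tokenize, emit 1) and reduce (sum) in memory.
final class WordCountSketch {

    // Splits each line on whitespace and adds one count per token.
    static Map<String, Integer> count(Iterable<String> lines) {
        // TreeMap keeps keys sorted, similar to how reducer output is ordered by key.
        Map<String, Integer> counts = new TreeMap<>();
        for (String line : lines) {
            StringTokenizer tokens = new StringTokenizer(line);
            while (tokens.hasMoreTokens()) {
                counts.merge(tokens.nextToken(), 1, Integer::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        System.out.println(count(Arrays.asList("to be or not to be", "be true")));
    }
}
```

Because tokens are split on whitespace only, "be" and "be," would count as different words under this assumption.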

② Export the WordCount jar:

Export -> JAR file -> Next -> Next -> select WordCount as the Main class -> Finish.

③ Use scp to copy wc.jar to the node1 machine, create the directory with hadoop fs -mkdir /input, upload shakespeare.txt to HDFS, and run the jar: hadoop jar wc.jar

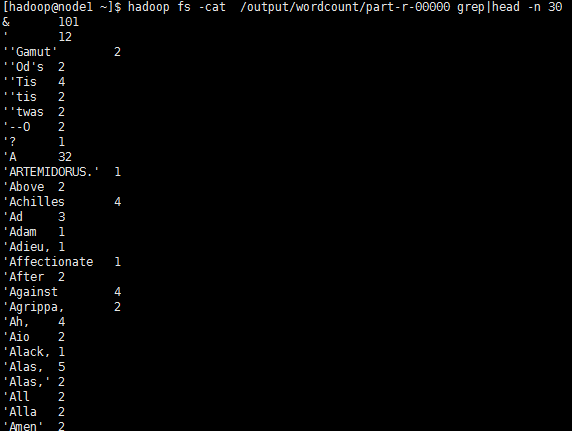

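④ Use hadoop fs -cat /output/wordcount/part-r-00000 | head -n 30 to view the first 30 lines of output:

2. For the sogou.500w.utf8 file, complete the following programming tasks:

(1) Compute the total length of the keywords searched by each user

MapReduce program: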

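    import java.io.IOException;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;

    public class sougou3 {

        public static class Sougou3Map extends Mapper<Object, Text, Text, Text> {
            public void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                String[] vals = value.toString().split("\t");
                if (vals.length < 3) {
                    return; // skip malformed records
                }
                String uid = vals[1];
                String search = vals[2];
                // Emit "keyword|length" for each search made by this user
                context.write(new Text(uid), new Text(search + "|" + search.length()));
            }
        }

        public static class Sougou3Reduce extends Reducer<Text, Text, Text, Text> {
            public void reduce(Text key, Iterable<Text> values, Context context)
                    throws IOException, InterruptedException {
                // Note: this lists every "keyword|length" pair for the user,
                // separated by spaces; it does not add the lengths together.
                StringBuilder result = new StringBuilder();
                for (Text value : values) {
                    result.append(value.toString()).append(" ");
                }
                context.write(new Text(key + "\t"), new Text(result.toString()));
            }
        }
    }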
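The task statement asks for a total length per user, while the reducer in this post lists each keyword with its own length. A minimal in-memory sketch of the summing variant follows, assuming the same field order the mapper uses (time, UID, keyword, separated by tabs). The class and method names are hypothetical; in a real job the mapper would emit (uid, length) and the reducer would add the values.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.Map;

// Hypothetical helper: sums keyword lengths per UID without any Hadoop dependency.
final class KeywordLengthSketch {

    // Each record is assumed to be: time \t uid \t keyword \t ...
    static Map<String, Integer> totalLengthByUid(Iterable<String> records) {
        Map<String, Integer> totals = new HashMap<>();
        for (String record : records) {
            String[] fields = record.split("\t");
            if (fields.length < 3) {
                continue; // skip malformed lines
            }
            // String.length() counts UTF-16 code units, as in the post's mapper.
            totals.merge(fields[1], fields[2].length(), Integer::sum);
        }
        return totals;
    }

    public static void main(String[] args) {
        System.out.println(totalLengthByUid(Arrays.asList(
                "20111230000005\tu1\thadoop", "20111230000007\tu1\tjava")));
    }
}
```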

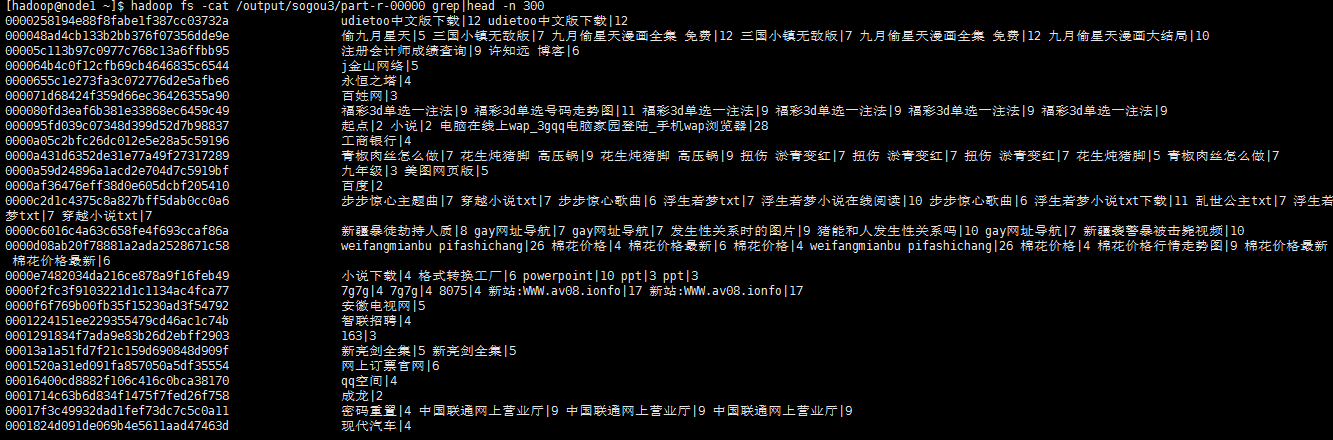

Output:

(2) Which UIDs ran a search between 1:00 and 2:00 on December 30, 2011?

MapReduce program:

    import java.io.IOException;
    import java.text.ParseException;
    import java.text.SimpleDateFormat;
    import java.util.Date;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;

    public class sougou1 {

        public static class Sougou1Map extends Mapper<Object, Text, Text, Text> {
            public void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                String[] vals = value.toString().split("\t");
                if (vals.length < 2 || vals[0].length() < 14) {
                    return; // skip malformed records
                }
                String time = vals[0];
                String uid = vals[1];
                // Reformat yyyyMMddHHmmss as yyyy-MM-dd HH:mm:ss, e.g. 2008-07-10 19:20:00
                String formatTime = time.substring(0, 4) + "-" + time.substring(4, 6) + "-"
                        + time.substring(6, 8) + " " + time.substring(8, 10) + ":"
                        + time.substring(10, 12) + ":" + time.substring(12, 14);
                SimpleDateFormat sdf = new SimpleDateFormat("yyyy-MM-dd HH:mm:ss");
                try {
                    Date date = sdf.parse(formatTime);
                    Date date1 = sdf.parse("2011-12-30 01:00:00");
                    Date date2 = sdf.parse("2011-12-30 02:00:00");
                    // Keep records strictly inside the window (both bounds are exclusive)
                    if (date.getTime() > date1.getTime() && date.getTime() < date2.getTime()) {
                        context.write(new Text(uid), new Text(formatTime));
                    }
                } catch (ParseException e) {
                    e.printStackTrace();
                }
            }
        }

        public static class Sougou1Reduce extends Reducer<Text, Text, Text, Text> {
            public void reduce(Text key, Iterable<Text> values, Context context)
                    throws IOException, InterruptedException {
                // Join all search times of this UID with "|"
                StringBuilder result = new StringBuilder();
                for (Text value : values) {
                    result.append(value.toString()).append("|");
                }
                context.write(key, new Text(result.toString()));
            }
        }
    }

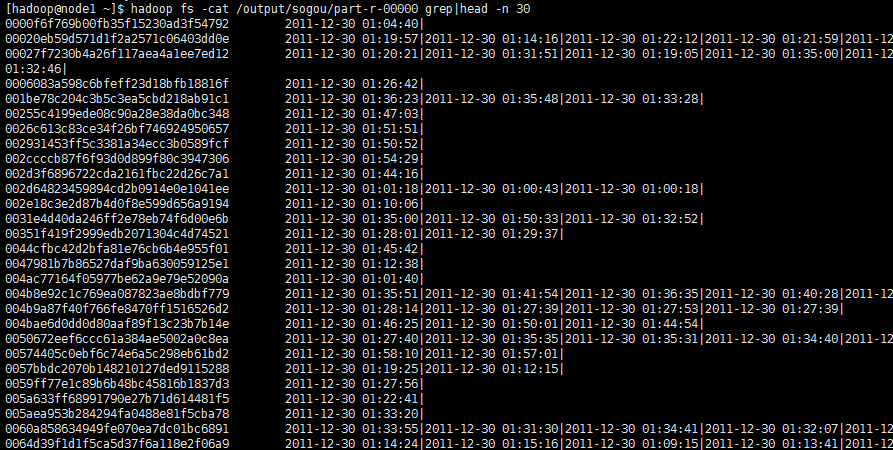

Output:

The user ID is on the left and the search times are on the right, separated by "|".
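The time filter can also be written without the intermediate string reformatting. The sketch below is one possible variant, assuming the raw timestamp is a 14-digit yyyyMMddHHmmss string as the mapper's substring calls imply. It uses java.time (Java 8 or later) and a half-open window, so a search at exactly 01:00:00 is included, which differs from the strict comparison in the post. The class and method names are hypothetical.

```java
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;
import java.time.format.DateTimeParseException;

// Hypothetical helper: checks whether a raw timestamp falls in the target hour.
final class TimeWindowSketch {

    private static final DateTimeFormatter RAW = DateTimeFormatter.ofPattern("yyyyMMddHHmmss");
    private static final LocalDateTime START = LocalDateTime.of(2011, 12, 30, 1, 0, 0);
    private static final LocalDateTime END = LocalDateTime.of(2011, 12, 30, 2, 0, 0);

    // True when rawTime lies in [01:00:00, 02:00:00) on 2011-12-30.
    static boolean inWindow(String rawTime) {
        try {
            LocalDateTime t = LocalDateTime.parse(rawTime, RAW);
            return !t.isBefore(START) && t.isBefore(END);
        } catch (DateTimeParseException e) {
            return false; // treat unparseable timestamps as outside the window
        }
    }

    public static void main(String[] args) {
        System.out.println(inWindow("20111230013000"));
    }
}
```

Building the formatter and the two bounds once, as constants, also avoids re-parsing them for every input record.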

(3) For each UID that searched for '仙劍奇俠', count how many times that UID searched the keyword.

MapReduce program:

    import java.io.IOException;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;

    public class sougou2 {

        public static class Sougou2Map extends Mapper<Object, Text, Text, IntWritable> {
            public void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                String[] vals = value.toString().split("\t");
                if (vals.length < 3) {
                    return; // skip malformed records
                }
                String uid = vals[1];
                String search = vals[2];
                // Exact match only: keywords that merely contain the phrase are not counted
                if (search.equals("仙劍奇俠")) {
                    context.write(new Text(uid), new IntWritable(1));
                }
            }
        }

        public static class Sougou2Reduce extends Reducer<Text, IntWritable, Text, IntWritable> {
            public void reduce(Text key, Iterable<IntWritable> values, Context context)
                    throws IOException, InterruptedException {
                // Sum the 1s emitted for this UID
                int result = 0;
                for (IntWritable value : values) {
                    result += value.get();
                }
                context.write(new Text(key + "\t"), new IntWritable(result));
            }
        }
    }

Output:

The user with UID 6856e6e003a05cc912bfe13ebcea8a04 searched for "仙劍奇俠" once in total.

3. Use a MapReduce program to sort the following data from a file: 78 11 56 87 25 63 19 22 55

MapReduce program:

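    import java.io.IOException;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.NullWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;

    public class Sort {

        // map converts each input value to an IntWritable and emits it as the output key
        public static class Map extends Mapper<Object, Text, IntWritable, NullWritable> {
            private static IntWritable data = new IntWritable();

            public void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                String line = value.toString().trim();
                if (line.isEmpty()) {
                    return; // skip blank lines
                }
                data.set(Integer.parseInt(line));
                context.write(data, NullWritable.get());
            }
        }

        // reduce copies the input key to the output key; the number of elements
        // in the value list decides how many times the key is written, so
        // duplicates survive. The ordering comes from the framework sorting keys.
        public static class Reduce
                extends Reducer<IntWritable, NullWritable, IntWritable, NullWritable> {
            public void reduce(IntWritable key, Iterable<NullWritable> values, Context context)
                    throws IOException, InterruptedException {
                for (NullWritable val : values) {
                    context.write(key, NullWritable.get());
                }
            }
        }
    }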
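Neither class in the Sort program compares numbers; the ordering comes from the framework sorting map output keys before they reach the reducer. The in-memory sketch below imitates that with a TreeMap standing in for the shuffle. It is an illustration with hypothetical names, not how Hadoop implements the sort.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Illustrative only: a TreeMap plays the role of the sorted shuffle.
final class ShuffleSortSketch {

    static List<Integer> sortLikeShuffle(List<Integer> input) {
        // "Map" side: group equal keys; TreeMap keeps keys in ascending order.
        Map<Integer, Integer> grouped = new TreeMap<>();
        for (int n : input) {
            grouped.merge(n, 1, Integer::sum);
        }
        // "Reduce" side: write each key once per grouped value, so duplicates survive.
        List<Integer> out = new ArrayList<>();
        for (Map.Entry<Integer, Integer> e : grouped.entrySet()) {
            for (int i = 0; i < e.getValue(); i++) {
                out.add(e.getKey());
            }
        }
        return out;
    }

    public static void main(String[] args) {
        System.out.println(sortLikeShuffle(Arrays.asList(78, 11, 56, 87, 25, 63, 19, 22, 55)));
    }
}
```

In a real job this gives a globally sorted result only with a single reducer; with several reducers each part file is sorted on its own unless a total-order partitioner is configured.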

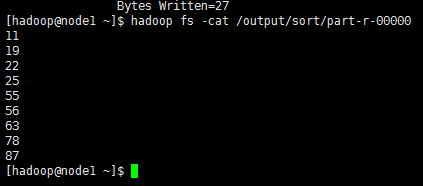

Output:

4. The student score file (TXT, fields separated by tabs) has the following content; use MapReduce to compute each student's average score

李平 87 89 98 75

張三 66 78 69 70

李四 96 82 78 90

王五 82 77 74 86

趙六 88 72 81 76

MapReduce program:

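    import java.io.IOException;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.NullWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;

    public class Score {

        // map passes each whole line through as the key
        public static class ScoreMap extends Mapper<Object, Text, Text, NullWritable> {
            public void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                context.write(value, NullWritable.get());
            }
        }

        public static class ScoreReduce extends Reducer<Text, NullWritable, Text, IntWritable> {
            public void reduce(Text key, Iterable<NullWritable> values, Context context)
                    throws IOException, InterruptedException {
                for (NullWritable nullWritable : values) {
                    String[] vals = key.toString().split("\t");
                    if (vals.length < 2) {
                        continue; // no scores on this line
                    }
                    String name = vals[0];
                    // Sum every score field; each sample row has four scores
                    int sum = 0;
                    for (int i = 1; i < vals.length; i++) {
                        sum += Integer.parseInt(vals[i].trim());
                    }
                    // Integer division: the fractional part is dropped
                    int average = sum / (vals.length - 1);
                    context.write(new Text(name), new IntWritable(average));
                }
            }
        }
    }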
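Each sample row carries four scores. A minimal, Hadoop-free sketch of the per-line averaging, computed over every score field and keeping the fractional part, might look like this. The class and method names are hypothetical, and the input is assumed to be tab-separated as the task describes.

```java
// Hypothetical helper: averages all score fields on one tab-separated line.
final class AverageSketch {

    // Line format assumed: name \t score \t score ...
    static double average(String line) {
        String[] fields = line.split("\t");
        if (fields.length < 2) {
            throw new IllegalArgumentException("no scores in line: " + line);
        }
        int sum = 0;
        for (int i = 1; i < fields.length; i++) {
            sum += Integer.parseInt(fields[i].trim());
        }
        // Cast before dividing so the result is not truncated.
        return (double) sum / (fields.length - 1);
    }

    public static void main(String[] args) {
        System.out.println(average("李平\t87\t89\t98\t75"));
    }
}
```

To carry this into the job itself, the reducer's output value type would need to change from IntWritable to DoubleWritable, together with the matching setting in the driver, which the post does not show.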

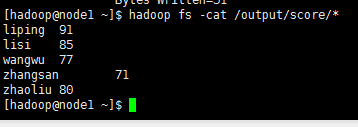

Output:
