A First Try at Istio Microservice Architecture

Acknowledgments

http://blog.csdn.net/qq_34463875/article/details/77866072

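I read some of the documentation and only half understood it, so a hello-world exercise was still needed. Because Istio requires a Kubernetes 1.7 environment, I reinstalled the environment from scratch; see my other notes for details.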

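There are few articles on the subject, and I ran into quite a few problems, mostly because my understanding of some pieces was not deep enough. Working through the pitfalls was a way of learning too.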

The important things first

1. ServiceAccount must be enabled on kube-apiserver

2. kube-apiserver must have ServiceAccount configured

3. The cluster must have DNS configured

Architecture

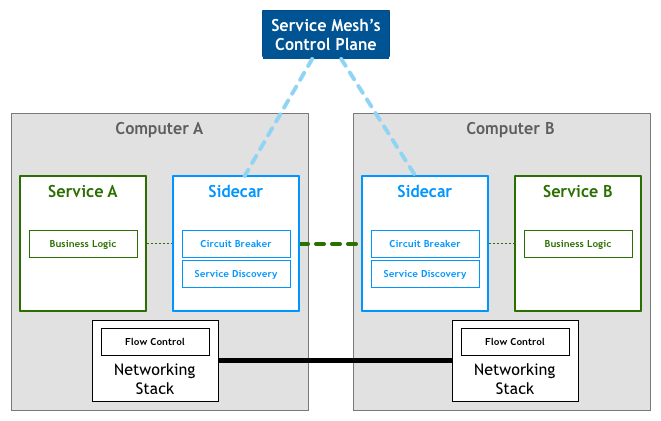

First, a look at the Service Mesh architecture diagram

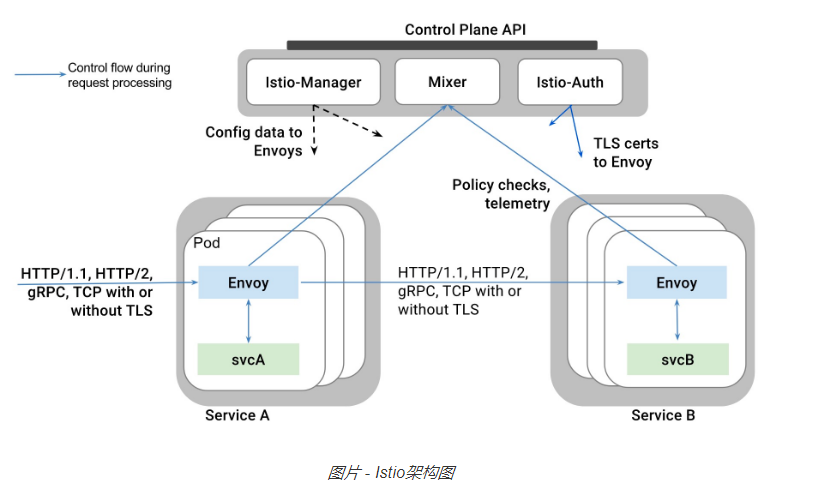

The Istio architecture diagram

Time is limited, so I will fill in the individual features later.

Installation

Download page: https://github.com/istio/istio/releases

I downloaded version 0.1.6: https://github.com/istio/istio/releases/download/0.1.6/istio-0.1.6-linux.tar.gz

Unpack it, then pull the images. The images involved are:

- istio/mixer:0.1.6

- pilot:0.1.6

- proxy_debug:0.1.6

- istio-ca:0.1.6

    [root@node1 ~]# docker images
    REPOSITORY   TAG   IMAGE ID   CREATED   SIZE
    docker.io/tomcat   9.0-jre8   e882239f2a28   2 weeks ago   557.3 MB
    docker.io/alpine   latest   053cde6e8953   3 weeks ago   3.962 MB
    registry.cn-hangzhou.aliyuncs.com/szss_k8s/k8s-dns-sidecar-amd64   1.14.5   fed89e8b4248   8 weeks ago   41.81 MB
    registry.cn-hangzhou.aliyuncs.com/szss_k8s/k8s-dns-kube-dns-amd64   1.14.5   512cd7425a73   8 weeks ago   49.38 MB
    registry.cn-hangzhou.aliyuncs.com/szss_k8s/k8s-dns-dnsmasq-nanny-amd64   1.14.5   459944ce8cc4   8 weeks ago   41.42 MB
    gcr.io/google_containers/exechealthz   1.0   82a141f5d06d   20 months ago   7.116 MB
    gcr.io/google_containers/kube2sky   1.14   a4892326f8cf   21 months ago   27.8 MB
    gcr.io/google_containers/etcd-amd64   2.2.1   3ae398308ded   22 months ago   28.19 MB
    gcr.io/google_containers/skydns   2015-10-13-8c72f8c   718809956625   2 years ago   40.55 MB
    docker.io/kubernetes/pause   latest   f9d5de079539   3 years ago   239.8 kB
    docker.io/istio/istio-ca   0.1.6   c25b02aba82d   292 years ago   153.6 MB
    docker.io/istio/mixer   0.1.6   1f4a2ce90af6   292 years ago   158.9 MB
    docker.io/istio/proxy_debug   0.1   5623de9317ff   292 years ago   825 MB
    docker.io/istio/proxy_debug   0.1.6   5623de9317ff   292 years ago   825 MB
    docker.io/istio/pilot   0.1.6   e0c24bd68c04   292 years ago   144.4 MB
    docker.io/istio/init   0.1   0cbd83e9df59   292 years ago   119.3 MB

Open istio.yaml and change the pull policy first, so that the images already pulled locally are used:

imagePullPolicy: IfNotPresent
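Editing every imagePullPolicy line by hand gets tedious, so here is a minimal sketch of doing it with sed. The helper name set_pull_policy and the output file name istio-local.yaml are made up for illustration, and the sketch assumes the policy appears as a plain YAML line such as `imagePullPolicy: Always`; it does not touch values embedded in JSON annotations.

```shell
# set_pull_policy FILE: print FILE with every plain-YAML imagePullPolicy
# value replaced by IfNotPresent. Hypothetical helper for illustration;
# the input file itself is left untouched.
set_pull_policy() {
  sed -E 's/(imagePullPolicy:[[:space:]]*)[A-Za-z]+/\1IfNotPresent/' "$1"
}

# Example (commented out so the snippet has no side effects):
# set_pull_policy istio.yaml > istio-local.yaml
```

With IfNotPresent, the kubelet pulls an image only when it is not already present on the node, which is what makes the locally pulled images usable.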

Then run:

kubectl create -f istio-rbac-beta.yaml

kubectl create -f istio.yaml

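I hit countless problems here, all caused by the environment not being ready:

1. ServiceAccount must be enabled on kube-apiserver

2. kube-apiserver must have ServiceAccount configured

3. The cluster must have DNS configured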

Once everything is running, check the services:

    [root@k8s-master kubernetes]# kubectl get services
    NAME            CLUSTER-IP       EXTERNAL-IP   PORT(S)                       AGE
    helloworldsvc   10.254.145.112   <none>        8080/TCP                      47m
    istio-egress    10.254.164.118   <none>        80/TCP                        14h
    istio-ingress   10.254.234.8     <pending>     80:32031/TCP,443:32559/TCP    14h
    istio-mixer     10.254.227.198   <none>        9091/TCP,9094/TCP,42422/TCP   14h
    istio-pilot     10.254.15.121    <none>        8080/TCP,8081/TCP             14h
    kubernetes      10.254.0.1       <none>        443/TCP                       1d
    tool            10.254.87.52     <none>        8080/TCP                      44m

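The istio-ingress service stays in the pending state. After a lot of searching, it turns out to depend on whether the environment supports an external load balancer, so I am ignoring it for now.

Preparing the test application

Create a PV and a PVC. The initial plan is to use a Tomcat image and drop the HelloWorld application into it.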

    [root@k8s-master ~]# cat pv.yaml
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv0003
    spec:
      capacity:
        storage: 1Gi
      accessModes:
        - ReadWriteOnce
      persistentVolumeReclaimPolicy: Recycle
      hostPath:
        path: /webapps

    [root@k8s-master ~]# cat pvc.yaml
    kind: PersistentVolumeClaim
    apiVersion: v1
    metadata:
      name: tomcatwebapp
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 1Gi

About the HelloWorld application

index.jsp

    <%@ page language="java" contentType="text/html; charset=utf-8" import="java.net.InetAddress" pageEncoding="utf-8"%>
    <html>
    <body> This is a Helloworld test</body>
    <%
    System.out.println("this is a session test!");
    InetAddress addr = InetAddress.getLocalHost();
    out.println("HostAddress="+addr.getHostAddress());
    out.println("HostName="+addr.getHostName());
    String version = System.getenv("SERVICE_VERSION");
    out.println("SERVICE_VERSION="+version);
    %>
    </html>

Create rc-v1.yaml for the first version:

    [root@k8s-master ~]# cat rc-v1.yaml
    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: helloworld-service
    spec:
      replicas: 1
      template:
        metadata:
          labels:
            tomcat-app: "helloworld"
            version: "1"
        spec:
          containers:
          - name: tomcathelloworld
            image: docker.io/tomcat:9.0-jre8
            volumeMounts:
            - mountPath: "/usr/local/tomcat/webapps"
              name: mypd
            ports:
            - containerPort: 8080
            env:
            - name: "SERVICE_VERSION"
              value: "1"
          volumes:
          - name: mypd
            persistentVolumeClaim:
              claimName: tomcatwebapp

The rc-service.yaml file:

    [root@k8s-master ~]# cat rc-service.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: helloworldsvc
      labels:
        tomcat-app: helloworld
    spec:
      ports:
      - port: 8080
        protocol: TCP
        targetPort: 8080
        name: http
      selector:
        tomcat-app: helloworld

Then inject the sidecar with istioctl kube-inject:

istioctl kube-inject -f rc-v1.yaml > rc-v1-istio.yaml

After injection, the manifest contains an extra sidecar container:

    [root@k8s-master ~]# cat rc-v1-istio.yaml
    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      creationTimestamp: null
      name: helloworld-service
    spec:
      replicas: 1
      strategy: {}
      template:
        metadata:
          annotations:
            alpha.istio.io/sidecar: injected
            alpha.istio.io/version: jenkins@ubuntu-16-04-build-12ac793f80be71-0.1.6-dab2033
            pod.beta.kubernetes.io/init-containers: '[{"args":["-p","15001","-u","1337"],"image":"docker.io/istio/init:0.1","imagePullPolicy":"IfNotPresent","name":"init","securityContext":{"capabilities":{"add":["NET_ADMIN"]}}},{"args":["-c","sysctl -w kernel.core_pattern=/tmp/core.%e.%p.%t \u0026\u0026 ulimit -c unlimited"],"command":["/bin/sh"],"image":"alpine","imagePullPolicy":"IfNotPresent","name":"enable-core-dump","securityContext":{"privileged":true}}]'
          creationTimestamp: null
          labels:
            tomcat-app: helloworld
            version: "1"
        spec:
          containers:
          - env:
            - name: SERVICE_VERSION
              value: "1"
            image: docker.io/tomcat:9.0-jre8
            name: tomcathelloworld
            ports:
            - containerPort: 8080
            resources: {}
            volumeMounts:
            - mountPath: /usr/local/tomcat/webapps
              name: mypd
          - args:
            - proxy
            - sidecar
            - -v
            - "2"
            env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
            - name: POD_IP
              valueFrom:
                fieldRef:
                  fieldPath: status.podIP
            image: docker.io/istio/proxy_debug:0.1
            imagePullPolicy: IfNotPresent
            name: proxy
            resources: {}
            securityContext:
              runAsUser: 1337
          volumes:
          - name: mypd
            persistentVolumeClaim:
              claimName: tomcatwebapp
    status: {}
    ---

After injection, a few more images have to be pulled :(

- docker.io/istio/proxy_debug:0.1

- docker.io/istio/init:0.1

- alpine

Also remember to change imagePullPolicy in the injected file.

Then run:

kubectl create -f rc-v1-istio.yaml

More pitfalls here:

1. Permissions: --allow-privileged must be enabled in /etc/kubernetes/config, on both the master and the nodes.

    [root@k8s-master ~]# cat /etc/kubernetes/config
    ###
    # kubernetes system config
    #
    # The following values are used to configure various aspects of all
    # kubernetes services, including
    #
    #   kube-apiserver.service
    #   kube-controller-manager.service
    #   kube-scheduler.service
    #   kubelet.service
    #   kube-proxy.service
    # logging to stderr means we get it in the systemd journal
    KUBE_LOGTOSTDERR="--logtostderr=true"
    # journal message level, 0 is debug
    KUBE_LOG_LEVEL="--v=0"
    # Should this cluster be allowed to run privileged docker containers
    KUBE_ALLOW_PRIV="--allow-privileged=true"
    # How the controller-manager, scheduler, and proxy find the apiserver
    KUBE_MASTER="--master=http://192.168.44.108:8080"

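2. Whenever securityContext: runAsUser: 1337 is present, the pod never starts and stays stuck at the desired stage; with it removed, the pod at least starts. The error information is limited, so this took a while to figure out. The fix is to change the API server configuration and remove SecurityContextDeny from --admission-control=NamespaceLifecycle,LimitRanger,SecurityContextDeny,ResourceQuota,ServiceAccount.

The final kube-apiserver configuration: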

    [root@k8s-master ~]# cat /etc/kubernetes/apiserver
    ###
    # kubernetes system config
    #
    # The following values are used to configure the kube-apiserver
    #
    # The address on the local server to listen to.
    KUBE_API_ADDRESS="--insecure-bind-address=192.168.44.108"
    # The port on the local server to listen on.
    # KUBE_API_PORT="--port=8080"
    # Port minions listen on
    # KUBELET_PORT="--kubelet-port=10250"
    # Comma separated list of nodes in the etcd cluster
    KUBE_ETCD_SERVERS="--etcd-servers=http://192.168.44.108:2379"
    # Address range to use for services
    KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=10.254.0.0/16"
    # default admission control policies
    #KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,ServiceAccount,SecurityContextDeny,ResourceQuota"
    KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,ServiceAccount,ResourceQuota"
    # Add your own!
    KUBE_API_ARGS="--secure-port=443 --client-ca-file=/srv/kubernetes/ca.crt --tls-cert-file=/srv/kubernetes/server.cert --tls-private-key-file=/srv/kubernetes/server.key"
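The admission-control change amounts to dropping one name from a comma-separated list. A minimal sketch of that string edit follows; remove_plugin is a hypothetical helper written for this note, not a Kubernetes tool, and it only prints the new list rather than editing /etc/kubernetes/apiserver.

```shell
# remove_plugin LIST NAME: print the comma-separated LIST without NAME.
# Hypothetical helper for illustration; it only manipulates a string.
remove_plugin() {
  printf '%s\n' "$1" | tr ',' '\n' | grep -vxF -- "$2" | paste -sd, -
}

remove_plugin "NamespaceLifecycle,NamespaceExists,LimitRanger,ServiceAccount,SecurityContextDeny,ResourceQuota" SecurityContextDeny
# prints: NamespaceLifecycle,NamespaceExists,LimitRanger,ServiceAccount,ResourceQuota
```

The printed value is what goes after --admission-control= in KUBE_ADMISSION_CONTROL. The API server presumably has to be restarted for the change to take effect; on a systemd-managed install like this one that would likely be a restart of kube-apiserver.service, which is an assumption on my part.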

With that done, the pod starts cleanly.

Next, create rc-v2.yaml:

    [root@k8s-master ~]# cat rc-v2.yaml
    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: helloworld-service-v2
    spec:
      replicas: 1
      template:
        metadata:
          labels:
            tomcat-app: "helloworld"
            version: "2"
        spec:
          containers:
          - name: tomcathelloworld
            image: docker.io/tomcat:9.0-jre8
            volumeMounts:
            - mountPath: "/usr/local/tomcat/webapps"
              name: mypd
            ports:
            - containerPort: 8080
            env:
            - name: "SERVICE_VERSION"
              value: "2"
          volumes:
          - name: mypd
            persistentVolumeClaim:
              claimName: tomcatwebapp

tool.yaml is used for testing from inside the service mesh. It is really just a shell:

    [root@k8s-master ~]# cat tool.yaml
    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: tool
    spec:
      replicas: 1
      template:
        metadata:
          labels:
            name: tool
            version: "1"
        spec:
          containers:
          - name: tool
            image: docker.io/tomcat:9.0-jre8
            volumeMounts:
            - mountPath: "/usr/local/tomcat/webapps"
              name: mypd
            ports:
            - containerPort: 8080
          volumes:
          - name: mypd
            persistentVolumeClaim:
              claimName: tomcatwebapp
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: tool
      labels:
        name: tool
    spec:
      ports:
      - port: 8080
        protocol: TCP
        targetPort: 8080
        name: http
      selector:
        name: tool

Both rc-v2.yaml and tool.yaml need to go through kube-inject and then be deployed with kubectl apply.

The final result:
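The inject-then-apply step for several manifests can be sketched as a small loop. The wrapper name inject_and_apply is made up for illustration; it only chains istioctl kube-inject -f, as used earlier in this post, with kubectl apply -f - reading the injected manifest from standard input.

```shell
# inject_and_apply FILE...: run each manifest through istioctl kube-inject
# and apply the result. Hypothetical wrapper around the two commands the
# post already uses; nothing is written to disk.
inject_and_apply() {
  for manifest in "$@"; do
    istioctl kube-inject -f "$manifest" | kubectl apply -f -
  done
}

# Example (needs a cluster, so left commented out):
# inject_and_apply rc-v2.yaml tool.yaml
```

Piping straight into kubectl skips the chance to hand-edit the injected manifest. If imagePullPolicy still has to be changed, writing the injected output to a file first, as done for rc-v1-istio.yaml, is the safer route.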

    [root@k8s-master ~]# kubectl get pods
    NAME                                     READY   STATUS    RESTARTS   AGE
    helloworld-service-2437162702-x8w05      2/2     Running   0          1h
    helloworld-service-v2-2637126738-s7l4s   2/2     Running   0          1h
    istio-egress-2869428605-2ftgl            1/1     Running   2          14h
    istio-ingress-1286550044-6g3vj           1/1     Running   2          14h
    istio-mixer-765485573-23wc6              1/1     Running   2          14h
    istio-pilot-1495912787-g5r9s             2/2     Running   4          14h
    tool-185907110-fsr04                     2/2     Running   0          1h

Traffic distribution

Create a route rule:

istioctl create -f default.yaml

    [root@k8s-master ~]# cat default.yaml
    type: route-rule
    name: helloworld-default
    spec:
      destination: helloworldsvc.default.svc.cluster.local
      precedence: 1
      route:
      - tags:
          version: "2"
        weight: 10
      - tags:
          version: "1"
        weight: 90

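In other words, of the traffic sent to helloworldsvc, 90% goes to the version 1 pod and 10% goes to the version 2 pod.

How can we confirm that helloworldsvc really points at both backend pods? With the following command: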
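A quick sanity check before creating the rule is to add up the weights. The sketch below assumes the weights in a rule are meant to total 100, as they do here (10 + 90), and that each weight sits on its own `weight: N` line; sum_weights is a hypothetical helper, not an istioctl feature.

```shell
# sum_weights FILE: print the sum of all "weight: N" values in FILE.
# Hypothetical helper for illustration; it does no real YAML parsing.
sum_weights() {
  awk '$1 == "weight:" { total += $2 } END { print total + 0 }' "$1"
}

# Example (commented out; expects default.yaml in the current directory):
# sum_weights default.yaml
```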

    [root@k8s-master ~]# kubectl describe service helloworldsvc
    Name:              helloworldsvc
    Namespace:         default
    Labels:            tomcat-app=helloworld
    Annotations:       <none>
    Selector:          tomcat-app=helloworld
    Type:              ClusterIP
    IP:                10.254.145.112
    Port:              http  8080/TCP
    Endpoints:         10.1.40.3:8080,10.1.40.7:8080
    Session Affinity:  None
    Events:            <none>

The two endpoints show that the service and deployment configuration is fine.

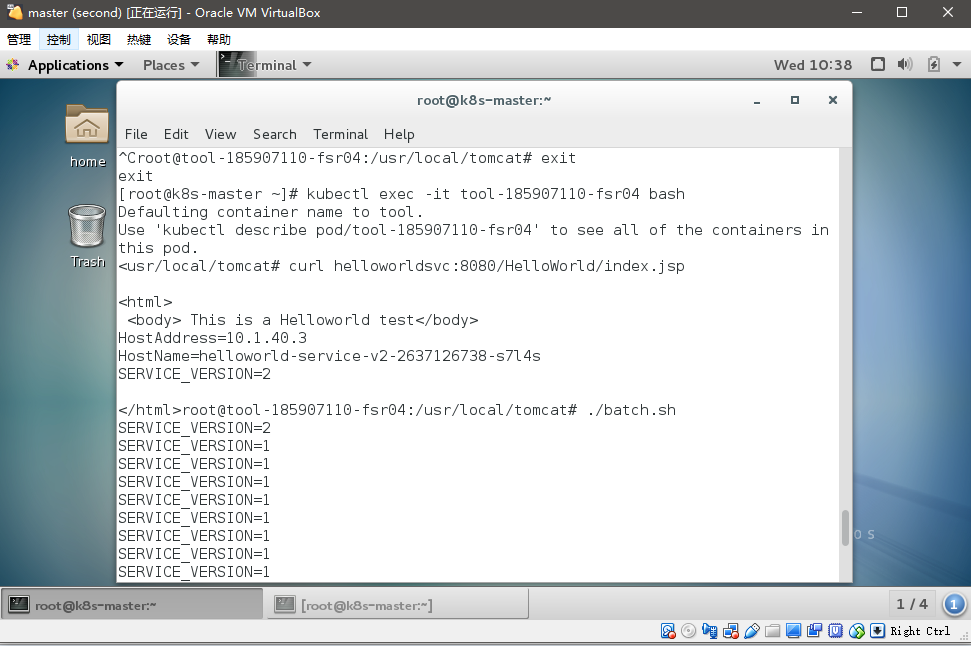

Open a shell in the tool pod:

    [root@k8s-master ~]# kubectl exec -it tool-185907110-fsr04 bash
    Defaulting container name to tool.
    Use 'kubectl describe pod/tool-185907110-fsr04' to see all of the containers in this pod.
    root@tool-185907110-fsr04:/usr/local/tomcat#

Run:

    <usr/local/tomcat# curl helloworldsvc:8080/HelloWorld/index.jsp
    <html>
    <body> This is a Helloworld test</body>
    HostAddress=10.1.40.3
    HostName=helloworld-service-v2-2637126738-s7l4s
    SERVICE_VERSION=2

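This took a long time too. At first every request returned connection refused: inside the pod, localhost worked but curl against the IP did not. I then tried a tool pod without injection, which connected fine but was not subject to traffic control. Finally I switched back to the injected pod and, surprisingly, it could connect. The fix: re-create the injected deployments, and re-create the service as well.

Write a shell script: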

    echo "for i in {1..100}
    do
      curl -s helloworldsvc:8080/HelloWorld/index.jsp | grep SERVICE_VERSION
    done" > batch.sh

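Then make it executable (chmod +x batch.sh), run it, and use grep to count the responses and verify the traffic distribution: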

    root@tool-185907110-fsr04:/usr/local/tomcat# ./batch.sh | grep 2 | wc -l
    10
    root@tool-185907110-fsr04:/usr/local/tomcat# ./batch.sh | grep 1 | wc -l
    90
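The two grep commands each trigger a separate batch of 100 requests, and `grep 1` or `grep 2` would match any line containing that digit. A single-pass tally is a little more robust. The sketch below uses a hypothetical helper named tally_versions and assumes each response line has the form SERVICE_VERSION=N, as printed by index.jsp.

```shell
# tally_versions: read lines such as "SERVICE_VERSION=2" on stdin and
# print one "version count" pair per line, sorted by version.
# Hypothetical helper for illustration.
tally_versions() {
  awk -F= '/SERVICE_VERSION=/ { count[$2]++ }
           END { for (v in count) print v, count[v] }' | sort
}

# Inside the tool pod (commented out, needs the cluster):
# ./batch.sh | tally_versions
```

Both counts then come from the same 100 requests, so they always add up to the number of responses received.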

To be continued...
