nginx + upsync + consul: building a dynamic nginx configuration system

http://www.php230.com/weixin1456193048.html [upsync module description and performance benchmarks]

https://www.jianshu.com/p/76352efc5657

https://www.jianshu.com/p/c3fe55e6a5f2

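Background:

nginx load balancing can be configured dynamically with Consul + Consul-template, but that approach has a drawback: every configuration change requires an nginx reload, and a reload has a cost. Moreover, if you need long-lived connections, then on reload the worker processes holding those connections shut down gracefully, and each worker only really exits once all of its connections have been released (this shows up as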

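There are currently three open-source solutions:

1. Tengine's Dyups module

2. Weibo's Upsync module + Consul

3. OpenResty's balancer_by_lua; UPYUN uses its open-source slardar (Consul + balancer_by_lua) to implement dynamic load balancing.

Here we use the upsync module + consul for dynamic load balancing. My working notes follow:

consul's commands are simple and the official documentation has detailed examples, so they are skipped here.

Test environment:

3 CentOS 7.3 machines

cat /etc/hosts shows: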

192.168.5.71 node71

192.168.5.72 node72

192.168.5.73 node73

All three consul nodes run in the server role. If one node in the cluster goes down, the cluster automatically elects a new leader.

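nginx-upsync-module: open-sourced by Sina Weibo. It pulls the list of backend servers from consul and updates nginx's routing information. Because the upstream list is updated without reloading nginx, the impact on nginx performance is small.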

1. Install nginx + nginx-upsync-module (run on all 3 machines)

nginx-upsync-module: https://github.com/weibocom/nginx-upsync-module

nginx version: 1.13.8

yum install gcc gcc-c++ make libtool zlib zlib-devel openssl openssl-devel pcre pcre-devel -y

cd /root/

git clone https://github.com/weibocom/nginx-upsync-module.git

# Building from a git clone is recommended; my first attempt with the release tar.gz failed to compile nginx

groupadd nginx

useradd -g nginx -s /sbin/nologin nginx

mkdir -p /var/tmp/nginx/client/

mkdir -p /usr/local/nginx

tar xf nginx-1.13.8.tar.gz

cd /root/nginx-1.13.8

./configure --prefix=/usr/local/nginx --user=nginx --group=nginx --with-http_ssl_module --with-http_flv_module --with-http_stub_status_module --with-http_gzip_static_module --with-http_realip_module --http-client-body-temp-path=/var/tmp/nginx/client/ --http-proxy-temp-path=/var/tmp/nginx/proxy/ --http-fastcgi-temp-path=/var/tmp/nginx/fcgi/ --http-uwsgi-temp-path=/var/tmp/nginx/uwsgi --http-scgi-temp-path=/var/tmp/nginx/scgi --with-pcre --add-module=/root/nginx-upsync-module

make && make install

echo 'export PATH=/usr/local/nginx/sbin:$PATH' >> /etc/profile

source /etc/profile
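Before moving on, it may be worth confirming that the upsync module was really compiled into the binary. The sketch below is only an illustration: the helper name has_upsync_module is hypothetical, and it simply looks for the --add-module argument in the configure arguments that nginx -V prints.

```shell
# Hypothetical helper (not part of nginx or upsync): reads `nginx -V` output
# on stdin and succeeds only if the configure arguments reference
# nginx-upsync-module.
has_upsync_module() {
    grep -q -e '--add-module=[^ ]*nginx-upsync-module'
}

# Usage on a real host (nginx -V writes to stderr, hence 2>&1):
#   nginx -V 2>&1 | has_upsync_module && echo "upsync module present"
```

If the check fails, the usual cause is that the --add-module path in the configure line did not point at the cloned directory.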

2. Configure the nginx virtual hosts

Configure the virtual host on node71 (node1):

cat /usr/local/nginx/conf/nginx.conf shows:

user nginx;

worker_processes 4;

events {

worker_connections 1024;

}

http {

include mime.types;

default_type application/octet-stream;

sendfile on;

tcp_nopush on;

keepalive_timeout 65;

gzip on;

server {

listen 80;

server_name 192.168.5.71;

location / {

root /usr/share/nginx/html;

index index.html index.htm;

}

}

}

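Create the web page (the document root does not exist after a source build, so create it first):

mkdir -p /usr/share/nginx/html && echo 'node71' > /usr/share/nginx/html/index.html

Configure the virtual host on node73 (node3):

cat /usr/local/nginx/conf/nginx.conf shows: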

user nginx;

worker_processes 4;

events {

worker_connections 1024;

}

http {

include mime.types;

default_type application/octet-stream;

sendfile on;

tcp_nopush on;

keepalive_timeout 65;

gzip on;

server {

listen 80;

server_name 192.168.5.73;

location / {

root /usr/share/nginx/html;

index index.html index.htm;

}

}

}

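Create the web page (the document root does not exist after a source build, so create it first):

mkdir -p /usr/share/nginx/html && echo 'node73' > /usr/share/nginx/html/index.html

Configure the virtual host on node72 (node2):

node72 acts as the load balancer and proxy server here.

cat /usr/local/nginx/conf/nginx.conf shows: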

user nginx;

worker_processes 1;

error_log logs/error.log notice;

pid logs/nginx.pid;

events {

worker_connections 1024;

}

http {

include mime.types;

default_type application/octet-stream;

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"'

'$upstream_addr $upstream_status $upstream_response_time $request_time';

access_log logs/access.log main;

sendfile on;

tcp_nopush on;

keepalive_timeout 65;

gzip on;

upstream pic_backend {

# fallback placeholder entry

# server 192.168.5.71:8080;

# the upsync module pulls the latest upstream info from consul and saves it to a local file

upsync 192.168.5.72:8500/v1/kv/upstreams/pic_backend upsync_timeout=6m upsync_interval=500ms upsync_type=consul strong_dependency=off;

upsync_dump_path /usr/local/nginx/conf/servers/servers_pic_backend.conf;

}

# public-facing load balancer server

server {

listen 80;

server_name 192.168.5.72;

location = / {

proxy_pass http://pic_backend;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

add_header real $upstream_addr;

}

location = /upstream_show {

upstream_show;

}

location = /upstream_status {

stub_status on;

access_log off;

}

}

# fallback backend server

server {

listen 82;

server_name 192.168.5.72;

location / {

root /usr/share/nginx/html82/;

index index.html index.htm;

}

}

}

Create the web page:

mkdir /usr/share/nginx/html82 -p

echo 'fake data in SLB_72' > /usr/share/nginx/html82/index.html

Create the directory for upsync_dump_path (upsync stores the upstream host list it pulls from consul here):

mkdir /usr/local/nginx/conf/servers/

3. Install consul (run on all 3 machines)

cd /root/

mkdir /usr/local/consul/

unzip consul_1.0.0_linux_amd64.zip

mv consul /usr/local/consul/

mkdir /etc/consul.d

cd /usr/local/consul/

echo 'export PATH=/usr/local/consul/:$PATH' >> /etc/profile

source /etc/profile

On node71:

/usr/local/consul/consul agent -server -bootstrap-expect 3 -ui -node=node71 -config-dir=/etc/consul.d --data-dir=/etc/consul.d -bind=192.168.5.71 -client 0.0.0.0

On node72:

/usr/local/consul/consul agent -server -bootstrap-expect 3 -ui -node=node72 -config-dir=/etc/consul.d --data-dir=/etc/consul.d -bind=192.168.5.72 -client 0.0.0.0 -join 192.168.5.71

The -join option makes this node join the cluster through the node at 192.168.5.71.

On node73:

/usr/local/consul/consul agent -server -bootstrap-expect 3 -ui -node=node73 -config-dir=/etc/consul.d --data-dir=/etc/consul.d -bind=192.168.5.73 -client 0.0.0.0 -join 192.168.5.71

Again, -join makes this node join the cluster through the node at 192.168.5.71.

With that, consul is running in the foreground on all 3 hosts.
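The three agent commands differ only in the node name, the bind address, and whether -join is present. As a rough sketch, a small generator can make that explicit; the function name consul_agent_cmd is hypothetical, and it only prints the command line used in these notes rather than starting anything.

```shell
# Hypothetical helper: prints the consul agent command for one node.
# $1 = node name, $2 = bind IP, $3 = optional IP of a node to join.
consul_agent_cmd() {
    local node="$1"
    local bind_ip="$2"
    local join_ip="${3:-}"
    local cmd="/usr/local/consul/consul agent -server -bootstrap-expect 3 -ui"
    cmd="$cmd -node=$node -config-dir=/etc/consul.d --data-dir=/etc/consul.d"
    cmd="$cmd -bind=$bind_ip -client 0.0.0.0"
    # The first node starts without -join; the others join through it.
    if [ -n "$join_ip" ]; then
        cmd="$cmd -join $join_ip"
    fi
    printf '%s\n' "$cmd"
}

# Example: consul_agent_cmd node72 192.168.5.72 192.168.5.71
```

Printing rather than executing keeps the sketch safe to try; the output can be pasted into a terminal or a service unit once it looks right.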

You can run the following on any of the hosts:

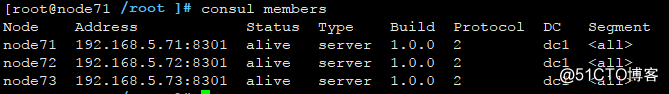

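consul members    lists the status of the nodes in the current cluster

consul info    shows detailed information about the node and cluster (the output is long, so run it yourself to see it)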

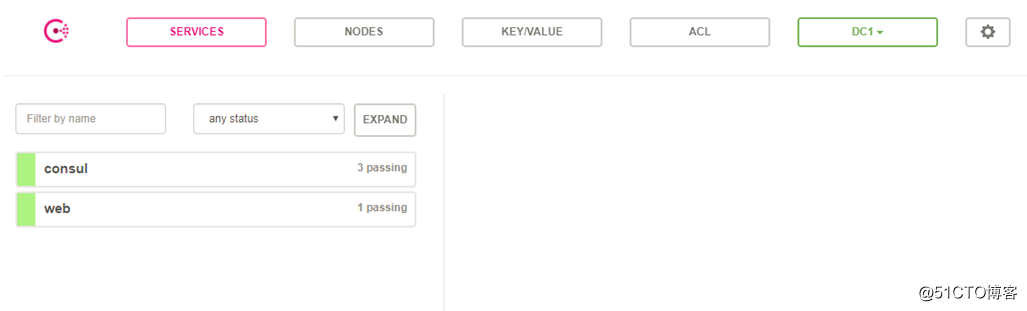

Open consul's built-in web UI:

http://192.168.5.71:8500/ui (the web UI is enabled on all 3 nodes, so any node will do)

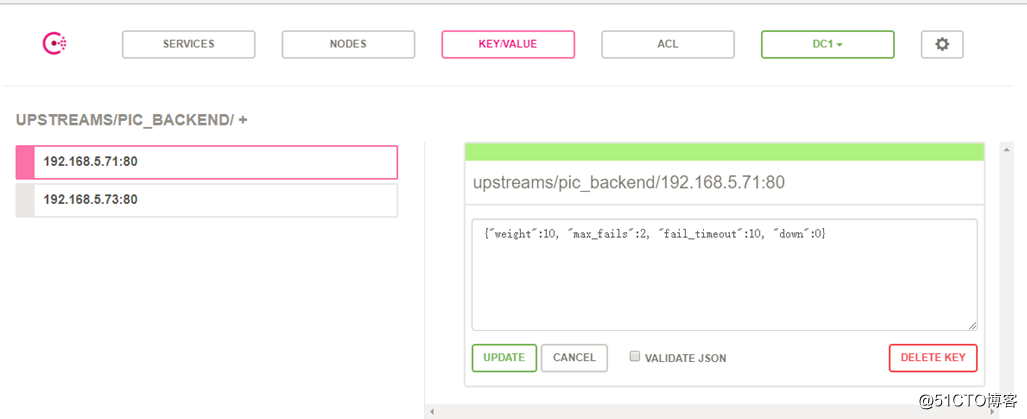

Run the following commands on any node to add 2 key-value entries:

curl -X PUT -d '{"weight":10, "max_fails":2, "fail_timeout":10, "down":0}' http://192.168.5.71:8500/v1/kv/upstreams/pic_backend/192.168.5.71:80

curl -X PUT -d '{"weight":10, "max_fails":2, "fail_timeout":10, "down":0}' http://192.168.5.71:8500/v1/kv/upstreams/pic_backend/192.168.5.73:80
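Since every backend is registered with the same JSON shape, the two commands above can be wrapped in small helpers. This is a minimal sketch: the function names and the CONSUL_ADDR and UPSTREAM variables are hypothetical, and only the key layout and payload fields come from the commands in these notes.

```shell
# Assumed defaults taken from the commands above; override via environment.
CONSUL_ADDR="${CONSUL_ADDR:-192.168.5.71:8500}"
UPSTREAM="${UPSTREAM:-pic_backend}"

# Build the JSON value stored for one backend.
# $1 = weight, $2 = max_fails, $3 = fail_timeout (seconds), $4 = down (0 or 1)
upstream_payload() {
    printf '{"weight":%d, "max_fails":%d, "fail_timeout":%d, "down":%d}' \
        "$1" "$2" "$3" "$4"
}

# Build the KV URL for one backend given as ip:port.
upstream_key_url() {
    printf 'http://%s/v1/kv/upstreams/%s/%s' "$CONSUL_ADDR" "$UPSTREAM" "$1"
}

# Register a backend with the same parameters used in the notes.
register_backend() {
    curl -s -X PUT -d "$(upstream_payload 10 2 10 0)" "$(upstream_key_url "$1")"
}

# Example: register_backend 192.168.5.71:80
```

Keeping the payload and URL builders separate from the curl call makes it easy to check what would be sent before touching the cluster.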

The web UI then shows the new entries:

To delete them:

curl -X DELETE http://192.168.5.71:8500/v1/kv/upstreams/pic_backend/192.168.5.71:80

curl -X DELETE http://192.168.5.71:8500/v1/kv/upstreams/pic_backend/192.168.5.73:80

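To adjust a backend's parameters (the key must sit under the same upstreams/pic_backend prefix that upsync watches):

curl -X PUT -d '{"weight":10, "max_fails":2, "fail_timeout":10, "down":0}' http://192.168.5.71:8500/v1/kv/upstreams/pic_backend/192.168.5.71:80

4. Test consul + nginx scheduling

On node71, node72 and node73, run /usr/local/nginx/sbin/nginx to start the nginx service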

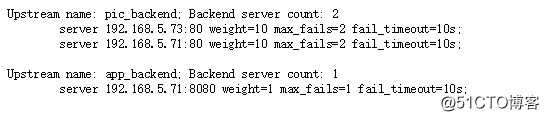

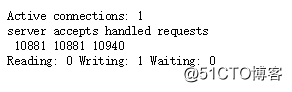

Visit http://192.168.5.72/upstream_show

Visit http://192.168.5.72/upstream_status

In step 3 we ran the following 2 commands, which added 2 entries to consul:

curl -X PUT -d '{"weight":10, "max_fails":2, "fail_timeout":10, "down":0}' http://192.168.5.71:8500/v1/kv/upstreams/pic_backend/192.168.5.71:80

curl -X PUT -d '{"weight":10, "max_fails":2, "fail_timeout":10, "down":0}' http://192.168.5.71:8500/v1/kv/upstreams/pic_backend/192.168.5.73:80

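When nginx on node72 starts, it queries the consul address configured in nginx.conf for the matching upstream information. It also dumps a copy of the upstream configuration into the /usr/local/nginx/conf/servers directory.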

[root@node72 /usr/local/nginx/conf/servers ]# cat servers_pic_backend.conf

server 192.168.5.73:80 weight=10 max_fails=2 fail_timeout=10s;

server 192.168.5.71:80 weight=10 max_fails=2 fail_timeout=10s;
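To compare what is stored in consul with what ends up in the dump file, it helps to know that Consul's KV API returns each value base64-encoded in a Value field. The sketch below is illustrative only: both function names are hypothetical, and dump_line just reproduces the line format visible in the dump above (how a down backend is written is not shown in these notes, so it is not covered).

```shell
# Decode a base64 "Value" field (as returned by Consul's KV API) from stdin.
decode_kv_value() {
    base64 -d
}

# Render one backend in the format seen in servers_pic_backend.conf.
# $1 = ip:port, $2 = weight, $3 = max_fails, $4 = fail_timeout (seconds)
dump_line() {
    printf 'server %s weight=%s max_fails=%s fail_timeout=%ss;\n' \
        "$1" "$2" "$3" "$4"
}

# Example on a real host:
#   curl -s 'http://192.168.5.71:8500/v1/kv/upstreams/pic_backend?recurse'
# then pipe one Value string through decode_kv_value to see the stored JSON.
```

If the decoded values and the dump file disagree, upsync has probably not completed a pull since the last change.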

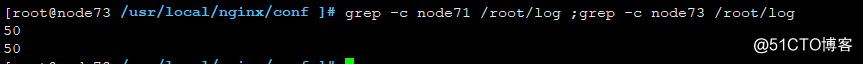

We can test this with a small curl loop:

for i in {1..100} ;do curl http://192.168.5.72/; done > /root/log

grep -c node71 /root/log ;grep -c node73 /root/log

The counts show that the curl requests are distributed round-robin across the backends node71 and node73.
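Rather than running one grep per backend, the log can be tallied in a single pass. A minimal sketch, assuming each response body is a single line such as node71 or node73 (as created earlier); the helper name count_backends is hypothetical.

```shell
# Hypothetical helper: reads response bodies on stdin, one per line, and
# prints "<body> <count>" for each distinct body.
count_backends() {
    sort | uniq -c | awk '{ print $2, $1 }'
}

# Example: count_backends < /root/log
```

With two backends of equal weight, the two counts should be equal or nearly so.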

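Alternatively, print the real response header (the upstream address added by add_header) for each request: for i in {1..100} ;do curl -s -I http://192.168.5.72/|tail -2 |head -1; done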
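Picking the header by position with tail and head depends on the order of the response headers. A slightly more robust sketch selects the real header by name; the helper name extract_real_header is hypothetical, and the header name comes from the add_header directive in the node72 config.

```shell
# Hypothetical helper: reads HTTP response headers on stdin and prints the
# value of the "real" header (the upstream address set by add_header).
extract_real_header() {
    # Strip carriage returns from the CRLF line endings, then match by name.
    tr -d '\r' | awk -F': ' 'tolower($1) == "real" { print $2 }'
}

# Example: curl -s -I http://192.168.5.72/ | extract_real_header
```

Piping the loop output through sort and uniq -c then gives the per-backend distribution by address.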

To take a backend host offline for a deployment, just set the down parameter to 1, for example:

curl -X PUT -d '{"weight":2, "max_fails":2, "fail_timeout":10, "down":1}' http://192.168.5.71:8500/v1/kv/upstreams/pic_backend/192.168.5.73:80

Once the deployment is finished and verified, bring the host back online by setting down to 0 again:

curl -X PUT -d '{"weight":2, "max_fails":2, "fail_timeout":10, "down":0}' http://192.168.5.71:8500/v1/kv/upstreams/pic_backend/192.168.5.73:80

To adjust a backend's upstream parameters online:

curl -X PUT -d '{"weight":2, "max_fails":2, "fail_timeout":10, "down":0}' http://192.168.5.71:8500/v1/kv/upstreams/pic_backend/192.168.5.73:80

Note:

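Every pull from consul sets a connection timeout. When nothing has changed, consul holds the request open for five minutes by default, so the response timeout should be configured to more than five minutes (hence upsync_timeout=6m in the config above). If consul still has not responded after that, the request is treated as timed out.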
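The five-minute hold is Consul's blocking query behaviour: a request that passes the last seen index waits until the data changes or the wait time elapses. The sketch below only illustrates that mechanism with Consul's documented index and wait query parameters; it does not claim to reproduce the exact request the upsync module sends, and the function and variable names are hypothetical.

```shell
# Assumed values, taken from the upsync directive in the node72 config.
CONSUL_ADDR="${CONSUL_ADDR:-192.168.5.72:8500}"
UPSTREAM="${UPSTREAM:-pic_backend}"

# Build a blocking-query URL. $1 = last seen X-Consul-Index value,
# $2 = maximum wait (defaults to 5m, Consul's default).
blocking_query_url() {
    local index="$1"
    local wait="${2:-5m}"
    printf 'http://%s/v1/kv/upstreams/%s?recurse&index=%s&wait=%s' \
        "$CONSUL_ADDR" "$UPSTREAM" "$index" "$wait"
}

# Succeeds only if the client timeout (minutes) is longer than the wait
# (minutes), which is the condition described in the note above.
timeout_covers_wait() {
    [ "$1" -gt "$2" ]
}

# Example: curl -s -i "$(blocking_query_url 42)"   # returns on change or after 5m
```

With upsync_timeout=6m and the default five-minute wait, timeout_covers_wait 6 5 succeeds, so a quiet consul answers before the client gives up.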

Alternatively, the nginx + consul + consul-template architecture can be used to manage the nginx configuration.

For details, see:

https://www.jianshu.com/p/9976e874c099

https://www.jianshu.com/p/a4c04a3eeb57?utm_campaign=maleskine&utm_content=note&utm_medium=seo_notes&utm_source=recommendation

https://www.cnblogs.com/MrCandy/p/7152312.html

https://github.com/hashicorp/consul-template

Official nginx example template: https://github.com/hashicorp/consul-template/blob/master/examples/nginx.md
