Monitoring Hive log files with Flume

阿新 • Published: 2018-04-12

Tags: big data, hadoop, flume, log extraction


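1: Monitoring the Hive log with Flume

1.1 Requirements:

1. Monitor a log file in real time and collect its data into HDFS. This example uses an exec source to follow the file as it grows, a Memory Channel to buffer the events, and an HDFS Sink to write them out.

2. The file monitored here is the Hive log, and its contents are written to a directory on HDFS.

The Hive log directory is set by: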

hive.log.dir = /home/hadoop/yangyang/hive/logs

1.2 Create the collection directory on HDFS:

bin/hdfs dfs -mkdir /flume

1.3 Copy the jars that Flume needs:

cd /home/hadoop/yangyang/hadoop/
cp -p share/hadoop/hdfs/hadoop-hdfs-2.5.0-cdh5.3.6.jar /home/hadoop/yangyang/flume/lib/
cp -p share/hadoop/common/hadoop-common-2.5.0-cdh5.3.6.jar /home/hadoop/yangyang/flume/lib/
cp -p share/hadoop/tools/lib/commons-configuration-1.6.jar /home/hadoop/yangyang/flume/lib/
cp -p share/hadoop/tools/lib/hadoop-auth-2.5.0-cdh5.3.6.jar /home/hadoop/yangyang/flume/lib/
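The copy step can also be written as a small function that reports any jar it cannot find, which helps when the Hadoop version differs from the one used here. This is only a sketch: the function name `copy_flume_jars` is made up for illustration, and the jar list is simply the four files named in this step.

```shell
#!/usr/bin/env bash
# Copy the Hadoop jars needed by the HDFS sink into Flume's lib directory.
# Usage: copy_flume_jars <hadoop_home> <flume_lib_dir>
# Returns non-zero if any of the expected jars is missing.
copy_flume_jars() {
  local hadoop_home="$1" flume_lib="$2" jar missing=0
  for jar in \
    share/hadoop/hdfs/hadoop-hdfs-2.5.0-cdh5.3.6.jar \
    share/hadoop/common/hadoop-common-2.5.0-cdh5.3.6.jar \
    share/hadoop/tools/lib/commons-configuration-1.6.jar \
    share/hadoop/tools/lib/hadoop-auth-2.5.0-cdh5.3.6.jar
  do
    if [ -f "$hadoop_home/$jar" ]; then
      cp -p "$hadoop_home/$jar" "$flume_lib/"
    else
      echo "missing: $hadoop_home/$jar" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

With the paths from this article it would be called as `copy_flume_jars /home/hadoop/yangyang/hadoop /home/hadoop/yangyang/flume/lib`.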

1.4 Create a new configuration file, hive-conf.properties:

cp -p test-conf.properties hive-conf.properties
vim hive-conf.properties

# example.conf: A single-node Flume configuration

# Name the components on this agent
a2.sources = r2
a2.sinks = k2
a2.channels = c2

# Describe/configure the source
# tail -F keeps following hive.log by name after the file is rotated
a2.sources.r2.type = exec
a2.sources.r2.command = tail -F /home/hadoop/yangyang/hive/logs/hive.log
a2.sources.r2.shell = /bin/bash -c

# Describe the sink
a2.sinks.k2.type = hdfs
a2.sinks.k2.hdfs.path = hdfs://namenode01.hadoop.com:8020/flume/%Y%m/%d
a2.sinks.k2.hdfs.fileType = DataStream
a2.sinks.k2.hdfs.writeFormat = Text
a2.sinks.k2.hdfs.batchSize = 10

# Round the timestamp down to the hour when resolving the path escapes
a2.sinks.k2.hdfs.round = true
a2.sinks.k2.hdfs.roundValue = 1
a2.sinks.k2.hdfs.roundUnit = hour

# File roll conditions: every 60 seconds or at about 128 MB, never by event count
a2.sinks.k2.hdfs.rollInterval = 60
a2.sinks.k2.hdfs.rollSize = 128000000
a2.sinks.k2.hdfs.rollCount = 0
a2.sinks.k2.hdfs.useLocalTimeStamp = true
a2.sinks.k2.hdfs.minBlockReplicas = 1

# Use a channel which buffers events in memory
a2.channels.c2.type = memory
a2.channels.c2.capacity = 1000
a2.channels.c2.transactionCapacity = 100

# Bind the source and sink to the channel
a2.sources.r2.channels = c2
a2.sinks.k2.channel = c2

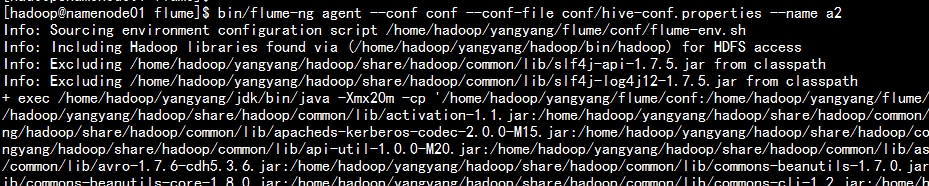
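The escape sequences in hdfs.path (%Y, %m, %d) are filled in from the event timestamp, which here is the agent's local time because useLocalTimeStamp is true. The sketch below previews the directory a pattern would resolve to. It is an approximation under two assumptions: GNU date is available (for `-d @EPOCH`), and only escapes that mean the same thing in strftime (%Y, %m, %d, %H) are used. The helper name `flume_path_preview` is hypothetical.

```shell
#!/usr/bin/env bash
# Preview the directory an HDFS sink path pattern resolves to.
# Usage: flume_path_preview <pattern> <unix_epoch_seconds>
# Assumes GNU date; handles only strftime-compatible escapes (%Y %m %d %H).
flume_path_preview() {
  local pattern="$1" epoch="$2"
  date -d "@$epoch" +"$pattern"
}

now=$(date +%s)
# Pattern used in this configuration: one directory per day.
flume_path_preview "/flume/%Y%m/%d" "$now"
# Variant with %H: one directory per hour.
flume_path_preview "/flume/%Y%m/%d/%H" "$now"
```

Because the configured path stops at %d, rounding the timestamp down to one hour does not change the resulting directory; a pattern that also contains %H, as in the second call, would produce hourly directories.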

1.5 Run the agent

bin/flume-ng agent --conf conf --conf-file conf/hive-conf.properties --name a2
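The value passed to --name has to match the prefix used in the properties file (a2 here); if it does not, the agent starts without any source, channel, or sink. A minimal pre-flight check, assuming the file uses the plain `name.sources = ...` layout shown in this article (the function name `agent_defined` is made up for illustration):

```shell
#!/usr/bin/env bash
# Return 0 if the properties file declares sources, channels and sinks
# for the given agent name, non-zero otherwise.
# Usage: agent_defined <properties_file> <agent_name>
agent_defined() {
  local conf_file="$1" agent="$2" key
  for key in sources channels sinks; do
    grep -Eq "^[[:space:]]*${agent}\.${key}[[:space:]]*=" "$conf_file" || return 1
  done
  return 0
}
```

It could be used as `agent_defined conf/hive-conf.properties a2 && bin/flume-ng agent --conf conf --conf-file conf/hive-conf.properties --name a2 -Dflume.root.logger=INFO,console`, where the last option sends the agent's log output to the console so that file rolls are visible while testing.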

1.6 Write to the Hive log file to test:

cd /home/hadoop/yangyang/hive/logs

echo "111" >> hive.log

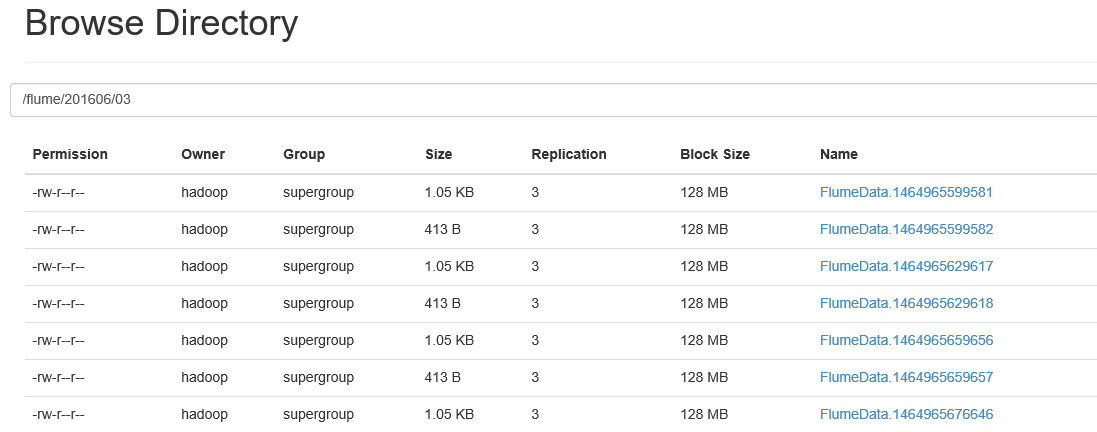
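To repeat the write at intervals without retyping it, a small loop can append timestamped lines to the log. This is only a sketch; the function name `append_test_lines` and the line format are arbitrary choices, not part of Hive or Flume.

```shell
#!/usr/bin/env bash
# Append COUNT test lines to LOG_FILE, sleeping INTERVAL seconds after each write.
# Usage: append_test_lines <log_file> [count] [interval_seconds]
append_test_lines() {
  local log_file="$1" count="${2:-5}" interval="${3:-10}" i=1
  while [ "$i" -le "$count" ]; do
    echo "flume-test line $i $(date '+%Y-%m-%d %H:%M:%S')" >> "$log_file"
    sleep "$interval"
    i=$((i + 1))
  done
}
```

For example, `append_test_lines /home/hadoop/yangyang/hive/logs/hive.log 6 10` writes six lines over about a minute, which is long enough for the 60-second rollInterval to close at least one file.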

Run the command above every so often to generate test data.

1.7 Check the result on HDFS:
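One way to look at the output, sketched under a few assumptions: Hadoop is installed under /home/hadoop/yangyang/hadoop as in step 1.3, the sink keeps Flume's default file prefix FlumeData, and the machine running the check has the same local date as the agent.

```shell
#!/usr/bin/env bash
# List what the agent has written and show the tail of today's files.
# HADOOP_HOME defaults to the install path used earlier in this article.
HADOOP_HOME="${HADOOP_HOME:-/home/hadoop/yangyang/hadoop}"

# Same layout as hdfs.path in the sink configuration: /flume/%Y%m/%d
today_dir="/flume/$(date +%Y%m/%d)"
echo "Looking in $today_dir"

if [ -x "$HADOOP_HOME/bin/hdfs" ]; then
  # Recursive listing of everything under the collection directory.
  "$HADOOP_HOME/bin/hdfs" dfs -ls -R /flume
  # The glob is quoted so that HDFS, not the local shell, expands it.
  "$HADOOP_HOME/bin/hdfs" dfs -cat "$today_dir/FlumeData.*" | tail -n 20
else
  echo "hdfs not found under $HADOOP_HOME; skipping" >&2
fi
```

Files that the sink is still writing carry a .tmp suffix; the suffix is dropped when the file rolls.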
