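Hive Project in Practice: Using Hive to Analyze the Logic Behind How "Yu'e Bao" Makes Big Money Effortlessly

I. Project Background

Two years ago, Alipay launched "Yu'e Bao", which caught countless eyes and attracted a large volume of small deposits. Yu'e Bao raised the return on users' spare cash to an annualized yield of about 4.0%, far above the roughly 0.3% that banks pay on demand deposits, and it is shaking the banks' position of earning money without effort.

In the financial market, an annualized return of around 4%-5% is not hard to obtain either: a "reverse repo" achieves the same. When money is tight (the banks are short of cash), the overnight repo rate can even reach 50%. Then we can happily sit at home and count our money!

What is a reverse repo? Put simply, you (A) lend money to someone else (B), and at maturity B repays you (A) the principal plus interest at the agreed rate. A reverse repo is in itself risk-free (it works much like a bank savings deposit). The interest paid by Ali Finance's much-hyped Yu'e Bao is on par with reverse repo rates, so we can guess that Yu'e Bao's funds are also placed in reverse repos, which keeps liquidity high while providing stable interest.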
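To make the comparison concrete, here is a minimal arithmetic sketch of what lending at a quoted annualized rate earns. It assumes simple interest over a 365-day year and ignores fees. The class name `RepoInterest`, the day-count convention and the 10,000 principal are assumptions made for illustration, not figures from the dataset.

```java
/** Simple-interest arithmetic for lending money at a quoted annualized rate. */
final class RepoInterest {
    // Assumption: simple interest over a 365-day year; real markets may use
    // a different day-count convention and also charge fees.
    static final double DAYS_PER_YEAR = 365.0;

    /** Interest earned on principal lent for the given number of days. */
    static double interest(double principal, double annualRate, int days) {
        return principal * annualRate * days / DAYS_PER_YEAR;
    }

    public static void main(String[] args) {
        double principal = 10_000.0; // hypothetical amount
        System.out.printf("1 year at 4.0%%: %.2f%n", interest(principal, 0.040, 365));
        System.out.printf("1 year at 0.3%%: %.2f%n", interest(principal, 0.003, 365));
        System.out.printf("1 day  at 4.0%%: %.4f%n", interest(principal, 0.040, 1));
    }
}
```

Under these assumptions a full year at 4.0% earns about 400 on 10,000, against about 30 at 0.3%, which is the gap described above.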

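II. Requirements Analysis

Analyze the historical data to find the price pattern, identify the intraday high, and place the reverse repo at that point to earn the highest interest.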

III. Dataset

Click this link to download the dataset.

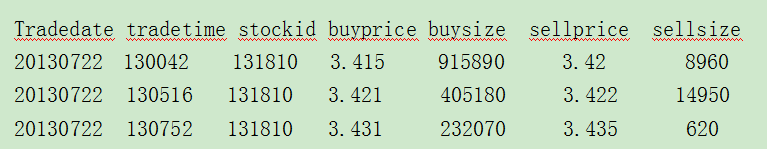

The data format is as follows:

tradedate: trade date

tradetime: trade time

stockid: stock ID

buyprice: buy price

buysize: buy quantity

sellprice: sell price

sellsize: sell quantity
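As a sketch of how one record maps onto these seven fields, the class below parses a single line. It assumes the fields are comma-separated and appear in the order listed. The class name `Quote` and the sample row are hypothetical and do not come from the real file.

```java
/** One row of the dataset, with the seven fields in the order listed above. */
final class Quote {
    final String tradeDate;
    final String tradeTime;
    final String stockId;
    final Double buyPrice;  // null when the field is empty
    final int buySize;
    final Double sellPrice; // null when the field is empty
    final int sellSize;

    Quote(String tradeDate, String tradeTime, String stockId,
          Double buyPrice, int buySize, Double sellPrice, int sellSize) {
        this.tradeDate = tradeDate;
        this.tradeTime = tradeTime;
        this.stockId = stockId;
        this.buyPrice = buyPrice;
        this.buySize = buySize;
        this.sellPrice = sellPrice;
        this.sellSize = sellSize;
    }

    /** Parses one comma-separated line; the column order is an assumption. */
    static Quote parse(String line) {
        String[] f = line.split(",", -1); // -1 keeps trailing empty fields
        if (f.length != 7) {
            throw new IllegalArgumentException("expected 7 fields: " + line);
        }
        return new Quote(f[0].trim(), f[1].trim(), f[2].trim(),
                price(f[3]), Integer.parseInt(f[4].trim()),
                price(f[5]), Integer.parseInt(f[6].trim()));
    }

    /** Returns null for an empty price field instead of failing. */
    private static Double price(String s) {
        String t = s.trim();
        return t.isEmpty() ? null : Double.valueOf(t);
    }

    public static void main(String[] args) {
        // Hypothetical sample row, not taken from the real dataset.
        Quote q = parse("20130725,095101,204001,9.9,100,10.0,200");
        System.out.println(q.stockId + " " + q.tradeDate + " " + q.buyPrice + " " + q.sellPrice);
    }
}
```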

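IV. Approach

Given the requirements, Hive is a suitable tool for the analysis.

1. Import the dataset total.csv into Hive, using the date as the partition key of a partitioned table.

2. Pick a stock code (stockid) and compute the daily highest and lowest price of that product.

3. Using one minute as the smallest unit, compute the average price of the chosen stock for every minute of every day.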

V. Step-by-Step Walkthrough

Step 1: Import the data into Hive

In Hive, create the stock table.

hive> create table if not exists stock(tradedate string, tradetime string, stockid string, buyprice double, buysize int, sellprice string, sellsize int) row format delimited fields terminated by ',' stored as textfile;

Upload the historical stock data to the server and load it from the local file system into Hive.

[hadoop@master bin]$ cd /home/hadoop/test/

[hadoop@master test]$ sudo rz

hive> load data local inpath '/home/hadoop/test/stock.csv' into table stock;

Create the partitioned table stock_partition, using the date as the partition key.

hive> create table if not exists stock_partition(tradetime string, stockid string, buyprice double, buysize int, sellprice string, sellsize int) partitioned by (tradedate string) row format delimited fields terminated by ',';

OK

Time taken: 0.112 seconds

hive> desc stock_partition;

OK

tradetime string

stockid string

buyprice double

buysize int

sellprice string

sellsize int

tradedate string

# Partition Information

# col_name data_type comment

tradedate string

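To use dynamic partitioning, first run the following.

hive> set hive.exec.dynamic.partition.mode=nonstrict;

Create the partitions dynamically by importing the data from the stock table into the stock_partition table.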

hive> insert overwrite table stock_partition partition(tradedate) select tradetime, stockid, buyprice, buysize, sellprice, sellsize, tradedate from stock distribute by tradedate;

Query ID = hadoop_20180524122020_f7a1b61a-84ed-4487-a37e-64ef9c3abc5f

Total jobs = 1

Launching Job 1 out of 1

Number of reduce tasks not specified. Estimated from input data size: 1

In order to change the average load for a reducer (in bytes):

set hive.exec.reducers.bytes.per.reducer=<number>

In order to limit the maximum number of reducers:

set hive.exec.reducers.max=<number>

In order to set a constant number of reducers:

set mapreduce.job.reduces=<number>

Starting Job = job_1527103938304_0002, Tracking URL = http://master:8088/proxy/application_1527103938304_0002/

Kill Command = /opt/modules/hadoop-2.6.0/bin/hadoop job -kill job_1527103938304_0002

Hadoop job information for Stage-1: number of mappers: 1; number of reducers: 1

2018-05-24 12:20:13,931 Stage-1 map = 0%, reduce = 0%

2018-05-24 12:20:21,434 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 2.19 sec

2018-05-24 12:20:40,367 Stage-1 map = 100%, reduce = 100%, Cumulative CPU 5.87 sec

MapReduce Total cumulative CPU time: 5 seconds 870 msec

Ended Job = job_1527103938304_0002

Loading data to table default.stock_partition partition (tradedate=null)

Time taken for load dynamic partitions : 492

Loading partition {tradedate=20130726}

Loading partition {tradedate=20130725}

Loading partition {tradedate=20130724}

Loading partition {tradedate=20130723}

Loading partition {tradedate=20130722}

Time taken for adding to write entity : 6

Partition default.stock_partition{tradedate=20130722} stats: [numFiles=1, numRows=25882, totalSize=918169, rawDataSize=892287]

Partition default.stock_partition{tradedate=20130723} stats: [numFiles=1, numRows=26516, totalSize=938928, rawDataSize=912412]

Partition default.stock_partition{tradedate=20130724} stats: [numFiles=1, numRows=25700, totalSize=907048, rawDataSize=881348]

Partition default.stock_partition{tradedate=20130725} stats: [numFiles=1, numRows=20910, totalSize=740877, rawDataSize=719967]

Partition default.stock_partition{tradedate=20130726} stats: [numFiles=1, numRows=24574, totalSize=862861, rawDataSize=838287]

MapReduce Jobs Launched:

Stage-Stage-1: Map: 1 Reduce: 1 Cumulative CPU: 5.87 sec HDFS Read: 5974664 HDFS Write: 4368260 SUCCESS

Total MapReduce CPU Time Spent: 5 seconds 870 msec

OK

Time taken: 39.826 seconds
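The effect of the dynamic-partition insert can be pictured with a small in-memory sketch: rows are routed to one bucket per tradedate value, and the date is dropped from the stored row because the partition value lives in the partition name rather than in the data files. The class name `DynamicPartitionSketch` and the sample rows are hypothetical. This only illustrates the grouping and is not how Hive is implemented.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** In-memory illustration of routing rows into one bucket per trade date. */
final class DynamicPartitionSketch {
    /**
     * Groups CSV rows whose first column is tradedate. The key mimics a
     * partition name such as "tradedate=20130722"; the stored row no longer
     * contains the date column.
     */
    static Map<String, List<String>> partitionByDate(List<String> rows) {
        Map<String, List<String>> partitions = new TreeMap<>();
        for (String row : rows) {
            int comma = row.indexOf(',');
            if (comma < 0) {
                continue; // skip malformed rows that have no separator
            }
            String tradeDate = row.substring(0, comma);
            String rest = row.substring(comma + 1);
            partitions.computeIfAbsent("tradedate=" + tradeDate, k -> new ArrayList<>()).add(rest);
        }
        return partitions;
    }

    public static void main(String[] args) {
        // Hypothetical rows, not taken from the real dataset.
        List<String> rows = Arrays.asList(
                "20130722,093000,204001,4.0,10,4.05,20",
                "20130723,093000,204001,4.4,10,4.48,20",
                "20130722,093100,204001,4.0,10,4.05,20");
        partitionByDate(rows).forEach((name, data) ->
                System.out.println(name + " -> " + data.size() + " rows"));
    }
}
```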

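Step 2: Write custom Hive UDFs and compute the daily highest and lowest price of stock 204001

A custom Hive UDF, Max, that returns the larger of two values.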

package zimo.hadoop.hive;

import org.apache.hadoop.hive.ql.exec.UDF;

/**

* @function Custom UDF that returns the larger of two values

* @author Zimo

*

*/

public class Max extends UDF{

public Double evaluate(Double a, Double b) {

if(a == null)

a=0.0;

if(b == null)

b=0.0;

if(a >= b){

return a;

} else {

return b;

}

}

}

A custom Hive UDF, Min, that returns the smaller of two values.

package zimo.hadoop.hive;

import org.apache.hadoop.hive.ql.exec.UDF;

/**

* @function Custom UDF that returns the smaller of two values

* @author Zimo

*

*/

public class Min extends UDF{

public Double evaluate(Double a, Double b) {

if(a == null)

a = 0.0;

if(b == null)

b = 0.0;

if(a >= b){

return b;

} else {

return a;

}

}

}
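Both classes above replace a null argument with 0.0. For Min this means that any row with a missing price yields 0.0, which can then surface as the daily low even though it is not a real quote. One possible alternative, sketched here in plain Java without the Hive dependency, is to ignore a null side and return the other value. The class name `NullSafePrice` is hypothetical; in a real UDF the same logic would sit inside `evaluate`.

```java
/** Null-ignoring variants of the two comparisons (plain Java, no Hive classes). */
final class NullSafePrice {
    /** Larger of the two values; a null side is ignored rather than treated as 0.0. */
    static Double max(Double a, Double b) {
        if (a == null) return b;
        if (b == null) return a;
        return Math.max(a, b);
    }

    /** Smaller of the two values; a null side is ignored rather than treated as 0.0. */
    static Double min(Double a, Double b) {
        if (a == null) return b;
        if (b == null) return a;
        return Math.min(a, b);
    }

    public static void main(String[] args) {
        System.out.println(min(2.2, null));  // the missing side is ignored
        System.out.println(max(4.05, 3.9));
        System.out.println(min(null, null)); // stays null when both sides are missing
    }
}
```

Returning null when both inputs are missing lets the surrounding aggregate skip the row, since Hive's built-in max and min ignore NULL values.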

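Package the custom Max and Min classes as maxUDF.jar and minUDF.jar, upload them to a jar/ directory under $HIVE_HOME (here /opt/modules/hive1.0.0/jar), and then register them in Hive as custom UDFs.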

[hadoop@master ~]$ cd $HIVE_HOME

[hadoop@master hive1.0.0]$ sudo mkdir jar/

[hadoop@master hive1.0.0]$ ll

total 408

drwxr-xr-x 4 hadoop hadoop 4096 May 24 06:15 bin

drwxr-xr-x 2 hadoop hadoop 4096 May 24 05:53 conf

drwxr-xr-x 4 hadoop hadoop 4096 May 14 23:28 examples

drwxr-xr-x 7 hadoop hadoop 4096 May 14 23:28 hcatalog

drwxrwxr-x 3 hadoop hadoop 4096 May 24 11:50 iotmp

drwxr-xr-x 2 root root 4096 May 24 12:34 jar

drwxr-xr-x 4 hadoop hadoop 4096 May 14 23:41 lib

-rw-r--r-- 1 hadoop hadoop 23828 Jan 30 2015 LICENSE

drwxr-xr-x 2 hadoop hadoop 4096 May 24 03:36 logs

-rw-r--r-- 1 hadoop hadoop 397 Jan 30 2015 NOTICE

-rw-r--r-- 1 hadoop hadoop 4044 Jan 30 2015 README.txt

-rw-r--r-- 1 hadoop hadoop 345744 Jan 30 2015 RELEASE_NOTES.txt

drwxr-xr-x 3 hadoop hadoop 4096 May 14 23:28 scripts

[hadoop@master hive1.0.0]$ cd jar/

[hadoop@master jar]$ sudo rz

[hadoop@master jar]$ ll

total 8

-rw-r--r-- 1 root root 714 May 24 2018 maxUDF.jar

-rw-r--r-- 1 root root 713 May 24 2018 minUDF.jar

hive> add jar /opt/modules/hive1.0.0/jar/maxUDF.jar;

Added [/opt/modules/hive1.0.0/jar/maxUDF.jar] to class path

Added resources: [/opt/modules/hive1.0.0/jar/maxUDF.jar]

hive> add jar /opt/modules/hive1.0.0/jar/minUDF.jar;

Added [/opt/modules/hive1.0.0/jar/minUDF.jar] to class path

Added resources: [/opt/modules/hive1.0.0/jar/minUDF.jar]

Create the temporary Hive functions maxprice and minprice.

hive> create temporary function maxprice as 'zimo.hadoop.hive.Max';

OK

Time taken: 0.009 seconds

hive> create temporary function minprice as 'zimo.hadoop.hive.Min';

OK

Time taken: 0.004 seconds

Compute the daily highest and lowest price of stock 204001.

hive> select stockid, tradedate, max(maxprice(buyprice,sellprice)), min(minprice(buyprice,sellprice)) from stock_partition where stockid='204001' group by stockid, tradedate;

204001 20130722 4.05 0.0

204001 20130723 4.48 2.2

204001 20130724 4.65 2.205

204001 20130725 11.9 8.7

204001 20130726 12.3 5.2
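The aggregation in this query can be sketched in plain Java: taking the maximum over rows of the larger of buy and sell price is the same as taking the maximum over every buy and sell value of that day, and likewise for the minimum. The class name `DailyHighLow`, the row layout {tradedate, buyprice, sellprice} and the sample values are assumptions for illustration, and missing prices are not handled here.

```java
import java.util.DoubleSummaryStatistics;
import java.util.Map;
import java.util.TreeMap;

/** Per-day high and low over both price columns, mirroring the GROUP BY query. */
final class DailyHighLow {
    /** Each row is {tradedate, buyprice, sellprice}; every price must be present. */
    static Map<String, DoubleSummaryStatistics> highLow(String[][] rows) {
        Map<String, DoubleSummaryStatistics> byDate = new TreeMap<>();
        for (String[] r : rows) {
            DoubleSummaryStatistics stats =
                    byDate.computeIfAbsent(r[0], k -> new DoubleSummaryStatistics());
            stats.accept(Double.parseDouble(r[1])); // buy price
            stats.accept(Double.parseDouble(r[2])); // sell price
        }
        return byDate;
    }

    public static void main(String[] args) {
        // Hypothetical rows, not taken from the real dataset.
        String[][] rows = {
            {"20130722", "4.0", "4.05"},
            {"20130722", "3.9", "4.0"},
            {"20130723", "4.4", "4.48"},
        };
        highLow(rows).forEach((date, s) ->
                System.out.println(date + " high=" + s.getMax() + " low=" + s.getMin()));
    }
}
```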

Step 3: Compute the per-minute average price

Compute the average price of stock 204001 for every minute of every day.

hive> select stockid, tradedate, substring(tradetime,0,4), sum(buyprice+sellprice)/(count(*)*2) from stock_partition where stockid='204001' group by stockid, tradedate, substring(tradetime,0,4);

204001 20130725 0951 9.94375

204001 20130725 0952 9.959999999999999

204001 20130725 0953 10.046666666666667

204001 20130725 0954 10.111041666666667

204001 20130725 0955 10.132500000000002

204001 20130725 0956 10.181458333333333

204001 20130725 0957 10.180625

204001 20130725 0958 10.20340909090909

204001 20130725 0959 10.287291666666667

204001 20130725 1000 10.331041666666668

204001 20130725 1001 10.342500000000001

204001 20130725 1002 10.344375

204001 20130725 1003 10.385

204001 20130725 1004 10.532083333333333

204001 20130725 1005 10.621041666666667

204001 20130725 1006 10.697291666666667

204001 20130725 1007 10.702916666666667

204001 20130725 1008 10.78
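The per-minute average can be checked by hand with a small Java sketch of the same formula, sum(buyprice+sellprice)/(count*2), grouped by date and by the first four characters of tradetime (the HHmm prefix seen in the output above). The class name `MinuteAverage`, the row layout {tradedate, tradetime, buyprice, sellprice} and the sample values are assumptions for illustration.

```java
import java.util.Map;
import java.util.TreeMap;

/** Average of buy and sell prices per (date, minute), mirroring the query above. */
final class MinuteAverage {
    /** Each row is {tradedate, tradetime, buyprice, sellprice}. */
    static Map<String, Double> average(String[][] rows) {
        // key -> {sum of (buy + sell), number of rows}
        Map<String, double[]> acc = new TreeMap<>();
        for (String[] r : rows) {
            String key = r[0] + " " + r[1].substring(0, 4); // date plus HHmm prefix
            double[] a = acc.computeIfAbsent(key, k -> new double[2]);
            a[0] += Double.parseDouble(r[2]) + Double.parseDouble(r[3]);
            a[1] += 1;
        }
        Map<String, Double> result = new TreeMap<>();
        for (Map.Entry<String, double[]> e : acc.entrySet()) {
            double[] a = e.getValue();
            result.put(e.getKey(), a[0] / (a[1] * 2)); // two prices per row
        }
        return result;
    }

    public static void main(String[] args) {
        // Hypothetical rows, not taken from the real dataset.
        String[][] rows = {
            {"20130725", "095101", "9.9", "10.0"},
            {"20130725", "095130", "10.0", "10.1"},
            {"20130725", "095201", "10.0", "10.0"},
        };
        average(rows).forEach((minute, avg) -> System.out.println(minute + " " + avg));
    }
}
```

Dividing by twice the row count works because every row contributes exactly two prices. If either price could be missing, the count would have to be adjusted accordingly.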

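That covers the main content of this part. It reflects my own learning process, and I hope it offers some guidance. If you found it useful, please show your support; if not, please bear with me, and do point out any mistakes. Follow the blog to get updates as soon as they appear. Thanks!

Copyright notice: this is an original article by the blogger and may not be reproduced without the blogger's permission.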
