Case 2 (Building a dual-master, high-availability HAProxy load-balancing system)

1. System architecture and how it works

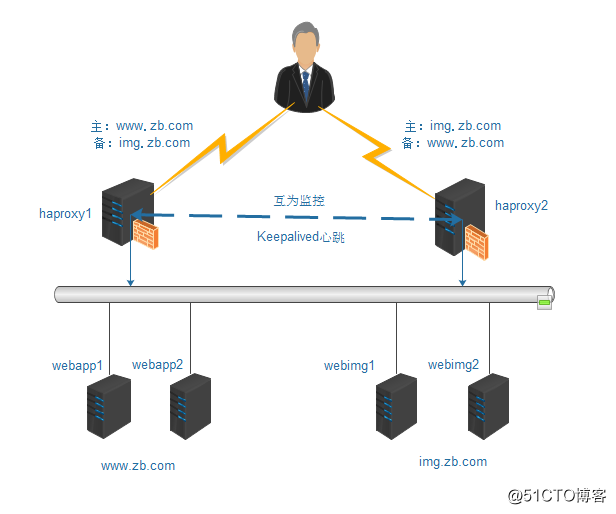

為了能充分利用服務器資源並將負載進行分流,可以在一主一備的基礎上構建雙主互備的高可用HAProxy負載均衡集群系統。雙主互備的集群架構如圖:

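In this architecture, haproxy1 forwards requests for www.zb.com to the hosts webapp1 and webapp2, which load-balances www.zb.com; haproxy2 forwards requests for img.zb.com to the hosts webimg1 and webimg2, which load-balances img.zb.com. If either haproxy1 or haproxy2 fails, user requests are sent to the other, healthy load-balancing node, so both sites stay load-balanced.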

Operating system:

CentOS release 6.7

Address plan:

| Hostname | Physical IP address | Virtual IP address | Cluster role |
| --- | --- | --- | --- |
| haproxy1 | 10.0.0.35 | 10.0.0.40 | Master: www.zb.com; backup: img.zb.com |
| haproxy2 | 10.0.0.36 | 10.0.0.50 | Master: img.zb.com; backup: www.zb.com |
| webapp1 | 10.0.0.150 | None | Backend server |
| webapp2 | 10.0.0.151 | None | Backend server |
| webimg1 | 10.0.0.152 | None | Backend server |
| webimg2 | 10.0.0.8 | None | Backend server |

Note: to make full use of both haproxy1 and haproxy2, traffic is split between them. The domain www.zb.com must resolve to the IP 10.0.0.40, and the domain img.zb.com must resolve to the IP 10.0.0.50.
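The DNS requirement above can be checked from a client machine. The following is only a minimal sketch, assuming Python 3 is available on the client; the helper name `dns_mismatches` and the `EXPECTED` mapping are illustrative and not part of the original setup.

```python
import socket

# Expected name-to-VIP mapping, taken from the address plan above.
EXPECTED = {"www.zb.com": "10.0.0.40", "img.zb.com": "10.0.0.50"}


def dns_mismatches(expected, resolve=socket.gethostbyname):
    """Return {name: resolved_ip_or_None} for names that do not resolve to their VIP.

    `resolve` is injectable so the check can be exercised without real DNS.
    """
    wrong = {}
    for name, vip in expected.items():
        try:
            got = resolve(name)
        except OSError:  # socket.gaierror is a subclass of OSError
            got = None
        if got != vip:
            wrong[name] = got
    return wrong
```

Calling `dns_mismatches(EXPECTED)` returns an empty dict when both names resolve to their VIPs; any entry in the result names a domain whose DNS record (or local hosts entry) still needs fixing.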

Install and configure HAProxy on the haproxy1 and haproxy2 nodes in turn. The finished haproxy.cfg file reads as follows:

global

# to have these messages end up in /var/log/haproxy.log you will

# need to:

#

# 1) configure syslog to accept network log events. This is done

# by adding the '-r' option to the SYSLOGD_OPTIONS in

# /etc/sysconfig/syslog

#

# 2) configure local2 events to go to the /var/log/haproxy.log

# file. A line like the following can be added to

# /etc/sysconfig/syslog

#

# local2.* /var/log/haproxy.log

#

log 127.0.0.1 local2

pidfile /var/run/haproxy.pid

maxconn 4096

user haproxy

group haproxy

daemon

nbproc 1

# turn on stats unix socket

#---------------------------------------------------------------------

# common defaults that all the 'listen' and 'backend' sections will

# use if not designated in their block

#---------------------------------------------------------------------

defaults

mode http

retries 3

timeout connect 5s

timeout client 30s

timeout server 30s

timeout check 2s

listen admin_stats

bind 0.0.0.0:19088

mode http

log 127.0.0.1 local0 err

stats refresh 30s

stats uri /haproxy-status

stats realm welcome login\ Haproxy

stats auth admin:admin

stats hide-version

stats admin if TRUE

#---------------------------------------------------------------------

# main frontend which proxys to the backends

#---------------------------------------------------------------------

frontend www

bind *:80

mode http

option httplog

option forwardfor

log global

acl host_www hdr_dom(host) -i www.zb.com

acl host_img hdr_dom(host) -i img.zb.com

use_backend server_www if host_www

use_backend server_img if host_img

#---------------------------------------------------------------------

# backends: server_www serves www.zb.com, server_img serves img.zb.com

#---------------------------------------------------------------------

backend server_www

mode http

option redispatch

option abortonclose

balance roundrobin

option httpchk GET /index.html

server web01 10.0.0.150:80 weight 6 check inter 2000 rise 2 fall 3

server web02 10.0.0.151:80 weight 6 check inter 2000 rise 2 fall 3

backend server_img

mode http

option redispatch

option abortonclose

balance roundrobin

option httpchk GET /index.html

server webimg1 10.0.0.152:80 weight 6 check inter 2000 rise 2 fall 3

server webimg2 10.0.0.8:80 weight 6 check inter 2000 rise 2 fall 3

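In this HAProxy configuration, ACL rules route the www.zb.com site to the two backend nodes webapp1 and webapp2 (named web01 and web02 in the configuration) and the img.zb.com site to the two backend nodes webimg1 and webimg2, so each site is load-balanced separately.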
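To make the routing decision concrete, here is a small Python model of it. It is a simplified sketch, not HAProxy's implementation: `host_matches` only approximates `hdr_dom(host) -i` as a case-insensitive match on whole dot-separated labels, the rotation ignores health checks, and all function names are made up for illustration. With equal weights, as in the configuration above, round-robin simply alternates between the two servers.

```python
from itertools import cycle

# Backend pools copied from the haproxy.cfg above.
BACKENDS = {
    "server_www": ["10.0.0.150:80", "10.0.0.151:80"],
    "server_img": ["10.0.0.152:80", "10.0.0.8:80"],
}
# ACL patterns in the order the use_backend rules appear.
RULES = [("www.zb.com", "server_www"), ("img.zb.com", "server_img")]


def host_matches(host_header, pattern):
    """Simplified stand-in for `hdr_dom(host) -i`: case-insensitive match
    of the pattern against a run of whole dot-separated labels."""
    labels = host_header.lower().split(":")[0].split(".")
    wanted = pattern.lower().split(".")
    n = len(wanted)
    return any(labels[i:i + n] == wanted for i in range(len(labels) - n + 1))


def make_router():
    """Return route(host) -> (backend, server), rotating servers per backend."""
    rotation = {name: cycle(servers) for name, servers in BACKENDS.items()}

    def route(host_header):
        for pattern, backend in RULES:
            if host_matches(host_header, pattern):
                return backend, next(rotation[backend])
        return None  # no use_backend rule matched (the config sets no default_backend)

    return route


route = make_router()
print(route("www.zb.com"))
print(route("WWW.ZB.COM:80"))
print(route("img.zb.com"))
```

Because both ACLs live in the same frontend on both load balancers, either node can serve either site, which is what lets one node take over the other's VIP.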

Finally, copy the haproxy.cfg file to both haproxy1 and haproxy2, then start the HAProxy service on each of the two load balancers in turn.

3. Install and configure the dual-master Keepalived high-availability system

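Install Keepalived on both nodes in turn. The keepalived.conf file is shown below as configured on haproxy1; the inline comments give the values to use on haproxy2: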

! Configuration File for keepalived

global_defs {

notification_email {

}

notification_email_from [email protected]

smtp_server 192.168.200.1

smtp_connect_timeout 30

router_id LVS_DEVEL

}

vrrp_script check_haproxy {

script "killall -0 haproxy"

interval 2

}

vrrp_instance HAProxy_HA {

state MASTER # on haproxy2, this is BACKUP

interface eth0

virtual_router_id 80 # virtual_router_id is unique to an instance, so it is also 80 on haproxy2

priority 100 # on haproxy2, priority is 80

advert_int 2

authentication {

auth_type PASS

auth_pass aaaa

}

notify_master "/etc/keepalived/mail_notify.sh master"

notify_backup "/etc/keepalived/mail_notify.sh backup"

notify_fault "/etc/keepalived/mail_notify.sh fault"

track_script {

check_haproxy

}

virtual_ipaddress {

10.0.0.40/24 dev eth0

}

}

vrrp_instance HAProxy_HA2 {

state BACKUP # on haproxy2, this is MASTER

interface eth0

virtual_router_id 81 # on haproxy2, virtual_router_id here must also be 81

priority 80 # on haproxy2, priority is 100

advert_int 2

authentication {

auth_type PASS

auth_pass aaaa

}

notify_master "/etc/keepalived/mail_notify.sh master"

notify_backup "/etc/keepalived/mail_notify.sh backup"

notify_fault "/etc/keepalived/mail_notify.sh fault"

track_script {

check_haproxy

}

virtual_ipaddress {

10.0.0.50/24 dev eth0

}

}

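The dual-master configuration has two VIPs. Under normal conditions, 10.0.0.40 is brought up on haproxy1 and 10.0.0.50 on haproxy2. Pay particular attention to two points: the two VRRP instances must use different virtual_router_id values (80 and 81 here), while each instance keeps the same virtual_router_id on both nodes; and within each instance, the MASTER's priority must be higher than the BACKUP's.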
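These consistency rules are easy to get wrong when editing two files by hand. The sketch below encodes the values from the keepalived.conf above (and its comments for haproxy2) as plain Python data and checks them; the `NODES` structure and the `check_vrrp` helper are illustrative assumptions, not a Keepalived feature.

```python
# VRRP settings per node, taken from the keepalived.conf above and its comments.
NODES = {
    "haproxy1": {
        "HAProxy_HA": {"state": "MASTER", "virtual_router_id": 80, "priority": 100},
        "HAProxy_HA2": {"state": "BACKUP", "virtual_router_id": 81, "priority": 80},
    },
    "haproxy2": {
        "HAProxy_HA": {"state": "BACKUP", "virtual_router_id": 80, "priority": 80},
        "HAProxy_HA2": {"state": "MASTER", "virtual_router_id": 81, "priority": 100},
    },
}


def check_vrrp(nodes):
    """Return a list of human-readable problems; an empty list means consistent."""
    problems = []
    instances = sorted({name for cfg in nodes.values() for name in cfg})
    seen_ids = {}  # virtual_router_id -> first instance that used it
    for inst in instances:
        members = [cfg[inst] for cfg in nodes.values() if inst in cfg]
        ids = {m["virtual_router_id"] for m in members}
        # Rule 1: one instance uses the same virtual_router_id on every node.
        if len(ids) != 1:
            problems.append(f"{inst}: virtual_router_id differs between nodes")
        # Rule 2: different instances never share a virtual_router_id.
        for vrid in sorted(ids):
            if vrid in seen_ids:
                problems.append(f"{inst}: virtual_router_id {vrid} also used by {seen_ids[vrid]}")
            else:
                seen_ids[vrid] = inst
        # Rule 3: exactly one MASTER, with a higher priority than every BACKUP.
        masters = [m for m in members if m["state"] == "MASTER"]
        backups = [m for m in members if m["state"] == "BACKUP"]
        if len(masters) != 1:
            problems.append(f"{inst}: expected exactly one MASTER, found {len(masters)}")
        elif any(b["priority"] >= masters[0]["priority"] for b in backups):
            problems.append(f"{inst}: MASTER priority must exceed every BACKUP priority")
    return problems


print(check_vrrp(NODES))
```

For the values used in this case the list of problems is empty; changing one node's virtual_router_id or swapping the priorities makes the corresponding rule report an error.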

Once all configuration changes are complete, start the Keepalived service on haproxy1 and haproxy2 in turn, and check that each VIP is brought up on the expected node.
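One way to check where a VIP currently lives is to look at the addresses bound to eth0 on each node. The sketch below assumes Python 3.7 or later and the iproute2 `ip` command on the node; the helper names and the `SAMPLE` text are illustrative, and the sample is a hand-written example rather than output captured from this cluster.

```python
import re
import subprocess

# Which VIP each node should hold under normal conditions (from the address plan).
EXPECTED_VIP = {"haproxy1": "10.0.0.40", "haproxy2": "10.0.0.50"}


def ipv4_addresses(ip_addr_output):
    """Extract IPv4 addresses from the text printed by `ip -4 addr show`."""
    return re.findall(r"\binet (\d+\.\d+\.\d+\.\d+)/", ip_addr_output)


def vip_present(vip, interface="eth0"):
    """Run `ip -4 addr show dev <interface>` locally and look for the VIP."""
    out = subprocess.run(
        ["ip", "-4", "addr", "show", "dev", interface],
        capture_output=True, text=True, check=True,
    ).stdout
    return vip in ipv4_addresses(out)


# Illustrative text only, shaped like `ip -4 addr show dev eth0` output.
SAMPLE = """\
2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000
    inet 10.0.0.35/24 brd 10.0.0.255 scope global eth0
    inet 10.0.0.40/24 scope global secondary eth0
"""
print(EXPECTED_VIP["haproxy1"] in ipv4_addresses(SAMPLE))
```

On a live node, `vip_present(EXPECTED_VIP["haproxy1"])` run on haproxy1 should be true in normal operation, and after a failover the same VIP should instead be found on haproxy2.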

4. Testing

After starting the HAProxy and Keepalived services on haproxy1 and haproxy2 in turn, first check the Keepalived startup log on the haproxy1 node, which reads:

Jul 25 10:06:34 data-1-1 Keepalived_vrrp[33167]: VRRP_Instance(HAProxy_HA) Transition to MASTER STATE

Jul 25 10:06:36 data-1-1 Keepalived_vrrp[33167]: VRRP_Instance(HAProxy_HA) Entering MASTER STATE

Jul 25 10:06:36 data-1-1 Keepalived_vrrp[33167]: VRRP_Instance(HAProxy_HA) setting protocol VIPs.

Jul 25 10:06:36 data-1-1 Keepalived_vrrp[33167]: VRRP_Instance(HAProxy_HA) Sending gratuitous ARPs on eth0 for 10.0.0.40

Jul 25 10:06:36 data-1-1 Keepalived_healthcheckers[33166]: Netlink reflector reports IP 10.0.0.40 added

Jul 25 10:06:53 data-1-1 Keepalived_vrrp[33167]: VRRP_Instance(HAProxy_HA2) Received higher prio advert

Jul 25 10:06:53 data-1-1 Keepalived_vrrp[33167]: VRRP_Instance(HAProxy_HA2) Entering BACKUP STATE

Next, test failover in the dual-master setup. To simulate a failure, stop the HAProxy service on haproxy1, then watch the Keepalived log on haproxy1:

Jul 25 14:42:09 data-1-1 Keepalived_vrrp[33167]: VRRP_Script(check_haproxy) failed

Jul 25 14:42:10 data-1-1 Keepalived_vrrp[33167]: VRRP_Instance(HAProxy_HA) Entering FAULT STATE

Jul 25 14:42:10 data-1-1 Keepalived_vrrp[33167]: VRRP_Instance(HAProxy_HA) removing protocol VIPs.

Jul 25 14:42:10 data-1-1 Keepalived_vrrp[33167]: VRRP_Instance(HAProxy_HA) Now in FAULT state

Jul 25 14:42:10 data-1-1 Keepalived_healthcheckers[33166]: Netlink reflector reports IP 10.0.0.40 removed

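The failover sequence shows that Keepalived is working as intended: once check_haproxy fails, the HAProxy_HA instance enters the FAULT state and releases the VIP 10.0.0.40.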
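Reading these transitions by eye gets tedious on a busy log, so a small parser can help. This is a minimal sketch that relies only on the `Entering ... STATE` message format visible in the log excerpts above; the `final_states` helper is illustrative, and other Keepalived versions may word these messages differently.

```python
import re

# Matches lines such as: VRRP_Instance(HAProxy_HA) Entering MASTER STATE
ENTERING = re.compile(r"VRRP_Instance\((?P<inst>[^)]+)\) Entering (?P<state>\w+) STATE")


def final_states(log_lines):
    """Return {instance: last state entered} for the given Keepalived log lines."""
    states = {}
    for line in log_lines:
        match = ENTERING.search(line)
        if match:
            states[match.group("inst")] = match.group("state")
    return states


# Lines copied from the startup and failover logs shown above.
startup = [
    "Jul 25 10:06:34 data-1-1 Keepalived_vrrp[33167]: VRRP_Instance(HAProxy_HA) Transition to MASTER STATE",
    "Jul 25 10:06:36 data-1-1 Keepalived_vrrp[33167]: VRRP_Instance(HAProxy_HA) Entering MASTER STATE",
    "Jul 25 10:06:53 data-1-1 Keepalived_vrrp[33167]: VRRP_Instance(HAProxy_HA2) Received higher prio advert",
    "Jul 25 10:06:53 data-1-1 Keepalived_vrrp[33167]: VRRP_Instance(HAProxy_HA2) Entering BACKUP STATE",
]
failover = [
    "Jul 25 14:42:09 data-1-1 Keepalived_vrrp[33167]: VRRP_Script(check_haproxy) failed",
    "Jul 25 14:42:10 data-1-1 Keepalived_vrrp[33167]: VRRP_Instance(HAProxy_HA) Entering FAULT STATE",
]
print(final_states(startup))
print(final_states(failover))
```

For the startup excerpt the helper reports HAProxy_HA as MASTER and HAProxy_HA2 as BACKUP on this node, and for the failover excerpt it reports HAProxy_HA as FAULT, matching the behaviour described in the text.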
