OpenCV: Illumination Compensation and Illumination Removal

阿新 • Published: 2018-10-31

This post borrows heavily from other blogs; treat it as a compilation of notes.

I. Illumination compensation

1. Histogram equalization

    #include "stdafx.h" // Visual Studio precompiled header; remove on other toolchains
    #include <opencv2/opencv.hpp>
    #include <iostream>
    using namespace std;
    using namespace cv;

    int main(int argc, char *argv[])
    {
        // Load as a 3-channel BGR image.
        Mat image = imread("D://vvoo//123.jpg", 1);
        if (image.empty())
        {
            cout << "image loading error" << endl;
            return -1;
        }

        // Equalize each colour channel independently, then merge them back.
        Mat imageRGB[3];
        split(image, imageRGB);
        for (int i = 0; i < 3; i++)
        {
            equalizeHist(imageRGB[i], imageRGB[i]);
        }
        merge(imageRGB, 3, image);

        imshow("equalizeHist", image);
        waitKey();
        return 0;
    }

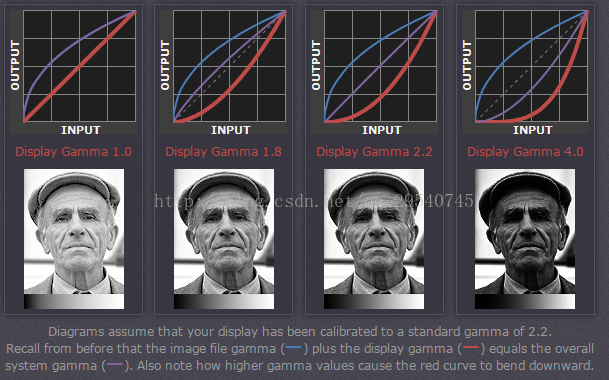
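To make it clearer what equalization does to a single channel, here is a minimal sketch of the textbook CDF mapping using only the standard library. It is not OpenCV's source code; `equalizeChannel` is a hypothetical helper name, and the channel is assumed to be a flat vector of 8-bit values.

```cpp
#include <array>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Histogram-equalize one 8-bit channel stored as a flat vector.
// Mapping: out = round(255 * (cdf(v) - cdfMin) / (N - cdfMin)).
std::vector<std::uint8_t> equalizeChannel(const std::vector<std::uint8_t>& src) {
    std::array<std::size_t, 256> hist{};
    for (std::uint8_t v : src) ++hist[v];

    // Cumulative histogram; cdfMin is the CDF at the first non-empty bin.
    std::array<std::size_t, 256> cdf{};
    std::size_t running = 0, cdfMin = 0;
    for (int v = 0; v < 256; ++v) {
        running += hist[v];
        cdf[v] = running;
        if (cdfMin == 0 && running > 0) cdfMin = running;
    }

    const std::size_t n = src.size();
    if (n == 0 || n == cdfMin) return src;  // empty or constant input: nothing to stretch

    std::vector<std::uint8_t> dst(n);
    for (std::size_t i = 0; i < n; ++i) {
        const double t = double(cdf[src[i]] - cdfMin) / double(n - cdfMin);
        dst[i] = static_cast<std::uint8_t>(std::lround(255.0 * t));
    }
    return dst;
}
```

A channel whose values are squeezed into 100..102 comes out spread over the full 0..255 range, which is why a dull image gains contrast after equalization.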

2. Gamma correction

http://www.cambridgeincolour.com/tutorials/gamma-correction.htm

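Human vision responds to incoming light roughly along a curve with gamma < 1, so it is more sensitive to differences in dark tones than in bright ones.

The program below reads frames from the default camera, raises every pixel to the power gamma = 4, rescales the result to 0~255, and shows it next to the original image.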

    #include <opencv2/opencv.hpp>
    #include <iostream>
    using namespace std;
    using namespace cv;

    // Normalizes a given image into a value range between 0 and 255.
    Mat norm(const Mat& src)
    {
        // Create and return normalized image:
        Mat dst;
        switch (src.channels())
        {
        case 1:
            cv::normalize(src, dst, 0, 255, NORM_MINMAX, CV_8UC1);
            break;
        case 3:
            cv::normalize(src, dst, 0, 255, NORM_MINMAX, CV_8UC3);
            break;
        default:
            src.copyTo(dst);
            break;
        }
        return dst;
    }

    int main()
    {
        Mat image, X, I;
        VideoCapture cap(0);
        if (!cap.isOpened())
        {
            cout << "camera open error" << endl;
            return -1;
        }
        while (true)
        {
            cap >> image;
            if (image.empty()) break;    // no frame delivered
            image.convertTo(X, CV_32F);  // convert to float; the channel count is kept
            float gamma = 4;
            pow(X, gamma, I);            // element-wise power
            imshow("Original Image", image);
            imshow("Gamma correction image", norm(I));
            char key = waitKey(30);
            if (key == 'q') break;
        }
        return 0;
    }
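A common alternative to the float conversion above is to precompute a 256-entry table for out = 255 * (in / 255)^gamma and look every pixel up in it. The sketch below builds such a table with the standard library only; `gammaLut` is a hypothetical name, and with OpenCV the table could be applied through `cv::LUT`.

```cpp
#include <array>
#include <cmath>
#include <cstdint>

// Build a 256-entry lookup table for out = 255 * (in / 255)^gamma.
// gamma < 1 brightens mid-tones, gamma > 1 darkens them.
std::array<std::uint8_t, 256> gammaLut(double gamma) {
    std::array<std::uint8_t, 256> lut{};
    for (int v = 0; v < 256; ++v) {
        const double normalized = v / 255.0;  // map 0..255 to 0..1
        lut[v] = static_cast<std::uint8_t>(
            std::lround(255.0 * std::pow(normalized, gamma)));
    }
    return lut;
}
```

Because the input is normalized to 0..1 first, black and white stay fixed and only the tones in between move, so no min-max rescaling is needed afterwards.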

3. Laplacian enhancement

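The author found the results of this method poor. The kernel used here is the common 4-neighbour sharpening kernel, whose coefficients sum to 1 so that flat regions keep their brightness.

    #include <opencv2/opencv.hpp>
    #include <iostream>
    using namespace std;
    using namespace cv;

    int main(int argc, char *argv[])
    {
        Mat image = imread("D://vvoo//123.jpg", 1);
        if (image.empty())
        {
            cout << "image loading error" << endl;
            return -1;
        }
        imshow("Original", image);

        Mat imageEnhance;
        // Identity plus negated Laplacian: centre 5, four direct neighbours -1.
        Mat kernel = (Mat_<float>(3, 3) << 0, -1, 0, -1, 5, -1, 0, -1, 0);
        // Keep the source depth; results are saturated to 0~255.
        filter2D(image, imageEnhance, image.depth(), kernel);

        imshow("Laplacian enhancement", imageEnhance);
        imwrite("C://Users//TOPSUN//Desktop//123.jpg", imageEnhance);
        waitKey();
        return 0;
    }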

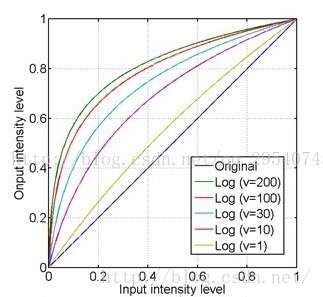
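A sharpening kernel derived from the Laplacian is usually written with 5 in the centre and -1 on the four direct neighbours. The sketch below applies it to a small grayscale grid without OpenCV, to show that flat regions pass through unchanged while local differences are amplified. `Grid` and `sharpen` are hypothetical names, and border pixels are simply copied, which is a simplification.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

using Grid = std::vector<std::vector<int>>;

// Apply the 4-neighbour sharpening kernel
//    0 -1  0
//   -1  5 -1
//    0 -1  0
// to the interior of a grayscale grid; border pixels are copied unchanged.
Grid sharpen(const Grid& src) {
    Grid dst = src;
    for (std::size_t y = 1; y + 1 < src.size(); ++y) {
        for (std::size_t x = 1; x + 1 < src[y].size(); ++x) {
            const int v = 5 * src[y][x] - src[y - 1][x] - src[y + 1][x]
                        - src[y][x - 1] - src[y][x + 1];
            dst[y][x] = std::max(0, std::min(255, v));  // saturate to 0..255
        }
    }
    return dst;
}
```

The coefficients sum to 1, so a uniform patch keeps its value. A kernel whose coefficients sum to more than 1 scales overall brightness by that sum and tends to saturate the image.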

4. Logarithmic transform

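Logarithmic enhancement is a common image-enhancement method. Its formula is S = c log(r + 1), where r is the input intensity and c is a constant. The code below uses c = 255 / log(256), so that r = 255 maps to S = 255 while everything darker is raised, which brightens the whole image. The base of the logarithm, v, defaults to e here, i.e. S = c ln(r + 1).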

The logarithm maps dark pixels to much brighter values while leaving bright pixels almost unchanged, so the image as a whole becomes brighter.
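To put numbers on this, here is a small standard-library sketch that tabulates S = c ln(r + 1) with c = 255 / ln(256) for every 8-bit input. `logLut` is a hypothetical helper name, not an OpenCV function.

```cpp
#include <array>
#include <cmath>
#include <cstdint>

// Lookup table for S = c * ln(r + 1) with c = 255 / ln(256),
// so that r = 0 maps to 0 and r = 255 maps to 255.
std::array<std::uint8_t, 256> logLut() {
    const double c = 255.0 / std::log(256.0);
    std::array<std::uint8_t, 256> lut{};
    for (int r = 0; r < 256; ++r) {
        lut[r] = static_cast<std::uint8_t>(std::lround(c * std::log(r + 1.0)));
    }
    return lut;
}
```

With this table an input of 10 maps to about 110, while an input of 200 only moves to about 244: dark values are lifted strongly and bright values barely change.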

    #include <opencv2/opencv.hpp>
    #include <cmath>
    #include <iostream>
    using namespace std;
    using namespace cv;

    int main(int argc, char *argv[])
    {
        // Scale factor c = 255 / ln(256), so that r = 255 maps to S = 255.
        double temp = 255 / log(256.0);
        cout << "temp = " << temp << endl;

        Mat image = imread("D://vvoo//123.jpg", 1);
        if (image.empty())
        {
            cout << "image loading error" << endl;
            return -1;
        }

        Mat imageLog(image.size(), CV_32FC3);
        for (int i = 0; i < image.rows; i++)
        {
            for (int j = 0; j < image.cols; j++)
            {
                // S = c * ln(r + 1), applied to each of the B, G, R channels.
                for (int k = 0; k < 3; k++)
                {
                    imageLog.at<Vec3f>(i, j)[k] = temp * log(1.0 + image.at<Vec3b>(i, j)[k]);
                }
            }
        }

        // Normalize to 0~255.
        normalize(imageLog, imageLog, 0, 255, NORM_MINMAX);
        // Convert to an 8-bit image for display.
        convertScaleAbs(imageLog, imageLog);

        int channel = image.channels();
        cout << channel << endl;

        imshow("Source", image);
        imshow("after", imageLog);
        imwrite("C://Users//TOPSUN//Desktop//123.jpg", imageLog);
        waitKey();
        return 0;
    }

II. Illumination removal

5. RGB normalization

This supposedly removes the effect of illumination, but my own implementation gave very poor results.

    #include <opencv2/opencv.hpp>
    #include <iostream>
    using namespace std;
    using namespace cv;

    int main(int argc, char *argv[])
    {
        Mat image = imread("D://vvoo//sun_face.jpg", 1);
        if (image.empty())
        {
            cout << "image loading error" << endl;
            return -1;
        }
        imshow("Original", image);

        Mat src(image.size(), CV_32FC3);
        for (int i = 0; i < image.rows; i++)
        {
            for (int j = 0; j < image.cols; j++)
            {
                const Vec3b& px = image.at<Vec3b>(i, j);
                // Channel sum; the small constant avoids division by zero on black pixels.
                float sum = (float)px[0] + (float)px[1] + (float)px[2] + 0.01f;
                // Each channel becomes its share of the total intensity, scaled to 0~255.
                for (int k = 0; k < 3; k++)
                {
                    src.at<Vec3f>(i, j)[k] = 255 * (float)px[k] / sum;
                }
            }
        }

        normalize(src, src, 0, 255, NORM_MINMAX);
        convertScaleAbs(src, src);

        imshow("rgb", src);
        imwrite("C://Users//TOPSUN//Desktop//123.jpg", src);
        waitKey(0);
        return 0;
    }

6. Another way to remove illumination

    #include <opencv2/opencv.hpp>
    #include <cmath>
    #include <iostream>
    using namespace std;
    using namespace cv;

    // Compensates uneven lighting in place. Colour input is converted to grayscale first.
    void unevenLightCompensate(Mat &image, int blockSize)
    {
        if (image.channels() == 3) cvtColor(image, image, COLOR_BGR2GRAY);
        double average = mean(image)[0]; // global mean brightness

        // Number of blocks along each axis (the last block may be smaller).
        int rows_new = (int)ceil(double(image.rows) / double(blockSize));
        int cols_new = (int)ceil(double(image.cols) / double(blockSize));

        // Mean brightness of every block.
        Mat blockImage = Mat::zeros(rows_new, cols_new, CV_32FC1);
        for (int i = 0; i < rows_new; i++)
        {
            for (int j = 0; j < cols_new; j++)
            {
                int rowmin = i * blockSize;
                int rowmax = (i + 1) * blockSize;
                if (rowmax > image.rows) rowmax = image.rows;
                int colmin = j * blockSize;
                int colmax = (j + 1) * blockSize;
                if (colmax > image.cols) colmax = image.cols;

                Mat imageROI = image(Range(rowmin, rowmax), Range(colmin, colmax));
                blockImage.at<float>(i, j) = (float)mean(imageROI)[0];
            }
        }

        // Deviation of each block from the global mean, upsampled to the full image size.
        blockImage = blockImage - average;
        Mat blockImage2;
        resize(blockImage, blockImage2, image.size(), 0, 0, INTER_CUBIC);

        // Subtract the illumination map and convert back to 8 bits.
        Mat image2;
        image.convertTo(image2, CV_32FC1);
        Mat dst = image2 - blockImage2;
        dst.convertTo(image, CV_8UC1);
    }

    int main(int argc, char *argv[])
    {
        Mat image = imread("C://Users//TOPSUN//Desktop//2.jpg", 1);
        if (image.empty())
        {
            cout << "image loading error" << endl;
            return -1;
        }
        imshow("Original", image);

        unevenLightCompensate(image, 12);

        imshow("compensated", image);
        imwrite("C://Users//TOPSUN//Desktop//123.jpg", image);
        waitKey(0);
        return 0;
    }
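The same idea is easier to follow in one dimension. The sketch below is a simplified 1-D analogue using only the standard library: it computes block means, takes their offset from the global mean, and subtracts that offset. It assumes nearest-neighbour upsampling of the block offsets instead of the bicubic `resize` used above, and `compensate1D` is a hypothetical name.

```cpp
#include <algorithm>
#include <cstddef>
#include <numeric>
#include <vector>

// 1-D analogue of block-based uneven-light compensation.
// Each block is shifted so that its mean matches the global mean.
std::vector<double> compensate1D(const std::vector<double>& signal, std::size_t blockSize) {
    if (signal.empty() || blockSize == 0) return signal;
    const double globalMean =
        std::accumulate(signal.begin(), signal.end(), 0.0) / signal.size();

    std::vector<double> out(signal.size());
    for (std::size_t start = 0; start < signal.size(); start += blockSize) {
        const std::size_t end = std::min(start + blockSize, signal.size());
        const double blockMean =
            std::accumulate(signal.begin() + start, signal.begin() + end, 0.0) / (end - start);
        // Offset of this block's brightness from the global brightness.
        const double offset = blockMean - globalMean;
        for (std::size_t i = start; i < end; ++i) out[i] = signal[i] - offset;
    }
    return out;
}
```

For a signal that is dark on the left (10) and bright on the right (50), a block size of 4 over 8 samples yields a flat output at the global mean of 30. The smooth interpolation in the 2-D version serves to avoid visible seams between blocks, which this nearest-neighbour sketch does not attempt.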

7. Yet another one I found

    #include <opencv2/opencv.hpp>
    #include <algorithm>
    #include <cstdio>
    using namespace cv;

    // Subtracts an estimated specular (highlight) component from every pixel.
    // src must be an 8-bit, 3-channel image; dst receives the result.
    int highlight_remove_Chi(const Mat& src, Mat& dst)
    {
        dst.create(src.size(), src.type());
        for (int i = 0; i < src.rows; i++)
        {
            const Vec3b* srcData = src.ptr<Vec3b>(i);
            Vec3b* dstData = dst.ptr<Vec3b>(i);
            for (int j = 0; j < src.cols; j++)
            {
                // imread stores pixels as B, G, R; the formulas are symmetric in the channels.
                double B = srcData[j][0], G = srcData[j][1], R = srcData[j][2];
                double sum = R + G + B;
                double MaxC = std::max(std::max(R, G), B); // compute the maximum of the rgb channels
                double MinC = std::min(std::min(R, G), B);
                if (sum == 0 || MaxC == MinC)
                {
                    // Black or gray pixel: the formulas below divide by zero, so copy it unchanged.
                    dstData[j] = srcData[j];
                    continue;
                }

                // Chromaticity of each channel.
                double alpha_r = R / sum;
                double alpha_g = G / sum;
                double alpha_b = B / sum;
                double minalpha = std::min(std::min(alpha_r, alpha_g), alpha_b);

                // gama is used to approximate beta, the diffuse reflection coefficient.
                double gama_r = (alpha_r - minalpha) / (1 - 3 * minalpha);
                double gama_g = (alpha_g - minalpha) / (1 - 3 * minalpha);
                double gama_b = (alpha_b - minalpha) / (1 - 3 * minalpha);
                double gama = std::max(std::max(gama_r, gama_g), gama_b);

                // Estimated specular component, removed equally from all channels.
                double temp = (gama * sum - MaxC) / (3 * gama - 1);
                dstData[j][0] = saturate_cast<uchar>(B - temp);
                dstData[j][1] = saturate_cast<uchar>(G - temp);
                dstData[j][2] = saturate_cast<uchar>(R - temp);
            }
        }
        imshow("src", src);
        imshow("dst", dst);
        return 1;
    }

    int main()
    {
        Mat src = imread("C://Users//TOPSUN//Desktop//2.jpg");
        if (src.empty())
        {
            printf("Please make sure the input image is valid.");
            return -1;
        }
        Mat dst;
        highlight_remove_Chi(src, dst);
        imwrite("C://Users//TOPSUN//Desktop//123.jpg", dst);
        waitKey(0);
        return 0;
    }

To be continued...