GStreamer Tutorial: Dynamic Pipelines

Author: 阿新 | Published: 2018-11-07

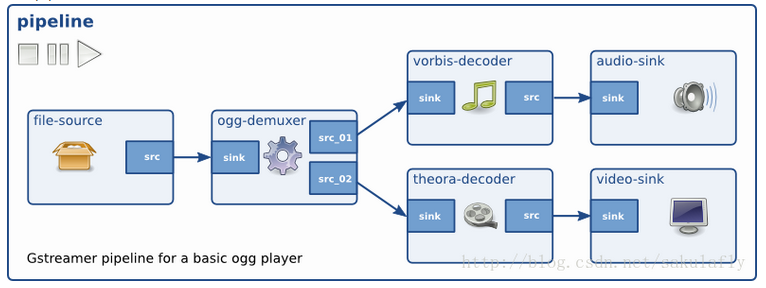

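Until a demuxer has looked at the container file, it cannot know what work it will need to do, so it cannot create the corresponding outputs. For example, it only learns whether a file holds an audio stream, a video stream, or both after reading the container headers. In other words, when the pipeline starts, the demuxer has no source pads for other elements to link to.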

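The solution is to build the pipeline anyway: link the source to the demuxer and start it running. Once the demuxer has received data, it has enough information to create its source pads. At that point we link the rest of the pipeline to the demuxer's newly created pads, completing the pipeline.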
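To make the idea concrete without depending on GStreamer, here is a toy model in plain C. All names (ToyDemuxer, toy_demuxer_push_header, count_pads) are hypothetical and are not GStreamer API: the "demuxer" owns no source pads until it receives the stream header, then announces each new pad through a callback, much as a real demuxer emits pad-added. The comma-separated header format is invented for this sketch.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Toy model, not GStreamer code: every name here is hypothetical. */
#define MAX_PADS 4

/* Callback type playing the role of a "pad-added" handler. */
typedef void (*PadAddedFunc)(const char *pad_name, void *user_data);

typedef struct {
  int n_pads;                  /* stays 0 until the header has been parsed */
  char pads[MAX_PADS][32];     /* names of the source pads created so far */
  PadAddedFunc on_pad_added;   /* handler registered by the application */
  void *user_data;             /* passed unchanged to every handler call */
} ToyDemuxer;

/* Called when the first data arrives. `header` lists the streams found in
 * the container as comma-separated types, e.g. "audio,video" (an invented
 * format). One pad is created and announced per stream. */
static void toy_demuxer_push_header(ToyDemuxer *demux, const char *header) {
  char copy[128];
  assert(demux != NULL && header != NULL);
  snprintf(copy, sizeof copy, "%s", header);
  for (char *tok = strtok(copy, ","); tok != NULL && demux->n_pads < MAX_PADS;
       tok = strtok(NULL, ",")) {
    char *name = demux->pads[demux->n_pads];
    snprintf(name, sizeof demux->pads[0], "%.16s_%d", tok, demux->n_pads);
    demux->n_pads++;
    if (demux->on_pad_added != NULL)
      demux->on_pad_added(name, demux->user_data);
  }
}

/* Example handler: counts announced pads through user_data. */
static void count_pads(const char *pad_name, void *user_data) {
  (void)pad_name;
  (*(int *)user_data)++;
}
```

Before toy_demuxer_push_header() runs, n_pads is 0 and there is nothing to link; after it runs with "audio,video", the handler has been called twice, once for "audio_0" and once for "video_1".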

g_signal_connect()

`#define g_signal_connect(instance, detailed_signal, c_handler, data)`

Connects a GCallback function to a signal for a particular object. The handler will be called before the default handler of the signal.

| Parameter | Description |
|---|---|
| instance | the instance to connect to |
| detailed_signal | a string of the form "signal-name::detail" |
| c_handler | the GCallback to connect |
| data | data to pass to c_handler calls |
| Returns | the handler id |
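The detailed_signal argument may carry an optional detail after `::`. For example, "notify::uri" connects only to change notifications for the uri property, while the tutorial below passes plain "pad-added". The sketch uses a hypothetical helper, split_detailed_signal(), which is not part of GObject, to split such a string into its two parts and illustrate the format. GObject does its own parsing and also checks the signal name against the instance's type.

```c
#include <assert.h>
#include <string.h>

/* Hypothetical helper: splits "signal-name::detail" into its two parts.
 * Returns 1 on success, 0 if either output buffer is too small.
 * A string without "::" yields the whole string as the name and an
 * empty detail. */
static int split_detailed_signal(const char *detailed,
                                 char *name, size_t name_len,
                                 char *detail, size_t detail_len) {
  assert(detailed != NULL && name != NULL && detail != NULL);
  const char *sep = strstr(detailed, "::");
  size_t n = sep ? (size_t)(sep - detailed) : strlen(detailed);
  const char *rest = sep ? sep + 2 : "";

  /* Leave room for the terminating NUL in both buffers. */
  if (n >= name_len || strlen(rest) >= detail_len)
    return 0;
  memcpy(name, detailed, n);
  name[n] = '\0';
  strcpy(detail, rest);
  return 1;
}
```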

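Elements can only move between adjacent states: NULL ↔ READY ↔ PAUSED ↔ PLAYING. You cannot go directly from NULL to PLAYING; however, if you set the pipeline to PLAYING, GStreamer performs the intermediate transitions for you.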

Most applications set the pipeline to PLAYING to start playback, switch to PAUSED to implement pausing, and return to NULL when exiting.

Source: <http://blog.csdn.net/sakulafly/article/details/20936067>
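The adjacency rule can be sketched as a small plain-C model. Given a start state and a target state, it lists every state passed through on the way. The State enum is our own simplified copy of NULL/READY/PAUSED/PLAYING and is not the real GstState; GStreamer performs these steps itself, and the model only shows which ones happen.

```c
#include <assert.h>
#include <stddef.h>

/* Our own simplified copy of the four element states, in order.
 * These are NOT the real GstState constants. */
typedef enum { S_NULL, S_READY, S_PAUSED, S_PLAYING } State;

/* Writes into `out` each state entered when going from `from` to `to`
 * one adjacent step at a time; returns how many steps were written. */
static size_t plan_transitions(State from, State to, State *out, size_t max) {
  size_t n = 0;
  int step = (to > from) ? 1 : -1;
  assert(out != NULL || max == 0);
  for (int s = (int)from; s != (int)to && n < max; ) {
    s += step;               /* move to the neighbouring state only */
    out[n++] = (State)s;
  }
  return n;
}
```

Starting playback from NULL passes through READY and PAUSED before reaching PLAYING, and shutting down on exit walks the same path in reverse.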

#include <gst/gst.h>

/* Structure to contain all our information, so we can pass it to callbacks */

typedef struct _CustomData {

GstElement *pipeline;

GstElement *source;

GstElement *convert;

GstElement *sink;

} CustomData;

/* Handler for the pad-added signal */

static void pad_added_handler (GstElement *src, GstPad *pad, CustomData *data);

int main(int argc, char *argv[]) {

CustomData data;

GstBus *bus;

GstMessage *msg;

GstStateChangeReturn ret;

gboolean terminate = FALSE;

/* Initialize GStreamer */

gst_init (&argc,&argv);

/* Create the elements */

data.source = gst_element_factory_make ("uridecodebin","source");

data.convert = gst_element_factory_make ("audioconvert","convert");

data.sink = gst_element_factory_make ("autoaudiosink","sink");

/*

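uridecodebin internally creates whatever elements it needs to turn a URI into raw audio and/or video streams; it does roughly half of the work of playbin2 (called playbin in GStreamer 1.x). Because it contains demuxers, its source pads are not available at startup; we will deal with them later.

audioconvert converts between different audio formats. It is used here so that the example works on any platform, since the format produced by the audio decoder may differ from what the audio sink expects.

autoaudiosink sends the audio stream it receives straight to the sound card.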

*/

/* Create the empty pipeline */

data.pipeline = gst_pipeline_new ("test-pipeline");

if (!data.pipeline || !data.source || !data.convert || !data.sink) {

g_printerr ("Not all elements could be created.\n");

return -1;

}

/* Build the pipeline. Note that we are NOT linking the source at this

* point. We will do it later. */

gst_bin_add_many (GST_BIN (data.pipeline), data.source, data.convert, data.sink, NULL);

/* Link convert to sink now; the source has no source pad yet, so it is linked later */

if (!gst_element_link (data.convert, data.sink)) {

g_printerr("Elements could not be linked.\n");

gst_object_unref (data.pipeline);

return -1;

}

/* Set the URI to play */

g_object_set(data.source, "uri", "http://docs.gstreamer.com/media/sintel_trailer-480p.webm",NULL);

/* Connect to the pad-added signal */

/* Once the source element has received enough data, it automatically creates its source pads and emits the "pad-added" signal */

g_signal_connect(data.source, "pad-added", G_CALLBACK (pad_added_handler),&data);

/* Start playing */

ret = gst_element_set_state(data.pipeline, GST_STATE_PLAYING);

if (ret == GST_STATE_CHANGE_FAILURE) {

g_printerr("Unable to set the pipeline to the playing state.\n");

gst_object_unref(data.pipeline);

return -1;

}

/* Listen to the bus */

bus = gst_element_get_bus (data.pipeline);

do {

msg = gst_bus_timed_pop_filtered (bus, GST_CLOCK_TIME_NONE,

GST_MESSAGE_STATE_CHANGED | GST_MESSAGE_ERROR | GST_MESSAGE_EOS);

/* Parse message */

if (msg != NULL) {

GError *err;

gchar *debug_info;

switch(GST_MESSAGE_TYPE (msg)) {

case GST_MESSAGE_ERROR:

gst_message_parse_error (msg, &err, &debug_info);

g_printerr("Error received from element %s: %s\n", GST_OBJECT_NAME(msg->src), err->message);

g_printerr("Debugging information: %s\n", debug_info ? debug_info :"none");

g_clear_error(&err);

g_free(debug_info);

terminate = TRUE;

break;

case GST_MESSAGE_EOS:

g_print ("End-Of-Stream reached.\n");

terminate = TRUE;

break;

case GST_MESSAGE_STATE_CHANGED:

/* We are only interested in state-changed messages from the pipeline */

if (GST_MESSAGE_SRC (msg) == GST_OBJECT (data.pipeline)) {

GstState old_state, new_state, pending_state;

gst_message_parse_state_changed (msg, &old_state, &new_state, &pending_state);

g_print("Pipeline state changed from %s to %s:\n",

gst_element_state_get_name (old_state), gst_element_state_get_name(new_state));

}

break;

default:

/* We should not reach here */

g_printerr("Unexpected message received.\n");

break;

}

gst_message_unref (msg);

}

} while (!terminate);

/* Free resources */

gst_object_unref (bus);

gst_element_set_state(data.pipeline, GST_STATE_NULL);

gst_object_unref(data.pipeline);

return 0;

}

/* This function will be called by the pad-added signal */

static void pad_added_handler (GstElement *src, GstPad *new_pad, CustomData *data) {

/* Get convert's sink pad */

GstPad *sink_pad = gst_element_get_static_pad (data->convert, "sink");

GstPadLinkReturn ret;

GstCaps *new_pad_caps = NULL;

GstStructure *new_pad_struct = NULL;

const gchar *new_pad_type = NULL;

g_print ("Received new pad '%s' from '%s':\n", GST_PAD_NAME (new_pad), GST_ELEMENT_NAME (src));

/* uridecodebin may create several pads; this callback is invoked once for each of them */

/* If our converter is already linked, we have nothing to do here */

if (gst_pad_is_linked(sink_pad)) {

g_print (" We are already linked. Ignoring.\n");

goto exit;

}

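/* gst_pad_get_caps() returns the pad's capabilities (the kinds of data it supports) wrapped in a GstCaps. A pad can offer several capabilities, so a GstCaps can hold several GstStructures, each describing one of them. In GStreamer 1.x this call is replaced by gst_pad_get_current_caps() (or gst_pad_query_caps()). */

/* Check the new pad's type */

new_pad_caps = gst_pad_get_caps (new_pad);

new_pad_struct = gst_caps_get_structure (new_pad_caps, 0);

new_pad_type = gst_structure_get_name (new_pad_struct);

/* Check whether the type starts with "audio/x-raw", i.e. whether the pad carries raw audio */

if (!g_str_has_prefix (new_pad_type, "audio/x-raw")) {

g_print (" It has type '%s' which is not raw audio. Ignoring.\n", new_pad_type);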

goto exit;

}

/* Attempt to link the new pad from src to convert's sink pad */

ret = gst_pad_link(new_pad, sink_pad);

if (GST_PAD_LINK_FAILED(ret)) {

g_print (" Type is '%s' but link failed.\n",new_pad_type);

} else {

g_print (" Link succeeded (type '%s').\n",new_pad_type);

}

exit:

/* Unreference the new pad's caps, if we got them */

if (new_pad_caps != NULL)

gst_caps_unref (new_pad_caps);

/* Unreference the sink pad */

gst_object_unref (sink_pad);

}
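For each pad that uridecodebin announces, pad_added_handler() makes two decisions. It skips the pad if convert's sink pad is already linked, and it skips the pad unless its media type starts with audio/x-raw. The sketch below isolates that logic in plain C so it can be checked without a running pipeline. The PadDecision enum and decide_pad() are hypothetical names; a real handler would call gst_pad_link() when the result is LINK.

```c
#include <assert.h>
#include <string.h>

/* Toy model of pad_added_handler()'s decisions; names are hypothetical. */
typedef enum { SKIP_ALREADY_LINKED, SKIP_NOT_RAW_AUDIO, LINK } PadDecision;

static PadDecision decide_pad(int sink_already_linked, const char *media_type) {
  assert(media_type != NULL);
  /* Only the first suitable pad gets linked. */
  if (sink_already_linked)
    return SKIP_ALREADY_LINKED;
  /* Same test as g_str_has_prefix (new_pad_type, "audio/x-raw"). */
  if (strncmp(media_type, "audio/x-raw", strlen("audio/x-raw")) != 0)
    return SKIP_NOT_RAW_AUDIO;
  return LINK;
}
```

A prefix test accepts GStreamer 0.10's audio/x-raw-int and audio/x-raw-float as well as 1.x's plain audio/x-raw, so the same check works with either naming scheme.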