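Linux fsync and fdatasync System Call Implementation Analysis (Ext4 File System)

Reference: https://blog.csdn.net/luckyapple1028/article/details/61413724

On Linux, reads and writes to files on a file system normally go through the page cache (direct I/O excepted). This design delays disk I/O, which reduces the number of disk reads and writes and improves I/O performance. Performance and reliability are often at odds, however: although the kernel has a work queue that writes dirty pages back to keep them in sync with the files on disk, data can still be lost on power failure in extreme cases. The kernel therefore provides the sync, fsync, fdatasync, and msync system calls for synchronization. sync synchronizes every file system and block device in the system, fsync and fdatasync synchronize a single file, and msync synchronizes files mapped with mmap. With these APIs, a user can force newly written data back to disk immediately after writing an important file, minimizing the chance of data loss. This article describes what fsync and fdatasync do and how they differ, then uses the Ext4 file system as an example to analyze how they synchronize data to disk.

Kernel version: Linux 4.10.1

Kernel files: fs/sync.c, fs/ext4/fsync.c, fs/ext4/inode.c, mm/filemap.c

1. Overview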

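When a user writes a file that was opened without O_SYNC or O_DIRECT, the newly written data is held temporarily in the page cache, the affected pages become dirty pages, and the data is not written to disk immediately. The kernel has a workqueue, bdi_wq, and a set of writeback workers that are woken to write dirty pages back once certain conditions are met (the delay timer expires (5 s by default), the system runs low on memory, the number of dirty pages exceeds a threshold, and so on). Only then does the newly written data reach the disk. The window between the write and the writeback is fairly short (with Ext4's delay alloc feature, enabled by default, it extends to 30 s), but if the device loses power or the system crashes within it, the user's data is lost. So when a single file needs higher reliability, call fsync or fdatasync after writing to synchronize the file (data).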

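The fsync system call synchronizes everything belonging to the file that fd refers to, both data and metadata, and blocks until writeback has finished. fdatasync is similar, but it does not write back modified metadata unless that metadata is needed to retrieve the data correctly afterwards. For example, if only the last access time (st_atime) or last modification time (st_mtime) has changed, the metadata need not be synchronized, because it does not affect how the file's data blocks are located. If the file size (st_size) has changed, the metadata clearly must be synchronized; otherwise the modified data might be unreachable after a crash. Given this difference, applications that do not need file metadata written back can use fdatasync to improve performance.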
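From user space, the difference comes down to which call follows the write. The following is a minimal sketch, not taken from the original article: the helper name write_and_sync and its data_only parameter are hypothetical, and only the POSIX calls open, write, fsync, fdatasync, and close are real.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <fcntl.h>
#include <stddef.h>
#include <unistd.h>

/*
 * Hypothetical helper: write len bytes to path, then ask the kernel to
 * push them to stable storage. data_only != 0 selects fdatasync(),
 * otherwise fsync() is used. Returns 0 on success, -1 on failure.
 */
int write_and_sync(const char *path, const void *buf, size_t len, int data_only)
{
    assert(path != NULL && buf != NULL);

    int fd = open(path, O_WRONLY | O_CREAT | O_TRUNC, 0644);
    if (fd < 0)
        return -1;

    const char *p = buf;
    while (len > 0) {
        ssize_t n = write(fd, p, len);  /* may write fewer bytes than asked */
        if (n < 0) {
            close(fd);
            return -1;
        }
        p += n;
        len -= (size_t)n;
    }

    /* Both calls block until the kernel reports writeback as complete. */
    int rc = data_only ? fdatasync(fd) : fsync(fd);
    if (close(fd) != 0 && rc == 0)
        rc = -1;
    return rc;
}
```

A caller that only appends log records and does not care about timestamps might pass data_only = 1; a caller that needs the full inode on disk would pass 0. The sketch does not retry on EINTR.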

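Note that if the physical disk's write cache is enabled and that cache is not flushed (for example because barriers are disabled, or the device does not honor flush requests), fsync and fdatasync cannot guarantee that the written-back data has fully reached the storage medium; it may still sit in the disk's cache. In that case data can be lost after a power failure, or the file system can end up inconsistent, even though fsync was called.

Finally, see the fsync manual page for a detailed description of fsync and fdatasync.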

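2. Implementation Analysis

The call flow of fsync and fdatasync is not complicated, but it touches many details of file system journaling, block allocation, and page writeback, and mastering it fully requires background in all of them. This article follows the main call path in detail for the Ext4 file system without going deep into the file system or other modules. It also assumes Ext4's default options: the ordered journaling mode, with inline data, encryption, and other special features disabled. The main function call relationships are as follows: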

sys_fsync/sys_fdatasync
  ---> do_fsync
        ---> vfs_fsync
              ---> vfs_fsync_range
                    ---> mark_inode_dirty_sync
                    |      ---> ext4_dirty_inode
                    |      |      ---> ext4_journal_start
                    |      |      ---> ext4_mark_inode_dirty
                    |      |      ---> ext4_journal_stop
                    |      ---> inode_io_list_move_locked
                    |      ---> wb_wakeup_delayed
                    ---> ext4_sync_file
                          ---> filemap_write_and_wait_range
                          |      ---> __filemap_fdatawrite_range
                          |      |      ---> do_writepages
                          |      |            ---> ext4_writepages
                          |      ---> filemap_fdatawait_range
                          ---> jbd2_complete_transaction
                          ---> blkdev_issue_flush

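fsync and fdatasync follow the same code path. do_fsync uses the fd argument to look up the corresponding struct file. In vfs_fsync_range, the fdatasync flow skips the mark_inode_dirty_sync branch, while fsync checks whether the file's access or modification timestamps have changed; if so, it takes the mark_inode_dirty_sync branch, which updates the metadata, marks it dirty, and adds the dirty metadata block to the appropriate jbd2 journal list, where it waits for the journal commit thread to write it back. ext4_sync_file then calls filemap_write_and_wait_range to synchronize the file's dirty page cache: it submits bios to the block layer and waits for writeback to finish. Next it calls jbd2_complete_transaction to trigger metadata writeback (if the metadata is not dirty, no metadata related to the file is written). Finally, if the Ext4 file system has barriers enabled and the write cache needs flushing, it calls blkdev_issue_flush to send a flush command to the lower layer, which makes the disk write its cache to the medium (so that under normal conditions the data is guaranteed to be on disk).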

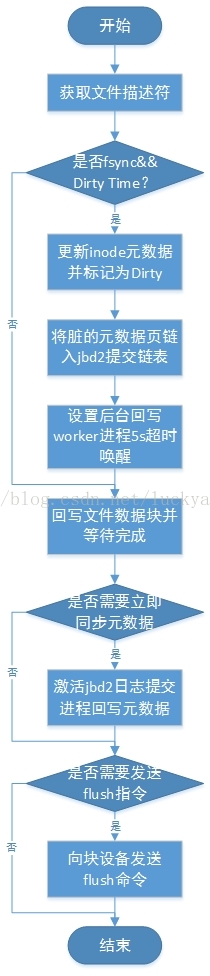

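The detailed execution flow is shown in the figure:

Figure: fsync and fdatasync system call flow

The following traces the source code of the fsync and fdatasync system calls to analyze how file data synchronization is implemented: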

SYSCALL_DEFINE1(fsync, unsigned int, fd)
{
    return do_fsync(fd, 0);
}

SYSCALL_DEFINE1(fdatasync, unsigned int, fd)
{
    return do_fsync(fd, 1);
}

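The fsync and fdatasync system calls take a single argument, the already-opened file descriptor fd. Both call do_fsync directly and differ only in the second argument, the datasync flag.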

static int do_fsync(unsigned int fd, int datasync)
{
    struct fd f = fdget(fd);
    int ret = -EBADF;

    if (f.file) {
        ret = vfs_fsync(f.file, datasync);
        fdput(f);
    }
    return ret;
}

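do_fsync first calls fdget to look up the struct fd for fd in the current process's fdtable. What it actually uses is the struct file instance inside it (created dynamically when the file is opened, bound to fd, and stored in the file descriptor table reachable from the process's task_struct). It then calls the generic function vfs_fsync.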

/**
 * vfs_fsync - perform a fsync or fdatasync on a file
 * @file: file to sync
 * @datasync: only perform a fdatasync operation
 *
 * Write back data and metadata for @file to disk. If @datasync is
 * set only metadata needed to access modified file data is written.
 */
int vfs_fsync(struct file *file, int datasync)
{
    return vfs_fsync_range(file, 0, LLONG_MAX, datasync);
}
EXPORT_SYMBOL(vfs_fsync);

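vfs_fsync simply forwards to vfs_fsync_range. The second and third arguments are the start and end byte offsets of the file data to synchronize; 0 and LLONG_MAX are passed here, meaning that all data is to be synchronized.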

/**
 * vfs_fsync_range - helper to sync a range of data & metadata to disk
 * @file: file to sync
 * @start: offset in bytes of the beginning of data range to sync
 * @end: offset in bytes of the end of data range (inclusive)
 * @datasync: perform only datasync
 *
 * Write back data in range @start..@end and metadata for @file to disk. If
 * @datasync is set only metadata needed to access modified file data is
 * written.
 */
int vfs_fsync_range(struct file *file, loff_t start, loff_t end, int datasync)
{
    struct inode *inode = file->f_mapping->host;

    if (!file->f_op->fsync)
        return -EINVAL;
    if (!datasync && (inode->i_state & I_DIRTY_TIME)) {
        spin_lock(&inode->i_lock);
        inode->i_state &= ~I_DIRTY_TIME;
        spin_unlock(&inode->i_lock);
        mark_inode_dirty_sync(inode);
    }
    return file->f_op->fsync(file, start, end, datasync);
}
EXPORT_SYMBOL(vfs_fsync_range);

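vfs_fsync_range first finds the file's inode through the address_space of the file structure (the address_space is set up in the sys_open->do_dentry_open path when the file is opened, and holds all page cache pages created for the file, the underlying block device, and the associated operations). It then checks whether the file system's file_operations implement the fsync method and returns -EINVAL if they do not.

Next, in the non-datasync (fsync) case, it checks the inode's I_DIRTY_TIME flag. If the flag is set (meaning the file's timestamps have been updated but not yet synchronized to disk), it clears the flag and calls mark_inode_dirty_sync to set I_DIRTY_SYNC, indicating that a sync is required. That function handles the inode differently depending on its current state and also adds it to the dirty list of the background writeback bdi. The bdi writeback task walks this list to perform synchronization, but it is easy to misread what happens here: the writeback in the current flow is not performed by the bdi writeback worker; it is completed directly within this call path.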

static inline void mark_inode_dirty_sync(struct inode *inode)
{
    __mark_inode_dirty(inode, I_DIRTY_SYNC);
}

void __mark_inode_dirty(struct inode *inode, int flags)
{
#define I_DIRTY_INODE (I_DIRTY_SYNC | I_DIRTY_DATASYNC)
    struct super_block *sb = inode->i_sb;
    int dirtytime;

    trace_writeback_mark_inode_dirty(inode, flags);

    /*
     * Don't do this for I_DIRTY_PAGES - that doesn't actually
     * dirty the inode itself
     */
    if (flags & (I_DIRTY_SYNC | I_DIRTY_DATASYNC | I_DIRTY_TIME)) {
        trace_writeback_dirty_inode_start(inode, flags);

        if (sb->s_op->dirty_inode)
            sb->s_op->dirty_inode(inode, flags);

        trace_writeback_dirty_inode(inode, flags);
    }

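Because the flag passed to __mark_inode_dirty here is I_DIRTY_SYNC (the inode is dirty but need not be synchronized by fdatasync; typically used when timestamps such as i_atime change; defined in include/linux/fs.h), the file system's dirty_inode function pointer is called, which for ext4 is ext4_dirty_inode.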

void ext4_dirty_inode(struct inode *inode, int flags)
{
    handle_t *handle;

    if (flags == I_DIRTY_TIME)
        return;
    handle = ext4_journal_start(inode, EXT4_HT_INODE, 2);
    if (IS_ERR(handle))
        goto out;

    ext4_mark_inode_dirty(handle, inode);

    ext4_journal_stop(handle);
out:
    return;
}

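ext4_dirty_inode involves the jbd2 journaling module used by ext4. It starts a new journal handle (an atomic journal operation) and submits the inode metadata block that needs synchronizing to a jbd2 transaction (note that this neither writes the journal nor writes the block back immediately). ext4_journal_start briefly checks the state of the ext4 journal and then calls jbd2__journal_start to start the handle. ext4_mark_inode_dirty then calls ext4_get_inode_loc to obtain the buffer head mapping the block that holds the inode metadata, and follows the standard journaling sequence: jbd2_journal_get_write_access (obtain write access) -> update the raw_inode metadata -> jbd2_journal_dirty_metadata (mark the metadata dirty and add it to the appropriate list of the journal transaction). Finally the ext4_journal_stop->jbd2_journal_stop path ends the handle. The journal commit thread will later commit the journaled metadata block (note that the commit thread is not woken immediately to start a commit here, except when the largefile feature is being enabled).

Back in __mark_inode_dirty, the analysis continues: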

    if (flags & I_DIRTY_INODE)
        flags &= ~I_DIRTY_TIME;
    dirtytime = flags & I_DIRTY_TIME;

    /*
     * Paired with smp_mb() in __writeback_single_inode() for the
     * following lockless i_state test. See there for details.
     */
    smp_mb();

    if (((inode->i_state & flags) == flags) ||
        (dirtytime && (inode->i_state & I_DIRTY_INODE)))
        return;

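If the inode's current state already contains all of the flags to be set, or if dirtytime is being set and the inode is already dirty, the function returns immediately: there is no need to set the flags or add the inode to a dirty list again.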

    if (unlikely(block_dump))
        block_dump___mark_inode_dirty(inode);

    spin_lock(&inode->i_lock);
    if (dirtytime && (inode->i_state & I_DIRTY_INODE))
        goto out_unlock_inode;
    if ((inode->i_state & flags) != flags) {
        const int was_dirty = inode->i_state & I_DIRTY;

        inode_attach_wb(inode, NULL);

        if (flags & I_DIRTY_INODE)
            inode->i_state &= ~I_DIRTY_TIME;
        inode->i_state |= flags;

        /*
         * If the inode is being synced, just update its dirty state.
         * The unlocker will place the inode on the appropriate
         * superblock list, based upon its state.
         */
        if (inode->i_state & I_SYNC)
            goto out_unlock_inode;

        /*
         * Only add valid (hashed) inodes to the superblock's
         * dirty list. Add blockdev inodes as well.
         */
        if (!S_ISBLK(inode->i_mode)) {
            if (inode_unhashed(inode))
                goto out_unlock_inode;
        }
        if (inode->i_state & I_FREEING)
            goto out_unlock_inode;

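First, for debugging, when block_dump is set, block_dump___mark_inode_dirty is called to print the dirty inode's inode number, file name, and device name.

The inode is then locked for the final processing. The flags are added to i_state (the flag being set here is I_DIRTY_SYNC), which completes the inode's state flags. Next the function checks whether the inode is already being synchronized (I_SYNC is set; the writeback worker sets it in the writeback_sb_inodes path) or is being destroyed and freed; in either case it exits without queueing the inode for writeback.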

        /*
         * If the inode was already on b_dirty/b_io/b_more_io, don't
         * reposition it (that would break b_dirty time-ordering).
         */
        if (!was_dirty) {
            struct bdi_writeback *wb;
            struct list_head *dirty_list;
            bool wakeup_bdi = false;

            wb = locked_inode_to_wb_and_lock_list(inode);

            WARN(bdi_cap_writeback_dirty(wb->bdi) &&
                 !test_bit(WB_registered, &wb->state),
                 "bdi-%s not registered\n", wb->bdi->name);

            inode->dirtied_when = jiffies;
            if (dirtytime)
                inode->dirtied_time_when = jiffies;

            if (inode->i_state & (I_DIRTY_INODE | I_DIRTY_PAGES))
                dirty_list = &wb->b_dirty;
            else
                dirty_list = &wb->b_dirty_time;

            wakeup_bdi = inode_io_list_move_locked(inode, wb,
                                                   dirty_list);

            spin_unlock(&wb->list_lock);
            trace_writeback_dirty_inode_enqueue(inode);

            /*
             * If this is the first dirty inode for this bdi,
             * we have to wake-up the corresponding bdi thread
             * to make sure background write-back happens
             * later.
             */
            if (bdi_cap_writeback_dirty(wb->bdi) && wakeup_bdi)
                wb_wakeup_delayed(wb);
            return;
        }
    }
out_unlock_inode:
    spin_unlock(&inode->i_lock);

#undef I_DIRTY_INODE
}

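Finally, if the inode was not previously dirty, the function records the inode's dirtied time and adds it to the appropriate dirty list of its bdi_writeback (wb->b_dirty in the current context). If the bdi has no dirty I/O in progress (that is, the b_dirty, b_io, and b_more_io lists are all empty) and supports writeback, wb_wakeup_delayed is called to queue a delayed writeback work item on the background writeback workqueue. The delay is set by the global variable dirty_writeback_interval, which defaults to 5 s.

Although this arranges for the writeback worker to wake up and run 5 s later, the current fsync flow never waits 5 s for the writeback worker to do the writeback (that part belongs to the background bdi dirty page writeback mechanism).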
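The writeback interval discussed above can be inspected from user space: dirty_writeback_interval is exposed through /proc/sys/vm/dirty_writeback_centisecs, in hundredths of a second, so the 5 s default reads as 500. The sketch below is an illustration only; the helper name read_vm_knob is hypothetical.

```c
#include <assert.h>
#include <stdio.h>

/*
 * Hypothetical helper: read one integer tunable from /proc/sys/vm/<name>.
 * Returns the value, or -1 if the file cannot be opened or parsed.
 */
long read_vm_knob(const char *name)
{
    char path[128];
    long value = -1;

    assert(name != NULL);

    int n = snprintf(path, sizeof(path), "/proc/sys/vm/%s", name);
    if (n < 0 || (size_t)n >= sizeof(path))
        return -1;

    FILE *f = fopen(path, "r");
    if (!f)
        return -1;
    if (fscanf(f, "%ld", &value) != 1)
        value = -1;
    fclose(f);
    return value;
}
```

Calling read_vm_knob("dirty_writeback_centisecs") on a typical system returns the periodic writeback interval; read_vm_knob("dirty_expire_centisecs") returns how old dirty data must be before periodic writeback picks it up.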

Back in vfs_fsync_range, the branch on !datasync && (inode->i_state & I_DIRTY_TIME) shows where fsync and fdatasync differ: for an inode whose timestamps have changed, fsync marks the inode dirty, which causes the later steps to write the file's metadata back, whereas fdatasync does not.

Continuing, vfs_fsync_range finally calls the fsync method registered in the file_operations; for ext4 this is ext4_sync_file, which performs the synchronization of file data and metadata.

/*
 * akpm: A new design for ext4_sync_file().
 *
 * This is only called from sys_fsync(), sys_fdatasync() and sys_msync().
 * There cannot be a transaction open by this task.
 * Another task could have dirtied this inode. Its data can be in any
 * state in the journalling system.
 *
 * What we do is just kick off a commit and wait on it. This will snapshot the
 * inode to disk.
 */
int ext4_sync_file(struct file *file, loff_t start, loff_t end, int datasync)
{
    struct inode *inode = file->f_mapping->host;
    struct ext4_inode_info *ei = EXT4_I(inode);
    journal_t *journal = EXT4_SB(inode->i_sb)->s_journal;
    int ret = 0, err;
    tid_t commit_tid;
    bool needs_barrier = false;

    J_ASSERT(ext4_journal_current_handle() == NULL);

    trace_ext4_sync_file_enter(file, datasync);

    if (inode->i_sb->s_flags & MS_RDONLY) {
        /* Make sure that we read updated s_mount_flags value */
        smp_rmb();
        if (EXT4_SB(inode->i_sb)->s_mount_flags & EXT4_MF_FS_ABORTED)
            ret = -EROFS;
        goto out;
    }

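The ext4_sync_file function is analyzed in sections. First, two local variables: (1) commit_tid is the transaction ID of the journal transaction to commit, used to tell transactions apart; (2) needs_barrier indicates whether a cache flush command must be sent to the underlying block device, a means of protecting data consistency. How they are used will become clear later.

ext4_sync_file first handles a read-only file system. A file system that was simply mounted read-only never has files written, so fsync/fdatasync has nothing to do and returns success immediately. But a file system can also become read-only because of an error, so there is a check here: if the file system has aborted (a fatal error occurred), -EROFS is returned instead of success, so that the application does not mistakenly believe the file has been synchronized.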

    if (!journal) {
        ret = __generic_file_fsync(file, start, end, datasync);
        if (!ret)
            ret = ext4_sync_parent(inode);
        if (test_opt(inode->i_sb, BARRIER))
            goto issue_flush;
        goto out;
    }

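Next comes the case where journaling is not enabled. Here the generic function __generic_file_fsync synchronizes the file, and then ext4_sync_parent synchronizes the file's parent directory. The parent must be synchronized because, without a journal, when the file being synchronized is newly created there is a large time window before the parent's directory entry is written back by background writeback; a power failure or crash in that window would lose data. Synchronizing the parent directory entry promptly shrinks the window considerably and so improves data safety. ext4_sync_parent walks up through the parent directories and synchronizes each one that is newly created.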
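The same concern has a well-known user-space counterpart: after creating a file, an application that cannot rely on the file system to persist the directory entry can fsync the parent directory itself. The sketch below illustrates that pattern under stated assumptions; fsync_parent_dir is a hypothetical helper, not something ext4 or the original article provides.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <fcntl.h>
#include <libgen.h>
#include <stdlib.h>
#include <string.h>
#include <unistd.h>

/*
 * Hypothetical helper: fsync the directory that contains path, so that a
 * newly created directory entry is pushed to disk as well.
 * Returns 0 on success, -1 on failure.
 */
int fsync_parent_dir(const char *path)
{
    assert(path != NULL);

    char *copy = strdup(path);      /* dirname() may modify its argument */
    if (!copy)
        return -1;

    int dfd = open(dirname(copy), O_RDONLY | O_DIRECTORY);
    free(copy);
    if (dfd < 0)
        return -1;

    int rc = fsync(dfd);
    if (close(dfd) != 0 && rc == 0)
        rc = -1;
    return rc;
}
```

A typical sequence would be: create and write the file, fsync the file, then call fsync_parent_dir on its path.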

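Because journaling is enabled by default (the jbd2 journal is initialized in the ext4_fill_super->ext4_load_journal path when the file system is mounted), this branch is not analyzed further. Back in ext4_sync_file, the default journaled case is analyzed next.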

    ret = filemap_write_and_wait_range(inode->i_mapping, start, end);
    if (ret)
        return ret;

Next, filemap_write_and_wait_range writes back the dirty file data blocks from start to end and waits for the writeback to complete.

/**
 * filemap_write_and_wait_range - write out & wait on a file range
 * @mapping: the address_space for the pages
 * @lstart: offset in bytes where the range starts
 * @lend: offset in bytes where the range ends (inclusive)
 *
 * Write out and wait upon file offsets lstart->lend, inclusive.
 *
 * Note that `lend' is inclusive (describes the last byte to be written) so
 * that this function can be used to write to the very end-of-file (end = -1).
 */
int filemap_write_and_wait_range(struct address_space *mapping,
                                 loff_t lstart, loff_t lend)
{
    int err = 0;

    if ((!dax_mapping(mapping) && mapping->nrpages) ||
        (dax_mapping(mapping) && mapping->nrexceptional)) {
        err = __filemap_fdatawrite_range(mapping, lstart, lend,
                                         WB_SYNC_ALL);
        /* See comment of filemap_write_and_wait() */
        if (err != -EIO) {
            int err2 = filemap_fdatawait_range(mapping,
                                               lstart, lend);
            if (!err)
                err = err2;
        }
    } else {
        err = filemap_check_errors(mapping);
    }
    return err;
}
EXPORT_SYMBOL(filemap_write_and_wait_range);

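filemap_write_and_wait_range first decides whether any writeback is needed. Without DAX, the address space must contain at least one page cache page (the page cache is what gets written back); otherwise filemap_check_errors is called to report any pending errors. That function is examined first: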

int filemap_check_errors(struct address_space *mapping)
{
    int ret = 0;
    /* Check for outstanding write errors */
    if (test_bit(AS_ENOSPC, &mapping->flags) &&
        test_and_clear_bit(AS_ENOSPC, &mapping->flags))
        ret = -ENOSPC;
    if (test_bit(AS_EIO, &mapping->flags) &&
        test_and_clear_bit(AS_EIO, &mapping->flags))
        ret = -EIO;
    return ret;
}
EXPORT_SYMBOL(filemap_check_errors);

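filemap_check_errors checks the AS_EIO and AS_ENOSPC flags of the address space; the former indicates an I/O error and the latter indicates insufficient space (both are defined in include/linux/pagemap.h). Each flag that is set is cleared, and the corresponding error code is returned.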

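If there are page cache pages to write back, __filemap_fdatawrite_range is called to perform the writeback. Note that the last argument is WB_SYNC_ALL, which marks this as a data integrity writeback that waits for completion: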

/**
 * __filemap_fdatawrite_range - start writeback on mapping dirty pages in range
 * @mapping: address space structure to write
 * @start: offset in bytes where the range starts
 * @end: offset in bytes where the range ends (inclusive)
 * @sync_mode: enable synchronous operation
 *
 * Start writeback against all of a mapping's dirty pages that lie
 * within the byte offsets <start, end> inclusive.
 *
 * If sync_mode is WB_SYNC_ALL then this is a "data integrity" operation, as
 * opposed to a regular memory cleansing writeback. The difference between
 * these two operations is that if a dirty page/buffer is encountered, it must
 * be waited upon, and not just skipped over.
 */
int __filemap_fdatawrite_range(struct address_space *mapping, loff_t start,
                               loff_t end, int sync_mode)
{
    int ret;
    struct writeback_control wbc = {
        .sync_mode = sync_mode,
        .nr_to_write = LONG_MAX,
        .range_start = start,
        .range_end = end,
    };

    if (!mapping_cap_writeback_dirty(mapping))
        return 0;

    wbc_attach_fdatawrite_inode(&wbc, mapping->host);
    ret = do_writepages(mapping, &wbc);
    wbc_detach_inode(&wbc);
    return ret;
}

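As the function comment says, __filemap_fdatawrite_range writes back the dirty pages in the range <start, end>. It first builds a struct writeback_control instance and initializes its fields. This structure controls the writeback operation: sync_mode is the synchronization mode, either WB_SYNC_NONE or WB_SYNC_ALL; the former does not wait for writeback to finish and is generally used for periodic writeback, while the latter waits and is used for forced writeback such as sync. nr_to_write is the number of pages to write back, and range_start and range_end are the start and end byte offsets of the range to write back.

Next, mapping_cap_writeback_dirty checks whether the file's bdi supports writeback; if not, the function returns 0 immediately (nothing is written back). Then wbc_attach_fdatawrite_inode associates the wbc with the inode's bdi (this requires the blk_cgroup kernel feature; otherwise it is a no-op), do_writepages performs the writeback, and once it finishes wbc_detach_inode dissociates the wbc from the inode.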

int do_writepages(struct address_space *mapping, struct writeback_control *wbc)
{
    int ret;

    if (wbc->nr_to_write <= 0)
        return 0;
    if (mapping->a_ops->writepages)
        ret = mapping->a_ops->writepages(mapping, wbc);
    else
        ret = generic_writepages(mapping, wbc);
    return ret;
}

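do_writepages prefers the writepages method registered in the address space's a_ops, which ext4 implements as ext4_writepages. If none is implemented, the generic function generic_writepages is called (this function is also called in the wb_workfn path of the background dirty page flusher to perform writeback).

The following is a brief analysis of how ext4_writepages writes pages back (the function is long, so it is examined in sections):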

static int ext4_writepages(struct address_space *mapping,
                           struct writeback_control *wbc)
{
    pgoff_t writeback_index = 0;
    long nr_to_write = wbc->nr_to_write;
    int range_whole = 0;
    int cycled = 1;
    handle_t *handle = NULL;
    struct mpage_da_data mpd;
    struct inode *inode = mapping->host;
    int needed_blocks, rsv_blocks = 0, ret = 0;
    struct ext4_sb_info *sbi = EXT4_SB(mapping->host->i_sb);
    bool done;
    struct blk_plug plug;
    bool give_up_on_write = false;

    percpu_down_read(&sbi->s_journal_flag_rwsem);
    trace_ext4_writepages(inode, wbc);

    if (dax_mapping(mapping)) {
        ret = dax_writeback_mapping_range(mapping, inode->i_sb->s_bdev,
                                          wbc);
        goto out_writepages;
    }

    /*
     * No pages to write? This is mainly a kludge to avoid starting
     * a transaction for special inodes like journal inode on last iput()
     * because that could violate lock ordering on umount
     */
    if (!mapping->nrpages || !mapping_tagged(mapping, PAGECACHE_TAG_DIRTY))
        goto out_writepages;

    if (ext4_should_journal_data(inode)) {
        struct blk_plug plug;

        blk_start_plug(&plug);
        ret = write_cache_pages(mapping, wbc, __writepage, mapping);
        blk_finish_plug(&plug);
        goto out_writepages;
    }

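ext4_writepages first handles the dax_mapping branch, where data page writeback is delegated to dax_writeback_mapping_range. It then checks whether any page needs writing back: if the address space has no pages, or no PAGECACHE_TAG_DIRTY tag is set in the radix tree (that is, there are no dirty pages; the tag is set for dirty pages in __set_page_dirty), it simply exits.

It then checks the journaling mode of the file system. In journal mode (both data blocks and metadata blocks are written to the jbd2 journal), writeback is delegated to write_cache_pages. The default ordered mode is assumed here, so this branch is skipped and the analysis continues.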

    /*
     * If the filesystem has aborted, it is read-only, so return
     * right away instead of dumping stack traces later on that
     * will obscure the real source of the problem. We test
     * EXT4_MF_FS_ABORTED instead of sb->s_flag's MS_RDONLY because
     * the latter could be true if the filesystem is mounted
     * read-only, and in that case, ext4_writepages should
     * *never* be called, so if that ever happens, we would want
     * the stack trace.
     */
    if (unlikely(sbi->s_mount_flags & EXT4_MF_FS_ABORTED)) {
        ret = -EROFS;
        goto out_writepages;
    }

    if (ext4_should_dioread_nolock(inode)) {
        /*
         * We may need to convert up to one extent per block in
         * the page and we may dirty the inode.
         */
        rsv_blocks = 1 + (PAGE_SIZE >> inode->i_blkbits);
    }

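Next comes the dioread_nolock feature. With it, uninitialized extents are allocated before the file's buffers are written, and the extents are converted to initialized only after the write I/O completes; this avoids taking and releasing the inode mutex and so speeds up I/O. The feature only applies to extent-based files and does not support journal mode. When it is enabled, blocks must be reserved in the journal; with the default 4 KB file system block size (and 4 KB pages), rsv_blocks is 1 + (4096 >> 12) = 2.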
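The reservation arithmetic is easy to check for other block sizes. The sketch below restates the expression 1 + (PAGE_SIZE >> inode->i_blkbits) as a stand-alone function; demo_rsv_blocks and its parameters are hypothetical names introduced for illustration.

```c
#include <assert.h>

/*
 * Reserved blocks for dioread_nolock: one for the inode plus one per
 * file system block in a page. page_shift and blkbits are log2 of the
 * page size and block size (12 means 4 KB).
 */
unsigned int demo_rsv_blocks(unsigned int page_shift, unsigned int blkbits)
{
    assert(blkbits <= page_shift);

    unsigned long page_size = 1UL << page_shift;
    return 1u + (unsigned int)(page_size >> blkbits);
}
```

With 4 KB pages and 4 KB blocks this gives 2, matching the text; with 4 KB pages and 1 KB blocks it gives 5, because a page then spans four blocks.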

    /*
     * If we have inline data and arrive here, it means that
     * we will soon create the block for the 1st page, so
     * we'd better clear the inline data here.
     */
    if (ext4_has_inline_data(inode)) {
        /* Just inode will be modified... */
        handle = ext4_journal_start(inode, EXT4_HT_INODE, 1);
        if (IS_ERR(handle)) {
            ret = PTR_ERR(handle);
            goto out_writepages;
        }
        BUG_ON(ext4_test_inode_state(inode,
                                     EXT4_STATE_MAY_INLINE_DATA));
        ext4_destroy_inline_data(handle, inode);
        ext4_journal_stop(handle);
    }

Next comes the inline data feature, which targets small files whose contents fit inside the inode block. Its handling is also skipped here.

    if (wbc->range_start == 0 && wbc->range_end == LLONG_MAX)
        range_whole = 1;

    if (wbc->range_cyclic) {
        writeback_index = mapping->writeback_index;
        if (writeback_index)
            cycled = 0;
        mpd.first_page = writeback_index;
        mpd.last_page = -1;
    } else {
        mpd.first_page = wbc->range_start >> PAGE_SHIFT;
        mpd.last_page = wbc->range_end >> PAGE_SHIFT;
    }

    mpd.inode = inode;
    mpd.wbc = wbc;
    ext4_io_submit_init(&mpd.io_submit, wbc);

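Next some flags are evaluated; range_whole set means the whole file is being written. Then the struct mpage_da_data mpd structure is initialized: in the current non-cyclic case first_page and last_page are set to the first and last pages to write, then the inode, wbc, and io_submit fields of mpd are initialized, and execution continues at the retry label.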
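The conversion from the byte range in wbc to page indexes is a plain right shift by PAGE_SHIFT. The sketch below reproduces it in user space, assuming 4 KB pages; DEMO_PAGE_SHIFT, struct demo_page_range, and both functions are hypothetical names used only for illustration.

```c
#include <assert.h>
#include <limits.h>

#define DEMO_PAGE_SHIFT 12  /* assumes 4 KB pages */

struct demo_page_range {
    unsigned long long first_page;
    unsigned long long last_page;
};

/* Map an inclusive byte range onto the inclusive range of page indexes. */
struct demo_page_range demo_byte_range_to_pages(long long start, long long end)
{
    struct demo_page_range r;

    assert(start >= 0 && end >= start);

    r.first_page = (unsigned long long)start >> DEMO_PAGE_SHIFT;
    r.last_page = (unsigned long long)end >> DEMO_PAGE_SHIFT;
    return r;
}

/* Mirrors the range_whole test: does the range cover the entire file? */
int demo_range_whole(long long start, long long end)
{
    return start == 0 && end == LLONG_MAX;
}
```

Because both ends are inclusive, a range as small as bytes 4095 to 4096 touches two pages (indexes 0 and 1), while the fsync range 0 to LLONG_MAX covers every possible page.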

retry:
    if (wbc->sync_mode == WB_SYNC_ALL || wbc->tagged_writepages)
        tag_pages_for_writeback(mapping, mpd.first_page, mpd.last_page);
    done = false;
    blk_start_plug(&plug);

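tag_pages_for_writeback deserves attention here. For radix tree nodes of the address_space that already carry PAGECACHE_TAG_DIRTY, it additionally sets PAGECACHE_TAG_TOWRITE to mark them for this writeback pass; the page scan in the loop below looks pages up by this tag. A large loop then processes the data pages to be written back one by one.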

    while (!done && mpd.first_page <= mpd.last_page) {
        /* For each extent of pages we use new io_end */
        mpd.io_submit.io_end = ext4_init_io_end(inode, GFP_KERNEL);
        if (!mpd.io_submit.io_end) {
            ret = -ENOMEM;
            break;
        }

        /*
         * We have two constraints: We find one extent to map and we
         * must always write out whole page (makes a difference when
         * blocksize < pagesize) so that we don't block on IO when we
         * try to write out the rest of the page. Journalled mode is
         * not supported by delalloc.
         */
        BUG_ON(ext4_should_journal_data(inode));
        needed_blocks = ext4_da_writepages_trans_blocks(inode);

        /* start a new transaction */
        handle = ext4_journal_start_with_reserve(inode,
                EXT4_HT_WRITE_PAGE, needed_blocks, rsv_blocks);
        if (IS_ERR(handle)) {
            ret = PTR_ERR(handle);
            ext4_msg(inode->i_sb, KERN_CRIT, "%s: jbd2_start: "
                     "%ld pages, ino %lu; err %d", __func__,
                     wbc->nr_to_write, inode->i_ino, ret);
            /* Release allocated io_end */
            ext4_put_io_end(mpd.io_submit.io_end);
            break;
        }

        trace_ext4_da_write_pages(inode, mpd.first_page, mpd.wbc);
        ret = mpage_prepare_extent_to_map(&mpd);
        if (!ret) {
            if (mpd.map.m_len)
                ret = mpage_map_and_submit_extent(handle, &mpd,
                                                  &give_up_on_write);
            else {
                /*
                 * We scanned the whole range (or exhausted
                 * nr_to_write), submitted what was mapped and
                 * didn't find anything needing mapping. We are
                 * done.
                 */
                done = true;
            }
        }
        /*
         * Caution: If the handle is synchronous,
         * ext4_journal_stop() can wait for transaction commit
         * to finish which may depend on writeback of pages to
         * complete or on page lock to be released. In that
         * case, we have to wait until after after we have
         * submitted all the IO, released page locks we hold,
         * and dropped io_end reference (for extent conversion
         * to be able to complete) before stopping the handle.
         */
        if (!ext4_handle_valid(handle) || handle->h_sync == 0) {
            ext4_journal_stop(handle);
            handle = NULL;
        }
        /* Submit prepared bio */
        ext4_io_submit(&mpd.io_submit);
        /* Unlock pages we didn't use */
        mpage_release_unused_pages(&mpd, give_up_on_write);
        /*
         * Drop our io_end reference we got from init. We have
         * to be careful and use deferred io_end finishing if
         * we are still holding the transaction as we can
         * release the last reference to io_end which may end
         * up doing unwritten extent conversion.
         */
        if (handle) {
            ext4_put_io_end_defer(mpd.io_submit.io_end);
            ext4_journal_stop(handle);
        } else
            ext4_put_io_end(mpd.io_submit.io_end);

        if (ret == -ENOSPC && sbi->s_journal) {
            /*
             * Commit the transaction which would
             * free blocks released in the transaction
             * and try again
             */
            jbd2_journal_force_commit_nested(sbi->s_journal);
            ret = 0;
            continue;
        }
        /* Fatal error - ENOMEM, EIO... */
        if (ret)
            break;
    }

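The call flow inside this loop is very complex; briefly: ext4_da_writepages_trans_blocks first computes the number of blocks the extent needs, then ext4_journal_start_with_reserve starts a new journal handle, with the required and reserved block counts given by needed_blocks and rsv_blocks. Next mpage_prepare_extent_to_map and mpage_map_and_submit_extent are called; they walk the pages tagged PAGECACHE_TAG_TOWRITE within the range described by wbc, submit I/O directly for dirty pages that are already mapped, and for unmapped pages compute the extent to use and map it. ext4_io_submit then submits the bio, and finally ext4_journal_stop ends the handle.

Back in filemap_write_and_wait_range: if __filemap_fdatawrite_range did not return an I/O error, filemap_fdatawait_range is called to wait for the writeback to finish.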

int filemap_fdatawait_range(struct address_space *mapping, loff_t start_byte,
                            loff_t end_byte)
{
    int ret, ret2;

    ret = __filemap_fdatawait_range(mapping, start_byte, end_byte);
    ret2 = filemap_check_errors(mapping);
    if (!ret)
        ret = ret2;

    return ret;
}
EXPORT_SYMBOL(filemap_fdatawait_range);

filemap_fdatawait_range does two things: it calls __filemap_fdatawait_range to wait until writeback of <start_byte, end_byte> has finished, and it calls filemap_check_errors to handle errors.

static int __filemap_fdatawait_range(struct address_space *mapping,
                                     loff_t start_byte, loff_t end_byte)
{
    pgoff_t index = start_byte >> PAGE_SHIFT;
    pgoff_t end = end_byte >> PAGE_SHIFT;
    struct pagevec pvec;
    int nr_pages;
    int ret = 0;

    if (end_byte < start_byte)
        goto out;

    pagevec_init(&pvec, 0);
    while ((index <= end) &&
           (nr_pages = pagevec_lookup_tag(&pvec, mapping, &index,
                   PAGECACHE_TAG_WRITEBACK,
                   min(end - index, (pgoff_t)PAGEVEC_SIZE-1) + 1)) != 0) {
        unsigned i;

        for (i = 0; i < nr_pages; i++) {
            struct page *page = pvec.pages[i];

            /* until radix tree lookup accepts end_index */
            if (page->index > end)
                continue;

            wait_on_page_writeback(page);
            if (TestClearPageError(page))
                ret = -EIO;
        }
        pagevec_release(&pvec);
        cond_resched();
    }
out:
    return ret;
}

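__filemap_fdatawait_range is one large loop. In it, pagevec_lookup_tag finds the radix tree nodes tagged PAGECACHE_TAG_WRITEBACK (pages whose writeback was started earlier), and wait_on_page_writeback then waits on a wait queue until the page's PG_writeback flag is cleared (meaning writeback has finished). The process is in D state while it waits. If an error occurred, -EIO is returned, and filemap_fdatawait_range then also runs the filemap_check_errors check.

As the filemap_write_and_wait_range call above shows, the file's writeback is not performed by the background bdi writeback worker; for fsync and fdatasync the writeback is performed in the calling process itself.

At this point the file's data has been written back, while the metadata is still in a journal transaction waiting to be committed. Back in the outermost function, ext4_sync_file, the remaining metadata blocks are committed.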

    /*
     * data=writeback,ordered:
     *  The caller's filemap_fdatawrite()/wait will sync the data.
     *  Metadata is in the journal, we wait for proper transaction to
     *  commit here.
     *
     * data=journal:
     *  filemap_fdatawrite won't do anything (the buffers are clean).
     *  ext4_force_commit will write the file data into the journal and
     *  will wait on that.
     *  filemap_fdatawait() will encounter a ton of newly-dirtied pages
     *  (they were dirtied by commit). But that's OK - the blocks are
     *  safe in-journal, which is all fsync() needs to ensure.
     */
    if (ext4_should_journal_data(inode)) {
        ret = ext4_force_commit(inode->i_sb);
        goto out;
    }

    commit_tid = datasync ? ei->i_datasync_tid : ei->i_sync_tid;
    if (journal->j_flags & JBD2_BARRIER &&
        !jbd2_trans_will_send_data_barrier(journal, commit_tid))
        needs_barrier = true;
    ret = jbd2_complete_transaction(journal, commit_tid);
    if (needs_barrier) {
    issue_flush:
        err = blkdev_issue_flush(inode->i_sb->s_bdev, GFP_KERNEL, NULL);
        if (!ret)
            ret = err;
    }
out:
    trace_ext4_sync_file_exit(inode, ret);
    return ret;
}

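As the comment explains, in the default ordered mode filemap_write_and_wait_range has already synchronized the file's data blocks, while the metadata blocks may still be in the journal. The following steps find the appropriate transaction and commit the journal.

First there is a check: if the file system's barrier feature is enabled, jbd2_trans_will_send_data_barrier is called to decide whether a flush command must be sent to the block device. Note the commit_tid argument: for fdatasync it is ei->i_datasync_tid, otherwise ei->i_sync_tid; it identifies the transaction that contains the metadata of the file in question.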

int jbd2_trans_will_send_data_barrier(journal_t *journal, tid_t tid)
{
    int ret = 0;
    transaction_t *commit_trans;

    if (!(journal->j_flags & JBD2_BARRIER))
        return 0;
    read_lock(&journal->j_state_lock);
    /* Transaction already committed? */
    if (tid_geq(journal->j_commit_sequence, tid))
        goto out;
    commit_trans = journal->j_committing_transaction;
    if (!commit_trans || commit_trans->t_tid != tid) {
        ret = 1;
        goto out;
    }
    /*
     * Transaction is being committed and we already proceeded to
     * submitting a flush to fs partition?
     */
    if (journal->j_fs_dev != journal->j_dev) {
        if (!commit_trans->t_need_data_flush ||
            commit_trans->t_state >= T_COMMIT_DFLUSH)
            goto out;
    } else {
        if (commit_trans->t_state >= T_COMMIT_JFLUSH)
            goto out;
    }
    ret = 1;
out:
    read_unlock(&journal->j_state_lock);
    return ret;
}

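jbd2_trans_will_send_data_barrier examines the state of the journal. A return value of 1 means the transaction has not yet been committed and its commit will send the barrier, so fsync does not send a flush itself; 0 means the transaction may already have been committed (or has passed its flush point), so fsync must send the flush. In detail:

(1) The journal does not have barriers enabled: 0 is returned. This does not trigger a flush in ext4_sync_file, because the caller tests JBD2_BARRIER first and leaves needs_barrier false when it is clear;

(2) The transaction ID is compared with journal->j_commit_sequence: if j_commit_sequence is greater than or equal to the ID, the transaction in question has already been committed, and 0 is returned;

(3) If no transaction is being committed, or the transaction being committed is not the one in question, the transaction has not yet been picked up by the journal commit thread, and 1 is returned;

(4) If the transaction is being committed and the commit has already reached T_COMMIT_JFLUSH (or, when the journal is on a separate device, has reached T_COMMIT_DFLUSH or needs no data flush), the metadata journal has already been written, and 0 is returned;

(5) Finally, if the transaction is being committed but the metadata journal has not yet been written, 1 is returned.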

Back in ext4_sync_file, jbd2_complete_transaction then carries out the journal commit:

int jbd2_complete_transaction(journal_t *journal, tid_t tid)
{
    int need_to_wait = 1;

    read_lock(&journal->j_state_lock);
    if (journal->j_running_transaction &&

journal->j_running_transact