pytorch筆記:04)resnet網路&解決輸入影象大小問題

因為torchvision對resnet18-resnet152進行了封裝實現,因而想跟蹤下原始碼(^▽^)

首先看張核心的resnet層次結構圖(圖1),它詮釋了resnet18-152是如何搭建的,其中resnet18和resnet34結構類似,而resnet50-resnet152結構類似。下面先看resnet18的原始碼

resnet18

首先是models.resnet18函式的呼叫

def resnet18(pretrained=False, **kwargs):

"""Constructs a ResNet-18 model.

Args:

pretrained (bool): If True, returns a model pre-trained on ImageNet

""" 這裡涉及到了一個BasicBlock類(resnet18和34),這樣的一個結構我們稱為一個block,因為在block內部的conv都使用了padding,輸入的in_img_size和out_img_size都是56x56,在圖2右邊的shortcut只需要改變輸入的channel的大小,輸入bloack的輸入tensor和輸出tensor就可以相加(

圖2

事實上圖2是Bottleneck類(用於resnet50-152,稍後分析),其和BasicBlock差不多,圖3為圖2的精簡版(ps:可以把下圖視為為一個box_block,即多個block疊加在一起,x3說明有3個上圖一樣的結構串起來):

圖3

BasicBlock類,可以對比結構圖中的resnet18和resnet34,類中expansion =1,其表示box_block中最後一個block的channel比上第一個block的channel,即:

def conv3x3(in_planes, out_planes, stride=1):

"3x3 convolution with padding"

return nn.Conv2d(in_planes, out_planes, kernel_size=3, stride=stride,

padding=1, bias=False)

class BasicBlock(nn.Module):

expansion = 1

#inplanes其實就是channel,叫法不同

def __init__(self, inplanes, planes, stride=1, downsample=None):

super(BasicBlock, self).__init__()

self.conv1 = conv3x3(inplanes, planes, stride)

self.bn1 = nn.BatchNorm2d(planes)

self.relu = nn.ReLU(inplace=True)

self.conv2 = conv3x3(planes, planes)

self.bn2 = nn.BatchNorm2d(planes)

self.downsample = downsample

self.stride = stride

def forward(self, x):

residual = x

out = self.conv1(x)

out = self.bn1(out)

out = self.relu(out)

out = self.conv2(out)

out = self.bn2(out)

#把shortcut那的channel的維度統一

if self.downsample is not None:

residual = self.downsample(x)

out += residual

out = self.relu(out)

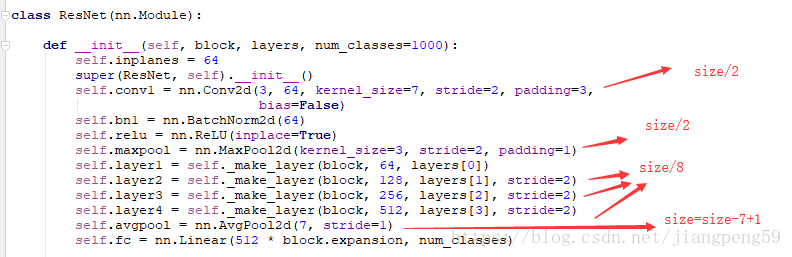

return out接下來是ResNet類,其和我們通常定義的模型差不多一個init()+forward(),程式碼有點長,我們一步步來分析:

- 參考前面的結構圖,所有的resnet的第一個conv層都是一樣的,輸出channel=64

- 然後到了self.layer1 = self._make_layer(block, 64, layers[0]),這裡的layers[0]=2,然後我們進入到_make_layer函式,由於stride=1或當前的輸入channel和上一個塊的輸出channel一樣,因而可以直接相加

- self.layer2 = self._make_layer(block, 128, layers[1], stride=2),此時planes=128而self.inplanes=64為上box_block的輸出channel,此時channel不一致,需要對輸出的x擴維後才能相加,downsample 實現的就是該功能(ps:這裡只有box_block中的第一個block需要downsample,為何?請看下圖)

- self.layer3 = self._make_layer(block, 256, layers[2], stride=2),此時planes=256而self.inplanes=128為,此時也需要擴維後才能相加,layer4 同理。

圖4

圖4中下標2,3,4和上面的步驟對應,圖中箭頭旁數值表示box_block輸入或者輸出的channel數。

具體看圖4-2,上一個box_block的最後一個block輸出channel為64(也是下一個box_block的輸入channel),而當前的box_block的第一個block的輸出為128,在此需要擴維才能相加。然後到了當前box_block的第2個block,其輸入channel和輸出channel是一致的,因此無需擴維。

也就是說在box_block內部,只需要對第1個block進行擴維,因為在box_block內,第一個block輸出channel和剩下的保持一致了。

class ResNet(nn.Module):

def __init__(self, block, layers, num_classes=1000):

self.inplanes = 64

super(ResNet, self).__init__()

self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3,

bias=False)

self.bn1 = nn.BatchNorm2d(64)

self.relu = nn.ReLU(inplace=True)

self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)

self.layer1 = self._make_layer(block, 64, layers[0])

self.layer2 = self._make_layer(block, 128, layers[1], stride=2)

self.layer3 = self._make_layer(block, 256, layers[2], stride=2)

self.layer4 = self._make_layer(block, 512, layers[3], stride=2)

self.avgpool = nn.AvgPool2d(7, stride=1)

self.fc = nn.Linear(512 * block.expansion, num_classes)

for m in self.modules():

if isinstance(m, nn.Conv2d):

n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels

m.weight.data.normal_(0, math.sqrt(2. / n))

elif isinstance(m, nn.BatchNorm2d):

m.weight.data.fill_(1)

m.bias.data.zero_()

def _make_layer(self, block, planes, blocks, stride=1):

#downsample 主要用來處理H(x)=F(x)+x中F(x)和xchannel維度不匹配問題

downsample = None

#self.inplanes為上個box_block的輸出channel,planes為當前box_block塊的輸入channel

if stride != 1 or self.inplanes != planes * block.expansion:

downsample = nn.Sequential(

nn.Conv2d(self.inplanes, planes * block.expansion,

kernel_size=1, stride=stride, bias=False),

nn.BatchNorm2d(planes * block.expansion),

)

layers = []

layers.append(block(self.inplanes, planes, stride, downsample))

self.inplanes = planes * block.expansion

for i in range(1, blocks):

layers.append(block(self.inplanes, planes))

return nn.Sequential(*layers)

def forward(self, x):

x = self.conv1(x)

x = self.bn1(x)

x = self.relu(x)

x = self.maxpool(x)

x = self.layer1(x)

x = self.layer2(x)

x = self.layer3(x)

x = self.layer4(x)

x = self.avgpool(x)

x = x.view(x.size(0), -1)

x = self.fc(x)

return xresnet152

resnet152和resnet18差不多,Bottleneck類替換了BasicBlock,[3, 8, 36, 3]也和上面結構圖對應。

def resnet152(pretrained=False, **kwargs):

"""Constructs a ResNet-152 model.

Args:

pretrained (bool): If True, returns a model pre-trained on ImageNet

"""

model = ResNet(Bottleneck, [3, 8, 36, 3], **kwargs)

if pretrained:

model.load_state_dict(model_zoo.load_url(model_urls['resnet152']))

return model

Bottleneck類,這裡需要注意的是 expansion = 4,前面2個block的channel沒有變,最後一個變成了第一個的4倍,具體可看本文的第2個圖。

class Bottleneck(nn.Module):

expansion = 4

def __init__(self, inplanes, planes, stride=1, downsample=None):

super(Bottleneck, self).__init__()

self.conv1 = nn.Conv2d(inplanes, planes, kernel_size=1, bias=False)

self.bn1 = nn.BatchNorm2d(planes)

self.conv2 = nn.Conv2d(planes, planes, kernel_size=3, stride=stride,

padding=1, bias=False)

self.bn2 = nn.BatchNorm2d(planes)

self.conv3 = nn.Conv2d(planes, planes * 4, kernel_size=1, bias=False)

self.bn3 = nn.BatchNorm2d(planes * 4)

self.relu = nn.ReLU(inplace=True)

self.downsample = downsample

self.stride = stride

def forward(self, x):

residual = x

out = self.conv1(x)

out = self.bn1(out)

out = self.relu(out)

out = self.conv2(out)

out = self.bn2(out)

out = self.relu(out)

out = self.conv3(out)

out = self.bn3(out)

if self.downsample is not None:

residual = self.downsample(x)

out += residual

out = self.relu(out)

return out

影象輸入大小問題:

首先pytorch輸入的大小固定為224*224,超過這個大小就會報錯,比如輸入大小256*256

RuntimeError: size mismatch, m1: [1 x 8192], m2: [2048 x 1000] at c:\miniconda2\conda-bld\pytorch-cpu_1519449358620\work\torch\lib\th\generic/THTensorMath.c:1434首先我們看下,resnet在哪些地方改變了輸出影象的大小

conv和pool層的輸出大小都可以根據下面公式計算得出

但是resnet裡面的卷積層太多了,就resnet152的height而言,其最後avgpool後的大小為 ,因此修改原始碼把影象的height和width傳遞進去,從而相容非224的圖片大小:

self.avgpool = nn.AvgPool2d(7, stride=1)

f = lambda x:math.ceil(x /32 - 7 + 1)

self.fc = nn.Linear(512 * block.expansion * f(w) * f(h), num_classes) #block.expansion=4也可以在外面替換跳最後一個fc層,這裡的2048即本文圖1中resnet152對應的最後layer的輸出channel,若是resnet18或resnet34則為512

model_ft = models.resnet152(pretrained=True)

f = lambda x:math.ceil(x /32 - 7 + 1)

model_ft.fc = nn.Linear(f(target_w) * f(target_h) * 2048, nb_classes) 還有另外一種暴力的方法,就是不管卷積層的輸出大小,取其平均值做為輸出,比如:

self.main = torchvision.models.resnet152(pretrained)

self.main.avgpool = nn.AdaptiveAvgPool2d((1, 1))第一次研究pytorch,請大神門輕噴

reference:

resnet詳細介紹

deeper bottleneck Architectures詳細介紹