MNIST Classification with a CNN in PyTorch 0.4

阿新 • Published: 2018-11-14

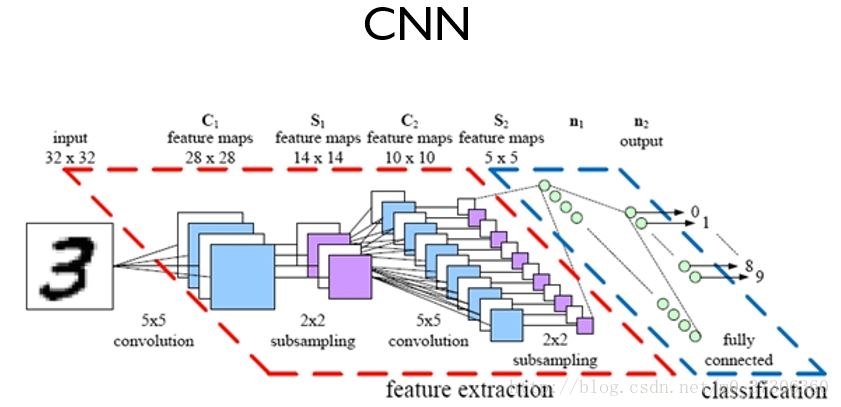

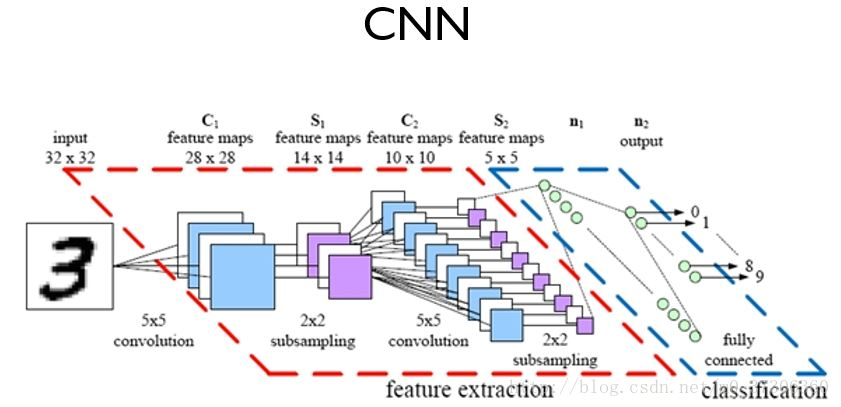

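The convolutional neural network (CNN) is one of the most representative network architectures in deep learning. It has been very successful in image processing, and many successful models on the standard ImageNet benchmark dataset are CNN-based.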

A CNN typically consists of the following layers:

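- Input layer: feeds the data into the network

- Convolutional layer: applies convolution kernels to extract features and produce feature maps

- Activation layer: convolution is a linear operation, so a nonlinear mapping (such as ReLU) must be added

- Pooling layer: downsamples the feature maps, shrinking them and reducing the amount of computation

- Fully connected layer: usually placed at the end of the CNN to refit (recombine) the extracted features, reducing the loss of feature information

- Output layer: produces the result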
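The roles of the convolutional, activation and pooling layers can be illustrated with a small pure-Python sketch that needs no PyTorch. The helpers `conv2d_valid`, `relu`, `max_pool2x2` and `add` are hypothetical names written for this illustration, and the sketch assumes a single channel, a stride-1 convolution without padding, and 2×2 max pooling with stride 2 (the same pooling as `nn.MaxPool2d(2)` in the model below). It also checks the reasoning behind the activation layer: convolution is linear, while ReLU is not.

```python
# Hypothetical helpers illustrating the layer roles on a single-channel 2D list.

def conv2d_valid(image, kernel):
    """Stride-1, no-padding 2D cross-correlation (the operation nn.Conv2d computes)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [[sum(image[i + a][j + b] * kernel[a][b]
                 for a in range(kh) for b in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]


def relu(fmap):
    """Activation layer: element-wise max(0, v)."""
    return [[max(0, v) for v in row] for row in fmap]


def max_pool2x2(fmap):
    """Pooling layer: 2x2 windows with stride 2, so height and width are halved."""
    return [[max(fmap[i][j], fmap[i][j + 1], fmap[i + 1][j], fmap[i + 1][j + 1])
             for j in range(0, len(fmap[0]) - 1, 2)]
            for i in range(0, len(fmap) - 1, 2)]


def add(x, y):
    """Element-wise sum of two equally sized 2D lists."""
    return [[a + b for a, b in zip(rx, ry)] for rx, ry in zip(x, y)]


x = [[(5 * i + j) % 7 - 3 for j in range(5)] for i in range(5)]  # 5x5 input
y = [[(i + 2 * j) % 5 - 2 for j in range(5)] for i in range(5)]  # another 5x5 input
k = [[1, 0], [0, -1]]                                             # 2x2 kernel

fmap = conv2d_valid(x, k)          # convolutional layer: 5x5 -> 4x4
pooled = max_pool2x2(relu(fmap))   # activation + pooling: 4x4 -> 2x2
print(len(fmap), len(fmap[0]), len(pooled), len(pooled[0]))  # 4 4 2 2

# Convolution is linear: conv(x + y) == conv(x) + conv(y) ...
print(conv2d_valid(add(x, y), k) == add(conv2d_valid(x, k), conv2d_valid(y, k)))  # True
# ... but ReLU is not: relu(-1 + 1) = 0, while relu(-1) + relu(1) = 1.
print(relu(add([[-1]], [[1]])) == add(relu([[-1]]), relu([[1]])))  # False
```

Because a stack of purely linear layers is still linear, the nonlinearity between layers is what lets the network model more than a single linear map.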

Below is the code for MNIST classification with a CNN built in PyTorch 0.4:

```python
import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
from torchvision import datasets, transforms

# Training settings
batch_size = 64

# MNIST Dataset
train_dataset = datasets.MNIST(root='./data/', train=True,
                               transform=transforms.ToTensor(), download=True)
test_dataset = datasets.MNIST(root='./data/', train=False,
                              transform=transforms.ToTensor())

# Data Loader (Input Pipeline)
train_loader = torch.utils.data.DataLoader(dataset=train_dataset,
                                           batch_size=batch_size, shuffle=True)
test_loader = torch.utils.data.DataLoader(dataset=test_dataset,
                                          batch_size=batch_size, shuffle=False)


class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        # conv1: 1 input channel (grayscale image) -> 10 feature maps, 5x5 kernel
        self.conv1 = nn.Conv2d(1, 10, kernel_size=5)
        # conv2: 10 feature maps -> 20 feature maps, 5x5 kernel
        self.conv2 = nn.Conv2d(10, 20, kernel_size=5)
        # 2x2 max pooling halves height and width
        self.mp = nn.MaxPool2d(2)
        # fully connected layer: 20*4*4 = 320 features -> 10 classes
        self.fc = nn.Linear(320, 10)

    def forward(self, x):
        in_size = x.size(0)  # batch size, e.g. 64
        # x: 64*1*28*28 -> conv1 -> 64*10*24*24 -> pool -> 64*10*12*12
        x = F.relu(self.mp(self.conv1(x)))
        # x: 64*10*12*12 -> conv2 -> 64*20*8*8 -> pool -> 64*20*4*4
        x = F.relu(self.mp(self.conv2(x)))
        # x: 64*320 (flatten the tensor)
        x = x.view(in_size, -1)
        # x: 64*10
        x = self.fc(x)
        # log-probabilities over the 10 classes (dim=1), not over the batch (dim=0)
        return F.log_softmax(x, dim=1)


model = Net()
optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)


def train(epoch):
    model.train()
    # Since 0.4, tensors track gradients directly; Variable wrappers are unnecessary
    for batch_idx, (data, target) in enumerate(train_loader):
        optimizer.zero_grad()
        output = model(data)
        loss = F.nll_loss(output, target)
        loss.backward()
        optimizer.step()
        if batch_idx % 200 == 0:
            print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(
                epoch, batch_idx * len(data), len(train_loader.dataset),
                100. * batch_idx / len(train_loader), loss.item()))


def test():
    model.eval()
    test_loss = 0
    correct = 0
    with torch.no_grad():  # no gradients needed for evaluation
        for data, target in test_loader:
            output = model(data)
            # sum up batch loss (older releases: size_average=False)
            test_loss += F.nll_loss(output, target, reduction='sum').item()
            # get the index of the max log-probability
            pred = output.max(1, keepdim=True)[1]
            correct += pred.eq(target.view_as(pred)).sum().item()

    test_loss /= len(test_loader.dataset)
    print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(
        test_loss, correct, len(test_loader.dataset),
        100. * correct / len(test_loader.dataset)))


if __name__ == "__main__":
    for epoch in range(1, 4):
        train(epoch)
        test()
```
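The input size 320 of the fully connected layer follows from the output-size rule for a convolution or pooling window, out = ⌊(in + 2·padding − kernel) / stride⌋ + 1. The pure-Python check below is a minimal sketch assuming 28×28 MNIST images, the default padding of 0, and `MaxPool2d(2)` using a stride equal to its kernel size (PyTorch's default); `conv_out` and `flattened_features` are hypothetical helper names.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Side length after a convolution or pooling window (floor division)."""
    return (size + 2 * padding - kernel) // stride + 1


def flattened_features(side=28, channels=20):
    """Number of features fed to nn.Linear after conv1/pool and conv2/pool."""
    side = conv_out(side, 5)       # conv1, 5x5 kernel: 28 -> 24
    side = conv_out(side, 2, 2)    # 2x2 max pool:      24 -> 12
    side = conv_out(side, 5)       # conv2, 5x5 kernel: 12 -> 8
    side = conv_out(side, 2, 2)    # 2x2 max pool:       8 -> 4
    return channels * side * side  # 20 * 4 * 4


print(flattened_features())  # 320
```

If the kernel sizes or the number of conv2 output channels change, this number, and therefore the first argument of `nn.Linear`, must change with them.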

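The output is as follows. It was produced with `F.log_softmax(x, dim=0)` from the original listing, which normalizes over the 64 samples of a batch rather than over the 10 classes, so the logged loss stays high (around 2.2) even though test accuracy climbs to 97%.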

```
Train Epoch: 1 [0/60000 (0%)] Loss: 4.163342
Train Epoch: 1 [12800/60000 (21%)] Loss: 2.689871
Train Epoch: 1 [25600/60000 (43%)] Loss: 2.553686
Train Epoch: 1 [38400/60000 (64%)] Loss: 2.376630
Train Epoch: 1 [51200/60000 (85%)] Loss: 2.321894

Test set: Average loss: 2.2703, Accuracy: 9490/10000 (94%)

Train Epoch: 2 [0/60000 (0%)] Loss: 2.321601
Train Epoch: 2 [12800/60000 (21%)] Loss: 2.293680
Train Epoch: 2 [25600/60000 (43%)] Loss: 2.377935
Train Epoch: 2 [38400/60000 (64%)] Loss: 2.150829
Train Epoch: 2 [51200/60000 (85%)] Loss: 2.201805

Test set: Average loss: 2.1848, Accuracy: 9658/10000 (96%)

Train Epoch: 3 [0/60000 (0%)] Loss: 2.238524
Train Epoch: 3 [12800/60000 (21%)] Loss: 2.224833
Train Epoch: 3 [25600/60000 (43%)] Loss: 2.240626
Train Epoch: 3 [38400/60000 (64%)] Loss: 2.217183
Train Epoch: 3 [51200/60000 (85%)] Loss: 2.357141

Test set: Average loss: 2.1426, Accuracy: 9723/10000 (97%)
```
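The first logged loss, 4.163342, is very close to ln 64 ≈ 4.159, whereas a freshly initialized 10-class classifier would be expected to start near ln 10 ≈ 2.303. The pure-Python sketch below shows why, assuming an untrained network whose logits are all roughly equal (modeled here as all zeros): `log_softmax(x, dim=0)` normalizes each class over the 64 samples of the batch, while `dim=1` normalizes each sample over its 10 classes. The helpers `log_softmax` and `nll_mean` are hypothetical stand-ins written for this illustration, not the PyTorch functions.

```python
import math


def log_softmax(values):
    """Numerically stable log-softmax of a 1D list."""
    m = max(values)
    lse = m + math.log(sum(math.exp(v - m) for v in values))
    return [v - lse for v in values]


def nll_mean(logits, targets, dim):
    """Mean negative log-likelihood after log-softmax along dim 0 or 1."""
    rows, cols = len(logits), len(logits[0])
    if dim == 1:  # normalize each sample over its classes
        logp = [log_softmax(row) for row in logits]
    else:         # normalize each class column over the batch
        col_lp = [log_softmax([logits[i][j] for i in range(rows)]) for j in range(cols)]
        logp = [[col_lp[j][i] for j in range(cols)] for i in range(rows)]
    return -sum(logp[i][t] for i, t in enumerate(targets)) / rows


batch, classes = 64, 10
logits = [[0.0] * classes for _ in range(batch)]  # untrained net: roughly equal logits
targets = [i % classes for i in range(batch)]

print(round(nll_mean(logits, targets, dim=0), 4))  # 4.1589 (ln 64)
print(round(nll_mean(logits, targets, dim=1), 4))  # 2.3026 (ln 10)
```

With `dim=0`, each class's probability mass is shared among all samples in the batch that carry that label, so the loss cannot approach zero even when every prediction is correct; with `dim=1` it measures the usual per-sample cross-entropy.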