Installing a Spark 2.0 Cluster on CentOS 7

1. Virtual machine environment:

JDK: jdk1.8.0_171 (64-bit)

Scala: scala-2.12.6

Spark: spark-2.3.1-bin-hadoop2.7

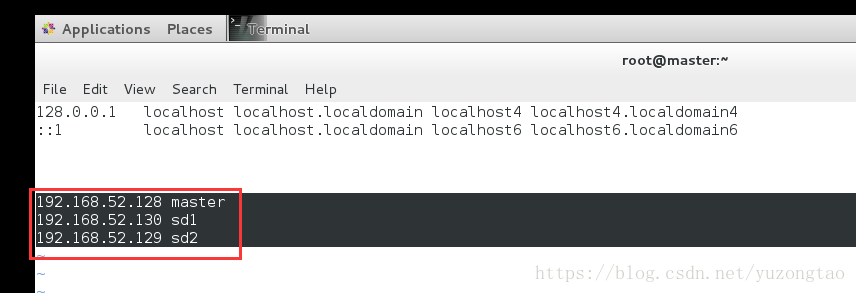

2. Cluster network environment:

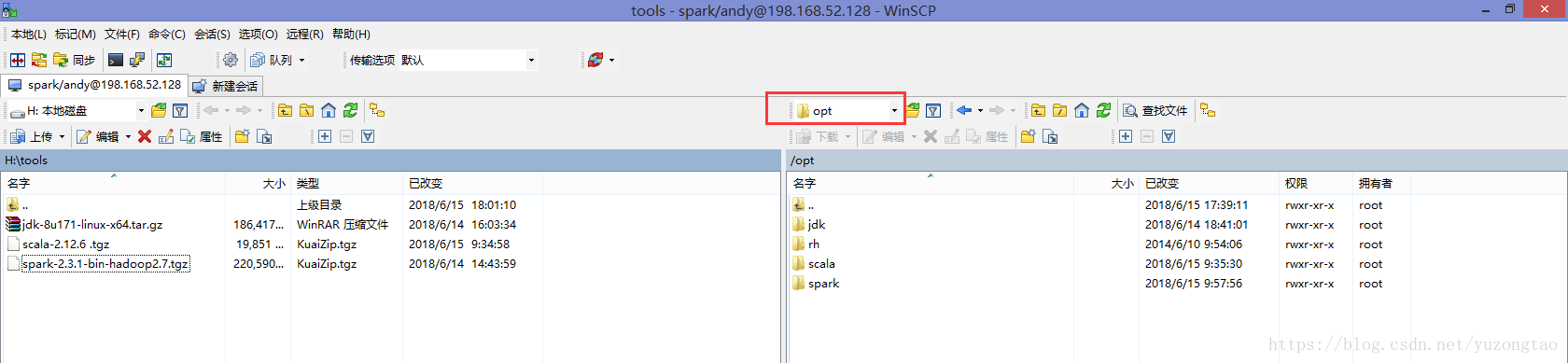

Use WinSCP to upload the JDK, Scala, and Spark installation packages to the master host, each into its own newly created folder under /opt.

1) Install the JDK

Extract the package to /usr/local:

[[email protected] conf]# tar -xvf jdk-8u171-linux-x64.tar.gz -C /usr/local/

Edit the configuration file:

[[email protected] conf]# vi /etc/profile

#jdk

export JAVA_HOME=/usr/local/jdk1.8.0_171

export CLASSPATH=.:$JAVA_HOME/jre/lib/rt.jar:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

export PATH=$PATH:$JAVA_HOME/bin
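Editing /etc/profile by hand on every node makes it easy to add the same export twice. The following is a minimal sketch, assuming a POSIX shell, of appending the lines only when they are missing. `append_once` and `add_jdk_env` are hypothetical helper names used for illustration, and the target file is passed in explicitly so the sketch can be tried on a scratch file first.

```shell
# append_once FILE LINE: append LINE to FILE unless an identical line
# is already present. Hypothetical helper, for illustration only.
append_once() {
  file=$1
  line=$2
  # -q quiet, -x match the whole line, -F treat the pattern as a fixed string
  if ! grep -qxF -e "$line" "$file" 2>/dev/null; then
    printf '%s\n' "$line" >> "$file"
  fi
}

# add_jdk_env FILE: write the JDK variables used in this guide into FILE.
add_jdk_env() {
  append_once "$1" 'export JAVA_HOME=/usr/local/jdk1.8.0_171'
  append_once "$1" 'export PATH=$PATH:$JAVA_HOME/bin'
}
```

As root, `add_jdk_env /etc/profile` followed by `. /etc/profile` would apply the settings to the current shell, after which `java -version` should report the installed JDK.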

2) Install Scala

Extract the Scala package to /usr/local:

[[email protected] conf]# tar -xvf scala-2.12.6.tgz -C /usr/local/

Edit the configuration file:

[[email protected] conf]# vi /etc/profile

#scala

export SCALA_HOME=/usr/local/scala-2.12.6

export PATH=$PATH:$SCALA_HOME/bin

3) Install Spark

Extract the package to /usr/local:

[[email protected]

Edit the configuration file:

[[email protected] conf]# vi /etc/profile

#spark

export SPARK_HOME=/usr/local/spark-2.3.1-bin-hadoop2.7

export PATH=$PATH:$SPARK_HOME/bin

export SPARK_EXAMPLES_JAR=$SPARK_HOME/examples/jars/spark-examples_2.11-2.3.1.jar

Configure Spark:

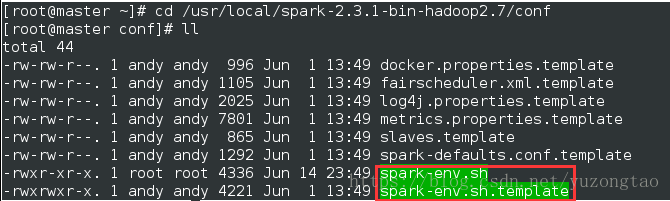

01. Edit spark-env.sh as follows:

[ro[email protected] conf]# cp spark-env.sh.template spark-env.sh

This copies the spark-env.sh.template template to spark-env.sh.

[[email protected] conf]# vim spark-env.sh

Open the file and append the following configuration at the bottom:

SPARK_MASTER_IP=192.168.52.128

export JAVA_HOME=/usr/local/jdk1.8.0_171

export SCALA_HOME=/usr/local/scala-2.12.6

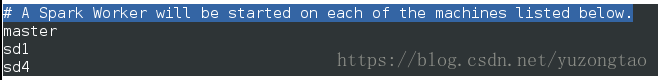
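A typo in spark-env.sh usually only surfaces when the daemons fail to start. As a quick sanity check, the file can be sourced in a subshell to see whether the three variables from this step end up defined. This is a sketch under the assumption of a POSIX shell; `check_spark_env` is a hypothetical helper name, not part of Spark.

```shell
# check_spark_env FILE: source FILE in a subshell and report whether the
# variables set in this guide are defined. Hypothetical helper.
check_spark_env() {
  (
    # Clear inherited values so only what FILE defines is reported.
    unset SPARK_MASTER_IP JAVA_HOME SCALA_HOME
    . "$1" || exit 1
    status=0
    for name in SPARK_MASTER_IP JAVA_HOME SCALA_HOME; do
      eval "value=\${$name:-}"
      if [ -n "$value" ]; then
        echo "$name=$value"
      else
        echo "$name is not set" >&2
        status=1
      fi
    done
    exit "$status"
  )
}
```

For example, `check_spark_env /usr/local/spark-2.3.1-bin-hadoop2.7/conf/spark-env.sh` prints one `NAME=value` line per variable and returns a non-zero status if any of them is missing.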

02. Edit the slaves configuration file

[[email protected] conf]# cp slaves.template slaves

[[email protected] conf]# vim slaves
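The slaves file lists the worker hosts, one per line. The contents are not shown in the text here, so the sketch below assumes the two workers are the sd1 and sd2 hosts named in the next step and that those names resolve from master. `write_slaves` is a hypothetical helper name.

```shell
# write_slaves FILE HOST...: overwrite FILE with one worker host per line.
# Hypothetical helper, for illustration only.
write_slaves() {
  file=$1
  shift
  : > "$file"                      # create the file or truncate it
  for host in "$@"; do
    printf '%s\n' "$host" >> "$file"
  done
}
```

Under those assumptions, `write_slaves /usr/local/spark-2.3.1-bin-hadoop2.7/conf/slaves sd1 sd2` would produce a two-line file. Note that it replaces whatever the copied template contained, including its comments.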

03. Copy the files from master to sd1 and sd2:

[[email protected] conf]# scp -r /usr/local/spark-2.3.1-bin-hadoop2.7/conf [email protected]:/usr/local/spark-2.3.1-bin-hadoop2.7/

[[email protected] conf]# scp -r /usr/local/spark-2.3.1-bin-hadoop2.7/conf [email protected]:/usr/local/spark-2.3.1-bin-hadoop2.7/

Copying directories with scp:

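(1) Copy a local directory to a remote host

scp -r DIRECTORY USER@HOST_IP_OR_NAME:REMOTE_PATH

(2) Copy a directory from a remote host back to the local machine

scp -r USER@HOST_IP_OR_NAME:DIRECTORY LOCAL_PATH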
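With more than a couple of workers, the first form can be wrapped in a loop. The sketch below is one way to do that, assuming a POSIX shell. `push_conf` is a hypothetical helper, and the remote user defaults to root, which is an assumption because the user in the commands shown here is not legible. By default it only prints the scp commands, so they can be reviewed before anything is copied.

```shell
# push_conf CONF_DIR DEST_DIR HOST...: copy CONF_DIR into DEST_DIR on each host.
# DRY_RUN=1 (the default) only prints the commands; DRY_RUN=0 runs them.
# REMOTE_USER defaults to root, which is an assumption of this sketch.
push_conf() {
  conf_dir=$1
  dest_dir=$2
  shift 2
  user=${REMOTE_USER:-root}
  for host in "$@"; do
    if [ "${DRY_RUN:-1}" = "1" ]; then
      echo "scp -r $conf_dir $user@$host:$dest_dir"
    else
      scp -r "$conf_dir" "$user@$host:$dest_dir" || return 1
    fi
  done
}
```

Calling `push_conf /usr/local/spark-2.3.1-bin-hadoop2.7/conf /usr/local/spark-2.3.1-bin-hadoop2.7/ sd1 sd2` prints one scp command per worker. Setting `DRY_RUN=0` beforehand performs the copies.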

[[email protected] conf]# scp -r /usr/local/spark-2.3.1-bin-hadoop2.7/conf [email protected]:/usr/local/spark-2.3.1-bin-hadoop2.7/

spark-defaults.conf.template 100% 1292 1.3KB/s 00:00

slaves.template 100% 865 0.8KB/s 00:00

metrics.properties.template 100% 7801 7.6KB/s 00:00

spark-env.sh.template 100% 4221 4.1KB/s 00:00

fairscheduler.xml.template 100% 1105 1.1KB/s 00:00

log4j.properties.template 100% 2025 2.0KB/s 00:00

docker.properties.template 100% 996 1.0KB/s 00:00

spark-env.sh 100% 4221 4.1KB/s 00:00

slaves 100% 871 0.9KB/s 00:00

.slaves.swp

3. Passwordless SSH login:

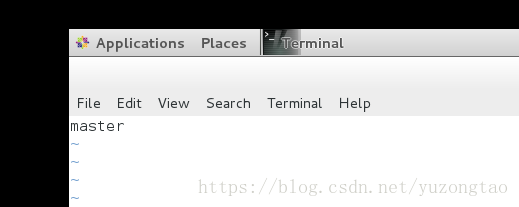

1) Change the hostname

[[email protected] ~]# vim /etc/hostname

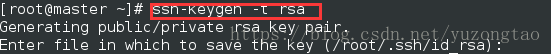

2)生成祕鑰,執行命令ssh-keygen -t rsa,然後一直按回車鍵即可

[[email protected] ~]# ssh-keygen -b 1024 -t rsa

Generating public/private rsa key pair. #提示正在生成rsa金鑰對

Enter file in which to save the key (/home/usrname/.ssh/id_dsa): #詢問公鑰和私鑰存放的位置,回車用預設位置即可

Enter passphrase (empty for no passphrase): #詢問輸入私鑰密語,輸入密語

Enter same passphrase again: #再次提示輸入密語確認

Your identification has been saved in /home/usrname/.ssh/id_dsa. #提示公鑰和私鑰已經存放在/root/.ssh/目錄下

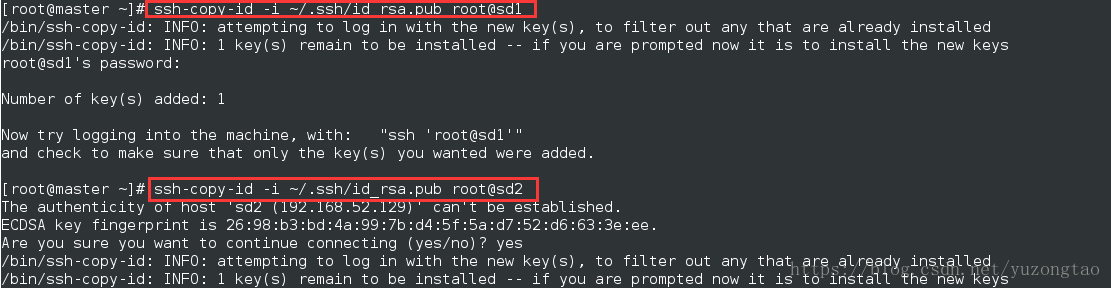

Your public key has been saved in /home/usrname/.ssh/id_dsa.pub.3)複製spark-master結點的id_rsa.pub檔案到另外兩個結點:

sup -r id_rsa.pub [email protected]:~/.ssh/

sup -r id_rsa.pub [email protected]:~/.ssh/
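Copying id_rsa.pub into ~/.ssh/ does not by itself enable passwordless login: sshd reads public keys from ~/.ssh/authorized_keys, and with its default settings it ignores that file if the permissions are too open. The text does not show this step, so the following is a sketch of one way to do it on each worker, assuming a POSIX shell. `install_pubkey` is a hypothetical helper name.

```shell
# install_pubkey PUBFILE [SSH_DIR]: append the key in PUBFILE to
# SSH_DIR/authorized_keys (default: ~/.ssh) unless it is already there,
# and tighten the permissions. Hypothetical helper.
install_pubkey() {
  pubfile=$1
  ssh_dir=${2:-$HOME/.ssh}
  mkdir -p "$ssh_dir"
  chmod 700 "$ssh_dir"
  touch "$ssh_dir/authorized_keys"
  chmod 600 "$ssh_dir/authorized_keys"
  key=$(cat "$pubfile") || return 1
  # Only append when the exact key line is not present yet.
  if ! grep -qxF -e "$key" "$ssh_dir/authorized_keys"; then
    printf '%s\n' "$key" >> "$ssh_dir/authorized_keys"
  fi
}
```

Running `install_pubkey ~/.ssh/id_rsa.pub` on sd1 and sd2 after the copy should let master log in without a password. Where it is available, OpenSSH's `ssh-copy-id` performs the copy and the append in a single command run from master.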

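4. Disabling the firewall on CentOS 7:

# Check the firewall status

firewall-cmd --state

# Stop the firewall

systemctl stop firewalld.service

# Prevent the firewall from starting at boot

systemctl disable firewalld.service

If the firewall is left running, connections from sd1 and sd2 to ports on master are blocked even though the hosts can still ping each other, so the Workers cannot connect to the Master when Hadoop or Spark starts.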
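Where switching the firewall off entirely is not acceptable, opening specific ports is an alternative. The sketch below only prints the firewall-cmd invocations rather than running them. `open_ports_cmds` is a hypothetical helper name, and the port list is an assumption: 7077 and 8080 are the Spark standalone defaults for the Master and its web UI, but workers, drivers, and Hadoop use additional ports that depend on the configuration, so this list is not complete.

```shell
# open_ports_cmds PORT...: print the firewall-cmd commands that would
# permanently open each TCP port and then reload the firewall rules.
# Hypothetical helper; nothing is executed.
open_ports_cmds() {
  for port in "$@"; do
    echo "firewall-cmd --permanent --add-port=${port}/tcp"
  done
  echo "firewall-cmd --reload"
}
```

On master, `open_ports_cmds 7077 8080` shows the commands for review; piping the output to `sh` as root would apply them.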