[TensorFlow] Multi-GPU Training: An Annotated Walkthrough of the Example Code

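Training on multiple GPUs speeds up training and makes hyperparameter tuning more efficient.

This post annotates and explains TensorFlow's official example code: cifar10_multi_gpu_train.py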

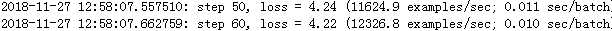

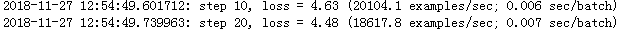

Speedup measured on 1080Ti GPUs (batch=128):

Single GPU:

Two GPUs run nearly twice as fast as a single GPU:

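1. Introduction

Multi-GPU training can be classified along two axes:

Data parallelism versus model parallelism

A single machine with multiple GPUs versus multiple machines with multiple GPUs

The example analyzed here is data-parallel training on a single machine: every GPU runs the same model on a different batch.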
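To make the first distinction concrete, here is a minimal pure-Python sketch. The two-layer toy "model" and the notion of a "device" are illustrative assumptions only; no real GPU is involved.

```python
# Toy two-layer "model"; the layers and the device split are illustrative assumptions.
def layer1(x):
    return 2 * x

def layer2(x):
    return x + 1

batch = [1, 2, 3, 4]

# Data parallelism: every device holds the whole model and processes part of the data.
device_batches = [batch[:2], batch[2:]]
data_parallel = [layer2(layer1(x)) for part in device_batches for x in part]

# Model parallelism: device 0 holds layer1, device 1 holds layer2,
# and all of the data flows through both devices in turn.
hidden_on_device0 = [layer1(x) for x in batch]
model_parallel = [layer2(h) for h in hidden_on_device0]

print(data_parallel, model_parallel)
```

Both strategies compute the same outputs; they differ only in what is split across devices (the data or the model).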

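2. Walkthrough of the example code

The official example shows the workflow for computing with multiple GPUs:

- The CPU acts as the parameter server

- Multiple GPUs compute gradients, which are then aggregated to update the parameters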
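The workflow in the list above can be sketched without TensorFlow. This is only an illustration under stated assumptions: the model `y = w * x`, the squared-error loss, and the helper names `grad_mse` and `parallel_step` are all hypothetical and do not appear in the example script.

```python
# Pure-Python sketch of one synchronous data-parallel step (no TensorFlow).
# The model y = w * x and all helper names are illustrative assumptions.

def grad_mse(w, xs, ys):
    """Gradient of mean((w * x - y) ** 2) with respect to w."""
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)

def parallel_step(w, batches, lr):
    """One update: each 'GPU' handles one batch, the 'CPU' averages and updates."""
    tower_grads = [grad_mse(w, xs, ys) for xs, ys in batches]  # per-GPU work
    avg_grad = sum(tower_grads) / len(tower_grads)             # aggregation
    return w - lr * avg_grad                                   # one update of the shared parameter

xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]                        # data generated with w = 2
batches = [(xs[:2], ys[:2]), (xs[2:], ys[2:])]   # two equal-sized batches, one per "GPU"

w_parallel = parallel_step(0.0, batches, lr=0.01)
w_single = 0.0 - 0.01 * grad_mse(0.0, xs, ys)    # same data processed as one large batch
print(w_parallel, w_single)
```

With equal-sized batches and a mean loss, averaging the per-GPU gradients gives the same update as one device processing all of the data at once, which is why N GPUs behave like a batch N times larger.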

#--------------------------Multi-GPUs-code------------------------#

1. The header of the demo file

# Copyright 2015 The TensorFlow Authors. All Rights Reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

2. Define some flags

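The flags cover the directory for event logs and checkpoints, the maximum number of batch steps, the number of GPUs, and whether to log device placement.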

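from datetime import datetime
import os.path
import re
import time

import numpy as np
from six.moves import xrange  # range that works on Python 2 and 3
import tensorflow as tf  # TensorFlow 1.x API

import cifar10  # model definition that ships alongside this script

FLAGS = tf.app.flags.FLAGS  # flag container; parsed values are read from FLAGS later
# https://blog.csdn.net/m0_37041325/article/details/77448971
# https://blog.csdn.net/weiqi_fan/article/details/72722510

# Define each flag together with its default value.
# FLAGS.batch_size, used further down, is defined in cifar10.py.
tf.app.flags.DEFINE_string('train_dir', './your/path/to/data/cifar10_train',
                           """Directory where to write event logs """
                           """and checkpoint.""")
tf.app.flags.DEFINE_integer('max_steps', 1000000,
                            """Number of batches to run.""")
tf.app.flags.DEFINE_integer('num_gpus', 1,
                            """How many GPUs to use.""")
tf.app.flags.DEFINE_boolean('log_device_placement', False,
                            """Whether to log device placement.""")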

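3. Define the loss-aggregation function and the gradient-averaging function

This part defines the loss computed on each GPU and how the per-GPU results are combined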

def tower_loss(scope, images, labels):
    """Calculate the total loss on a single tower running the CIFAR model.

    Args:
      scope: name scope of this particular tower, e.g. 'tower_0'
      images: Images. 4D tensor of shape [batch_size, height, width, 3].
      labels: Labels. 1D tensor of shape [batch_size].

    Returns:
      Tensor of shape [] containing the total loss for a batch of data
    """
    # Build the inference graph and take its output.
    logits = cifar10.inference(images)

    # Build the loss. It is registered in the 'losses' collection, so the
    # return value is not needed here.
    _ = cifar10.loss(logits, labels)

    # Collect only the losses that belong to the current tower.
    losses = tf.get_collection('losses', scope)

    # Sum them to get the total loss of the current tower.
    total_loss = tf.add_n(losses, name='total_loss')

    # Attach a scalar summary to all individual losses and the total loss; do the
    # same for the averaged version of the losses.
    for l in losses + [total_loss]:
        # Remove 'tower_[0-9]/' from the name in case this is a multi-GPU training
        # session. This keeps the names shown in TensorBoard clean.
        loss_name = re.sub('%s_[0-9]*/' % cifar10.TOWER_NAME, '', l.op.name)
        tf.summary.scalar(loss_name, l)  # visualize in TensorBoard

    return total_loss
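The name cleanup inside the summary loop can be tried on its own with plain strings. A small sketch follows, assuming `cifar10.TOWER_NAME` is `'tower'`; the third op name is a made-up example.

```python
import re

TOWER_NAME = 'tower'  # assumed value of cifar10.TOWER_NAME

def clean_name(op_name):
    """Strip the 'tower_<n>/' prefix, exactly as the re.sub call in tower_loss does."""
    return re.sub('%s_[0-9]*/' % TOWER_NAME, '', op_name)

names = [
    'tower_0/total_loss_1',
    'tower_1/total_loss_1',
    'tower_0/conv1/weight_loss',  # hypothetical op name, for illustration only
]
cleaned = [clean_name(n) for n in names]
for before, after in zip(names, cleaned):
    print(before, '->', after)
```

Because the prefix is removed, the same loss from different towers maps to the same summary name instead of one name per GPU.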

"""

# The total_loss that is finally returned:

# each call yields the loss of one GPU

Tensor("tower_0/total_loss_1:0", shape=(), dtype=float32, device=/device:GPU:0)

Tensor("tower_1/total_loss_1:0", shape=(), dtype=float32, device=/device:GPU:1)

"""

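Combining the gradients is the more involved part, so it is analyzed separately. The main steps can be summarized as follows:

- First, read the (gradient, variable) pairs from every GPU (tower). They are stored as one sublist per GPU: [[GPUi], ..., [GPUn]];

- Each sublist covers the whole model, that is, the complete set [(gradient, variable), ..., (gradient, variable)] of GPU i;

- For each variable, extract that variable and its gradients from all GPUs, ((grad0_gpu0, var0_gpu0), ..., (grad0_gpun, var0_gpun)), and average the gradients across the GPUs.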
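The regrouping described in these steps, followed by the averaging that the function below performs, can be sketched in plain Python. The scalar gradients and the two variable names are made-up toy values; real gradients are tensors.

```python
# Hypothetical toy input: two towers (GPUs), two variables, scalar gradients.
tower_grads = [
    [(1.0, 'conv1/weights'), (10.0, 'conv1/biases')],  # tower 0
    [(3.0, 'conv1/weights'), (20.0, 'conv1/biases')],  # tower 1
]

# Regroup so that each item holds one variable as seen by every tower.
per_variable = list(zip(*tower_grads))

# Average each variable's gradients across towers; keep the first tower's variable.
averaged = []
for grad_and_vars in per_variable:
    grads = [g for g, _ in grad_and_vars]
    averaged.append((sum(grads) / len(grads), grad_and_vars[0][1]))

print(per_variable[0])
print(averaged)
```

`zip(*tower_grads)` transposes the list of lists: the outer index changes from "which GPU" to "which variable", which is what makes the per-variable average a simple loop.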

# Average the gradients, combining the gradients that come from all GPUs
def average_gradients(tower_grads):
    """Calculate the average gradient for each shared variable across all towers.

    Note that this function provides a synchronization point across all towers
    (all GPUs have to finish before the average can be computed).

    Args:
      tower_grads: List of lists of (gradient, variable) tuples. The outer list
        has one entry per tower (GPU); each inner list holds that tower's
        (gradient, variable) pairs for the whole model.
    Returns:
      List of pairs of (gradient, variable) where the gradient has been averaged
      across all towers.
    """
    """Example
    With two GPUs there are two towers. In this example, tower_grads contains the following:
    tower_grads = [[tower0_grads], [tower1_grads]] >>> the variable gradients of the first GPU and of the second GPU, held together in one large outer list;
    each tower-n_grads is itself a smaller inner list that contains the gradients and variables of the whole model.
    [tower-n_grads] = [(grad0, variable0), ..., (gradn, variablen)]
    # Print the input to inspect it

>>> tower_grads:

[

[

(<tf.Tensor 'tower_0/gradients/tower_0/conv1/Conv2D_grad/tuple/control_dependency_1:0' shape=(5, 5, 3, 64) dtype=float32>, <tf.Variable 'conv1/weights:0' shape=(5, 5, 3, 64) dtype=float32_ref>),

(<tf.Tensor 'tower_0/gradients/tower_0/conv1/BiasAdd_grad/tuple/control_dependency_1:0' shape=(64,) dtype=float32>, <tf.Variable 'conv1/biases:0' shape=(64,) dtype=float32_ref>),

(<tf.Tensor 'tower_0/gradients/tower_0/conv2/Conv2D_grad/tuple/control_dependency_1:0' shape=(5, 5, 64, 64) dtype=float32>, <tf.Variable 'conv2/weights:0' shape=(5, 5, 64, 64) dtype=float32_ref>),

(<tf.Tensor 'tower_0/gradients/tower_0/conv2/BiasAdd_grad/tuple/control_dependency_1:0' shape=(64,) dtype=float32>, <tf.Variable 'conv2/biases:0' shape=(64,) dtype=float32_ref>),

(<tf.Tensor 'tower_0/gradients/AddN_1:0' shape=(2304, 384) dtype=float32>, <tf.Variable 'local3/weights:0' shape=(2304, 384) dtype=float32_ref>),

(<tf.Tensor 'tower_0/gradients/tower_0/local3/add_grad/tuple/control_dependency_1:0' shape=(384,) dtype=float32>, <tf.Variable 'local3/biases:0' shape=(384,) dtype=float32_ref>),

(<tf.Tensor 'tower_0/gradients/AddN:0' shape=(384, 192) dtype=float32>, <tf.Variable 'local4/weights:0' shape=(384, 192) dtype=float32_ref>),

(<tf.Tensor 'tower_0/gradients/tower_0/local4/add_grad/tuple/control_dependency_1:0' shape=(192,) dtype=float32>, <tf.Variable 'local4/biases:0' shape=(192,) dtype=float32_ref>),

(<tf.Tensor 'tower_0/gradients/tower_0/softmax_linear/MatMul_grad/tuple/control_dependency_1:0' shape=(192, 10) dtype=float32>, <tf.Variable 'softmax_linear/weights:0' shape=(192, 10) dtype=float32_ref>),

(<tf.Tensor 'tower_0/gradients/tower_0/softmax_linear/softmax_linear_grad/tuple/control_dependency_1:0' shape=(10,) dtype=float32>, <tf.Variable 'softmax_linear/biases:0' shape=(10,) dtype=float32_ref>)],

[

(<tf.Tensor 'tower_1/gradients/tower_1/conv1/Conv2D_grad/tuple/control_dependency_1:0' shape=(5, 5, 3, 64) dtype=float32>, <tf.Variable 'conv1/weights:0' shape=(5, 5, 3, 64) dtype=float32_ref>),

(<tf.Tensor 'tower_1/gradients/tower_1/conv1/BiasAdd_grad/tuple/control_dependency_1:0' shape=(64,) dtype=float32>, <tf.Variable 'conv1/biases:0' shape=(64,) dtype=float32_ref>),

(<tf.Tensor 'tower_1/gradients/tower_1/conv2/Conv2D_grad/tuple/control_dependency_1:0' shape=(5, 5, 64, 64) dtype=float32>, <tf.Variable 'conv2/weights:0' shape=(5, 5, 64, 64) dtype=float32_ref>),

(<tf.Tensor 'tower_1/gradients/tower_1/conv2/BiasAdd_grad/tuple/control_dependency_1:0' shape=(64,) dtype=float32>, <tf.Variable 'conv2/biases:0' shape=(64,) dtype=float32_ref>),

(<tf.Tensor 'tower_1/gradients/AddN_1:0' shape=(2304, 384) dtype=float32>, <tf.Variable 'local3/weights:0' shape=(2304, 384) dtype=float32_ref>),

(<tf.Tensor 'tower_1/gradients/tower_1/local3/add_grad/tuple/control_dependency_1:0' shape=(384,) dtype=float32>, <tf.Variable 'local3/biases:0' shape=(384,) dtype=float32_ref>),

(<tf.Tensor 'tower_1/gradients/AddN:0' shape=(384, 192) dtype=float32>, <tf.Variable 'local4/weights:0' shape=(384, 192) dtype=float32_ref>),

(<tf.Tensor 'tower_1/gradients/tower_1/local4/add_grad/tuple/control_dependency_1:0' shape=(192,) dtype=float32>, <tf.Variable 'local4/biases:0' shape=(192,) dtype=float32_ref>),

(<tf.Tensor 'tower_1/gradients/tower_1/softmax_linear/MatMul_grad/tuple/control_dependency_1:0' shape=(192, 10) dtype=float32>, <tf.Variable 'softmax_linear/weights:0' shape=(192, 10) dtype=float32_ref>),

(<tf.Tensor 'tower_1/gradients/tower_1/softmax_linear/softmax_linear_grad/tuple/control_dependency_1:0' shape=(10,) dtype=float32>, <tf.Variable 'softmax_linear/biases:0' shape=(10,) dtype=float32_ref>)

]

]

"""

    average_grads = []
    # zip(*tower_grads) regroups the per-tower lists by variable
    for grad_and_vars in zip(*tower_grads):  # loop over the variables
        # grad_and_vars: ((grad0_gpu0, var0_gpu0), ... , (grad0_gpuN, var0_gpuN))
        # that is, var0 and its gradient on each GPU; in this example:

#((<tf.Tensor 'tower_0/gradients/tower_0/conv1/Conv2D_grad/tuple/control_dependency_1:0' shape=(5, 5, 3, 64) dtype=float32>, <tf.Variable 'conv1/weights:0' shape=(5, 5, 3, 64) dtype=float32_ref>),

#(<tf.Tensor 'tower_1/gradients/tower_1/conv1/Conv2D_grad/tuple/control_dependency_1:0' shape=(5, 5, 3, 64) dtype=float32>, <tf.Variable 'conv1/weights:0' shape=(5, 5, 3, 64) dtype=float32_ref>))

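        grads = []
        for g, _ in grad_and_vars:  # gather the gradients of the same variable from all GPUs
            # Add 0 dimension to the gradients to represent the tower.
            expanded_g = tf.expand_dims(g, 0)

            # Append on a 'tower' dimension which we will average over below.
            grads.append(expanded_g)

        # Average over the 'tower' dimension.
        grad = tf.concat(axis=0, values=grads)  # stack the per-GPU gradients along the tower dimension
        grad = tf.reduce_mean(grad, 0)  # mean over the towers: the averaged gradient of this variable

        # The variables are shared across towers, so they are redundant; it is
        # enough to return the first tower's reference to the variable.
        v = grad_and_vars[0][1]  # the variable as seen by the first tower
        grad_and_var = (grad, v)  # tuple of the averaged gradient and the variable reference
        average_grads.append(grad_and_var)  # one entry per variable
    return average_grads

"""Finally, inspect the returned value
>>> print(average_grads)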

[(<tf.Tensor 'Mean:0' shape=(5, 5, 3, 64) dtype=float32>, <tf.Variable 'conv1/weights:0' shape=(5, 5, 3, 64) dtype=float32_ref>),

(<tf.Tensor 'Mean_1:0' shape=(64,) dtype=float32>, <tf.Variable 'conv1/biases:0' shape=(64,) dtype=float32_ref>),

(<tf.Tensor 'Mean_2:0' shape=(5, 5, 64, 64) dtype=float32>, <tf.Variable 'conv2/weights:0' shape=(5, 5, 64, 64) dtype=float32_ref>),

(<tf.Tensor 'Mean_3:0' shape=(64,) dtype=float32>, <tf.Variable 'conv2/biases:0' shape=(64,) dtype=float32_ref>),

(<tf.Tensor 'Mean_4:0' shape=(2304, 384) dtype=float32>, <tf.Variable 'local3/weights:0' shape=(2304, 384) dtype=float32_ref>),

(<tf.Tensor 'Mean_5:0' shape=(384,) dtype=float32>, <tf.Variable 'local3/biases:0' shape=(384,) dtype=float32_ref>),

(<tf.Tensor 'Mean_6:0' shape=(384, 192) dtype=float32>, <tf.Variable 'local4/weights:0' shape=(384, 192) dtype=float32_ref>),

(<tf.Tensor 'Mean_7:0' shape=(192,) dtype=float32>, <tf.Variable 'local4/biases:0' shape=(192,) dtype=float32_ref>),

(<tf.Tensor 'Mean_8:0' shape=(192, 10) dtype=float32>, <tf.Variable 'softmax_linear/weights:0' shape=(192, 10) dtype=float32_ref>),

(<tf.Tensor 'Mean_9:0' shape=(10,) dtype=float32>, <tf.Variable 'softmax_linear/biases:0' shape=(10,) dtype=float32_ref>)

]

As shown, these are the gradients averaged over the GPUs, each paired with its variable

"""

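4. Training

The training part mainly covers building the computation graph and defining the training parameters and the optimizer, among other steps.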
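The learning-rate schedule that the training function sets up (exponential decay with `staircase=True`) can be reproduced in plain Python. The constants below are assumed values for the corresponding `cifar10.py` settings and for the flags, chosen to match the two-GPU, batch=128 setup mentioned earlier; check them against your own copy of the code.

```python
# Assumed values; in the script they come from cifar10.py and from FLAGS.
NUM_EXAMPLES_PER_EPOCH_FOR_TRAIN = 50000
NUM_EPOCHS_PER_DECAY = 350.0
INITIAL_LEARNING_RATE = 0.1
LEARNING_RATE_DECAY_FACTOR = 0.1
BATCH_SIZE = 128
NUM_GPUS = 2

# Same arithmetic as in train(): one step consumes BATCH_SIZE * NUM_GPUS examples.
num_batches_per_epoch = NUM_EXAMPLES_PER_EPOCH_FOR_TRAIN / BATCH_SIZE / NUM_GPUS
decay_steps = int(num_batches_per_epoch * NUM_EPOCHS_PER_DECAY)

def staircase_lr(global_step):
    """Staircase decay: initial_rate * decay_factor ** floor(global_step / decay_steps)."""
    exponent = global_step // decay_steps
    return INITIAL_LEARNING_RATE * LEARNING_RATE_DECAY_FACTOR ** exponent

for step in (0, decay_steps - 1, decay_steps, 2 * decay_steps):
    print(step, staircase_lr(step))
```

Dividing by the number of GPUs matters: with more GPUs each step covers more examples, so an epoch takes fewer steps and the decay boundary arrives at a smaller step count.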

def train():
    """Train CIFAR-10 for a number of steps."""
    with tf.Graph().as_default(), tf.device('/cpu:0'):
        # Create a variable to count the number of train() calls. This equals the
        # number of batches processed * FLAGS.num_gpus.
        global_step = tf.get_variable(
            'global_step', [],
            initializer=tf.constant_initializer(0), trainable=False)

        # Calculate the learning rate schedule.
        num_batches_per_epoch = (cifar10.NUM_EXAMPLES_PER_EPOCH_FOR_TRAIN /
                                 FLAGS.batch_size / FLAGS.num_gpus)
        decay_steps = int(num_batches_per_epoch * cifar10.NUM_EPOCHS_PER_DECAY)

        # Decay the learning rate exponentially based on the number of steps.
        lr = tf.train.exponential_decay(cifar10.INITIAL_LEARNING_RATE,
                                        global_step,
                                        decay_steps,
                                        cifar10.LEARNING_RATE_DECAY_FACTOR,
                                        staircase=True)

        # Create an optimizer that performs gradient descent.
        opt = tf.train.GradientDescentOptimizer(lr)

        #------- above: training parameters and optimizer -------#

        # Batched image and label inputs
        images, labels = cifar10.distorted_inputs()
        batch_queue = tf.contrib.slim.prefetch_queue.prefetch_queue(
            [images, labels], capacity=2 * FLAGS.num_gpus)

        # Compute the gradients on each GPU and collect them in tower_grads.
        tower_grads = []
        with tf.variable_scope(tf.get_variable_scope()):
            for i in xrange(FLAGS.num_gpus):
                with tf.device('/gpu:%d' % i):
                    with tf.name_scope('%s_%d' % (cifar10.TOWER_NAME, i)) as scope:
                        # Dequeues one batch for the GPU
                        image_batch, label_batch = batch_queue.dequeue()
                        # Calculate the loss for one tower of the CIFAR model. This function
                        # constructs the entire CIFAR model but shares the variables across
                        # all towers.
                        loss = tower_loss(scope, image_batch, label_batch)

                        # Reuse variables for the next tower.
                        tf.get_variable_scope().reuse_variables()

                        # Retain the summaries from the final tower.
                        summaries = tf.get_collection(tf.GraphKeys.SUMMARIES, scope)

                        # Calculate the gradients for the batch of data on this CIFAR tower.
                        grads = opt.compute_gradients(loss)

                        # Keep track of the gradients across all towers.
                        tower_grads.append(grads)

        # Compute the mean of each gradient. Note that this is the
        # synchronization point across all towers.
        grads = average_gradients(tower_grads)

        # Show the learning rate in TensorBoard.
        summaries.append(tf.summary.scalar('learning_rate', lr))

        # Add TensorBoard histograms for the gradients.
        for grad, var in grads:
            if grad is not None:
                summaries.append(tf.summary.histogram(var.op.name + '/gradients', grad))

        # Apply the averaged gradients to update the shared variables.
        apply_gradient_op = opt.apply_gradients(grads, global_step=global_step)

        # Add histograms for the trainable variables.
        for var in tf.trainable_variables():
            summaries.append(tf.summary.histogram(var.op.name, var))

        # Track the moving averages of all trainable variables.
        variable_averages = tf.train.ExponentialMovingAverage(
            cifar10.MOVING_AVERAGE_DECAY, global_step)
        variables_averages_op = variable_averages.apply(tf.trainable_variables())

        # Group all updates into a single train op.
        train_op = tf.group(apply_gradient_op, variables_averages_op)

        # Create a saver for checkpoints.
        saver = tf.train.Saver(tf.global_variables())

        # Build the summary op from the collected summaries.
        summary_op = tf.summary.merge(summaries)

        # Op that initializes all variables.
        init = tf.global_variables_initializer()

        # Start running operations on the Graph. allow_soft_placement must be set to
        # True to build towers on GPU, as some of the ops do not have GPU
        # implementations.
        sess = tf.Session(config=tf.ConfigProto(
            allow_soft_placement=True,
            log_device_placement=FLAGS.log_device_placement))
        sess.run(init)

        # Start the queue runners.
        tf.train.start_queue_runners(sess=sess)

        # Record the training process for visualization in TensorBoard.
        summary_writer = tf.summary.FileWriter(FLAGS.train_dir, sess.graph)

        # Train for max_steps iterations, reporting time and loss.
        for step in xrange(FLAGS.max_steps):
            start_time = time.time()
            _, loss_value = sess.run([train_op, loss])  # loss is the last tower's loss
            duration = time.time() - start_time

            assert not np.isnan(loss_value), 'Model diverged with loss = NaN'

            #------- below: what is reported at the different check steps -------#
            if step % 10 == 0:
                num_examples_per_step = FLAGS.batch_size * FLAGS.num_gpus
                examples_per_sec = num_examples_per_step / duration
                sec_per_batch = duration / FLAGS.num_gpus

                format_str = ('%s: step %d, loss = %.2f (%.1f examples/sec; %.3f '
                              'sec/batch)')
                print(format_str % (datetime.now(), step, loss_value,
                                    examples_per_sec, sec_per_batch))

            if step % 100 == 0:
                summary_str = sess.run(summary_op)
                summary_writer.add_summary(summary_str, step)

            # Save the model checkpoint periodically.
            if step % 1000 == 0 or (step + 1) == FLAGS.max_steps:
                checkpoint_path = os.path.join(FLAGS.train_dir, 'model.ckpt')
                saver.save(sess, checkpoint_path, global_step=step)

# Note: this code is long; when running it, check that the indentation (tabs versus spaces) is correct
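The throughput figures that the training loop prints every 10 steps follow from simple arithmetic, sketched here in plain Python. The helper name `throughput` and the 0.5 s step duration are made-up illustrations, not measurements.

```python
def throughput(batch_size, num_gpus, duration):
    """Mirror the logging arithmetic of the training loop."""
    num_examples_per_step = batch_size * num_gpus       # every GPU consumes one batch per step
    examples_per_sec = num_examples_per_step / duration
    sec_per_batch = duration / num_gpus                 # time attributed to a single batch
    return examples_per_sec, sec_per_batch

# Hypothetical timing: the same 0.5 s step on one GPU and on two GPUs.
one_gpu = throughput(128, 1, 0.5)
two_gpus = throughput(128, 2, 0.5)
print(one_gpu, two_gpus)
```

If a step takes about as long on two GPUs as on one, examples/sec doubles, which is the kind of near-2x speedup reported at the top of this post.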

Launch training from the main function

def main(argv=None):  # pylint: disable=unused-argument
    cifar10.maybe_download_and_extract()  # download the data if it is missing; this function lives in cifar10.py
    if tf.gfile.Exists(FLAGS.train_dir):
        tf.gfile.DeleteRecursively(FLAGS.train_dir)
    tf.gfile.MakeDirs(FLAGS.train_dir)
    train()


if __name__ == '__main__':
    tf.app.run()

# Now the script is ready to run
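Note that `main` wipes `train_dir` before training, so earlier logs and checkpoints in that directory are lost on every run. The same reset pattern is sketched here with the Python standard library instead of `tf.gfile`; the helper `reset_dir` and the temporary paths are illustrative assumptions.

```python
import os
import shutil
import tempfile

def reset_dir(path):
    """Standard-library analogue of the Exists / DeleteRecursively / MakeDirs sequence."""
    if os.path.exists(path):
        shutil.rmtree(path)
    os.makedirs(path)

# Demonstrate on a throwaway directory that contains a stale file.
base = tempfile.mkdtemp()
train_dir = os.path.join(base, 'cifar10_train')
os.makedirs(train_dir)
open(os.path.join(train_dir, 'old_event_file'), 'w').close()

reset_dir(train_dir)
remaining = os.listdir(train_dir)  # contents after the reset
print(remaining)

shutil.rmtree(base)  # clean up the throwaway directory
```

If you want to keep results from earlier runs, point `train_dir` at a fresh directory for each run.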

References:

Demo: https://github.com/tensorflow/models/blob/master/tutorials/image/cifar10/cifar10_multi_gpu_train.py

https://blog.csdn.net/lqfarmer/article/details/70339330

https://blog.csdn.net/weixin_40546602/article/details/81414321

https://blog.csdn.net/guotong1988/article/details/74355637