Python實現bing必應桌布下載

阿新 • • 發佈:2018-12-03

一、爬取分析

必應桌布:https://bing.ioliu.cn/

這裡共有1~84頁,每頁12張圖片

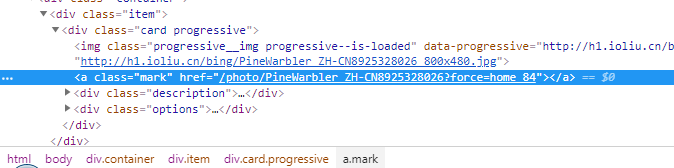

對於圖片的地址分析:

首先是首頁能獲取到圖片名稱:/photo/PoniesWales_EN-AU12228719072?force=home_2

在通過對圖片詳情頁分析:通過對地址拼接就能得到圖片下載地址

http://h1.ioliu.cn/bing/PoniesWales_EN-AU12228719072_1920x1080.jpg

二、原始碼分享

import reimport sys import os import requests import urllib.request from bs4 import BeautifulSoup # get all image pae 1~84 def allpage(): for i in range(2,84): url = 'https://bing.ioliu.cn/?p=' + str(i) imageitem(url) # print (url) # get each image item def imageitem(url):# url = "https://bing.ioliu.cn/?p=2" html_doc = urllib.request.urlopen(url).read() # html_doc = html_doc.decode('utf-8') soup = BeautifulSoup(html_doc,"html.parser",from_encoding="UTF-8") links = soup.select('.container .item .mark') for link in links: s = link['href'] down(strhandler(s))def strhandler(s): # s = '/photo/PoniesWales_EN-AU12228719072?force=home_2' l = s.find('?') s = s[7:l] # print (s) return s # download image def down(name): url = 'http://h1.ioliu.cn//bing/'+str(name)+'_1920x1080.jpg' headers = { 'Accept': '*/*', 'Accept-Encoding': 'gzip, deflate, br', 'Accept-Language': 'zh-CN,zh;q=0.8,zh-TW;q=0.7,zh-HK;q=0.5,en-US;q=0.3,en;q=0.2', 'Connection': 'keep-alive', 'Referer': url, 'User-agent': 'Mozilla/5.0 (Windows NT 10.0; WOW 64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/55.0.2883.87 Safari/537.36 QIHU 360SE' } r = requests.get(url,headers=headers) path = 'photos/'+name+'.jpg' print (r) print (' downing.. '+path) with open(path, "wb") as code: code.write(r.content) def main(): allpage() # Test allpage() # down('PoniesWales_EN-AU12228719072')

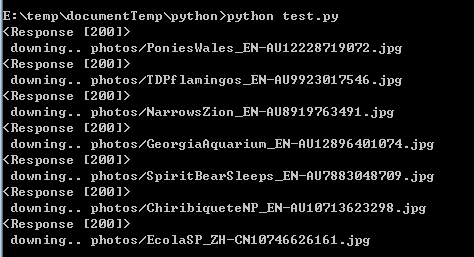

三、結果

成功下載,在photots下生成檔案

四、注意

1、bing網站的反爬蟲

需要設定headers進行模擬,在requests中進行設定:

headers = { 'Accept': '*/*', 'Accept-Encoding': 'gzip, deflate, br', 'Accept-Language': 'zh-CN,zh;q=0.8,zh-TW;q=0.7,zh-HK;q=0.5,en-US;q=0.3,en;q=0.2', 'Connection': 'keep-alive', 'Referer': url, 'User-agent': 'Mozilla/5.0 (Windows NT 10.0; WOW 64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/55.0.2883.87 Safari/537.36 QIHU 360SE' } r = requests.get(url,headers=headers)

成功後:

2、效率問題

由於未使用爬蟲優化,所以速度比較慢

單執行緒爬取

可以採用多執行緒進行同時爬取,以加快速度。