Implementing ID Card OCR Recognition with OpenCV

阿新 • Published: 2018-12-07

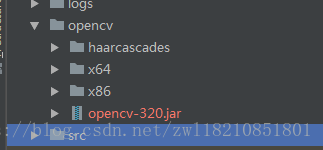

To set up OpenCV in IDEA, see: https://blog.csdn.net/zwl18210851801/article/details/81075781
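If the native library is not on `java.library.path`, `System.loadLibrary` throws an `UnsatisfiedLinkError`. The following is a minimal diagnostic sketch, not part of the original project: it searches a library path for the platform-specific file name of a library. The class name `NativeLibLocator` and the library name `opencv_java342` are hypothetical; in a real project the name would come from `Core.NATIVE_LIBRARY_NAME`, which depends on the installed OpenCV version.

```java
import java.io.File;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Optional;

// Hypothetical helper: not part of OpenCV or of the original article.
final class NativeLibLocator {

    /**
     * Searches each directory of a library path string for the platform-specific
     * file name of the given library (System.mapLibraryName adds the prefix/suffix).
     */
    static Optional<Path> find(String libraryPath, String libraryName) {
        String fileName = System.mapLibraryName(libraryName);
        for (String dir : libraryPath.split(File.pathSeparator)) {
            if (dir.isEmpty()) {
                continue;
            }
            Path candidate = Paths.get(dir, fileName);
            if (Files.isRegularFile(candidate)) {
                return Optional.of(candidate);
            }
        }
        return Optional.empty();
    }

    public static void main(String[] args) {
        // "opencv_java342" is a placeholder; pass the real library name as the first argument.
        String name = args.length > 0 ? args[0] : "opencv_java342";
        String path = System.getProperty("java.library.path", "");
        Optional<Path> found = find(path, name);
        if (found.isPresent()) {
            System.out.println("Found: " + found.get());
        } else {
            System.out.println("Not found on java.library.path: " + System.mapLibraryName(name));
        }
    }
}
```

Running this with the same `-Djava.library.path=...` VM option as the application shows whether the option actually points at the directory that holds the native file.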

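OpenCV location (the code expects an `opencv` directory under the project root, with the native library in `opencv\x64` and the cascade files in `opencv\haarcascades`):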

The OpencvUtil class:

package com.xinjian.x.common.utils;

import org.opencv.core.*;
import org.opencv.core.Point;
import org.opencv.imgcodecs.Imgcodecs;
import org.opencv.imgproc.Imgproc;
import org.opencv.objdetect.CascadeClassifier;

import javax.imageio.ImageIO;
import java.awt.*;
import java.awt.image.BufferedImage;
import java.awt.image.DataBufferByte;
import java.io.ByteArrayInputStream;
import java.io.InputStream;
import java.util.ArrayList;
import java.util.Collections;
import java.util.Comparator;
import java.util.List;

public class OpencvUtil {

    private static final int BLACK = 0;
    private static final int WHITE = 255;

    /**
     * Convert to grayscale.
     */
    public static Mat gray(Mat mat) {
        Mat gray = new Mat();
        Imgproc.cvtColor(mat, gray, Imgproc.COLOR_BGR2GRAY, 1);
        Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/gray.jpg", gray);
        return gray;
    }

    /**
     * Binarize (adaptive threshold, inverted so that text becomes white).
     */
    public static Mat binary(Mat mat) {
        Mat binary = new Mat();
        Imgproc.adaptiveThreshold(mat, binary, 255, Imgproc.ADAPTIVE_THRESH_MEAN_C, Imgproc.THRESH_BINARY_INV, 25, 10);
        Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/binary.jpg", binary);
        return binary;
    }

    /**
     * Blur.
     */
    public static Mat blur(Mat mat) {
        Mat blur = new Mat();
        Imgproc.blur(mat, blur, new Size(5, 5));
        Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/blur.jpg", blur);
        return blur;
    }

    /**
     * Dilate.
     */
    public static Mat dilate(Mat mat, int size) {
        Mat dilate = new Mat();
        Mat element = Imgproc.getStructuringElement(Imgproc.MORPH_RECT, new Size(size, size));
        Imgproc.dilate(mat, dilate, element, new Point(-1, -1), 1);
        Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/dilate.jpg", dilate);
        return dilate;
    }

    /**
     * Erode.
     */
    public static Mat erode(Mat mat, int size) {
        Mat erode = new Mat();
        Mat element = Imgproc.getStructuringElement(Imgproc.MORPH_RECT, new Size(size, size));
        Imgproc.erode(mat, erode, element, new Point(-1, -1), 1);
        Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/erode.jpg", erode);
        return erode;
    }

    /**
     * Edge detection.
     */
    public static Mat carry(Mat mat) {
        Mat blurred = new Mat();
        Mat dst = new Mat();
        // Reduce noise with a Gaussian smoothing filter
        Imgproc.GaussianBlur(mat, blurred, new Size(3, 3), 0);
        // Detect edges on the blurred image (the original passed the unblurred mat, discarding the blur)
        Imgproc.Canny(blurred, dst, 50, 150);
        Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/canny.jpg", dst);
        return dst;
    }

    /**
     * Contour detection.
     */
    public static List<MatOfPoint> findContours(Mat mat) {
        List<MatOfPoint> contours = new ArrayList<>();
        Mat hierarchy = new Mat();
        Imgproc.findContours(mat, contours, hierarchy, Imgproc.RETR_LIST, Imgproc.CHAIN_APPROX_SIMPLE);
        return contours;
    }

    /**
     * Face detection.
     * @return the input image if exactly one face is found, otherwise null
     */
    public static Mat face(Mat mat) {
        CascadeClassifier faceDetector = new CascadeClassifier(
                System.getProperty("user.dir") + "\\opencv\\haarcascades\\haarcascade_frontalface_alt2.xml");
        // Detect faces in the image
        MatOfRect faceDetections = new MatOfRect();
        // Minimum and maximum face size in pixels
        Size minSize = new Size(200, 200);
        Size maxSize = new Size(500, 500);
        // scaleFactor=1.1f, minNeighbors=3, flags=0
        faceDetector.detectMultiScale(mat, faceDetections, 1.1f, 3, 0, minSize, maxSize);
        Rect[] rects = faceDetections.toArray();
        if (rects != null && rects.length == 1) {
            // Draw a rectangle around the detected face (disabled)
            Rect rect = rects[0];
            /* Imgproc.rectangle(mat, new Point(rect.x, rect.y), new Point(rect.x + rect.width, rect.y + rect.height), new Scalar(0));
            Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/face.jpg", mat);*/
            return mat;
        } else {
            return null;
        }
    }

    /**
     * Run face detection repeatedly, rotating the image until a face is found.
     * @return the rotated image containing a face, or null if none is found
     */
    public static Mat faceLoop(Mat src) {
        Mat face = null;
        // Start with 90-degree steps; if no face is found, retry with 60- and 30-degree steps
        int k = 90;
        while (k > 0) {
            for (int i = 0; i < 360 / k; i++) {
                // Always rotate the original image so that rotations do not accumulate
                // (the original rotated the already-rotated image by an increasing angle)
                Mat candidate = (i == 0) ? src : rotate3(src, i * k);
                face = OpencvUtil.face(candidate);
                if (face != null) {
                    break;
                }
            }
            if (face != null) {
                break;
            } else {
                k = k - 30;
            }
        }
        return face;
    }

    /**
     * Line detection with the probabilistic Hough transform; crops the
     * original image to the region bounded by the detected lines.
     * @param begin the original image
     * @param mat the preprocessed binary image
     */
    public static Mat houghLinesP(Mat begin, Mat mat) {
        Mat storage = new Mat();
        Imgproc.HoughLinesP(mat, storage, 1, Math.PI / 180, 10, 0, 10);
        List<double[]> lines = new ArrayList<>();
        // Draw the detected lines on mat
        for (int x = 0; x < storage.rows(); x++) {
            double[] vec = storage.get(x, 0);
            double x1 = vec[0], y1 = vec[1], x2 = vec[2], y2 = vec[3];
            Point start = new Point(x1, y1);
            Point end = new Point(x2, y2);
            // Keep lines roughly parallel to the x edge of the image
            if (Math.abs(start.y - end.y) < 5) {
                if (Math.abs(x2 - x1) > 20) {
                    lines.add(vec);
                    //Imgproc.line(mat, start, end, new Scalar(255), 10);
                }
            }
            // Keep lines roughly parallel to the y edge of the image
            if (Math.abs(start.x - end.x) < 5) {
                if (Math.abs(y2 - y1) > 20) {
                    lines.add(vec);
                    //Imgproc.line(mat, start, end, new Scalar(255), 10);
                }
            }
            Imgproc.line(mat, start, end, new Scalar(255), 10);
        }
        // Find the largest and smallest X and Y coordinates
        double maxX = 0.0, minX = 10000, minY = 10000, maxY = 0.0;
        for (int i = 0; i < lines.size(); i++) {
            double[] vec = lines.get(i);
            double x1 = vec[0], y1 = vec[1], x2 = vec[2], y2 = vec[3];
            maxX = Math.max(maxX, Math.max(x1, x2));
            minX = Math.min(minX, Math.min(x1, x2));
            maxY = Math.max(maxY, Math.max(y1, y2));
            minY = Math.min(minY, Math.min(y1, y2));
        }
        Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/houghLines.jpg", mat);
        // Crop only if the bounded region covers more than half of the image in both directions
        if ((maxX - minX) > mat.cols() * 0.5 && (maxY - minY) > mat.rows() * 0.5) {
            List<Point> list = new ArrayList<>();
            Point point1 = new Point(minX + 10, minY + 10);
            Point point2 = new Point(minX + 10, maxY - 10);
            Point point3 = new Point(maxX - 10, minY + 10);
            Point point4 = new Point(maxX - 10, maxY - 10);
            list.add(point1);
            list.add(point2);
            list.add(point3);
            list.add(point4);
            mat = shear(begin, list);
        } else {
            mat = begin;
        }
        Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/houghLinesP.jpg", mat);
        return mat;
    }

    /**
     * Return the last element of the list that differs from num by less
     * than size, or 0.0 if there is none.
     */
    public static double number(List<Double> list, Double num, int size) {
        double res = 0.0;
        for (int i = 0; i < list.size(); i++) {
            if (Math.abs(list.get(i) - num) < size) {
                res = list.get(i);
            }
        }
        return res;
    }

    /**
     * Line detection with the standard Hough transform.
     */
    public static Mat houghLines(Mat mat) {
        Mat storage = new Mat();
        Imgproc.HoughLines(mat, storage, 1, Math.PI / 180, 50, 0, 0, 0, 1);
        for (int x = 0; x < storage.rows(); x++) {
            double[] vec = storage.get(x, 0);
            double rho = vec[0];
            double theta = vec[1];
            Point pt1 = new Point();
            Point pt2 = new Point();
            double a = Math.cos(theta);
            double b = Math.sin(theta);
            double x0 = a * rho;
            double y0 = b * rho;
            pt1.x = Math.round(x0 + 1000 * (-b));
            pt1.y = Math.round(y0 + 1000 * (a));
            pt2.x = Math.round(x0 - 1000 * (-b));
            pt2.y = Math.round(y0 - 1000 * (a));
            if (theta >= 0) {
                Imgproc.line(mat, pt1, pt2, new Scalar(255), 3);
            }
        }
        Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/houghLines.jpg", mat);
        return mat;
    }

    /**
     * Crop the image to the bounding rectangle of four points.
     */
    public static Mat shear(Mat mat, List<Point> pointList) {
        int x = minX(pointList);
        int y = minY(pointList);
        // Limit the width and height so the rectangle stays inside the image
        int xl = xLength(pointList) > mat.cols() - x ? mat.cols() - x : xLength(pointList);
        int yl = yLength(pointList) > mat.rows() - y ? mat.rows() - y : yLength(pointList);
        Rect re = new Rect(x, y, xl, yl);
        Mat shear = new Mat(mat, re);
        return shear;
    }

    /**
     * Rotate the image by the given angle in degrees, enlarging the canvas
     * so that nothing is cut off.
     */
    public static Mat rotate3(Mat splitImage, double angle) {
        double theta = angle * Math.PI / 180;
        double a = Math.sin(theta);
        double b = Math.cos(theta);
        int wsrc = splitImage.width();
        int hsrc = splitImage.height();
        int wdst = (int) (hsrc * Math.abs(a) + wsrc * Math.abs(b));
        int hdst = (int) (wsrc * Math.abs(a) + hsrc * Math.abs(b));
        Mat imgDst = new Mat(hdst, wdst, splitImage.type());
        Point pt = new Point(splitImage.cols() / 2, splitImage.rows() / 2);
        // Get the affine transformation matrix
        Mat affineTrans = Imgproc.getRotationMatrix2D(pt, angle, 1.0);
        //System.out.println(affineTrans.dump());
        // Adjust the third column (translation) of the matrix to centre the result
        affineTrans.put(0, 2, affineTrans.get(0, 2)[0] + (wdst - wsrc) / 2);
        affineTrans.put(1, 2, affineTrans.get(1, 2)[0] + (hdst - hsrc) / 2);
        Imgproc.warpAffine(splitImage, imgDst, affineTrans, imgDst.size(),
                Imgproc.INTER_CUBIC | Imgproc.WARP_FILL_OUTLIERS);
        return imgDst;
    }

    /**
     * Histogram equalization, applied to each channel separately.
     */
    public static Mat equalizeHist(Mat mat) {
        Mat dst = new Mat();
        List<Mat> mv = new ArrayList<>();
        Core.split(mat, mv);
        for (int i = 0; i < mat.channels(); i++) {
            Imgproc.equalizeHist(mv.get(i), mv.get(i));
        }
        Core.merge(mv, dst);
        return dst;
    }

    /**
     * 8-neighborhood (3x3 grid) denoising: if the centre of a 3x3 grid is
     * surrounded by the opposite color, it is assimilated.
     * @param pNum threshold, default 1
     */
    public static Mat navieRemoveNoise(Mat mat, int pNum) {
        int i, j, m, n, nValue, nCount;
        int nWidth = mat.cols();
        int nHeight = mat.rows();
        /* // Preprocess the image borders
        for (i = 0; i < nWidth; ++i) { mat.put(i, 0, WHITE); mat.put(i, nHeight - 1, WHITE); }
        for (i = 0; i < nHeight; ++i) { mat.put(0, i, WHITE); mat.put(nWidth - 1, i, WHITE); }*/
        for (j = 1; j < nHeight - 1; ++j) {
            for (i = 1; i < nWidth - 1; ++i) {
                nValue = (int) mat.get(j, i)[0];
                // Count the black pixels in the 3x3 grid centred on (j, i), centre included
                nCount = 0;
                for (m = j - 1; m <= j + 1; ++m) {
                    for (n = i - 1; n <= i + 1; ++n) {
                        if ((int) mat.get(m, n)[0] == 0) {
                            nCount++;
                        }
                    }
                }
                if (nValue == 0) {
                    if (nCount <= pNum) {
                        // A black pixel with at most pNum black pixels in its grid becomes white
                        mat.put(j, i, WHITE);
                    }
                } else {
                    if (nCount >= 7) {
                        // A non-black pixel with at least 7 black neighbors becomes black
                        mat.put(j, i, BLACK);
                    }
                }
            }
        }
        return mat;
    }

    /**
     * Connected-component denoising.
     * Note: labels are stored as 8-bit pixel values, so only up to 254
     * components can be told apart.
     * @param pArea area threshold, default 1
     */
    public static Mat contoursRemoveNoise(Mat mat, double pArea) {
        //mat=floodFill(mat,mat.new Point(mat.cols()/2,mat.rows()/2),new Color(225,0,0));
        int i, j, color = 1;
        int nWidth = mat.cols(), nHeight = mat.rows();
        for (i = 0; i < nWidth; ++i) {
            for (j = 0; j < nHeight; ++j) {
                if ((int) mat.get(j, i)[0] == BLACK) {
                    // Fill each connected region of black pixels with its own color.
                    // floodFill paints all pixels connected to the seed point until the region is filled.
                    Imgproc.floodFill(mat, new Mat(), new Point(i, j), new Scalar(color));
                    color++;
                }
            }
        }
        // Count the pixels of each color
        int[] ColorCount = new int[255];
        for (i = 0; i < nWidth; ++i) {
            for (j = 0; j < nHeight; ++j) {
                if ((int) mat.get(j, i)[0] != 255) {
                    ColorCount[(int) mat.get(j, i)[0] - 1]++;
                }
            }
        }
        // Remove the noise: regions no larger than pArea become white
        for (i = 0; i < nWidth; ++i) {
            for (j = 0; j < nHeight; ++j) {
                if (ColorCount[(int) mat.get(j, i)[0] - 1] <= pArea) {
                    mat.put(j, i, WHITE);
                }
            }
        }
        // Turn the remaining labelled regions back to black
        for (i = 0; i < nWidth; ++i) {
            for (j = 0; j < nHeight; ++j) {
                if ((int) mat.get(j, i)[0] < WHITE) {
                    mat.put(j, i, BLACK);
                }
            }
        }
        return mat;
    }

    /**
     * Convert a Mat to a BufferedImage.
     * @param matrix the Mat to convert
     * @param fileExtension format such as ".jpg" or ".png"
     */
    public static BufferedImage Mat2BufImg(Mat matrix, String fileExtension) {
        MatOfByte mob = new MatOfByte();
        Imgcodecs.imencode(fileExtension, matrix, mob);
        byte[] byteArray = mob.toArray();
        BufferedImage bufImage = null;
        try {
            InputStream in = new ByteArrayInputStream(byteArray);
            bufImage = ImageIO.read(in);
        } catch (Exception e) {
            e.printStackTrace();
        }
        return bufImage;
    }

    /**
     * Convert a BufferedImage to a Mat.
     * @param original the BufferedImage to convert
     * @param imgType BufferedImage type, e.g. BufferedImage.TYPE_3BYTE_BGR
     * @param matType Mat type to produce, e.g. CvType.CV_8UC3
     */
    public static Mat BufImg2Mat(BufferedImage original, int imgType, int matType) {
        if (original == null) {
            throw new IllegalArgumentException("original == null");
        }
        if (original.getType() != imgType) {
            BufferedImage image = new BufferedImage(original.getWidth(), original.getHeight(), imgType);
            Graphics2D g = image.createGraphics();
            try {
                g.setComposite(AlphaComposite.Src);
                g.drawImage(original, 0, 0, null);
            } finally {
                g.dispose();
            }
            // Use the converted image (the original code discarded it)
            original = image;
        }
        DataBufferByte dbi = (DataBufferByte) original.getRaster().getDataBuffer();
        byte[] pixels = dbi.getData();
        Mat mat = new Mat(original.getHeight(), original.getWidth(), matType);
        mat.put(0, 0, pixels);
        return mat;
    }

    /**
     * Eye detection.
     * @return the top-left corners of the two detected eyes, or null unless exactly two are found
     */
    public static List<Point> eye(Mat mat) {
        List<Point> eyeList = new ArrayList<>();
        CascadeClassifier eyeDetector = new CascadeClassifier(
                System.getProperty("user.dir") + "\\opencv\\haarcascades\\haarcascade_eye.xml");
        // Detect eyes in the image
        MatOfRect eyeDetections = new MatOfRect();
        // Minimum and maximum eye size in pixels
        Size minSize = new Size(20, 20);
        Size maxSize = new Size(30, 30);
        eyeDetector.detectMultiScale(mat, eyeDetections, 1.1f, 3, 0, minSize, maxSize);
        Rect[] rects = eyeDetections.toArray();
        if (rects != null && rects.length == 2) {
            Point point1 = new Point(rects[0].x, rects[0].y);
            eyeList.add(point1);
            Point point2 = new Point(rects[1].x, rects[1].y);
            eyeList.add(point2);
        } else {
            return null;
        }
        return eyeList;
    }

    /**
     * Crop the image to the contour with the largest area.
     */
    public static Mat maxArea(Mat mat, List<MatOfPoint> contours) {
        double maxArea = 0.0;
        RotatedRect maxRect = new RotatedRect();
        MatOfPoint mp = new MatOfPoint();
        for (int i = 0; i < contours.size(); i++) {
            MatOfPoint2f mat2f = new MatOfPoint2f();
            contours.get(i).convertTo(mat2f, CvType.CV_32FC1);
            RotatedRect rect = Imgproc.minAreaRect(mat2f);
            double area = rect.boundingRect().area();
            if (area > maxArea) {
                maxArea = area;
                maxRect = rect;
                mp = contours.get(i);
            }
        }
        // Get the corner coordinates of the largest contour
        MatOfPoint2f mat2f = new MatOfPoint2f();
        mp.convertTo(mat2f, CvType.CV_32FC1);
        RotatedRect rect = Imgproc.minAreaRect(mat2f);
        Mat points = new Mat();
        Imgproc.boxPoints(rect, points);
        List<Point> pointList = getPoints(points.dump());
        // Return the cropped template image
        return shear(mat, pointList);
    }

    /**
     * Get the corner coordinates of a contour's minimum-area rectangle.
     */
    public static List<Point> getPointList(MatOfPoint contour) {
        MatOfPoint2f mat2f = new MatOfPoint2f();
        contour.convertTo(mat2f, CvType.CV_32FC1);
        RotatedRect rect = Imgproc.minAreaRect(mat2f);
        Mat points = new Mat();
        Imgproc.boxPoints(rect, points);
        return getPoints(points.dump());
    }

    /**
     * Get the area of a contour (area of the bounding rectangle of its minimum-area rectangle).
     */
    public static double area(MatOfPoint contour) {
        MatOfPoint2f mat2f = new MatOfPoint2f();
        contour.convertTo(mat2f, CvType.CV_32FC1);
        RotatedRect rect = Imgproc.minAreaRect(mat2f);
        return rect.boundingRect().area();
    }

    /**
     * Parse a list of points from the string produced by Mat.dump(),
     * e.g. "[x1, y1; x2, y2; ...]".
     */
    public static List<Point> getPoints(String str) {
        List<Point> points = new ArrayList<>();
        str = str.replace("[", "").replace("]", "");
        String[] pointStr = str.split(";");
        for (int i = 0; i < pointStr.length; i++) {
            double x = Double.parseDouble(pointStr[i].split(",")[0]);
            double y = Double.parseDouble(pointStr[i].split(",")[1]);
            Point po = new Point(x, y);
            points.add(po);
        }
        return points;
    }

    /**
     * Get the smallest X coordinate (absolute value if negative).
     */
    public static int minX(List<Point> points) {
        Collections.sort(points, new XComparator(false));
        return (int) (points.get(0).x > 0 ? points.get(0).x : -points.get(0).x);
    }

    /**
     * Get the smallest Y coordinate (absolute value if negative).
     */
    public static int minY(List<Point> points) {
        Collections.sort(points, new YComparator(false));
        return (int) (points.get(0).y > 0 ? points.get(0).y : -points.get(0).y);
    }

    /**
     * Get the largest X distance; expects exactly four points.
     */
    public static int xLength(List<Point> points) {
        Collections.sort(points, new XComparator(false));
        return (int) (points.get(3).x - points.get(0).x);
    }

    /**
     * Get the largest Y distance; expects exactly four points.
     */
    public static int yLength(List<Point> points) {
        Collections.sort(points, new YComparator(false));
        return (int) (points.get(3).y - points.get(0).y);
    }

    // Sort order for points, by X coordinate
    public static class XComparator implements Comparator<Point> {
        private boolean reverseOrder; // whether to sort in descending order

        public XComparator(boolean reverseOrder) {
            this.reverseOrder = reverseOrder;
        }

        public int compare(Point arg0, Point arg1) {
            if (reverseOrder)
                return Double.compare(arg1.x, arg0.x);
            else
                return Double.compare(arg0.x, arg1.x);
        }
    }

    // Sort order for points, by Y coordinate
    public static class YComparator implements Comparator<Point> {
        private boolean reverseOrder; // whether to sort in descending order

        public YComparator(boolean reverseOrder) {
            this.reverseOrder = reverseOrder;
        }

        public int compare(Point arg0, Point arg1) {
            if (reverseOrder)
                return Double.compare(arg1.y, arg0.y);
            else
                return Double.compare(arg0.y, arg1.y);
        }
    }
}
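The 8-neighborhood denoising in `navieRemoveNoise` is easier to follow on a plain array than on a `Mat`. The sketch below applies the same two rules to an `int[][]` image, assuming 0 is black and 255 is white. The names `NeighborDenoise` and `denoise` are illustrative only. One deliberate difference: it reads from the input and writes to a copy, whereas the method above modifies the image in place, so a pixel changed earlier in the pass can affect later decisions there.

```java
// Illustrative sketch on plain arrays; not part of the original OpencvUtil class.
final class NeighborDenoise {
    static final int BLACK = 0;
    static final int WHITE = 255;

    /** Counts black pixels in the 3x3 window centred on (row, col), centre included. */
    static int blackInWindow(int[][] img, int row, int col) {
        int count = 0;
        for (int r = row - 1; r <= row + 1; r++) {
            for (int c = col - 1; c <= col + 1; c++) {
                if (img[r][c] == BLACK) {
                    count++;
                }
            }
        }
        return count;
    }

    /**
     * Returns a denoised copy of src. Border pixels are left untouched.
     * A black pixel whose window holds at most pNum black pixels becomes white;
     * a non-black pixel whose window holds at least 7 black pixels becomes black.
     */
    static int[][] denoise(int[][] src, int pNum) {
        int h = src.length;
        int w = src[0].length;
        int[][] out = new int[h][];
        for (int r = 0; r < h; r++) {
            out[r] = src[r].clone();
        }
        for (int r = 1; r < h - 1; r++) {
            for (int c = 1; c < w - 1; c++) {
                int black = blackInWindow(src, r, c);
                if (src[r][c] == BLACK) {
                    if (black <= pNum) {
                        out[r][c] = WHITE;
                    }
                } else if (black >= 7) {
                    out[r][c] = BLACK;
                }
            }
        }
        return out;
    }

    public static void main(String[] args) {
        int[][] img = new int[5][5];
        for (int[] row : img) {
            java.util.Arrays.fill(row, WHITE);
        }
        img[2][2] = BLACK; // a single isolated speck
        int[][] cleaned = denoise(img, 1);
        System.out.println("centre before: " + img[2][2] + ", after: " + cleaned[2][2]);
    }
}
```

With `pNum = 1`, a black pixel is removed only when it is the sole black pixel in its 3x3 window, which matches the default suggested in the Javadoc above.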

The OCRUtil class:

package com.xinjian.x.common.ocr;

import net.sourceforge.tess4j.ITesseract;
import net.sourceforge.tess4j.Tesseract;
import net.sourceforge.tess4j.util.LoadLibs;

import java.awt.image.BufferedImage;
import java.io.File;

public class OCRUtil {

    /**
     * Recognize the text in an image.
     * @param img the image to recognize
     * @param language language pack name, e.g. "eng" or "chi_sim"
     * @return the recognized text, or "end" if recognition fails
     */
    public static String getImageMessage(BufferedImage img, String language) {
        String result = "end";
        try {
            ITesseract instance = new Tesseract();
            File tessDataFolder = LoadLibs.extractTessResources("tessdata");
            instance.setLanguage(language);
            instance.setDatapath(tessDataFolder.getAbsolutePath());
            result = instance.doOCR(img);
            //System.out.println(result);
        } catch (Exception e) {
            System.out.println(e.getMessage());
        }
        return result;
    }
}
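Raw OCR output usually contains stray spaces, newlines, and punctuation, which the main class removes with chained `replace` calls. As a hedged sketch of the same idea in one pass, the helper below filters the text character by character. `OcrTextCleaner` and its methods are hypothetical names and are not part of tess4j.

```java
// Hypothetical post-processing helper; not part of tess4j or the original article.
final class OcrTextCleaner {

    /** Removes every whitespace character (spaces, tabs, newlines). */
    static String stripWhitespace(String raw) {
        return raw == null ? "" : raw.replaceAll("\\s+", "");
    }

    /** Keeps only ASCII digits, e.g. for date fields. */
    static String keepDigits(String raw) {
        if (raw == null) {
            return "";
        }
        StringBuilder sb = new StringBuilder();
        for (char ch : raw.toCharArray()) {
            if (ch >= '0' && ch <= '9') {
                sb.append(ch);
            }
        }
        return sb.toString();
    }

    /** Keeps ASCII digits and the letter X (normalized to upper case), as used in ID numbers. */
    static String keepIdChars(String raw) {
        if (raw == null) {
            return "";
        }
        StringBuilder sb = new StringBuilder();
        for (char ch : raw.toCharArray()) {
            if (ch >= '0' && ch <= '9') {
                sb.append(ch);
            } else if (ch == 'x' || ch == 'X') {
                sb.append('X');
            }
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        System.out.println(keepDigits("2010.01.01-"));
        System.out.println(keepIdChars("1101 0119-9001\n01123x"));
    }
}
```

A whitelist like this is stricter than removing known bad characters one at a time, so unexpected symbols from a misread do not leak into the result.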

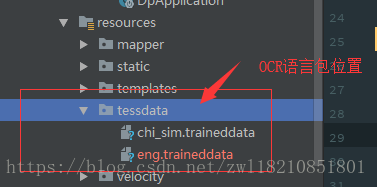

`language` is the name of the language pack, either eng or chi_sim. Note that the chi_sim language pack may not match the version of the jar.
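Tesseract language packs are files named `<language>.traineddata` inside the tessdata directory, so a missing pack can be detected before calling OCR. The sketch below only checks that the files exist; it cannot tell whether a pack's version matches the jar. `TessdataCheck` and the default directory name `tessdata` are assumptions for illustration.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

// Hypothetical pre-flight check; not part of tess4j.
final class TessdataCheck {

    /** Returns the requested languages whose "<lang>.traineddata" file is missing from tessdataDir. */
    static List<String> missingLanguages(Path tessdataDir, String... languages) {
        List<String> missing = new ArrayList<>();
        for (String lang : languages) {
            if (!Files.isRegularFile(tessdataDir.resolve(lang + ".traineddata"))) {
                missing.add(lang);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        // The directory is an assumption; pass the real tessdata path as the first argument.
        Path dir = Paths.get(args.length > 0 ? args[0] : "tessdata");
        List<String> missing = missingLanguages(dir, "eng", "chi_sim");
        if (missing.isEmpty()) {
            System.out.println("All language packs found in " + dir);
        } else {
            System.out.println("Missing language packs in " + dir + ": " + missing);
        }
    }
}
```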

Language pack download: https://download.csdn.net/download/psdnfu/5187836

<!--OCR Tesseract-->
<dependency>
    <groupId>net.java.dev.jna</groupId>
    <artifactId>jna</artifactId>
    <version>4.1.0</version>
</dependency>
<dependency>
    <groupId>net.sourceforge.tess4j</groupId>
    <artifactId>tess4j</artifactId>
    <version>2.0.1</version>
    <exclusions>
        <exclusion>
            <groupId>com.sun.jna</groupId>
            <artifactId>jna</artifactId>
        </exclusion>
    </exclusions>
</dependency>

The main method:

package com.xinjian.x.modules.orc;

import com.xinjian.x.common.ocr.OCRUtil;

import com.xinjian.x.common.utils.OpencvUtil;

import org.opencv.core.*;

import org.opencv.core.Point;

import org.opencv.imgcodecs.Imgcodecs;

import org.opencv.imgproc.Imgproc;

import java.awt.image.BufferedImage;

import java.util.ArrayList;

import java.util.List;

public class OrcTest {

static {

System.loadLibrary(Core.NATIVE_LIBRARY_NAME);

//Note: when running the program, add the following line to the VM options to point to the directory containing the OpenCV dll

//-Djava.library.path=$PROJECT_DIR$\opencv\x64

}

public static void main(String[] args){

long start=System.currentTimeMillis();

String path="D:/Users/xinjian09/Desktop/b.jpg";

//Extract the card image based on its border lines

Mat mat=lines(path);

//Recognize the front of the ID card

cardUp(mat);

//cardDown(mat);

}

/**

* Extract the feature lines

*/

public static Mat lines(String path){

Mat mat= Imgcodecs.imread(path);

//Scale the image down if it is too large (and up, below, if it is too small)

if(mat.cols()>2000){

Imgproc.resize(mat, mat,new Size(mat.cols()*0.5,mat.rows()*0.5));

}

if(mat.cols()<1000){

Imgproc.resize(mat, mat,new Size(mat.cols()*1.5,mat.rows()*1.5));

}

Mat begin=mat.clone();

//Grayscale

mat=OpencvUtil.gray(mat);

//Binarize

mat=OpencvUtil.binary(mat);

//Erode

mat=OpencvUtil.erode(mat,3);

//Find contours and erase the small ones

List<MatOfPoint> list=OpencvUtil.findContours(mat);

for(int i=0;i<list.size();i++){

double area=OpencvUtil.area(list.get(i));

if(area<5000){

Imgproc.drawContours(mat, list, i, new Scalar( 0, 0, 0), -1);

}

}

//Detect straight lines

return OpencvUtil.houghLinesP(begin,mat);

}

/**

* Recognize the back of the ID card

*/

public static void cardDown(Mat mat){

//Grayscale

mat=OpencvUtil.gray(mat);

//Binarize

mat=OpencvUtil.binary(mat);

//Erode

mat=OpencvUtil.erode(mat,3);

//Dilate

mat=OpencvUtil.dilate(mat,3);

//Check for characters of the "居民身份證" (resident ID card) heading; if found, the image is upright, otherwise rotate it

for(int i=0;i<4;i++){

String temp=temp(mat);

if(!temp.contains("居")&&!temp.contains("民")){

mat=OpencvUtil.rotate3(mat,90);

}else{

break;

}

}

Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/result.jpg", mat);

String organization=organization (mat);

System.out.print("Issuing authority: "+organization);

String time=time (mat);

System.out.print("Valid period: "+time);

}

public static String temp (Mat mat){

Point point1=new Point(mat.cols()*0.30,mat.rows()*0.25);

Point point2=new Point(mat.cols()*0.30,mat.rows()*0.25);

Point point3=new Point(mat.cols()*0.90,mat.rows()*0.45);

Point point4=new Point(mat.cols()*0.90,mat.rows()*0.45);

List<Point> list=new ArrayList<>();

list.add(point1);

list.add(point2);

list.add(point3);

list.add(point4);

Mat temp=OpencvUtil.shear(mat,list);

List<MatOfPoint> nameContours=OpencvUtil.findContours(temp);

for (int i = 0; i < nameContours.size(); i++)

{

double area=OpencvUtil.area(nameContours.get(i));

if(area<100){

Imgproc.drawContours(temp, nameContours, i, new Scalar( 0, 0, 0), -1);

}

}

Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/temp.jpg", temp);

BufferedImage nameBuffer=OpencvUtil.Mat2BufImg(temp,".jpg");

String nameStr=OCRUtil.getImageMessage(nameBuffer,"chi_sim");

nameStr=nameStr.replace("\n","");

return nameStr;

}

public static String organization (Mat mat){

Point point1=new Point(mat.cols()*0.36,mat.rows()*0.65);

Point point2=new Point(mat.cols()*0.36,mat.rows()*0.65);

Point point3=new Point(mat.cols()*0.80,mat.rows()*0.78);

Point point4=new Point(mat.cols()*0.80,mat.rows()*0.78);

List<Point> list=new ArrayList<>();

list.add(point1);

list.add(point2);

list.add(point3);

list.add(point4);

Mat name=OpencvUtil.shear(mat,list);

List<MatOfPoint> nameContours=OpencvUtil.findContours(name);

for (int i = 0; i < nameContours.size(); i++)

{

double area=OpencvUtil.area(nameContours.get(i));

if(area<100){

Imgproc.drawContours(name, nameContours, i, new Scalar( 0, 0, 0), -1);

}

}

Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/organization.jpg", name);

BufferedImage nameBuffer=OpencvUtil.Mat2BufImg(name,".jpg");

String nameStr=OCRUtil.getImageMessage(nameBuffer,"chi_sim");

nameStr=nameStr.replace("\n","");

return nameStr+"\n";

}

public static String time (Mat mat){

Point point1=new Point(mat.cols()*0.38,mat.rows()*0.80);

Point point2=new Point(mat.cols()*0.38,mat.rows()*0.80);

Point point3=new Point(mat.cols()*0.85,mat.rows()*0.92);

Point point4=new Point(mat.cols()*0.85,mat.rows()*0.92);

List<Point> list=new ArrayList<>();

list.add(point1);

list.add(point2);

list.add(point3);

list.add(point4);

Mat time=OpencvUtil.shear(mat,list);

List<MatOfPoint> timeContours=OpencvUtil.findContours(time);

for (int i = 0; i < timeContours.size(); i++)

{

double area=OpencvUtil.area(timeContours.get(i));

if(area<100){

Imgproc.drawContours(time, timeContours, i, new Scalar( 0, 0, 0), -1);

}

}

Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/time.jpg", time);

//Start date

Point startPoint1=new Point(0,0);

Point startPoint2=new Point(0,time.rows());

Point startPoint3=new Point(time.cols()*0.47,0);

Point startPoint4=new Point(time.cols()*0.47,time.rows());

List<Point> startList=new ArrayList<>();

startList.add(startPoint1);

startList.add(startPoint2);

startList.add(startPoint3);

startList.add(startPoint4);

Mat start=OpencvUtil.shear(time,startList);

Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/start.jpg", start);

BufferedImage yearBuffer=OpencvUtil.Mat2BufImg(start,".jpg");

String startStr=OCRUtil.getImageMessage(yearBuffer,"eng");

startStr=startStr.replace("-","");

startStr=startStr.replace(" ","");

startStr=startStr.replace("\n","");

//End date

Point endPoint1=new Point(time.cols()*0.47,0);

Point endPoint2=new Point(time.cols()*0.47,time.rows());

Point endPoint3=new Point(time.cols(),0);

Point endPoint4=new Point(time.cols(),time.rows());

List<Point> endList=new ArrayList<>();

endList.add(endPoint1);

endList.add(endPoint2);

endList.add(endPoint3);

endList.add(endPoint4);

Mat end=OpencvUtil.shear(time,endList);

Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/end.jpg", end);

BufferedImage endBuffer=OpencvUtil.Mat2BufImg(end,".jpg");

String endStr=OCRUtil.getImageMessage(endBuffer,"chi_sim");

if(!endStr.contains("長")&&!endStr.contains("期")){

endStr=OCRUtil.getImageMessage(endBuffer,"eng");

endStr=endStr.replace("-","");

endStr=endStr.replace(" ","");

}

return startStr+"-"+endStr;

}

/**

* Recognize the front of the ID card

*/

public static void cardUp (Mat mat){

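//Run face detection in a loop, rotating the image until the portrait is upright

mat=OpencvUtil.faceLoop(mat);

if(mat==null){

//faceLoop returns null when no face is found at any tested rotation

System.out.println("No face detected; cannot recognize the front of the card");

return;

}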

//Grayscale

mat=OpencvUtil.gray(mat);

//Binarize

mat=OpencvUtil.binary(mat);

//Erode

mat=OpencvUtil.erode(mat,3);

//Dilate

mat=OpencvUtil.dilate(mat,3);

//Get the name

String name=name(mat);

System.out.print("Name: "+name);

//Get the sex

String sex=sex(mat);

System.out.print("Sex: "+sex);

//Get the ethnicity

String nation=nation(mat);

System.out.print("Ethnicity: "+nation);

//Get the date of birth

String birthday=birthday(mat);

System.out.print("Date of birth: "+birthday);

//Get the address

String address=address(mat);

System.out.print("Address: "+address);

//Get the ID number

String card=card(mat);

System.out.print("ID number: "+card);

}

public static String name(Mat mat){

Point point1=new Point(mat.cols()*0.18,mat.rows()*0.11);

Point point2=new Point(mat.cols()*0.18,mat.rows()*0.22);

Point point3=new Point(mat.cols()*0.4,mat.rows()*0.11);

Point point4=new Point(mat.cols()*0.4,mat.rows()*0.22);

List<Point> list=new ArrayList<>();

list.add(point1);

list.add(point2);

list.add(point3);

list.add(point4);

Mat name=OpencvUtil.shear(mat,list);

List<MatOfPoint> nameContours=OpencvUtil.findContours(name);

for (int i = 0; i < nameContours.size(); i++)

{

double area=OpencvUtil.area(nameContours.get(i));

if(area<100){

Imgproc.drawContours(name, nameContours, i, new Scalar( 0, 0, 0), -1);

}

}

Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/name.jpg", name);

BufferedImage nameBuffer=OpencvUtil.Mat2BufImg(name,".jpg");

String nameStr=OCRUtil.getImageMessage(nameBuffer,"chi_sim");

nameStr=nameStr.replace("\n","");

return nameStr+"\n";

}

public static String sex(Mat mat){

Point point1=new Point(mat.cols()*0.18,mat.rows()*0.25);

Point point2=new Point(mat.cols()*0.18,mat.rows()*0.33);

Point point3=new Point(mat.cols()*0.25,mat.rows()*0.25);

Point point4=new Point(mat.cols()*0.25,mat.rows()*0.33);

List<Point> list=new ArrayList<>();

list.add(point1);

list.add(point2);

list.add(point3);

list.add(point4);

Mat sex=OpencvUtil.shear(mat,list);

sex=OpencvUtil.erode(sex,3);

List<MatOfPoint> sexContours=OpencvUtil.findContours(sex);

for (int i = 0; i < sexContours.size(); i++)

{

double area=OpencvUtil.area(sexContours.get(i));

if(area<100){

Imgproc.drawContours(sex, sexContours, i, new Scalar( 0, 0, 0), -1);

}

}

sex=OpencvUtil.dilate(sex,4);

Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/sex.jpg", sex);

BufferedImage sexBuffer=OpencvUtil.Mat2BufImg(sex,".jpg");

String sexStr=OCRUtil.getImageMessage(sexBuffer,"chi_sim");

sexStr=sexStr.replace("\n","");

return sexStr+"\n";

}

public static String nation(Mat mat){

Point point1=new Point(mat.cols()*0.39,mat.rows()*0.25);

Point point2=new Point(mat.cols()*0.39,mat.rows()*0.34);

Point point3=new Point(mat.cols()*0.55,mat.rows()*0.25);

Point point4=new Point(mat.cols()*0.55,mat.rows()*0.34);

List<Point> list=new ArrayList<>();

list.add(point1);

list.add(point2);

list.add(point3);

list.add(point4);

Mat nation=OpencvUtil.shear(mat,list);

List<MatOfPoint> nationContours=OpencvUtil.findContours(nation);

for (int i = 0; i < nationContours.size(); i++)

{

double area=OpencvUtil.area(nationContours.get(i));

if(area<100){

Imgproc.drawContours(nation, nationContours, i, new Scalar( 0, 0, 0), -1);

}

}

Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/nation.jpg", nation);

BufferedImage nationBuffer=OpencvUtil.Mat2BufImg(nation,".jpg");

String nationStr=OCRUtil.getImageMessage(nationBuffer,"chi_sim");

nationStr=nationStr.replace("\n","");

return nationStr+"\n";

}

public static String birthday(Mat mat){

Point point1=new Point(mat.cols()*0.18,mat.rows()*0.35);

Point point2=new Point(mat.cols()*0.18,mat.rows()*0.35);

Point point3=new Point(mat.cols()*0.55,mat.rows()*0.45);

Point point4=new Point(mat.cols()*0.55,mat.rows()*0.45);

List<Point> list=new ArrayList<>();

list.add(point1);

list.add(point2);

list.add(point3);

list.add(point4);

Mat birthday=OpencvUtil.shear(mat,list);

birthday=OpencvUtil.erode(birthday,3);

List<MatOfPoint> birthdayContours=OpencvUtil.findContours(birthday);

for (int i = 0; i < birthdayContours.size(); i++)

{

double area=OpencvUtil.area(birthdayContours.get(i));

if(area<150){

Imgproc.drawContours(birthday, birthdayContours, i, new Scalar( 0, 0, 0), -1);

}

}

birthday=OpencvUtil.dilate(birthday,3);

Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/birthday.jpg", birthday);

//Year

Point yearPoint1=new Point(0,0);

Point yearPoint2=new Point(0,birthday.rows());

Point yearPoint3=new Point(birthday.cols()*0.29,0);

Point yearPoint4=new Point(birthday.cols()*0.29,birthday.rows());

List<Point> yearList=new ArrayList<>();

yearList.add(yearPoint1);

yearList.add(yearPoint2);

yearList.add(yearPoint3);

yearList.add(yearPoint4);

Mat year=OpencvUtil.shear(birthday,yearList);

Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/year.jpg", year);

BufferedImage yearBuffer=OpencvUtil.Mat2BufImg(year,".jpg");

String yearStr=OCRUtil.getImageMessage(yearBuffer,"eng");

//Month

Point monthPoint1=new Point(birthday.cols()*0.44,0);

Point monthPoint2=new Point(birthday.cols()*0.44,birthday.rows());

Point monthPoint3=new Point(birthday.cols()*0.575,0);

Point monthPoint4=new Point(birthday.cols()*0.575,birthday.rows());

List<Point> monthList=new ArrayList<>();

monthList.add(monthPoint1);

monthList.add(monthPoint2);

monthList.add(monthPoint3);

monthList.add(monthPoint4);

Mat month=OpencvUtil.shear(birthday,monthList);

Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/month.jpg", month);

BufferedImage monthBuffer=OpencvUtil.Mat2BufImg(month,".jpg");

String monthStr=OCRUtil.getImageMessage(monthBuffer,"eng");

//Day

Point dayPoint1=new Point(birthday.cols()*0.69,0);

Point dayPoint2=new Point(birthday.cols()*0.69,birthday.rows());

Point dayPoint3=new Point(birthday.cols()*0.82,0);

Point dayPoint4=new Point(birthday.cols()*0.82,birthday.rows());

List<Point> dayList=new ArrayList<>();

dayList.add(dayPoint1);

dayList.add(dayPoint2);

dayList.add(dayPoint3);

dayList.add(dayPoint4);

Mat day=OpencvUtil.shear(birthday,dayList);

Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/day.jpg", day);

BufferedImage dayBuffer=OpencvUtil.Mat2BufImg(day,".jpg");

String dayStr=OCRUtil.getImageMessage(dayBuffer,"eng");

String birthdayStr=yearStr+"年"+monthStr+"月"+dayStr+"日";

birthdayStr=birthdayStr.replace("\n","");

return birthdayStr+"\n";

}

public static String address(Mat mat){

Point point1=new Point(mat.cols()*0.18,mat.rows()*0.48);

Point point2=new Point(mat.cols()*0.18,mat.rows()*0.48);

Point point3=new Point(mat.cols()*0.61,mat.rows()*0.76);

Point point4=new Point(mat.cols()*0.61,mat.rows()*0.76);

List<Point> list=new ArrayList<>();

list.add(point1);

list.add(point2);

list.add(point3);

list.add(point4);

Mat address=OpencvUtil.shear(mat,list);

List<MatOfPoint> addressContours=OpencvUtil.findContours(address);

for (int i = 0; i < addressContours.size(); i++)

{

double area=OpencvUtil.area(addressContours.get(i));

if(area<100){

Imgproc.drawContours(address, addressContours, i, new Scalar( 0, 0, 0),-1 );

}

}

Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/address.jpg", address);

BufferedImage addressBuffer=OpencvUtil.Mat2BufImg(address,".jpg");

String addressStr=OCRUtil.getImageMessage(addressBuffer,"chi_sim");

addressStr=addressStr.replace("\n","");

return addressStr+"\n";

}

public static String card(Mat mat){

Point point1=new Point(mat.cols()*0.34,mat.rows()*0.75);

Point point2=new Point(mat.cols()*0.34,mat.rows()*0.75);

Point point3=new Point(mat.cols()*0.89,mat.rows()*0.91);

Point point4=new Point(mat.cols()*0.89,mat.rows()*0.91);

List<Point> list=new ArrayList<>();

list.add(point1);

list.add(point2);

list.add(point3);

list.add(point4);

Mat card=OpencvUtil.shear(mat,list);

card=OpencvUtil.erode(card,3);

List<MatOfPoint> cardContours=OpencvUtil.findContours(card);

for (int i = 0; i < cardContours.size(); i++)

{

double area=OpencvUtil.area(cardContours.get(i));

if(area<150){

Imgproc.drawContours(card, cardContours, i, new Scalar( 0, 0, 0),-1 );

}

}

card=OpencvUtil.dilate(card,3);

Imgcodecs.imwrite("D:/Users/xinjian09/Desktop/card.jpg", card);

BufferedImage cardBuffer=OpencvUtil.Mat2BufImg(card,".jpg");

String cardStr=OCRUtil.getImageMessage(cardBuffer,"eng");

return cardStr;

}

}
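Every field extractor above builds four corner points from fractions of the image width and height and passes them to `OpencvUtil.shear`. The arithmetic behind that crop can be sketched without OpenCV. `FieldRegion` is a hypothetical helper, and it differs from `shear` in two labeled ways: it rounds instead of truncating, and it clamps out-of-range coordinates to the image bounds instead of taking the absolute value of negative ones. The fractions in `main` are the ones the `name` method uses.

```java
// Hypothetical helper illustrating the fractional-crop arithmetic; not part of the original code.
final class FieldRegion {
    final int x;
    final int y;
    final int width;
    final int height;

    FieldRegion(int x, int y, int width, int height) {
        this.x = x;
        this.y = y;
        this.width = width;
        this.height = height;
    }

    private static int clamp(int value, int min, int max) {
        return Math.max(min, Math.min(max, value));
    }

    /**
     * Converts fractional bounds (0.0 to 1.0 of the image size) into a pixel
     * rectangle that always lies inside the image.
     */
    static FieldRegion fromFractions(int imageWidth, int imageHeight,
                                     double left, double top, double right, double bottom) {
        int x1 = clamp((int) Math.round(imageWidth * left), 0, imageWidth);
        int y1 = clamp((int) Math.round(imageHeight * top), 0, imageHeight);
        int x2 = clamp((int) Math.round(imageWidth * right), x1, imageWidth);
        int y2 = clamp((int) Math.round(imageHeight * bottom), y1, imageHeight);
        return new FieldRegion(x1, y1, x2 - x1, y2 - y1);
    }

    @Override
    public String toString() {
        return "FieldRegion[x=" + x + ", y=" + y + ", width=" + width + ", height=" + height + "]";
    }

    public static void main(String[] args) {
        // Name field: 18%-40% of the width, 11%-22% of the height (values from the name method).
        // The 1000x600 image size is an arbitrary example.
        System.out.println(fromFractions(1000, 600, 0.18, 0.11, 0.4, 0.22));
    }
}
```

Keeping the fractions in one place per field would also make it easier to tune them for photos whose card border was not cropped exactly.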