A Detailed Guide to the Python BeautifulSoup Library

阿新 • Published: 2018-12-10

BeautifulSoup

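Beautiful Soup is a Python library for extracting data from HTML or XML files. It works with the parser of your choice to provide idiomatic ways of navigating, searching, and modifying the document.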

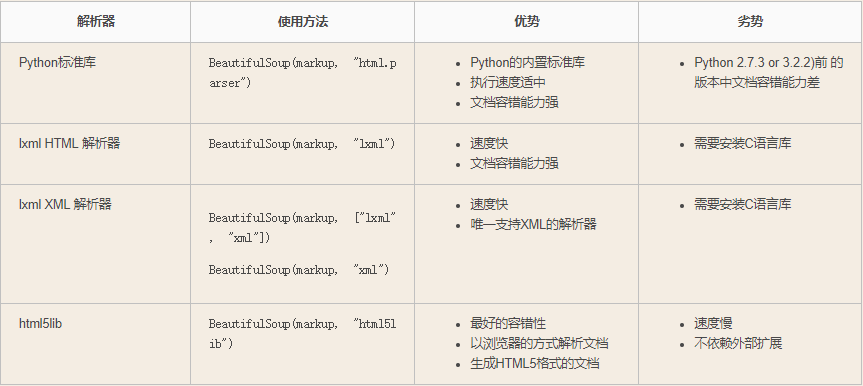

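Parsers

If you do not specify a parser when parsing a page, Beautiful Soup picks the best one that is installed (lxml first, then html5lib, then Python's built-in "html.parser") and emits a warning, so it is better to name the parser explicitly.

What is a parser? Beautiful Soup's job is to interpret and classify HTML tags, and different parsers can interpret the same markup differently.

Here is an example from the official documentation: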

BeautifulSoup("<a></p>", "lxml")

# <html><body><a></a></body></html>

BeautifulSoup("<a></p>", "html5lib")

# <html><head></head><body><a><p></p></a></body></html>

BeautifulSoup("<a></p>", "html.parser")

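# <a></a>

The official documentation repeatedly recommends the "lxml" and "html5lib" parsers, because the built-in "html.parser" is poor at automatically completing unclosed tags and often causes problems.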

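| Parser | Typical usage | Advantages | Disadvantages |
| --- | --- | --- | --- |
| Python’s html.parser | BeautifulSoup(markup,"html.parser") | Batteries included; decent speed | Not as fast as lxml; less lenient than html5lib |
| lxml’s HTML parser | BeautifulSoup(markup,"lxml") | Very fast; lenient | External C dependency |
| lxml’s XML parser | BeautifulSoup(markup,"lxml-xml") | Very fast; the only currently supported XML parser | External C dependency |
| html5lib | BeautifulSoup(markup,"html5lib") | Extremely lenient; parses pages the same way a web browser does; creates valid HTML5 | Very slow; external Python dependency |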

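As the table shows, "lxml" parses very quickly and tolerates a fair amount of malformed markup. "html5lib" is the most tolerant parser and always produces valid HTML5, but it is very slow.

When we actually crawled a website, the source page used inconsistent encodings and one sentence in it was garbled. With both "html.parser" and "lxml", parsing got as far as the garbled sentence and every tag after it was ignored. "html5lib" handled the page without any problem.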
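Since the third-party parsers may not be installed everywhere, one option is to try them in order of preference and fall back to the built-in one. The following is a minimal sketch under that assumption; the helper name `make_soup` and its preference order are illustrative and not part of Beautiful Soup.

```python
from bs4 import BeautifulSoup, FeatureNotFound


def make_soup(markup, preferred=("lxml", "html5lib", "html.parser")):
    """Parse markup with the first parser in `preferred` that is installed.

    Hypothetical helper: returns the soup together with the parser name used.
    """
    for parser in preferred:
        try:
            return BeautifulSoup(markup, parser), parser
        except FeatureNotFound:
            # Beautiful Soup raises this when the requested parser is missing.
            continue
    raise RuntimeError("no usable parser found")


soup, used = make_soup("<p>Hello <b>world")
print(used)             # whichever parser was available first
print(soup.get_text())  # the text survives the unclosed tags
```

Naming the parser explicitly also keeps results reproducible across machines, because the same markup is then always interpreted by the same parser.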

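Installation and basic usage

Installation:

# Install Beautiful Soup

pip install beautifulsoup4

# Install a parser

Beautiful Soup supports the HTML parser in the Python standard library as well as several third-party parsers. One of them is lxml. Depending on your operating system, you can install lxml with one of the following commands:

$ apt-get install python-lxml

$ easy_install lxml

$ pip install lxml

Another option is html5lib, a pure-Python parser that parses pages the same way a web browser does. You can install html5lib with one of the following commands:

$ apt-get install python-html5lib

$ easy_install html5lib

$ pip install html5lib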

Basic usage:

html_doc = """
<html><head><title>The Dormouse's story</title></head>
<body>
<p class="title"><b>The Dormouse's story</b></p>
<p class="story">Once upon a time there were three little sisters; and their names were
<a href="http://example.com/elsie" class="sister" id="link1">Elsie</a>,
<a href="http://example.com/lacie" class="sister" id="link2">Lacie</a> and
<a href="http://example.com/tillie" class="sister" id="link3">Tillie</a>;
and they lived at the bottom of a well.</p>
<p class="story">...</p>
"""

# Basic usage and error tolerance: Beautiful Soup can still parse HTML that is incomplete (the document above never closes <body> or <html>).

# Parsing the document above yields a BeautifulSoup object, which can be printed as a properly indented structure.

from bs4 import BeautifulSoup

soup = BeautifulSoup(html_doc, 'lxml')  # tolerant of malformed markup

res = soup.prettify()  # indent the output to show the structure

print(res)

The API in detail

- 1. name: the tag name

import requests

from bs4 import BeautifulSoup

ret = requests.get(url="https://www.autohome.com.cn/news/")

soup = BeautifulSoup(ret.text, 'lxml')

print(type(soup))

# <class 'bs4.BeautifulSoup'>

tag = soup.find('a')

name = tag.name  # get the tag name

print("=" * 120)

print(tag)

# <a class="orangelink" href="//www.autohome.com.cn/beijing/cheshi/" target="_blank"><i class="topbar-icon topbar-icon16 topbar-icon16-building"></i>進入北京車市</a>

print(type(tag))

# <class 'bs4.element.Tag'>

print("=" * 120)

print(name) # a

tag.name = 'span'  # set: rename the tag to span

print(soup)  # the a tag has now become a span tag

# <html>....<span class="orangelink" href="//www.autohome.com.cn/beijing/cheshi/" target="_blank"><i class="topbar-icon topbar-icon16 topbar-icon16-building"></i>進入北京車市</span>....</html>

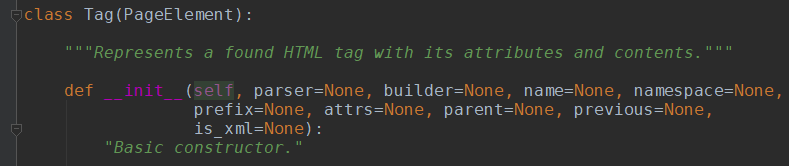

soup is a BeautifulSoup object and tag is a bs4.element.Tag. Some of the attributes and methods of Tag follow.

- 2. attrs: the tag's attributes

tag = soup.find('a')

attrs = tag.attrs  # get

print(tag)

# <a class="orangelink" href="//www.autohome.com.cn/beijing/cheshi/" target="_blank">

print(attrs)

# {'target': '_blank', 'href': '//www.autohome.com.cn/beijing/cheshi/', 'class': ['orangelink']}

tag.attrs = {'ik': 123}  # set (replaces all existing attributes)

tag.attrs['id'] = 'iiiii'  # add

print(soup.find("a"))

# <a id="iiiii" ik="123">
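The name and attrs examples above depend on a live page, so here is a small offline sketch of the same operations. The markup is made up for illustration, and "html.parser" is used only so that no extra parser needs to be installed.

```python
from bs4 import BeautifulSoup

# Hypothetical markup standing in for a real page.
html = '<p><a class="sister big" href="/elsie" id="link1">Elsie</a></p>'
soup = BeautifulSoup(html, "html.parser")
tag = soup.find("a")

print(tag.name)            # a
print(tag.attrs["class"])  # ['sister', 'big']  (class is multi-valued, so a list)
print(tag["href"])         # /elsie  (a Tag can be indexed like a dict)
print(tag.get("missing"))  # None    (get() avoids a KeyError)
print(tag.has_attr("id"))  # True

tag.name = "span"          # rename the tag
tag["id"] = "renamed"      # change one attribute
del tag["class"]           # remove one attribute
print(soup)                # <p><span href="/elsie" id="renamed">Elsie</span></p>
```

Assigning to `tag.attrs` replaces the whole attribute dictionary, whereas `tag["id"] = ...` and `del tag["class"]` touch only one attribute at a time.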

- 2.5 contents: a list of all the tag's direct children

body = soup.find('body')

v = body.contents

- 3. children: an iterator over the tag's direct children

# body = soup.find('body')

# v = body.children

- 4. descendants: all descendants, at every depth

# body = soup.find('body')

# v = body.descendants
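To make the difference between contents, children, and descendants concrete, here is a minimal sketch on a made-up fragment (the markup is an assumption chosen so the counts are easy to verify by hand).

```python
from bs4 import BeautifulSoup

# Hypothetical markup: two paragraphs, the first with a nested <b>.
html = "<body><p>one <b>bold</b></p><p>two</p></body>"
soup = BeautifulSoup(html, "html.parser")
body = soup.body

contents = body.contents              # list of direct children
children = list(body.children)        # the same nodes, produced lazily
descendants = list(body.descendants)  # every nested node, text included

print(len(contents), len(children), len(descendants))  # 2 2 6
for node in descendants:
    print(type(node).__name__, repr(str(node)))
```

Note that text nodes count as children and descendants too, which is why descendants yields six nodes here rather than three tags.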

- 4.5 parent: the parent node

body = soup.find('a')

v = body.parent

- 4.6 parents: all ancestor nodes

body = soup.find('a')

v = body.parents

print(v)

# <generator object parents at 0x000001E1225C4E60>

parents is a generator, so you need to iterate over it to see the ancestors.
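A short sketch of iterating over parents, using a made-up document so it runs offline:

```python
from bs4 import BeautifulSoup

# Hypothetical markup with a single link nested three levels deep.
html = "<html><body><p><a id='x'>link</a></p></body></html>"
soup = BeautifulSoup(html, "html.parser")
a = soup.find("a")

print(a.parent.name)  # p

# Walk upward from the link to the root of the tree.
names = [ancestor.name for ancestor in a.parents]
print(names)  # ['p', 'body', 'html', '[document]']
```

The last entry is the BeautifulSoup object itself, whose name is the special value '[document]'.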

- 5. clear: remove everything inside the tag (the tag itself is kept)

# tag = soup.find('body')

# tag.clear()

# print(soup)

- 6. decompose: recursively delete the tag and everything inside it

# body = soup.find('body')

# body.decompose()

# print(soup)

- 7. extract: recursively remove the tag and everything inside it, and return the removed tag

# body = soup.find('body')

# v = body.extract()

# print(soup)
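The three removal methods are easy to confuse, so here is a side-by-side sketch on the same made-up fragment (the helper `fresh` is hypothetical and only rebuilds the tree for each case).

```python
from bs4 import BeautifulSoup


def fresh():
    """Return a new tree each time so the three cases do not interfere."""
    return BeautifulSoup("<div><p>keep <b>me</b></p></div>", "html.parser")


soup = fresh()
soup.p.clear()                # empties <p> but leaves the tag in place
after_clear = str(soup)

soup = fresh()
soup.p.decompose()            # destroys <p> and its contents
after_decompose = str(soup)

soup = fresh()
removed = soup.p.extract()    # detaches <p> and hands it back
after_extract = str(soup)

print(after_clear)      # <div><p></p></div>
print(after_decompose)  # <div></div>
print(after_extract)    # <div></div>
print(removed)          # <p>keep <b>me</b></p>
```

Use extract when the removed subtree is still needed, for example to move it elsewhere in the document.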

- 8. decode: convert to a string (including the current tag); decode_contents: the same, excluding the current tag

# body = soup.find('body')

# v = body.decode()

# v = body.decode_contents()

# print(v)

- 9. encode: convert to bytes (including the current tag); encode_contents: the same, excluding the current tag

# body = soup.find('body')

# v = body.encode()

# v = body.encode_contents()

# print(v)
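As an illustration of the four conversions, the sketch below uses a made-up fragment containing a non-ASCII character so that the difference between str and bytes is visible. It relies on the default output encoding, which is UTF-8.

```python
from bs4 import BeautifulSoup

# Hypothetical markup with one non-ASCII character.
soup = BeautifulSoup("<p>café <b>x</b></p>", "html.parser")
p = soup.p

as_text = p.decode()                 # str, including the <p> tag itself
inner_text = p.decode_contents()     # str, only what is inside <p>
as_bytes = p.encode()                # bytes, UTF-8 by default
inner_bytes = p.encode_contents()    # bytes, only what is inside <p>

print(as_text)      # <p>café <b>x</b></p>
print(inner_text)   # café <b>x</b>
print(as_bytes)     # b'<p>caf\xc3\xa9 <b>x</b></p>'
print(inner_bytes)  # b'caf\xc3\xa9 <b>x</b>'
```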

- 10. find: get the first matching tag

# tag = soup.find('a')

# print(tag)

# tag = soup.find(name='a', attrs={'class': 'sister'}, recursive=True, text='Lacie')

# tag = soup.find(name='a', class_='sister', recursive=True, text='Lacie')

# print(tag)

- 11. find_all: get all matching tags

# tags = soup.find_all('a')

# print(tags)

# tags = soup.find_all('a',limit=1)

# print(tags)

# tags = soup.find_all(name='a', attrs={'class': 'sister'}, recursive=True, text='Lacie')

# # tags = soup.find_all(name='a', class_='sister', recursive=True, text='Lacie')

# print(tags)

# ####### Lists #######

# v = soup.find_all(name=['a','div'])

# print(v)

# v = soup.find_all(class_=['sister0', 'sister'])

# print(v)

# v = soup.find_all(text=['Tillie'])

# print(v, type(v[0]))

# v = soup.find_all(id=['link1','link2'])

# print(v)

# v = soup.find_all(href=['link1','link2'])

# print(v)

# ####### Regular expressions #######

import re

# rep = re.compile('p')

# rep = re.compile('^p')

# v = soup.find_all(name=rep)

# print(v)

# rep = re.compile('sister.*')

# v = soup.find_all(class_=rep)

# print(v)

# rep = re.compile('http://www.oldboy.com/static/.*')

# v = soup.find_all(href=rep)

# print(v)

# ####### Filtering with a function #######

# def func(tag):

#     return tag.has_attr('class') and tag.has_attr('id')

# v = soup.find_all(name=func)

# print(v)

# ## get: get the value of a tag attribute

# tag = soup.find('a')

# v = tag.get('id')

# print(v)
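The filter types listed above (name, list, regular expression, function, limit) can be tried offline with the "three sisters" fragment. This is a sketch only: the helper `ids` is hypothetical, and it uses the `string` argument, which is the newer name for `text` in Beautiful Soup 4.4.0 and later.

```python
import re

from bs4 import BeautifulSoup

html_doc = """<p class="title"><b>The Dormouse's story</b></p>
<p class="story">
<a href="http://example.com/elsie" class="sister" id="link1">Elsie</a>,
<a href="http://example.com/lacie" class="sister" id="link2">Lacie</a> and
<a href="http://example.com/tillie" class="sister" id="link3">Tillie</a></p>"""
soup = BeautifulSoup(html_doc, "html.parser")


def ids(tags):
    """Hypothetical helper: collect the id attribute of each tag."""
    return [t["id"] for t in tags]


def has_class_and_id(tag):
    """Function filter: keep tags that carry both attributes."""
    return tag.has_attr("class") and tag.has_attr("id")


by_list = soup.find_all(["a", "b"])                            # any of several names
by_regex = soup.find_all(href=re.compile(r"lacie|tillie"))     # regex is searched, not matched
by_id_list = soup.find_all(id=["link1", "link3"])              # any of several values
by_string = soup.find_all("a", string="Lacie")                 # tag whose text is exactly this
by_func = soup.find_all(has_class_and_id)                      # arbitrary predicate
limited = soup.find_all("a", limit=2)                          # stop after two matches

print([t.name for t in by_list])  # ['b', 'a', 'a', 'a']
print(ids(by_regex))              # ['link2', 'link3']
print(ids(by_id_list))            # ['link1', 'link3']
print(ids(by_string))             # ['link2']
print(ids(by_func))               # ['link1', 'link2', 'link3']
print(ids(limited))               # ['link1', 'link2']
```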

- 12. has_attr: check whether the tag has a given attribute

# tag = soup.find('a')

# v = tag.has_attr('id')

# print(v)

- 13. get_text: get the text inside the tag

# tag = soup.find('a')

# v = tag.get_text()  # the optional first argument is a separator, not an attribute name

# print(v)
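The first positional argument of get_text is a separator placed between the pieces of text, and strip=True trims each piece. A minimal sketch on a made-up fragment:

```python
from bs4 import BeautifulSoup

# Hypothetical markup with stray spaces inside the paragraphs.
soup = BeautifulSoup("<div><p>Hello </p><p> world</p></div>", "html.parser")
div = soup.div

plain = div.get_text()                   # pieces joined with no separator
joined = div.get_text("|")               # pieces joined with "|"
cleaned = div.get_text("|", strip=True)  # each piece stripped first

print(repr(plain))    # 'Hello  world'
print(repr(joined))   # 'Hello | world'
print(repr(cleaned))  # 'Hello|world'
```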

- 14. index: find the position of a child within its parent tag

# tag = soup.find('body')

# v = tag.index(tag.find('div'))

# print(v)

# tag = soup.find('body')

# for i,v in enumerate(tag):

#     print(i,v)

- 15. is_empty_element: whether the tag is an empty (void) or self-closing element,

that is, whether it is one of the following tags: 'br', 'hr', 'input', 'img', 'meta', 'spacer', 'link', 'frame', 'base'

# tag = soup.find('br')

# v = tag.is_empty_element

# print(v)
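A quick sketch of the distinction: a tag that merely has no contents is not an "empty element" unless it is also one of the void tags. The markup is made up for illustration.

```python
from bs4 import BeautifulSoup

# Hypothetical markup: a void <br/> and a <span> that just happens to be empty.
soup = BeautifulSoup("<p>text<br/><span></span></p>", "html.parser")

br_is_empty = soup.br.is_empty_element      # void tag with no contents
span_is_empty = soup.span.is_empty_element  # no contents, but not a void tag
p_is_empty = soup.p.is_empty_element        # has contents

print(br_is_empty, span_is_empty, p_is_empty)  # True False False
```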

- 16. Sibling nodes and other nodes related to the current tag

# soup.next

# soup.next_element

# soup.next_elements

# soup.next_sibling

# soup.next_siblings

# tag.previous

# tag.previous_element

# tag.previous_elements

# tag.previous_sibling

# tag.previous_siblings

# tag.parent

# tag.parents

- 17. Finding tags related to a given tag

# tag.find_next(...)

# tag.find_all_next(...)

# tag.find_next_sibling(...)

# tag.find_next_siblings(...)

# tag.find_previous(...)

# tag.find_all_previous(...)

# tag.find_previous_sibling(...)

# tag.find_previous_siblings(...)

# tag.find_parent(...)

# tag.find_parents(...)

# The parameters are the same as for find_all
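One detail worth seeing in practice is that the plain sibling attributes return whatever node comes next, which is often a piece of text, while the find_* variants skip ahead to a matching tag. A sketch with made-up markup:

```python
from bs4 import BeautifulSoup

# Hypothetical markup: three links separated by text.
html = '<p><a id="l1">1</a>, <a id="l2">2</a> and <a id="l3">3</a></p>'
soup = BeautifulSoup(html, "html.parser")
first = soup.find("a")
last = soup.find(id="l3")

raw_next = first.next_sibling                      # the text node ', '
next_link = first.find_next_sibling("a")           # the next <a> tag
later_links = first.find_next_siblings("a")        # all following <a> tags
prev_link = last.find_previous_sibling("a")        # nearest preceding <a> tag
enclosing = first.find_parent("p")                 # nearest <p> ancestor

print(repr(raw_next))                   # ', '
print(next_link["id"])                  # l2
print([t["id"] for t in later_links])   # ['l2', 'l3']
print(prev_link["id"])                  # l2
print(enclosing.name)                   # p
```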

- 18. select, select_one: CSS selectors

soup.select("title")

soup.select("p:nth-of-type(3)")

soup.select("body a")

soup.select("html head title")

tag = soup.select("span,a")

soup.select("head > title")

soup.select("p > a")

soup.select("p > a:nth-of-type(2)")

soup.select("p > #link1")

soup.select("body > a")

soup.select("#link1 ~ .sister")

soup.select("#link1 + .sister")

soup.select(".sister")

soup.select("[class~=sister]")

soup.select("#link1")

soup.select("a#link2")

soup.select('a[href]')

soup.select('a[href="http://example.com/elsie"]')

soup.select('a[href^="http://example.com/"]')

soup.select('a[href$="tillie"]')

soup.select('a[href*=".com/el"]')

from bs4.element import Tag

# Note: _candidate_generator is an internal parameter of select() in older Beautiful Soup releases. Releases from 4.7.0 onward delegate CSS selection to soupsieve, where it is not part of the documented API.

def default_candidate_generator(tag):
    for child in tag.descendants:
        if not isinstance(child, Tag):
            continue
        if not child.has_attr('href'):
            continue
        yield child

tags = soup.find('body').select("a", _candidate_generator=default_candidate_generator)

print(type(tags), tags)

# The same call, limited to the first match

tags = soup.find('body').select("a", _candidate_generator=default_candidate_generator, limit=1)

print(type(tags), tags)
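To see what a few of the selectors above return, here is an offline sketch against the "three sisters" document. It assumes a Beautiful Soup release whose select is backed by soupsieve (4.7.0 or later); the helper `ids` is hypothetical.

```python
from bs4 import BeautifulSoup

html_doc = """<html><head><title>The Dormouse's story</title></head>
<body>
<p class="title"><b>The Dormouse's story</b></p>
<p class="story">Once upon a time there were three little sisters; and their names were
<a href="http://example.com/elsie" class="sister" id="link1">Elsie</a>,
<a href="http://example.com/lacie" class="sister" id="link2">Lacie</a> and
<a href="http://example.com/tillie" class="sister" id="link3">Tillie</a>;
and they lived at the bottom of a well.</p>
<p class="story">...</p>
</body></html>"""
soup = BeautifulSoup(html_doc, "html.parser")


def ids(tags):
    """Hypothetical helper: collect the id attribute of each tag."""
    return [t["id"] for t in tags]


children = ids(soup.select("p > a"))                   # direct children of a <p>
second = ids(soup.select("p > a:nth-of-type(2)"))      # second <a> among its siblings
following = ids(soup.select("#link1 ~ .sister"))       # all later siblings
adjacent = ids(soup.select("#link1 + .sister"))        # the very next sibling tag
suffix = ids(soup.select('a[href$="tillie"]'))         # href ends with "tillie"
first_two = ids(soup.select("a", limit=2))             # stop after two matches
title = soup.select_one("head > title").get_text()     # first match only
third_p = soup.select("p:nth-of-type(3)")              # third <p> in <body>
none = soup.select("body > a")                         # no <a> directly under <body>

print(children, second, following, adjacent, suffix, first_two)
print(title, third_p, none)
```

select always returns a list (possibly empty), while select_one returns the first match or None.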

- 19. The tag's content (string)

# tag = soup.find('span')

# print(tag.string)  # get

# tag.string = 'new content'  # set

# print(soup)

# tag = soup.find('body')

# print(tag.string)

# tag.string = 'xxx'

# print(soup)

# tag = soup.find('body')

# v = tag.stripped_strings  # recursively yields the text of all nested tags, with whitespace stripped

# print(v)
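The behavior of string depends on how many children a tag has, which the following sketch tries to show on a made-up fragment:

```python
from bs4 import BeautifulSoup

# Hypothetical markup: a <div> with several children and stray whitespace.
html = "<div><span>one</span>\n  <p> two <b>three</b></p></div>"
soup = BeautifulSoup(html, "html.parser")

single = soup.span.string                      # one text child: returns it
multiple = soup.div.string                     # several children: returns None
pieces = list(soup.div.stripped_strings)       # all nested text, trimmed

soup.span.string = "new content"               # replaces the tag's contents
updated = str(soup.span)

print(single)    # one
print(multiple)  # None
print(pieces)    # ['one', 'two', 'three']
print(updated)   # <span>new content</span>
```

Because assigning to string replaces everything inside the tag, setting it on a container such as body discards all of its child tags.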

- 20. append: append a tag inside the current tag, after its existing contents

# tag = soup.find('body')

# tag.append(soup.find('a'))

# print(soup)

#

# from bs4.element import Tag

# obj = Tag(name='i',attrs={'id': 'it'})

# obj.string = 'I am new here'

# tag = soup.find('body')

# tag.append(obj)

# print(soup)

- 21. insert: insert a tag at a given position inside the current tag

# from bs4.element import Tag

# obj = Tag(name='i', attrs={'id': 'it'})

# obj.string = 'I am new here'

# tag = soup.find('body')

# tag.insert(2, obj)

# print(soup)

- 22. insert_after, insert_before: insert immediately after or before the current tag

# from bs4.element import Tag

# obj = Tag(name='i', attrs={'id': 'it'})

# obj.string = 'I am new here'

# tag = soup.find('body')

# # tag.insert_before(obj)

# tag.insert_after(obj)

# print(soup)

- 23. replace_with: replace the current tag with the specified tag

# from bs4.element import Tag

# obj = Tag(name='i', attrs={'id': 'it'})

# obj.string = 'I am new here'

# tag = soup.find('div')

# tag.replace_with(obj)

# print(soup)
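The four insertion methods and replace_with can be compared in one offline run. This sketch creates tags with soup.new_tag, the factory method documented by Beautiful Soup, rather than calling the Tag constructor directly; the helper `make` and the markup are assumptions for illustration.

```python
from bs4 import BeautifulSoup

# Hypothetical starting document.
soup = BeautifulSoup("<body><div>old</div><a>link</a></body>", "html.parser")


def make(name, text, **attrs):
    """Hypothetical helper: build a new tag with some text inside it."""
    tag = soup.new_tag(name, attrs=attrs)
    tag.string = text
    return tag


soup.body.append(make("i", "appended", id="it"))       # last child of <body>
soup.body.insert(0, make("b", "first"))                # child at position 0
soup.a.insert_before(make("em", "before"))             # sibling just before <a>
soup.a.insert_after(make("u", "after"))                # sibling just after <a>
replaced = soup.div.replace_with(make("span", "new"))  # swap <div> for <span>

print(soup)
print(replaced)  # <div>old</div>  (the tag that was removed)
```

append and insert work on the inside of the tag they are called on, whereas insert_before and insert_after add a sibling next to it.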

- 24. Setting up relationships between tags

# tag = soup.find('div')

# a = soup.find('a')

# tag.setup(previous_sibling=a)

# print(tag.previous_sibling)

- 25. wrap: wrap the current tag in the specified tag

# from bs4.element import Tag

# obj1 = Tag(name='div', attrs={'id': 'it'})

# obj1.string = 'I am new here'

#

# tag = soup.find('a')

# v = tag.wrap(obj1)

# print(soup)

# tag = soup.find('a')

# v = tag.wrap(soup.find('p'))

# print(soup)

- 26. unwrap: remove the current tag but keep what it contains

# tag = soup.find('a')

# v = tag.unwrap()

# print(soup)
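wrap and unwrap are inverses of a sort, as this small sketch on made-up markup shows:

```python
from bs4 import BeautifulSoup

# Hypothetical markup: one link inside a paragraph.
soup = BeautifulSoup('<p><a href="/x">link</a></p>', "html.parser")

wrapper = soup.a.wrap(soup.new_tag("div"))  # returns the new wrapper tag
after_wrap = str(soup)

soup.a.unwrap()                             # drops <a>, keeps its text
after_unwrap = str(soup)

print(wrapper.name)   # div
print(after_wrap)     # <p><div><a href="/x">link</a></div></p>
print(after_unwrap)   # <p><div>link</div></p>
```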
