Developing Spark applications with mixed Java and Scala under Maven

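Developers who are comfortable with Java often run into a problem when writing Spark applications: Spark's Java API documentation is incomplete, and some features have no Java API at all. If a Java project could use Scala directly for the Spark parts while keeping Java for the rest of the project's requirements, developing Spark projects would become noticeably easier. Below we look at how to set up a Java + Scala + Spark + Maven development environment.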

1. Download the Scala SDK

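(Later, when you create a source file with the .scala extension in IntelliJ IDEA, the IDE detects it and prompts you to set up a Scala SDK. Follow the prompt and point the SDK directory to the directory where you extracted the download.)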

You can also configure the Scala SDK manually in IDEA: File => Project Structure... => Libraries => +...

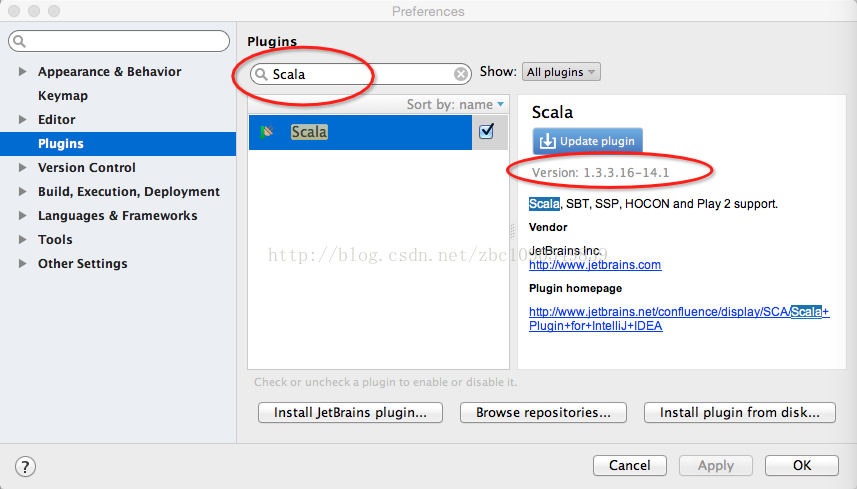

2. Download the Scala plugin for IntelliJ IDEA

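As shown in the figure above, search for Scala under Plugins and install it. If you have no internet access or the connection is too slow, you can download the plugin's zip package manually from http://plugins.jetbrains.com/plugin/?idea&id=1347. When downloading manually, pay close attention to the version number. It must match the version of your local IntelliJ IDEA, or the plugin cannot be installed. When the download finishes, click "Install plugin from disk..." in the dialog shown above and select the plugin's zip file.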

3. Integrating with Maven

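To package the project with Maven, the scala-maven-plugin must be configured in the pom file. Because this is a Spark project, the jar also has to be packaged as a runnable jar that bundles its dependencies. That means configuring maven-assembly-plugin and maven-shade-plugin in the pom and setting mainClass. After some experimentation, here is a working pom file. To use it, just add or remove dependencies as needed.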

```xml
<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/maven-v4_0_0.xsd">
    <modelVersion>4.0.0</modelVersion>
    <groupId>my-project-groupid</groupId>
    <artifactId>sparkTest</artifactId>
    <packaging>jar</packaging>
    <version>1.0-SNAPSHOT</version>
    <name>sparkTest</name>
    <url>http://maven.apache.org</url>

    <properties>
        <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
        <hbase.version>0.98.3</hbase.version>
        <!--<spark.version>1.3.1</spark.version>-->
        <spark.version>1.6.0</spark.version>
        <jdk.version>1.7</jdk.version>
        <scala.version>2.10.5</scala.version>
        <!--<scala.maven.version>2.11.1</scala.maven.version>-->
    </properties>

    <repositories>
        <!-- Maven Central only serves HTTPS -->
        <repository>
            <id>repo1.maven.org</id>
            <url>https://repo1.maven.org/maven2</url>
            <releases><enabled>true</enabled></releases>
            <snapshots><enabled>false</enabled></snapshots>
        </repository>
        <repository>
            <id>repository.jboss.org</id>
            <url>https://repository.jboss.org/nexus/content/groups/public/</url>
            <snapshots><enabled>false</enabled></snapshots>
        </repository>
        <repository>
            <id>cloudhopper</id>
            <name>Repository for Cloudhopper</name>
            <url>http://maven.cloudhopper.com/repos/third-party/</url>
            <releases><enabled>true</enabled></releases>
            <snapshots><enabled>false</enabled></snapshots>
        </repository>
    </repositories>

    <dependencies>
        <dependency>
            <groupId>org.scala-lang</groupId>
            <artifactId>scala-library</artifactId>
            <version>${scala.version}</version>
            <scope>compile</scope>
        </dependency>
        <dependency>
            <groupId>org.scala-lang</groupId>
            <artifactId>scala-compiler</artifactId>
            <version>${scala.version}</version>
            <scope>compile</scope>
        </dependency>
        <!-- https://mvnrepository.com/artifact/javax.mail/javax.mail-api -->
        <dependency>
            <groupId>javax.mail</groupId>
            <artifactId>javax.mail-api</artifactId>
            <version>1.4.7</version>
        </dependency>
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>3.8.1</version>
            <scope>test</scope>
        </dependency>
        <!-- https://mvnrepository.com/artifact/org.apache.spark/spark-core_2.10 -->
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-core_2.10</artifactId>
            <version>${spark.version}</version>
        </dependency>
        <!-- https://mvnrepository.com/artifact/org.apache.spark/spark-sql_2.10 -->
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-sql_2.10</artifactId>
            <version>${spark.version}</version>
        </dependency>
        <!-- https://mvnrepository.com/artifact/org.apache.spark/spark-streaming_2.10 -->
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-streaming_2.10</artifactId>
            <version>${spark.version}</version>
        </dependency>
        <!-- https://mvnrepository.com/artifact/org.apache.spark/spark-mllib_2.10 -->
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-mllib_2.10</artifactId>
            <version>${spark.version}</version>
        </dependency>
        <!-- https://mvnrepository.com/artifact/org.apache.spark/spark-hive_2.10 -->
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-hive_2.10</artifactId>
            <version>${spark.version}</version>
        </dependency>
        <!-- https://mvnrepository.com/artifact/org.apache.spark/spark-graphx_2.10 -->
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-graphx_2.10</artifactId>
            <version>${spark.version}</version>
        </dependency>
        <dependency>
            <groupId>mysql</groupId>
            <artifactId>mysql-connector-java</artifactId>
            <version>5.1.30</version>
        </dependency>
        <!--<dependency>-->
            <!--<groupId>org.spark-project.akka</groupId>-->
            <!--<artifactId>akka-actor_2.10</artifactId>-->
            <!--<version>2.3.4-spark</version>-->
        <!--</dependency>-->
        <!--<dependency>-->
            <!--<groupId>org.spark-project.akka</groupId>-->
            <!--<artifactId>akka-remote_2.10</artifactId>-->
            <!--<version>2.3.4-spark</version>-->
        <!--</dependency>-->
        <dependency>
            <groupId>com.google.guava</groupId>
            <artifactId>guava</artifactId>
            <version>14.0.1</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-common</artifactId>
            <version>2.6.0</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-client</artifactId>
            <version>2.6.0</version>
        </dependency>
        <dependency>
            <groupId>com.alibaba</groupId>
            <artifactId>fastjson</artifactId>
            <version>1.2.3</version>
        </dependency>
        <dependency>
            <groupId>p6spy</groupId>
            <artifactId>p6spy</artifactId>
            <version>1.3</version>
        </dependency>
        <dependency>
            <groupId>org.apache.commons</groupId>
            <artifactId>commons-math3</artifactId>
            <version>3.3</version>
        </dependency>
        <dependency>
            <groupId>org.jdom</groupId>
            <artifactId>jdom</artifactId>
            <version>2.0.2</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-hdfs</artifactId>
            <version>2.6.0</version>
        </dependency>
        <dependency>
            <groupId>redis.clients</groupId>
            <artifactId>jedis</artifactId>
            <version>2.6.0</version>
        </dependency>
        <!-- https://mvnrepository.com/artifact/org.apache.hbase/hbase-client -->
        <dependency>
            <groupId>org.apache.hbase</groupId>
            <artifactId>hbase-client</artifactId>
            <version>0.98.6-hadoop2</version>
        </dependency>
        <!-- https://mvnrepository.com/artifact/org.apache.hbase/hbase -->
        <dependency>
            <groupId>org.apache.hbase</groupId>
            <artifactId>hbase</artifactId>
            <version>0.98.6-hadoop2</version>
            <type>pom</type>
        </dependency>
        <!-- https://mvnrepository.com/artifact/org.apache.hbase/hbase-common -->
        <dependency>
            <groupId>org.apache.hbase</groupId>
            <artifactId>hbase-common</artifactId>
            <version>0.98.6-hadoop2</version>
        </dependency>
        <!-- https://mvnrepository.com/artifact/org.apache.hbase/hbase-server -->
        <dependency>
            <groupId>org.apache.hbase</groupId>
            <artifactId>hbase-server</artifactId>
            <version>0.98.6-hadoop2</version>
        </dependency>
        <dependency>
            <groupId>org.testng</groupId>
            <artifactId>testng</artifactId>
            <version>6.8.8</version>
            <scope>test</scope>
        </dependency>
        <dependency>
            <groupId>com.fasterxml.jackson.jaxrs</groupId>
            <artifactId>jackson-jaxrs-json-provider</artifactId>
            <version>2.4.4</version>
        </dependency>
        <dependency>
            <groupId>com.fasterxml.jackson.core</groupId>
            <artifactId>jackson-databind</artifactId>
            <version>2.4.4</version>
        </dependency>
        <dependency>
            <groupId>net.sf.json-lib</groupId>
            <artifactId>json-lib</artifactId>
            <version>2.4</version>
            <classifier>jdk15</classifier>
        </dependency>
    </dependencies>

    <build>
        <plugins>
            <!-- The packaged project must be submitted to Spark with spark-submit; do not run the jar with java -jar -->
            <plugin>
                <artifactId>maven-assembly-plugin</artifactId>
                <configuration>
                    <appendAssemblyId>false</appendAssemblyId>
                    <descriptorRefs>
                        <descriptorRef>jar-with-dependencies</descriptorRef>
                    </descriptorRefs>
                    <archive>
                        <manifest>
                            <mainClass>test.HelloWorld</mainClass>
                        </manifest>
                    </archive>
                </configuration>
                <executions>
                    <execution>
                        <id>make-assembly</id>
                        <phase>package</phase>
                        <goals>
                            <!-- "single" is the non-deprecated goal for use in executions -->
                            <goal>single</goal>
                        </goals>
                    </execution>
                </executions>
            </plugin>
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-compiler-plugin</artifactId>
                <version>3.1</version>
                <configuration>
                    <source>${jdk.version}</source>
                    <target>${jdk.version}</target>
                    <encoding>${project.build.sourceEncoding}</encoding>
                </configuration>
            </plugin>
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-shade-plugin</artifactId>
                <version>2.1</version>
                <configuration>
                    <createDependencyReducedPom>false</createDependencyReducedPom>
                </configuration>
                <executions>
                    <execution>
                        <phase>package</phase>
                        <goals>
                            <goal>shade</goal>
                        </goals>
                        <configuration>
                            <shadedArtifactAttached>true</shadedArtifactAttached>
                            <shadedClassifierName>allinone</shadedClassifierName>
                            <artifactSet>
                                <includes>
                                    <include>*:*</include>
                                </includes>
                            </artifactSet>
                            <filters>
                                <filter>
                                    <artifact>*:*</artifact>
                                    <!-- Drop signature files of signed dependencies; they are invalid inside a fat jar -->
                                    <excludes>
                                        <exclude>META-INF/*.SF</exclude>
                                        <exclude>META-INF/*.DSA</exclude>
                                        <exclude>META-INF/*.RSA</exclude>
                                    </excludes>
                                </filter>
                            </filters>
                            <transformers>
                                <!-- Merge the reference.conf files of all dependencies -->
                                <transformer implementation="org.apache.maven.plugins.shade.resource.AppendingTransformer">
                                    <resource>reference.conf</resource>
                                </transformer>
                                <transformer implementation="org.apache.maven.plugins.shade.resource.ManifestResourceTransformer">
                                    <mainClass>test.HelloWorld</mainClass>
                                </transformer>
                            </transformers>
                        </configuration>
                    </execution>
                </executions>
            </plugin>
            <!-- Compile Scala before javac runs, so Java and Scala sources can depend on each other -->
            <plugin>
                <groupId>net.alchim31.maven</groupId>
                <artifactId>scala-maven-plugin</artifactId>
                <version>3.2.0</version>
                <executions>
                    <execution>
                        <id>compile-scala</id>
                        <phase>process-resources</phase>
                        <goals>
                            <goal>add-source</goal>
                            <goal>compile</goal>
                        </goals>
                    </execution>
                    <execution>
                        <id>test-compile-scala</id>
                        <phase>process-test-resources</phase>
                        <goals>
                            <goal>add-source</goal>
                            <goal>testCompile</goal>
                        </goals>
                    </execution>
                </executions>
                <configuration>
                    <scalaVersion>${scala.version}</scalaVersion>
                </configuration>
            </plugin>
        </plugins>
    </build>
</project>
```

The build section is the part that matters. The rest needs little attention.
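To check that packaging did what the build section intends, you can inspect the resulting jar with the JDK's `java.util.jar` API. Below is a minimal sketch. The class name `JarCheck` and the jar path passed as an argument are hypothetical. It prints the manifest's `Main-Class` attribute and lists any `.SF`, `.DSA` or `.RSA` signature files left under `META-INF/`. Those are the files the shade filter above is meant to strip. If they survive in a fat jar, the JVM typically rejects it with a `SecurityException` about an invalid signature file digest.

```java
import java.io.IOException;
import java.util.ArrayList;
import java.util.Enumeration;
import java.util.List;
import java.util.jar.Attributes;
import java.util.jar.JarEntry;
import java.util.jar.JarFile;
import java.util.jar.Manifest;

/** Hypothetical helper: inspects a packaged jar's manifest and leftover signature files. */
public class JarCheck {

    /** Returns the Main-Class manifest attribute, or null if there is no manifest or attribute. */
    static String mainClass(JarFile jar) throws IOException {
        Manifest mf = jar.getManifest();
        return mf == null ? null : mf.getMainAttributes().getValue(Attributes.Name.MAIN_CLASS);
    }

    /** Lists signature files (*.SF, *.DSA, *.RSA) found under META-INF/. */
    static List<String> signatureFiles(JarFile jar) {
        List<String> found = new ArrayList<>();
        Enumeration<JarEntry> entries = jar.entries();
        while (entries.hasMoreElements()) {
            String name = entries.nextElement().getName();
            if (name.startsWith("META-INF/")
                    && (name.endsWith(".SF") || name.endsWith(".DSA") || name.endsWith(".RSA"))) {
                found.add(name);
            }
        }
        return found;
    }

    public static void main(String[] args) throws IOException {
        // args[0]: path to the jar, e.g. target/sparkTest-1.0-SNAPSHOT-allinone.jar (assumed name)
        // verify=false: we only read metadata, we do not check signatures
        try (JarFile jar = new JarFile(args[0], false)) {
            System.out.println("Main-Class: " + mainClass(jar));
            System.out.println("Signature files: " + signatureFiles(jar));
        }
    }
}
```

A correctly shaded jar should print the expected main class and an empty signature list.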

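The project layout largely follows Maven's default conventions. The only difference is an extra scala directory under src (src/main/scala, next to src/main/java), which keeps the Java and Scala sources organized separately, as shown in the figure below: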

In the java directory, create the class HelloWorld (HelloWorld.java):

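```java
package test;

/**
 * Created by L on 2017/1/5.
 */
public class HelloWorld {
    public static void main(String[] args) {
        System.out.println("test");
        // Calls into the Scala object test.Hello (same package, so no import is needed);
        // scalac generates static forwarders so these look like static methods to Java.
        Hello.sayHello("scala");
        Hello.runSpark();
    }
}
```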

In the scala directory, create the object Hello (Hello.scala):

```scala
package test

import org.apache.spark.graphx.{Edge, Graph, VertexId}
import org.apache.spark.rdd.RDD
import org.apache.spark.{SparkConf, SparkContext}

/**
 * Created by L on 2017/1/5.
 */
object Hello {

  def sayHello(x: String): Unit = {
    println("hello," + x)
  }

  def runSpark(): Unit = {
    // local[*] runs Spark inside the IDE. A master set on SparkConf takes precedence
    // over spark-submit's --master, so remove it before submitting to a cluster.
    val sparkConf = new SparkConf().setAppName("SparkKMeans").setMaster("local[*]")
    val sc = new SparkContext(sparkConf)
    // Create an RDD for the vertices
    val users: RDD[(VertexId, (String, String))] =
      sc.parallelize(Array((3L, ("rxin", "student")), (7L, ("jgonzal", "postdoc")),
        (5L, ("franklin", "prof")), (2L, ("istoica", "prof")),
        (4L, ("peter", "student"))))
    // Create an RDD for edges
    val relationships: RDD[Edge[String]] =
      sc.parallelize(Array(Edge(3L, 7L, "collab"), Edge(5L, 3L, "advisor"),
        Edge(2L, 5L, "colleague"), Edge(5L, 7L, "pi"),
        Edge(4L, 0L, "student"), Edge(5L, 0L, "colleague")))
    // Define a default user in case there are relationships with a missing user
    val defaultUser = ("John Doe", "Missing")
    // Build the initial Graph
    val graph = Graph(users, relationships, defaultUser)
    // Notice that there is a user 0 (for which we have no information) connected to users
    // 4 (peter) and 5 (franklin).
    graph.triplets.map(
      triplet => triplet.srcAttr._1 + " is the " + triplet.attr + " of " + triplet.dstAttr._1
    ).collect.foreach(println(_))
    // Remove missing vertices as well as the edges connected to them
    val validGraph = graph.subgraph(vpred = (id, attr) => attr._2 != "Missing")
    // The valid subgraph will disconnect users 4 and 5 by removing user 0
    validGraph.vertices.collect.foreach(println(_))
    validGraph.triplets.map(
      triplet => triplet.srcAttr._1 + " is the " + triplet.attr + " of " + triplet.dstAttr._1
    ).collect.foreach(println(_))
    sc.stop()
  }
}
```
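To make the expected output of `runSpark` easier to follow, here is a plain-Java re-enactment of the same graph without Spark. It is only an illustration built on the sample data above, and the class and method names are hypothetical. Vertex 0 appears only in edges, so it receives the default user ("John Doe", "Missing"). The filtered view then drops the two edges that touch it, mirroring what `subgraph(vpred = ...)` does to the triplets. Spark's `collect` may return triplets in a different order.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Plain-Java illustration (no Spark) of the triplets printed by Hello.runSpark. */
public class GraphSketch {

    /** A directed edge with a relationship label, like GraphX's Edge[String]. */
    static final class Edge {
        final long src;
        final long dst;
        final String attr;

        Edge(long src, long dst, String attr) {
            this.src = src;
            this.dst = dst;
            this.attr = attr;
        }
    }

    static final String[] DEFAULT_USER = {"John Doe", "Missing"};

    static Map<Long, String[]> sampleUsers() {
        Map<Long, String[]> users = new LinkedHashMap<>();
        users.put(3L, new String[] {"rxin", "student"});
        users.put(7L, new String[] {"jgonzal", "postdoc"});
        users.put(5L, new String[] {"franklin", "prof"});
        users.put(2L, new String[] {"istoica", "prof"});
        users.put(4L, new String[] {"peter", "student"});
        return users;
    }

    static List<Edge> sampleEdges() {
        return Arrays.asList(
                new Edge(3L, 7L, "collab"), new Edge(5L, 3L, "advisor"),
                new Edge(2L, 5L, "colleague"), new Edge(5L, 7L, "pi"),
                new Edge(4L, 0L, "student"), new Edge(5L, 0L, "colleague"));
    }

    /** Vertex attribute lookup; unknown ids (vertex 0 here) get the default user. */
    static String[] attrOf(Map<Long, String[]> users, long id) {
        String[] attr = users.get(id);
        return attr != null ? attr : DEFAULT_USER;
    }

    /** Formats each edge as "<src name> is the <label> of <dst name>". */
    static List<String> triplets(Map<Long, String[]> users, List<Edge> edges) {
        List<String> out = new ArrayList<>();
        for (Edge e : edges) {
            out.add(attrOf(users, e.src)[0] + " is the " + e.attr + " of " + attrOf(users, e.dst)[0]);
        }
        return out;
    }

    /** Keeps edges whose endpoints both satisfy attr._2 != "Missing". */
    static List<Edge> validEdges(Map<Long, String[]> users, List<Edge> edges) {
        List<Edge> kept = new ArrayList<>();
        for (Edge e : edges) {
            if (!"Missing".equals(attrOf(users, e.src)[1])
                    && !"Missing".equals(attrOf(users, e.dst)[1])) {
                kept.add(e);
            }
        }
        return kept;
    }

    public static void main(String[] args) {
        Map<Long, String[]> users = sampleUsers();
        List<Edge> edges = sampleEdges();
        for (String t : triplets(users, edges)) {
            System.out.println(t);
        }
        System.out.println("--- after removing missing vertices ---");
        for (String t : triplets(users, validEdges(users, edges))) {
            System.out.println(t);
        }
    }
}
```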

This way, the Scala code calls Spark's APIs to run the Spark jobs, and the Java code calls into the Scala code.
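Why can `HelloWorld.java` call `Hello.sayHello(...)` as if it were a static method? For a top-level `object Hello`, scalac emits two classes. The module class `Hello$` holds the singleton in a static `MODULE$` field. The class `Hello` carries static forwarder methods that delegate to that singleton. The sketch below hand-writes Java approximations of those two classes so the calling convention is visible. It is not actual compiler output, and `CallFromJava` is a hypothetical caller. Running `javap -p` on the real `Hello$.class` in target/classes shows the members scalac actually generates.

```java
/** Hand-written approximation of scalac's module class for `object Hello`. */
final class Hello$ {
    // The single instance of the Scala object.
    public static final Hello$ MODULE$ = new Hello$();

    private Hello$() {}

    // Instance method holding the body of `def sayHello(x: String): Unit`.
    public void sayHello(String x) {
        System.out.println("hello," + x);
    }
}

/** Hand-written approximation of the class carrying static forwarders. */
final class Hello {
    private Hello() {}

    // Static forwarder: this is the method Java code actually calls.
    public static void sayHello(String x) {
        Hello$.MODULE$.sayHello(x);
    }
}

/** Hypothetical Java caller, mirroring HelloWorld.main. */
public class CallFromJava {
    public static void main(String[] args) {
        Hello.sayHello("scala");          // static-forwarder style, as in HelloWorld.java
        Hello$.MODULE$.sayHello("scala"); // equivalent call through the singleton
    }
}
```

Both calls print `hello,scala`. The first form is the usual choice from Java because it hides the `$` naming.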

4. Compiling and packaging the Scala project with Maven

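In a mixed Java/Scala project, the Scala sources must be compiled before the Java sources, because HelloWorld.java refers to the Scala object Hello. The following Maven command compiles the Scala code first and then packages the project:

```shell
mvn clean scala:compile assembly:assembly
```

The `assembly:assembly` goal is deprecated and was removed in maven-assembly-plugin 3.0.0, which keeps `single` as its only packaging goal. With such a version, bind `single` to the `package` phase and run `mvn clean scala:compile package` instead.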

5. Running the Spark project's jar

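During development, we may run the application in local mode inside IDEA and then package it with the command above. The packaged Spark project must be deployed to a Spark cluster and submitted with spark-submit.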
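The sketch below only assembles a spark-submit command line from standard options: `--class`, `--master`, then the application jar and its arguments. Several names are assumptions:

- the helper name `SubmitCommand`
- the master URL `spark://master-host:7077`
- the main class `test.HelloWorld` (the package used in the example code)
- the jar path `target/sparkTest-1.0-SNAPSHOT-allinone.jar`, derived from the artifactId, the version and the `allinone` shade classifier in the POM

`Hello.runSpark` calls `setMaster("local[*]")`. Values set directly on SparkConf take precedence over spark-submit flags, so that call has to be removed or made conditional before submitting to a cluster.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/**
 * Hypothetical helper that assembles a spark-submit command line for the shaded jar.
 * It only builds the argument list; starting the process is left to the caller.
 */
public class SubmitCommand {

    static List<String> build(String mainClass, String master, String jarPath, String... appArgs) {
        List<String> cmd = new ArrayList<>(Arrays.asList(
                "spark-submit",
                "--class", mainClass, // fully qualified entry point
                "--master", master,   // cluster manager URL
                jarPath));            // application jar comes after the options
        cmd.addAll(Arrays.asList(appArgs)); // passed through to main(String[])
        return cmd;
    }

    public static void main(String[] args) throws Exception {
        List<String> cmd = build("test.HelloWorld", "spark://master-host:7077",
                "target/sparkTest-1.0-SNAPSHOT-allinone.jar");
        System.out.println(String.join(" ", cmd));
        // To actually submit (requires spark-submit on the PATH):
        // new ProcessBuilder(cmd).inheritIO().start().waitFor();
    }
}
```

Keeping the options before the jar path matters: spark-submit treats everything after the application jar as arguments to the application itself.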

This article comes from the CSDN blog of 大愚若智_. Full text: https://blog.csdn.net/zbc1090549839/article/details/54290233?utm_source=copy