python爬取美團所有結婚商家(包括詳情)

阿新 • • 發佈:2018-12-14

本文章主要介紹爬取美團結婚欄目所有商家資訊(電話)

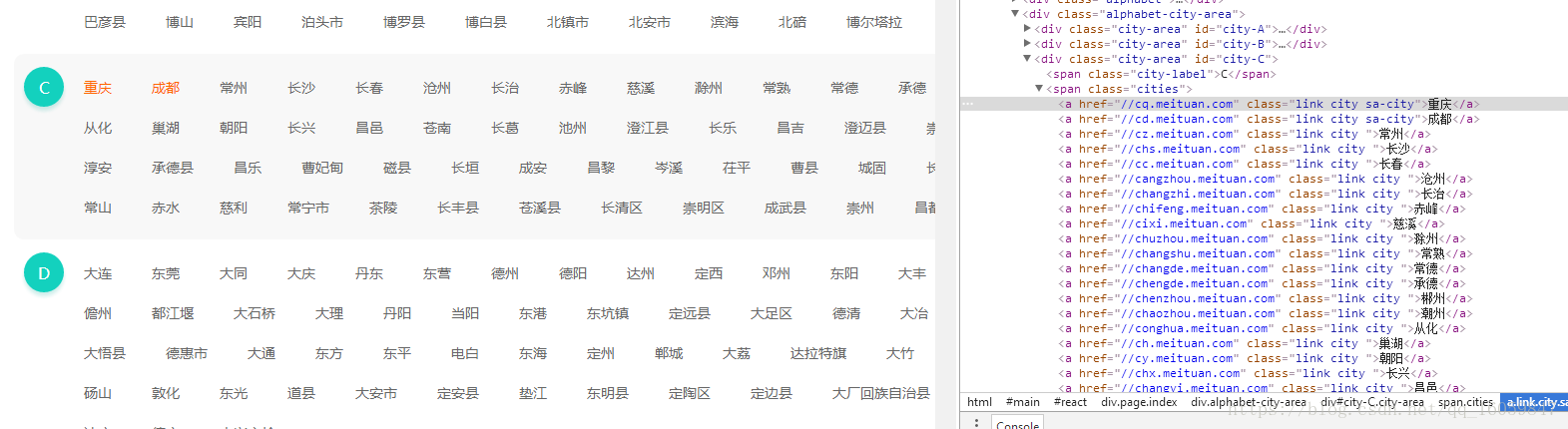

第一步:爬取區域

分析鞍山結婚頁面

https://as.meituan.com/jiehun/

分析重慶結婚頁面

https://cq.meituan.com/jiehun/

分析可得:url基本相同,我們只需爬取美團的選擇城市,然後構建我們的url,即可爬取所有區域的結婚資訊

主要實現程式碼:

def find_all_citys(): response = requests.get('http://www.meituan.com/changecity/') if response.status_code == 200: results = [] soup = BeautifulSoup(response.text,'html.parser') links = soup.select('.alphabet-city-area a') for link in links: temp = { 'href' : link.get('href'), 'name' : link.get_text().strip(), } results.append(temp) return results else: return None

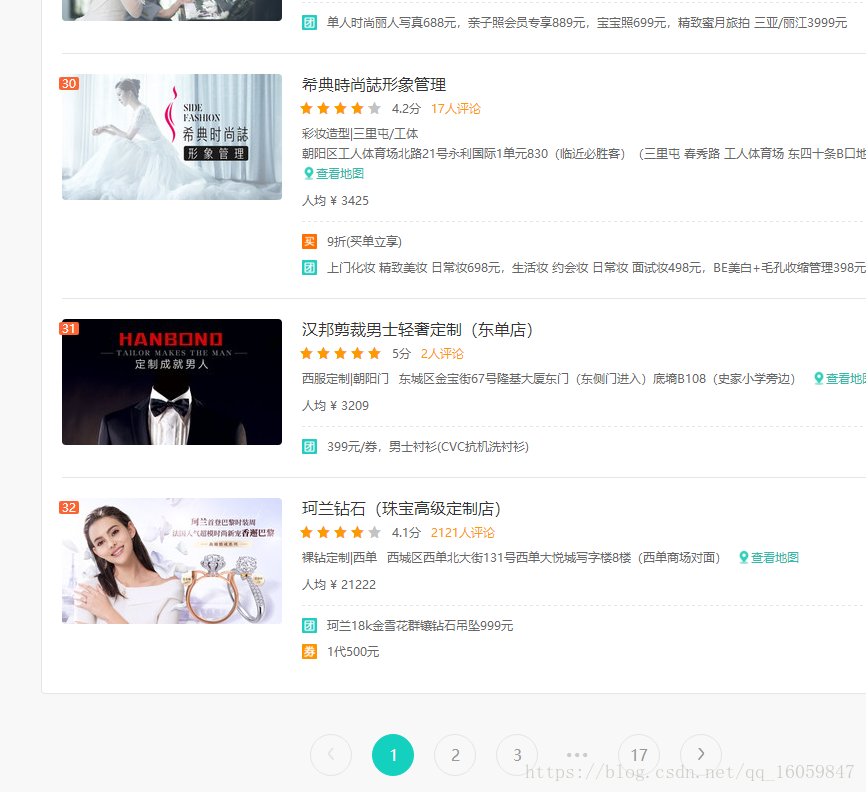

第二步:構建完所有的url後,爬取每個url的列表資訊

每個區域url最多32頁,爬取每個商家,直到列表資料為空

主要程式碼如下:

for page in range(1,32): print("*" *30) url = need['url'] + 'pn' + str(page) +'/' # url = 'https://jingzhou.meituan.com/jiehun/b16269/pn1/' headers = requests_headers() print(url+"開始抓取") response = requests.get(url, headers=headers, timeout = 10) # , allow_redirects=False # if response.status_code == 302 or response.status_code == 301: # raise Exception("30*跳轉") pattern = re.compile('"errorMsg":"(\d*?)"',re.S) h_code = re.findall(pattern, response.text) if len(h_code) != 0 and h_code[0] == '403': raise Exception("403:錯誤資訊:<!-- -->伺服器拒絕請求") pattern = re.compile('"searchResult"\:(.*?),\"recommendResult\"\:',re.S) items = re.findall(pattern, response.text) json_text = items[0] + "}" # print(json_text) json_data = json.loads(json_text) # print(len(json_data['searchResult'])) if len(json_data['searchResult']) == 0: print(url+"未匹配到,列表頁抓取完畢") print("*" *30) update_url_to_complete(need['id']) break for store in json_data['searchResult']: # 建立sql 語句,並執行 sql = 'INSERT INTO `jiehun_detail` (`url`,`poi_id`, `front_img`, `title`, `address`) \ VALUES ("%s","%s","%s","%s","%s")' % (url, store['id'],store['imageUrl'],store['title'],store['address']) # print(sql) cursor.execute(sql) # 提交SQL connection.commit() update_url_to_complete(need['id']) print(url+ "抓取完畢") print("*" *30)

第三步:爬取商家詳情

url為https://www.meituan.com/jiehun/68109543/

其中68109543為商家id,已經在第二步爬取到,拼接完後即可爬取商家詳情

try: headers = {} print(need) print("*" *30) url = 'https://www.meituan.com/jiehun/' + str(need['poi_id']) + '/' headers = requests_headers() print(url+"開始抓取") response = requests.get(url, headers=headers, timeout = 10) pattern = re.compile('"errorMsg":"(\d*?)"',re.S) h_code = re.findall(pattern, response.text) if len(h_code) != 0 and h_code[0] == '403': raise Exception("403:錯誤資訊:<!-- -->伺服器拒絕請求") # print(response.text) # exit() soup = BeautifulSoup(response.text,'html.parser') errorMessage = soup.select('.errorMessage') if len(errorMessage) != 0: update_url_to_complete(need['id'], '', '') raise Exception(errorMessage[0].select('h2')[0].get_text()) open_time = soup.select('.more-item')[1].get_text().strip() phone = soup.select('.icon-phone')[0].get_text().strip() update_url_to_complete(need['id'], open_time, phone) print(url+ "抓取完畢") print("*" *30) except Exception as e: print(e) print(headers) cookies = create_cookies()

其中美團會驗證你爬蟲的user-agent,cookie和ip,IP可通過代理ip,其實頁可以通過手機分享熱點,ip會自動更換,當ip被封時,重新分享熱點即可,但需要人為操作。

cookie美團封的很快,必須程式自動切換,我這裡簡單的用Phantomjs模擬來獲取headers頭

def create_cookies():

driver = webdriver.PhantomJS()

cookiestr = []

# for x in range(1,10):

driver.get("https://bj.meituan.com/jiehun/")

driver.implicitly_wait(5)

cookie = [item["name"] + "=" + item["value"] for item in driver.get_cookies()]

print("生成cookie")

print(cookie)

cookiestr.append(';'.join(item for item in cookie))

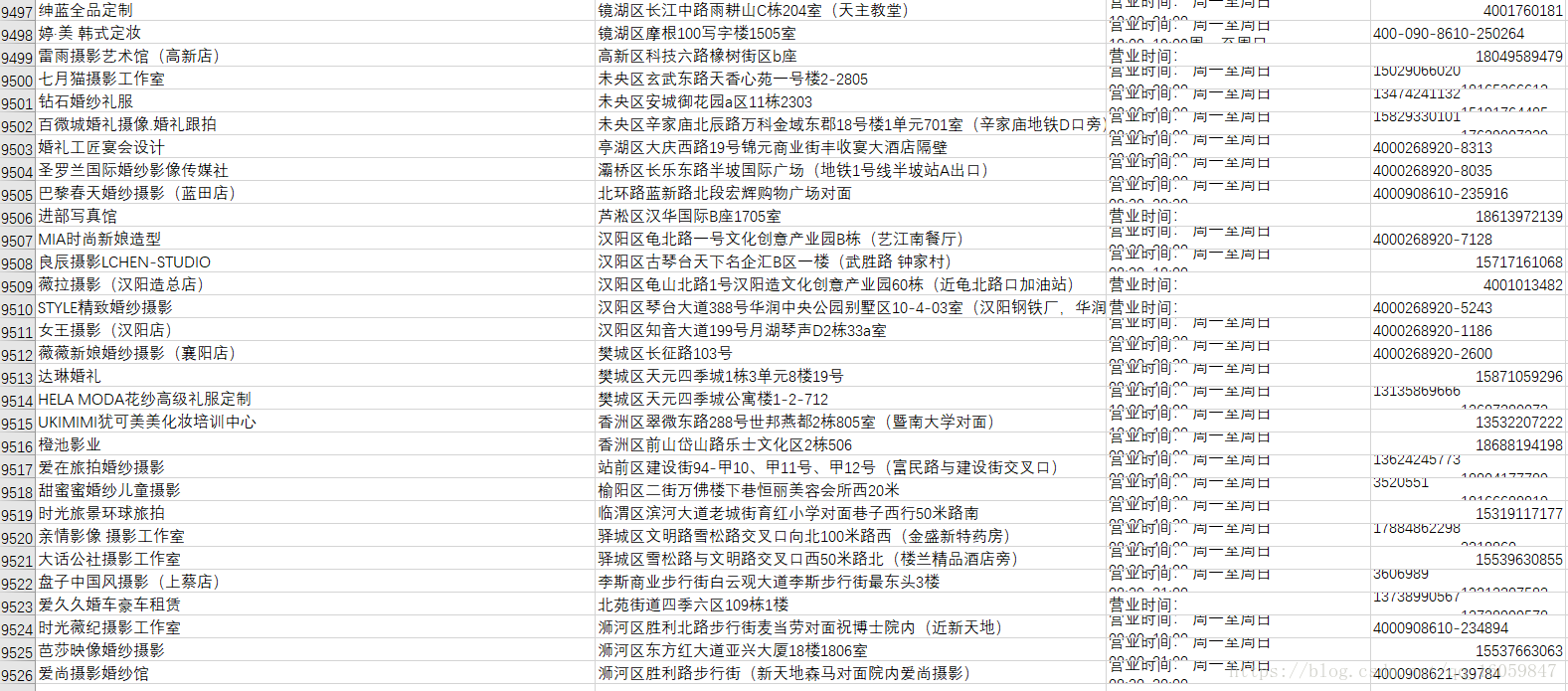

return cookiestr到此,資料已全部爬取完畢,大概花了2天時間,一共9526條商家,不多時因為美團上只有這些商家,此方法也可爬取美食欄目,可有90萬+的商家。結婚商家資訊如下