Octavia: Implementation and Analysis of Creating a Listener, Pool, Member, L7policy, L7rule, and Health Monitor


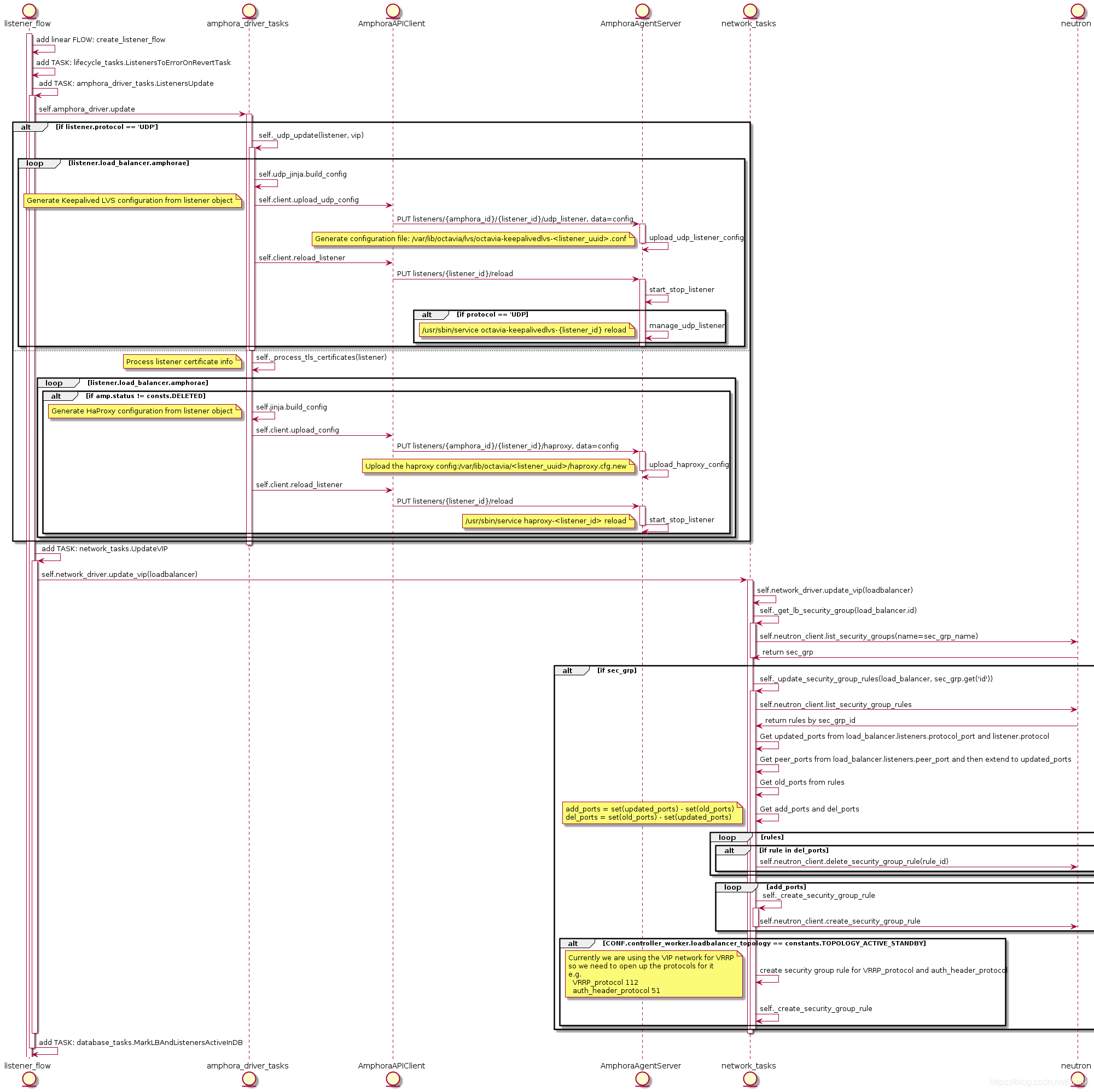

Creating a Listener

The haproxy service process is started only when a listener is created for the loadbalancer.

As the UML diagram shows, running openstack loadbalancer listener create --protocol HTTP --protocol-port 8080 lb-1 to create a Listener reaches Task:ListenersUpdate, which uploads the haproxy configuration file and reloads the haproxy service process.

The configuration file is /var/lib/octavia/1385d3c4-615e-4a92-aea1-c4fa51a75557/haproxy.cfg, where 1385d3c4-615e-4a92-aea1-c4fa51a75557 is the Listener UUID:

# Configuration for loadbalancer 01197be7-98d5-440d-a846-cd70f52dc503
global
    daemon
    user nobody
    log /dev/log local0
    log /dev/log local1 notice
    stats socket /var/lib/octavia/1385d3c4-615e-4a92-aea1-c4fa51a75557.sock mode 0666 level user
    maxconn 1000000

defaults
    log global
    retries 3
    option redispatch

peers 1385d3c4615e4a92aea1c4fa51a75557_peers
    peer l_Ustq0qE-h-_Q1dlXLXBAiWR8U 172.16.1.7:1025
    peer O08zAgUhIv9TEXhyYZf2iHdxOkA 172.16.1.3:1025

frontend 1385d3c4-615e-4a92-aea1-c4fa51a75557
    option httplog
    maxconn 1000000
    bind 172.16.1.10:8080
    mode http
    timeout client 50000

Because the Listener at this point specifies only the protocol and port to listen on, the frontend section sets just the corresponding bind 172.16.1.10:8080 and mode http.
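The relationship between the listener arguments and the frontend section can be sketched in a few lines of Python. This is only an illustration: render_frontend and its parameters are hypothetical names, the TCP fallback is an assumption, and the real configuration is produced by Octavia itself, not by this code.

```python
def render_frontend(listener_id, vip_address, protocol, port,
                    maxconn=1000000, timeout_client_ms=50000):
    """Return a haproxy frontend section for one listener (illustrative)."""
    # Only HTTP appears in the article; falling back to "tcp" for any
    # other protocol is an assumption of this sketch.
    mode = "http" if protocol.upper() == "HTTP" else "tcp"
    lines = ["frontend %s" % listener_id]
    if mode == "http":
        lines.append("    option httplog")
    lines += [
        "    maxconn %d" % maxconn,
        "    bind %s:%d" % (vip_address, port),
        "    mode %s" % mode,
        "    timeout client %d" % timeout_client_ms,
    ]
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_frontend("1385d3c4-615e-4a92-aea1-c4fa51a75557",
                          "172.16.1.10", "HTTP", 8080))
```

With the values used above, the bind and mode lines match the ones in the generated file.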

Service process: systemctl status haproxy-1385d3c4-615e-4a92-aea1-c4fa51a75557.service, where 1385d3c4-615e-4a92-aea1-c4fa51a75557 is the Listener UUID:

# file: /usr/lib/systemd/system/haproxy-1385d3c4-615e-4a92-aea1-c4fa51a75557.service

[Unit]

Description=HAProxy Load Balancer

After=network.target syslog.service amphora-netns.service

Before=octavia-keepalived.service

Wants=syslog.service

Requires=amphora-netns.service

[Service]

# Force context as we start haproxy under "ip netns exec"

SELinuxContext=system_u:system_r:haproxy_t:s0

Environment="CONFIG=/var/lib/octavia/1385d3c4-615e-4a92-aea1-c4fa51a75557/haproxy.cfg" "USERCONFIG=/var/lib/octavia/haproxy-default-user-group.conf" "PIDFILE=/var/lib/octavia/1385d3c4-615e-4a92-aea1-c4fa51a75557/1385d3c4-615e-4a92-aea1-c4fa51a75557.pid"

ExecStartPre=/usr/sbin/haproxy -f $CONFIG -f $USERCONFIG -c -q -L O08zAgUhIv9TEXhyYZf2iHdxOkA

ExecReload=/usr/sbin/haproxy -c -f $CONFIG -f $USERCONFIG -L O08zAgUhIv9TEXhyYZf2iHdxOkA

ExecReload=/bin/kill -USR2 $MAINPID

ExecStart=/sbin/ip netns exec amphora-haproxy /usr/sbin/haproxy-systemd-wrapper -f $CONFIG -f $USERCONFIG -p $PIDFILE -L O08zAgUhIv9TEXhyYZf2iHdxOkA

KillMode=mixed

Restart=always

LimitNOFILE=2097152

[Install]

WantedBy=multi-user.target

The unit file shows that the service actually started is /usr/sbin/haproxy-systemd-wrapper, running inside the amphora-haproxy namespace. The log shows what the wrapper does:

Nov 15 10:12:01 amphora-cd444019-ce8f-4f89-be6b-0edf76f41b77 ip[13206]: haproxy-systemd-wrapper: executing /usr/sbin/haproxy -f /var/lib/octavia/1385d3c4-615e-4a92-aea1-c4fa51a75557/haproxy.cfg -f /var/lib/octavia/haproxy-default-user-group.conf -p /var/lib/octavia/1385d3c4-615e-4a92-aea1-c4fa51a75557/1385d3c4-615e-4a92-aea1-c4fa51a75557.pid -L O08zAgUhIv9TEXhyYZf2iHdxOkA -Ds

It simply invokes the /usr/sbin/haproxy command.

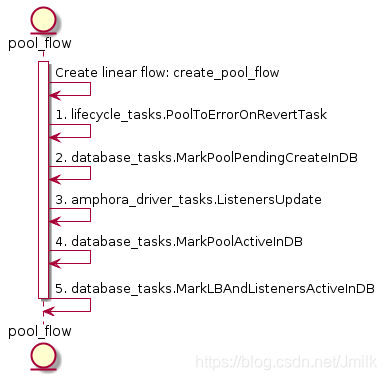

Creating a Pool

Again we focus on the create pool flow of octavia-worker.

The most important step in the create pool flow is again Task:ListenersUpdate, which updates the haproxy configuration file. For example, running openstack loadbalancer pool create --protocol HTTP --lb-algorithm ROUND_ROBIN --listener 1385d3c4-615e-4a92-aea1-c4fa51a75557 creates a default pool for the listener.

[[email protected] ~]# openstack loadbalancer listener list

+--------------------------------------+--------------------------------------+------+----------------------------------+----------+---------------+----------------+

| id | default_pool_id | name | project_id | protocol | protocol_port | admin_state_up |

+--------------------------------------+--------------------------------------+------+----------------------------------+----------+---------------+----------------+

| 1385d3c4-615e-4a92-aea1-c4fa51a75557 | 8196f752-a367-4fb4-9194-37c7eab95714 | | 9e4fe13a6d7645269dc69579c027fde4 | HTTP | 8080 | True |

+--------------------------------------+--------------------------------------+------+----------------------------------+----------+---------------+----------------+

A backend section is added to the haproxy configuration file, and backend mode http and balance roundrobin are set according to the arguments passed to the CLI above.

# Configuration for loadbalancer 01197be7-98d5-440d-a846-cd70f52dc503

global

daemon

user nobody

log /dev/log local0

log /dev/log local1 notice

stats socket /var/lib/octavia/1385d3c4-615e-4a92-aea1-c4fa51a75557.sock mode 0666 level user

maxconn 1000000

defaults

log global

retries 3

option redispatch

peers 1385d3c4615e4a92aea1c4fa51a75557_peers

peer l_Ustq0qE-h-_Q1dlXLXBAiWR8U 172.16.1.7:1025

peer O08zAgUhIv9TEXhyYZf2iHdxOkA 172.16.1.3:1025

frontend 1385d3c4-615e-4a92-aea1-c4fa51a75557

option httplog

maxconn 1000000

bind 172.16.1.10:8080

mode http

default_backend 8196f752-a367-4fb4-9194-37c7eab95714 # UUID of pool

timeout client 50000

backend 8196f752-a367-4fb4-9194-37c7eab95714

mode http

balance roundrobin

fullconn 1000000

option allbackups

timeout connect 5000

timeout server 50000
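The way the pool arguments map to the backend section can be sketched as follows. The function render_backend and the lookup table are hypothetical. Only the ROUND_ROBIN to roundrobin pairing is confirmed by the configuration above; the other two entries are standard haproxy balance keywords and are assumed here to be the matching ones.

```python
# Illustrative mapping from pool algorithm names to haproxy "balance" keywords.
LB_ALGORITHM_TO_BALANCE = {
    "ROUND_ROBIN": "roundrobin",      # confirmed by the config shown above
    "LEAST_CONNECTIONS": "leastconn",  # assumption
    "SOURCE_IP": "source",            # assumption
}


def render_backend(pool_id, protocol, lb_algorithm):
    """Return the first lines of a haproxy backend section (illustrative)."""
    # As in the frontend, the "tcp" fallback is an assumption.
    mode = "http" if protocol.upper() == "HTTP" else "tcp"
    return "\n".join([
        "backend %s" % pool_id,
        "    mode %s" % mode,
        "    balance %s" % LB_ALGORITHM_TO_BALANCE[lb_algorithm],
    ])


if __name__ == "__main__":
    print(render_backend("8196f752-a367-4fb4-9194-37c7eab95714",
                         "HTTP", "ROUND_ROBIN"))
```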

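Note that a pool can be created with either a listener UUID or a loadbalancer UUID. With a listener UUID, the pool becomes that listener's default pool. A listener can have only one default pool, and specifying another default pool for it later raises an exception. With a loadbalancer UUID, a shared pool is created. A shared pool can be used by all listeners under the same loadbalancer and is often used to help implement l7policy: when a Listener's l7policy action is set to "redirect to another pool", a shared pool can be chosen as the target. A shared pool can accept redirected requests from every listener under its loadbalancer.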
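The default pool versus shared pool rule can be modelled with a small in-memory sketch. The classes LoadBalancer, Listener and PoolError are hypothetical stand-ins, not Octavia data models; they only encode the two rules stated above.

```python
class PoolError(Exception):
    """Raised when a listener already has a default pool (illustrative)."""


class Listener:
    def __init__(self, listener_id):
        self.id = listener_id
        self.default_pool_id = None


class LoadBalancer:
    def __init__(self, lb_id):
        self.id = lb_id
        self.listeners = {}
        self.pools = set()

    def add_listener(self, listener_id):
        self.listeners[listener_id] = Listener(listener_id)
        return self.listeners[listener_id]

    def create_pool(self, pool_id, listener_id=None):
        """Create a default pool (listener given) or a shared pool."""
        if listener_id is not None:
            listener = self.listeners[listener_id]
            # A listener can have only one default pool.
            if listener.default_pool_id is not None:
                raise PoolError("listener %s already has a default pool"
                                % listener_id)
            listener.default_pool_id = pool_id
        # Every pool belongs to the loadbalancer, so any listener under it
        # may later redirect to the pool through an l7policy.
        self.pools.add(pool_id)
        return pool_id
```

In this model a shared pool is simply a pool that no listener references as its default.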

Run the following command to create a shared pool:

[[email protected] ~]# openstack loadbalancer pool create --protocol HTTP --lb-algorithm ROUND_ROBIN --loadbalancer 01197be7-98d5-440d-a846-cd70f52dc503

+---------------------+--------------------------------------+

| Field | Value |

+---------------------+--------------------------------------+

| admin_state_up | True |

| created_at | 2018-11-20T03:35:08 |

| description | |

| healthmonitor_id | |

| id | 822f78c3-ea2c-4770-bef0-e97f1ac2eba8 |

| lb_algorithm | ROUND_ROBIN |

| listeners | |

| loadbalancers | 01197be7-98d5-440d-a846-cd70f52dc503 |

| members | |

| name | |

| operating_status | OFFLINE |

| project_id | 9e4fe13a6d7645269dc69579c027fde4 |

| protocol | HTTP |

| provisioning_status | PENDING_CREATE |

| session_persistence | None |

| updated_at | None |

+---------------------+--------------------------------------+

Note that merely creating a shared pool, without binding it to a listener, does not change the haproxy configuration file of the listener.

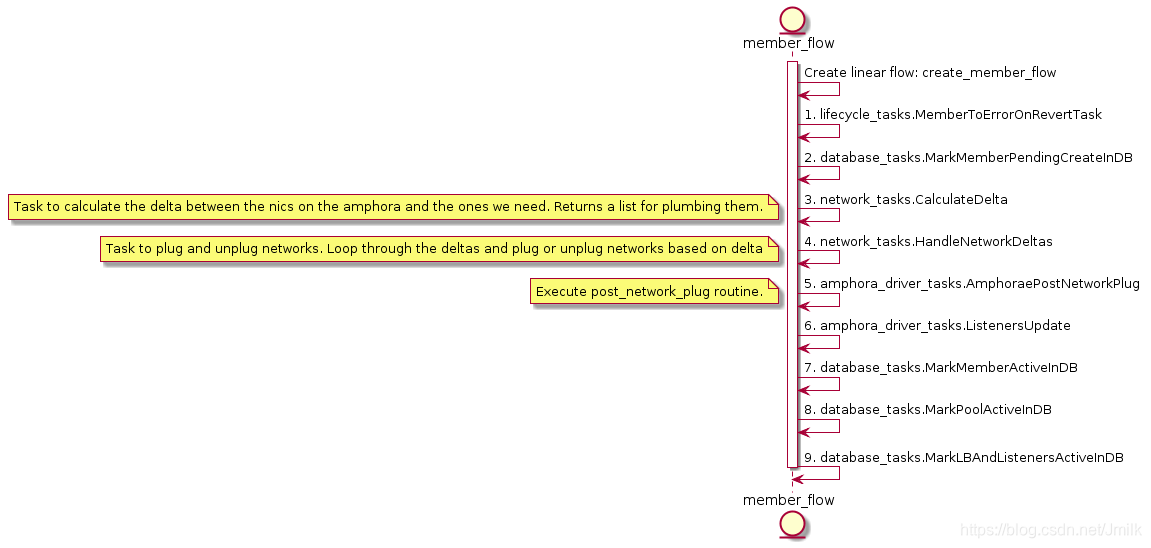

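Creating a Member

Use the following command to add a member to the default pool. The options specify the subnet of the cloud instance, its IP address, and the protocol-port that receives the forwarded traffic.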

[[email protected] ~]# openstack loadbalancer member create --subnet-id 2137f3fb-00ee-41a9-b66e-06705c724a36 --address 192.168.1.14 --protocol-port 80 8196f752-a367-4fb4-9194-37c7eab95714

+---------------------+--------------------------------------+

| Field | Value |

+---------------------+--------------------------------------+

| address | 192.168.1.14 |

| admin_state_up | True |

| created_at | 2018-11-20T06:09:58 |

| id | b6e464fd-dd1e-4775-90f2-4231444a0bbe |

| name | |

| operating_status | NO_MONITOR |

| project_id | 9e4fe13a6d7645269dc69579c027fde4 |

| protocol_port | 80 |

| provisioning_status | PENDING_CREATE |

| subnet_id | 2137f3fb-00ee-41a9-b66e-06705c724a36 |

| updated_at | None |

| weight | 1 |

| monitor_port | None |

| monitor_address | None |

| backup | False |

+---------------------+--------------------------------------+

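At the octavia-api layer, the member's IP address is first checked against the CONF.networking.reserved_ips option to verify that it is usable, and the member's subnet is verified to exist. Only then does the request enter the octavia-worker flow.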
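A minimal sketch of these two API-side checks, assuming the reserved addresses are given as a list of strings and the set of existing subnets is already known. validate_member and its arguments are hypothetical; the real checks live in octavia-api and query the network service.

```python
import ipaddress


def validate_member(address, subnet_id, reserved_ips, known_subnet_ids):
    """Reject reserved member addresses and unknown subnets (illustrative)."""
    ip = ipaddress.ip_address(address)
    reserved = {ipaddress.ip_address(item) for item in reserved_ips}
    if ip in reserved:
        raise ValueError("member address %s is reserved" % address)
    # known_subnet_ids stands in for a lookup against the network service.
    if subnet_id is not None and subnet_id not in known_subnet_ids:
        raise ValueError("subnet %s not found" % subnet_id)
    return True


if __name__ == "__main__":
    # 169.254.169.254 is used here only as an example reserved address.
    print(validate_member("192.168.1.14",
                          "2137f3fb-00ee-41a9-b66e-06705c724a36",
                          ["169.254.169.254"],
                          {"2137f3fb-00ee-41a9-b66e-06705c724a36"}))
```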

CalculateDelta

CalculateDelta iterates over the amphorae of the loadbalancer and runs Task:CalculateAmphoraDelta for each one, which computes the "delta" between the NICs currently on the Amphora and the NICs it needs.

# file: /opt/rocky/octavia/octavia/controller/worker/tasks/network_tasks.py

class CalculateAmphoraDelta(BaseNetworkTask):

    default_provides = constants.DELTA

    def execute(self, loadbalancer, amphora):
        LOG.debug("Calculating network delta for amphora id: %s", amphora.id)

        # Figure out what networks we want
        # seed with lb network(s)
        vrrp_port = self.network_driver.get_port(amphora.vrrp_port_id)
        desired_network_ids = {vrrp_port.network_id}.union(
            CONF.controller_worker.amp_boot_network_list)

        for pool in loadbalancer.pools:
            member_networks = [
                self.network_driver.get_subnet(member.subnet_id).network_id
                for member in pool.members
                if member.subnet_id
            ]
            desired_network_ids.update(member_networks)

        nics = self.network_driver.get_plugged_networks(amphora.compute_id)
        # assume we don't have two nics in the same network
        actual_network_nics = dict((nic.network_id, nic) for nic in nics)

        del_ids = set(actual_network_nics) - desired_network_ids
        delete_nics = list(
            actual_network_nics[net_id] for net_id in del_ids)

        add_ids = desired_network_ids - set(actual_network_nics)
        add_nics = list(n_data_models.Interface(
            network_id=net_id) for net_id in add_ids)
        delta = n_data_models.Delta(
            amphora_id=amphora.id, compute_id=amphora.compute_id,
            add_nics=add_nics, delete_nics=delete_nics)
        return delta

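In short, the task first collects the required networks (desired_network_ids) and the networks already plugged (actual_network_nics). It then computes the NICs to delete (delete_nics) and the NICs to add (add_nics), and finally returns a Delta data model to Task:HandleNetworkDeltas, which performs the actual adding and removing of Amphora NICs.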
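The delta is plain set arithmetic, which a standalone example makes concrete. The network names and the dictionary-based NIC records below are made up for illustration; only the two set differences mirror the task.

```python
def calculate_delta(desired_network_ids, plugged_nics):
    """Return (network ids to add, NIC records to delete), both sorted."""
    # Index the plugged NICs by network, assuming one NIC per network.
    actual = {nic["network_id"]: nic for nic in plugged_nics}
    del_ids = set(actual) - set(desired_network_ids)
    add_ids = set(desired_network_ids) - set(actual)
    return sorted(add_ids), [actual[net_id] for net_id in sorted(del_ids)]


if __name__ == "__main__":
    desired = {"lb-mgmt-net", "lb-vip-net", "member-net"}
    plugged = [
        {"network_id": "lb-mgmt-net", "port_id": "port-a"},
        {"network_id": "lb-vip-net", "port_id": "port-b"},
        {"network_id": "stale-net", "port_id": "port-c"},
    ]
    to_add, to_delete = calculate_delta(desired, plugged)
    print("add:", to_add)
    print("delete:", to_delete)
```

Here member-net is desired but not plugged, so it is added, while stale-net is plugged but no longer desired, so its NIC is scheduled for deletion.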

HandleNetworkDeltas

Task:HandleNetworkDelta: plug or unplug networks based on delta.

# file: /opt/rocky/octavia/octavia/controller/worker/tasks/network_tasks.py

class HandleNetworkDelta(BaseNetworkTask):
    """Task to plug and unplug networks

    Plug or unplug networks based on delta
    """

    def execute(self, amphora, delta):
        """Handle network plugging based off deltas."""
        added_ports = {}
        added_ports[amphora.id] = []
        for nic in delta.add_nics:
            interface = self.network_driver.plug_network(delta.compute_id,
                                                         nic.network_id)
            port = self.network_driver.get_port(interface.port_id)
            port.network = self.network_driver.get_network(port.network_id)
            for fixed_ip in port.fixed_ips:
                fixed_ip.subnet = self.network_driver.get_subnet(
                    fixed_ip.subnet_id)
            added_ports[amphora.id].append(port)
        for nic in delta.delete_nics:
            try:
                self.network_driver.unplug_network(delta.compute_id,
                                                   nic.network_id)
            except base.NetworkNotFound:
                LOG.debug("Network %d not found ", nic.network_id)
            except Exception:
                LOG.exception("Unable to unplug network")
        return added_ports

The amphora interfaces are plugged and unplugged according to the Delta data model returned by Task:CalculateAmphoraDelta.

# file: /opt/rocky/octavia/octavia/network/drivers/neutron/allowed_address_pairs.py

def plug_network(self, compute_id, network_id, ip_address=None):
    try:
        interface = self.nova_client.servers.interface_attach(
            server=compute_id, net_id=network_id, fixed_ip=ip_address,
            port_id=None)
    except nova_client_exceptions.NotFound as e:
        if 'Instance' in str(e):
            raise base.AmphoraNotFound(str(e))
        elif 'Network' in str(e):
            raise base.NetworkNotFound(str(e))
        else:
            raise base.PlugNetworkException(str(e))
    except Exception:
        message = _('Error plugging amphora (compute_id: {compute_id}) '
                    'into network {network_id}.').format(
                        compute_id=compute_id,
                        network_id=network_id)
        LOG.exception(message)
        raise base.PlugNetworkException(message)

    return self._nova_interface_to_octavia_interface(compute_id, interface)

def unplug_network(self, compute_id, network_id, ip_address=None):
    interfaces = self.get_plugged_networks(compute_id)
    if not interfaces:
        msg = ('Amphora with compute id {compute_id} does not have any '
               'plugged networks').format(compute_id=compute_id)
        raise base.NetworkNotFound(msg)

    unpluggers = self._get_interfaces_to_unplug(interfaces, network_id,
                                                ip_address=ip_address)
    for index, unplugger in enumerate(unpluggers):
        try:
            self.nova_client.servers.interface_detach(
                server=compute_id, port_id=unplugger.port_id)
        except Exception:
            LOG.warning('Error unplugging port {port_id} from amphora '
                        'with compute ID {compute_id}. '
                        'Skipping.'.format(port_id=unplugger.port_id,
                                           compute_id=compute_id))

Finally, added_ports is returned for use by the subsequent Task:AmphoraePostNetworkPlug.
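The control flow of the task can be exercised without Nova or Neutron by substituting a fake driver. FakeNetworkDriver, the local NetworkNotFound class and handle_delta are hypothetical stand-ins written for this sketch. They mimic only the behaviour visible in the code above: plugged ports are collected per amphora, and a failed unplug is tolerated instead of aborting the task.

```python
class NetworkNotFound(Exception):
    """Stand-in for the driver's 'network not found' error."""


class FakeNetworkDriver:
    """In-memory replacement for the real network driver (illustrative)."""

    def __init__(self, plugged):
        self.plugged = set(plugged)

    def plug_network(self, compute_id, network_id):
        self.plugged.add(network_id)
        return {"compute_id": compute_id, "network_id": network_id}

    def unplug_network(self, compute_id, network_id):
        if network_id not in self.plugged:
            raise NetworkNotFound(network_id)
        self.plugged.remove(network_id)


def handle_delta(driver, amphora_id, compute_id, add_ids, delete_ids):
    """Plug and unplug networks, returning the added ports per amphora."""
    added_ports = {amphora_id: []}
    for net_id in add_ids:
        added_ports[amphora_id].append(
            driver.plug_network(compute_id, net_id))
    for net_id in delete_ids:
        try:
            driver.unplug_network(compute_id, net_id)
        except NetworkNotFound:
            # A missing network is ignored here; the real task logs it.
            pass
    return added_ports
```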

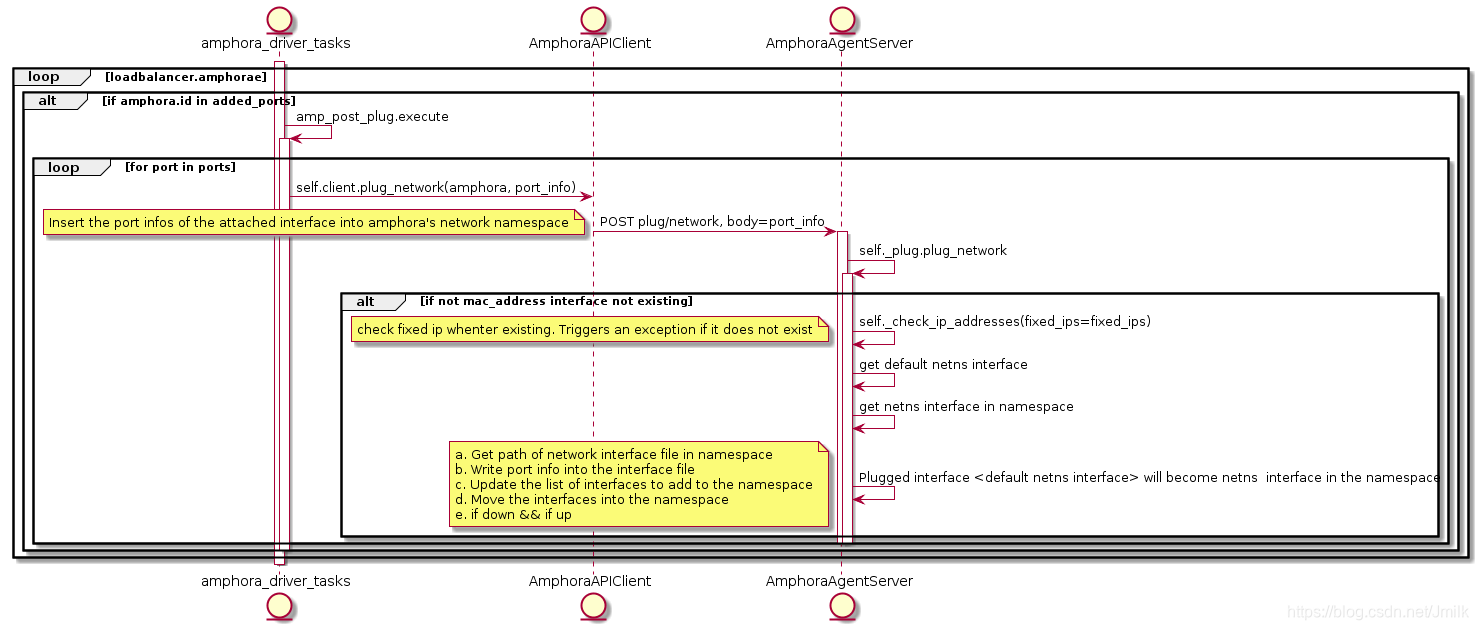

AmphoraePostNetworkPlug

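The main job of Task:AmphoraePostNetworkPlug is to move the interfaces attached to the amphora through novaclient into the namespace.

Let us review the Amphora network topology:

- In its initial state, the Amphora has only one port, used to communicate with lb-mgmt-net: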

[email protected]:~# ifconfig

ens3 Link encap:Ethernet HWaddr fa:16:3e:b6:8f:a5

inet addr:192.168.0.9 Bcast:192.168.0.255 Mask:255.255.255.0

inet6 addr: fe80::f816:3eff:feb6:8fa5/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1450 Metric:1

RX packets:19462 errors:14099 dropped:0 overruns:0 frame:14099

TX packets:70317 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:1350041 (1.3 MB) TX bytes:15533572 (15.5 MB)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

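As shown above, inet addr:192.168.0.9 is assigned by the lb-mgmt-net DHCP.

- After the Amphora is allocated to a loadbalancer, a port of type vrrp_port is added. The vrrp_port serves as a NIC of the Keepalived virtual router and is moved into the namespace, usually as eth1.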

[email protected]:~# ip netns exec amphora-haproxy ifconfig

eth1 Link encap:Ethernet HWaddr fa:16:3e:f4:69:4b

inet addr:172.16.1.3 Bcast:172.16.1.255 Mask:255.255.255.0

inet6 addr: fe80::f816:3eff:fef4:694b/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1450 Metric:1

RX packets:12705 errors:0 dropped:0 overruns:0 frame:0

TX packets:613211 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:762300 (762.3 KB) TX bytes:36792968 (36.7 MB)

eth1:0 Link encap:Ethernet HWaddr fa:16:3e:f4:69:4b

inet addr:172.16.1.10 Bcast:172.16.1.255 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1450 Metric:1

The VRRP IP 172.16.1.3 and the VIP 172.16.1.10 are both assigned by the DHCP of lb-vip-network and correspond to the ports octavia-lb-vrrp-<amphora_uuid> and octavia-lb-<loadbalancer_uuid> on lb-vip-network, respectively. The configuration of interface eth1 is:

[email protected]:~# ip netns exec amphora-haproxy cat /etc/network/interfaces.d/eth1

auto eth1

iface eth1 inet dhcp

[email protected]:~# ip netns exec amphora-haproxy cat /etc/network/interfaces.d/eth1.cfg

# Generated by Octavia agent

auto eth1 eth1:0

iface eth1 inet static

address 172.16.1.3

broadcast 172.16.1.255

netmask 255.255.255.0

gateway 172.16.1.1

mtu 1450

iface eth1:0 inet static

address 172.16.1.10

broadcast 172.16.1.255

netmask 255.255.255.0

# Add a source routing table to allow members to access the VIP

post-up /sbin/ip route add 172.16.1.0/24 dev eth1 src 172.16.1.10 scope link table 1

post-up /sbin/ip route add default via 172.16.1.1 dev eth1 onlink table 1

post-down /sbin/ip route del default via 172.16.1.1 dev eth1 onlink table 1

post-down /sbin/ip route del 172.16.1.0/24 dev eth1 src 172.16.1.10 scope link table 1

post-up /sbin/ip rule add from 172.16.1.10/32 table 1 priority 100

post-down /sbin/ip rule del from 172.16.1.10/32 table 1 priority 100

post-up /sbin/iptables -t nat -A POSTROUTING -p udp -o eth1 -j MASQUERADE

post-down /sbin/iptables -t nat -D POSTROUTING -p udp -o eth1 -j MASQUERADE

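The main purpose of Task:AmphoraePostNetworkPlug is to generate the contents of these configuration files.

- After a member is created for the loadbalancer, new interfaces are added to the amphora according to the subnet of the member. If the member and the vrrp_port are on the same subnet, no new interface is added to the amphora.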

[email protected]:~# ip netns exec amphora-haproxy ifconfig

eth1 Link encap:Ethernet HWaddr fa:16:3e:f4:69:4b

inet addr:172.16.1.3 Bcast:172.16.1.255 Mask:255.255.255.0

inet6 addr: fe80::f816:3eff:fef4:694b/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1450 Metric:1

RX packets:12705 errors:0 dropped:0 overruns:0 frame:0

TX packets:613211 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:762300 (762.3 KB) TX bytes:36792968 (36.7 MB)

eth1:0 Link encap:Ethernet HWaddr fa:16:3e:f4:69:4b

inet addr:172.16.1.10 Bcast:172.16.1.255 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1450 Metric:1

eth2 Link encap:Ethernet HWaddr fa:16:3e:18:23:7a

inet addr:192.168.1.3 Bcast:192.168.1.255 Mask:255.255.255.0

inet6 addr: fe80::f816:3eff:fe18:237a/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1450 Metric:1

RX packets:8 errors:2 dropped:0 overruns:0 frame:2

TX packets:8 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:2156 (2.1 KB) TX bytes:808 (808.0 B)

inet addr:192.168.1.3 is assigned by the DHCP of the member's subnet. The configuration file is as follows:

# Generated by Octavia agent

auto eth2

iface eth2 inet static

address 192.168.1.3

broadcast 192.168.1.255

netmask 255.255.255.0

mtu 1450

post-up /sbin/iptables -t nat -A POSTROUTING -p udp -o eth2 -j MASQUERADE

post-down /sbin/iptables -t nat -D POSTROUTING -p udp -o eth2 -j MASQUERADE
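The fields of such an interface file follow directly from the port's address and the subnet CIDR. The sketch below derives the broadcast address and netmask with Python's ipaddress module. render_interface_config is a hypothetical helper, not the amphora agent's code, and the CIDR 192.168.1.0/24 is inferred from the netmask shown above.

```python
import ipaddress


def render_interface_config(name, address, cidr, mtu):
    """Render a static interface stanza like the one above (illustrative)."""
    network = ipaddress.ip_network(cidr)
    masquerade = "/sbin/iptables -t nat %s POSTROUTING -p udp -o %s -j MASQUERADE"
    return "\n".join([
        "auto %s" % name,
        "iface %s inet static" % name,
        "address %s" % address,
        "broadcast %s" % network.broadcast_address,
        "netmask %s" % network.netmask,
        "mtu %d" % mtu,
        # Add the NAT rule when the interface comes up, remove it on the way down.
        "post-up " + masquerade % ("-A", name),
        "post-down " + masquerade % ("-D", name),
    ])


if __name__ == "__main__":
    print(render_interface_config("eth2", "192.168.1.3",
                                  "192.168.1.0/24", 1450))
```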

ListenersUpdate

As before, the final change to the haproxy configuration is made by Task:ListenersUpdate.

# Configuration for loadbalancer 01197be7-98d5-440d-a846-cd70f52dc503

global

daemon

user nobody

log /dev/log local0

log /dev/log local1 notice

stats socket /var/lib/octavia/1385d3c4-615e-4a92-aea1-c4fa51a75557.sock mode 0666 level user

maxconn 1000000

defaults

log global

retries 3

option redispatch

peers 1385d3c4615e4a92aea1c4fa51a75557_peers

peer l_Ustq0qE-h-_Q1dlXLXBAiWR8U 172.16.1.7:1025

peer O08zAgUhIv9TEXhyYZf2iHdxOkA 172.16.1.3:1025

frontend 1385d3c4-615e-4a92-aea1-c4fa51a75557

option httplog

maxconn 1000000

bind 172.16.1.10:8080

mode http

default_backend 8196f752-a367-4fb4-9194-37c7eab95714

timeout client 50000

backend 8196f752-a367-4fb4-9194-37c7eab95714

mode http

balance roundrobin

fullconn 1000000

option allbackups

timeout connect 5000

timeout server 50000

server b6e464fd-dd1e-4775-90f2-4231444a0bbe 192.168.1.14:80 weight 1

In effect, adding a member inserts the entry server <member_id> 192.168.1.14:80 weight 1 into the backend (the default pool), which makes the cloud instance part of the default pool.

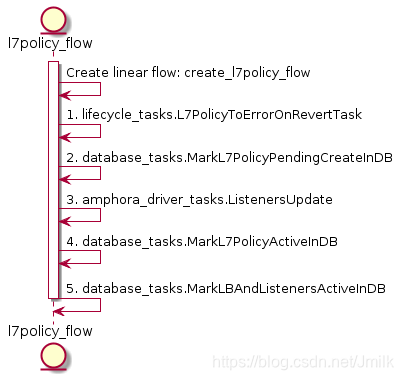

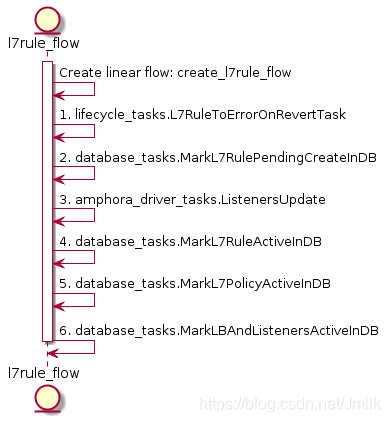

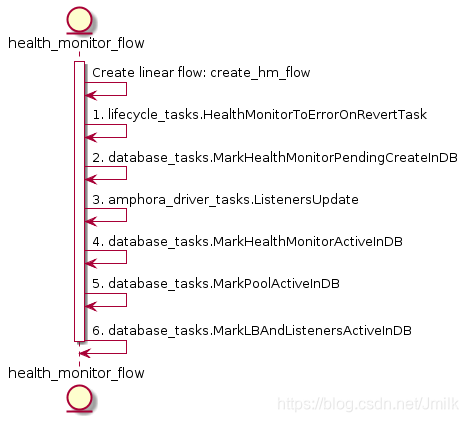

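Creating an L7policy, L7rule, and Health Monitor

In Octavia, an L7policy object describes the type of forwarding action (e.g. redirect to a pool, redirect to a URL, or reject) and acts as a container for L7rules. It belongs to a Listener.

An L7rule object defines the match criteria for forwarded traffic and describes the routing relationship. It belongs to an L7policy.

A Health Monitor object monitors the health of the Members in a Pool. It is essentially a database record that describes the health-check rules, and it belongs to a Pool.

The three UML diagrams above show that creating an L7policy, L7rule, or Health Monitor is very similar to creating a Pool: the key step in each case is Task:ListenersUpdate updating the contents of the haproxy configuration file. We therefore only need to look at a few examples to see how the haproxy configuration file changes.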

- EXAMPLE 1

# Create a healthmonitor for pool 8196f752-a367-4fb4-9194-37c7eab95714

[[email protected] ~]# openstack loadbalancer healthmonitor create --name healthmonitor1 --type PING --delay 5 --timeout 10 --max-retries 3 8196f752-a367-4fb4-9194-37c7eab95714

# Pool 8196f752-a367-4fb4-9194-37c7eab95714 has one member

[[email protected] ~]# openstack loadbalancer member list 8196f752-a367-4fb4-9194-37c7eab95714

+--------------------------------------+------+----------------------------------+---------------------+--------------+---------------+------------------+--------+

| id | name | project_id | provisioning_status | address | protocol_port | operating_status | weight |

+--------------------------------------+------+----------------------------------+---------------------+--------------+---------------+------------------+--------+

| b6e464fd-dd1e-4775-90f2-4231444a0bbe | | 9e4fe13a6d7645269dc69579c027fde4 | ACTIVE | 192.168.1.14 | 80 | ONLINE | 1 |

+--------------------------------------+------+----------------------------------+---------------------+--------------+---------------+------------------+--------+

# The l7policy of listener 1385d3c4-615e-4a92-aea1-c4fa51a75557 redirects to the default pool

[[email protected] ~]# openstack loadbalancer l7policy create --name l7p1 --action REDIRECT_TO_POOL --redirect-pool 8196f752-a367-4fb4-9194-37c7eab95714 1385d3c4-615e-4a92-aea1-c4fa51a75557

# The match criterion is a hostname (HTTP Host header) that starts with "server"

[[email protected] ~]# openstack loadbalancer l7rule create --type HOST_NAME --compare-type STARTS_WITH --value "server" 87593985-e02f-4880-b80f-22a4095c05a7

haproxy configuration file:

# Configuration for loadbalancer 01197be7-98d5-440d-a846-cd70f52dc503

global

daemon

user nobody

log /dev/log local0

log /dev/log local1 notice

stats socket /var/lib/octavia/1385d3c4-615e-4a92-aea1-c4fa51a75557.sock mode 0666 level user

maxconn 1000000

external-check

defaults

log global

retries 3

option redispatch

peers 1385d3c4615e4a92aea1c4fa51a75557_peers

peer l_Ustq0qE-h-_Q1dlXLXBAiWR8U 172.16.1.7:1025

peer O08zAgUhIv9TEXhyYZf2iHdxOkA 172.16.1.3:1025

frontend 1385d3c4-615e-4a92-aea1-c4fa51a75557

option httplog

maxconn 1000000

# The frontend listens on http://172.16.1.10:8080

bind 172.16.1.10:8080

mode http

# ACL forwarding rule

acl 8d9b8b1e-83d7-44ca-a5b4-0103d5f90cb9 req.hdr(host) -i -m beg server

# If ACL 8d9b8b1e-83d7-44ca-a5b4-0103d5f90cb9 matches, forward to backend 8196f752-a367-4fb4-9194-37c7eab95714

use_backend 8196f752-a367-4fb4-9194-37c7eab95714 if 8d9b8b1e-83d7-44ca-a5b4-0103d5f90cb9

# If no ACL rule matches, forward to the default backend 8196f752-a367-4fb4-9194-37c7eab95714

default_backend 8196f752-a367-4fb4-9194-37c7eab95714

timeout client 50000

backend 8196f752-a367-4fb4-9194-37c7eab95714

# The backend protocol mode is http

mode http

# The load-balancing algorithm is round robin (RR)

balance roundrobin

timeout check 10s

option external-check

# Use the script ping-wrapper.sh to health-check the servers

external-check command /var/lib/octavia/ping-wrapper.sh

fullconn 1000000

option allbackups

timeout connect 5000

timeout server 50000

# Backend real server: the service port is 80 and the health-check rule is inter 5s fall 3 rise 3

server b6e464fd-dd1e-4775-90f2-4231444a0bbe 192.168.1.14:80 weight 1 check inter 5s fall 3 rise 3
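The acl and use_backend lines in the configuration above are a direct rendering of the l7rule and l7policy. A rough sketch of that translation follows. render_l7rule and both lookup tables are hypothetical. Only the HOST_NAME and STARTS_WITH entries are confirmed by the configuration above; the remaining compare types use standard haproxy match methods and are assumptions about how they would be mapped.

```python
# What to inspect in the request, keyed by l7rule type.
RULE_TYPE_TO_FETCH = {
    "HOST_NAME": "req.hdr(host) -i",  # confirmed by the config above
}

# How to compare, keyed by l7rule compare type.
COMPARE_TYPE_TO_MATCH = {
    "STARTS_WITH": "-m beg",  # confirmed by the config above
    "ENDS_WITH": "-m end",    # assumption
    "CONTAINS": "-m sub",     # assumption
    "EQUAL_TO": "-m str",     # assumption
    "REGEX": "-m reg",        # assumption
}


def render_l7rule(rule_id, rule_type, compare_type, value, pool_id):
    """Return the acl and use_backend lines for one rule (illustrative)."""
    acl = "acl %s %s %s %s" % (rule_id,
                               RULE_TYPE_TO_FETCH[rule_type],
                               COMPARE_TYPE_TO_MATCH[compare_type],
                               value)
    use_backend = "use_backend %s if %s" % (pool_id, rule_id)
    return [acl, use_backend]


if __name__ == "__main__":
    for line in render_l7rule("8d9b8b1e-83d7-44ca-a5b4-0103d5f90cb9",
                              "HOST_NAME", "STARTS_WITH", "server",
                              "8196f752-a367-4fb4-9194-37c7eab95714"):
        print(line)
```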

Health-check script:

#!/bin/bash

if [[ $HAPROXY_SERVER_ADDR =~ ":" ]]; then

/bin/ping6 -q -n -w 1 -c 1 $HAPROXY_SERVER_ADDR > /dev/null 2>&1

else

/bin/ping -q -n -w 1 -c 1 $HAPROXY_SERVER_ADDR > /dev/null 2>&1

fi

The script matches the monitor type we configured: PING.
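For readers more at home in Python than in shell, the same decision can be written as below. This is a paraphrase of the wrapper's logic, not code shipped with Octavia; build_ping_command and server_is_alive are hypothetical names, and the address is passed as an argument instead of being read from the HAPROXY_SERVER_ADDR environment variable.

```python
import subprocess


def build_ping_command(server_addr):
    """Choose ping6 for IPv6 addresses (they contain ':'), ping otherwise."""
    binary = "/bin/ping6" if ":" in server_addr else "/bin/ping"
    # -q quiet, -n numeric output, -w 1 one-second deadline, -c 1 one probe.
    return [binary, "-q", "-n", "-w", "1", "-c", "1", server_addr]


def server_is_alive(server_addr):
    """Run the ping and report success; requires the ping binary to exist."""
    result = subprocess.run(build_ping_command(server_addr),
                            stdout=subprocess.DEVNULL,
                            stderr=subprocess.DEVNULL)
    return result.returncode == 0
```

As in the shell version, only the exit status matters: a zero exit marks the check as passed.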

- EXAMPLE 2

[[email protected] ~]# openstack loadbalancer healthmonitor create --name healthmonitor1 --type PING --delay 5 --timeout 10 --max-retries 3 822f78c3-ea2c-4770-bef0-e97f1ac2eba8

[[email protected] ~]# openstack loadbalancer l7policy create --name l7p1 --action REDIRECT_TO_POOL --redirect-pool 822f78c3-ea2c-4770-bef0-e97f1ac2eba8 1385d3c4-615e-4a92-aea1-c4fa51a75557

[[email protected] ~]# openstack loadbalancer l7rule create --type HOST_NAME --compare-type STARTS_WITH --value "server" fb90a3b5-c97c-4d99-973e-118840a7a236

The haproxy configuration file changes to:

# Configuration for loadbalancer 01197be7-98d5-440d-a846-cd70f52dc503

global

daemon

user nobody

log /dev/log local0

log /dev/log local1 notice

stats socket /var/lib/octavia/1385d3c4-615e-4a92-aea1-c4fa51a75557.sock mode 0666 level user

maxconn 1000000

external-check

defaults

log global

retries 3

option redispatch

peers 1385d3c4615e4a92aea1c4fa51a75557_peers

peer l_Ustq0qE-h-_Q1dlXLXBAiWR8U 172.16.1.7:1025

peer O08zAgUhIv9TEXhyYZf2iHdxOkA 172.16.1.3:1025

frontend 1385d3c4-615e-4a92-aea1-c4fa51a75557

option httplog

maxconn 1000000

bind 172.16.1.10:8080

mode http

acl 8d9b8b1e-83d7-44ca-a5b4-0103d5f90cb9 req.hdr(host) -i -m beg server

use_backend 8196f752-a367-4fb4-9194-37c7eab95714 if 8d9b8b1e-83d7-44ca-a5b4-0103d5f90cb9

acl c76f36bc-92c0-4f48-8d57-a13e3b1f09e1 req.hdr(host) -i -m beg server

use_backend 822f78c3-ea2c-4770-bef0-e97f1ac2eba8 if c76f36bc-92c0-4f48-8d57-a13e3b1f09e1

default_backend 8196f752-a367-4fb4-9194-37c7eab95714

timeout client 50000

backend 8196f752-a367-4fb4-9194-37c7eab95714

mode http

balance roundrobin

timeout check 10s

option external-check

external-check command /var/lib/octavia/ping-wrapper.sh

fullconn 1000000

option allbackups

timeout connect 5000

timeout server 50000

server b6e464fd-dd1e-4775-90f2-4231444a0bbe 192.168.1.14:80 weight 1 check inter 5s fall 3 rise 3

backend 822f78c3-ea2c-4770-bef0-e97f1ac2eba8

mode http

balance roundrobin

timeout check 10s

option external-check

external-check command /var/lib/octavia/ping-wrapper.sh

fullconn 1000000

option allbackups

timeout connect 5000

timeout server 50000

server 7da6f176-36c6-479a-9d86-c892ecca6ae5 192.168.1.6:80 weight 1 check inter 5s fall 3 rise 3

As shown, after a shared pool is added to the listener, an additional backend section is created for the shared pool 822f78c3-ea2c-4770-bef0-e97f1ac2eba8.
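One detail of this configuration is worth checking by hand. HAProxy evaluates use_backend rules in the order they appear and uses the first one whose condition matches. Both ACLs above test the same prefix, so a request that matches one also matches the other. The sketch below models that evaluation order; select_backend and the rule tuples are illustrative and are not HAProxy or Octavia code.

```python
DEFAULT_POOL = "8196f752-a367-4fb4-9194-37c7eab95714"
SHARED_POOL = "822f78c3-ea2c-4770-bef0-e97f1ac2eba8"

# (host prefix, backend) pairs in the order the use_backend lines appear.
RULES = [
    ("server", DEFAULT_POOL),
    ("server", SHARED_POOL),
]


def select_backend(host, rules, default_backend):
    """Return the backend of the first matching rule, else the default."""
    for prefix, backend in rules:
        # "-i -m beg" means a case-insensitive prefix match.
        if host.lower().startswith(prefix.lower()):
            return backend
    return default_backend


if __name__ == "__main__":
    print(select_backend("server1.example.com", RULES, DEFAULT_POOL))
    print(select_backend("www.example.com", RULES, DEFAULT_POOL))
```

Under this model every request whose Host header starts with "server" goes to the default pool, so giving the second rule a different value would be needed for the shared pool to receive traffic.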