FFmpeg Structure Analysis: AVFrame

Note: this article is part of a series analyzing FFmpeg's structures.

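FFmpeg has a handful of key structures that cover protocol handling, demuxing, and decoding. They were analyzed in an earlier article and are not repeated here. Of these, AVFrame is one of the structures that carries the most bitstream parameters. This article looks closely at the meaning and purpose of its main fields.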

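Start with the definition of the structure (located in avcodec.h in the FFmpeg version discussed here; later releases moved AVFrame to libavutil/frame.h):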

    /*
     * Lei Xiaohua (雷霄驊)
     * [email protected]
     * Communication University of China / Digital TV Technology
     */
    /**
     * Audio Video Frame.
     * New fields can be added to the end of AVFRAME with minor version
     * bumps. Similarly fields that are marked as to be only accessed by
     * av_opt_ptr() can be reordered. This allows 2 forks to add fields
     * without breaking compatibility with each other.
     * Removal, reordering and changes in the remaining cases require
     * a major version bump.
     * sizeof(AVFrame) must not be used outside libavcodec.
     */

The main fields are described below (from the decoding point of view):

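uint8_t *data[AV_NUM_DATA_POINTERS]: the decoded raw data (YUV or RGB for video, PCM for audio).

int linesize[AV_NUM_DATA_POINTERS]: the size, in bytes, of one "row" of the corresponding data[] plane. Note that it need not equal the image width; it is often larger because rows are padded for alignment.

int width, height: width and height of the video frame (1920x1080, 1280x720, ...).

int nb_samples: the number of audio samples (per channel) contained in this AVFrame.

int format: the format of the decoded raw data (YUV420, YUV422, RGB24, ...). For video it holds an enum AVPixelFormat value, for audio an enum AVSampleFormat value.

int key_frame: whether the frame is a key frame.

enum AVPictureType pict_type: the frame type (I, B, P, ...).

AVRational sample_aspect_ratio: the aspect ratio of a single sample (pixel), stored as a fraction. Display aspect ratios such as 16:9 or 4:3 result from combining it with width and height.

int64_t pts: presentation timestamp.

int coded_picture_number: picture number in coded (bitstream) order.

int display_picture_number: picture number in display order.

int8_t *qscale_table: QP table.

uint8_t *mbskip_table: skipped-macroblock table.

int16_t (*motion_val[2])[2]: motion vector table.

uint32_t *mb_type: macroblock type table.

short *dct_coeff: DCT coefficients; I have not tried extracting these.

int8_t *ref_index[2]: motion-estimation reference frame list (multiple reference frames seem to appear only in newer standards such as H.264).

int interlaced_frame: whether the picture is interlaced.

uint8_t motion_subsample_log2: log2 of the size (width or height, in pixels) of the block that one motion vector represents.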
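Because sample_aspect_ratio describes the shape of a single pixel, a display ratio such as 16:9 has to be derived from it together with width and height. The following is a minimal sketch of that arithmetic, not FFmpeg code: `Ratio`, `gcd_ll` and `display_aspect_ratio` are hypothetical names, `Ratio` only mimics the num/den layout of AVRational, and treating a numerator of 0 as "unknown, assume square pixels" is an assumption of this example.

```c
#include <assert.h>

/* Hypothetical stand-in for AVRational (numerator / denominator). */
typedef struct Ratio {
    int num;
    int den;
} Ratio;

/* Greatest common divisor, used to reduce the resulting fraction. */
static long long gcd_ll(long long a, long long b)
{
    while (b != 0) {
        long long t = a % b;
        a = b;
        b = t;
    }
    return a;
}

/* Display aspect ratio = (width * sar.num) : (height * sar.den), reduced.
 * Assumption: a SAR with num == 0 means "unknown" and is treated as 1:1. */
Ratio display_aspect_ratio(int width, int height, Ratio sar)
{
    assert(width > 0 && height > 0 && sar.num >= 0 && sar.den > 0);
    if (sar.num == 0) {
        sar.num = 1;
        sar.den = 1;
    }
    long long n = (long long)width * sar.num;
    long long d = (long long)height * sar.den;
    long long g = gcd_ll(n, d);
    Ratio dar = { (int)(n / g), (int)(d / g) };
    return dar;
}
```

For instance, a 720x576 picture with a sample aspect ratio of 16:15 is displayed at 4:3, while a 1920x1080 picture with square pixels is displayed at 16:9.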

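The remaining fields are not listed one by one; the source code documents them in detail. The rest of this article focuses on a few fields that take some effort to understand: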

1.data[]

Packed formats (for example RGB24) are stored entirely in data[0].

Planar formats (for example YUV420P) are split across data[0], data[1], data[2], ... (for YUV420P, data[0] holds Y, data[1] holds U, and data[2] holds V).

2.pict_type

It takes one of the following values:

    enum AVPictureType {
        AV_PICTURE_TYPE_NONE = 0, ///< Undefined
        AV_PICTURE_TYPE_I,        ///< Intra
        AV_PICTURE_TYPE_P,        ///< Predicted
        AV_PICTURE_TYPE_B,        ///< Bi-dir predicted
        AV_PICTURE_TYPE_S,        ///< S(GMC)-VOP MPEG4
        AV_PICTURE_TYPE_SI,       ///< Switching Intra
        AV_PICTURE_TYPE_SP,       ///< Switching Predicted
        AV_PICTURE_TYPE_BI,       ///< BI type
    };

3.sample_aspect_ratio

The aspect ratio is a fraction. FFmpeg represents fractions with AVRational:

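    /**
     * rational number numerator/denominator
     */
    typedef struct AVRational{
        int num; ///< numerator
        int den; ///< denominator
    } AVRational;

4.qscale_table

The QP table points to a block of memory that holds the QP value of every macroblock. Macroblocks are numbered from left to right, row by row, and each macroblock has exactly one QP.

qscale_table[0] is the QP of the macroblock in row 1, column 1; qscale_table[1] is the QP of the macroblock in row 1, column 2; qscale_table[2] is the QP of the macroblock in row 1, column 3; and so on.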

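The number of macroblocks is calculated as follows.

Note: a macroblock is 16x16 pixels.

Number of table entries per macroblock row (the macroblocks in one row, rounded up, plus one padding entry):

    int mb_stride = ((pCodecCtx->width+15)>>4)+1;

Total number of table entries:

    int mb_sum = ((pCodecCtx->height+15)>>4)*(((pCodecCtx->width+15)>>4)+1);

5.motion_subsample_log2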

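The size of the picture area that one motion vector represents (expressed as its width or height, in pixels). Note that the stored value is the log2 of that size.

The comment in the source code gives the following mapping:

4->16x16, 3->8x8, 2-> 4x4, 1-> 2x2

That is, when one motion vector represents a 16x16 area the value is 4; when it represents an 8x8 area the value is 3; and so on.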
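The mapping is simply a power of two, which a short sketch makes explicit. `mv_block_size` and `mvs_per_mb_edge` are hypothetical helper names introduced only for this illustration.

```c
#include <assert.h>

/* Edge length, in pixels, of the square block covered by one motion vector. */
int mv_block_size(int motion_subsample_log2)
{
    assert(motion_subsample_log2 >= 0 && motion_subsample_log2 <= 4);
    return 1 << motion_subsample_log2;
}

/* Motion vectors per 16x16 macroblock, counted along one edge. */
int mvs_per_mb_edge(int motion_subsample_log2)
{
    return 16 / mv_block_size(motion_subsample_log2);
}
```

A value of 3 therefore means 8x8 blocks, that is, two motion vectors along each edge of a macroblock and four per macroblock.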

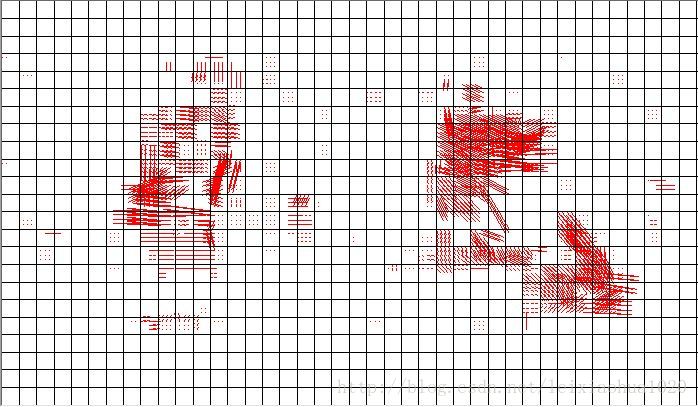

6.motion_val

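The motion vector table stores all the motion vectors of a video frame.

It is stored in a rather unusual way:

    int16_t (*motion_val[2])[2];

The source comment gives this snippet:

    int mv_sample_log2= 4 - motion_subsample_log2;
    int mb_width= (width+15)>>4;
    int mv_stride= (mb_width << mv_sample_log2) + 1;
    motion_val[direction][x + y*mv_stride][0->mv_x, 1->mv_y];

From this, the structure of the data is roughly as follows: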

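1.The data is first divided into two lists, L0 and L1.

2.Each list (L0 or L1) stores a series of MVs (each MV corresponds to one block of the picture, whose size is determined by motion_subsample_log2).

3.Each MV consists of a horizontal and a vertical component (x, y).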

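Note that in FFmpeg, MVs and MBs are not tied together in the way they are stored. The first MV belongs to the block in the top-left corner of the picture (the block size depends on motion_subsample_log2), the second MV belongs to the block in row 1, column 2, and so on. The motion vectors of one macroblock (16x16) are therefore likely to be laid out as shown below (line is the number of motion vectors in one row):

    //For example, motion vectors of an 8x8 partition and their macroblock:
    //-------------------------
    //|          |            |
    //|mv[x]     |mv[x+1]     |
    //-------------------------
    //|          |            |
    //|mv[x+line]|mv[x+line+1]|
    //-------------------------

7.mb_type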

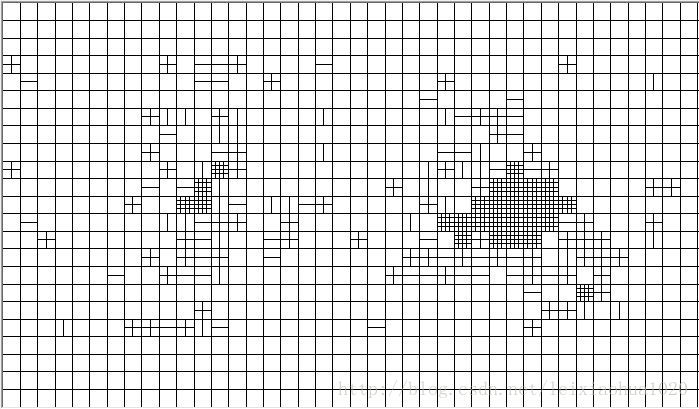

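The macroblock type table stores the type of every macroblock in a video frame. It is stored in much the same way as the QP table, except that its entries are uint32_t while the QP table's entries are int8_t. Each macroblock has one macroblock type value.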

The macroblock types are defined as follows:

    //The following defines may change, don't expect compatibility if you use them.
    #define MB_TYPE_INTRA4x4   0x0001
    #define MB_TYPE_INTRA16x16 0x0002 //FIXME H.264-specific
    #define MB_TYPE_INTRA_PCM  0x0004 //FIXME H.264-specific
    #define MB_TYPE_16x16      0x0008
    #define MB_TYPE_16x8       0x0010
    #define MB_TYPE_8x16       0x0020
    #define MB_TYPE_8x8        0x0040
    #define MB_TYPE_INTERLACED 0x0080
    #define MB_TYPE_DIRECT2    0x0100 //FIXME
    #define MB_TYPE_ACPRED     0x0200
    #define MB_TYPE_GMC        0x0400
    #define MB_TYPE_SKIP       0x0800
    #define MB_TYPE_P0L0       0x1000
    #define MB_TYPE_P1L0       0x2000
    #define MB_TYPE_P0L1       0x4000
    #define MB_TYPE_P1L1       0x8000
    #define MB_TYPE_L0         (MB_TYPE_P0L0 | MB_TYPE_P1L0)
    #define MB_TYPE_L1         (MB_TYPE_P0L1 | MB_TYPE_P1L1)
    #define MB_TYPE_L0L1       (MB_TYPE_L0 | MB_TYPE_L1)
    #define MB_TYPE_QUANT      0x00010000
    #define MB_TYPE_CBP        0x00020000
    //Note bits 24-31 are reserved for codec specific use (h264 ref0, mpeg1 0mv, ...)

8.ref_index

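The motion-estimation reference frame list stores the reference frame indices of all macroblocks in a video frame. This list is of little use in older compression standards; only standards such as H.264 have the concept of multiple reference frames. I have not fully worked out this field yet. All I know is that each macroblock holds 4 of these values, and that each value is the index of a reference frame. I will look into it in more detail when I get the chance.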

Here are some results from a bitstream analysis program I wrote. It renders the tables described above graphically (drawn with MFC drawing functions).

Video frame:

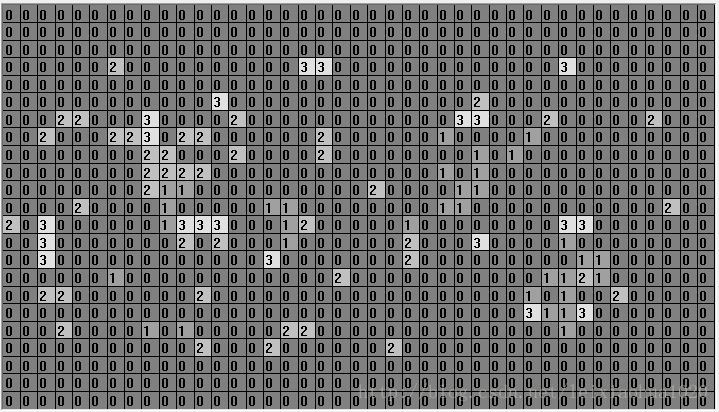

Extracted QP values:

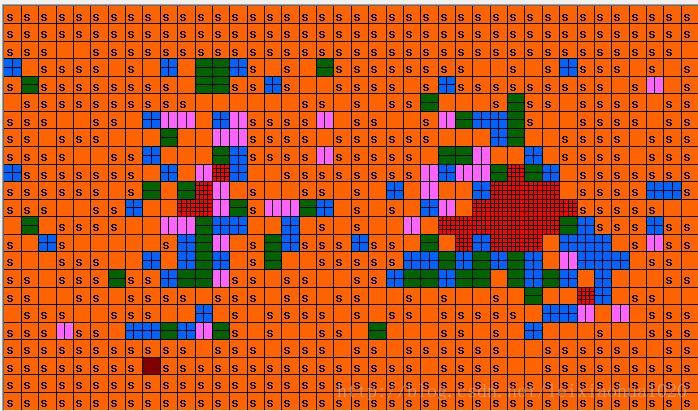

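Beautified version (with color added):

Extracted macroblock types:

Beautified version (with color added, which is clearer; s denotes a skip macroblock):

Extracted motion vectors (List0 here):

Extracted reference frame indices: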