Object Tracking Techniques

阿新 • Published: 2019-01-01

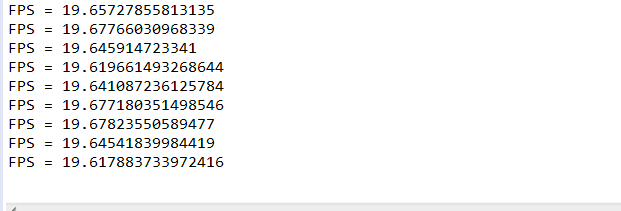

BoofCV Study: Tracking Moving Objects

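import boofcv.io.UtilIO;
import boofcv.struct.image.GrayF32;
import boofcv.struct.image.ImageType;

public class RemovalMoving {

    public static void main(String[] args) {

        // Input video; this absolute path is specific to the author's machine
        String fileName = UtilIO.pathExample("D:\\JavaProject\\Boofcv\\example\\tracking\\chipmunk.mjpeg");

        // Process the video as single-band 32-bit float grayscale
        ImageType imageType = ImageType.single(GrayF32.class);

        // Alternative: 3-band interleaved float color (needs boofcv.struct.image.InterleavedF32)
        //ImageType imageType = ImageType.il(3, InterleavedF32.class);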