HAWQ vs. Hive Query Performance Comparison Test

阿新 • Published: 2019-01-04

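I. Purpose of the experiment

This experiment simulates a typical application scenario with a realistic data volume, then measures and compares the query performance of HAWQ internal tables, HAWQ external tables, and Hive.

II. Hardware environment

1. A Hadoop cluster of four VMware virtual machines.

2. Each machine is configured as follows:

(1) 15K RPM SAS disk, 100 GB

(2) Intel(R) Xeon(R) E5-2620 v2 @ 2.10GHz, two dual-core CPUs

(3) 8 GB memory, 8 GB swap

(4) 10000 Mb/s virtual network adapter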

III. Software environment

1. Linux: CentOS release 6.4, kernel 2.6.32-358.el6.x86_64

2. Ambari: 2.4.1

3. Hadoop: HDP 2.5.0

4. Hive (Hive on Tez): 2.1.0

5. HAWQ: 2.1.1.0

6. HAWQ PXF: 3.1.1

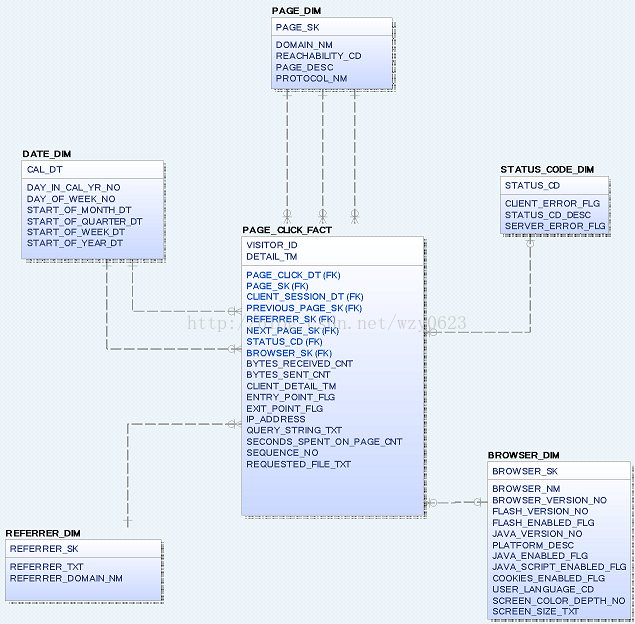

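IV. Data model

1. Table structure

The experiment simulates an application that records page click data. The data model contains five dimension tables (date, page, browser, referrer, and status) and one page click fact table. Figure 1 shows the table structures and their relationships.

Figure 1

2. Row counts

The row counts of the tables are listed in Table 1.

| Table name | Rows |
| --- | --- |
| page_click_fact | 100 million |
| page_dim | 200,000 |
| referrer_dim | 1 million |
| browser_dim | 20,000 |
| status_code_dim | 70 |
| date_dim | 366 |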

V. Create tables and generate data

1. Create the Hive database and tables

Note: the Hive tables use the ORC file storage format.

create database test;

use test;

create table browser_dim(

browser_sk bigint,

browser_nm varchar(100),

browser_version_no varchar(100),

flash_version_no varchar(100),

flash_enabled_flg int,

java_version_no varchar(100),

platform_desc string,

java_enabled_flg int,

java_script_enabled_flg int,

cookies_enabled_flg int,

user_language_cd varchar(100),

screen_color_depth_no varchar(100),

screen_size_txt string

) row format delimited fields terminated by ',' stored as orc;

create table date_dim(

cal_dt date,

day_in_cal_yr_no int,

day_of_week_no int,

start_of_month_dt date,

start_of_quarter_dt date,

start_of_week_dt date,

start_of_year_dt date

) row format delimited fields terminated by ',' stored as orc;

create table page_dim(

page_sk bigint,

domain_nm varchar(200),

reachability_cd string,

page_desc string,

protocol_nm varchar(20)

) row format delimited fields terminated by ',' stored as orc;

create table referrer_dim(

referrer_sk bigint,

referrer_txt string,

referrer_domain_nm varchar(200)

) row format delimited fields terminated by ',' stored as orc;

create table status_code_dim(

status_cd varchar(100),

client_error_flg int,

status_cd_desc string,

server_error_flg int

) row format delimited fields terminated by ',' stored as orc;

create table page_click_fact(

visitor_id varchar(100),

detail_tm timestamp,

page_click_dt date,

page_sk bigint,

client_session_dt date,

previous_page_sk bigint,

referrer_sk bigint,

next_page_sk bigint,

status_cd varchar(100),

browser_sk bigint,

bytes_received_cnt bigint,

bytes_sent_cnt bigint,

client_detail_tm timestamp,

entry_point_flg int,

exit_point_flg int,

ip_address varchar(20),

query_string_txt string,

seconds_spent_on_page_cnt int,

sequence_no int,

requested_file_txt string

) row format delimited fields terminated by ',' stored as orc;

2. Generate the Hive table data with a Java program

After ORC compression, the HDFS file sizes of the tables are as follows:

2.2 M /apps/hive/warehouse/test.db/browser_dim

641 /apps/hive/warehouse/test.db/date_dim

4.1 G /apps/hive/warehouse/test.db/page_click_fact

16.1 M /apps/hive/warehouse/test.db/page_dim

22.0 M /apps/hive/warehouse/test.db/referrer_dim

1.1 K /apps/hive/warehouse/test.db/status_code_dim
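The generator program itself is not shown in this article. As a rough illustration of the kind of rows it would have to produce, here is a minimal Python sketch for date_dim, emitting comma-delimited rows in the column order of the DDL above. The start date of 2017-01-01, the Monday week start, and the 1 = Monday numbering for day_of_week_no are all assumptions, as are the helper names `date_dim_rows` and `to_csv`.

```python
import csv
import io
from datetime import date, timedelta


def date_dim_rows(start: date, days: int):
    """Yield one date_dim row per calendar day, in the column order of the DDL."""
    for offset in range(days):
        d = start + timedelta(days=offset)
        quarter_month = 3 * ((d.month - 1) // 3) + 1
        yield (
            d.isoformat(),                                  # cal_dt
            d.timetuple().tm_yday,                          # day_in_cal_yr_no
            d.isoweekday(),                                 # day_of_week_no (assumed 1 = Monday)
            d.replace(day=1).isoformat(),                   # start_of_month_dt
            date(d.year, quarter_month, 1).isoformat(),     # start_of_quarter_dt
            (d - timedelta(days=d.weekday())).isoformat(),  # start_of_week_dt (assumed Monday)
            date(d.year, 1, 1).isoformat(),                 # start_of_year_dt
        )


def to_csv(rows) -> str:
    """Serialize rows as comma-delimited text, one row per line."""
    buf = io.StringIO()
    csv.writer(buf, lineterminator="\n").writerows(rows)
    return buf.getvalue()


# 366 rows matches the date_dim row count in Table 1; the start date is assumed.
rows = list(date_dim_rows(date(2017, 1, 1), 366))
sample = to_csv(rows[:2])
print(sample)
```

The other dimension tables and the fact table would need their own generators with randomized values; those are left out because the article gives no detail about the value distributions.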

3. Analyze the Hive tables

analyze table date_dim compute statistics;

analyze table browser_dim compute statistics;

analyze table page_dim compute statistics;

analyze table referrer_dim compute statistics;

analyze table status_code_dim compute statistics;

analyze table page_click_fact compute statistics;

4. Create HAWQ external tables

create schema ext;

set search_path=ext;

create external table date_dim(

cal_dt date,

day_in_cal_yr_no int4,

day_of_week_no int4,

start_of_month_dt date,

start_of_quarter_dt date,

start_of_week_dt date,

start_of_year_dt date

)

location ('pxf://hdp1:51200/test.date_dim?profile=hiveorc')

format 'custom' (formatter='pxfwritable_import');

create external table browser_dim(

browser_sk int8,

browser_nm varchar(100),

browser_version_no varchar(100),

flash_version_no varchar(100),

flash_enabled_flg int,

java_version_no varchar(100),

platform_desc text,

java_enabled_flg int,

java_script_enabled_flg int,

cookies_enabled_flg int,

user_language_cd varchar(100),

screen_color_depth_no varchar(100),

screen_size_txt text

)

location ('pxf://hdp1:51200/test.browser_dim?profile=hiveorc')

format 'custom' (formatter='pxfwritable_import');

create external table page_dim(

page_sk int8,

domain_nm varchar(200),

reachability_cd text,

page_desc text,

protocol_nm varchar(20)

)

location ('pxf://hdp1:51200/test.page_dim?profile=hiveorc')

format 'custom' (formatter='pxfwritable_import');

create external table referrer_dim(

referrer_sk int8,

referrer_txt text,

referrer_domain_nm varchar(200)

)

location ('pxf://hdp1:51200/test.referrer_dim?profile=hiveorc')

format 'custom' (formatter='pxfwritable_import');

create external table status_code_dim(

status_cd varchar(100),

client_error_flg int4,

status_cd_desc text,

server_error_flg int4

)

location ('pxf://hdp1:51200/test.status_code_dim?profile=hiveorc')

format 'custom' (formatter='pxfwritable_import');

create external table page_click_fact(

visitor_id varchar(100),

detail_tm timestamp,

page_click_dt date,

page_sk int8,

client_session_dt date,

previous_page_sk int8,

referrer_sk int8,

next_page_sk int8,

status_cd varchar(100),

browser_sk int8,

bytes_received_cnt int8,

bytes_sent_cnt int8,

client_detail_tm timestamp,

entry_point_flg int4,

exit_point_flg int4,

ip_address varchar(20),

query_string_txt text,

seconds_spent_on_page_cnt int4,

sequence_no int4,

requested_file_txt text

)

location ('pxf://hdp1:51200/test.page_click_fact?profile=hiveorc')

format 'custom' (formatter='pxfwritable_import');

5. Create HAWQ internal tables
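The internal tables in this step repeat the Hive column lists, with Hive type names rewritten to their HAWQ counterparts (bigint to int8, int to int4, string to text) and a Snappy append-only storage clause added. The DDL also writes int in a few places, which is the same type as int4. The Python sketch below generates one such statement from a Hive-style column list. The mapping table and the helper names `hawq_type` and `internal_table_ddl` are illustrative and not part of HAWQ.

```python
# Hive -> HAWQ type names, as they appear in this article's DDL.
HIVE_TO_HAWQ = {"bigint": "int8", "int": "int4", "string": "text"}

# Storage clause used for every internal table in this article.
STORAGE = "with (compresstype=snappy,appendonly=true)"


def hawq_type(hive_type: str) -> str:
    """Map a Hive type name to the HAWQ name; unmapped types pass through."""
    return HIVE_TO_HAWQ.get(hive_type.lower(), hive_type)


def internal_table_ddl(table: str, hive_columns) -> str:
    """Build a create table statement shaped like the ones in this section."""
    cols = ",\n".join(f"    {name} {hawq_type(t)}" for name, t in hive_columns)
    return f"create table {table}(\n{cols}\n) {STORAGE};"


# Column list copied from the Hive definition of referrer_dim.
referrer_dim = [
    ("referrer_sk", "bigint"),
    ("referrer_txt", "string"),
    ("referrer_domain_nm", "varchar(200)"),
]
ddl = internal_table_ddl("referrer_dim", referrer_dim)
print(ddl)
```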

set search_path=public;

create table date_dim(

cal_dt date,

day_in_cal_yr_no int4,

day_of_week_no int4,

start_of_month_dt date,

start_of_quarter_dt date,

start_of_week_dt date,

start_of_year_dt date) with (compresstype=snappy,appendonly=true);

create table browser_dim(

browser_sk int8,

browser_nm varchar(100),

browser_version_no varchar(100),

flash_version_no varchar(100),

flash_enabled_flg int,

java_version_no varchar(100),

platform_desc text,

java_enabled_flg int,

java_script_enabled_flg int,

cookies_enabled_flg int,

user_language_cd varchar(100),

screen_color_depth_no varchar(100),

screen_size_txt text

) with (compresstype=snappy,appendonly=true);

create table page_dim(

page_sk int8,

domain_nm varchar(200),

reachability_cd text,

page_desc text,

protocol_nm varchar(20)

) with (compresstype=snappy,appendonly=true);

create table referrer_dim(

referrer_sk int8,

referrer_txt text,

referrer_domain_nm varchar(200)

) with (compresstype=snappy,appendonly=true);

create table status_code_dim(

status_cd varchar(100),

client_error_flg int4,

status_cd_desc text,

server_error_flg int4

) with (compresstype=snappy,appendonly=true);

create table page_click_fact(

visitor_id varchar(100),

detail_tm timestamp,

page_click_dt date,

page_sk int8,

client_session_dt date,

previous_page_sk int8,

referrer_sk int8,

next_page_sk int8,

status_cd varchar(100),

browser_sk int8,

bytes_received_cnt int8,

bytes_sent_cnt int8,

client_detail_tm timestamp,

entry_point_flg int4,

exit_point_flg int4,

ip_address varchar(20),

query_string_txt text,

seconds_spent_on_page_cnt int4,

sequence_no int4,

requested_file_txt text

) with (compresstype=snappy,appendonly=true);

6. Load data into the HAWQ internal tables

insert into date_dim select * from hcatalog.test.date_dim;

insert into browser_dim select * from hcatalog.test.browser_dim;

insert into page_dim select * from hcatalog.test.page_dim;

insert into referrer_dim select * from hcatalog.test.referrer_dim;

insert into status_code_dim select * from hcatalog.test.status_code_dim;

insert into page_click_fact select * from hcatalog.test.page_click_fact;

The corresponding HAWQ data file sizes are as follows:

6.2 K /hawq_data/16385/177422/177677

3.3 M /hawq_data/16385/177422/177682

23.9 M /hawq_data/16385/177422/177687

39.3 M /hawq_data/16385/177422/177707

1.8 K /hawq_data/16385/177422/177726

7.9 G /hawq_data/16385/177422/177731

7. Analyze the HAWQ internal tables

analyze date_dim;

analyze browser_dim;

analyze page_dim;

analyze referrer_dim;

analyze status_code_dim;

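analyze page_click_fact;

VI. Run the queries

Run the following five queries on the Hive tables, the HAWQ external tables, and the HAWQ internal tables, and record the execution times.

1. Most visited directories on support.sas.com in a given week

-- Hive query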

select top_directory, count(*) as unique_visits

from (select distinct visitor_id, substr(requested_file_txt,1,10) top_directory

from page_click_fact, page_dim, browser_dim

where domain_nm = 'support.sas.com'

and flash_enabled_flg=1

and weekofyear(detail_tm) = 19

and year(detail_tm) = 2017

) directory_summary

group by top_directory

order by unique_visits;
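The week filter in this query selects a single calendar week. PostgreSQL documents extract(week ...) as the ISO 8601 week number, and Hive's weekofyear is generally understood to follow the same numbering; assuming both hold for the versions tested here, week 19 of 2017 covers the dates computed by the Python sketch below. The helper name `iso_week_range` is illustrative.

```python
from datetime import date, timedelta


def iso_week_range(year: int, week: int):
    """Return (Monday, Sunday) of the given ISO 8601 week."""
    # January 4th always falls in ISO week 1.
    jan4 = date(year, 1, 4)
    week1_monday = jan4 - timedelta(days=jan4.weekday())
    monday = week1_monday + timedelta(weeks=week - 1)
    return monday, monday + timedelta(days=6)


start, end = iso_week_range(2017, 19)
print(start, end)  # 2017-05-08 2017-05-14
```

Under ISO numbering, 2017-01-01 belongs to week 52 of 2016, so combining a week filter with a calendar-year filter needs care for weeks near the year boundary. Week 19 is not affected.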

-- HAWQ query: equivalent to the Hive query, with extract replacing Hive's weekofyear and year functions.

select top_directory, count(*) as unique_visits

from (select distinct visitor_id, substr(requested_file_txt,1,10) top_directory

from page_click_fact, page_dim, browser_dim

where domain_nm = 'support.sas.com'

and flash_enabled_flg=1

and extract(week from detail_tm) = 19

and extract(year from detail_tm) = 2017

) directory_summary

group by top_directory

order by unique_visits;

2. Pages visited from www.google.com, by month

-- Hive query

select domain_nm, requested_file_txt, count(*) as unique_visitors, month

from (select distinct domain_nm, requested_file_txt, visitor_id, month(detail_tm) as month

from page_click_fact, page_dim, referrer_dim

where domain_nm = 'support.sas.com'

and referrer_domain_nm = 'www.google.com'

) visits_pp_ph_summary

group by domain_nm, requested_file_txt, month

order by domain_nm, requested_file_txt, unique_visitors desc, month asc;

-- HAWQ query: equivalent to the Hive query, with extract replacing Hive's month function.

select domain_nm, requested_file_txt, count(*) as unique_visitors, month

from (select distinct domain_nm, requested_file_txt, visitor_id, extract(month from detail_tm) as month

from page_click_fact, page_dim, referrer_dim

where domain_nm = 'support.sas.com'

and referrer_domain_nm = 'www.google.com'

) visits_pp_ph_summary

group by domain_nm, requested_file_txt, month

order by domain_nm, requested_file_txt, unique_visitors desc, month asc;

3. Search string counts on support.sas.com for a given year

-- Hive query

select query_string_txt, count(*) as count

from page_click_fact, page_dim

where query_string_txt <> ''

and domain_nm='support.sas.com'

and year(detail_tm) = '2017'

group by query_string_txt

order by count desc;
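As listed, this query names page_click_fact and page_dim separated by a comma, and its where clause has no predicate relating the two tables. Standard SQL evaluates that as a cross join. The article does not say whether the benchmark was meant to run in this form. The Python sketch below uses sqlite3 with a few toy rows to show how the result differs once a join on page_sk is added. The join key is an assumption based on the column names, and the toy tables carry only the columns needed here.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    create table page_dim(page_sk integer, domain_nm text);
    create table page_click_fact(page_sk integer, query_string_txt text);
    insert into page_dim values (1, 'support.sas.com'), (2, 'www.example.com');
    insert into page_click_fact values (1, 'q=hawq'), (2, 'q=hive'), (2, 'q=hive');
""")

# No linking predicate: every fact row pairs with every page_dim row
# that passes the domain filter, so the filter does not narrow the facts.
unjoined = conn.execute("""
    select query_string_txt, count(*) as cnt
    from page_click_fact, page_dim
    where domain_nm = 'support.sas.com'
    group by query_string_txt
    order by cnt desc, query_string_txt
""").fetchall()

# Same query with an assumed join on the surrogate key page_sk.
joined = conn.execute("""
    select query_string_txt, count(*) as cnt
    from page_click_fact f, page_dim p
    where f.page_sk = p.page_sk
      and domain_nm = 'support.sas.com'
    group by query_string_txt
    order by cnt desc, query_string_txt
""").fetchall()

print(unjoined)  # [('q=hive', 2), ('q=hawq', 1)]
print(joined)    # [('q=hawq', 1)]
```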

-- HAWQ query: equivalent to the Hive query, with extract replacing Hive's year function.

select query_string_txt, count(*) as count

from page_click_fact, page_dim

where query_string_txt <> ''

and domain_nm='support.sas.com'

and extract(year from detail_tm) = '2017'

group by query_string_txt

order by count desc;

4. Number of visitors per page using the Safari browser

-- Hive query

select domain_nm, requested_file_txt, count(*) as unique_visitors

from (select distinct domain_nm, requested_file_txt, visitor_id

from page_click_fact, page_dim, browser_dim

where domain_nm='support.sas.com'

and browser_nm like '%Safari%'

and weekofyear(detail_tm) = 19

and year(detail_tm) = 2017

) uv_summary

group by domain_nm, requested_file_txt

order by unique_visitors desc;
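The query above counts unique visitors in two steps: the inner select distinct collapses repeated clicks by the same visitor on the same page, and the outer count(*) then counts one row per visitor. The Python sketch below runs that pattern on toy data in sqlite3 and compares it with the single-step count(distinct ...) form. The table name `clicks` and its rows are made up for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    create table clicks(visitor_id text, requested_file_txt text);
    insert into clicks values
        ('v1', '/a.html'), ('v1', '/a.html'), ('v2', '/a.html'),
        ('v1', '/b.html');
""")

# Two-step form, as used in the article's queries.
two_step = conn.execute("""
    select requested_file_txt, count(*) as unique_visitors
    from (select distinct requested_file_txt, visitor_id from clicks) uv_summary
    group by requested_file_txt
    order by unique_visitors desc
""").fetchall()

# Single-step form with count(distinct ...).
one_step = conn.execute("""
    select requested_file_txt, count(distinct visitor_id) as unique_visitors
    from clicks
    group by requested_file_txt
    order by unique_visitors desc
""").fetchall()

print(two_step)  # [('/a.html', 2), ('/b.html', 1)]
print(one_step)  # [('/a.html', 2), ('/b.html', 1)]
```

The two forms agree as long as visitor_id is never null: count(distinct visitor_id) skips nulls, while the two-step form counts a null visitor as one more row.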

-- HAWQ query: equivalent to the Hive query, with extract replacing Hive's weekofyear and year functions.

select domain_nm, requested_file_txt, count(*) as unique_visitors

from (select distinct domain_nm, requested_file_txt, visitor_id

from page_click_fact, page_dim, browser_dim

where domain_nm='support.sas.com'

and browser_nm like '%Safari%'

and extract(week from detail_tm) = 19

and extract(year from detail_tm) = 2017

) uv_summary

group by domain_nm, requested_file_txt

order by unique_visitors desc;

5. Pages on support.sas.com viewed for more than 10 seconds in a given week

-- Hive query

select domain_nm, requested_file_txt, count(*) as unique_visits

from (select distinct domain_nm, requested_file_txt, visitor_id

from page_click_fact, page_dim

where domain_nm='support.sas.com'

and weekofyear(detail_tm) = 19

and year(detail_tm) = 2017

and seconds_spent_on_page_cnt > 10

) visits_summary

group by domain_nm, requested_file_txt

order by unique_visits desc;

-- HAWQ query: equivalent to the Hive query, with extract replacing Hive's weekofyear and year functions.

select domain_nm, requested_file_txt, count(*) as unique_visits

from (select distinct domain_nm, requested_file_txt, visitor_id

from page_click_fact, page_dim

where domain_nm='support.sas.com'

and extract(week from detail_tm) = 19

and extract(year from detail_tm) = 2017

and seconds_spent_on_page_cnt > 10

) visits_summary

group by domain_nm, requested_file_txt

order by unique_visits desc;

VII. Test results

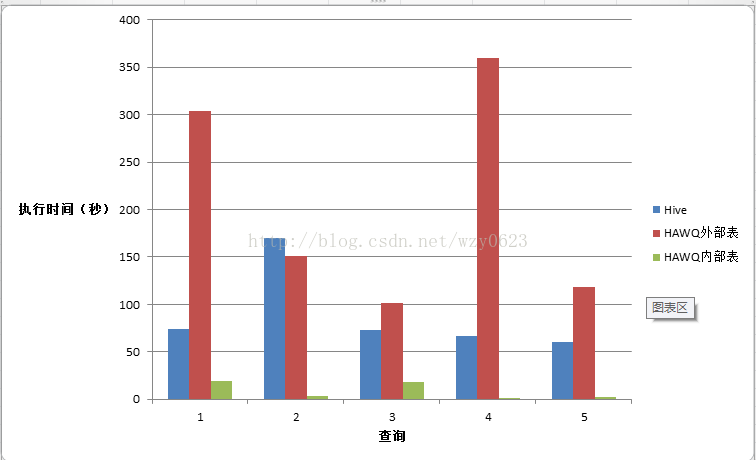

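Table 2 compares the query times of Hive, HAWQ external tables, and HAWQ internal tables. Each query was run three times in each configuration and the average was taken.

| Query | Hive (s) | HAWQ external table (s) | HAWQ internal table (s) |
| --- | --- | --- | --- |
| 1 | 74.337 | 304.134 | 19.232 |
| 2 | 169.521 | 150.882 | 3.446 |
| 3 | 73.482 | 101.216 | 18.565 |
| 4 | 66.367 | 359.778 | 1.217 |
| 5 | 60.341 | 118.329 | 2.789 |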
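Each figure in Table 2 is the mean of three runs. The article does not describe how the runs were timed. One simple way to do it is sketched below in Python, where `run_query` is a hypothetical callable that submits a query and blocks until it finishes.

```python
import time
from statistics import mean


def average_runtime(run_query, runs: int = 3) -> float:
    """Call run_query `runs` times and return the mean wall-clock seconds."""
    timings = []
    for _ in range(runs):
        started = time.perf_counter()
        run_query()
        timings.append(time.perf_counter() - started)
    return mean(timings)


# Stand-in for a real query call, used only to show the harness running.
calls = []
elapsed = average_runtime(lambda: calls.append(1))
print(f"{elapsed:.6f} s averaged over {len(calls)} runs")
```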

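As the comparison in Figure 2 shows, HAWQ internal tables are much faster than Hive on Tez, by a factor of roughly 4 to 55 according to Table 2. The same queries run against HAWQ external tables over Hive, however, are slow; in four of the five queries they are slower than Hive itself. Analytic queries are therefore best run on HAWQ internal tables. If external tables cannot be avoided, keep their data volume as small as possible to get acceptable query performance, and keep the queries as simple as possible, avoiding complex operations such as aggregation, grouping, and sorting on external tables.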

Figure 2
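The ratios behind the comparison can be recomputed from Table 2. The Python sketch below copies the mean runtimes from the table and derives, for each query, how many times faster the HAWQ internal table is than Hive and how many times slower the HAWQ external table is than Hive. The dictionary layout and variable names are illustrative.

```python
# Mean runtimes in seconds, copied from Table 2.
timings = {
    1: {"hive": 74.337, "external": 304.134, "internal": 19.232},
    2: {"hive": 169.521, "external": 150.882, "internal": 3.446},
    3: {"hive": 73.482, "external": 101.216, "internal": 18.565},
    4: {"hive": 66.367, "external": 359.778, "internal": 1.217},
    5: {"hive": 60.341, "external": 118.329, "internal": 2.789},
}

# Speedup of HAWQ internal tables over Hive (values above 1 mean faster).
internal_speedup = {q: t["hive"] / t["internal"] for q, t in timings.items()}

# Slowdown of HAWQ external tables relative to Hive (values above 1 mean slower).
external_slowdown = {q: t["external"] / t["hive"] for q, t in timings.items()}

for q in timings:
    print(f"query {q}: internal {internal_speedup[q]:.1f}x faster than Hive, "
          f"external {external_slowdown[q]:.2f}x the Hive runtime")

slower_than_hive = [q for q, r in external_slowdown.items() if r > 1]
```

On these numbers the internal-table speedup ranges from about 3.9 times (query 1) to about 54.5 times (query 4), and the external tables are slower than Hive in every query except query 2.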