Spark Core Programming (10) - Top N

阿新 • Published: 2019-01-11

1 TopN

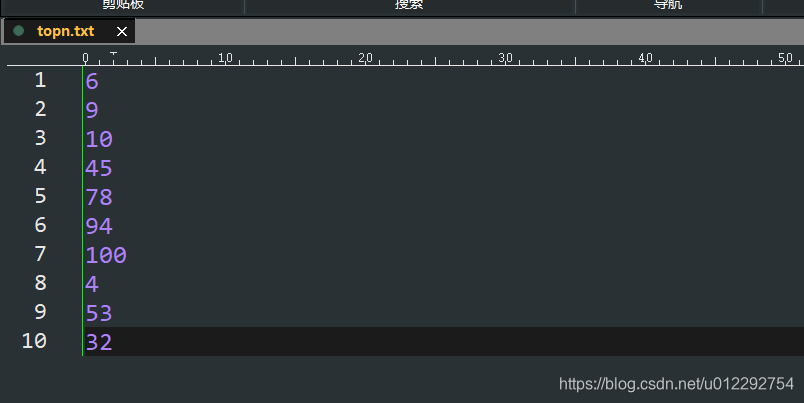

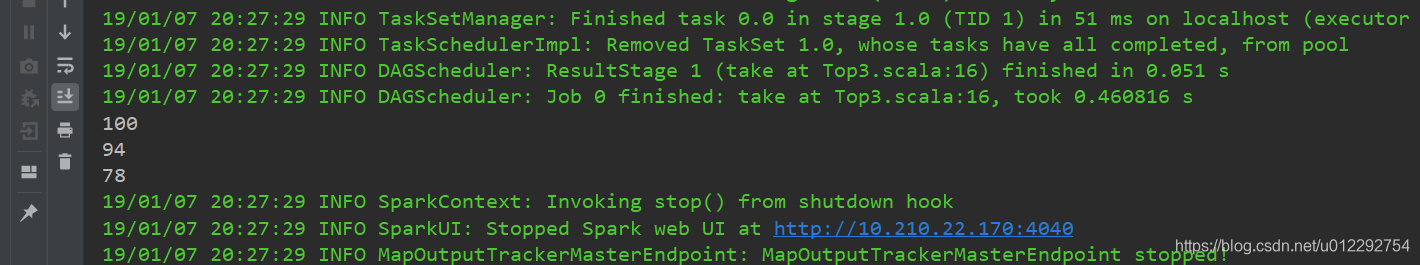

1.1 Take the 3 largest numbers in a file

- Java version

    package topn;

    import org.apache.spark.SparkConf;
    import org.apache.spark.api.java.JavaPairRDD;
    import org.apache.spark.api.java.JavaRDD;
    import org.apache.spark.api.java.JavaSparkContext;
    import org.apache.spark.api.java.function.Function;

- Scala version

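    import org.apache.spark.{SparkConf, SparkContext}

    object Top3 {
      def main(args: Array[String]): Unit = {
        val conf = new SparkConf().setAppName("Top3").setMaster("local")
        val sc = new SparkContext(conf)

        // The input file holds one integer per line
        val lines = sc.textFile("D:/topn.txt")
        // Key each line by its numeric value so that sortByKey can order it
        val pairs = lines.map(line => (line.trim.toInt, line))
        // false = descending order
        val sortedPairs = pairs.sortByKey(false)
        val sortedNums = sortedPairs.map(_._1)
        // Bring the first three elements back to the driver
        val top3 = sortedNums.take(3)
        top3.foreach(println)

        sc.stop()
      }
    }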

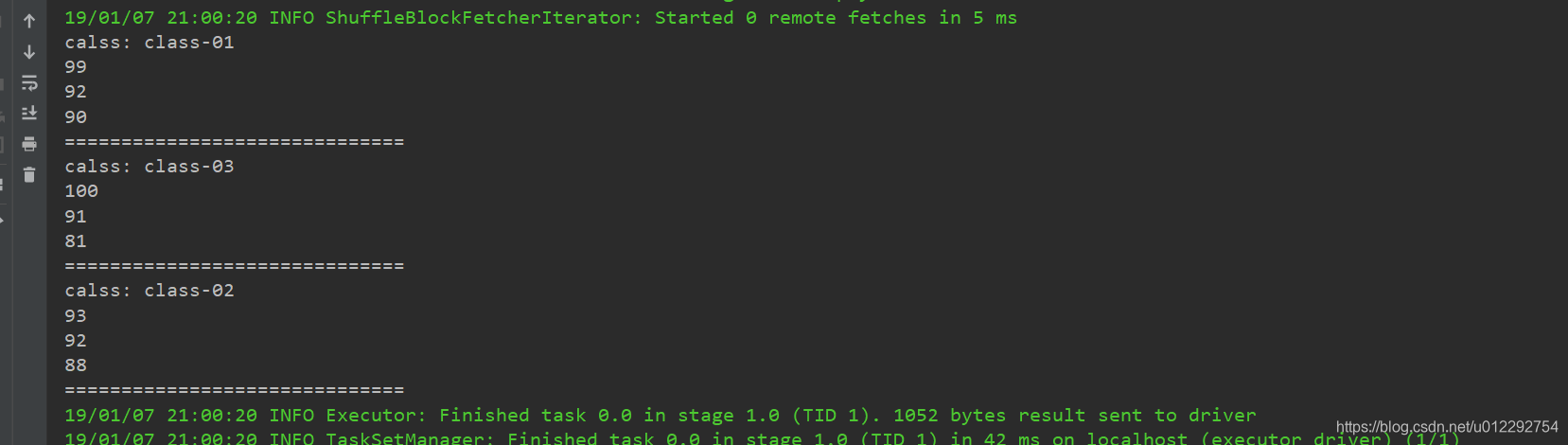

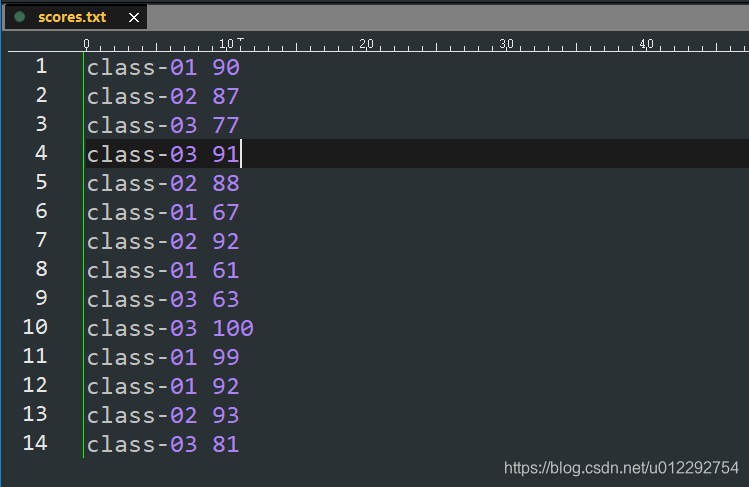
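The steps the Scala program performs (parse each line as an integer, sort in descending order, keep the first three) can also be sketched in plain Java without Spark, which is handy for checking the logic locally. This is only an illustrative sketch: the class `Top3Local`, the method `topN`, and the sample numbers are made up for this example and do not come from the original listing.

```java
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;
import java.util.stream.Collectors;

// Hypothetical helper (not from the original post): the same
// "parse, sort descending, take n" steps, run on an in-memory list.
final class Top3Local {

    // Returns up to n of the largest integers found in the given lines.
    static List<Integer> topN(List<String> lines, int n) {
        return lines.stream()
                .map(String::trim)
                .filter(s -> !s.isEmpty())          // skip blank lines
                .map(Integer::valueOf)              // plays the role of line.toInt
                .sorted(Comparator.reverseOrder())  // plays the role of sortByKey(false)
                .limit(n)                           // plays the role of take(3)
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        // Made-up sample input, one number per line
        List<String> lines = Arrays.asList("3", "5", "6", "7", "1", "4", "5", "6", "9", "0", "3");
        System.out.println(topN(lines, 3)); // prints [9, 7, 6]
    }
}
```

Duplicates are kept, so an input with two 9s would return both of them among the top three.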

2 Take the top 3 student scores within each class

- Grouped top N
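Grouped top N means: first group the records by key (here the class name), then keep only the N largest values inside each group. As a rough, Spark-free illustration of that idea, the sketch below assumes input lines of the form `className score` separated by whitespace. The class `GroupTopNLocal`, the method `topNPerGroup`, and the sample data are hypothetical. It also swaps in a bounded min-heap per group, which is one common way to keep the N largest values and is not necessarily how a Spark job would do it.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.Map;
import java.util.PriorityQueue;
import java.util.TreeMap;

// Hypothetical helper (not from the original post).
final class GroupTopNLocal {

    // Maps each class name to its n highest scores, highest first.
    static Map<String, List<Integer>> topNPerGroup(List<String> lines, int n) {
        // One min-heap per class; each heap never holds more than n scores.
        Map<String, PriorityQueue<Integer>> heaps = new TreeMap<>();
        for (String line : lines) {
            String[] parts = line.trim().split("\\s+");
            String className = parts[0];
            int score = Integer.parseInt(parts[1]);
            PriorityQueue<Integer> heap =
                    heaps.computeIfAbsent(className, k -> new PriorityQueue<>());
            heap.offer(score);
            if (heap.size() > n) {
                heap.poll(); // drop the smallest so only the n largest remain
            }
        }
        Map<String, List<Integer>> result = new TreeMap<>();
        for (Map.Entry<String, PriorityQueue<Integer>> e : heaps.entrySet()) {
            List<Integer> top = new ArrayList<>(e.getValue());
            top.sort(Collections.reverseOrder()); // highest score first
            result.put(e.getKey(), top);
        }
        return result;
    }

    public static void main(String[] args) {
        // Made-up sample data
        List<String> lines = Arrays.asList(
                "class1 90", "class2 56", "class1 87", "class1 76",
                "class2 88", "class1 95", "class2 74", "class2 87");
        System.out.println(topNPerGroup(lines, 3));
        // prints {class1=[95, 90, 87], class2=[88, 87, 74]}
    }
}
```

Because each heap is capped at n entries, memory per group stays bounded no matter how many scores a class has.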

2.1 Java version

    package topn;

    import org.apache.spark.SparkConf;
    import org.apache.spark.api.java.JavaPairRDD;
    import org.apache.spark.api.java.JavaRDD;
    import org.apache.spark.api.java.JavaSparkContext;
    import org.apache.spark.api.java.function.PairFunction;
    import org.apache.spark.api.java.function.VoidFunction;
    import scala.Tuple2;

    import java.util.Arrays;
    import java.util.Iterator;

    public class GroupTop3 {

        public static void main(String[] args) {
            SparkConf conf = new SparkConf().setAppName("Top3").setMaster("local");
            JavaSparkContext sc = new JavaSparkContext(conf);

            // Each line has the form "<className> <score>"
            JavaRDD<String> lines = sc.textFile("D:/scores.txt");

            // Map each line to a (className, score) pair
            JavaPairRDD<String, Integer> pairs = lines.mapToPair(new PairFunction<String, String, Integer>() {
                @Override
                public Tuple2<String, Integer> call(String s) throws Exception {
                    String[] lineSplited = s.split(" ");
                    return new Tuple2<>(lineSplited[0], Integer.valueOf(lineSplited[1]));
                }
            });

            // Collect all scores of the same class under one key
            JavaPairRDD<String, Iterable<Integer>> groupedPairs = pairs.groupByKey();

            // For each class, keep only the three highest scores
            JavaPairRDD<String, Iterable<Integer>> top3Score = groupedPairs.mapToPair(new PairFunction<Tuple2<String, Iterable<Integer>>, String, Iterable<Integer>>() {
                @Override
                public Tuple2<String, Iterable<Integer>> call(Tuple2<String, Iterable<Integer>> classScores) throws Exception {
                    // top3 is kept in descending order; unused slots stay null
                    Integer[] top3 = new Integer[3];
                    String className = classScores._1;
                    Iterator<Integer> scores = classScores._2.iterator();
                    while (scores.hasNext()) {
                        Integer score = scores.next();
                        for (int i = 0; i < 3; i++) {
                            if (top3[i] == null) {
                                // Empty slot: place the score here
                                top3[i] = score;
                                break;
                            } else if (score > top3[i]) {
                                // Shift the lower-ranked scores back by one slot
                                for (int j = 2; j > i; j--) {
                                    top3[j] = top3[j - 1];
                                }
                                top3[i] = score;
                                break;
                            }
                        }
                    }
                    return new Tuple2<String, Iterable<Integer>>(className, Arrays.asList(top3));
                }
            });

            // Print each class followed by its top three scores
            top3Score.foreach(new VoidFunction<Tuple2<String, Iterable<Integer>>>() {
                @Override
                public void call(Tuple2<String, Iterable<Integer>> t) throws Exception {
                    System.out.println("class: " + t._1);
                    Iterator<Integer> it = t._2.iterator();
                    while (it.hasNext()) {
                        Integer score = it.next();
                        System.out.println(score);
                    }
                    System.out.println("==============================");
                }
            });

            sc.close();
        }
    }
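The insertion loop in the listing above keeps a three-slot array in descending order. One detail worth noting: when a class has fewer than three scores, the unused slots stay `null` and are printed as `null`. The sketch below pulls the same loop into a standalone method so it can be tried without Spark, and returns only the filled slots. The class `Top3Insertion` and the method `top3` are hypothetical names chosen for this illustration, and dropping the empty slots is a change relative to the listing.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper (not from the original post): the insertion loop
// from GroupTop3, extracted so it can be run on an in-memory collection.
final class Top3Insertion {

    // Returns up to three of the highest scores, highest first.
    static List<Integer> top3(Iterable<Integer> scores) {
        Integer[] top3 = new Integer[3]; // descending order, null = empty slot
        for (Integer score : scores) {
            for (int i = 0; i < 3; i++) {
                if (top3[i] == null) {
                    top3[i] = score;
                    break;
                } else if (score > top3[i]) {
                    // Shift the lower-ranked scores back by one slot
                    for (int j = 2; j > i; j--) {
                        top3[j] = top3[j - 1];
                    }
                    top3[i] = score;
                    break;
                }
            }
        }
        // Unlike the listing above, drop the slots that were never filled
        List<Integer> result = new ArrayList<>();
        for (Integer s : top3) {
            if (s != null) {
                result.add(s);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        System.out.println(top3(Arrays.asList(76, 90, 87, 95))); // prints [95, 90, 87]
        System.out.println(top3(Arrays.asList(60, 80)));         // prints [80, 60]
    }
}
```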