New Amazon S3 Encryption & Security Features

Back in 2006, when I announced S3, I wrote “Further, each block is protected by an ACL (Access Control List) allowing the developer to keep the data private, share it for reading, or share it for reading and writing, as desired.”

Starting from that initial model, with private buckets and ACLs to grant access, we have added support for

Today we are adding five new encryption and security features to S3:

Default Encryption – You can now mandate that all objects in a bucket must be stored in encrypted form without having to construct a bucket policy that rejects objects that are not encrypted.

Permission Checks – The S3 Console now displays a prominent indicator next to each S3 bucket that is publicly accessible.

Cross-Region Replication ACL Overwrite – When you replicate objects across AWS accounts, you can now specify that the object gets a new ACL that gives full access to the destination account.

Cross-Region Replication with KMS – You can now replicate objects that are encrypted with keys that are managed by AWS Key Management Service (KMS).

Detailed Inventory Report – The S3 Inventory report now includes the encryption status of each object. The report itself can also be encrypted.

Let’s take a look at each one…

Default Encryption

You have three server-side encryption options for your S3 objects: SSE-S3 with keys that are managed by S3, SSE-KMS with keys that are managed by AWS KMS, and SSE-C with keys that you manage. Some of our customers, particularly those who need to meet compliance requirements that dictate the use of encryption at rest, have used bucket policies to ensure that every newly stored object is encrypted. While this helps them to meet their requirements, simply rejecting the storage of unencrypted objects is an imperfect solution.

You can now mandate that all objects in a bucket must be stored in encrypted form by installing a bucket encryption configuration. If an unencrypted object is presented to S3 and the configuration indicates that encryption must be used, the object will be encrypted using the encryption option specified for the bucket (the PUT request can also specify a different option).

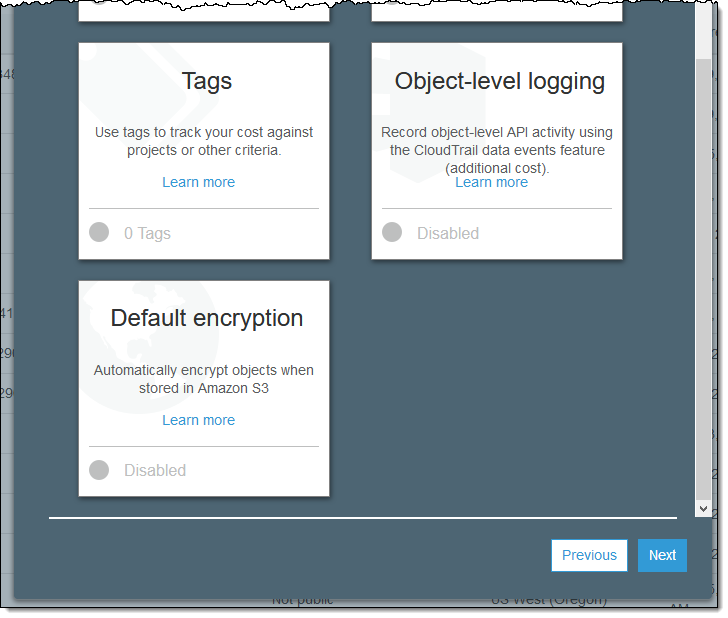

Here’s how to enable this feature using the S3 Console when you create a new bucket. Enter the name of the bucket as usual and click on Next. Then scroll down and click on Default encryption:

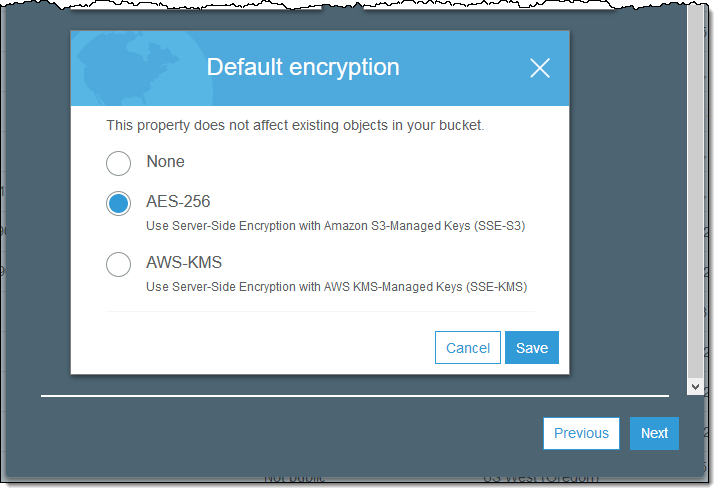

Select the desired option and click on Save (if you select AWS-KMS you also get to designate a KMS key):

You can also make this change via a call to the PUT Bucket Encryption function. It must specify an SSE algorithm (either SSE-S3 or SSE-KMS) and can optionally reference a KMS key.
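As a rough illustration of the API route, here is a minimal Python sketch. It assumes the boto3 SDK and its `put_bucket_encryption` call; the helper names (`build_default_encryption`, `enable_default_encryption`) and the example key ARN are hypothetical and exist only for this example.

```python
def build_default_encryption(sse_algorithm="AES256", kms_key_id=None):
    """Build the configuration document for PUT Bucket Encryption.

    sse_algorithm is "AES256" for SSE-S3 or "aws:kms" for SSE-KMS.
    kms_key_id is optional and only meaningful with SSE-KMS.
    """
    if sse_algorithm not in ("AES256", "aws:kms"):
        raise ValueError("default encryption supports only SSE-S3 or SSE-KMS")
    if kms_key_id is not None and sse_algorithm != "aws:kms":
        raise ValueError("a KMS key can only be combined with SSE-KMS")

    default = {"SSEAlgorithm": sse_algorithm}
    if kms_key_id is not None:
        default["KMSMasterKeyID"] = kms_key_id
    return {"Rules": [{"ApplyServerSideEncryptionByDefault": default}]}


def enable_default_encryption(bucket, config):
    """Apply the configuration. Requires boto3 and valid AWS credentials."""
    import boto3  # imported lazily so the builder above has no dependencies

    boto3.client("s3").put_bucket_encryption(
        Bucket=bucket, ServerSideEncryptionConfiguration=config
    )


if __name__ == "__main__":
    # Hypothetical key ARN, shown only to illustrate the SSE-KMS shape.
    print(build_default_encryption(
        "aws:kms", "arn:aws:kms:us-east-1:111122223333:key/EXAMPLE"))
```

Keeping the builder separate from the call makes it easy to review or unit-test the configuration before it touches a real bucket.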

Keep the following in mind when you implement this feature:

SigV4 – Access to the bucket policy via the S3 REST API must be signed with SigV4 and made over an SSL connection.

Updating Bucket Policies – You should examine and then carefully modify existing bucket policies that currently reject unencrypted objects.

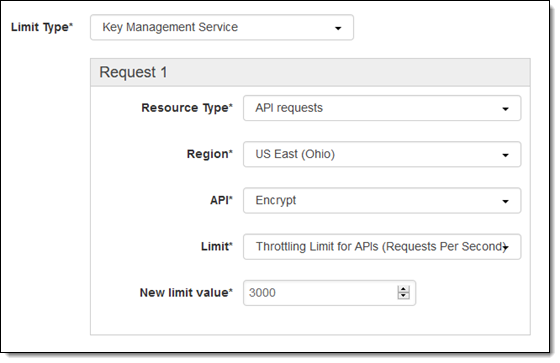

High-Volume Use – If you are using SSE-KMS and are uploading many hundreds or thousands of objects per second, you may bump in to the KMS Limit on the Encrypt and Decrypt operations. Simply file a Support Case and ask for a higher limit:

Cross-Region Replication – Unencrypted objects will be encrypted according to the configuration of the destination bucket. Encrypted objects will remain as such.
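For the Updating Bucket Policies point above, a small script can help locate the statements that deserve a second look. The sketch below is only an illustration: the example policy, its Sids, and the function name are hypothetical, and it assumes that such policies key their conditions on `s3:x-amz-server-side-encryption`, which is the common pattern but not the only possible one.

```python
import json

SSE_HEADER_KEY = "s3:x-amz-server-side-encryption"

# Hypothetical policy of the kind used to reject unencrypted uploads.
EXAMPLE_POLICY = json.dumps({
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "DenyIncorrectEncryptionHeader",
            "Effect": "Deny",
            "Principal": "*",
            "Action": "s3:PutObject",
            "Resource": "arn:aws:s3:::example-bucket/*",
            "Condition": {"StringNotEquals": {SSE_HEADER_KEY: "AES256"}},
        },
        {
            "Sid": "DenyUnencryptedObjectUploads",
            "Effect": "Deny",
            "Principal": "*",
            "Action": "s3:PutObject",
            "Resource": "arn:aws:s3:::example-bucket/*",
            "Condition": {"Null": {SSE_HEADER_KEY: "true"}},
        },
        {
            "Sid": "AllowPartnerRead",
            "Effect": "Allow",
            "Principal": {"AWS": "arn:aws:iam::111122223333:root"},
            "Action": "s3:GetObject",
            "Resource": "arn:aws:s3:::example-bucket/*",
        },
    ],
})


def find_encryption_deny_statements(policy_json):
    """Return the Sids of Deny statements conditioned on the SSE header."""
    policy = json.loads(policy_json)
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a lone statement may not be in a list
        statements = [statements]

    flagged = []
    for statement in statements:
        if statement.get("Effect") != "Deny":
            continue
        # Each condition operator maps condition keys to values.
        for condition_keys in statement.get("Condition", {}).values():
            if any(key.lower() == SSE_HEADER_KEY for key in condition_keys):
                flagged.append(statement.get("Sid", "<no Sid>"))
                break
    return flagged


if __name__ == "__main__":
    print(find_encryption_deny_statements(EXAMPLE_POLICY))
```

A statement flagged here is not necessarily wrong. It is simply one that may still reject uploads that omit the encryption header, so it is worth reviewing alongside the new bucket default.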

Permission Checks

The combination of bucket policies, bucket ACLs, and object ACLs gives you very fine-grained control over access to your buckets and the objects within them. With the goal of making sure that your policies and ACLs combine to create the desired effect, we recently launched a set of Managed Config Rules to Secure Your S3 Buckets. As I mentioned in the post, these rules make use of some of our work to put Automated Formal Reasoning to use.

We are now using the same underlying technology to help you see the impact of changes to your bucket policies and ACLs as soon as you make them. You will know right away if you open up a bucket for public access, allowing you to make changes with confidence.
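To make the idea of a public grant concrete, here is a deliberately simple Python sketch that scans an ACL for grants to the predefined S3 groups. It is not how the console check works (that relies on the formal reasoning described above and also covers bucket policies). The input shape mirrors what boto3’s `get_bucket_acl` returns, the function name is hypothetical, and treating the AuthenticatedUsers group as public is my assumption.

```python
ALL_USERS = "http://acs.amazonaws.com/groups/global/AllUsers"
AUTHENTICATED_USERS = "http://acs.amazonaws.com/groups/global/AuthenticatedUsers"

# Assumption: any AWS-authenticated user is close enough to "public".
PUBLIC_GROUPS = {ALL_USERS, AUTHENTICATED_USERS}


def public_grants(acl):
    """Return (group URI, permission) pairs for grants to public groups.

    `acl` is a dict shaped like a get_bucket_acl response:
    {"Grants": [{"Grantee": {"Type": ..., "URI": ...}, "Permission": ...}]}
    """
    found = []
    for grant in acl.get("Grants", []):
        grantee = grant.get("Grantee", {})
        if grantee.get("Type") == "Group" and grantee.get("URI") in PUBLIC_GROUPS:
            found.append((grantee["URI"], grant.get("Permission")))
    return found


if __name__ == "__main__":
    # Hand-written sample ACL: one owner grant and one public READ grant.
    sample_acl = {
        "Grants": [
            {"Grantee": {"Type": "CanonicalUser", "ID": "owner-id"},
             "Permission": "FULL_CONTROL"},
            {"Grantee": {"Type": "Group", "URI": ALL_USERS},
             "Permission": "READ"},
        ]
    }
    print(public_grants(sample_acl))
```

An empty result only means the ACL itself grants nothing to these groups. A bucket policy can still open the bucket, which is why the console reports both elements.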

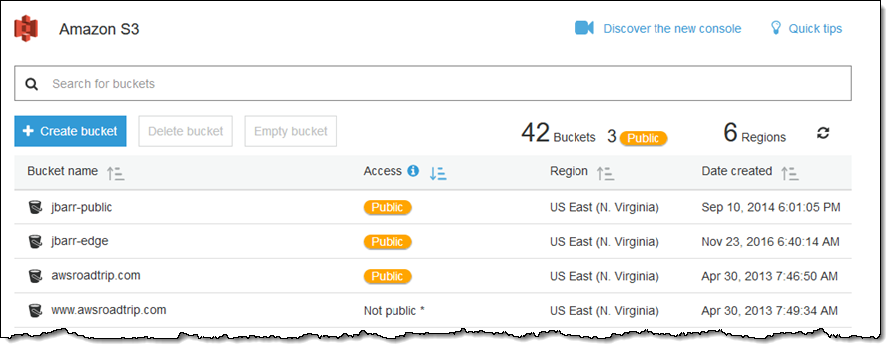

Here’s what it looks like on the main page of the S3 Console (I’ve sorted by the Access column for convenience):

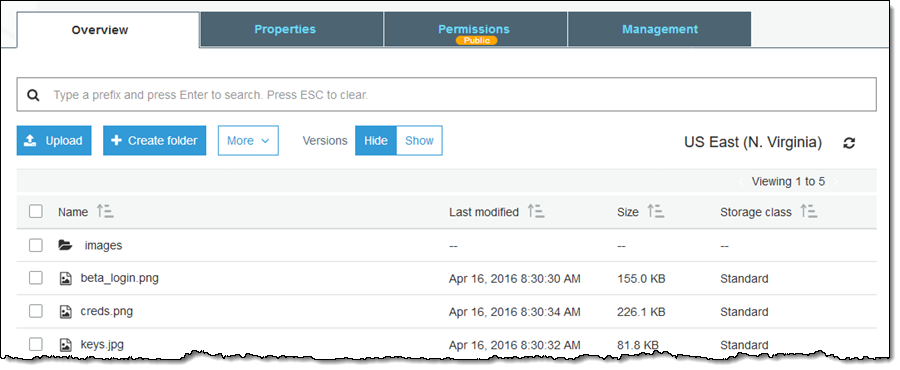

The Public indicator is also displayed when you look inside a single bucket:

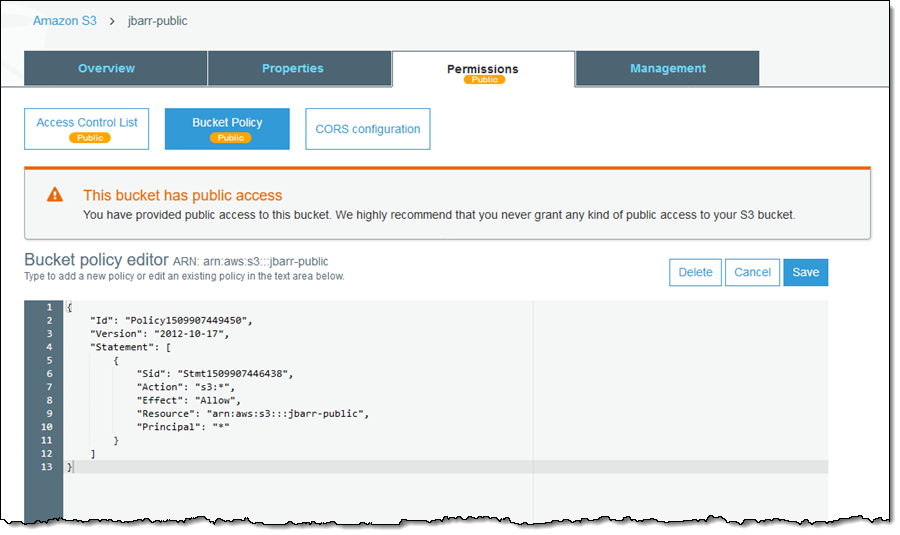

You can also see which Permission elements (ACL, Bucket Policy, or both) are enabling the public access:

Cross-Region Replication ACL Overwrite

Our customers often use S3’s Cross-Region Replication to copy their mission-critical objects and data to a destination bucket in a separate AWS account. In addition to copying the object, the replication process copies the object ACL and any tags associated with the object.

We’re making this feature even more useful by allowing you to enable replacement of the ACL as it is in transit so that it grants full access to the owner of the destination bucket. With this change, ownership of the source and the destination data is split across AWS accounts, allowing you to maintain separate and distinct stacks of ownership for the original objects and their replicas.
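If you prefer to configure this through the API, the ownership change is expressed in the replication configuration itself. The Python sketch below builds such a configuration in the shape that boto3’s `put_bucket_replication` accepts, as I understand it. The function name, rule ID, and all ARNs are hypothetical placeholders.

```python
def build_owner_override_replication(role_arn, dest_bucket_arn, dest_account_id,
                                     rule_id="replicate-with-owner-override"):
    """Build a replication configuration that hands replica ownership
    to the account that owns the destination bucket."""
    return {
        "Role": role_arn,
        "Rules": [
            {
                "ID": rule_id,
                "Prefix": "",  # empty prefix: the rule applies to every object
                "Status": "Enabled",
                "Destination": {
                    "Bucket": dest_bucket_arn,
                    "Account": dest_account_id,
                    # Replace the replica's ACL so the destination owner
                    # gets full access.
                    "AccessControlTranslation": {"Owner": "Destination"},
                },
            }
        ],
    }


if __name__ == "__main__":
    config = build_owner_override_replication(
        role_arn="arn:aws:iam::111122223333:role/example-replication-role",
        dest_bucket_arn="arn:aws:s3:::example-destination-bucket",
        dest_account_id="444455556666",
    )
    print(config)
    # With boto3 and credentials in place, the configuration could be applied as:
    # boto3.client("s3").put_bucket_replication(
    #     Bucket="example-source-bucket", ReplicationConfiguration=config)
```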

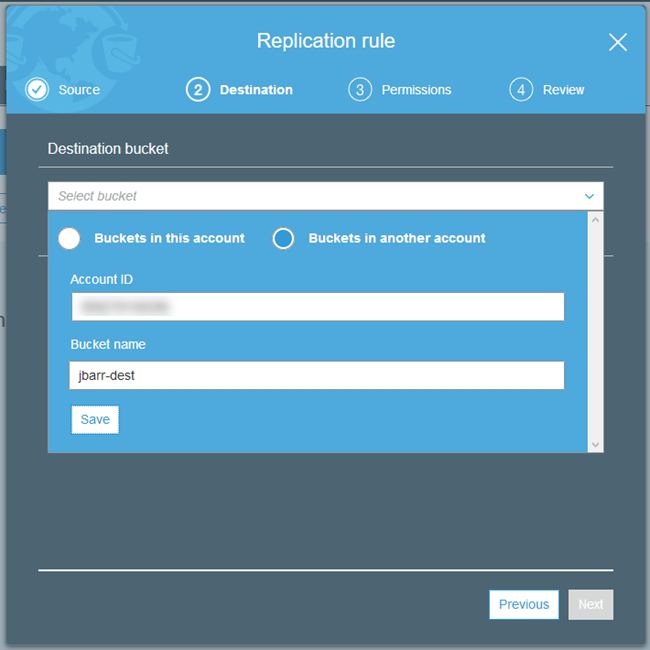

To enable this feature when you are setting up replication, choose a destination bucket in a different account and Region by specifying the Account ID and Bucket name, and clicking on Save:

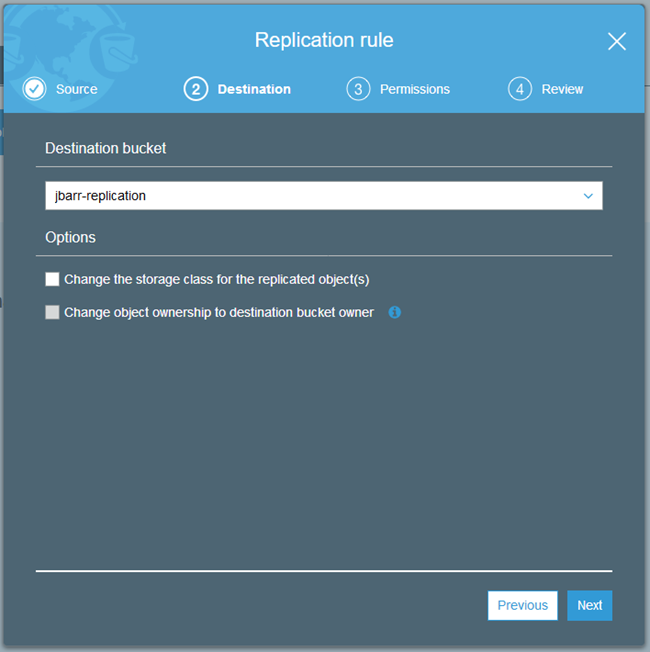

Then click on Change object ownership…:

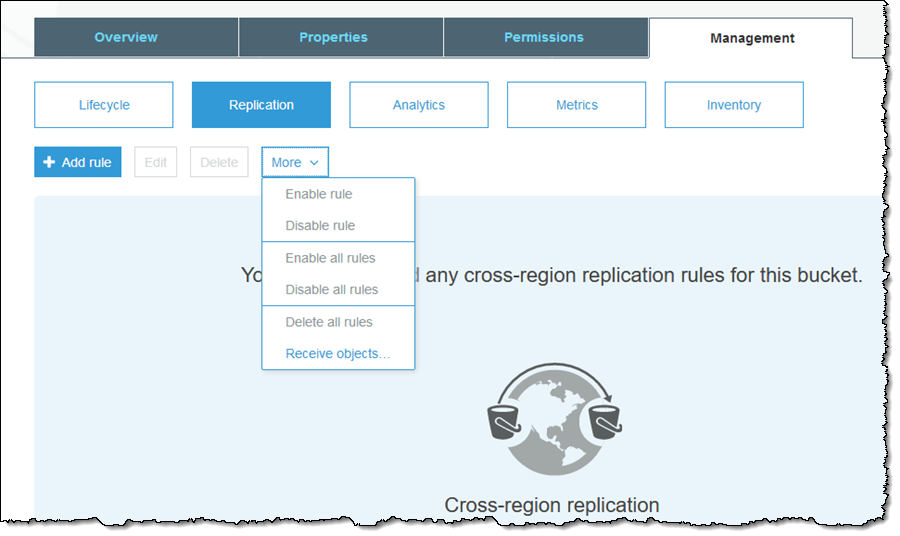

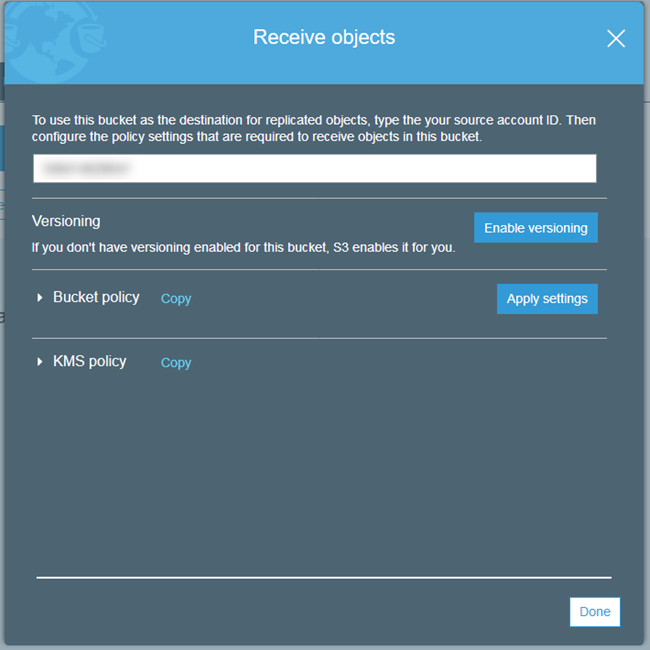

We’ve also made it easier for you to set up the key policy for the destination bucket in the destination account. Simply log in to the account and find the bucket, then click on Management and Replication, then choose Receive objects… from the More menu:

Enter the source Account ID, enable versioning, inspect the policies, apply them, and click on Done:

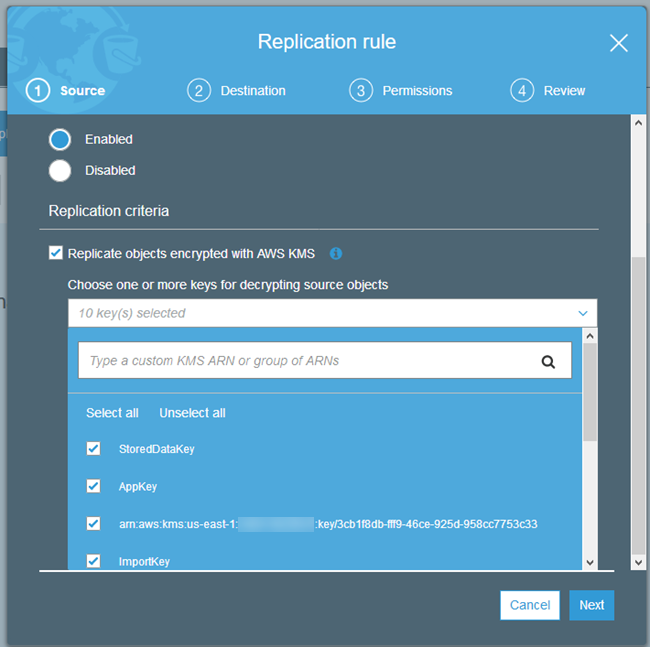

Cross-Region Replication with KMS

Replicating objects that have been encrypted using SSE-KMS across regions poses an interesting challenge that we are addressing today. Because the KMS keys are specific to a particular region, simply replicating the encrypted object would not work.

You can now choose the destination key when you set up cross-region replication. During the replication process, encrypted objects are replicated to the destination over an SSL connection. At the destination, the data key is encrypted with the KMS master key you specified in the replication configuration. The object remains in its original, encrypted form throughout; only the envelope containing the keys is actually changed.

Here’s how you enable this feature when you set up a replication rule:

As I mentioned earlier, you may need to request an increase in your KMS limits before you start to make heavy use of this feature.
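The same replication rule can also be described in code. This Python sketch builds a configuration that opts in to replicating SSE-KMS objects and names the destination key, using the field names that boto3’s `put_bucket_replication` accepts, as I understand them. Because KMS keys are regional, the hypothetical helper also refuses a replica key whose ARN points at a different region than the destination. All names and ARNs here are placeholders.

```python
def kms_key_region(key_arn):
    """Extract the region from an ARN like arn:aws:kms:REGION:ACCOUNT:key/ID."""
    parts = key_arn.split(":")
    if len(parts) < 6 or parts[2] != "kms":
        raise ValueError("not a KMS key ARN: %r" % key_arn)
    return parts[3]


def build_kms_replication(role_arn, dest_bucket_arn, dest_region,
                          replica_key_arn, rule_id="replicate-sse-kms"):
    """Build a replication configuration for SSE-KMS encrypted objects."""
    if kms_key_region(replica_key_arn) != dest_region:
        raise ValueError("the replica key must live in the destination region")
    return {
        "Role": role_arn,
        "Rules": [
            {
                "ID": rule_id,
                "Prefix": "",  # empty prefix: the rule applies to every object
                "Status": "Enabled",
                # Opt in to replicating objects encrypted with SSE-KMS.
                "SourceSelectionCriteria": {
                    "SseKmsEncryptedObjects": {"Status": "Enabled"}
                },
                "Destination": {
                    "Bucket": dest_bucket_arn,
                    # Key used to re-encrypt the data key at the destination.
                    "EncryptionConfiguration": {
                        "ReplicaKmsKeyID": replica_key_arn
                    },
                },
            }
        ],
    }


if __name__ == "__main__":
    print(build_kms_replication(
        role_arn="arn:aws:iam::111122223333:role/example-replication-role",
        dest_bucket_arn="arn:aws:s3:::example-destination-bucket",
        dest_region="us-west-2",
        replica_key_arn="arn:aws:kms:us-west-2:111122223333:key/EXAMPLE",
    ))
```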

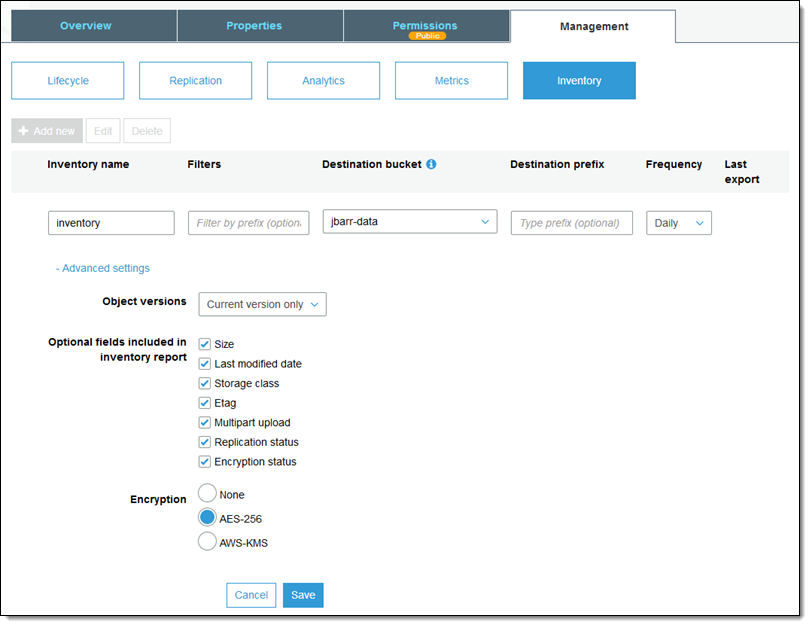

Detailed Inventory Report

Last but not least, you can now request that the daily or weekly S3 inventory reports include information on the encryption status of each object:

As you can see, you can also request SSE-S3 or SSE-KMS encryption for the report.
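For completeness, here is a hedged Python sketch of the equivalent inventory configuration, in the shape that boto3’s `put_bucket_inventory_configuration` accepts as far as I know. The function name, configuration ID, and ARNs are hypothetical. The CSV format and the choice to list only current object versions are my assumptions and are not part of the announcement.

```python
def build_inventory_config(config_id, dest_bucket_arn, frequency="Daily",
                           report_kms_key_id=None):
    """Build an inventory configuration that reports encryption status
    and encrypts the report itself (SSE-S3 by default, SSE-KMS if a key
    is supplied)."""
    if frequency not in ("Daily", "Weekly"):
        raise ValueError("inventory reports are produced daily or weekly")

    if report_kms_key_id is not None:
        report_encryption = {"SSEKMS": {"KeyId": report_kms_key_id}}
    else:
        report_encryption = {"SSES3": {}}

    return {
        "Id": config_id,
        "IsEnabled": True,
        "IncludedObjectVersions": "Current",  # assumption: current versions only
        "Schedule": {"Frequency": frequency},
        # Ask for the encryption status column in the report.
        "OptionalFields": ["EncryptionStatus"],
        "Destination": {
            "S3BucketDestination": {
                "Bucket": dest_bucket_arn,
                "Format": "CSV",  # assumption: CSV output
                "Encryption": report_encryption,
            }
        },
    }


if __name__ == "__main__":
    config = build_inventory_config(
        "example-encryption-inventory",
        "arn:aws:s3:::example-inventory-bucket",
        frequency="Weekly",
    )
    print(config)
    # With boto3 and credentials in place, it could be applied as:
    # boto3.client("s3").put_bucket_inventory_configuration(
    #     Bucket="example-source-bucket", Id=config["Id"],
    #     InventoryConfiguration=config)
```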

Now Available

All of these features are available now and you can start using them today! There is no charge for the features, but you will be charged the usual rates for calls to KMS, S3 storage, S3 requests, and inter-region data transfer.

— Jeff;
