人臉檢測(1)——HOG特徵

一、概述

前面一個系列,我們對車牌識別的相關技術進行了研究,但是車牌識別相對來說還是比較簡單的,後續本人會對人臉檢測、人臉識別,人臉姿態估計和人眼識別做一定的學習和研究。其中人臉檢測相對來說比較簡單,譬如Dlib庫中直接封裝了現成的庫函式 frontal_face_detector 供相關人員使用,但是Dlib的執行速率並不是很高,另外於仕琪老師的 libfaceDetection 庫具有較高的識別率和相對較快的執行速度,具體可以從github 上獲取 https://github.com/ShiqiYu/libfacedetection 。但是該庫並沒有提供原始碼分析,只有現成的lib庫可以直接使用。

從學習和研究的角度來說,我們還是希望能夠直接從原始碼中進行相關學習,因此此處我們通過Dlib庫程式碼解讀,來對人臉檢測的相關技術做一定的分析。Dlib是一個機器學習的C++庫,包含了許多機器學習常用的演算法,並且文件和例子都非常詳細。 Dlib官網地址: http://www.dlib.net 。下面我們通過一個簡單的例子,來看下人臉檢測是如何工作的,程式碼如下所示:

1 try 2 { 3 frontal_face_detector detector = get_frontal_face_detector(); 4 image_window win;View Code5 6 string filePath = "E:\\dlib-18.16\\dlib-18.16\\examples\\faces\\2008_002079.jpg"; 7 cout << "processing image " << filePath << endl; 8 array2d<unsigned char> img; 9 load_image(img, filePath); 10 // Make the image bigger by a factor of two. This is useful since11 // the face detector looks for faces that are about 80 by 80 pixels 12 // or larger. Therefore, if you want to find faces that are smaller 13 // than that then you need to upsample the image as we do here by 14 // calling pyramid_up(). So this will allow it to detect faces that 15 // are at least 40 by 40 pixels in size. We could call pyramid_up() 16 // again to find even smaller faces, but note that every time we 17 // upsample the image we make the detector run slower since it must 18 // process a larger image. 19 20 pyramid_up(img); 21 22 // Now tell the face detector to give us a list of bounding boxes 23 // around all the faces it can find in the image. 24 std::vector<rectangle> dets = detector(img); 25 cout << "Number of faces detected: " << dets.size() << endl; 26 // Now we show the image on the screen and the face detections as 27 // red overlay boxes. 28 win.clear_overlay(); 29 win.set_image(img); 30 win.add_overlay(dets, rgb_pixel(255, 0, 0)); 31 32 cout << "Hit enter to process the next image..." << endl; 33 cin.get(); 34 } 35 catch (exception& e) 36 { 37 cout << "\nexception thrown!" << endl; 38 cout << e.what() << endl; 39 }

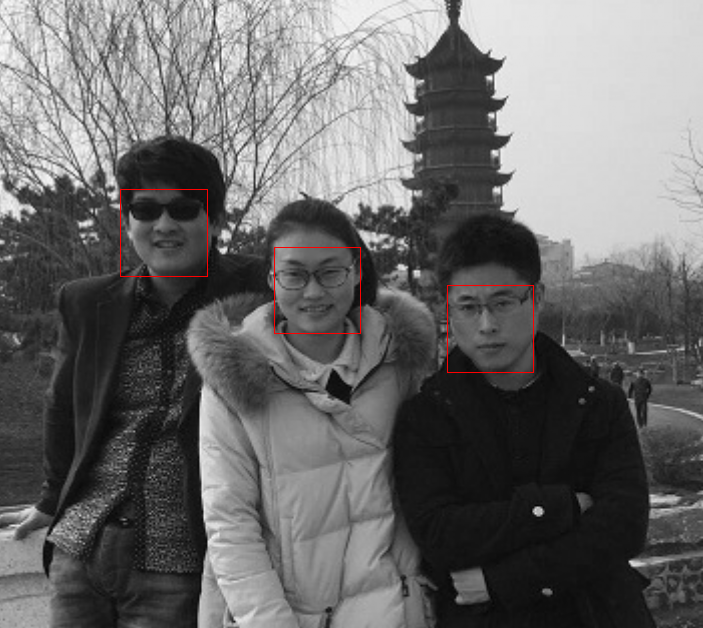

如上圖所示,frontal_face_detector 將影象中所有的人臉都檢測了出來,從程式碼中也可以看到,該方法的使用過程及其簡單,當然人臉檢測的內部邏輯是極其複雜的。

二、程式碼分析

下面我們一步步跟蹤下程式碼,看看Dlib人臉檢測內部究竟是如何工作的。

object_detector

typedef object_detector<scan_fhog_pyramid<pyramid_down<6> > > frontal_face_detector;

類 frontal_face_detector 是Dlib庫中定義的,位於 “frontal_face_detector.h” 中,可以看到類 frontal_face_detector 是類 object_detector的一種特殊情況;具體關於object_detector的內容後面再詳細介紹。

定義Scanner,用於掃描圖片並提取特徵

類scan_fhog_pyramid 定義來自於”scan_fhog_pyramid.h ”

template <typename Pyramid_type, typename Feature_extractor_type =default_fhog_feature_extractor>

class scan_fhog_pyramid : noncopyable{...}

類模板中引數Pyramid_type表示影象金字塔的型別,本文使用的是pyramid_down<6>,表示影象金字塔進行下采樣的比率為5/6,即對原影象不斷縮小5/6,構成多級金字塔。當影象的大小小於掃描視窗大小的時候,停止下采樣。

引數 Feature_extractor_type 表示特徵提取器,預設情況下使用 "fhog.h"中的extract_fhog_feature() 提取特徵,函式原型為:

template <typename image_type, typename T, typename mm>

void extract_fhog_features(

const image_type& img,

array2d<matrix<T,31,1>,mm>& hog,

int cell_size = 8,

int filter_rows_padding = 1,

int filter_cols_padding = 1

)

{

impl_fhog::impl_extract_fhog_features(img, hog, cell_size, filter_rows_padding, filter_cols_padding);

}

此函式提取的HOG特徵來自於Felzenszwalb 版本的HOG [1] (簡稱fhog)它是對每個8*8畫素大小的cell提取31維的 fhog運算元,然後儲存到上述hog array中供後續計算使用。

HOG的發明者是Navneet Dalal,在2005年其在CVPR上發表了《Histograms of Oriented Gradients forHuman Detection》這一篇論文,HOG一戰成名。當然ND大神也就是我們經常使用的Inria資料集的締造者。其博士的畢業論文《Finding People in Images and Videos》更是HOG研究者的一手資料。

HOG演算法思想:

在計算機視覺以及數字影象處理中梯度方向直方圖(HOG)是一種能對物體進行檢測的基於形狀邊緣特徵的描述運算元,它的基本思想是利用梯度資訊能很好的反映影象目標的邊緣資訊並通過區域性梯度的大小將影象區域性的外觀和形狀特徵化。

HOG特徵的提取可以用下面過程表示: 顏色空間的歸一化是為了減少光照以及背景等因素的影響;劃分檢測視窗成大小相同的細胞單元(cell),並分別提取相應的梯度資訊;組合相鄰的細胞單元成大的相互有重疊的塊(block),這樣能有效的利用重疊的邊緣資訊,以統計整個塊的直方圖;並對每個塊內的梯度直方圖進行歸一化,從而進一步減少背景顏色及噪聲的影響;最後將整個視窗中所有塊的HOG特徵收集起來,並使用特徵向量來表示其特徵。

顏色空間歸一化:

在現實的情況,影象目標會出現在不同的環境中,光照也會有所不一樣,顏色空間歸一化就是對整幅影象的顏色資訊作歸一化處理從而減少不同光照及背景的影響,也為了提高檢測的魯棒性,引入影象Gamma和顏色空間歸一化來作為特徵提取的預處理手段。ND大神等人也對不同的影象畫素點的表達方式包括灰度空間等進行了評估,最終驗證RGB還有LAB色彩空間能使檢測結果大致相同且能起到積極的影響,且另一方面,ND大神等人在研究中分別在每個顏色通道上使用了兩種不同的Gamma歸一化方式,取平方根或者使用對數法,最終驗證這一預處理對檢測的結果幾乎沒有影響,而不能對影象進行高斯平滑處理,因平滑處理會降低影象目標邊緣資訊的辨識度,影響檢測結果。

梯度計算:

邊緣是由影象區域性特徵包括灰度、顏色和紋理的突變導致的。一幅影象中相鄰的畫素點之間變化比較少,區域變化比較平坦,則梯度幅值就會比較小,反之,則梯度幅值就會比較大。梯度在影象中對應的就是其一階導數。模擬影象f(x,y)中任一畫素點(x,y)的梯度是一個向量:

其中,Gx是沿x方向上的梯度,Gy是沿y方向上的梯度,梯度的幅值及方向角可表示如下:

數字影象中畫素點的梯度是用差分來計算的:

一維離散微分模板在將影象的梯度資訊簡單、快速且有效地計算出來,其公式如下:

式中,Gx,Gy,H(x,y)分別表示的是畫素點(x,y)在水平方向上及垂直方向上的梯度以及畫素的灰度值,其梯度的幅值及方向計算公式如下:

計算細胞單元的梯度直方圖:

對於整個目標視窗,我們需要將其分成互不重疊大小相同的細胞單元(cell),然後分別計算出每個cell的梯度資訊,包括梯度大小和梯度方向。ND大神等人實驗指出,將畫素的梯度方向在0-180°區間內平均劃分為9個bins,超過9個時不僅檢測效能沒有明顯的提高反而增加了檢測運算量, 每個cell內的畫素為其所在的梯度方向直方圖進行加權投票,加權的權值可以是畫素本身的梯度幅值,也可以是幅值的平方或平方根等,而若使用平方或平方根,實驗的檢測效能會有所降低,ND大神等人也驗證,使用梯度幅值的實驗效果更可靠。

對組合成塊的梯度直方圖作歸一化:

從梯度計算公式中可以看出,梯度幅值絕對值的大小容易受到前景與背景對比度及區域性光照的影響,要減少這種影響得到較準確的檢測效果就必須對區域性細胞單元進行歸一化處理。歸一化方法多種多樣,但整體思想基本上是一致的:將幾個細胞單元(cell)組合成更大的塊(block),這時整幅影象就可看成是待檢測視窗,將更大的塊看成是滑動視窗,依次從左到右從上到下進行滑動,得到一些有重複細胞單元的塊及一些相同細胞單元(cell)在不同塊(block)中的梯度資訊,再對這些塊(block)資訊分別作歸一化處理,不同的細胞單元尺寸大小及不同塊的尺寸大小會影響最終的檢測效果。

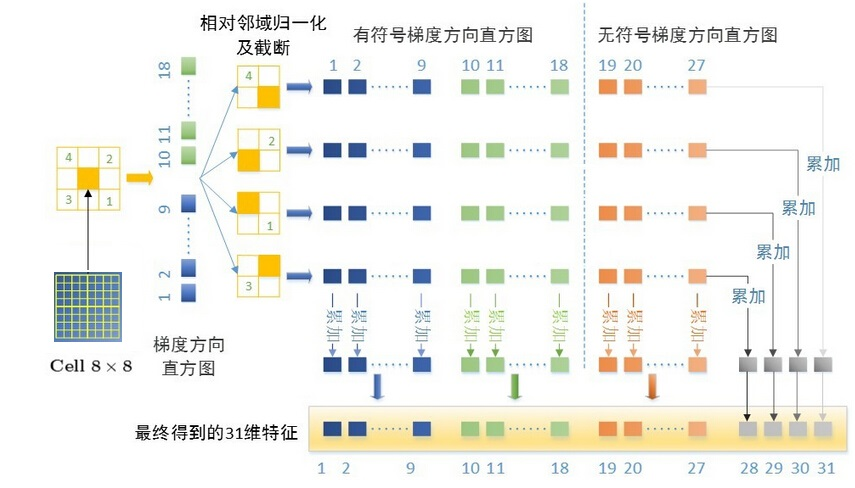

介紹完HOG運算元的基本概念,這邊分析下31維的 fhog運算元具體是從何而來呢?

其中,31D fhog=18D+9D+4D。

- 18D來自於對cell做18個bin的梯度方向直方圖,即將360°劃分為18個bin,然後令cell中的每個畫素根據其梯度方向加權投影到直方圖相應的bin中,這樣就得到了18維有符號的fhog梯度。

- 9D來自於對cell做9個bin的梯度方向直方圖,此時是將180°劃分為9個bin,則得到無符號的9維fhog梯度。

- 最後的4D是來自於當前cell和其對角線臨域的4個領域cell的歸一化操作。具體地,取block=2*2 cell,則得到無符號fhog梯度4*9維,將其看成矩陣做按行按列累加可得到1D特徵,4個領域則可得到4個block,共4維特徵。

最終,每個cell的31維fhog特徵就來自於上述三部分的串聯。

具體程式碼如下所示:

1 template < 2 typename image_type, 3 typename out_type 4 > 5 void impl_extract_fhog_features( 6 const image_type& img_, 7 out_type& hog, 8 int cell_size, 9 int filter_rows_padding, 10 int filter_cols_padding 11 ) 12 { 13 const_image_view<image_type> img(img_); 14 // make sure requires clause is not broken 15 DLIB_ASSERT( cell_size > 0 && 16 filter_rows_padding > 0 && 17 filter_cols_padding > 0 , 18 "\t void extract_fhog_features()" 19 << "\n\t Invalid inputs were given to this function. " 20 << "\n\t cell_size: " << cell_size 21 << "\n\t filter_rows_padding: " << filter_rows_padding 22 << "\n\t filter_cols_padding: " << filter_cols_padding 23 ); 24 25 /* 26 This function implements the HOG feature extraction method described in 27 the paper: 28 P. Felzenszwalb, R. Girshick, D. McAllester, D. Ramanan 29 Object Detection with Discriminatively Trained Part Based Models 30 IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 32, No. 9, Sep. 2010 31 32 Moreover, this function is derived from the HOG feature extraction code 33 from the features.cc file in the voc-releaseX code (see 34 http://people.cs.uchicago.edu/~rbg/latent/) which is has the following 35 license (note that the code has been modified to work with grayscale and 36 color as well as planar and interlaced input and output formats): 37 38 Copyright (C) 2011, 2012 Ross Girshick, Pedro Felzenszwalb 39 Copyright (C) 2008, 2009, 2010 Pedro Felzenszwalb, Ross Girshick 40 Copyright (C) 2007 Pedro Felzenszwalb, Deva Ramanan 41 42 Permission is hereby granted, free of charge, to any person obtaining 43 a copy of this software and associated documentation files (the 44 "Software"), to deal in the Software without restriction, including 45 without limitation the rights to use, copy, modify, merge, publish, 46 distribute, sublicense, and/or sell copies of the Software, and to 47 permit persons to whom the Software is furnished to do so, subject to 48 the following conditions: 49 50 The above copyright notice and this permission notice shall be 51 included in all copies or substantial portions of the Software. 52 53 THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, 54 EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF 55 MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND 56 NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE 57 LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION 58 OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION 59 WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE. 60 */ 61 62 if (cell_size == 1) 63 { 64 impl_extract_fhog_features_cell_size_1(img_,hog,filter_rows_padding,filter_cols_padding); 65 return; 66 } 67 68 // unit vectors used to compute gradient orientation 69 matrix<double,2,1> directions[9]; 70 directions[0] = 1.0000, 0.0000; 71 directions[1] = 0.9397, 0.3420; 72 directions[2] = 0.7660, 0.6428; 73 directions[3] = 0.500, 0.8660; 74 directions[4] = 0.1736, 0.9848; 75 directions[5] = -0.1736, 0.9848; 76 directions[6] = -0.5000, 0.8660; 77 directions[7] = -0.7660, 0.6428; 78 directions[8] = -0.9397, 0.3420; 79 80 81 82 // First we allocate memory for caching orientation histograms & their norms. 83 const int cells_nr = (int)((double)img.nr()/(double)cell_size + 0.5); 84 const int cells_nc = (int)((double)img.nc()/(double)cell_size + 0.5); 85 86 if (cells_nr == 0 || cells_nc == 0) 87 { 88 hog.clear(); 89 return; 90 } 91 92 // We give hist extra padding around the edges (1 cell all the way around the 93 // edge) so we can avoid needing to do boundary checks when indexing into it 94 // later on. So some statements assign to the boundary but those values are 95 // never used. 96 array2d<matrix<float,18,1> > hist(cells_nr+2, cells_nc+2); 97 for (long r = 0; r < hist.nr(); ++r) 98 { 99 for (long c = 0; c < hist.nc(); ++c) 100 { 101 hist[r][c] = 0; 102 } 103 } 104 105 array2d<float> norm(cells_nr, cells_nc); 106 assign_all_pixels(norm, 0); 107 108 // memory for HOG features 109 const int hog_nr = std::max(cells_nr-2, 0); 110 const int hog_nc = std::max(cells_nc-2, 0); 111 if (hog_nr == 0 || hog_nc == 0) 112 { 113 hog.clear(); 114 return; 115 } 116 const int padding_rows_offset = (filter_rows_padding-1)/2; 117 const int padding_cols_offset = (filter_cols_padding-1)/2; 118 init_hog(hog, hog_nr, hog_nc, filter_rows_padding, filter_cols_padding); 119 120 const int visible_nr = std::min((long)cells_nr*cell_size,img.nr())-1; 121 const int visible_nc = std::min((long)cells_nc*cell_size,img.nc())-1; 122 123 // First populate the gradient histograms 124 for (int y = 1; y < visible_nr; y++) 125 { 126 const double yp = ((double)y+0.5)/(double)cell_size - 0.5; 127 const int iyp = (int)std::floor(yp); 128 const double vy0 = yp-iyp; 129 const double vy1 = 1.0-vy0; 130 int x; 131 for (x = 1; x < visible_nc-3; x+=4) 132 { 133 simd4f xx(x,x+1,x+2,x+3); 134 // v will be the length of the gradient vectors. 135 simd4f grad_x, grad_y, v; 136 get_gradient(y,x,img,grad_x,grad_y,v); 137 138 // We will use bilinear interpolation to add into the histogram bins. 139 // So first we precompute the values needed to determine how much each 140 // pixel votes into each bin. 141 simd4f xp = (xx+0.5)/(float)cell_size + 0.5; 142 simd4i ixp = simd4i(xp); 143 simd4f vx0 = xp-ixp; 144 simd4f vx1 = 1.0f-vx0; 145 146 v = sqrt(v); 147 148 // Now snap the gradient to one of 18 orientations 149 simd4f best_dot = 0; 150 simd4f best_o = 0; 151 for (int o = 0; o < 9; o++) 152 { 153 simd4f dot = grad_x*directions[o](0) + grad_y*directions[o](1); 154 simd4f_bool cmp = dot>best_dot; 155 best_dot = select(cmp,dot,best_dot); 156 dot *= -1; 157 best_o = select(cmp,o,best_o); 158 159 cmp = dot>best_dot; 160 best_dot = select(cmp,dot,best_dot); 161 best_o = select(cmp,o+9,best_o); 162 } 163 164 165 // Add the gradient magnitude, v, to 4 histograms around pixel using 166 // bilinear interpolation. 167 vx1 *= v; 168 vx0 *= v; 169 // The amounts for each bin 170 simd4f v11 = vy1*vx1; 171 simd4f v01 = vy0*vx1; 172 simd4f v10 = vy1*vx0; 173 simd4f v00 = vy0*vx0; 174 175 int32 _best_o[4]; simd4i(best_o).store(_best_o); 176 int32 _ixp[4]; ixp.store(_ixp); 177 float _v11[4]; v11.store(_v11); 178 float _v01[4]; v01.store(_v01); 179 float _v10[4]; v10.store(_v10); 180 float _v00[4]; v00.store(_v00); 181 182 hist[iyp+1] [_ixp[0] ](_best_o[0]) += _v11[0]; 183 hist[iyp+1+1][_ixp[0] ](_best_o[0]) += _v01[0]; 184 hist[iyp+1] [_ixp[0]+1](_best_o[0]) += _v10[0]; 185 hist[iyp+1+1][_ixp[0]+1](_best_o[0]) += _v00[0]; 186 187 hist[iyp+1] [_ixp[1] ](_best_o[1]) += _v11[1]; 188 hist[iyp+1+1][_ixp[1] ](_best_o[1]) += _v01[1]; 189 hist[iyp+1] [_ixp[1]+1](_best_o[1]) += _v10[1]; 190 hist[iyp+1+1][_ixp[1]+1](_best_o[1]) += _v00[1]; 191 192 hist[iyp+1] [_ixp[2] ](_best_o[2]) += _v11[2]; 193 hist[iyp+1+1][_ixp[2] ](_best_o[2]) += _v01[2]; 194 hist[iyp+1] [_ixp[2]+1](_best_o[2]) += _v10[2]; 195 hist[iyp+1+1][_ixp[2]+1](_best_o[2]) += _v00[2]; 196 197 hist[iyp+1] [_ixp[3] ](_best_o[3]) += _v11[3]; 198 hist[iyp+1+1][_ixp[3] ](_best_o[3]) += _v01[3]; 199 hist[iyp+1] [_ixp[3]+1](_best_o[3]) += _v10[3]; 200 hist[iyp+1+1][_ixp[3]+1](_best_o[3]) += _v00[3]; 201 } 202 // Now process the right columns that don't fit into simd registers. 203 for (; x < visible_nc; x++) 204 { 205 matrix<double,2,1> grad; 206 double v; 207 get_gradient(y,x,img,grad,v); 208 209 // snap to one of 18 orientations 210 double best_dot = 0; 211 int best_o = 0; 212 for (int o = 0; o < 9; o++) 213 { 214 const double dot = dlib::dot(directions[o], grad); 215 if (dot > best_dot) 216 { 217 best_dot = dot; 218 best_o = o; 219 } 220 else if (-dot > best_dot) 221 { 222 best_dot = -dot; 223 best_o = o+9; 224 } 225 } 226 227 v = std::sqrt(v); 228 // add to 4 histograms around pixel using bilinear interpolation 229 const double xp = ((double)x+0.5)/(double)cell_size - 0.5; 230 const int ixp = (int)std::floor(xp); 231 const double vx0 = xp-ixp; 232 const double vx1 = 1.0-vx0; 233 234 hist[iyp+1][ixp+1](best_o) += vy1*vx1*v; 235 hist[iyp+1+1][ixp+1](best_o) += vy0*vx1*v; 236 hist[iyp+1][ixp+1+1](best_o) += vy1*vx0*v; 237 hist[iyp+1+1][ixp+1+1](best_o) += vy0*vx0*v; 238 } 239 } 240 241 // compute energy in each block by summing over orientations 242 for (int r = 0; r < cells_nr; ++r) 243 { 244 for (int c = 0; c < cells_nc; ++c) 245 { 246 for (int o = 0; o < 9; o++) 247 { 248 norm[r][c] += (hist[r+1][c+1](o) + hist[r+1][c+1](o+9)) * (hist[r+1][c+1](o) + hist[r+1][c+1](o+9)); 249 } 250 } 251 } 252 253 const double eps = 0.0001; 254 // compute features 255 for (int y = 0; y < hog_nr; y++) 256 { 257 const int yy = y+padding_rows_offset; 258 for (int x = 0; x < hog_nc; x++) 259 { 260 const simd4f z1(norm[y+1][x+1], 261 norm[y][x+1], 262 norm[y+1][x], 263 norm[y][x]); 264 265 const simd4f z2(norm[y+1][x+2], 266 norm[y][x+2], 267 norm[y+1][x+1], 268 norm[y][x+1]); 269 270 const simd4f z3(norm[y+2][x+1], 271 norm[y+1][x+1], 272 norm[y+2][x], 273 norm[y+1][x]); 274 275 const simd4f z4(norm[y+2][x+2], 276 norm[y+1][x+2], 277 norm[y+2][x+1], 278 norm[y+1][x+1]); 279 280 const simd4f nn = 0.2*sqrt(z1+z2+z3+z4+eps); 281 const simd4f n = 0.1/nn; 282 283 simd4f t = 0; 284 285 const int xx = x+padding_cols_offset; 286