Android: previewing the front camera, detecting faces, and measuring the brightness of the face region

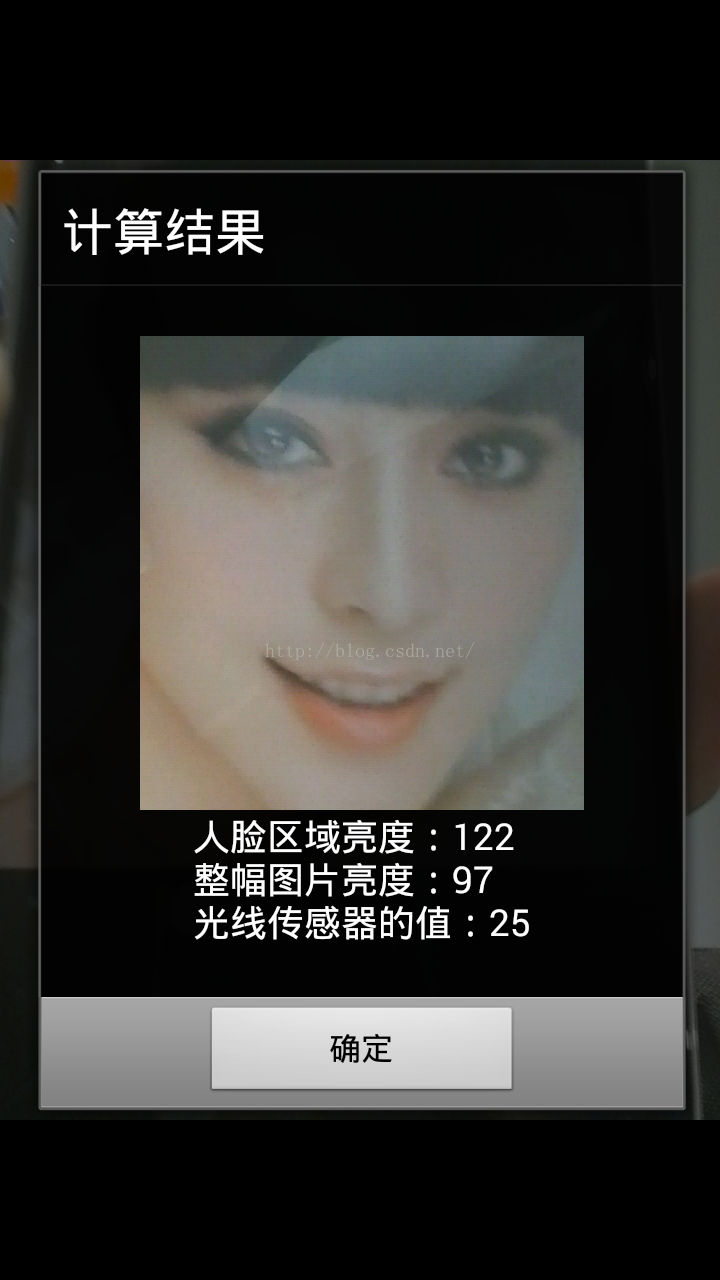

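This post records how to preview the Android front camera, extract the face region, and compute its brightness. The screenshot above shows the program running.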

Here is the code:

1. CameraView, the class used for the front-camera preview:

```java
package test.com.getbright;

import android.app.Activity;
import android.content.Context;
import android.graphics.Bitmap;
import android.graphics.BitmapFactory;
import android.graphics.Point;
import android.hardware.Camera;
import android.hardware.Camera.Size;
import android.media.FaceDetector;
import android.util.AttributeSet;
import android.util.DisplayMetrics;
import android.util.Log;
import android.view.SurfaceHolder;
import android.view.SurfaceView;
import android.view.View;
import android.view.ViewGroup;
import android.view.WindowManager;

import java.io.IOException;
import java.util.List;

/**
 * Created by yubo on 2015/9/20.
 */
public class CameraView extends SurfaceView implements SurfaceHolder.Callback, Camera.PreviewCallback {

    private Context context;
    private Camera camera;
    private FaceView faceView;
    private OnFaceDetectedListener onFaceDetectedListener;

    /** Largest preview size the camera supports */
    private Size maxPreviewSize;

    /** Counter for preview frames */
    private int frameCount;

    /** Whether face detection is running (written on the worker thread, hence volatile) */
    private volatile boolean isDetectingFace = false;

    /** Whether a face has already been detected */
    private volatile boolean detectedFace = false;

    public CameraView(Context context) {
        super(context);
        init(context);
    }

    public CameraView(Context context, AttributeSet attrs) {
        super(context, attrs);
        init(context);
    }

    private void init(Context context) {
        this.context = context;
        getHolder().addCallback(this);
    }

    public void setFaceView(FaceView faceView) {
        if (faceView != null) {
            this.faceView = faceView;
        }
    }

    /** Restarts the camera preview */
    public void reset() {
        if (faceView != null) {
            faceView.setVisibility(View.GONE);
        }
        if (camera != null) {
            camera.setPreviewCallback(this);
            camera.startPreview();
        }
        frameCount = 0;
        detectedFace = false;
        isDetectingFace = false;
    }

    @Override
    public void surfaceCreated(SurfaceHolder holder) {
        try {
            // Camera.open() expects a camera ID, not a CAMERA_FACING_* constant
            int frontId = findFrontCameraId();
            if (frontId < 0) {
                return;
            }
            camera = Camera.open(frontId);
            camera.setDisplayOrientation(90);
            camera.setPreviewDisplay(holder);
            camera.setPreviewCallback(this);
        } catch (IOException | RuntimeException e) {
            // RuntimeException: camera in use or CAMERA permission missing
            e.printStackTrace();
        }
    }

    /** Returns the ID of the first front-facing camera, or -1 if there is none */
    private static int findFrontCameraId() {
        Camera.CameraInfo info = new Camera.CameraInfo();
        for (int i = 0; i < Camera.getNumberOfCameras(); i++) {
            Camera.getCameraInfo(i, info);
            if (info.facing == Camera.CameraInfo.CAMERA_FACING_FRONT) {
                return i;
            }
        }
        return -1;
    }

    @Override
    public void surfaceChanged(SurfaceHolder holder, int format, int width, int height) {
        if (camera != null) {
            // setLayoutParams() below triggers surfaceChanged() again; changing the
            // preview size while the preview is running can fail, so stop it first
            camera.stopPreview();
            maxPreviewSize = getMaxPreviewSize(camera);
            if (maxPreviewSize != null) {
                ViewGroup.LayoutParams params = getLayoutParams();
                Point point = getScreenSize();
                // The display is rotated 90 degrees, so the preview width maps to the view height
                params.width = point.x;
                params.height = maxPreviewSize.width * point.x / maxPreviewSize.height;
                setLayoutParams(params);
                if (faceView != null) {
                    faceView.setLayoutParams(params);
                }
                Camera.Parameters parameters = camera.getParameters();
                parameters.setPreviewSize(maxPreviewSize.width, maxPreviewSize.height);
                camera.setParameters(parameters);
            }
            camera.startPreview();
            frameCount = 0;
            detectedFace = false;
        }
    }

    @Override
    public void surfaceDestroyed(SurfaceHolder holder) {
        if (camera != null) {
            camera.setPreviewCallback(null);
            camera.stopPreview();
            try {
                camera.setPreviewDisplay(null);
            } catch (IOException e) {
                e.printStackTrace();
            }
            camera.release();
            camera = null;
        }
    }

    /** Returns the screen size in pixels */
    private Point getScreenSize() {
        WindowManager manager = (WindowManager) context.getSystemService(Context.WINDOW_SERVICE);
        DisplayMetrics outMetrics = new DisplayMetrics();
        manager.getDefaultDisplay().getMetrics(outMetrics);
        return new Point(outMetrics.widthPixels, outMetrics.heightPixels);
    }

    /** Returns the largest supported preview size (by pixel count) */
    private Size getMaxPreviewSize(Camera camera) {
        List<Size> list = camera.getParameters().getSupportedPreviewSizes();
        if (list != null) {
            int max = 0;
            Size maxSize = null;
            for (Size size : list) {
                int n = size.width * size.height;
                if (n > max) {
                    max = n;
                    maxSize = size;
                }
            }
            return maxSize;
        }
        return null;
    }

    @Override
    public void onPreviewFrame(byte[] data, Camera camera) {
        Log.e("yubo", "onPreviewFrame...");
        frameCount++;
        // Discard the first 15 frames
        if (frameCount > 15 && !isDetectingFace && !detectedFace) {
            Size size = camera.getParameters().getPreviewSize();
            final byte[] byteArray = ImageUtils.yuv2Jpeg(data, size.width, size.height);
            isDetectingFace = true;
            new Thread() {
                @Override
                public void run() {
                    detectFaces(byteArray);
                }
            }.start();
        }
    }

    /**
     * Checks whether the JPEG data contains a face. The image has to be rotated first.
     * Runs on a worker thread.
     */
    private void detectFaces(byte[] data) {
        Bitmap decoded = BitmapFactory.decodeByteArray(data, 0, data.length);
        if (decoded == null) {
            isDetectingFace = false;
            return;
        }
        Bitmap rotated = ImageUtils.rotateBitmap(decoded, -90);
        if (rotated != decoded) {
            decoded.recycle();
        }
        // android.media.FaceDetector only accepts RGB_565 bitmaps
        Bitmap bitmap = rotated.copy(Bitmap.Config.RGB_565, true);
        rotated.recycle();
        FaceDetectorUtils.detectFace(bitmap, new FaceDetectorUtils.Callback() {
            @Override
            public void onFaceDetected(final FaceDetector.Face[] faces, final Bitmap bm) {
                Log.e("yubo", "face detected...");
                if (!detectedFace) {
                    detectedFace = true;
                    ((Activity) context).runOnUiThread(new Runnable() {
                        @Override
                        public void run() {
                            if (camera != null) {
                                camera.stopPreview();
                            }
                            if (faceView != null) {
                                // Bitmap pixels per on-screen pixel
                                float scaleRate = bm.getWidth() * 1.0f / getScreenSize().x;
                                faceView.setScaleRate(scaleRate);
                                faceView.setFaces(faces, bm);
                                faceView.setVisibility(View.VISIBLE);
                            }
                            if (onFaceDetectedListener != null) {
                                onFaceDetectedListener.onFaceDetected(bm);
                            }
                        }
                    });
                }
                // Cleared only after detectedFace is set, so no new detection can slip in
                isDetectingFace = false;
            }

            @Override
            public void onFaceNotDetected(Bitmap bm) {
                bm.recycle();
                if (faceView != null) {
                    faceView.clear();
                }
                isDetectingFace = false;
            }
        });
    }

    /** Listener notified when a face is detected */
    public interface OnFaceDetectedListener {
        void onFaceDetected(Bitmap bm);
    }

    /** Sets the listener for face-detected events */
    public void setOnFaceDetectedListener(OnFaceDetectedListener listener) {
        if (listener != null) {
            this.onFaceDetectedListener = listener;
        }
    }
}
```
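The preview-size logic in `CameraView` does not depend on the camera hardware, so it can be checked off-device. This plain-Java sketch mirrors `getMaxPreviewSize()` and the view-height formula from `surfaceChanged()`; `PreviewSizing`, `PreviewSize` (a stand-in for `Camera.Size`) and `portraitViewHeight` are hypothetical names, and computing the area as a `long` is only a precaution.

```java
import java.util.List;

/** Hypothetical off-device sketch of CameraView's preview sizing. */
final class PreviewSizing {

    /** Stand-in for android.hardware.Camera.Size: width and height in pixels. */
    static final class PreviewSize {
        final int width;
        final int height;

        PreviewSize(int width, int height) {
            this.width = width;
            this.height = height;
        }
    }

    /** Size with the largest pixel area, or null for a null or empty list. */
    static PreviewSize largest(List<PreviewSize> sizes) {
        if (sizes == null) {
            return null;
        }
        PreviewSize best = null;
        long bestArea = 0;
        for (PreviewSize s : sizes) {
            long area = (long) s.width * s.height;
            if (area > bestArea) {
                bestArea = area;
                best = s;
            }
        }
        return best;
    }

    /**
     * With setDisplayOrientation(90) the landscape frame is shown in portrait, so the
     * preview width becomes the view height; keeping the aspect ratio for a view that is
     * screenWidth pixels wide gives previewWidth * screenWidth / previewHeight.
     */
    static int portraitViewHeight(PreviewSize preview, int screenWidth) {
        return (int) ((long) preview.width * screenWidth / preview.height);
    }
}
```

For a 1920×1080 preview on a screen 1080 pixels wide this yields a view 1920 pixels tall.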

2. FaceView, which draws the face region once a face has been detected:

```java
package test.com.getbright;

import android.content.Context;
import android.graphics.Bitmap;
import android.graphics.Canvas;
import android.graphics.Color;
import android.graphics.Paint;
import android.graphics.PointF;
import android.media.FaceDetector;
import android.util.AttributeSet;
import android.util.Log;
import android.widget.ImageView;

/**
 * Created by yubo on 2015/9/5.
 * View that draws the face region
 */
public class FaceView extends ImageView {

    private FaceDetector.Face[] faces;
    private Paint paint;
    private Bitmap bitmap;
    // Face rectangle in view coordinates (used by onDraw)
    private float left;
    private float top;
    private float right;
    private float bottom;
    // Face square in bitmap coordinates (used by getFaceArea)
    private int x;
    private int y;
    private int width;
    /** Bitmap pixels per view pixel */
    private float scaleRate = 1.0f;

    public FaceView(Context context) {
        super(context);
        init(context);
    }

    public FaceView(Context context, AttributeSet attrs) {
        super(context, attrs);
        init(context);
    }

    public FaceView(Context context, AttributeSet attrs, int defStyleAttr) {
        super(context, attrs, defStyleAttr);
        init(context);
    }

    private void init(Context context) {
        paint = new Paint();
        paint.setColor(Color.GREEN);
        paint.setStrokeWidth(5);
        paint.setStyle(Paint.Style.STROKE); // draw an outlined rectangle, not a filled one
    }

    public void setFaces(FaceDetector.Face[] faces, Bitmap bitmap) {
        if (faces != null && faces.length > 0) {
            Log.e("yubo", "FaceView setFaces, face size = " + faces.length);
            this.faces = faces;
            this.bitmap = bitmap;
            setImageBitmap(bitmap);
            calculateFaceArea();
            invalidate();
        } else {
            Log.e("yubo", "FaceView setFaces, faces == null");
        }
    }

    /** Computes the face region */
    private void calculateFaceArea() {
        for (FaceDetector.Face face : faces) {
            if (face != null) {
                PointF pointF = new PointF();
                face.getMidPoint(pointF); // midpoint between the eyes
                float eyesDistance = face.eyesDistance(); // distance between the eyes
                // Square of side 2 * eyesDistance, shifted down by half the eye distance
                float delta = eyesDistance / 2;
                left = (pointF.x - eyesDistance) / scaleRate;
                top = (pointF.y - eyesDistance + delta) / scaleRate;
                right = (pointF.x + eyesDistance) / scaleRate;
                bottom = (pointF.y + eyesDistance + delta) / scaleRate;
                x = (int) (pointF.x - eyesDistance);
                y = (int) (pointF.y - eyesDistance + delta);
                width = (int) (eyesDistance * 2);
            }
        }
    }

    public void setScaleRate(float rate) {
        this.scaleRate = rate;
    }

    /** Clears the data */
    public void clear() {
        this.faces = null;
        postInvalidate();
    }

    /** Returns the face region, enlarged by a margin on every side and clamped to the bitmap */
    public Bitmap getFaceArea() {
        if (bitmap == null || faces == null) {
            return null;
        }
        int margin = 50;
        // Crop with the bitmap coordinates (x, y, width), not the view coordinates of onDraw()
        int cropX = Math.max(0, x - margin);
        int cropY = Math.max(0, y - margin);
        int cropRight = Math.min(bitmap.getWidth(), x + width + margin);
        int cropBottom = Math.min(bitmap.getHeight(), y + width + margin);
        if (cropRight <= cropX || cropBottom <= cropY) {
            return null;
        }
        return Bitmap.createBitmap(bitmap, cropX, cropY, cropRight - cropX, cropBottom - cropY);
    }

    @Override
    protected void onDraw(Canvas canvas) {
        super.onDraw(canvas);
        if (this.faces == null || faces.length == 0) {
            return;
        }
        canvas.drawRect(left, top, right, bottom, paint);
    }
}
```
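The box geometry in `FaceView` can also be checked without a device. This plain-Java sketch reproduces the formulas from `calculateFaceArea()` (a square of side `2 * eyesDistance`, shifted down by half the eye distance), the view mapping by `scaleRate`, and a margin-enlarged crop clamped to the bitmap so `Bitmap.createBitmap()` is never asked for pixels outside it. `FaceBox`, `Rect` and the method names are hypothetical.

```java
/** Hypothetical off-device sketch of FaceView's face-box geometry. */
final class FaceBox {

    /** Axis-aligned rectangle: left, top, width, height in pixels. */
    static final class Rect {
        final int x, y, w, h;

        Rect(int x, int y, int w, int h) {
            this.x = x;
            this.y = y;
            this.w = w;
            this.h = h;
        }
    }

    /** Square face box in bitmap pixels, from the eye midpoint and eye distance. */
    static Rect faceInBitmap(float midX, float midY, float eyesDistance) {
        float delta = eyesDistance / 2; // shift the box down by half the eye distance
        int x = (int) (midX - eyesDistance);
        int y = (int) (midY - eyesDistance + delta);
        int side = (int) (eyesDistance * 2);
        return new Rect(x, y, side, side);
    }

    /** Bitmap coordinate to view coordinate, as FaceView does with "/ scaleRate". */
    static float toView(float bitmapCoord, float scaleRate) {
        return bitmapCoord / scaleRate;
    }

    /** Enlarges the box by margin on every side, then clips it to bmWidth x bmHeight. */
    static Rect clampedCrop(Rect face, int margin, int bmWidth, int bmHeight) {
        int x = Math.max(0, face.x - margin);
        int y = Math.max(0, face.y - margin);
        int right = Math.min(bmWidth, face.x + face.w + margin);
        int bottom = Math.min(bmHeight, face.y + face.h + margin);
        return new Rect(x, y, Math.max(0, right - x), Math.max(0, bottom - y));
    }
}
```

Clipping the right and bottom edges (not just the width) is what keeps `x + width <= bitmap.getWidth()`, the condition `createBitmap()` enforces.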

3. FaceDetectorUtils, the face-detection utility class:

```java
package test.com.getbright;

import android.graphics.Bitmap;
import android.media.FaceDetector;
import android.media.FaceDetector.Face;

/**
 * Created by yubo on 2015/9/5.
 * Face-detection utility class
 */
public class FaceDetectorUtils {

    private FaceDetectorUtils() {
    }

    public interface Callback {
        void onFaceDetected(Face[] faces, Bitmap bitmap);

        void onFaceNotDetected(Bitmap bitmap);
    }

    /**
     * Detects a face in bitmap (which must be RGB_565) and reports the result through
     * callback. The callback takes ownership of the bitmap.
     */
    public static void detectFace(Bitmap bitmap, Callback callback) {
        Face[] faces = new Face[1];
        int faceNum = 0;
        try {
            // Look for at most one face
            FaceDetector faceDetector = new FaceDetector(bitmap.getWidth(), bitmap.getHeight(), 1);
            faceNum = faceDetector.findFaces(bitmap, faces);
        } catch (Exception e) {
            // Fall through to onFaceNotDetected so the caller can reset its state
            e.printStackTrace();
        }
        if (callback == null) {
            return;
        }
        if (faceNum > 0) {
            callback.onFaceDetected(faces, bitmap);
        } else {
            callback.onFaceNotDetected(bitmap);
        }
    }
}
```

4. ImageUtils, the image-processing utility class:
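`CameraView` starts each detection on a new thread and relies on one of the `FaceDetectorUtils.Callback` methods to clear `isDetectingFace`. An alternative, sketched here in plain Java, is an `AtomicBoolean` gate: `compareAndSet(false, true)` makes the check and the set one atomic step, and the `finally` block releases the gate even when detection throws. `DetectionGate` and `tryStart` are hypothetical names, not part of the original project.

```java
import java.util.concurrent.atomic.AtomicBoolean;

/** Hypothetical "one detection at a time" gate. */
final class DetectionGate {
    private final AtomicBoolean busy = new AtomicBoolean(false);

    /** Starts task on a new thread unless one is running; returns whether it started. */
    boolean tryStart(Runnable task) {
        // Atomic check-and-set: two callers can never both observe "not busy"
        if (!busy.compareAndSet(false, true)) {
            return false; // a detection is already running: drop this frame
        }
        new Thread(() -> {
            try {
                task.run();
            } catch (RuntimeException e) {
                // Log rather than let it reach the default handler, which ends the app on Android
                e.printStackTrace();
            } finally {
                busy.set(false); // always release the gate, even after a failure
            }
        }).start();
        return true;
    }

    /** True while a task started by tryStart() is still running. */
    boolean isBusy() {
        return busy.get();
    }
}
```

Frames that arrive while a detection is running are dropped, as in the original `onPreviewFrame()`.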

```java
package test.com.getbright;

import android.graphics.Bitmap;
import android.graphics.ImageFormat;
import android.graphics.Matrix;
import android.graphics.Rect;
import android.graphics.YuvImage;

import java.io.ByteArrayOutputStream;
import java.io.FileOutputStream;
import java.io.IOException;

/**
 * Created by yubo on 2015/8/31.
 * Image-processing utility class
 */
public class ImageUtils {

    /** Converts NV21 preview data to JPEG */
    public static byte[] yuv2Jpeg(byte[] yuvBytes, int width, int height) {
        YuvImage yuvImage = new YuvImage(yuvBytes, ImageFormat.NV21, width, height, null);
        ByteArrayOutputStream baos = new ByteArrayOutputStream();
        yuvImage.compressToJpeg(new Rect(0, 0, width, height), 100, baos);
        return baos.toByteArray();
    }

    /** Rotates the image and mirrors it so it matches the mirrored front-camera preview */
    public static Bitmap rotateBitmap(Bitmap sourceBitmap, int degree) {
        Matrix matrix = new Matrix();
        // Rotate by degree, then flip horizontally
        matrix.setRotate(degree);
        matrix.postScale(-1, 1);
        return Bitmap.createBitmap(sourceBitmap, 0, 0, sourceBitmap.getWidth(),
                sourceBitmap.getHeight(), matrix, true);
    }

    /** Saves bitmap to a JPEG file */
    public static void saveBitmap(Bitmap bitmap, String path) {
        if (bitmap == null) {
            return;
        }
        // try-with-resources closes the stream, which the original code never did
        try (FileOutputStream out = new FileOutputStream(path)) {
            bitmap.compress(Bitmap.CompressFormat.JPEG, 100, out);
        } catch (IOException e) {
            e.printStackTrace();
        }
    }
}
```

5. The layout file, activity_main.xml:
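`yuv2Jpeg()` compresses each frame to JPEG only so it can be decoded back into a `Bitmap`. If only brightness is needed, the NV21 buffer already holds it: the first `width * height` bytes are the Y (luma) plane, one unsigned byte per pixel, followed by interleaved V/U samples at quarter resolution. The sketch below averages Y over a rectangle; it is an alternative technique, not what the original code does. The rectangle is in unrotated sensor coordinates, so a face box from the rotated, mirrored bitmap would have to be mapped back first, and whether luma is full-range (0 to 255) or limited-range (16 to 235) may vary by device, so results need not match `getBright()` exactly. `Nv21Luma` and `averageLuma` are hypothetical names.

```java
/** Hypothetical sketch: average luma read directly from an NV21 frame. */
final class Nv21Luma {

    /** Mean Y over [left, left + w) x [top, top + h) of a width x height NV21 frame. */
    static double averageLuma(byte[] nv21, int width, int height,
                              int left, int top, int w, int h) {
        if (left < 0 || top < 0 || w <= 0 || h <= 0
                || left + w > width || top + h > height
                || nv21.length < width * height) {
            throw new IllegalArgumentException("region outside frame");
        }
        long sum = 0;
        for (int row = top; row < top + h; row++) {
            int offset = row * width; // start of this row in the Y plane
            for (int col = left; col < left + w; col++) {
                sum += nv21[offset + col] & 0xFF; // Java bytes are signed
            }
        }
        return (double) sum / ((long) w * h);
    }
}
```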

```xml
<?xml version="1.0" encoding="utf-8"?>
<FrameLayout
    xmlns:android="http://schemas.android.com/apk/res/android"
    android:layout_width="match_parent"
    android:layout_height="match_parent"
    android:background="@android:color/black">

    <test.com.getbright.CameraView
        android:id="@+id/camera_view"
        android:layout_gravity="center"
        android:layout_width="match_parent"
        android:layout_height="wrap_content"/>

    <test.com.getbright.FaceView
        android:id="@+id/face_view"
        android:layout_gravity="center"
        android:visibility="gone"
        android:layout_width="match_parent"
        android:layout_height="wrap_content"/>

</FrameLayout>
```

6. MainActivity, which shows the result dialog and computes the brightness:

```java
package test.com.getbright;

import android.annotation.SuppressLint;
import android.app.Activity;
import android.app.AlertDialog;
import android.content.DialogInterface;
import android.graphics.Bitmap;
import android.hardware.Camera;
import android.hardware.Sensor;
import android.hardware.SensorEvent;
import android.hardware.SensorEventListener;
import android.hardware.SensorManager;
import android.os.Bundle;
import android.view.LayoutInflater;
import android.view.View;
import android.widget.ImageView;
import android.widget.TextView;
import android.widget.Toast;

public class MainActivity extends Activity {

    CameraView cameraView;
    FaceView faceView;
    Bitmap fullBitmap;
    private SensorManager sensorManager;
    private Sensor sensor;
    private MySensorListener mySensorListener;
    private int sensorBright = 0;

    @Override
    protected void onCreate(Bundle savedInstanceState) {
        super.onCreate(savedInstanceState);
        setContentView(R.layout.activity_main);
        if (!hasFrontCamera()) {
            Toast.makeText(this, "No front camera", Toast.LENGTH_SHORT).show();
            return;
        }
        sensorManager = (SensorManager) getSystemService(SENSOR_SERVICE);
        sensor = sensorManager.getDefaultSensor(Sensor.TYPE_LIGHT);
        mySensorListener = new MySensorListener();
        if (sensor != null) { // not every device has a light sensor
            sensorManager.registerListener(mySensorListener, sensor, SensorManager.SENSOR_DELAY_NORMAL);
        }
        initView();
    }

    private void initView() {
        cameraView = (CameraView) findViewById(R.id.camera_view);
        faceView = (FaceView) findViewById(R.id.face_view);
        cameraView.setFaceView(faceView);
        cameraView.setOnFaceDetectedListener(new CameraView.OnFaceDetectedListener() {
            @Override
            public void onFaceDetected(Bitmap bm) {
                // Called on the UI thread once a face has been detected
                fullBitmap = bm;
                showDialog();
            }
        });
    }

    private class MySensorListener implements SensorEventListener {
        @Override
        public void onSensorChanged(SensorEvent sensorEvent) {
            // Light sensor reading changed; values[0] is the ambient light level in lux
            sensorBright = (int) sensorEvent.values[0];
        }

        @Override
        public void onAccuracyChanged(Sensor sensor, int i) {
        }
    }

    private void showDialog() {
        AlertDialog.Builder builder = new AlertDialog.Builder(this);
        builder.setTitle("Result");
        View contentView = LayoutInflater.from(this).inflate(R.layout.pop_win_layout, null);
        ImageView imageView = (ImageView) contentView.findViewById(R.id.imageview);
        TextView textView = (TextView) contentView.findViewById(R.id.textview);
        builder.setView(contentView);
        Bitmap bm = faceView.getFaceArea();
        imageView.setImageBitmap(bm);
        textView.setText("Face-region brightness: " + getBright(bm)
                + "\nWhole-image brightness: " + getBright(fullBitmap)
                + "\nLight sensor value: " + sensorBright);
        builder.setPositiveButton("OK", new DialogInterface.OnClickListener() {
            @Override
            public void onClick(DialogInterface dialogInterface, int i) {
                cameraView.reset();
            }
        });
        builder.setCancelable(false);
        builder.create().show();
    }

    /** Average of 0.299 R + 0.587 G + 0.114 B over all pixels (0 to 255) */
    public int getBright(Bitmap bm) {
        if (bm == null || bm.getWidth() == 0 || bm.getHeight() == 0) {
            return 0;
        }
        int width = bm.getWidth();
        int height = bm.getHeight();
        int[] pixels = new int[width * height];
        // One bulk read instead of calling getPixel() for every pixel
        bm.getPixels(pixels, 0, width, 0, 0, width, height);
        // double: no per-pixel truncation and no int overflow on large images
        double bright = 0;
        for (int pixel : pixels) {
            int r = (pixel >> 16) & 0xff;
            int g = (pixel >> 8) & 0xff;
            int b = pixel & 0xff;
            bright += 0.299 * r + 0.587 * g + 0.114 * b;
        }
        return (int) (bright / pixels.length);
    }

    /**
     * Checks whether the device has a front camera
     */
    @SuppressLint("NewApi")
    public static boolean hasFrontCamera() {
        Camera.CameraInfo info = new Camera.CameraInfo();
        int count = Camera.getNumberOfCameras();
        for (int i = 0; i < count; i++) {
            Camera.getCameraInfo(i, info);
            if (info.facing == Camera.CameraInfo.CAMERA_FACING_FRONT) {
                return true;
            }
        }
        return false;
    }

    @Override
    protected void onDestroy() {
        super.onDestroy();
        if (sensorManager != null) { // null when onCreate() returned early
            sensorManager.unregisterListener(mySensorListener);
        }
    }
}
```
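`getBright()` averages the weighted sum 0.299 R + 0.587 G + 0.114 B (the BT.601 luma weights) over every pixel. The plain-Java sketch below applies the same formula to a packed ARGB array, such as the one `Bitmap.getPixels(pixels, 0, width, 0, 0, width, height)` fills, so the arithmetic can be tested on a JVM. `ArgbBrightness` and `averageBrightness` are hypothetical names.

```java
/** Hypothetical JVM-only counterpart of MainActivity.getBright(). */
final class ArgbBrightness {

    /** Average weighted luma of packed ARGB pixels (alpha ignored), truncated to an int. */
    static int averageBrightness(int[] argbPixels) {
        if (argbPixels == null || argbPixels.length == 0) {
            return 0; // nothing to average
        }
        double sum = 0; // double: no per-pixel truncation, no int overflow
        for (int p : argbPixels) {
            int r = (p >> 16) & 0xFF; // bits 16-23
            int g = (p >> 8) & 0xFF;  // bits 8-15
            int b = p & 0xFF;         // bits 0-7
            sum += 0.299 * r + 0.587 * g + 0.114 * b;
        }
        return (int) (sum / argbPixels.length);
    }
}
```

A pure-red pixel scores 76 and a pure-green pixel 149, which shows how strongly the green channel drives the result under these weights.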
