Kubernetes + Docker + Calico Cluster Installation and Configuration

I. Environment

| OS | Hostname | Node / role | IP | Notes |
|---|---|---|---|---|
| CentOS 7.5 x86_64 | k8s-master | master/etcd/registry | 192.168.168.2 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd, docker, calico-image |
| CentOS 7.5 x86_64 | work-node01 | node01/etcd | 192.168.168.3 | kube-proxy, kubelet, etcd, docker, calico |
| CentOS 7.5 x86_64 | work-node02 | node02/etcd | 192.168.168.4 | kube-proxy, kubelet, etcd, docker, calico |

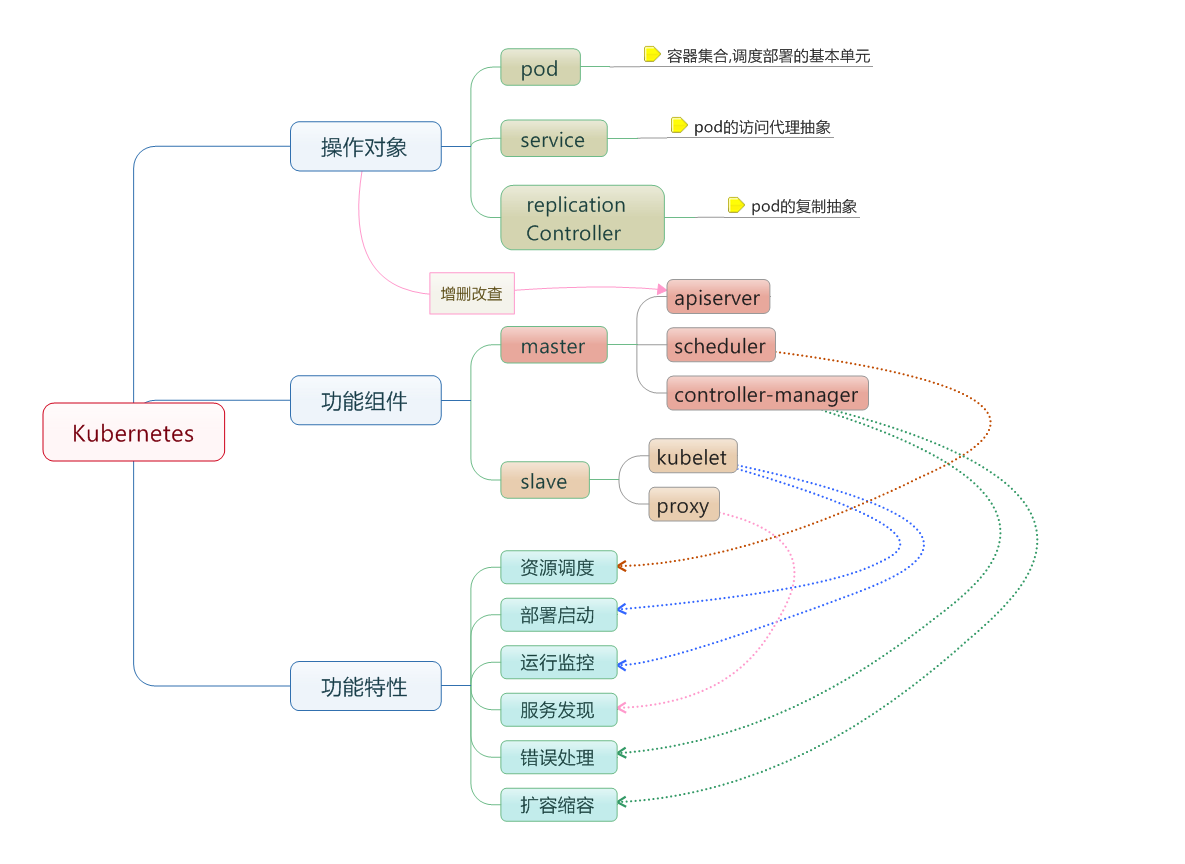

Functions of each cluster module:

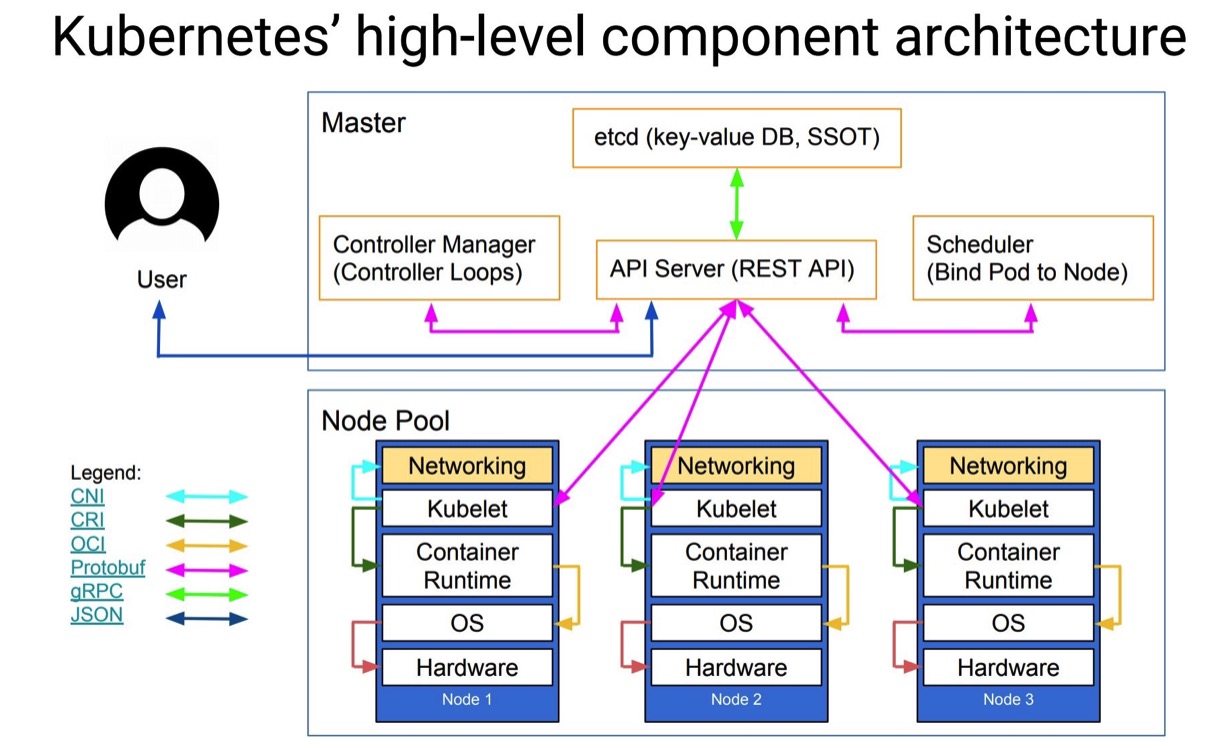

Master node:

The master node consists of four main modules: APIServer, scheduler, controller-manager, and etcd.

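APIServer: the APIServer exposes the Kubernetes API as a RESTful service and is the single entry point for all management commands. Every create, read, update, or delete operation on a resource is processed by the APIServer before it is persisted to etcd. As the figure shows, kubectl (the client tool shipped with Kubernetes, which internally calls the Kubernetes API) talks directly to the APIServer.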

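scheduler: the scheduler assigns Pods to suitable Nodes. If you view the scheduler as a black box, its input is a Pod plus a list of Nodes, and its output is a binding of that Pod to one Node. Kubernetes ships with built-in scheduling algorithms and also keeps an extension interface, so users can define their own scheduling algorithms to suit their needs.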
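To make the black-box view concrete, here is a toy sketch in shell. It is not the kube-scheduler algorithm: it assumes, purely for illustration, that free CPU is the only criterion, and binds the pod to the node with the most free millicores. The helper name pick_node and the sample numbers are hypothetical.

```sh
#!/bin/sh
# Toy scheduler (hypothetical illustration, NOT kube-scheduler's real logic).
# Input on stdin: one "<node-name> <free-millicpu>" pair per line.
# Output: the binding "<pod> -> <node>" for the node with the most free CPU.
pick_node() {
  pod="$1"
  # Sort on the second column, numerically and descending; keep the top node.
  node=$(sort -k2,2nr | head -n 1 | cut -d' ' -f1)
  echo "$pod -> $node"
}

printf 'work-node01 1500\nwork-node02 3200\n' | pick_node nginx-pod
# prints: nginx-pod -> work-node02
```

The real scheduler filters nodes and then scores them with many plugins; the sketch only mirrors the "Pod plus node list in, one binding out" shape.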

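controller manager: if the APIServer does the front-end work, the controller manager handles the back-end work. Each resource type has a corresponding controller, and the controller manager runs and manages these controllers. For example, when we create a Pod through the APIServer, the APIServer's job is done as soon as the Pod object has been created; from then on, the controllers are responsible for driving the actual state toward the desired state.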
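The controller idea is a loop that repeatedly compares desired state with observed state and acts on the difference. A minimal sketch of one iteration, assuming a replica count is the only state (reconcile is a hypothetical name, not a Kubernetes API):

```sh
#!/bin/sh
# Toy reconcile step (hypothetical illustration of the controller pattern):
# compare the desired replica count with the observed count and report the
# action a ReplicaSet-style controller would take to close the gap.
reconcile() {
  desired="$1"
  actual="$2"
  if [ "$actual" -lt "$desired" ]; then
    echo "create $((desired - actual)) pod(s)"
  elif [ "$actual" -gt "$desired" ]; then
    echo "delete $((actual - desired)) pod(s)"
  else
    echo "in sync"
  fi
}

reconcile 3 1   # prints: create 2 pod(s)
```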

etcd: etcd is a highly available key-value store. Kubernetes uses it to store the state of every resource, which backs the RESTful API.

Worker node:

Each worker node runs two main Kubernetes modules: kubelet and kube-proxy.

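kube-proxy: this module implements service discovery and reverse proxying in Kubernetes. kube-proxy forwards both TCP and UDP connections and, by default, uses a round-robin algorithm to distribute client traffic to the set of backend pods behind a Service. For service discovery, kube-proxy watches Service and Endpoints objects (through the APIServer, which stores them in etcd) and maintains a mapping from each Service to its endpoints, so changes in backend pod IPs are transparent to clients. kube-proxy also supports session affinity.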
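Round-robin selection simply hands connection number n to backend number (n mod number of backends). A toy sketch of that rule; rr_backend and the pod IPs (taken from the 10.2.0.0/16 pod range used later) are hypothetical:

```sh
#!/bin/sh
# Toy round-robin selection (illustration only): connection number n is sent
# to backend number (n mod number_of_backends). In IPVS or iptables mode,
# kube-proxy programs kernel rules instead of running code per connection.
rr_backend() {
  n="$1"
  shift
  # Drop (n mod $#) leading entries so that $1 becomes the chosen backend.
  shift $((n % $#))
  echo "$1"
}

for i in 0 1 2 3; do
  rr_backend "$i" 10.2.1.5:80 10.2.2.7:80 10.2.1.9:80
done
# prints 10.2.1.5:80, 10.2.2.7:80, 10.2.1.9:80, then 10.2.1.5:80 again
```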

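kubelet: kubelet is the master's agent on each node and the most important module on a node. It maintains and manages all containers on that node, but it does not manage containers that were not created through Kubernetes. In essence, it keeps the running state of Pods consistent with their desired state.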

II. Preparation on all 3 hosts

1) Update packages and the kernel

yum -y update

2) Disable the firewall

systemctl stop firewalld.service

systemctl disable firewalld.service

3) Disable SELinux

vi /etc/selinux/config

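Change SELINUX=enforcing to SELINUX=disabled (this takes effect after a reboot; run setenforce 0 to switch to permissive mode immediately).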

4) Install common tools

yum -y install net-tools ntpdate conntrack-tools

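5) Tune kernel parameters (for example, put the following lines in /etc/sysctl.d/k8s.conf and apply them with sysctl --system; the net.bridge.* keys are only available after the br_netfilter module is loaded):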

net.ipv4.ip_forward=1

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

net.ipv4.ip_local_port_range = 30000 60999

net.netfilter.nf_conntrack_max = 26214400

net.netfilter.nf_conntrack_tcp_timeout_established = 86400

net.netfilter.nf_conntrack_tcp_timeout_close_wait = 3600

III. Set the hostnames of the three hosts

1) k8s-master

hostnamectl --static set-hostname k8s-master

2) work-node01

hostnamectl --static set-hostname work-node01

3) work-node02

hostnamectl --static set-hostname work-node02

IV. Create the CA certificate

1. Create the directories for generating and storing certificates (on all 3 hosts)

mkdir /root/ssl

mkdir -p /opt/kubernetes/{conf,bin,ssl,yaml}

2. Set environment variables (on all 3 hosts)

vi /etc/profile.d/kubernetes.sh

K8S_HOME=/opt/kubernetes

export PATH=$K8S_HOME/bin/:$PATH

source /etc/profile.d/kubernetes.sh

3. Install CFSSL and copy it to node01 and node02

cd /root/ssl

wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

chmod +x cfssl*

mv cfssl-certinfo_linux-amd64 /opt/kubernetes/bin/cfssl-certinfo

mv cfssljson_linux-amd64 /opt/kubernetes/bin/cfssljson

mv cfssl_linux-amd64 /opt/kubernetes/bin/cfssl

scp /opt/kubernetes/bin/cfssl* 192.168.168.3:/opt/kubernetes/bin

scp /opt/kubernetes/bin/cfssl* 192.168.168.4:/opt/kubernetes/bin

4. Create the JSON config file used to generate the CA

cd /root/ssl

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

EOF

server auth: a client can use this CA to verify the certificate presented by a server.

client auth: a server can use this CA to verify the certificate presented by a client.
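The "expiry": "87600h" value used for both the default signing policy and the kubernetes profile works out to 10 years (87600 / 24 = 3650 days). A quick check, using a hypothetical helper hours_to_years that ignores leap days:

```sh
#!/bin/sh
# Convert a cfssl expiry given in hours (as in ca-config.json) into whole
# years, ignoring leap days.
hours_to_years() {
  echo $(( $1 / 24 / 365 ))
}

hours_to_years 87600   # prints: 10
```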

5. Create the JSON config file for the CA certificate signing request (CSR)

cat > ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

6. Generate the CA certificate and private key

# cfssl gencert -initca ca-csr.json | cfssljson -bare ca

# ls ca*

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem

Distribute the certificates to each node with scp

# cp ca.csr ca.pem ca-key.pem ca-config.json /opt/kubernetes/ssl

# scp ca.csr ca.pem ca-key.pem ca-config.json 192.168.168.3:/opt/kubernetes/ssl

# scp ca.csr ca.pem ca-key.pem ca-config.json 192.168.168.4:/opt/kubernetes/ssl

7. Create the etcd certificate request

# cat > etcd-csr.json <<EOF

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"192.168.168.2",

"192.168.168.3",

"192.168.168.4",

"k8s-master",

"work-node01",

"work-node02"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

8. Generate the etcd certificate and private key

# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

# ls etc*

etcd.csr etcd-key.pem etcd.pem

Distribute the certificate files

# cp /root/ssl/etcd*.pem /opt/kubernetes/ssl

# scp /root/ssl/etcd*.pem 192.168.168.3:/opt/kubernetes/ssl

# scp /root/ssl/etcd*.pem 192.168.168.4:/opt/kubernetes/ssl

V. Etcd cluster installation and configuration (the clocks of all 3 hosts must be synchronized first)

1. Edit the hosts file (on all 3 hosts)

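Add the following entries to /etc/hosts, either with vi /etc/hosts or by appending them:

# echo '192.168.168.2 k8s-master
192.168.168.3 work-node01
192.168.168.4 work-node02' >> /etc/hosts

2. Download the etcd release package (etcd-v3.3.7-linux-amd64.tar.gz)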

3. Extract and install etcd (same configuration on all 3 hosts)

mkdir /var/lib/etcd

tar -zxvf etcd-v3.3.7-linux-amd64.tar.gz

cp etcd-v3.3.7-linux-amd64/{etcd,etcdctl} /opt/kubernetes/bin

4. Create the etcd systemd unit file

cat > /usr/lib/systemd/system/etcd.service <<EOF

[Unit]

Description=Etcd Server

After=network.target

[Service]


WorkingDirectory=/var/lib/etcd

EnvironmentFile=/opt/kubernetes/conf/etcd.conf

# GOMAXPROCS caps the number of CPUs etcd uses; set it to the number of processors if desired

ExecStart=/bin/bash -c "GOMAXPROCS=1 /opt/kubernetes/bin/etcd"

Type=notify

[Install]

WantedBy=multi-user.target

EOF

Distribute etcd.service to each node

scp /usr/lib/systemd/system/etcd.service 192.168.168.3:/usr/lib/systemd/system/etcd.service

scp /usr/lib/systemd/system/etcd.service 192.168.168.4:/usr/lib/systemd/system/etcd.service

5. Edit etcd.conf on k8s-master (192.168.168.2)

vi /opt/kubernetes/conf/etcd.conf

#[Member]

#ETCD_CORS=""

ETCD_DATA_DIR="/var/lib/etcd/k8s-master.etcd"

#ETCD_WAL_DIR=""

ETCD_LISTEN_PEER_URLS="https://0.0.0.0:2380"

ETCD_LISTEN_CLIENT_URLS="https://0.0.0.0:2379,http://127.0.0.1:4001"

#ETCD_MAX_SNAPSHOTS="5"

#ETCD_MAX_WALS="5"

ETCD_NAME="k8s-master"

#ETCD_SNAPSHOT_COUNT="100000"

#ETCD_HEARTBEAT_INTERVAL="100"

#ETCD_ELECTION_TIMEOUT="1000"

#ETCD_QUOTA_BACKEND_BYTES="0"

#ETCD_MAX_REQUEST_BYTES="1572864"

#ETCD_GRPC_KEEPALIVE_MIN_TIME="5s"

#ETCD_GRPC_KEEPALIVE_INTERVAL="2h0m0s"

#ETCD_GRPC_KEEPALIVE_TIMEOUT="20s"

#

#[Clustering]

#ETCD_INITIAL_ADVERTISE_PEER_URLS="http://localhost:2380"

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.168.2:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.168.2:2379"

#ETCD_DISCOVERY=""

#ETCD_DISCOVERY_FALLBACK="proxy"

#ETCD_DISCOVERY_PROXY=""

#ETCD_DISCOVERY_SRV=""

ETCD_INITIAL_CLUSTER="k8s-master=https://192.168.168.2:2380,work-node01=https://192.168.168.3:2380,work-node02=https://192.168.168.4:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

#ETCD_STRICT_RECONFIG_CHECK="true"

#ETCD_ENABLE_V2="true"

#

#[Proxy]

#ETCD_PROXY="off"

#ETCD_PROXY_FAILURE_WAIT="5000"

#ETCD_PROXY_REFRESH_INTERVAL="30000"

#ETCD_PROXY_DIAL_TIMEOUT="1000"

#ETCD_PROXY_WRITE_TIMEOUT="5000"

#ETCD_PROXY_READ_TIMEOUT="0"

#

#[Security]

ETCD_CLIENT_CERT_AUTH="true"

ETCD_CA_FILE="/opt/kubernetes/ssl/ca.pem"

ETCD_CERT_FILE="/opt/kubernetes/ssl/etcd.pem"

ETCD_KEY_FILE="/opt/kubernetes/ssl/etcd-key.pem"

ETCD_PEER_CLIENT_CERT_AUTH="true"

ETCD_PEER_CA_FILE="/opt/kubernetes/ssl/ca.pem"

ETCD_PEER_CERT_FILE="/opt/kubernetes/ssl/etcd.pem"

ETCD_PEER_KEY_FILE="/opt/kubernetes/ssl/etcd-key.pem"

#

#[Logging]

#ETCD_DEBUG="false"

#ETCD_LOG_PACKAGE_LEVELS=""

#ETCD_LOG_OUTPUT="default"

#

#[Unsafe]

#ETCD_FORCE_NEW_CLUSTER="false"

#

#[Version]

#ETCD_VERSION="false"

#ETCD_AUTO_COMPACTION_RETENTION="0"

#

#[Profiling]

#ETCD_ENABLE_PPROF="false"

#ETCD_METRICS="basic"

#

#[Auth]

#ETCD_AUTH_TOKEN="simple"

6. Edit etcd.conf on work-node01 (192.168.168.3)

vi /opt/kubernetes/conf/etcd.conf

#[Member]

#ETCD_CORS=""

ETCD_DATA_DIR="/var/lib/etcd/work-node01.etcd"

#ETCD_WAL_DIR=""

ETCD_LISTEN_PEER_URLS="https://0.0.0.0:2380"

ETCD_LISTEN_CLIENT_URLS="https://0.0.0.0:2379,https://127.0.0.1:4001"

#ETCD_MAX_SNAPSHOTS="5"

#ETCD_MAX_WALS="5"

ETCD_NAME="work-node01"

#ETCD_SNAPSHOT_COUNT="100000"

#ETCD_HEARTBEAT_INTERVAL="100"

#ETCD_ELECTION_TIMEOUT="1000"

#ETCD_QUOTA_BACKEND_BYTES="0"

#ETCD_MAX_REQUEST_BYTES="1572864"

#ETCD_GRPC_KEEPALIVE_MIN_TIME="5s"

#ETCD_GRPC_KEEPALIVE_INTERVAL="2h0m0s"

#ETCD_GRPC_KEEPALIVE_TIMEOUT="20s"

#

#[Clustering]

#ETCD_INITIAL_ADVERTISE_PEER_URLS="http://localhost:2380"

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.168.3:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.168.3:2379"

#ETCD_DISCOVERY=""

#ETCD_DISCOVERY_FALLBACK="proxy"

#ETCD_DISCOVERY_PROXY=""

#ETCD_DISCOVERY_SRV=""

ETCD_INITIAL_CLUSTER="k8s-master=https://192.168.168.2:2380,work-node01=https://192.168.168.3:2380,work-node02=https://192.168.168.4:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

#ETCD_STRICT_RECONFIG_CHECK="true"

#ETCD_ENABLE_V2="true"

#

#[Proxy]

#ETCD_PROXY="off"

#ETCD_PROXY_FAILURE_WAIT="5000"

#ETCD_PROXY_REFRESH_INTERVAL="30000"

#ETCD_PROXY_DIAL_TIMEOUT="1000"

#ETCD_PROXY_WRITE_TIMEOUT="5000"

#ETCD_PROXY_READ_TIMEOUT="0"

#

#[Security]

ETCD_CLIENT_CERT_AUTH="true"

ETCD_CA_FILE="/opt/kubernetes/ssl/ca.pem"

ETCD_CERT_FILE="/opt/kubernetes/ssl/etcd.pem"

ETCD_KEY_FILE="/opt/kubernetes/ssl/etcd-key.pem"

ETCD_PEER_CLIENT_CERT_AUTH="true"

ETCD_PEER_CA_FILE="/opt/kubernetes/ssl/ca.pem"

ETCD_PEER_CERT_FILE="/opt/kubernetes/ssl/etcd.pem"

ETCD_PEER_KEY_FILE="/opt/kubernetes/ssl/etcd-key.pem"

#

#[Logging]

#ETCD_DEBUG="false"

#ETCD_LOG_PACKAGE_LEVELS=""

#ETCD_LOG_OUTPUT="default"

#

#[Unsafe]

#ETCD_FORCE_NEW_CLUSTER="false"

#

#[Version]

#ETCD_VERSION="false"

#ETCD_AUTO_COMPACTION_RETENTION="0"

#

#[Profiling]

#ETCD_ENABLE_PPROF="false"

#ETCD_METRICS="basic"

#

#[Auth]

#ETCD_AUTH_TOKEN="simple"

7. Edit etcd.conf on work-node02 (192.168.168.4)

vi /opt/kubernetes/conf/etcd.conf

#[Member]

#ETCD_CORS=""

ETCD_DATA_DIR="/var/lib/etcd/work-node02.etcd"

#ETCD_WAL_DIR=""

ETCD_LISTEN_PEER_URLS="https://0.0.0.0:2380"

ETCD_LISTEN_CLIENT_URLS="https://0.0.0.0:2379,https://127.0.0.1:4001"

#ETCD_MAX_SNAPSHOTS="5"

#ETCD_MAX_WALS="5"

ETCD_NAME="work-node02"

#ETCD_SNAPSHOT_COUNT="100000"

#ETCD_HEARTBEAT_INTERVAL="100"

#ETCD_ELECTION_TIMEOUT="1000"

#ETCD_QUOTA_BACKEND_BYTES="0"

#ETCD_MAX_REQUEST_BYTES="1572864"

#ETCD_GRPC_KEEPALIVE_MIN_TIME="5s"

#ETCD_GRPC_KEEPALIVE_INTERVAL="2h0m0s"

#ETCD_GRPC_KEEPALIVE_TIMEOUT="20s"

#

#[Clustering]

#ETCD_INITIAL_ADVERTISE_PEER_URLS="http://localhost:2380"

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.168.4:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.168.4:2379"

#ETCD_DISCOVERY=""

#ETCD_DISCOVERY_FALLBACK="proxy"

#ETCD_DISCOVERY_PROXY=""

#ETCD_DISCOVERY_SRV=""

ETCD_INITIAL_CLUSTER="k8s-master=https://192.168.168.2:2380,work-node01=https://192.168.168.3:2380,work-node02=https://192.168.168.4:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

#ETCD_STRICT_RECONFIG_CHECK="true"

#ETCD_ENABLE_V2="true"

#

#[Proxy]

#ETCD_PROXY="off"

#ETCD_PROXY_FAILURE_WAIT="5000"

#ETCD_PROXY_REFRESH_INTERVAL="30000"

#ETCD_PROXY_DIAL_TIMEOUT="1000"

#ETCD_PROXY_WRITE_TIMEOUT="5000"

#ETCD_PROXY_READ_TIMEOUT="0"

#

#[Security]

ETCD_CLIENT_CERT_AUTH="true"

ETCD_CA_FILE="/opt/kubernetes/ssl/ca.pem"

ETCD_CERT_FILE="/opt/kubernetes/ssl/etcd.pem"

ETCD_KEY_FILE="/opt/kubernetes/ssl/etcd-key.pem"

ETCD_PEER_CLIENT_CERT_AUTH="true"

ETCD_PEER_CA_FILE="/opt/kubernetes/ssl/ca.pem"

ETCD_PEER_CERT_FILE="/opt/kubernetes/ssl/etcd.pem"

ETCD_PEER_KEY_FILE="/opt/kubernetes/ssl/etcd-key.pem"

#

#[Logging]

#ETCD_DEBUG="false"

#ETCD_LOG_PACKAGE_LEVELS=""

#ETCD_LOG_OUTPUT="default"

#

#[Unsafe]

#ETCD_FORCE_NEW_CLUSTER="false"

#

#[Version]

#ETCD_VERSION="false"

#ETCD_AUTO_COMPACTION_RETENTION="0"

#

#[Profiling]

#ETCD_ENABLE_PPROF="false"

#ETCD_METRICS="basic"

#

#[Auth]

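#ETCD_AUTH_TOKEN="simple"

8. Start etcd on each etcd node and enable it at boot (start all three members at about the same time, since a new member waits for its peers before reporting ready)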

# systemctl daemon-reload

# systemctl enable etcd

# systemctl start etcd.service

# systemctl status etcd.service
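The three etcd.conf files in steps 5 to 7 differ mainly in the member name and IP. If you rebuild or add a member, a small sketch like the following prints those node-specific lines; etcd_node_conf is a hypothetical helper, and its output still has to be merged into the shared settings by hand.

```sh
#!/bin/sh
# Print the node-specific lines of etcd.conf for one member.
# Usage: etcd_node_conf <member-name> <member-ip>
etcd_node_conf() {
  name="$1"
  ip="$2"
  cat <<EOF
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/${name}.etcd"
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
EOF
}

etcd_node_conf work-node02 192.168.168.4
```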

9. Verify the etcd cluster configuration on each etcd node

# etcd --version    # check the installed etcd version

etcd Version: 3.3.7

Git SHA: 56536de55

Go Version: go1.9.6

Go OS/Arch: linux/amd64

Check the health of the etcd cluster

# etcdctl --endpoints=https://192.168.168.2:2379 \

--ca-file=/opt/kubernetes/ssl/ca.pem \

--cert-file=/opt/kubernetes/ssl/etcd.pem \

--key-file=/opt/kubernetes/ssl/etcd-key.pem cluster-health

member b1840b0a404e1103 is healthy: got healthy result from https://192.168.168.2:2379

member d15b66900329a12d is healthy: got healthy result from https://192.168.168.4:2379

member f9794412c46a9cb0 is healthy: got healthy result from https://192.168.168.3:2379

cluster is healthy

List the etcd cluster members

# etcdctl --endpoints=https://192.168.168.2:2379 \

--ca-file=/opt/kubernetes/ssl/ca.pem \

--cert-file=/opt/kubernetes/ssl/etcd.pem \

--key-file=/opt/kubernetes/ssl/etcd-key.pem member list

b1840b0a404e1103: name=k8s-master peerURLs=https://192.168.168.2:2380 clientURLs=https://192.168.168.2:2379 isLeader=false

d15b66900329a12d: name=work-node02 peerURLs=https://192.168.168.4:2380 clientURLs=https://192.168.168.4:2379 isLeader=true

f9794412c46a9cb0: name=work-node01 peerURLs=https://192.168.168.3:2380 clientURLs=https://192.168.168.3:2379 isLeader=false

VI. Install docker-engine on all 3 hosts

1. For the detailed steps, see "Installing Docker on Oracle Linux 7".

2. Configure Docker to connect to the etcd cluster

Configure Docker's JSON file (daemon.json)

# vi /etc/docker/daemon.json

{

"bip": "172.17.0.1/24",

"exec-opts": ["native.cgroupdriver=systemd"],

"registry-mirrors": ["https://wghlmi3i.mirror.aliyuncs.com"],

"cluster-store": "etcd://192.168.168.2:2379,192.168.168.3:2379,192.168.168.4:2379"

}

Note: the docker bip address must be different on each node, e.g. 172.17.1.1/24 on node01 and 172.17.2.1/24 on node02.
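Following the pattern in the note, each host gets its own /24 under 172.17.0.0/16. A sketch that derives the bip value from a node index; docker_bip is a hypothetical helper, and the mapping 0 = k8s-master, 1 = work-node01, 2 = work-node02 is an assumption matching the examples above.

```sh
#!/bin/sh
# Print the Docker "bip" for a node following the 172.17.<index>.1/24 pattern.
# Assumed mapping: 0 = k8s-master, 1 = work-node01, 2 = work-node02.
docker_bip() {
  index="$1"
  if [ "$index" -lt 0 ] || [ "$index" -gt 255 ]; then
    echo "index must be between 0 and 255" >&2
    return 1
  fi
  echo "172.17.${index}.1/24"
}

for i in 0 1 2; do
  docker_bip "$i"
done
```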

Edit the Docker systemd unit file

# vi /usr/lib/systemd/system/docker.service

Change

ExecStart=/usr/bin/dockerd

to

ExecStart=/usr/bin/dockerd --tlsverify \

--tlscacert=/opt/kubernetes/ssl/ca.pem \

--tlscert=/opt/kubernetes/ssl/etcd.pem \

--tlskey=/opt/kubernetes/ssl/etcd-key.pem -H tcp://0.0.0.0:2375 -H unix:///var/run/docker.sock

# systemctl daemon-reload

# systemctl enable docker.service

# systemctl start docker.service

# systemctl status docker.service

3. Test the Docker TLS configuration

VII. Kubernetes cluster installation and configuration

1. Download the Kubernetes release packages:

kubernetes-server-linux-amd64.tar.gz

kubernetes-node-linux-amd64.tar.gz

2. Extract the Kubernetes archives; this creates a kubernetes directory

tar -zxvf kubernetes-server-linux-amd64.tar.gz

tar -zxvf kubernetes-node-linux-amd64.tar.gz

3. Configure k8s-master (192.168.168.2)

1) Copy the Kubernetes executables to /opt/kubernetes/bin

# cp -r /opt/software/kubernetes/server/bin/{kube-apiserver,kube-controller-manager,kube-scheduler,kubectl,kubeadm} /opt/kubernetes/bin/

# scp /opt/software/kubernetes/node/bin/{kubectl,kube-proxy,kubelet} 192.168.168.3:/opt/kubernetes/bin/

# scp /opt/software/kubernetes/node/bin/{kubectl,kube-proxy,kubelet} 192.168.168.4:/opt/kubernetes/bin/

2) Create the JSON config file for the Kubernetes CSR:

# cd /root/ssl

# cat > kubernetes-csr.json <<EOF

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"192.168.168.2",

"192.168.168.3",

"192.168.168.4",

"10.1.0.1",

"10.2.0.1",

"localhost",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

Note: 10.1.0.1 is the first IP of the service-cluster-ip-range (10.1.0.0/16), and 10.2.0.1 is the first IP of the cluster-cidr (10.2.0.0/16).
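The "first IP" in the note is the network address of the range plus one. A sketch that computes it with plain shell arithmetic; first_ip, ip_to_int and int_to_ip are hypothetical helper names.

```sh
#!/bin/sh
# Compute the first IP (network address + 1) of an IPv4 CIDR.
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1            # split "a.b.c.d" into $1..$4
  IFS=$old_ifs
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

int_to_ip() {
  n="$1"
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

first_ip() {
  net="${1%/*}"
  prefix="${1#*/}"
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  int_to_ip $(( ($(ip_to_int "$net") & mask) + 1 ))
}

first_ip 10.1.0.0/16   # service-cluster-ip-range: prints 10.1.0.1
first_ip 10.2.0.0/16   # cluster-cidr:             prints 10.2.0.1
```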

3) Generate the Kubernetes certificate and private key in /root/ssl and distribute them to each node

# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kubernetes-csr.json | cfssljson -bare kubernetes

# cp kubernetes*.pem /opt/kubernetes/ssl/

# scp kubernetes*.pem 192.168.168.3:/opt/kubernetes/ssl/

# scp kubernetes*.pem 192.168.168.4:/opt/kubernetes/ssl/

4) Create the JSON config file for the admin certificate CSR

# cat > admin-csr.json <<EOF

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

5) Generate the admin certificate and private key

# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

# cp admin*.pem /opt/kubernetes/ssl/

6) Create the client token file used by kube-apiserver

# mkdir /opt/kubernetes/token    # run the same step on every k8s node

# export BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')    # generate a random 128-bit token (32 hex characters)
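As an optional sanity check, a token produced by the command above should consist of exactly 32 lowercase hexadecimal characters (16 random bytes). A sketch with hypothetical helper names gen_token and is_valid_token:

```sh
#!/bin/sh
# Generate a token the same way as above (16 random bytes -> hex) and check
# that it consists of exactly 32 lowercase hexadecimal characters (128 bits).
gen_token() {
  head -c 16 /dev/urandom | od -An -t x | tr -d ' '
}

is_valid_token() {
  printf '%s\n' "$1" | grep -Eqx '[0-9a-f]{32}'
}

tok=$(gen_token)
if is_valid_token "$tok"; then
  echo "token looks valid: $tok"
else
  echo "unexpected token format: $tok" >&2
fi
```

On the master, is_valid_token "$BOOTSTRAP_TOKEN" checks the value exported above before it is written to bootstrap-token.csv.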

# cat > /opt/kubernetes/token/bootstrap-token.csv <<EOF

${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

7) Create the basic username/password authentication file

# vi /opt/kubernetes/token/basic-auth.csv    # add the following lines (format: password,user,uid)

admin,admin,1

readonly,readonly,2

8) Create the kube-apiserver systemd unit file

# vi /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

After=etcd.service

[Service]

EnvironmentFile=/opt/kubernetes/conf/kube.conf

EnvironmentFile=/opt/kubernetes/conf/apiserver.conf

ExecStart=/opt/kubernetes/bin/kube-apiserver \

$KUBE_LOGTOSTDERR \

$KUBE_LOG_LEVEL \

$KUBE_ETCD_SERVERS \

$KUBE_API_ADDRESS \

$KUBE_API_PORT \

$KUBELET_PORT \

$KUBE_ALLOW_PRIV \

$KUBE_SERVICE_ADDRESSES \

$KUBE_ADMISSION_CONTROL \

$KUBE_API_ARGS

Restart=on-failure

RestartSec=5

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

mkdir /var/log/kubernetes    # run the same step on every k8s node

mkdir /var/log/kubernetes/apiserver

9) Create the kube.conf file

# vi /opt/kubernetes/conf/kube.conf

###

# kubernetes system config

#

# The following values are used to configure various aspects of all

# kubernetes services, including

#

# kube-apiserver.service

# kube-controller-manager.service

# kube-scheduler.service

# kubelet.service

# kube-proxy.service

# logging to stderr means we get it in the systemd journal

KUBE_LOGTOSTDERR="--logtostderr=true"

#

# journal message level, 0 is debug

KUBE_LOG_LEVEL="--v=2"

#

# Should this cluster be allowed to run privileged docker containers

KUBE_ALLOW_PRIV="--allow-privileged=true"

#

# How the controller-manager, scheduler, and proxy find the apiserver

#KUBE_MASTER="--master=http://sz-pg-oam-docker-test-001.tendcloud.com:8080"

KUBE_MASTER="--master=http://127.0.0.1:8080"

Note: this config file is shared by kube-apiserver, kube-controller-manager, kube-scheduler, kubelet, and kube-proxy. On the worker nodes, comment out the KUBE_MASTER line.
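One way to apply the note on a worker node is a one-line sed edit. A sketch wrapped in a hypothetical helper, assuming GNU sed (as on CentOS 7) for in-place editing:

```sh
#!/bin/sh
# Comment out the active KUBE_MASTER line in a copy of kube.conf.
# Lines that are already commented out (#KUBE_MASTER=...) are left unchanged.
comment_out_kube_master() {
  sed -i 's/^KUBE_MASTER=/#KUBE_MASTER=/' "$1"
}
```

On each worker node, after kube.conf has been copied: comment_out_kube_master /opt/kubernetes/conf/kube.conf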

Distribute kube.conf to each node:

# scp /opt/kubernetes/conf/kube.conf 192.168.168.3:/opt/kubernetes/conf/

# scp /opt/kubernetes/conf/kube.conf 192.168.168.4:/opt/kubernetes/conf/

10) Generate the advanced audit policy configuration

cat > /opt/kubernetes/yaml/audit-policy.yaml <<EOF

# Log all requests at the Metadata level.

apiVersion: audit.k8s.io/v1beta1

kind: Policy

rules:

- level: Metadata

EOF

11) Create the kube-apiserver config file and start the service

# vi /opt/kubernetes/conf/apiserver.conf

###

## kubernetes system config

##

## The following values are used to configure the kube-apiserver

##

#

## The address on the local server to listen to.

#KUBE_API_ADDRESS="--insecure-bind-address=sz-pg-oam-docker-test-001.tendcloud.com"

KUBE_API_ADDRESS="--advertise-address=0.0.0.0 --bind-address=0.0.0.0 --insecure-bind-address=127.0.0.1"

#

## The port on the local server to listen on.

#KUBE_API_PORT="--port=8080"

#

## Port minions listen on

#KUBELET_PORT="--kubelet-port=10250"

#

## Comma separated list of nodes in the etcd cluster

KUBE_ETCD_SERVERS="--etcd-servers=https://192.168.168.2:2379,https://192.168.168.3:2379,https://192.168.168.4:2379"

#

## Address range to use for services

KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=10.1.0.0/16"

#

## default admission control policies

KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota,NodeRestriction,MutatingAdmissionWebhook,ValidatingAdmissionWebhook"

#

## Add your own!

KUBE_API_ARGS="--authorization-mode=Node,RBAC \

--runtime-config=rbac.authorization.k8s.io/v1 \

--kubelet-https=true \

--anonymous-auth=false \

--enable-bootstrap-token-auth \

--basic-auth-file=/opt/kubernetes/token/basic-auth.csv \

--token-auth-file=/opt/kubernetes/token/bootstrap-token.csv \

--service-node-port-range=30000-32767 \

--tls-cert-file=/opt/kubernetes/ssl/kubernetes.pem \

--tls-private-key-file=/opt/kubernetes/ssl/kubernetes-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/kubernetes/ssl/ca.pem \

--etcd-certfile=/opt/kubernetes/ssl/kubernetes.pem \

--etcd-keyfile=/opt/kubernetes/ssl/kubernetes-key.pem \

--allow-privileged=true \

--enable-swagger-ui=true \

--apiserver-count=3 \

--audit-policy-file=/opt/kubernetes/yaml/audit-policy.yaml \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/var/log/kubernetes/apiserver/api-audit.log \

--log-dir=/var/log/kubernetes/apiserver \

--event-ttl=1h \

--requestheader-client-ca-file=/opt/kubernetes/ssl/ca.pem \

--requestheader-allowed-names=aggregator \

--requestheader-extra-headers-prefix=X-Remote-Extra- \

--requestheader-group-headers=X-Remote-Group \

--requestheader-username-headers=X-Remote-User \

--enable-aggregator-routing=true"

# systemctl daemon-reload

# systemctl enable kube-apiserver

# systemctl start kube-apiserver

# systemctl status kube-apiserver

12) Create the kube-controller-manager systemd unit file

# vi /usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/conf/kube.conf

EnvironmentFile=/opt/kubernetes/conf/controller-manager.conf

ExecStart=/opt/kubernetes/bin/kube-controller-manager \

$KUBE_LOGTOSTDERR \

$KUBE_LOG_LEVEL \

$KUBE_MASTER \

$KUBE_CONTROLLER_MANAGER_ARGS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

mkdir /var/log/kubernetes/controller-manager

13) Create the kube-controller-manager config file and start the service

# vi /opt/kubernetes/conf/controller-manager.conf

###

# The following values are used to configure the kubernetes controller-manager

#

# defaults from config and apiserver should be adequate

#

# Add your own!

KUBE_CONTROLLER_MANAGER_ARGS="--address=127.0.0.1 \

--service-cluster-ip-range=10.1.0.0/16 \

--cluster-cidr=10.2.0.0/16 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \

--root-ca-file=/opt/kubernetes/ssl/ca.pem \

--leader-elect=true \

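--log-dir=/var/log/kubernetes/controller-manager"

Note: --service-cluster-ip-range sets the CIDR range for Services in the cluster. This network must not be routable between the nodes, and it must match the value passed to kube-apiserver. --cluster-cidr sets the pod network range.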
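Service IPs are virtual while pod IPs are real addresses, so the two ranges should also not overlap. A small sketch to check this before starting the services; ip_to_int, cidr_range and cidrs_overlap are hypothetical helper names.

```sh
#!/bin/sh
# Check whether two IPv4 CIDR ranges overlap, e.g. the Service range
# (--service-cluster-ip-range) and the pod range (--cluster-cidr).
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1
  IFS=$old_ifs
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# Print "<first> <last>" address of a CIDR as 32-bit integers.
cidr_range() {
  prefix="${1#*/}"
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  start=$(( $(ip_to_int "${1%/*}") & mask ))
  echo "$start $(( start | (~mask & 0xFFFFFFFF) ))"
}

# Exit status 0 if the two ranges share at least one address.
cidrs_overlap() {
  set -- "$1" "$2" $(cidr_range "$1") $(cidr_range "$2")
  # $3/$4 = first/last of range 1, $5/$6 = first/last of range 2
  [ "$3" -le "$6" ] && [ "$5" -le "$4" ]
}

if cidrs_overlap 10.1.0.0/16 10.2.0.0/16; then
  echo "overlap"
else
  echo "no overlap"
fi
```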

# systemctl daemon-reload

# systemctl enable kube-controller-manager

# systemctl start kube-controller-manager

# systemctl status kube-controller-manager

14) Create the kube-scheduler systemd unit file

# vi /usr/lib/systemd/system/kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/conf/kube.conf

EnvironmentFile=/opt/kubernetes/conf/scheduler.conf

ExecStart=/opt/kubernetes/bin/kube-scheduler \

$KUBE_LOGTOSTDERR \

$KUBE_LOG_LEVEL \

$KUBE_MASTER \

$KUBE_SCHEDULER_ARGS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

mkdir /var/log/kubernetes/scheduler

15) Create the kube-scheduler config file and start the service

# vi /opt/kubernetes/conf/scheduler.conf

###

# kubernetes scheduler config

#

# default config should be adequate

#

# Add your own!

KUBE_SCHEDULER_ARGS="--leader-elect=true \

--address=127.0.0.1 \

--log-dir=/var/log/kubernetes/scheduler"

# systemctl daemon-reload

# systemctl enable kube-scheduler

# systemctl start kube-scheduler

# systemctl status kube-scheduler

16) Create the kubectl kubeconfig file

Set the cluster parameters

# kubectl config set-cluster kubernetes --certificate-authority=/opt/kubernetes/ssl/ca.pem --embed-certs=true --server=https://192.168.168.2:6443

Cluster "kubernetes" set.

Set the client authentication parameters

# kubectl config set-credentials admin --client-certificate=/opt/kubernetes/ssl/admin.pem --embed-certs=true --client-key=/opt/kubernetes/ssl/admin-key.pem

User "admin" set.

Set the context parameters

# kubectl config set-context kubernetes --cluster=kubernetes --user=admin

Context "kubernetes" created.

Set the default context

# kubectl config use-context kubernetes

Switched to context "kubernetes".

17) Verify the health of each component

# kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

18) Create role bindings

# kubectl create clusterrolebinding --user system:serviceaccount:kube-system:default kube-system-cluster-admin --clusterrole cluster-admin

clusterrolebindings.rbac.authorization.k8s.io "kube-system-cluster-admin"

Note: for Kubernetes versions earlier than 1.11.0, use the following command:

# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

clusterrolebinding.rbac.authorization.k8s.io "kubelet-bootstrap" created

19) Create the kubelet bootstrapping kubeconfig file

Set the cluster parameters

# kubectl config set-cluster kubernetes --certificate-authority=/opt/kubernetes/ssl/ca.pem --embed-certs=true --server=https://192.168.168.2:6443 --kubeconfig=bootstrap.kubeconfig

Cluster "kubernetes" set.

Set the client authentication parameters

# kubectl config set-credentials kubelet-bootstrap --token=1cd425206a373f7cc75c958fd363e3fe --kubeconfig=bootstrap.kubeconfig

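User "kubelet-bootstrap" set.

The token value is the 128-bit string generated when the token file was created on the master (the BOOTSTRAP_TOKEN value stored in /opt/kubernetes/token/bootstrap-token.csv).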
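Rather than pasting the token by hand, it can be read from the first field of the kubelet-bootstrap line in bootstrap-token.csv. A sketch with a hypothetical helper read_bootstrap_token:

```sh
#!/bin/sh
# Print the token (first comma-separated field) from the kubelet-bootstrap
# line of a token-auth CSV file such as bootstrap-token.csv.
read_bootstrap_token() {
  grep ',kubelet-bootstrap,' "$1" | head -n 1 | cut -d, -f1
}
```

For example: kubectl config set-credentials kubelet-bootstrap --token="$(read_bootstrap_token /opt/kubernetes/token/bootstrap-token.csv)" --kubeconfig=bootstrap.kubeconfig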

Set the context parameters

# kubectl config set-context default --cluster=kubernetes --user=kubelet-bootstrap --kubeconfig=bootstrap.kubeconfig

Context "default" created.

Set the default context

# kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

Switched to context "default".

Distribute the generated bootstrap.kubeconfig file to each node

cp bootstrap.kubeconfig /opt/kubernetes/conf/

scp bootstrap.kubeconfig 192.168.168.3:/opt/kubernetes/conf/

scp bootstrap.kubeconfig 192.168.168.4:/opt/kubernetes/conf/

20) Create the JSON config file for the kube-proxy certificate CSR

# cd /root/ssl

# cat > kube-proxy-csr.json << EOF

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

21) Generate the kube-proxy certificate and private key

# cfssl gencert -ca=/opt/kubernetes/ssl/ca.pem -ca-key=/opt/kubernetes/ssl/ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

# cp kube-proxy*.pem /opt/kubernetes/ssl/

# scp kube-proxy*.pem 192.168.168.3:/opt/kubernetes/ssl/

# scp kube-proxy*.pem 192.168.168.4:/opt/kubernetes/ssl/

22) Create the kube-proxy kubeconfig file

Set the cluster parameters

# kubectl config set-cluster kubernetes --certificate-authority=/opt/kubernetes/ssl/ca.pem --embed-certs=true --server=https://192.168.168.2:6443 --kubeconfig=kube-proxy.kubeconfig

Cluster "kubernetes" set.

Set the client authentication parameters

# kubectl config set-credentials kube-proxy --client-certificate=/opt/kubernetes/ssl/kube-proxy.pem --client-key=/opt/kubernetes/ssl/kube-proxy-key.pem --embed-certs=true --kubeconfig=kube-proxy.kubeconfig

User "kube-proxy" set.

Set the context parameters

# kubectl config set-context default --cluster=kubernetes --user=kube-proxy --kubeconfig=kube-proxy.kubeconfig

Context "default" created.

Set the default context

# kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

Switched to context "default".

Distribute kube-proxy.kubeconfig to each node

cp /root/ssl/kube-proxy.kubeconfig /opt/kubernetes/conf/

scp /root/ssl/kube-proxy.kubeconfig 192.168.168.3:/opt/kubernetes/conf/

scp /root/ssl/kube-proxy.kubeconfig 192.168.168.4:/opt/kubernetes/conf/

4. Configure work-node01/02

1) Install ipvsadm and related tools (same on each node)

yum install -y ipvsadm ipset bridge-utils

2) Create the kubelet working directory (same on each node)

mkdir /var/lib/kubelet

3) Create the kubelet config file

# vi /opt/kubernetes/conf/kubelet.conf

###

## kubernetes kubelet (minion) config

#

## The address for the info server to serve on (set to 0.0.0.0 or "" for all interfaces)

KUBELET_ADDRESS="--address=192.168.168.3"

#

## The port for the info server to serve on

#KUBELET_PORT="--port=10250"

#

## You may leave this blank to use the actual hostname

KUBELET_HOSTNAME="--hostname-override=work-node01"

#

## pod infrastructure container

KUBELET_POD_INFRA_CONTAINER="--pod_infra_container_image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

#

##

#KUBELET_API_SERVER="--api-servers=https://192.168.168.2:6443"

#

## Add your own!

KUBELET_ARGS="--cluster-dns=10.1.0.2 \

--cgroup-driver=systemd \

--resolv-conf=/etc/resolv.conf \

--experimental-bootstrap-kubeconfig=/opt/kubernetes/conf/bootstrap.kubeconfig \

--kubeconfig=/opt/kubernetes/conf/kubelet.kubeconfig \

--cert-dir=/opt/kubernetes/ssl \

--network-plugin=cni \

--cni-conf-dir=/etc/cni/net.d \

--cni-bin-dir=/opt/cni/bin \

--allow-privileged=true \

--cluster-domain=cluster.local. \

--hairpin-mode=promiscuous-bridge \

--fail-swap-on=false \

--serialize-image-pulls=false \

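--log-dir=/var/log/kubernetes/kubelet"

Note: set KUBELET_ADDRESS to each node's own IP and KUBELET_HOSTNAME to each node's hostname. If you have a private image registry, KUBELET_POD_INFRA_CONTAINER can point to it, e.g. KUBELET_POD_INFRA_CONTAINER="--pod_infra_container_image={private registry IP}:80/k8s/pause-amd64:v3.0". The cni-bin-dir path is populated automatically when the Calico network is created.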
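Since only KUBELET_ADDRESS and KUBELET_HOSTNAME differ between nodes, work-node02's copy can be derived from work-node01's file. A sketch using a hypothetical helper kubelet_conf_for_node that rewrites just those two lines:

```sh
#!/bin/sh
# Rewrite the node-specific lines of kubelet.conf (read on stdin) for another
# node and print the result on stdout.
# Usage: kubelet_conf_for_node <node-ip> <node-hostname> < kubelet.conf
kubelet_conf_for_node() {
  sed -e "s|^KUBELET_ADDRESS=.*|KUBELET_ADDRESS=\"--address=$1\"|" \
      -e "s|^KUBELET_HOSTNAME=.*|KUBELET_HOSTNAME=\"--hostname-override=$2\"|"
}
```

For example, kubelet_conf_for_node 192.168.168.4 work-node02 < /opt/kubernetes/conf/kubelet.conf > kubelet.conf.work-node02, then copy that file to /opt/kubernetes/conf/kubelet.conf on work-node02.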

mkdir /var/log/kubernetes/kubelet    # same on each node

Distribute kubelet.conf to each node

scp /opt/kubernetes/conf/kubelet.conf 192.168.168.4:/opt/kubernetes/conf/

4) Create the CNI network config file

mkdir -p /etc/cni/net.d

cat >/etc/cni/net.d/10-calico.conf <<EOF

{

"name": "calico-k8s-network",

"cniVersion": "0.3.0",

"type": "calico",

"etcd_endpoints": "https://192.168.168.2:2379,https://192.168.168.3:2379,https://192.168.168.4:2379",

"etcd_key_file": "/opt/kubernetes/ssl/etcd-key.pem",

"etcd_cert_file": "/opt/kubernetes/ssl/etcd.pem",

"etcd_ca_cert_file": "/opt/kubernetes/ssl/ca.pem",

"log_level": "info",

"mtu": 1500,

"ipam": {

"type": "calico-ipam"

},

"policy": {

"type": "k8s"

},

"kubernetes": {

"kubeconfig": "/opt/kubernetes/conf/kubelet.kubeconfig"

}

}

EOF

Distribute it to each node (create /etc/cni/net.d there first with mkdir -p)

scp /etc/cni/net.d/10-calico.conf 192.168.168.4:/etc/cni/net.d

5) Create the kubelet systemd unit file and start kubelet

# vi /usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=docker.service

Requires=docker.service

[Service]

WorkingDirectory=/var/lib/kubelet

EnvironmentFile=/opt/kubernetes/conf/kube.conf

EnvironmentFile=/opt/kubernetes/conf/kubelet.conf

ExecStart=/opt/kubernetes/bin/kubelet \

$KUBE_LOGTOSTDERR \

$KUBE_LOG_LEVEL \

$KUBELET_ADDRESS \

$KUBELET_PORT \

$KUBELET_HOSTNAME \

$KUBE_ALLOW_PRIV \

$KUBELET_POD_INFRA_CONTAINER \

$KUBELET_ARGS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

Distribute kubelet.service to each node

scp /usr/lib/systemd/system/kubelet.service 192.168.168.4:/usr/lib/systemd/system/

systemctl daemon-reload

systemctl enable kubelet

systemctl start kubelet

systemctl status kubelet

Note: if kubelet.kubeconfig is not generated automatically in /opt/kubernetes/conf/, you can rename $HOME/.kube/config on the master to kubelet.kubeconfig and copy it to /opt/kubernetes/conf/ on each node.

6) View the certificate signing requests (CSRs) (run on k8s-master)

kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-0Vg_d__0vYzmrMn7o2S7jsek4xuQJ2v_YuCKwWN9n7M 4h kubelet-bootstrap Pending

7) Approve the kubelet TLS certificate requests (run on k8s-master)

# kubectl get csr|grep 'Pending' | awk 'NR>0{print $1}'| xargs kubectl certificate approve

certificatesigningrequest.certificates.k8s.io "node-csr-0Vg_d__0vYzmrMn7o2S7jsek4xuQJ2v_YuCKwWN9n7M" approved

8) Check the node status; a Ready status means everything is working (run on k8s-master)

# kubectl get node

NAME STATUS ROLES AGE VERSION

work-node01 Ready <none> 11h v1.10.4

work-node02 Ready <none> 11h v1.10.4

9) Create the kube-proxy working directory (same on each node)

mkdir /var/lib/kube-proxy

10) Create the kube-proxy systemd unit file

```ini
# vi /usr/lib/systemd/system/kube-proxy.service
[Unit]
Description=Kubernetes Kube-Proxy Server
Documentation=https://github.com/GoogleCloudPlatform/kubernetes
After=network.target

[Service]
WorkingDirectory=/var/lib/kube-proxy
EnvironmentFile=/opt/kubernetes/conf/kube.conf
EnvironmentFile=/opt/kubernetes/conf/kube-proxy.conf
ExecStart=/opt/kubernetes/bin/kube-proxy \
    $KUBE_LOGTOSTDERR \
    $KUBE_LOG_LEVEL \
    $KUBE_MASTER \
    $KUBE_PROXY_ARGS
Restart=on-failure
RestartSec=5
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
```

Distribute kube-proxy.service to each node:

```bash
# On k8s-master: repeat for each node (192.168.168.3 and 192.168.168.4)
scp /usr/lib/systemd/system/kube-proxy.service root@<node-IP>:/usr/lib/systemd/system/
```

11) Create the kube-proxy configuration file and start kube-proxy

```
# vi /opt/kubernetes/conf/kube-proxy.conf
###
# kubernetes proxy config
# default config should be adequate
# Add your own!
KUBE_PROXY_ARGS="--bind-address=192.168.168.3 \
--hostname-override=work-node01 \
--kubeconfig=/opt/kubernetes/conf/kube-proxy.kubeconfig \
--feature-gates=SupportIPVSProxyMode=true \
--proxy-mode=ipvs \
--ipvs-min-sync-period=5s \
--ipvs-sync-period=5s \
--ipvs-scheduler=rr \
--cluster-cidr=10.2.0.0/16 \
--log-dir=/var/log/kubernetes/kube-proxy"
```

Note: set bind-address to each node's own IP and hostname-override to each node's hostname.
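The file above is written for work-node01, so each other node needs its own `--bind-address` and `--hostname-override`. One way to produce those copies is a `sed` rewrite of the template. This is a sketch, and the function name `render_kube_proxy_conf` is hypothetical.

```bash
# Hypothetical helper: print a node-specific copy of kube-proxy.conf by
# rewriting the --bind-address and --hostname-override values of a template.
# Each value is assumed to end at the first space (as in the file above).
render_kube_proxy_conf() {
  local template="$1" ip="$2" name="$3"
  sed -e "s@--bind-address=[^ ]*@--bind-address=${ip}@" \
      -e "s@--hostname-override=[^ ]*@--hostname-override=${name}@" \
      "$template"
}
```

Usage: `render_kube_proxy_conf /opt/kubernetes/conf/kube-proxy.conf 192.168.168.4 work-node02 > /tmp/kube-proxy.conf.work-node02`. Then copy that file to work-node02 as /opt/kubernetes/conf/kube-proxy.conf.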

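Distribute kube-proxy.conf to each node, then start kube-proxy on each node:

```bash
# On k8s-master: repeat for each node (192.168.168.3 and 192.168.168.4)
scp /opt/kubernetes/conf/kube-proxy.conf root@<node-IP>:/opt/kubernetes/conf/

# On each node
systemctl daemon-reload
systemctl enable kube-proxy
systemctl start kube-proxy
systemctl status kube-proxy
```

12) Check the LVS (IPVS) status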

```bash
ipvsadm -L -n
```

```
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
TCP  10.1.0.1:443 rr persistent 10800
  -> 192.168.168.2:6443           Masq    1      0          0
```

5. Configure Calico networking

1) Download the Calico plugins

Run on every host:

```bash
wget -N -P /usr/bin/ https://github.com/projectcalico/calicoctl/releases/download/v3.1.3/calicoctl
chmod +x /usr/bin/calicoctl
mkdir -p /etc/calico/{conf,yaml}
mkdir -p /opt/cni/bin
wget -N -P /opt/cni/bin https://github.com/projectcalico/cni-plugin/releases/download/v3.1.3/calico
wget -N -P /opt/cni/bin https://github.com/projectcalico/cni-plugin/releases/download/v3.1.3/calico-ipam
chmod +x /opt/cni/bin/calico /opt/cni/bin/calico-ipam
docker pull quay.io/calico/node:v3.1.3
docker pull quay.io/calico/kube-controllers:v3.1.3
docker pull quay.io/calico/cni:v3.1.3
```

Check the Calico images in Docker:
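The `docker images` output can be checked against the expected repository:tag pairs to confirm that all three v3.1.3 images above were pulled. This is a sketch with a hypothetical function name. It assumes the default `docker images` column layout, with REPOSITORY first and TAG second.

```bash
# Hypothetical helper: read `docker images` output on stdin and print every
# required Calico image (repository:tag) that does not appear in it.
missing_calico_images() {
  local tag="v3.1.3" repo listing
  # Keep only "REPOSITORY:TAG" for each image row, skipping the header.
  listing="$(awk 'NR > 1 { print $1 ":" $2 }')"
  for repo in quay.io/calico/node quay.io/calico/kube-controllers quay.io/calico/cni; do
    grep -qxF "${repo}:${tag}" <<< "$listing" || echo "${repo}:${tag}"
  done
}
```

Usage: `docker images | missing_calico_images`. No output means all three images are present.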

```bash
docker images
```

```
REPOSITORY                        TAG       IMAGE ID       CREATED       SIZE
quay.io/calico/node               v3.1.3    7eca10056c8e   7 weeks ago   248MB
quay.io/calico/kube-controllers   v3.1.3    240a82836573   7 weeks ago   55MB
quay.io/calico/cni                v3.1.3    9f355e076ea7   7 weeks ago   68.8MB
```

2) Create the configuration file calicoctl uses to talk to etcd

```yaml
# vi /etc/calico/calicoctl.cfg
apiVersion: projectcalico.org/v3
kind: CalicoAPIConfig
metadata:
spec:
  datastoreType: "etcdv3"
  etcdEndpoints: "https://192.168.168.2:2379,https://192.168.168.3:2379,https://192.168.168.4:2379"
  etcdKeyFile: "/opt/kubernetes/ssl/etcd-key.pem"
  etcdCertFile: "/opt/kubernetes/ssl/etcd.pem"
  etcdCACertFile: "/opt/kubernetes/ssl/ca.pem"
```

3) Fetch calico.yaml (run on the master)

```bash
wget -N -P /etc/calico/yaml https://docs.projectcalico.org/v3.1/getting-started/kubernetes/installation/rbac.yaml
wget -N -P /etc/calico/yaml https://docs.projectcalico.org/v3.1/getting-started/kubernetes/installation/hosted/calico.yaml
```

Note: the version in these URLs (v3.1) matches the version of the Calico Docker images.

Edit calico.yaml:

```bash
cd /etc/calico/yaml

### Replace the etcd endpoints
sed -i 's@.*etcd_endpoints:.*@\ \ etcd_endpoints:\ \"https://192.168.168.2:2379,https://192.168.168.3:2379,https://192.168.168.4:2379\"@gi' calico.yaml

### Replace the etcd certificates
export ETCD_CERT=`cat /opt/kubernetes/ssl/etcd.pem | base64 | tr -d '\n'`
export ETCD_KEY=`cat /opt/kubernetes/ssl/etcd-key.pem | base64 | tr -d '\n'`
export ETCD_CA=`cat /opt/kubernetes/ssl/ca.pem | base64 | tr -d '\n'`
sed -i "s@.*etcd-cert:.*@\ \ etcd-cert:\ ${ETCD_CERT}@gi" calico.yaml
sed -i "s@.*etcd-key:.*@\ \ etcd-key:\ ${ETCD_KEY}@gi" calico.yaml
sed -i "s@.*etcd-ca:.*@\ \ etcd-ca:\ ${ETCD_CA}@gi" calico.yaml
sed -i 's@.*etcd_ca:.*@\ \ etcd_ca:\ "/calico-secrets/etcd-ca"@gi' calico.yaml
sed -i 's@.*etcd_cert:.*@\ \ etcd_cert:\ "/calico-secrets/etcd-cert"@gi' calico.yaml
sed -i 's@.*etcd_key:.*@\ \ etcd_key:\ "/calico-secrets/etcd-key"@gi' calico.yaml
```
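The certificate values are piped through `tr -d '\n'` because GNU `base64` wraps its output at 76 columns by default, and a wrapped value would break the one-line YAML entry. The sketch below runs the same style of substitutions on a throw-away file to show their effect. The two sample lines are an assumption about what the commented-out Secret entry and the ConfigMap entry in calico.yaml look like. They are not copied from the real file, and `demo_calico_sed` is a hypothetical name.

```bash
# Demonstration only: apply the etcd-cert / etcd_cert substitutions used above
# to a temporary file. The sample lines are ASSUMED to resemble calico.yaml.
demo_calico_sed() {
  local f cert
  f="$(mktemp)"
  cat > "$f" <<'EOF'
  # etcd-cert: null
  etcd_cert: ""
EOF
  # Same encoding as ETCD_CERT above, but of a dummy string.
  cert="$(printf 'dummy-cert' | base64 | tr -d '\n')"
  # Secret entry: the whole line, including the leading "# ", is replaced.
  sed -i "s@.*etcd-cert:.*@\ \ etcd-cert:\ ${cert}@gi" "$f"
  # ConfigMap entry: point etcd_cert at the file mounted from the Secret.
  sed -i 's@.*etcd_cert:.*@\ \ etcd_cert:\ "/calico-secrets/etcd-cert"@gi' "$f"
  cat "$f"
  rm -f "$f"
}
```

Running `demo_calico_sed` prints the two rewritten lines. The commented-out entry becomes an active `etcd-cert:` key holding the base64 value.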

```bash
### Replace the IP pool CIDR
sed -i 's/192.168.0.0/10.2.0.0/g' calico.yaml
```

Apply the Calico resources:

```bash
kubectl apply -f /etc/calico/yaml/rbac.yaml -n kube-system
kubectl create -f /etc/calico/yaml/calico.yaml -n kube-system
```

4) Create the Calico configuration file

```
# vi /etc/calico/conf/calico.conf
CALICO_NODENAME=""
ETCD_ENDPOINTS=https://192.168.168.2:2379,https://192.168.168.3:2379,https://192.168.168.4:2379
ETCD_CA_CERT_FILE="/opt/kubernetes/ssl/ca.pem"
ETCD_CERT_FILE="/opt/kubernetes/ssl/etcd.pem"
ETCD_KEY_FILE="/opt/kubernetes/ssl/etcd-key.pem"
CALICO_IP=""
CALICO_IP6=""
CALICO_AS="65142"
CALICO_LIBNETWORK_ENABLED=true
CALICO_NETWORKING_BACKEND=bird
FELIX_IPV6SUPPORT=false
FELIX_DEFAULTENDPOINTTOHOSTACTION=ACCEPT
FELIX_LOGSEVERITYSCREEN=info
```

Note: CALICO_AS should be set to the same AS number on all hosts within the same data center (IDC).

Distribute calico.conf to each node:

```bash
scp /etc/calico/conf/calico.conf root@192.168.168.3:/etc/calico/conf/
scp /etc/calico/conf/calico.conf root@192.168.168.4:/etc/calico/conf/
```

5) Create the calico-node systemd unit file

```ini
# vi /usr/lib/systemd/system/calico-node.service
[Unit]
Description=calico-node
After=docker.service
Requires=docker.service

[Service]
User=root
PermissionsStartOnly=true
EnvironmentFile=/etc/calico/conf/calico.conf
ExecStart=/usr/bin/docker run --net=host --privileged --name=calico-node \
  -e NODENAME=${CALICO_NODENAME} \
  -e ETCD_ENDPOINTS=${ETCD_ENDPOINTS} \
  -e ETCD_CA_CERT_FILE=${ETCD_CA_CERT_FILE} \
  -e ETCD_CERT_FILE=${ETCD_CERT_FILE} \
  -e ETCD_KEY_FILE=${ETCD_KEY_FILE} \
  -e IP=${CALICO_IP} \
  -e IP6=${CALICO_IP6} \
  -e AS=${CALICO_AS} \
  -e CALICO_LIBNETWORK_ENABLED=${CALICO_LIBNETWORK_ENABLED} \
  -e CALICO_NETWORKING_BACKEND=${CALICO_NETWORKING_BACKEND} \
  -e FELIX_IPV6SUPPORT=${FELIX_IPV6SUPPORT} \
  -e FELIX_DEFAULTENDPOINTTOHOSTACTION=${FELIX_DEFAULTENDPOINTTOHOSTACTION} \
  -e FELIX_LOGSEVERITYSCREEN=${FELIX_LOGSEVERITYSCREEN} \
  -v /opt/kubernetes/ssl/ca.pem:/opt/kubernetes/ssl/ca.pem \
  -v /opt/kubernetes/ssl/etcd.pem:/opt/kubernetes/ssl/etcd.pem \
  -v /opt/kubernetes/ssl/etcd-key.pem:/opt/kubernetes/ssl/etcd-key.pem \
  -v /run/docker/plugins:/run/docker/plugins \
  -v /lib/modules:/lib/modules \
  -v /var/run/calico:/var/run/calico \
  -v /var/log/calico:/var/log/calico \
  -v /var/lib/calico:/var/lib/calico \
  quay.io/calico/node:v3.1.3
ExecStop=/usr/bin/docker rm -f calico-node
Restart=always
RestartSec=10

[Install]
WantedBy=multi-user.target
```

Create the log and data directories on every host:

```bash
mkdir /var/log/calico
mkdir /var/lib/calico
```

Note: in calico.conf, set NODENAME (CALICO_NODENAME) to each host's hostname. Set IP (CALICO_IP) to the IP of each host's outward-facing network interface.
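CALICO_NODENAME and CALICO_IP differ per host, so the shared calico.conf needs two values filled in on each machine. This is a minimal sketch using `sed`, and the function name `render_calico_conf` is hypothetical. It only touches lines that begin with `CALICO_NODENAME=` or `CALICO_IP=`, so `CALICO_IP6` is left alone.

```bash
# Hypothetical helper: print a copy of calico.conf with CALICO_NODENAME and
# CALICO_IP set for one host. "^CALICO_IP=" does not match "CALICO_IP6=".
render_calico_conf() {
  local template="$1" name="$2" ip="$3"
  sed -e "s@^CALICO_NODENAME=.*@CALICO_NODENAME=\"${name}\"@" \
      -e "s@^CALICO_IP=.*@CALICO_IP=\"${ip}\"@" \
      "$template"
}
```

Example for work-node01: `render_calico_conf /etc/calico/conf/calico.conf work-node01 192.168.168.3 > /tmp/calico.conf && mv /tmp/calico.conf /etc/calico/conf/calico.conf`.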

Distribute calico-node.service to each node:

```bash
scp /usr/lib/systemd/system/calico-node.service root@192.168.168.3:/usr/lib/systemd/system/
scp /usr/lib/systemd/system/calico-node.service root@192.168.168.4:/usr/lib/systemd/system/
```

Then, on each host:

```bash
systemctl daemon-reload
systemctl enable calico-node
systemctl start calico-node
systemctl status calico-node
```

6) Start Calico on each host

On the master:

```bash
calicoctl node run --node-image=quay.io/calico/node:v3.1.3 --ip=192.168.168.2
```

On the nodes:

```bash
# work-node01
calicoctl node run --node-image=quay.io/calico/node:v3.1.3 --ip=192.168.168.3
# work-node02
calicoctl node run --node-image=quay.io/calico/node:v3.1.3 --ip=192.168.168.4
```

7) View the Calico node information

```bash
calicoctl get node -o wide
```

```
NAME          ASN       IPV4               IPV6
k8s-master    65412     192.168.168.2/32
work-node01   65412     192.168.168.3/24
work-node02   65412     192.168.168.4/24
```

8) View the BGP peer information
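The BGP table printed by `calicoctl node status` can also be checked in a script. The sketch below (hypothetical function name) lists every IPv4 peer whose INFO column is not `Established`. It assumes the `|`-separated table layout shown in this step, with the peer address in the first cell and INFO in the fifth.

```bash
# Hypothetical helper: read `calicoctl node status` output on stdin and print
# the address of every IPv4 BGP peer whose INFO cell is not "Established".
# Only table rows whose first cell is an IPv4 address are considered.
unestablished_peers() {
  awk -F'|' '$2 ~ /^ *[0-9]+\.[0-9]+\.[0-9]+\.[0-9]+ *$/ {
    addr = $2; info = $6
    gsub(/ /, "", addr); gsub(/ /, "", info)
    if (info != "Established") print addr
  }'
}
```

Usage: `calicoctl node status | unestablished_peers`. Empty output means every IPv4 peer in the mesh is Established.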

```bash
calicoctl node status
```

```
Calico process is running.

IPv4 BGP status
+---------------+-------------------+-------+----------+-------------+
| PEER ADDRESS  |     PEER TYPE     | STATE |  SINCE   |    INFO     |
+---------------+-------------------+-------+----------+-------------+
| 192.168.168.2 | node-to-node mesh | up    | 07:41:40 | Established |
| 192.168.168.3 | node-to-node mesh | up    | 07:41:43 | Established |
+---------------+-------------------+-------+----------+-------------+

IPv6 BGP status
No IPv6 peers found.
```

9) View the created IP pools
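This listing is where the earlier `sed -i 's/192.168.0.0/10.2.0.0/g'` edit to calico.yaml can be confirmed: the default IPv4 pool should show 10.2.0.0/16. A minimal sketch of that check follows. The function name is hypothetical, and it assumes CIDR is the second column of `calicoctl get ippool -o wide`.

```bash
# Hypothetical helper: succeed only if a pool with the given CIDR appears in
# `calicoctl get ippool -o wide` output read from stdin (header skipped).
has_ippool() {
  awk -v cidr="$1" 'NR > 1 && $2 == cidr { found = 1 } END { exit !found }'
}
```

Usage: `calicoctl get ippool -o wide | has_ippool 10.2.0.0/16 && echo "pool OK"`.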

```bash
calicoctl get ippool -o wide
```

```
NAME                  CIDR                  NAT     IPIPMODE   DISABLED
default-ipv4-ippool   10.2.0.0/16           true    Always     false
default-ipv6-ippool   fd93:317a:e57d::/48   false   Never      false
```

10) Create an IP pool for the Docker network (IP-in-IP disabled)

```bash
calicoctl apply -f - << EOF
apiVersion: projectcalico.org/v3
kind: IPPool
metadata:
  name: ippool-docker-01
spec:
  cidr: 172.17.0.0/16
  ipipMode: Never
  natOutgoing: true
EOF

calicoctl get ippool -o wide
```

```
NAME                  CIDR                  NAT     IPIPMODE   DISABLED
default-ipv6-ippool   fd93:317a:e57d::/48   false   Never      false
ippool-docker         10.2.0.0/16           true    Always     false
ippool-docker-172     172.17.0.0/16         true    Never      false
```

View the routing table:
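In the routing table for this step, the routes over tunl0 carry genmask 255.255.255.192, which is a /26. By default, Calico's IPAM assigns pool addresses to each host in /26 blocks, and other hosts' blocks show up as routes via those hosts. The arithmetic can be checked with a small sketch. The function names are hypothetical and only convert prefix lengths; they do not query Calico.

```bash
# Convert an IPv4 prefix length (0-32) to a dotted-quad netmask.
prefix_to_netmask() {
  local bits="$1"
  # Bash arithmetic is 64-bit, so mask back down to 32 bits after shifting.
  local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  echo "$(( (mask >> 24) & 255 )).$(( (mask >> 16) & 255 )).$(( (mask >> 8) & 255 )).$(( mask & 255 ))"
}

# Number of /block_bits IPAM blocks that fit into a /pool_bits pool.
blocks_in_pool() {
  local pool_bits="$1" block_bits="$2"
  echo $(( 1 << (block_bits - pool_bits) ))
}
```

`prefix_to_netmask 26` prints 255.255.255.192. `blocks_in_pool 16 26` prints 1024, so the 10.2.0.0/16 pool holds 1024 blocks of 64 addresses each.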

```bash
route -n
```

```
Kernel IP routing table
Destination     Gateway         Genmask         Flags Metric Ref    Use Iface
0.0.0.0         192.168.168.1   0.0.0.0         UG    100    0        0 ens34
10.2.178.64     192.168.168.3   255.255.255.192 UG    0      0        0 tunl0
10.2.233.128    192.168.168.4   255.255.255.192 UG    0      0        0 tunl0
10.2.235.192    0.0.0.0         255.255.255.192 U     0      0        0 *
172.17.0.0      0.0.0.0         255.255.255.0   U     0      0        0 docker0
172.17.1.0      192.168.168.3   255.255.255.0   UG    0      0        0 ens34
172.17.2.0      192.168.168.4   255.255.255.0   UG    0      0        0 ens34
192.168.168.0   0.0.0.0         255.255.255.0   U     101    0        0 ens34
```

6. Deploy Kubernetes DNS (run on the master)

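1) Extract the Kubernetes release tarball and move the CoreDNS manifest template into place

```bash
cd /opt/software
tar -zxvf kubernetes.tar.gz
mv /opt/software/kubernetes/cluster/addons/dns/coredns.yaml.base /opt/kubernetes/yaml/coredns.yaml
```

2) Edit the coredns.yaml configuration file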
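The two placeholders in this step can also be replaced without opening an editor. This is a minimal sketch: the function name is hypothetical, and it touches only the two placeholders named in this step. Other versions of the template may contain further `__PILLAR__` placeholders.

```bash
# Hypothetical helper: substitute the DNS domain and DNS service IP
# placeholders in a coredns.yaml template, editing the file in place.
# Usage: fill_coredns_placeholders FILE DOMAIN DNS_SERVICE_IP
fill_coredns_placeholders() {
  local file="$1" domain="$2" dns_ip="$3"
  sed -i -e "s/__PILLAR__DNS__DOMAIN__/${domain}/g" \
         -e "s/__PILLAR__DNS__SERVER__/${dns_ip}/g" "$file"
}
```

With this guide's values: `fill_coredns_placeholders /opt/kubernetes/yaml/coredns.yaml cluster.local 10.1.0.2`. Afterwards, `grep -n __PILLAR__ /opt/kubernetes/yaml/coredns.yaml` shows whether any placeholder is left.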

```bash
vi /opt/kubernetes/yaml/coredns.yaml
```

In coredns.yaml, change the following two places to your own cluster domain and DNS cluster IP:

1. Change `kubernetes __PILLAR__DNS__DOMAIN__` to `kubernetes cluster.local`.
2. Change `clusterIP: __PILLAR__DNS__SERVER__` to `clusterIP: 10.1.0.2`.

3) Create CoreDNS

```bash
kubectl create -f /opt/kubernetes/yaml/coredns.yaml
```

```
serviceaccount "coredns" created
clusterrole.rbac.authorization.k8s.io "system:coredns" created
clusterrolebinding.rbac.authorization.k8s.io "system:coredns" created
configmap "coredns" created
deployment.extensions "coredns" created
service "coredns" created
```

4) Check the CoreDNS service status

```bash
kubectl get pod -n kube-system -o wide
```

```
NAME                                     READY     STATUS    RESTARTS   AGE       IP             NODE
calico-kube-controllers-98989846-5b2kv   1/1       Running   2          4d        10.10.10.213   node10-213
calico-node-bcv9m                        2/2       Running   27         7d        10.10.10.214   node10-214
calico-node-jmr72                        2/2       Running   18         7d        10.10.10.215   node10-215
calico-node-plxsb                        2/2       Running   14         7d        10.10.10.213   node10-213
calico-node-wzthq                        2/2       Running   14         7d        10.10.10.212   node10-212
coredns-77c989547b-46vp6                 1/1       Running   4          2d        10.2.12.66     node10-215
coredns-77c989547b-qz4zp                 1/1       Running   0          2d        10.2.191.130   node10-212
```

```bash
kubectl get svc --all-namespaces
```

```
NAMESPACE     NAME         TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)         AGE
default       kubernetes   ClusterIP   10.1.0.1     <none>        443/TCP         11d
kube-system   coredns      ClusterIP   10.1.0.2     <none>        53/UDP,53/TCP   2d
```

5) Test CoreDNS name resolution

# kubectl run -i --tty busybox --image=docker.io/busybox /bin/sh

If you don't see a command prompt, try pressing enter.

/ # nslookup www.baidu.com

S