Building a Single-Node Kafka Cluster on Alibaba Cloud

阿新 • Published: 2019-01-25

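Introduction

Setting up a single-node Kafka cluster on one Alibaba Cloud ECS server takes the following steps:

- Server environment

- Installing the JDK

- Installing ZooKeeper

- Installing Kafka

1. Server environment

- CPU: 1 core

- Memory: 2048 MB (I/O optimized), 1 Mbps bandwidth

- Operating system: Ubuntu 14.04, 64-bit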

感覺伺服器效能還是很好的,當然不是給阿里打廣告,汗。

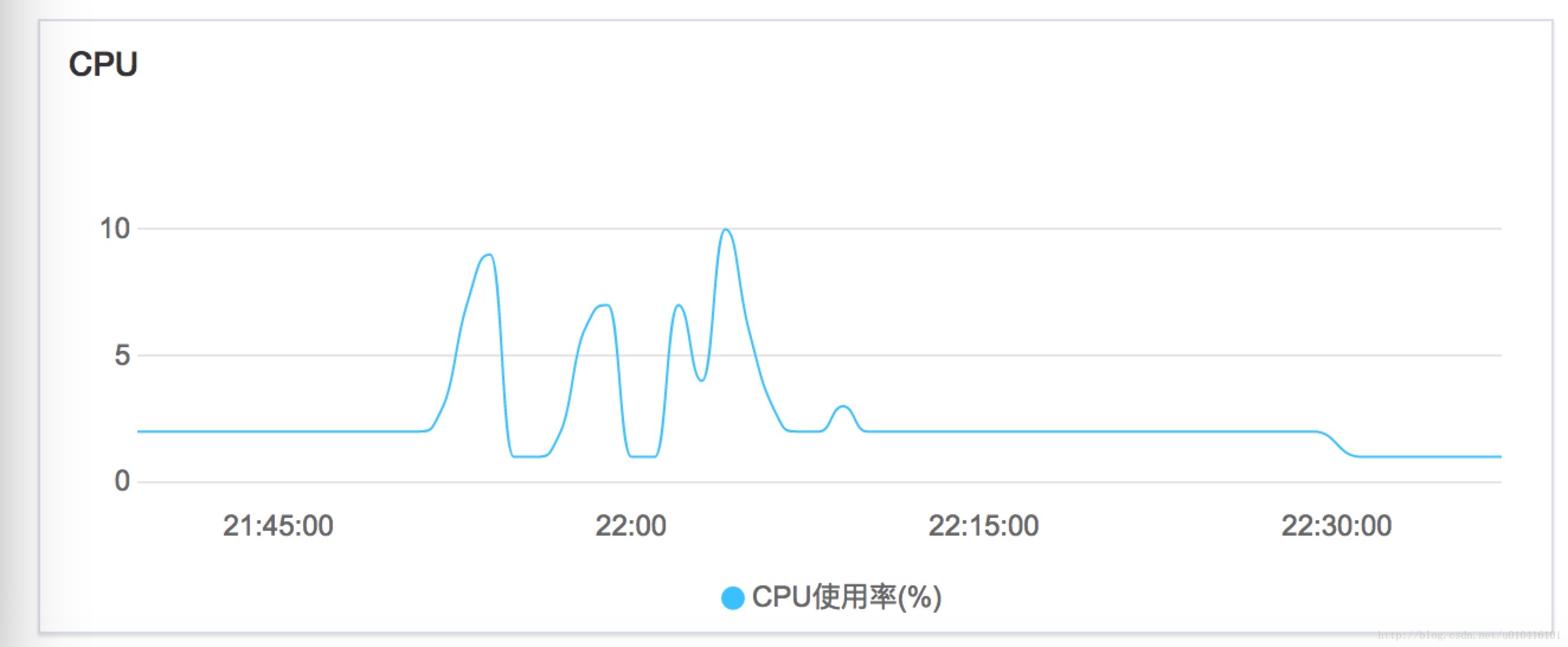

隨便向kafka裡面發了點資料,效能圖如下所示:

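2. Installing the JDK

Running Java programs requires a JDK. I used JDK 1.7.

The basic steps are:

- Download the JDK tar.gz package from the official JDK site;

- Upload the tarball to /opt/JDK on the server;

- Extract the tarball;

- Edit /etc/profile and append the lines below (note: this requires root privileges; on a Mac, use sudo su);

vim /etc/profile

Then add the following lines: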

export JAVA_HOME=/opt/JDK/jdk1.7.0_79

export PATH=$JAVA_HOME/bin:$PATH

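export CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

The modified profile file then starts like this, with the three export lines added to it:

# /etc/profile: system-wide .profile file for the Bourne shell (sh(1))

- Press ESC, type ":wq", and press Enter to save the file;

- Run java -version to check that the change took effect (if it did not, run source /etc/profile or log in again).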
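As a rough sketch of the /etc/profile step, the function below appends the three export lines to a profile file only if JAVA_HOME is not exported there yet. The function name append_java_env and its two parameters are made up for illustration; the JDK path is the one used in this post.

```shell
# Append the JDK environment variables to a profile file, at most once.
# $1: profile file (defaults to /etc/profile, which needs root to modify)
# $2: JDK directory (defaults to the path used in this post)
append_java_env() {
  local profile="${1:-/etc/profile}"
  local java_home="${2:-/opt/JDK/jdk1.7.0_79}"
  # Do nothing if JAVA_HOME is already exported in the file.
  if grep -q '^export JAVA_HOME=' "$profile" 2>/dev/null; then
    return 0
  fi
  # \$ keeps $JAVA_HOME and $PATH literal so they expand at login time.
  cat >> "$profile" <<EOF
export JAVA_HOME=$java_home
export PATH=\$JAVA_HOME/bin:\$PATH
export CLASSPATH=.:\$JAVA_HOME/lib/dt.jar:\$JAVA_HOME/lib/tools.jar
EOF
}
```

Passing a scratch file as the first argument is a safe way to try it out before touching /etc/profile. After the real run, source /etc/profile followed by java -version should report the JDK.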

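3. Installing ZooKeeper

A Kafka cluster uses ZooKeeper for leader election and for storing topic information, so running Kafka also requires a ZooKeeper environment.

The steps to set up ZooKeeper are:

- Download the tar.gz package from the official site;

- Upload the tar.gz package to /opt/zookeeper on the Alibaba Cloud server;

- Run tar -zxvf *.tar.gz to extract it;

- Go into the conf directory inside the extracted ZooKeeper directory;

- Rename zoo_sample.cfg to zoo.cfg (or copy it, to keep the original as a backup);

- Edit zoo.cfg as needed; the defaults also work;

- Start ZooKeeper.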

The commands for steps 3 to 7 are:

cd /opt/zookeeper

tar -zxvf zookeeper-3.4.6.tar.gz

cd zookeeper-3.4.6/conf

cp zoo_sample.cfg zoo.cfg

cd ..

# Start ZooKeeper

./bin/zkServer.sh start

# Stop ZooKeeper

./bin/zkServer.sh stop

After starting it, you can check whether ZooKeeper is running with ps -ef | grep zookeeper.
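Besides looking at the process list, a slightly stronger check is to ask the server itself. The sketch below reads clientPort from zoo.cfg and sends ZooKeeper's "ruok" four-letter command, to which a healthy server replies "imok". The function names are hypothetical, and the use of nc (netcat) is an assumption about what is installed on the machine.

```shell
# Print the clientPort configured in a zoo.cfg file (2181 in zoo_sample.cfg).
zk_client_port() {
  local cfg="${1:-conf/zoo.cfg}"
  awk -F= '$1 == "clientPort" { print $2 }' "$cfg"
}

# Send the "ruok" four-letter command; succeed only if the reply is "imok".
# $1: host (default localhost), $2: port (default 2181). Requires nc.
zk_is_ok() {
  local host="${1:-localhost}"
  local port="${2:-2181}"
  [ "$(echo ruok | nc -w 2 "$host" "$port")" = "imok" ]
}
```

From the ZooKeeper directory, something like zk_is_ok localhost "$(zk_client_port)" && echo "ZooKeeper is up" would tie the two together. The script's own ./bin/zkServer.sh status is another option.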

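4. Installing Kafka

With the three steps above out of the way, we can finally build our own single-node Kafka cluster. (Yes, I am calling a single machine a cluster. Fight me, QAQ.)

The steps for Kafka are:

- Download the Kafka package; I used kafka_2.11-0.10.1.0.tgz, which is available from the official site;

- Upload the Kafka package to /opt/kafka on the Alibaba Cloud server;

- Extract the Kafka package;

- Go into the config directory and edit server.properties;

The main changes are: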

# The id of the broker. This must be set to a unique integer for each broker.

broker.id=0

port=9092

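host.name=<Alibaba Cloud private (intranet) address>

advertised.host.name=<Alibaba Cloud public (mapped) address>

The modified configuration file is as follows: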

# Licensed to the Apache Software Foundation (ASF) under one or more

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# see kafka.server.KafkaConfig for additional details and defaults

############################# Server Basics #############################

# The id of the broker. This must be set to a unique integer for each broker.

broker.id=0

port=9092

host.name=<Alibaba Cloud private (intranet) address>

advertised.host.name=<Alibaba Cloud public (mapped) address>

# Switch to enable topic deletion or not, default value is false

delete.topic.enable=true

############################# Socket Server Settings #############################

# The address the socket server listens on. It will get the value returned from

# java.net.InetAddress.getCanonicalHostName() if not configured.

# FORMAT:

# listeners = security_protocol://host_name:port

# EXAMPLE:

# listeners = PLAINTEXT://your.host.name:9092

#listeners=PLAINTEXT://:9092

# Hostname and port the broker will advertise to producers and consumers. If not set,

# it uses the value for "listeners" if configured. Otherwise, it will use the value

# returned from java.net.InetAddress.getCanonicalHostName().

#advertised.listeners=PLAINTEXT://your.host.name:9092

# The number of threads handling network requests

num.network.threads=3

# The number of threads doing disk I/O

num.io.threads=8

# The send buffer (SO_SNDBUF) used by the socket server

socket.send.buffer.bytes=102400

# The receive buffer (SO_RCVBUF) used by the socket server

socket.receive.buffer.bytes=102400

# The maximum size of a request that the socket server will accept (protection against OOM)

socket.request.max.bytes=104857600

############################# Log Basics #############################

# A comma seperated list of directories under which to store log files

log.dirs=/tmp/kafka-logs

# The default number of log partitions per topic. More partitions allow greater

# parallelism for consumption, but this will also result in more files across

# the brokers.

num.partitions=1

# The number of threads per data directory to be used for log recovery at startup and flushing at shutdown.

# This value is recommended to be increased for installations with data dirs located in RAID array.

num.recovery.threads.per.data.dir=1

############################# Log Flush Policy #############################

# Messages are immediately written to the filesystem but by default we only fsync() to sync

# the OS cache lazily. The following configurations control the flush of data to disk.

# There are a few important trade-offs here:

# 1. Durability: Unflushed data may be lost if you are not using replication.

# 2. Latency: Very large flush intervals may lead to latency spikes when the flush does occur as there will be a lot of data to flush.

# 3. Throughput: The flush is generally the most expensive operation, and a small flush interval may lead to exceessive seeks.

# The settings below allow one to configure the flush policy to flush data after a period of time or

# every N messages (or both). This can be done globally and overridden on a per-topic basis.

# The number of messages to accept before forcing a flush of data to disk

#log.flush.interval.messages=10000

# The maximum amount of time a message can sit in a log before we force a flush

#log.flush.interval.ms=1000

############################# Log Retention Policy #############################

# The following configurations control the disposal of log segments. The policy can

# be set to delete segments after a period of time, or after a given size has accumulated.

# A segment will be deleted whenever *either* of these criteria are met. Deletion always happens

# from the end of the log.

# The minimum age of a log file to be eligible for deletion

log.retention.hours=168

# A size-based retention policy for logs. Segments are pruned from the log as long as the remaining

# segments don't drop below log.retention.bytes.

#log.retention.bytes=1073741824

# The maximum size of a log segment file. When this size is reached a new log segment will be created.

log.segment.bytes=1073741824

# The interval at which log segments are checked to see if they can be deleted according

# to the retention policies

log.retention.check.interval.ms=300000

############################# Zookeeper #############################

# Zookeeper connection string (see zookeeper docs for details).

# This is a comma separated host:port pairs, each corresponding to a zk

# server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".

# You can also append an optional chroot string to the urls to specify the

# root directory for all kafka znodes.

zookeeper.connect=localhost:2181

# Timeout in ms for connecting to zookeeper

zookeeper.connection.timeout.ms=6000

- Start Kafka:

nohup ./bin/kafka-server-start.sh config/server.properties > /dev/null 2>&1 &

- Verify that Kafka started successfully:

Run jps and check whether a process named Kafka is listed.
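The jps check can be scripted. jps prints one "pid MainClass" pair per line, so a small filter over its output is enough. The helper below is a sketch with a made-up name, has_jvm_process. It reads jps-style text on stdin and succeeds when a line ends in the given main class (Kafka for the broker, QuorumPeerMain for ZooKeeper).

```shell
# Succeed if jps-style output on stdin ("<pid> <MainClass>" per line)
# contains a process whose main class equals $1.
has_jvm_process() {
  local main_class="$1"
  grep -q "^[0-9][0-9]* ${main_class}\$"
}
```

Typical use would be jps | has_jvm_process Kafka && echo "Kafka is running". Because the filter only looks at text, it can also be tried on saved jps output.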

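5. Pitfalls I ran into

- Configure the hostname, port, and other options

Bug: ERROR org.apache.kafka.common.errors.InvalidReplicationFactorException: replication factor: 1 larger than available brokers: 0

The message is clear: fewer than one broker is available, which means the Kafka process either never started or has crashed.

Fix: 1. Run jps to check whether a Kafka process exists; 2. Restart Kafka.

- Cannot bind to an address

Bug: Socket server failed to bind to xxx.xxx.xxx.xxx:9092: Cannot assign requested address.

When configuring Kafka's listening address on ECS, never use the public address, for example 139.225.155.153 (a made-up address). The bind will fail because the instance sits inside Alibaba Cloud's internal network and looks for the address on the intranet, where the public address does not exist. Use an address the machine itself recognizes instead, such as 127.0.0.1 or the private address. The public mapped address belongs only where external clients need it (advertised.host.name in the configuration above).

- Host name misconfiguration

Bug: java.net.UnknownHostException: <hostname>: <hostname>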

Caused by: java.net.UnknownHostException: iZuf6gsbgu35znsy7ve3s6x: iZuf6gsbgu35znsy7ve3s6x

at java.net.InetAddress.getLocalHost(InetAddress.java:1475)

at kafka.network.RequestChannel$.<init>(RequestChannel.scala:40)

at kafka.network.RequestChannel$.<clinit>(RequestChannel.scala)

... 10 more
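The post does not record how this one was resolved. A common remedy, offered here as an assumption rather than as the fix actually used, is to make the machine's own hostname resolvable by adding it to /etc/hosts, since the trace shows InetAddress.getLocalHost() failing to resolve it. The sketch below does that idempotently; the function name ensure_host_entry and its parameters are hypothetical.

```shell
# Map a hostname to an IP in a hosts file unless it is already mapped.
# $1: hostname (default: output of hostname)
# $2: IP address (default 127.0.0.1; the private address also works)
# $3: hosts file (default /etc/hosts, which needs root to modify)
ensure_host_entry() {
  local name="${1:-$(hostname)}"
  local ip="${2:-127.0.0.1}"
  local hosts_file="${3:-/etc/hosts}"
  # Look for the hostname as a whole field on any non-comment line.
  if awk -v h="$name" '!/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == h) found = 1 } END { exit !found }' "$hosts_file"; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
}
```

For the trace above, running ensure_host_entry iZuf6gsbgu35znsy7ve3s6x as root would add the missing line. Kafka then needs a restart.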

- External clients cannot consume from Kafka

21:45:58,162 DEBUG Selector:365 - Connection with /168.221.153.152 disconnected

java.net.ConnectException: Connection refused

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:739)

at org.apache.kafka.common.network.PlaintextTransportLayer.finishConnect(PlaintextTransportLayer.java:51)

at org.apache.kafka.common.network.KafkaChannel.finishConnect(KafkaChannel.java:73)

at org.apache.kafka.common.network.Selector.pollSelectionKeys(Selector.java:323)

at org.apache.kafka.common.network.Selector.poll(Selector.java:291)

at org.apache.kafka.clients.NetworkClient.poll(NetworkClient.java:260)

at org.apache.kafka.clients.producer.internals.Sender.run(Sender.java:236)

at org.apache.kafka.clients.producer.internals.Sender.run(Sender.java:135)

at java.lang.Thread.run(Thread.java:745)

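21:45:58,162 DEBUG NetworkClient:463 - Node -1 disconnected.

6. Other

For the details of Kafka's configuration file, how to build a multi-node Kafka cluster, common Kafka commands, a simple Kafka demo, and Kafka Streams examples, see the other parts of this Kafka series.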