Study extensively, inquire thoroughly, reflect carefully, discern clearly, and practice earnestly.

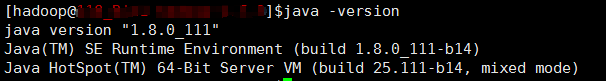

1. First, install the JDK:

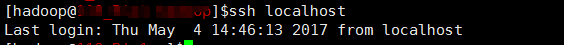

2. Create the hadoop user and set up passwordless SSH login

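useradd hadoop

su - hadoop (switch to the new user so that the key below is generated for hadoop rather than for root)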

ssh-keygen -t dsa -P '' -f ~/.ssh/id_dsa

cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys

Run ssh localhost to check that you can log in without being asked for a password.
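Running the `cat >>` line more than once appends the same key again, and sshd with its default StrictModes setting ignores authorized_keys when the file or directory permissions are too open. Below is a minimal sketch of an idempotent variant. The helper name `authorize_key` is hypothetical and not part of OpenSSH.

```shell
# authorize_key PUBKEY_FILE SSH_DIR
# Hypothetical helper: append a public key to SSH_DIR/authorized_keys
# only if that exact line is not already there, then tighten permissions.
authorize_key() {
    pubkey_file=$1
    ssh_dir=$2
    auth_file="$ssh_dir/authorized_keys"

    mkdir -p "$ssh_dir"
    touch "$auth_file"

    # -x: match whole lines, -F: fixed strings, -f: read patterns from a file
    if ! grep -qxFf "$pubkey_file" "$auth_file"; then
        cat "$pubkey_file" >> "$auth_file"
    fi

    chmod 700 "$ssh_dir"
    chmod 600 "$auth_file"
}
```

With the key generated above, the call would be `authorize_key ~/.ssh/id_dsa.pub ~/.ssh`.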

3. Download and install Hadoop 2.7.3:

wget http://apache.claz.org/hadoop/common/hadoop-2.7.3/hadoop-2.7.3.tar.gz

tar zxf hadoop-2.7.3.tar.gz
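A download from a mirror can end up truncated, and the tar error then only shows up partway through extraction. As a rough sketch, the archive can be listed first and extracted only if the listing succeeds. The helper name `extract_checked` is an assumption made for this example.

```shell
# extract_checked ARCHIVE DEST_DIR
# Hypothetical helper: list the archive first so that a truncated or
# corrupt download fails before anything is written to DEST_DIR.
extract_checked() {
    archive=$1
    dest=$2

    if ! tar tzf "$archive" > /dev/null 2>&1; then
        echo "archive is missing or corrupt: $archive" >&2
        return 1
    fi

    mkdir -p "$dest"
    tar xzf "$archive" -C "$dest"
}
```

For the download above this would be called as `extract_checked hadoop-2.7.3.tar.gz .`.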

Change into the etc/hadoop/ directory inside the extracted hadoop-2.7.3 directory and edit the following files.

core-site.xml

<configuration>

<property>

<name>hadoop.tmp.dir</name>

<value>/home/hadoop/tmp</value>

</property>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

</configuration>

hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

</configuration>
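After editing the two files, it helps to read a value back and confirm that it is what you meant to set. The sketch below does this with awk. It is only a rough text match that assumes simple `<name>` and `<value>` elements like the ones above, not a real XML parser, and `get_property` is a hypothetical helper name. On an installed system, `bin/hdfs getconf -confKey fs.defaultFS` should report the value Hadoop actually resolves.

```shell
# get_property FILE NAME
# Hypothetical helper: print the <value> that follows <name>NAME</name>.
# Assumes plain <name>/<value> elements without attributes or comments.
get_property() {
    awk -v want="$2" '
        /<name>/ {
            n = $0
            sub(/.*<name>/, "", n)
            sub(/<\/name>.*/, "", n)
        }
        /<value>/ {
            v = $0
            sub(/.*<value>/, "", v)
            sub(/<\/value>.*/, "", v)
            if (n == want) { print v; exit }
        }
    ' "$1"
}
```

For example, `get_property core-site.xml fs.defaultFS` prints the configured file system URI.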

mapred-site.xml (in Hadoop 2.7.3, create it by copying mapred-site.xml.template)

<configuration>

<property>

<name>mapred.job.tracker</name>

<value>localhost:9001</value>

</property>

</configuration>
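`mapred.job.tracker` is a Hadoop 1.x setting. In Hadoop 2.x there is no JobTracker, and the official single-node guide configures MapReduce to run on YARN instead. The sketch below writes that alternative configuration. It assumes you want jobs to run on YARN, which this post does not state. The helper name `write_yarn_mapred_conf` is hypothetical, and it overwrites any existing mapred-site.xml and yarn-site.xml in the target directory.

```shell
# write_yarn_mapred_conf CONF_DIR
# Hypothetical helper: write the two files the Hadoop 2.x single-node
# guide uses to run MapReduce on YARN. Existing files are overwritten.
write_yarn_mapred_conf() {
    conf_dir=$1

    cat > "$conf_dir/mapred-site.xml" <<'EOF'
<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
</configuration>
EOF

    cat > "$conf_dir/yarn-site.xml" <<'EOF'
<configuration>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
</configuration>
EOF
}
```

From the extracted directory, the call would be `write_yarn_mapred_conf etc/hadoop`.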

hadoop-env.sh

export JAVA_HOME=***   # replace *** with the path of your JDK installation
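If you are not sure where the JDK lives, one common approach is to resolve the `java` binary on the PATH and strip the trailing `bin/java`. This is a sketch under that assumption. `java_home_from_binary` is a hypothetical helper, `readlink -f` is the GNU coreutils form, and on installs where `java` resolves into a nested `jre/` directory the result points at the JRE rather than the JDK.

```shell
# java_home_from_binary JAVA_BIN
# Hypothetical helper: turn ".../bin/java" into the directory above bin/.
java_home_from_binary() {
    dirname "$(dirname "$1")"
}

# Possible usage on a machine that has java on the PATH:
#   java_bin=$(readlink -f "$(command -v java)")
#   echo "export JAVA_HOME=$(java_home_from_binary "$java_bin")"
```

The printed line can then be pasted into hadoop-env.sh after checking that the directory really is the JDK you installed in step 1.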

slaves

On a single node this file keeps its default content, a single line: localhost

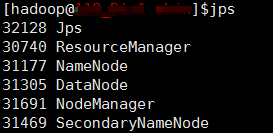

4. Start Hadoop

Format the HDFS file system

bin/hdfs namenode -format

Start and stop Hadoop

sbin/start-all.sh and sbin/stop-all.sh

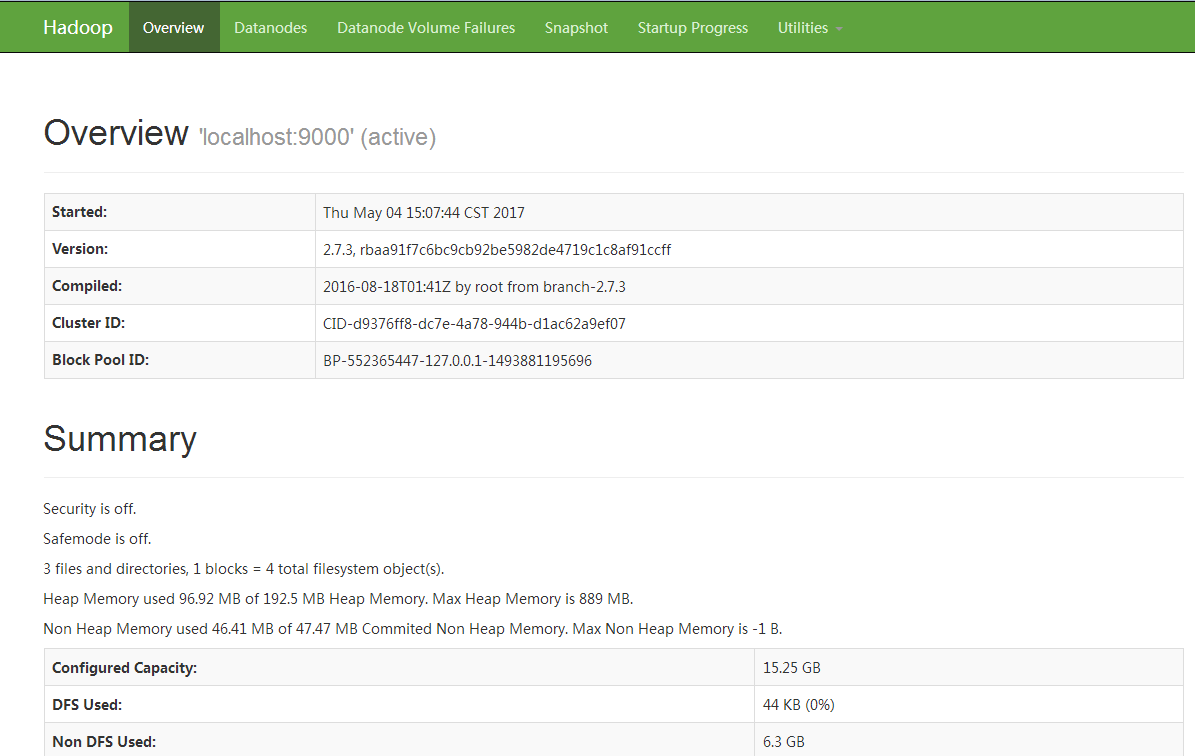

Hadoop started successfully.
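Besides reading the startup messages, you can confirm that the daemons are running with the JDK's `jps` tool. In Hadoop 2.x, start-all.sh is expected to bring up NameNode, DataNode, SecondaryNameNode, ResourceManager and NodeManager. The sketch below reads `jps` output on standard input and prints whichever of those names is missing. `missing_daemons` is a hypothetical helper name.

```shell
# missing_daemons
# Hypothetical helper: read `jps` output ("<pid> <name>" per line) from
# stdin and print each expected Hadoop daemon that is not listed.
missing_daemons() {
    jps_output=$(cat)
    for d in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
        # Compare the second field exactly, so that NameNode is not
        # satisfied by a SecondaryNameNode line.
        if ! printf '%s\n' "$jps_output" |
            awk -v d="$d" '$2 == d { found = 1 } END { exit !found }'; then
            echo "$d"
        fi
    done
}
```

Running `jps | missing_daemons` prints nothing when all five daemons are up.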

Open ip:50070 in a browser to see the HDFS web UI.