[IO系統]08 IO讀流程分析

阿新 • • 發佈:2019-01-31

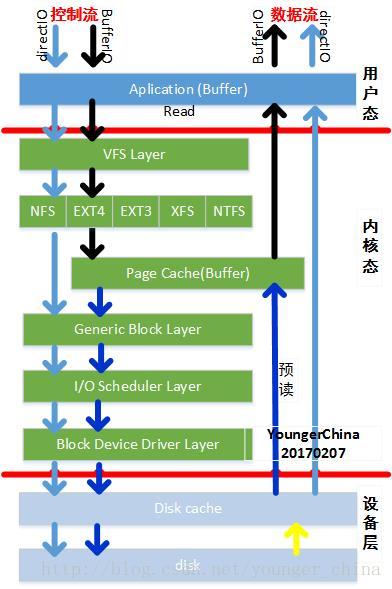

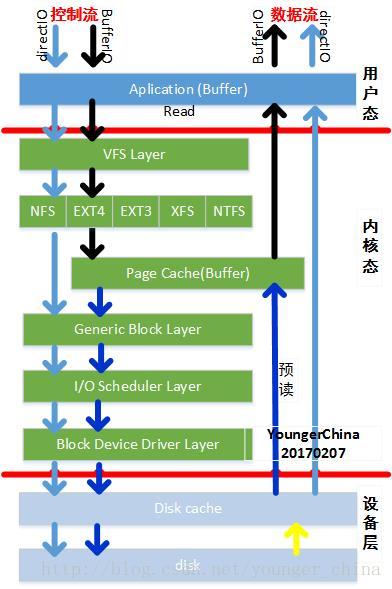

本文從整體來分析快取IO的控制流和資料流,並基於IO系統圖來解析讀IO:

注:對上述層次圖的理解參見文章《[IO系統]01 IO子系統》

一步一步往前走。(核心程式碼版本4.5.4)

1.1使用者態

程式的最終目的是要把資料寫到磁碟上,如前所述,使用者態有兩個“開啟”函式——read和fread。其中fread是glibc對系統呼叫read的封裝。

參見《[IO系統]02 使用者態的檔案IO操作》

fread的流程在使用者態的操作比較複雜,涉及到更多的資料複製和處理流程,本文不做介紹,後續單獨分析。

Poxis介面read直接通過系統呼叫read。

1.2read系統呼叫/VFS層

read系統呼叫是在fs/read_write.c中實現的:

函式解析:

1. 通過函式fdget_pos將整形的控制代碼ID fd轉化為核心資料結構fd,可稱之為檔案控制代碼描述符。

fd結構體如下:

struct fd {

struct file *file;/* 檔案物件 */

unsigned int flags;

};

核心中檔案系統各結構體之間的關係參照文章《[IO系統]因OPEN建立的結構體關係圖》

2. 獲取檔案file的操作位置,也可以理解游標(記錄在pos);

3. 呼叫VFS介面vfs_read()實現讀操作:檢查引數的有效性,通過__vfs_read函式呼叫具體檔案系統(如ext4,xfs,btrfs,ocfs2)的write函式。

當然如果沒有定義具體的read函式,則通過介面read_iter實現資料讀操作。

4. 更新file的pos變數。

1.3具體檔案系統層

如果具體檔案系統,比如ext4,沒有read介面,則呼叫read_iter介面來去讀取資料:

const struct file_operations ext4_file_operations = {

.llseek= ext4_llseek,

.read_iter= generic_file_read_iter,

.write_iter= ext4_file_write_iter,

…

};

而read_iter時直接呼叫generic_file_read_iter函式。

檔案系統通過預讀機制將磁碟上的資料讀入到page cache中

1.4Page Cache層

Page Cache是檔案資料在記憶體中的副本,因此Page Cache管理與記憶體管理系統和檔案系統都相關:一方面Page Cache作為實體記憶體的一部分,需要參與實體記憶體的分配回收過程,另一方面Page Cache中的資料來源於儲存裝置上的檔案,需要通過檔案系統與儲存裝置進行讀寫互動。從作業系統的角度考慮,Page Cache可以看做是記憶體管理系統與檔案系統之間的聯絡紐帶。因此,Page Cache管理是作業系統的一個重要組成部分,它的效能直接影響著檔案系統和記憶體管理系統的效能。

本文不做詳細介紹,後續講解。

1.5通用塊層

同IO寫流程分析。

1.6IO排程層

同IO寫流程分析。

1.7裝置驅動層

同IO寫流程分析。

1.8裝置層

暫不分析。

1.9參考文獻

[部落格] http://blog.chinaunix.net/uid-28236237-id-4030381.html

注:對上述層次圖的理解參見文章《[IO系統]01 IO子系統》

一步一步往前走。(核心程式碼版本4.5.4)

1.1使用者態

程式的最終目的是要把資料寫到磁碟上,如前所述,使用者態有兩個“開啟”函式——read和fread。其中fread是glibc對系統呼叫read的封裝。

參見《[IO系統]02 使用者態的檔案IO操作》

fread的流程在使用者態的操作比較複雜,涉及到更多的資料複製和處理流程,本文不做介紹,後續單獨分析。

Poxis介面read直接通過系統呼叫read。

1.2read系統呼叫/VFS層

read系統呼叫是在fs/read_write.c中實現的:

SYSCALL_DEFINE3(read, unsigned int, fd, char __user *, buf, size_t, count) { struct fd f = fdget_pos(fd); ssize_t ret = -EBADF; if (f.file) { loff_t pos = file_pos_read(f.file); ret = vfs_read(f.file, buf, count, &pos); if (ret >= 0) file_pos_write(f.file, pos); fdput_pos(f); } return ret; }

函式解析:

1. 通過函式fdget_pos將整形的控制代碼ID fd轉化為核心資料結構fd,可稱之為檔案控制代碼描述符。

fd結構體如下:

struct fd {

struct file *file;/* 檔案物件 */

unsigned int flags;

};

核心中檔案系統各結構體之間的關係參照文章《[IO系統]因OPEN建立的結構體關係圖》

2. 獲取檔案file的操作位置,也可以理解游標(記錄在pos);

3. 呼叫VFS介面vfs_read()實現讀操作:檢查引數的有效性,通過__vfs_read函式呼叫具體檔案系統(如ext4,xfs,btrfs,ocfs2)的write函式。

ssize_t vfs_read(struct file *file, char __user *buf, size_t count, loff_t *pos) { ssize_t ret; if (!(file->f_mode & FMODE_READ)) /* 判斷是否具有讀許可權 */ return -EBADF; if (!(file->f_mode & FMODE_CAN_READ)) return -EINVAL; if (unlikely(!access_ok(VERIFY_WRITE, buf, count))) return -EFAULT; ret = rw_verify_area(READ, file, pos, count); if (ret >= 0) { count = ret; ret = __vfs_read(file, buf, count, pos); if (ret > 0) { fsnotify_access(file); add_rchar(current, ret); } inc_syscr(current); } return ret; }

當然如果沒有定義具體的read函式,則通過介面read_iter實現資料讀操作。

new_sync_read是對引數進行一次封裝,封裝為結構體iov_iterssize_t __vfs_read(struct file *file, char __user *buf, size_t count, loff_t *pos) { if (file->f_op->read) return file->f_op->read(file, buf, count, pos); else if (file->f_op->read_iter) return new_sync_read(file, buf, count, pos); else return -EINVAL; } EXPORT_SYMBOL(__vfs_read);

4. 更新file的pos變數。

1.3具體檔案系統層

如果具體檔案系統,比如ext4,沒有read介面,則呼叫read_iter介面來去讀取資料:

const struct file_operations ext4_file_operations = {

.llseek= ext4_llseek,

.read_iter= generic_file_read_iter,

.write_iter= ext4_file_write_iter,

…

};

而read_iter時直接呼叫generic_file_read_iter函式。

/**

* generic_file_read_iter - generic filesystem read routine

* @iocb: kernel I/O control block

* @iter: destination for the data read

*

* This is the "read_iter()" routine for all filesystems

* that can use the page cache directly.

*/

ssize_t

generic_file_read_iter(struct kiocb *iocb, struct iov_iter *iter)

{

/* 解析引數*/

struct file *file = iocb->ki_filp;

ssize_t retval = 0;

loff_t *ppos = &iocb->ki_pos;

loff_t pos = *ppos;

size_t count = iov_iter_count(iter);

if (!count)

goto out; /* skip atime */

if (iocb->ki_flags & IOCB_DIRECT) { /* 直接IO讀 */

struct address_space *mapping = file->f_mapping;

struct inode *inode = mapping->host;

loff_t size;

size = i_size_read(inode);

retval = filemap_write_and_wait_range(mapping, pos,

pos + count - 1);/* 刷mapping下的髒頁,保證資料一致性 */

if (!retval) {/* 髒頁回刷失敗,則通過直接IO寫回儲存裝置 */

struct iov_iter data = *iter;

retval = mapping->a_ops->direct_IO(iocb, &data, pos);

}

if (retval > 0) {

*ppos = pos + retval;

iov_iter_advance(iter, retval);

}

/*

* Btrfs can have a short DIO read if we encounter

* compressed extents, so if there was an error, or if

* we've already read everything we wanted to, or if

* there was a short read because we hit EOF, go ahead

* and return. Otherwise fallthrough to buffered io for

* the rest of the read. Buffered reads will not work for

* DAX files, so don't bother trying.

*/

if (retval < 0 || !iov_iter_count(iter) || *ppos >= size ||

IS_DAX(inode)) {

file_accessed(file);

goto out;

}

}

/* 快取IO讀 */

retval = do_generic_file_read(file, ppos, iter, retval);

out:

return retval;

}

EXPORT_SYMBOL(generic_file_read_iter);

直接IO:

控制流,若為直接IO,在執行讀取操作時,首先會將mapping下的髒資料刷回磁碟,然後呼叫do_generic_file_read讀取資料。

資料流,資料依舊存放在使用者態快取中,並不需要將資料複製到page cache中,減少了資料複製次數。

快取IO:

控制流,若進入BufferIO,則直接呼叫do_generic_file_read來讀取資料。

資料流,資料從使用者態複製到核心態page cache中。

函式do_generic_file_read分析

/**

* do_generic_file_read - generic file read routine

* @filp: the file to read

* @ppos: current file position

* @iter: data destination

* @written: already copied

*

* This is a generic file read routine, and uses the

* mapping->a_ops->readpage() function for the actual low-level stuff.

*

* This is really ugly. But the goto's actually try to clarify some

* of the logic when it comes to error handling etc.

*/

static ssize_t do_generic_file_read(struct file *filp, loff_t *ppos,

struct iov_iter *iter, ssize_t written)

{

struct address_space *mapping = filp->f_mapping;

struct inode *inode = mapping->host;

struct file_ra_state *ra = &filp->f_ra;

pgoff_t index;

pgoff_t last_index;

pgoff_t prev_index;

unsigned long offset; /* offset into pagecache page */

unsigned int prev_offset;

int error = 0;

/* 因為讀取是按照頁來的,所以需要計算本次讀取的第一個page*/

index = *ppos >> PAGE_SHIFT;

prev_index = ra->prev_pos >> PAGE_SHIFT; /* 上次預讀page的起始索引 */

prev_offset = ra->prev_pos & (PAGE_SIZE-1); /* 上次預讀的起始位置 */

last_index = (*ppos + iter->count + PAGE_SIZE-1) >> PAGE_SHIFT; /*讀取最後一個頁 */

offset = *ppos & ~PAGE_MASK;

for (;;) {

struct page *page;

pgoff_t end_index;

loff_t isize;

unsigned long nr, ret;

cond_resched();

find_page:

page = find_get_page(mapping, index); /*在radix樹中查詢相應的page*/

if (!page) { /*在radix樹中查詢相應的page*/

/* 如果沒有找到page,記憶體中沒有將資料,先進行預讀 */

page_cache_sync_readahead(mapping,

ra, filp,

index, last_index - index);

page = find_get_page(mapping, index); /*在radix樹中再次查詢相應的page*/

if (unlikely(page == NULL))

goto no_cached_page;

}

if (PageReadahead(page)) {

/* 發現找到的page已經是預讀的情況了,再繼續非同步預讀,此處是基於經驗的優化 */

page_cache_async_readahead(mapping,

ra, filp, page,

index, last_index - index);

}

if (!PageUptodate(page)) {/* 資料內容不是最新,則需要更新資料內容 */

/*

* See comment in do_read_cache_page on why

* wait_on_page_locked is used to avoid unnecessarily

* serialisations and why it's safe.

*/

wait_on_page_locked_killable(page);

if (PageUptodate(page))

goto page_ok;

if (inode->i_blkbits == PAGE_SHIFT ||

!mapping->a_ops->is_partially_uptodate)

goto page_not_up_to_date;

if (!trylock_page(page))

goto page_not_up_to_date;

/* Did it get truncated before we got the lock? */

if (!page->mapping)

goto page_not_up_to_date_locked;

if (!mapping->a_ops->is_partially_uptodate(page,

offset, iter->count))

goto page_not_up_to_date_locked;

unlock_page(page);

}

page_ok: /* 資料內容是最新的 */

/*

* i_size must be checked after we know the page is Uptodate.

*

* Checking i_size after the check allows us to calculate

* the correct value for "nr", which means the zero-filled

* part of the page is not copied back to userspace (unless

* another truncate extends the file - this is desired though).

*/

/*下面這段程式碼是在page中的內容ok的情況下將page中的內容拷貝到使用者空間去,主要的邏輯分為檢查引數是否合法進性拷貝操作*/

/*合法性檢查*/

isize = i_size_read(inode);

end_index = (isize - 1) >> PAGE_SHIFT;

if (unlikely(!isize || index > end_index)) {

put_page(page);

goto out;

}

/* nr is the maximum number of bytes to copy from this page */

nr = PAGE_SIZE;

if (index == end_index) {

nr = ((isize - 1) & ~PAGE_MASK) + 1;

if (nr <= offset) {

put_page(page);

goto out;

}

}

nr = nr - offset;

/* If users can be writing to this page using arbitrary

* virtual addresses, take care about potential aliasing

* before reading the page on the kernel side.

*/

if (mapping_writably_mapped(mapping))

flush_dcache_page(page);

/*

* When a sequential read accesses a page several times,

* only mark it as accessed the first time.

*/

if (prev_index != index || offset != prev_offset)

mark_page_accessed(page);

prev_index = index;

/*

* Ok, we have the page, and it's up-to-date, so

* now we can copy it to user space...

*/

/* 將資料從核心態複製到使用者態 */

ret = copy_page_to_iter(page, offset, nr, iter);

offset += ret;

index += offset >> PAGE_SHIFT;

offset &= ~PAGE_MASK;

prev_offset = offset;

put_page(page);

written += ret;

if (!iov_iter_count(iter))

goto out;

if (ret < nr) {

error = -EFAULT;

goto out;

}

continue;

page_not_up_to_date:

/* Get exclusive access to the page ...互斥訪問 */

error = lock_page_killable(page);

if (unlikely(error))

goto readpage_error;

page_not_up_to_date_locked:

/* Did it get truncated before we got the lock? */

/* 獲取到鎖之後,發現這個page沒有被映射了,

* 可能是在獲取鎖之前就被其它模組釋放掉了,重新開始獲取lock*/

if (!page->mapping) {

unlock_page(page);

put_page(page);

continue;

}

/* Did somebody else fill it already? */

/* 獲取到鎖後發現page中的資料已經ok了,不需要再讀取資料 */

if (PageUptodate(page)) {

unlock_page(page);

goto page_ok;

}

readpage:

/* 資料讀取操作

* A previous I/O error may have been due to temporary

* failures, eg. multipath errors.

* PG_error will be set again if readpage fails.

*/

ClearPageError(page);

/* Start the actual read. The read will unlock the page. */

error = mapping->a_ops->readpage(filp, page);

…

if (…error) {

….

goto readpage_error;

}

…

goto page_ok;

readpage_error:

/* UHHUH! A synchronous read error occurred. Report it,同步讀取失敗 */

put_page(page);

goto out;

no_cached_page:

/* 系統中沒有資料,又不進行預讀的情況,顯示的分配page,並讀取page

* Ok, it wasn't cached, so we need to create a new page.. */

page = page_cache_alloc_cold(mapping);

…

error = add_to_page_cache_lru(page, mapping, index,

mapping_gfp_constraint(mapping, GFP_KERNEL));

if (error) {

…

goto out;

}

goto readpage;

}

out:

ra->prev_pos = prev_index;

ra->prev_pos <<= PAGE_SHIFT;

ra->prev_pos |= prev_offset;

*ppos = ((loff_t)index << PAGE_SHIFT) + offset;

file_accessed(filp);

return written ? written : error;

}檔案系統通過預讀機制將磁碟上的資料讀入到page cache中

1.4Page Cache層

Page Cache是檔案資料在記憶體中的副本,因此Page Cache管理與記憶體管理系統和檔案系統都相關:一方面Page Cache作為實體記憶體的一部分,需要參與實體記憶體的分配回收過程,另一方面Page Cache中的資料來源於儲存裝置上的檔案,需要通過檔案系統與儲存裝置進行讀寫互動。從作業系統的角度考慮,Page Cache可以看做是記憶體管理系統與檔案系統之間的聯絡紐帶。因此,Page Cache管理是作業系統的一個重要組成部分,它的效能直接影響著檔案系統和記憶體管理系統的效能。

本文不做詳細介紹,後續講解。

1.5通用塊層

同IO寫流程分析。

1.6IO排程層

同IO寫流程分析。

1.7裝置驅動層

同IO寫流程分析。

1.8裝置層

暫不分析。

1.9參考文獻

[部落格] http://blog.chinaunix.net/uid-28236237-id-4030381.html