Spark 2.1 Source Code Analysis 1: Setting Up a Source-Reading Environment in IDEA on Windows 10

Environment: Windows 10, IDEA 2016.3, Maven 3.3.9, Git, Scala 2.11.8, Java 1.8.0_101, sbt 0.13.12

Download:

# Run in Git Bash:

git clone https://github.com/apache/spark.git

git tag

git checkout v2.1.0-rc5

git checkout -b v2.1.0-rc5

Import into IDEA and start debugging:

File -> Open -> select the pom.xml in the root directory, then choose Open as Project

Build:

Wait for IDEA to finish indexing the files.

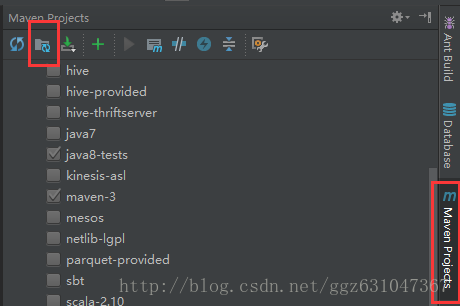

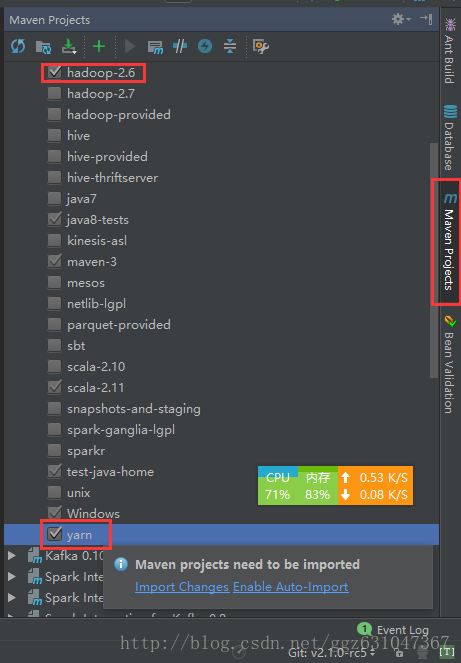

Open Maven Projects -> Profiles and check: hadoop-2.6, yarn

Click Import Changes, then wait again for IDEA to finish indexing the files.

Maven Projects -> Generate Sources and Update Folders For All Projects

mvn -T 6 -Pyarn -Phadoop-2.6 -DskipTests clean package

bin/spark-shell

Error:

Exception in thread "main" java.lang.ExceptionInInitializerError

at org.apache.spark.package$.<init>(package.scala:91)

at org.apache

Solution:

Look at the pom.xml of spark-core and you will find:

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-antrun-plugin</artifactId>

<executions>

<execution>

<phase>generate-resources</phase>

<configuration>

<!-- Execute the shell script to generate the spark build information. -->

<target>

<exec executable="bash">

<arg value="${project.basedir}/../build/spark-build-info"/>

<arg value="${project.build.directory}/extra-resources"/>

<arg value="${project.version}"/>

</exec>

</target>

</configuration>

<goals>

<goal>run</goal>

</goals>

</execution>

</executions>

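</plugin>

Here the script fails because of a file path problem, so the spark-version-info.properties file is not generated. Run the script manually instead. The third argument used below, spark-tags_2.11, was worked out by the author from the variables; note that in the pom this position holds ${project.version}. Since no Linux emulation environment is installed, run this command in Git Bash: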

build/spark-build-info /f/spark2/spark/core/target/extra-resources spark-tags_2.11

In spark\assembly\target\scala-2.11\jars, find: spark-core_2.11-2.2.0-SNAPSHOT.jar

Check whether the root of that jar contains the file spark-version-info.properties. If it does not, add the file to the jar, then try bin/spark-shell again.
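Before repackaging the jar, it can help to confirm that the manual script run actually produced the properties file. The following is only a minimal sketch: the function name `check_build_info` is hypothetical, and it assumes the generated file carries a `version=` entry, as the version argument passed to the script suggests.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of Spark): succeeds when
# DIR/spark-version-info.properties exists and has a non-empty "version=" entry.
# Assumption: the build-info script writes a line of the form version=<value>.
check_build_info() {
  local props="$1/spark-version-info.properties"
  if [ ! -f "$props" ]; then
    echo "missing: $props"
    return 1
  fi
  if ! grep -q '^version=.' "$props"; then
    echo "no version entry in: $props"
    return 1
  fi
  # Print the version line so the value can be checked by eye.
  echo "ok: $(grep '^version=' "$props")"
}
```

For the paths used in this post, the call would be `check_build_info /f/spark2/spark/core/target/extra-resources`. If the file is present there but missing from the jar, one option is the JDK's jar tool, for example `jar uf <jar file> -C <directory> spark-version-info.properties`, which adds the file at the jar root.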

Set a breakpoint and run org.apache.spark.examples.SparkPi, adding -Dspark.master=local[*] to the VM options. Click Run and wait for the build to finish.

Error:

Exception in thread "main" java.lang.NoClassDefFoundError: scala/collection/Seq

at org.apache.spark.examples.SparkPi.main(SparkPi.scala)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at com.intellij.rt.execution.application.AppMain.main(AppMain.java:147)

Caused by: java.lang.ClassNotFoundException: scala.collection.Seq

at java.net.URLClassLoader.findClass(URLClassLoader.java:381)

at java.lang.ClassLoader.loadClass(ClassLoader.java:424)

at sun.misc.Launcher$AppClassLoader.loadClass(Launcher.java:331)

at java.lang.ClassLoader.loadClass(ClassLoader.java:357)

... 6 more

Process finished with exit code 1

Solution:

Menu -> File -> Project Structure -> Modules -> spark-examples_2.11 -> Dependencies: add the jars directory {spark dir}/spark/assembly/target/scala-2.11/jars/ as a dependency
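The missing class scala.collection.Seq lives in the Scala standard library, so this fix only helps if the assembly jars directory really contains a scala-library jar. As a quick sanity check before adding the directory in IDEA, something like the sketch below could be run in Git Bash. The function name `find_scala_library` is hypothetical, and the `scala-library-*.jar` naming pattern is an assumption about how the jar is named in that directory.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the first scala-library jar found in DIR.
# Assumption: the jar is named scala-library-<version>.jar.
find_scala_library() {
  local dir="$1" jar
  for jar in "$dir"/scala-library-*.jar; do
    # When nothing matches, the glob stays unexpanded; -e filters that out.
    if [ -e "$jar" ]; then
      echo "$jar"
      return 0
    fi
  done
  echo "no scala-library jar in $dir" >&2
  return 1
}
```

Usage would look like `find_scala_library "{spark dir}/spark/assembly/target/scala-2.11/jars"`, with {spark dir} replaced by the local checkout location. A non-zero exit status suggests the package step did not populate the directory.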

Error:

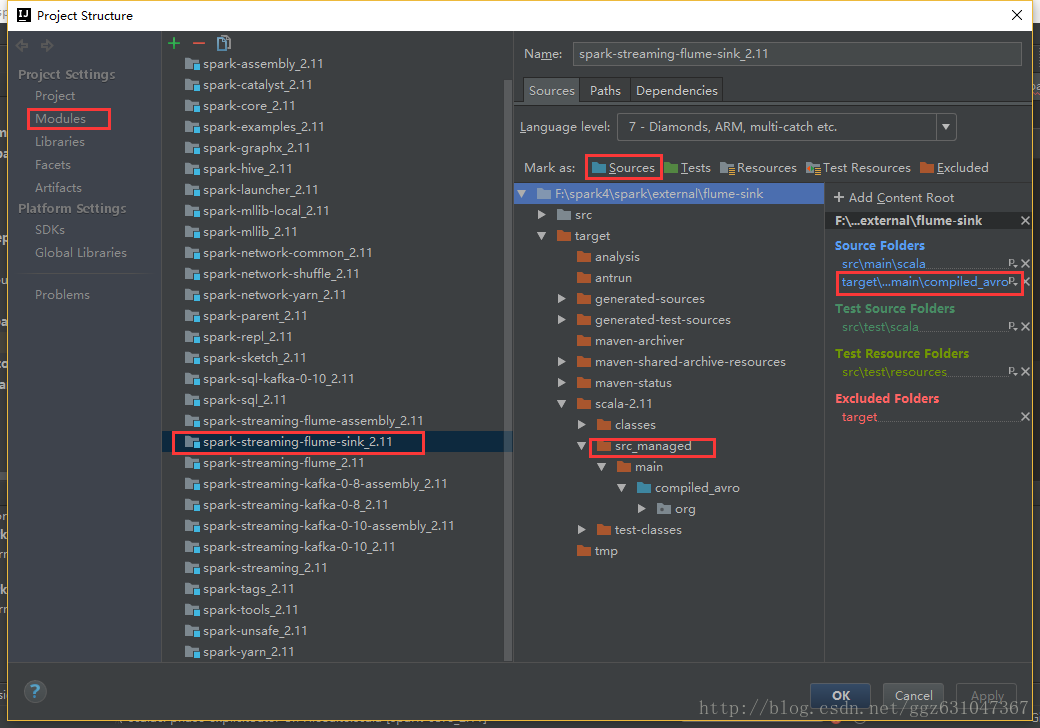

Error:(45, 66) not found: type SparkFlumeProtocol

Solution:

Mark the compiled_avro directory of the subproject spark-streaming-flume-sink_2.11 as a source directory as well, then wait for the build to finish.