II. Image Stitching and Blending with OpenCV 3.4.2

阿新 • Published: 2019-02-13

Based on a post by an expert in this area:

The final, complete code is as follows:

    #include <algorithm>
    #include <iostream>
    #include <vector>

    #include "opencv2/core.hpp"
    #include "opencv2/imgcodecs.hpp"
    #include "opencv2/highgui.hpp"
    #include "opencv2/features2d.hpp"
    #include "opencv2/calib3d.hpp"
    #include "opencv2/imgproc.hpp"
    #include "opencv2/xfeatures2d.hpp"

    using namespace cv;
    using namespace std;
    using namespace cv::xfeatures2d;

    void OptimizeSeam(Mat& img1, Mat& trans, Mat& dst);

    typedef struct
    {
        Point2f left_top;
        Point2f left_bottom;
        Point2f right_top;
        Point2f right_bottom;
    } four_corners_t;

    four_corners_t corners;

    // Map pixel (x, y) through the homography H and return the projected point.
    static Point2f ProjectPoint(const Mat& H, double x, double y)
    {
        Mat v2 = (Mat_<double>(3, 1) << x, y, 1.0); // homogeneous column vector
        Mat v1 = H * v2;                            // transformed coordinates
        double w = v1.at<double>(2, 0);
        return Point2f((float)(v1.at<double>(0, 0) / w), (float)(v1.at<double>(1, 0) / w));
    }

    // Compute where the four corners of src land after applying H.
    void CalcCorners(const Mat& H, const Mat& src)
    {
        corners.left_top = ProjectPoint(H, 0, 0);                   // top-left (0, 0, 1)
        corners.left_bottom = ProjectPoint(H, 0, src.rows);         // bottom-left (0, src.rows, 1)
        corners.right_top = ProjectPoint(H, src.cols, 0);           // top-right (src.cols, 0, 1)
        corners.right_bottom = ProjectPoint(H, src.cols, src.rows); // bottom-right (src.cols, src.rows, 1)
    }

    int main()
    {
        Mat a = imread("2.jpg", 1); // right image
        Mat b = imread("1.jpg", 1); // left image
        if (a.empty() || b.empty())
        {
            cout << "Can't read image" << endl;
            return -1;
        }

        // SURF is created differently than in OpenCV 2, and the xfeatures2d namespace is required;
        // otherwise SURF is reported as an undeclared identifier even when everything is configured.
        Ptr<SURF> surf = SURF::create(800);

        BFMatcher matcher; // brute-force matcher
        Mat c, d;
        vector<KeyPoint> key1, key2;

        // A DMatch describes one matched pair of feature points. If feature m in the left image
        // matches feature n in the right image best, the DMatch records that pairing together with
        // the distance between their descriptors; that distance is used for filtering below.
        vector<DMatch> matches;

        // Arguments: input image, input mask, output keypoints, output descriptor Mat.
        // The descriptor Mat has one row per keypoint and one column per descriptor element
        // (64 dimensions for SURF).
        surf->detectAndCompute(a, Mat(), key1, c);
        surf->detectAndCompute(b, Mat(), key2, d);

        // Match descriptors: query = left image (d), train = right image (c).
        matcher.match(d, c, matches);

        // Sort the matches in ascending order of descriptor distance.
        std::sort(matches.begin(), matches.end());

        // Keep only the closest matches: at most 50, and at most 15% of all matches.
        vector<DMatch> good_matches;
        int ptsPairs = std::min(50, (int)(matches.size() * 0.15));
        cout << ptsPairs << endl;
        if (ptsPairs < 4)
        {
            cout << "Not enough matches to estimate a homography" << endl;
            return -1;
        }
        for (int i = 0; i < ptsPairs; i++)
        {
            good_matches.push_back(matches[i]);
        }

        // drawMatches places the two images side by side and draws the matched pairs.
        Mat outimg;
        drawMatches(b, key2, a, key1, good_matches, outimg, Scalar::all(-1), Scalar::all(-1),
                    vector<char>(), DrawMatchesFlags::NOT_DRAW_SINGLE_POINTS);
        imshow("matches", outimg);

        /////////////////////// Image registration and blending ////////////////////////
        vector<Point2f> imagePoints1, imagePoints2;
        for (size_t i = 0; i < good_matches.size(); i++)
        {
            imagePoints2.push_back(key2[good_matches[i].queryIdx].pt);
            imagePoints1.push_back(key1[good_matches[i].trainIdx].pt);
        }

        // 3x3 projective mapping from image 1 (right) to image 2 (left).
        // RANSAC is the C++ name of the older CV_RANSAC constant.
        Mat homo = findHomography(imagePoints1, imagePoints2, RANSAC);
        // getPerspectiveTransform also returns a perspective matrix, but it accepts exactly
        // 4 point pairs and gives slightly worse results:
        // Mat homo = getPerspectiveTransform(imagePoints1, imagePoints2);
        cout << "Homography:\n" << homo << endl << endl;

        // Compute the four corner coordinates of the registered image.
        CalcCorners(homo, a);
        cout << "left_top:" << corners.left_top << endl;
        cout << "left_bottom:" << corners.left_bottom << endl;
        cout << "right_top:" << corners.right_top << endl;
        cout << "right_bottom:" << corners.right_bottom << endl;

        // Image registration: warp the right image into the left image's frame.
        Mat imageTransform1;
        warpPerspective(a, imageTransform1, homo,
                        Size((int)MAX(corners.right_top.x, corners.right_bottom.x), b.rows));
        imshow("warped by the homography", imageTransform1);
        imwrite("trans1.jpg", imageTransform1);

        // Create the stitched image; its size has to be computed up front.
        // This assumes the warped image extends at least as far right as the left image.
        int dst_width = imageTransform1.cols; // the rightmost warped point sets the width
        int dst_height = b.rows;
        Mat dst(dst_height, dst_width, CV_8UC3);
        dst.setTo(0);

        imageTransform1.copyTo(dst(Rect(0, 0, imageTransform1.cols, imageTransform1.rows)));
        b.copyTo(dst(Rect(0, 0, b.cols, b.rows)));
        imshow("b_dst", dst);

        OptimizeSeam(b, imageTransform1, dst);

        imshow("dst", dst);
        imwrite("dst.jpg", dst);

        waitKey();
        return 0;
    }

    // Smooth the junction between the two images so that the seam looks natural.
    void OptimizeSeam(Mat& img1, Mat& trans, Mat& dst)
    {
        // Left boundary of the overlap region, clamped so that it never falls before column 0.
        int start = MAX(0, (int)MIN(corners.left_top.x, corners.left_bottom.x));
        double processWidth = img1.cols - start; // width of the overlap region
        int rows = dst.rows;
        int cols = img1.cols; // number of pixel columns; the channel offset is applied as j * 3 below
        double alpha = 1;     // weight of the img1 pixel
        for (int i = 0; i < rows; i++)
        {
            uchar* p = img1.ptr<uchar>(i); // pointer to the start of row i
            uchar* t = trans.ptr<uchar>(i);
            uchar* d = dst.ptr<uchar>(i);
            for (int j = start; j < cols; j++)
            {
                // A black pixel in trans means "no data": copy the img1 pixel unchanged.
                if (t[j * 3] == 0 && t[j * 3 + 1] == 0 && t[j * 3 + 2] == 0)
                {
                    alpha = 1;
                }
                else
                {
                    // The img1 weight falls linearly from 1 at the left boundary of the overlap
                    // to 0 at its right boundary; in practice this works well.
                    alpha = (processWidth - (j - start)) / processWidth;
                }
                d[j * 3] = p[j * 3] * alpha + t[j * 3] * (1 - alpha);
                d[j * 3 + 1] = p[j * 3 + 1] * alpha + t[j * 3 + 1] * (1 - alpha);
                d[j * 3 + 2] = p[j * 3 + 2] * alpha + t[j * 3 + 2] * (1 - alpha);
            }
        }
    }
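
The match-filtering step in the program above (sort by descriptor distance, then keep at most 50 matches and at most 15% of the total) can be looked at on its own. The following is a minimal sketch in plain C++ without OpenCV; the `Match` struct and `selectBestMatches` function are hypothetical stand-ins for `cv::DMatch` and the inline loop in `main`, introduced here only for illustration.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for cv::DMatch: only the fields used here.
struct Match {
    int queryIdx;    // keypoint index in the query (left) image
    int trainIdx;    // keypoint index in the train (right) image
    float distance;  // descriptor distance; smaller means a better match
};

// Return the best matches: at most maxKeep, and at most `fraction` of all matches.
std::vector<Match> selectBestMatches(std::vector<Match> matches,
                                     std::size_t maxKeep = 50,
                                     double fraction = 0.15) {
    assert(fraction >= 0.0 && fraction <= 1.0);
    // Ascending by distance, which is the same ordering cv::DMatch::operator< gives.
    std::sort(matches.begin(), matches.end(),
              [](const Match& a, const Match& b) { return a.distance < b.distance; });
    const std::size_t byFraction =
        static_cast<std::size_t>(matches.size() * fraction);
    matches.resize(std::min(maxKeep, byFraction));  // never grows: both bounds <= size
    return matches;
}
```

Because the result is capped by a fraction of the total, a pair of images with few raw matches can end up with fewer than the 4 point pairs a homography needs, so it is worth checking the size of the returned vector before calling `findHomography`.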

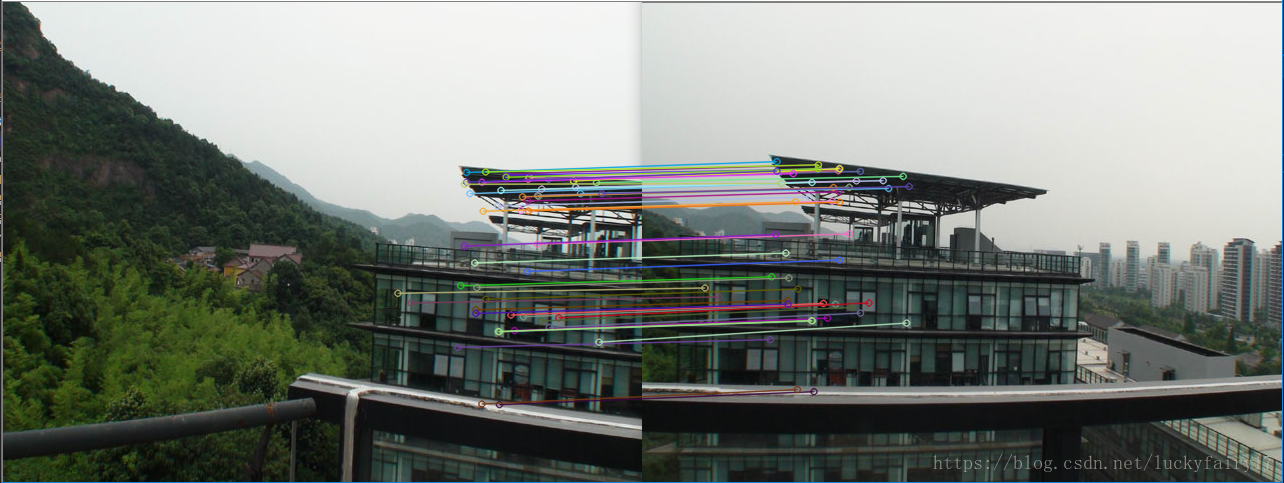

Matching result:

Result of applying the perspective transform to the right image:
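
The size of this warped image comes from projecting the four corners of the right image through the homography, which is what `CalcCorners` does. As a rough sketch of the arithmetic, here is the same projection in plain C++ without OpenCV; `Mat3`, `Point2`, `projectPoint`, and `projectCorners` are illustrative names, not part of the original program.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Mat3 = std::array<std::array<double, 3>, 3>;  // row-major 3x3 homography

struct Point2 { double x; double y; };

// Map (x, y) through H in homogeneous coordinates, then divide by w.
Point2 projectPoint(const Mat3& H, double x, double y) {
    const double u = H[0][0] * x + H[0][1] * y + H[0][2];
    const double v = H[1][0] * x + H[1][1] * y + H[1][2];
    const double w = H[2][0] * x + H[2][1] * y + H[2][2];
    assert(std::fabs(w) > 1e-12);  // w near 0 means the point maps to infinity
    return {u / w, v / w};
}

// Corners in the order: top-left, bottom-left, top-right, bottom-right.
std::array<Point2, 4> projectCorners(const Mat3& H, int cols, int rows) {
    return {{projectPoint(H, 0, 0), projectPoint(H, 0, rows),
             projectPoint(H, cols, 0), projectPoint(H, cols, rows)}};
}
```

For example, with a homography that is a pure translation by (10, 5), the top-left corner of a 100x50 image lands at (10, 5) and the bottom-right corner at (110, 55). The division by `w` is what distinguishes a projective mapping from an affine one.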

Stitched and blended result after seam optimization:
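
The seam optimization behind this result is a linear cross-fade: inside the overlap, the weight of the left image falls from 1 at the left edge to 0 at the right edge, and black pixels in the warped image are treated as "no data". A minimal sketch of that weighting for a single row of interleaved 3-channel pixels, in plain C++ without OpenCV, might look like this; `leftWeight` and `blendRow` are hypothetical helper names.

```cpp
#include <cassert>
#include <cstdint>

// Weight of the left image at column j inside the overlap [start, end):
// 1.0 at the left edge, decreasing linearly toward 0 at the right edge.
double leftWeight(int j, int start, int end) {
    assert(end > start);
    const double width = end - start;
    return (width - (j - start)) / width;
}

// Cross-fade one row of interleaved 3-channel pixels over columns [start, end).
// A warped pixel that is pure black is treated as empty, so the left pixel is kept.
void blendRow(const std::uint8_t* left, const std::uint8_t* warped,
              std::uint8_t* dst, int start, int end) {
    for (int j = start; j < end; ++j) {
        const std::uint8_t* t = warped + 3 * j;
        const bool empty = (t[0] == 0 && t[1] == 0 && t[2] == 0);
        const double alpha = empty ? 1.0 : leftWeight(j, start, end);
        for (int c = 0; c < 3; ++c) {
            dst[3 * j + c] = static_cast<std::uint8_t>(
                left[3 * j + c] * alpha + t[c] * (1.0 - alpha));
        }
    }
}
```

One limitation of treating black as "no data" is that genuinely black pixels in the warped image are also replaced by the left image; a separate validity mask would avoid that, at the cost of carrying an extra image through the pipeline.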

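Note: this result warps the right image into the left image's frame. Transforming both images relative to a central coordinate frame should give a more natural, comfortable-looking panorama. To be continued.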
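
One small ingredient that any such re-centering needs is a translation composed with the homography, so that warped content with negative coordinates still lands on the canvas. The sketch below shows only that composition in plain C++; it is an assumption about how one might start, not the method used by the original post or by OpenCV, and `multiply`, `translation`, and `shiftIntoView` are hypothetical names.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cmath>

using Mat3 = std::array<std::array<double, 3>, 3>;  // row-major 3x3 matrix

// Plain 3x3 matrix product C = A * B.
Mat3 multiply(const Mat3& A, const Mat3& B) {
    Mat3 C{};  // zero-initialized
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j)
            for (int k = 0; k < 3; ++k)
                C[i][j] += A[i][k] * B[k][j];
    return C;
}

// Homogeneous translation by (tx, ty).
Mat3 translation(double tx, double ty) {
    return {{{1.0, 0.0, tx}, {0.0, 1.0, ty}, {0.0, 0.0, 1.0}}};
}

// Given the smallest x and y over all projected corners, return T * H where T
// shifts the warped image just enough that no coordinate is negative.
Mat3 shiftIntoView(const Mat3& H, double minX, double minY) {
    assert(std::isfinite(minX) && std::isfinite(minY));
    const double tx = std::max(0.0, -minX);
    const double ty = std::max(0.0, -minY);
    return multiply(translation(tx, ty), H);
}
```

With this kind of shift, the image that is not warped has to be pasted at the same `(tx, ty)` offset rather than at the origin, and the canvas has to grow by the same amount.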

Below is the result of running the image stitching function from opencv2/stitching (I am still studying how it works internally):

    #include <iostream>
    #include <string>
    #include <vector>

    #include "opencv2/core.hpp"
    #include "opencv2/imgcodecs.hpp"
    #include "opencv2/highgui.hpp"
    #include "opencv2/stitching.hpp"

    using namespace cv;
    using namespace std;

    bool try_use_gpu = false;
    vector<Mat> imgs;
    string result_name = "dst1.jpg";

    int main(int argc, char* argv[])
    {
        Mat img1 = imread("2.jpg");
        Mat img2 = imread("1.jpg");

        // Check the inputs before displaying them; imshow fails on an empty Mat.
        if (img1.empty() || img2.empty())
        {
            cout << "Can't read image" << endl;
            return -1;
        }

        imshow("p1", img1);
        imshow("p2", img2);

        imgs.push_back(img1);
        imgs.push_back(img2);

        Stitcher stitcher = Stitcher::createDefault(try_use_gpu);

        // Stitch the images with the stitch function.
        Mat pano;
        Stitcher::Status status = stitcher.stitch(imgs, pano);
        if (status != Stitcher::OK)
        {
            cout << "Can't stitch images, error code = " << int(status) << endl;
            return -1;
        }
        imwrite(result_name, pano);

        // Show the panorama next to the source images shown above.
        imshow("panorama", pano);
        waitKey();
        return 0;
    }

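The original images:

Stitched result (it matches normal visual expectations and is centrally symmetric):

Guess: either both images are transformed relative to a central coordinate frame, or the result is rotated after being transformed relative to the left image.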