Win10系統下一步一步教你實現MASK_RCNN訓練自己的資料集(使用labelme製作自己的資料集)及需要注意的大坑

一、Labelme的安裝

二、製作自己的資料集

2.1 首先使用labelme標註如下樣式圖片(我的圖片是jpg格式)

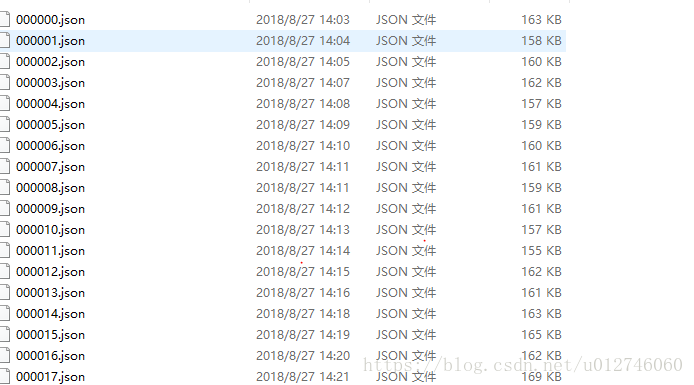

2.2每個檔案生成一個對應的.json檔案。如下

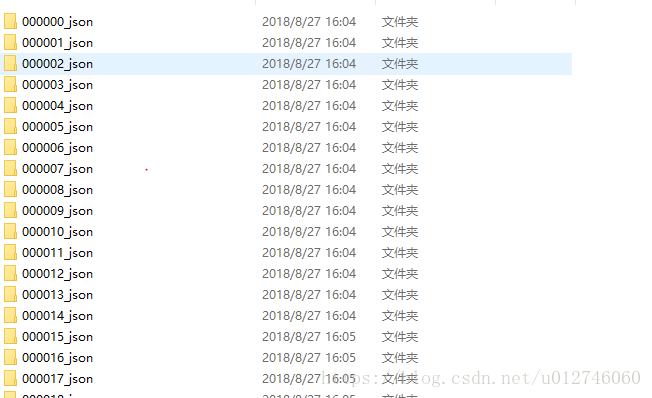

2.3執行上面參考部落格最後給出的指令碼程式批量將json檔案轉化資料集,生成如下格式的檔案:

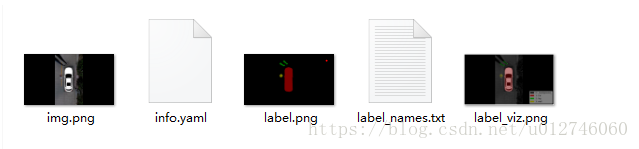

2.4開啟每個檔案可以看到生成的5個檔案,如下:

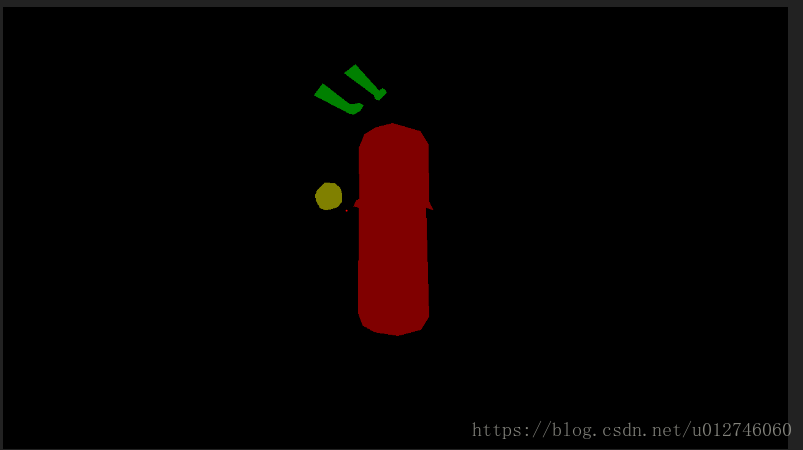

2.5值得注意的是,開啟label.png檔案,你會發現是彩色的,而且是有自己標註的形狀顯示的如下:

開啟該label.png檔案的詳細資訊,你會發現圖片是8位的,這與很多部落格寫的不一樣(別的部落格生成的都是24位的而且看起來全黑的label.png圖片,而且給出了matlab或者c++程式碼才能將24位轉化為8位的label.png,過程非常繁瑣。為什麼出現這種狀況呢?檢視labelme原始碼,這是由於labelme版本不同。發現截至到寫部落格日期最新的版本與他們使用的版本不同)。

生成的這個8位彩色圖片就是最終需要的label.png圖片。就是最終,最終,最終label.png圖片。重要的話說三遍!!!

三、mask_rcnn程式的訓練和預測

該部落格建立了四個檔案,為保持統一。我也建立四個相同的檔案:pic目錄存放原始的圖片如步驟2.1。json目錄存放labelme標註的json檔案如步驟2.2。label_json存放生成的datase如步驟2.3。cv2_mask存放如步驟2.6生成的特定物體對應特定顏色的8位彩色label.png圖片。

訓練程式train_model.py:

# -*- coding: utf-8 -*- import os import sys import random import math import re import time import numpy as np import cv2 import matplotlib import matplotlib.pyplot as plt import tensorflow as tf from mrcnn.config import Config #import utils from mrcnn import model as modellib,utils from mrcnn import visualize import yaml from mrcnn.model import log from PIL import Image #os.environ["CUDA_VISIBLE_DEVICES"] = "0" # Root directory of the project ROOT_DIR = os.getcwd() #ROOT_DIR = os.path.abspath("../") # Directory to save logs and trained model MODEL_DIR = os.path.join(ROOT_DIR, "logs") iter_num=0 # Local path to trained weights file COCO_MODEL_PATH = os.path.join(ROOT_DIR, "mask_rcnn_coco.h5") # Download COCO trained weights from Releases if needed if not os.path.exists(COCO_MODEL_PATH): utils.download_trained_weights(COCO_MODEL_PATH) class ShapesConfig(Config): """Configuration for training on the toy shapes dataset. Derives from the base Config class and overrides values specific to the toy shapes dataset. """ # Give the configuration a recognizable name NAME = "shapes" # Train on 1 GPU and 8 images per GPU. We can put multiple images on each # GPU because the images are small. Batch size is 8 (GPUs * images/GPU). GPU_COUNT = 1 IMAGES_PER_GPU = 1 # Number of classes (including background) NUM_CLASSES = 1 + 3 # background + 3 shapes # Use small images for faster training. Set the limits of the small side # the large side, and that determines the image shape. IMAGE_MIN_DIM = 320 IMAGE_MAX_DIM = 384 # Use smaller anchors because our image and objects are small RPN_ANCHOR_SCALES = (8 * 6, 16 * 6, 32 * 6, 64 * 6, 128 * 6) # anchor side in pixels # Reduce training ROIs per image because the images are small and have # few objects. Aim to allow ROI sampling to pick 33% positive ROIs. TRAIN_ROIS_PER_IMAGE = 100 # Use a small epoch since the data is simple STEPS_PER_EPOCH = 100 # use small validation steps since the epoch is small VALIDATION_STEPS = 50 config = ShapesConfig() config.display() class DrugDataset(utils.Dataset): # 得到該圖中有多少個例項(物體) def get_obj_index(self, image): n = np.max(image) return n # 解析labelme中得到的yaml檔案,從而得到mask每一層對應的例項標籤 def from_yaml_get_class(self, image_id): info = self.image_info[image_id] with open(info['yaml_path']) as f: temp = yaml.load(f.read()) labels = temp['label_names'] del labels[0] return labels # 重新寫draw_mask def draw_mask(self, num_obj, mask, image,image_id): #print("draw_mask-->",image_id) #print("self.image_info",self.image_info) info = self.image_info[image_id] #print("info-->",info) #print("info[width]----->",info['width'],"-info[height]--->",info['height']) for index in range(num_obj): for i in range(info['width']): for j in range(info['height']): #print("image_id-->",image_id,"-i--->",i,"-j--->",j) #print("info[width]----->",info['width'],"-info[height]--->",info['height']) at_pixel = image.getpixel((i, j)) if at_pixel == index + 1: mask[j, i, index] = 1 return mask # 重新寫load_shapes,裡面包含自己的自己的類別 # 並在self.image_info資訊中添加了path、mask_path 、yaml_path # yaml_pathdataset_root_path = "/tongue_dateset/" # img_floder = dataset_root_path + "rgb" # mask_floder = dataset_root_path + "mask" # dataset_root_path = "/tongue_dateset/" def load_shapes(self, count, img_floder, mask_floder, imglist, dataset_root_path): """Generate the requested number of synthetic images. count: number of images to generate. height, width: the size of the generated images. """ # Add classes self.add_class("shapes", 1, "car") self.add_class("shapes", 2, "leg") self.add_class("shapes", 3, "well") for i in range(count): # 獲取圖片寬和高 filestr = imglist[i].split(".")[0] #print(imglist[i],"-->",cv_img.shape[1],"--->",cv_img.shape[0]) #print("id-->", i, " imglist[", i, "]-->", imglist[i],"filestr-->",filestr) # filestr = filestr.split("_")[1] mask_path = mask_floder + "/" + filestr + ".png" yaml_path = dataset_root_path + "labelme_json/" + filestr + "_json/info.yaml" print(dataset_root_path + "labelme_json/" + filestr + "_json/img.png") cv_img = cv2.imread(dataset_root_path + "labelme_json/" + filestr + "_json/img.png") self.add_image("shapes", image_id=i, path=img_floder + "/" + imglist[i], width=cv_img.shape[1], height=cv_img.shape[0], mask_path=mask_path, yaml_path=yaml_path) # 重寫load_mask def load_mask(self, image_id): """Generate instance masks for shapes of the given image ID. """ global iter_num print("image_id",image_id) info = self.image_info[image_id] count = 1 # number of object img = Image.open(info['mask_path']) num_obj = self.get_obj_index(img) mask = np.zeros([info['height'], info['width'], num_obj], dtype=np.uint8) mask = self.draw_mask(num_obj, mask, img,image_id) occlusion = np.logical_not(mask[:, :, -1]).astype(np.uint8) for i in range(count - 2, -1, -1): mask[:, :, i] = mask[:, :, i] * occlusion occlusion = np.logical_and(occlusion, np.logical_not(mask[:, :, i])) labels = [] labels = self.from_yaml_get_class(image_id) labels_form = [] for i in range(len(labels)): if labels[i].find("car") != -1: # print "car" labels_form.append("car") elif labels[i].find("leg") != -1: # print "leg" labels_form.append("leg") elif labels[i].find("well") != -1: # print "well" labels_form.append("well") class_ids = np.array([self.class_names.index(s) for s in labels_form]) return mask, class_ids.astype(np.int32) def get_ax(rows=1, cols=1, size=8): """Return a Matplotlib Axes array to be used in all visualizations in the notebook. Provide a central point to control graph sizes. Change the default size attribute to control the size of rendered images """ _, ax = plt.subplots(rows, cols, figsize=(size * cols, size * rows)) return ax #基礎設定 dataset_root_path="train_data/" img_floder = dataset_root_path + "pic" mask_floder = dataset_root_path + "cv2_mask" #yaml_floder = dataset_root_path imglist = os.listdir(img_floder) count = len(imglist) #train與val資料集準備 dataset_train = DrugDataset() dataset_train.load_shapes(count, img_floder, mask_floder, imglist,dataset_root_path) dataset_train.prepare() #print("dataset_train-->",dataset_train._image_ids) dataset_val = DrugDataset() dataset_val.load_shapes(7, img_floder, mask_floder, imglist,dataset_root_path) dataset_val.prepare() #print("dataset_val-->",dataset_val._image_ids) # Load and display random samples #image_ids = np.random.choice(dataset_train.image_ids, 4) #for image_id in image_ids: # image = dataset_train.load_image(image_id) # mask, class_ids = dataset_train.load_mask(image_id) # visualize.display_top_masks(image, mask, class_ids, dataset_train.class_names) # Create model in training mode model = modellib.MaskRCNN(mode="training", config=config, model_dir=MODEL_DIR) # Which weights to start with? init_with = "coco" # imagenet, coco, or last if init_with == "imagenet": model.load_weights(model.get_imagenet_weights(), by_name=True) elif init_with == "coco": # Load weights trained on MS COCO, but skip layers that # are different due to the different number of classes # See README for instructions to download the COCO weights # print(COCO_MODEL_PATH) model.load_weights(COCO_MODEL_PATH, by_name=True, exclude=["mrcnn_class_logits", "mrcnn_bbox_fc", "mrcnn_bbox", "mrcnn_mask"]) elif init_with == "last": # Load the last model you trained and continue training model.load_weights(model.find_last()[1], by_name=True) # Train the head branches # Passing layers="heads" freezes all layers except the head # layers. You can also pass a regular expression to select # which layers to train by name pattern. model.train(dataset_train, dataset_val, learning_rate=config.LEARNING_RATE, epochs=10, layers='heads') # Fine tune all layers # Passing layers="all" trains all layers. You can also # pass a regular expression to select which layers to # train by name pattern. model.train(dataset_train, dataset_val, learning_rate=config.LEARNING_RATE / 10, epochs=30, layers="all")

預測程式test_model.py:

import os import sys import random import skimage.io from mrcnn.config import Config from datetime import datetime # Root directory of the project ROOT_DIR = os.getcwd() # Import Mask RCNN sys.path.append(ROOT_DIR) # To find local version of the library from mrcnn import utils import mrcnn.model as modellib from mrcnn import visualize # Directory to save logs and trained model MODEL_DIR = os.path.join(ROOT_DIR, "logs") # Local path to trained weights file COCO_MODEL_PATH = os.path.join(MODEL_DIR ,"mask_rcnn_shapes_0030.h5") # Download COCO trained weights from Releases if needed if not os.path.exists(COCO_MODEL_PATH): utils.download_trained_weights(COCO_MODEL_PATH) # Directory of images to run detection on IMAGE_DIR = os.path.join(ROOT_DIR, "images") class ShapesConfig(Config): """Configuration for training on the toy shapes dataset. Derives from the base Config class and overrides values specific to the toy shapes dataset. """ # Give the configuration a recognizable name NAME = "shapes" # Train on 1 GPU and 8 images per GPU. We can put multiple images on each # GPU because the images are small. Batch size is 8 (GPUs * images/GPU). GPU_COUNT = 1 IMAGES_PER_GPU = 1 # Number of classes (including background) NUM_CLASSES = 1 + 3 # background + 3 shapes # Use small images for faster training. Set the limits of the small side # the large side, and that determines the image shape. IMAGE_MIN_DIM = 320 IMAGE_MAX_DIM = 384 # Use smaller anchors because our image and objects are small RPN_ANCHOR_SCALES = (8 * 6, 16 * 6, 32 * 6, 64 * 6, 128 * 6) # anchor side in pixels # Reduce training ROIs per image because the images are small and have # few objects. Aim to allow ROI sampling to pick 33% positive ROIs. TRAIN_ROIS_PER_IMAGE =100 # Use a small epoch since the data is simple STEPS_PER_EPOCH = 100 # use small validation steps since the epoch is small VALIDATION_STEPS = 50 #import train_tongue #class InferenceConfig(coco.CocoConfig): class InferenceConfig(ShapesConfig): # Set batch size to 1 since we'll be running inference on # one image at a time. Batch size = GPU_COUNT * IMAGES_PER_GPU GPU_COUNT = 1 IMAGES_PER_GPU = 1 config = InferenceConfig() model = modellib.MaskRCNN(mode="inference", model_dir=MODEL_DIR, config=config) # Create model object in inference mode. model = modellib.MaskRCNN(mode="inference", model_dir=MODEL_DIR, config=config) # Load weights trained on MS-COCO model.load_weights(COCO_MODEL_PATH, by_name=True) # COCO Class names # Index of the class in the list is its ID. For example, to get ID of # the teddy bear class, use: class_names.index('teddy bear') class_names = ['BG', 'car','leg','well'] # Load a random image from the images folder file_names = next(os.walk(IMAGE_DIR))[2] image = skimage.io.imread(os.path.join(IMAGE_DIR, random.choice(file_names))) a=datetime.now() # Run detection results = model.detect([image], verbose=1) b=datetime.now() # Visualize results print("shijian",(b-a).seconds) r = results[0] visualize.display_instances(image, r['rois'], r['masks'], r['class_ids'], class_names, r['scores'])

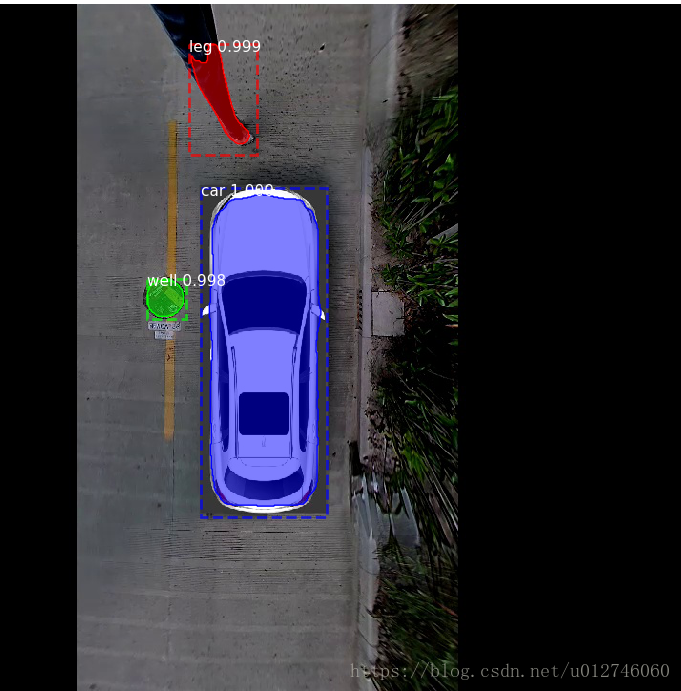

執行結果如下:

寫在最後:本文只是簡單的入門。本文謄寫最大的動機其實是第二步中的label.png的提到的兩個注意的問題,這也是很多人疑惑的地方。至於後面的示例,只是單純的方便讀者入門調通程式。當然讀者還需要進一步加入自己的工作。例如優化上面的步驟,計算map,還有在視訊檢測中使用maskrcnn。由於涉及到專案私密就不給出來,官方給出的很多示例程式:https://github.com/matterport/Mask_RCNN,這方面的部落格也有很多,還是需要大家繼續努力。

注:在執行test_model時會出現AttributeError: module 'keras.engine.topology' has no attribute'load_weights_from_hdf5_group_by_name. 解決辦法使用keras2.0.8版本。1)解除安裝keras:pip uninstall keras 2)安裝2.0.8版本的keras:pip install keras==2.0.8