iOS Development (Third-Party Libraries): Integrating the iFlytek Speech SDK

阿新 • Published: 2019-02-19

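1. Go to the iFlytek Open Platform, create an application, and add the services you need.

2. Download the SDK. If the download page asks you to choose an application, you must pick the matching one: an SDK downloaded for application 1 cannot be combined with the app ID of application 2. (iFlytek presumably binds the SDK to the app ID, which is also why steps 1 and 2 cannot be swapped.)

3. Copy iflyMSC.framework from the downloaded SDK to the desktop, then drag it into the Xcode project.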

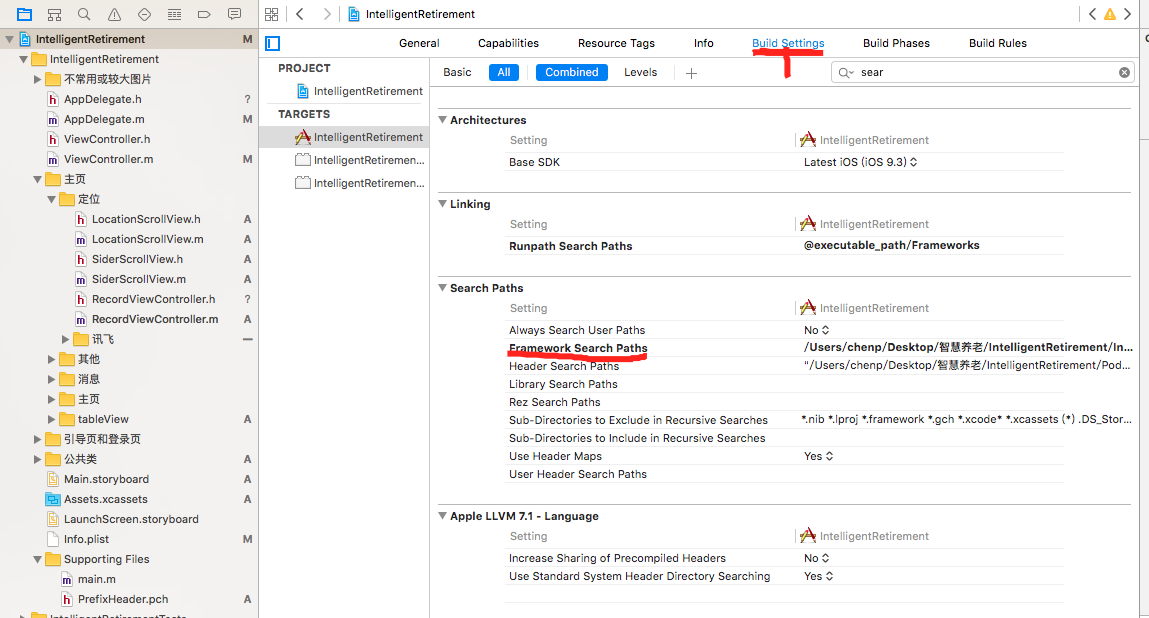

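4. Select the iflyMSC.framework you just dragged in and choose Show in Finder. Then follow the screenshot below: double-click the value on the right, and when the large box pops up, drag the folder that contains iflyMSC.framework into it.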

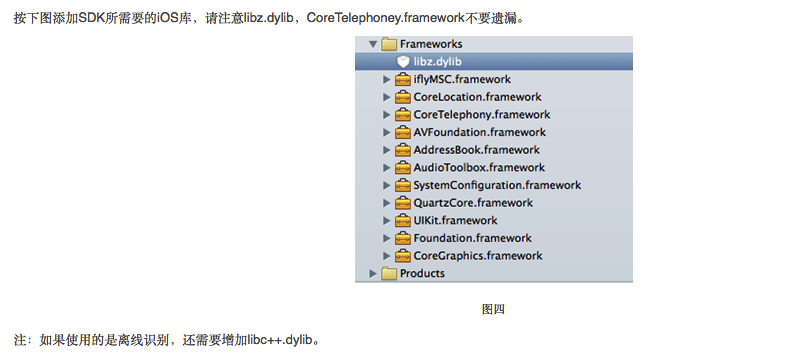

5. Add the required libraries, as shown in the figure below.

6. In AppDelegate.m, import the header iflyMSC/IFlyMSC.h and add the following code:

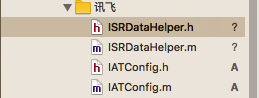
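The AppDelegate code is only available as a screenshot. As a rough sketch of what this step usually looks like, the snippet below registers the app ID with the SDK at launch. It assumes the SDK's `IFlySpeechUtility createUtility:` entry point and an `appid=<id>` parameter string. `YOUR_APP_ID` is a placeholder, not a value from the original post.

```objective-c
#import "AppDelegate.h"
#import "iflyMSC/IFlyMSC.h"

@implementation AppDelegate

- (BOOL)application:(UIApplication *)application
    didFinishLaunchingWithOptions:(NSDictionary *)launchOptions {
    // Placeholder: use the app ID of the application the SDK was downloaded for.
    NSString *appID = @"YOUR_APP_ID";

    // Assumption: the SDK expects its startup parameters as "appid=<id>".
    NSString *initString = [NSString stringWithFormat:@"appid=%@", appID];
    [IFlySpeechUtility createUtility:initString];

    return YES;
}

@end
```

Because the SDK package is tied to the app ID, a mismatch between the two is a likely first thing to check if recognition fails to start.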

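    - (BOOL

7. In the downloaded SDK, locate the files shown in the figure below and drag them into the project (the code in the following steps relies on IATConfig and ISRDataHelper).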

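8. In the view controller where you need speech recognition, import the headers and adopt the IFlySpeechRecognizerDelegate protocol:

    #import "iflyMSC/IFlySpeechRecognizerDelegate.h"
    #import "iflyMSC/IFlySpeechRecognizer.h"
    #import "iflyMSC/IFlyMSC.h"
    #import "IATConfig.h"
    #import "ISRDataHelper.h"

9. Declare the instance variable IFlySpeechRecognizer *_iFlySpeechRecognizer; and add the following code to the action of the speech recognition button: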

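    // Create and configure the recognizer on first use.
    if (_iFlySpeechRecognizer == nil) {
        [self initRecognizer];
    }

    // Cancel any session that is still in progress.
    [_iFlySpeechRecognizer cancel];

    // Use the microphone as the audio source.
    [_iFlySpeechRecognizer setParameter:IFLY_AUDIO_SOURCE_MIC forKey:@"audio_source"];

    // Return dictation results as JSON.
    [_iFlySpeechRecognizer setParameter:@"json" forKey:[IFlySpeechConstant RESULT_TYPE]];

    [_iFlySpeechRecognizer setDelegate:self];

    // startListening returns YES when the session started successfully.
    BOOL ret = [_iFlySpeechRecognizer startListening];
    if (ret) {
        NSLog(@"start");
    } else {
        NSLog(@"error");
    }

10. The initRecognizer method is as follows: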

    - (void)initRecognizer
    {
        // Singleton recognizer instance without built-in UI.
        if (_iFlySpeechRecognizer == nil) {
            _iFlySpeechRecognizer = [IFlySpeechRecognizer sharedInstance];
            [_iFlySpeechRecognizer setParameter:@"" forKey:[IFlySpeechConstant PARAMS]];
            // Use dictation mode.
            [_iFlySpeechRecognizer setParameter:@"iat" forKey:[IFlySpeechConstant IFLY_DOMAIN]];
        }
        _iFlySpeechRecognizer.delegate = self;

        if (_iFlySpeechRecognizer != nil) {
            IATConfig *instance = [IATConfig sharedInstance];
            // Maximum recording time.
            [_iFlySpeechRecognizer setParameter:instance.speechTimeout forKey:[IFlySpeechConstant SPEECH_TIMEOUT]];
            // Back endpoint (VAD_EOS).
            [_iFlySpeechRecognizer setParameter:instance.vadEos forKey:[IFlySpeechConstant VAD_EOS]];
            // Front endpoint (VAD_BOS).
            [_iFlySpeechRecognizer setParameter:instance.vadBos forKey:[IFlySpeechConstant VAD_BOS]];
            // Network timeout.
            [_iFlySpeechRecognizer setParameter:@"20000" forKey:[IFlySpeechConstant NET_TIMEOUT]];
            // Sample rate; 16K is recommended.
            [_iFlySpeechRecognizer setParameter:instance.sampleRate forKey:[IFlySpeechConstant SAMPLE_RATE]];

            if ([instance.language isEqualToString:[IATConfig chinese]]) {
                // Language.
                [_iFlySpeechRecognizer setParameter:instance.language forKey:[IFlySpeechConstant LANGUAGE]];
                // Dialect (accent); only set for Chinese.
                [_iFlySpeechRecognizer setParameter:instance.accent forKey:[IFlySpeechConstant ACCENT]];
            } else if ([instance.language isEqualToString:[IATConfig english]]) {
                [_iFlySpeechRecognizer setParameter:instance.language forKey:[IFlySpeechConstant LANGUAGE]];
            }

            // "0": return results without punctuation.
            [_iFlySpeechRecognizer setParameter:@"0" forKey:[IFlySpeechConstant ASR_PTT]];
        }
    }

11. Delegate methods:

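    // Called with recognition results; isLast is YES for the final callback of a session.
    - (void)onResults:(NSArray *)results isLast:(BOOL)isLast
    {
        NSMutableString *resultString = [[NSMutableString alloc] init];
        // firstObject is nil for an empty array, so this does not crash when results is empty.
        NSDictionary *dic = results.firstObject;
        // The keys of the dictionary hold the JSON text of the result.
        for (NSString *key in dic) {
            [resultString appendFormat:@"%@", key];
        }
        NSString *resultFromJson = [ISRDataHelper stringFromJson:resultString];
        NSLog(@"resultFromJson=%@", resultFromJson);
    }

    // Called when the recognition session ends or fails.
    - (void)onError:(IFlySpeechError *)error
    {
        // error.errorCode == 0 means dictation succeeded; any other value is an error.
        NSLog(@"code=%d", error.errorCode);
        if (error.errorCode != 0) {
            // Handle the error here.
        }
    }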
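The onResults: handler hands the raw JSON to ISRDataHelper's stringFromJson:, a helper taken from the SDK sample files. To give a feel for what such a helper does, here is a minimal sketch of a stand-in. It assumes the dictation JSON has the shape `{"ws":[{"cw":[{"w":"..."}]}]}`, which the original post does not confirm. `TextFromDictationJSON` is a hypothetical name, not part of the SDK, and it keeps only the first candidate per word slot, which may differ from the real helper.

```objective-c
#import <Foundation/Foundation.h>

// Hypothetical stand-in for ISRDataHelper's stringFromJson: (not SDK code).
// Assumes a result shaped like {"ws":[{"cw":[{"w":"..."}]}]} and joins the
// first candidate word ("w") of every slot in "ws".
static NSString *TextFromDictationJSON(NSString *json) {
    NSData *data = [json dataUsingEncoding:NSUTF8StringEncoding];
    if (data == nil) {
        return @"";
    }

    id root = [NSJSONSerialization JSONObjectWithData:data options:0 error:NULL];
    if (![root isKindOfClass:[NSDictionary class]]) {
        return @"";
    }

    NSArray *slots = ((NSDictionary *)root)[@"ws"];
    if (![slots isKindOfClass:[NSArray class]]) {
        return @"";
    }

    NSMutableString *text = [NSMutableString string];
    for (NSDictionary *slot in slots) {
        if (![slot isKindOfClass:[NSDictionary class]]) continue;

        NSArray *candidates = slot[@"cw"];
        if (![candidates isKindOfClass:[NSArray class]]) continue;

        // Keep only the first candidate for this word slot.
        NSDictionary *first = candidates.firstObject;
        if (![first isKindOfClass:[NSDictionary class]]) continue;

        NSString *word = first[@"w"];
        if ([word isKindOfClass:[NSString class]]) {
            [text appendString:word];
        }
    }
    return text;
}
```

Since onResults: can fire several times per session, one option is to append each parsed fragment to a mutable string and use the accumulated text once isLast is YES.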