Learning PyTorch from scratch (5): the multilayer perceptron and its implementation

Multilayer perceptron

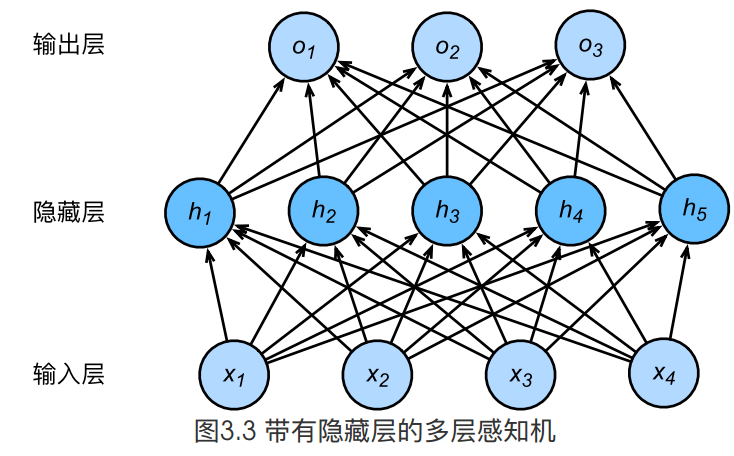

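The multilayer perceptron in the figure above (Figure 3.3) has 4 inputs and 3 outputs, and the hidden layer in between contains 5 hidden units. Because the input layer involves no computation, the multilayer perceptron in Figure 3.3 has 2 layers. As Figure 3.3 shows, every neuron in the hidden layer is connected to every input in the input layer, and every neuron in the output layer is connected to every neuron in the hidden layer. The hidden layer and the output layer of a multilayer perceptron are therefore both fully connected layers.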

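Concretely, take a minibatch of samples \(\boldsymbol{X} \in \mathbb{R}^{n \times d}\) with batch size \(n\) and \(d\) inputs. Assume the multilayer perceptron has a single hidden layer with \(h\) hidden units. Denote the output of the hidden layer (also called the hidden-layer variable or hidden variable) by \(\boldsymbol{H}\), with \(\boldsymbol{H} \in \mathbb{R}^{n \times h}\). Because the hidden layer and the output layer are both fully connected, we can let the weight and bias parameters of the hidden layer be \(\boldsymbol{W}_h \in \mathbb{R}^{d \times h}\) and \(\boldsymbol{b}_h \in \mathbb{R}^{1 \times h}\), and the weight and bias parameters of the output layer be \(\boldsymbol{W}_o \in \mathbb{R}^{h \times q}\) and \(\boldsymbol{b}_o \in \mathbb{R}^{1 \times q}\), where \(q\) is the number of outputs.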

Let us first look at one design for a multilayer perceptron with a single hidden layer. Its output \(\boldsymbol{O} \in \mathbb{R}^{n \times q}\) is computed as

\[ \begin{aligned} \boldsymbol{H} &= \boldsymbol{X} \boldsymbol{W}_h + \boldsymbol{b}_h,\\ \boldsymbol{O} &= \boldsymbol{H} \boldsymbol{W}_o + \boldsymbol{b}_o, \end{aligned} \]

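that is, the output of the hidden layer is used directly as the input of the output layer. Substituting the first equation into the second gives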

\[ \boldsymbol{O} = (\boldsymbol{X} \boldsymbol{W}_h + \boldsymbol{b}_h)\boldsymbol{W}_o + \boldsymbol{b}_o = \boldsymbol{X} \boldsymbol{W}_h\boldsymbol{W}_o + \boldsymbol{b}_h \boldsymbol{W}_o + \boldsymbol{b}_o. \]

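The combined equation shows that, although the network introduces a hidden layer, it is still equivalent to a single-layer neural network whose output-layer weight is \(\boldsymbol{W}_h\boldsymbol{W}_o\) and whose bias is \(\boldsymbol{b}_h \boldsymbol{W}_o + \boldsymbol{b}_o\). It is easy to see that adding more hidden layers does not help: this design remains equivalent to a single-layer network that contains only an output layer.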
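The equivalence can be checked numerically. The following is a minimal sketch, assuming arbitrary sizes for \(n, d, h, q\) and random parameter values (none of which come from the original text): it computes the two-layer output and the collapsed single-layer output and compares them.

```python
import torch

torch.manual_seed(0)

# Shapes follow the notation above; the concrete sizes are arbitrary choices.
n, d, h, q = 2, 4, 5, 3
X = torch.randn(n, d)
W_h, b_h = torch.randn(d, h), torch.randn(1, h)
W_o, b_o = torch.randn(h, q), torch.randn(1, q)

# Two stacked fully connected layers with no activation function in between.
H = X @ W_h + b_h
O_two_layers = H @ W_o + b_o

# The equivalent single layer read off from the combined equation.
W_single = W_h @ W_o
b_single = b_h @ W_o + b_o
O_single = X @ W_single + b_single

same = torch.allclose(O_two_layers, O_single, atol=1e-4)
print(O_two_layers.shape, same)
```

The comparison uses a small tolerance because the two expressions round differently in floating point even though they are algebraically identical.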

Activation functions

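The root of the problem above is that every layer applies a linear (strictly speaking, affine) transformation, and a composition of such transformations is still a transformation of the same kind. We therefore need to introduce nonlinearity: the output of the hidden layer is passed through an activation function before it is fed to the next layer.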
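A tiny hand-built example may help to see what the nonlinearity buys. The sketch below uses hand-picked weights (an illustrative assumption, not values from the original post) with one input, two hidden units and one output. Without an activation the two layers cancel to the zero map; with ReLU on the hidden layer the same weights compute \(|x|\), which no single linear layer can represent.

```python
import torch

# Hand-picked weights (illustrative assumption): d = 1 input, h = 2 hidden units, q = 1 output.
W_h = torch.tensor([[1.0, -1.0]])    # shape (1, 2)
b_h = torch.zeros(1, 2)
W_o = torch.tensor([[1.0], [1.0]])   # shape (2, 1)
b_o = torch.zeros(1, 1)

X = torch.tensor([[-2.0], [0.0], [3.0]])

# No activation: the layers collapse to X @ (W_h @ W_o), and W_h @ W_o is 0 here.
O_linear = (X @ W_h + b_h) @ W_o + b_o

# ReLU on the hidden layer: the same weights now give relu(x) + relu(-x) = |x|.
O_relu = torch.relu(X @ W_h + b_h) @ W_o + b_o

print(O_linear.flatten().tolist())
print(O_relu.flatten().tolist())
```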

Some common activation functions:

- relu

- sigmoid

- tanh

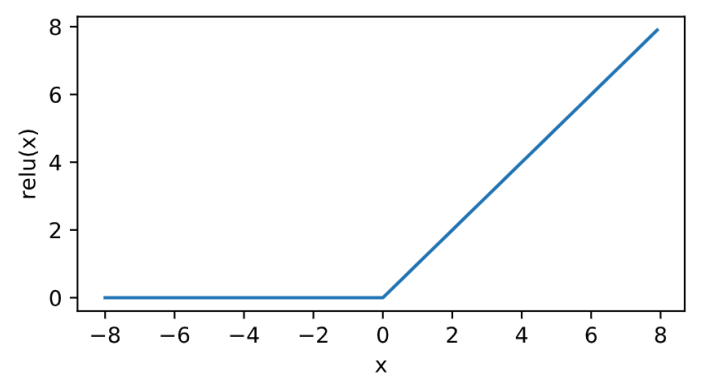

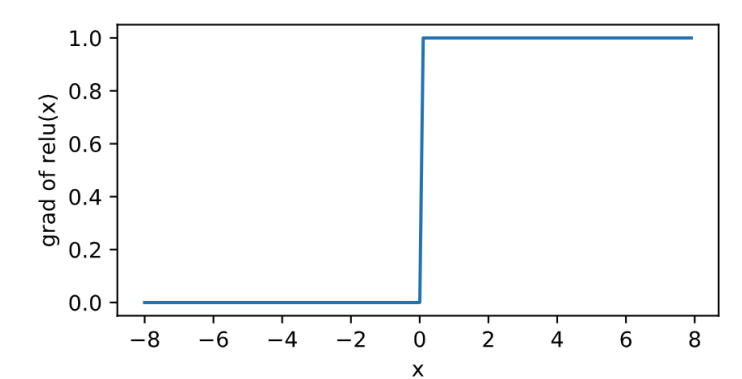

relu

\[\text{ReLU}(x) = \max(x, 0).\]

Its curve and the curve of its derivative are plotted below:

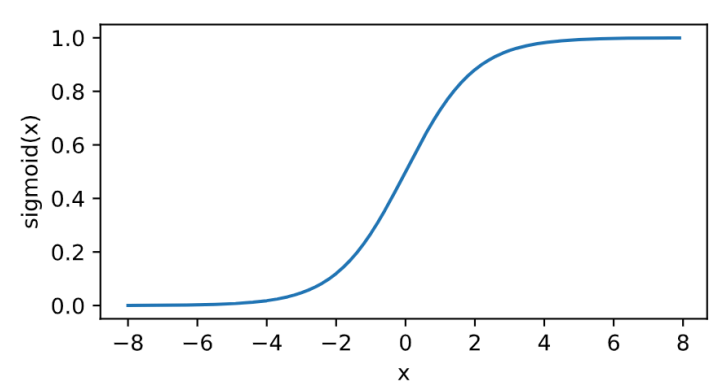

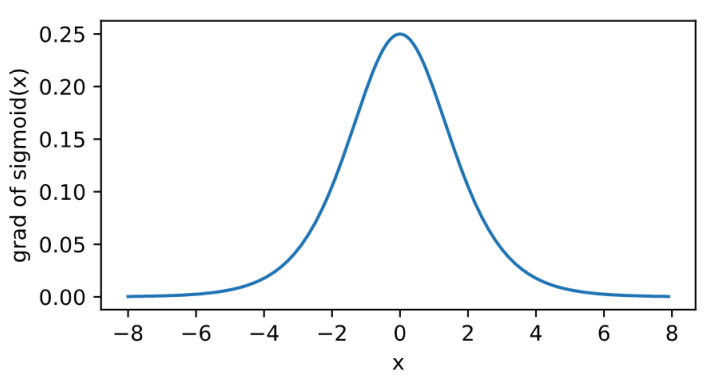

sigmoid

\[\text{sigmoid}(x) = \frac{1}{1 + \exp(-x)}.\]

Its curve and the curve of its derivative are plotted below:

tanh

\[\text{tanh}(x) = \frac{1 - \exp(-2x)}{1 + \exp(-2x)}.\]

Its curve and the curve of its derivative are plotted below:
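The values behind such curves can be reproduced with autograd. The following is a minimal sketch, not the code used to draw the original figures: `derivative` is a hypothetical helper name and the sample grid is an arbitrary choice. It evaluates the derivative of each activation function and compares sigmoid and tanh against their well-known closed forms, \(\text{sigmoid}'(x) = \text{sigmoid}(x)(1 - \text{sigmoid}(x))\) and \(\text{tanh}'(x) = 1 - \text{tanh}^2(x)\).

```python
import torch

# Sample points on an arbitrary grid; requires_grad lets autograd differentiate w.r.t. x.
x = torch.linspace(-4.0, 4.0, steps=9, requires_grad=True)

def derivative(fn, x):
    """Return d fn(x) / dx element-wise using autograd (hypothetical helper)."""
    (grad,) = torch.autograd.grad(fn(x).sum(), x)
    return grad

d_relu = derivative(torch.relu, x)
d_sigmoid = derivative(torch.sigmoid, x)
d_tanh = derivative(torch.tanh, x)

# Closed forms for comparison.
s = torch.sigmoid(x.detach())
t = torch.tanh(x.detach())
print(torch.allclose(d_sigmoid, s * (1 - s), atol=1e-6))
print(torch.allclose(d_tanh, 1 - t ** 2, atol=1e-6))
print(d_relu.tolist())
```

To draw the curves, the tensors can be converted with `x.detach().numpy()` and passed to a plotting library such as matplotlib.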

Implementing a multilayer perceptron from scratch

Importing the required modules

import torch
import numpy as np
import matplotlib.pylab as plt
import sys
import torchvision
import torchvision.transforms as transforms

Getting and reading the data

We again use the FashionMNIST dataset from the earlier posts.

batch_size = 256
num_workers = 4  # number of worker processes that load data in parallel

def load_data(batch_size, num_workers):
    mnist_train = torchvision.datasets.FashionMNIST(root='/home/sc/disk/keepgoing/learn_pytorch/Datasets/FashionMNIST',
                                                    train=True, download=True,
                                                    transform=transforms.ToTensor())
    mnist_test = torchvision.datasets.FashionMNIST(root='/home/sc/disk/keepgoing/learn_pytorch/Datasets/FashionMNIST',
                                                   train=False, download=True,
                                                   transform=transforms.ToTensor())
    train_iter = torch.utils.data.DataLoader(
        mnist_train, batch_size=batch_size, shuffle=True, num_workers=num_workers)
    test_iter = torch.utils.data.DataLoader(
        mnist_test, batch_size=batch_size, shuffle=False, num_workers=num_workers)
    return train_iter, test_iter

train_iter, test_iter = load_data(batch_size, num_workers)

Initializing the model parameters

Our neural network has 2 layers, so the parameters are now [W1, b1, W2, b2].


num_inputs, num_outputs, num_hiddens = 784, 10, 256  # assume the hidden layer has 256 units

W1 = torch.tensor(np.random.normal(0, 0.01, (num_inputs, num_hiddens)), dtype=torch.float)
b1 = torch.zeros(num_hiddens, dtype=torch.float)
W2 = torch.tensor(np.random.normal(0, 0.01, (num_hiddens, num_outputs)), dtype=torch.float)
b2 = torch.zeros(num_outputs, dtype=torch.float)

params = [W1, b1, W2, b2]
for param in params:
    param.requires_grad_(requires_grad=True)

Defining the model

The model needs the activation function relu and the function softmax, which converts the outputs into probabilities.

So we first define relu and softmax.
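As a quick illustration of what softmax does to a matrix of scores, here is a minimal sketch. It also shows a common safeguard that this post's own implementation does not use: subtracting each row's maximum before exponentiating, which leaves the result unchanged mathematically but avoids overflow. `stable_softmax` is a hypothetical helper name and the input values are made up.

```python
import torch

def stable_softmax(X):
    """Row-wise softmax that subtracts each row's maximum before exponentiating."""
    shifted = X - X.max(dim=1, keepdim=True).values
    X_exp = shifted.exp()
    return X_exp / X_exp.sum(dim=1, keepdim=True)

scores = torch.tensor([[1.0, 2.0, 3.0],
                       [1000.0, 1000.0, 1000.0]])

probs = stable_softmax(scores)

# The plain formula exp(x) / sum(exp(x)) overflows in float32 on the second row.
naive = scores.exp() / scores.exp().sum(dim=1, keepdim=True)

print(probs.sum(dim=1))              # every row sums to 1
print(torch.isnan(naive[1]).all())   # the naive version yields nan for large scores
```

For the small scores produced by a freshly initialized network the plain formula works fine; the shifted version only matters once scores grow large.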

Definition of relu:

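def relu(X):
    # element-wise maximum of X and a zero tensor with the same shape, dtype and device
    return torch.max(input=X, other=torch.zeros_like(X))

Usage of torch.max: when it is called with two tensors (input and other), it returns their element-wise maximum.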
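For comparison, the same operation can be written in a few other ways. This is a small sketch on a made-up input, showing that the two-tensor `torch.max`, `Tensor.clamp(min=0)` and the built-in `torch.relu` agree element by element.

```python
import torch

X = torch.tensor([[-1.5, 0.0, 2.0],
                  [3.0, -0.5, 0.0]])

via_max = torch.max(input=X, other=torch.zeros_like(X))  # element-wise maximum with 0
via_clamp = X.clamp(min=0)                                # clip negative entries to 0
via_builtin = torch.relu(X)                               # built-in ReLU

print(via_max)
```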

Definition of softmax:

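def softmax(X):  # X.shape = [number of samples, number of classes]
    X_exp = X.exp()
    partition = X_exp.sum(dim=1, keepdim=True)  # sum over dim=1, i.e. one sum per row
    # broadcasting: partition is expanded to the shape of X_exp, then divided element-wise
    return X_exp / partition

Definition of the model structure: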

def net(X):
    X = X.view((-1, num_inputs))        # flatten each image into a vector of length num_inputs
    H = relu(torch.matmul(X, W1) + b1)  # hidden layer followed by relu
    output = torch.matmul(H, W2) + b2   # output layer
    return softmax(output)

Defining the loss function

def cross_entropy(y_hat, y):
    # gather along dim=1: for each row, pick the predicted probability at index y
    y_hat_prob = y_hat.gather(1, y.view(-1, 1))
    return -torch.log(y_hat_prob)

Defining the optimizer

def sgd(params, lr, batch_size):
    for param in params:
        # note: the update goes through param.data, so autograd does not track it
        param.data -= lr * param.grad / batch_size

Training the model

Define the accuracy evaluation function

def evaluate_accuracy(data_iter, net):
    acc_sum, n = 0.0, 0
    with torch.no_grad():  # gradients are not needed for evaluation
        for X, y in data_iter:
            acc_sum += (net(X).argmax(dim=1) == y).float().sum().item()
            n += y.shape[0]
    return acc_sum / n

Training:

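- Load the data

- Run the forward pass

- Compute the loss

- Run the backward pass to compute the gradients

- Update the parameters using the gradients

- Clear the gradients

Then load the next batch of data and repeat.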
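The steps above can be sketched on synthetic data, so that the loop runs without downloading anything. This is only an illustration under stated assumptions: the random dataset, the single linear layer, and the names `X_all`, `y_all`, `W`, `b` and `losses` are made up for the example and are not part of this post's model.

```python
import torch

torch.manual_seed(0)

# Synthetic stand-in for a dataset (assumption): 64 samples, 4 features, 3 classes.
X_all = torch.randn(64, 4)
y_all = torch.randint(0, 3, (64,))
batch_size, lr = 16, 0.1

W = torch.zeros(4, 3, requires_grad=True)
b = torch.zeros(3, requires_grad=True)

losses = []
for start in range(0, X_all.shape[0], batch_size):
    # 1. load a batch
    X = X_all[start:start + batch_size]
    y = y_all[start:start + batch_size]
    # 2. forward pass
    logits = X @ W + b
    # 3. compute the loss, summed over the batch
    log_probs = torch.log_softmax(logits, dim=1)
    l = -log_probs.gather(1, y.view(-1, 1)).sum()
    # 4. backward pass: compute the gradients
    l.backward()
    # 5. update the parameters with minibatch SGD
    with torch.no_grad():
        for param in (W, b):
            param -= lr * param.grad / batch_size
    # 6. clear the gradients before the next batch
    for param in (W, b):
        param.grad.zero_()
    losses.append(l.item() / batch_size)

print(losses)
```

Because the parameters start at zero, the first batch sees uniform predictions over the 3 classes, so its per-sample loss is \(\log 3\). If step 6 were skipped, gradients from successive batches would accumulate in `param.grad`.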

num_epochs, lr = 5, 0.1

def train():
    for epoch in range(num_epochs):
        train_l_sum, train_acc_sum, n = 0.0, 0.0, 0
        for X, y in train_iter:
            y_hat = net(X)
            l = cross_entropy(y_hat, y).sum()  # loss summed over the batch
            l.backward()                       # backward pass: compute the gradients
            sgd(params, lr, batch_size)        # update the parameters using the gradients
            for param in params:               # clear the gradients
                param.grad.data.zero_()

            train_l_sum += l.item()
            train_acc_sum += (y_hat.argmax(dim=1) == y).sum().item()
            n += y.shape[0]
        test_acc = evaluate_accuracy(test_iter, net)
        print('epoch %d, loss %.4f, train_acc %.3f,test_acc %.3f'
              % (epoch + 1, train_l_sum / n, train_acc_sum / n, test_acc))

train()

The output is as follows:

epoch 1, loss 1.0535, train_acc 0.629,test_acc 0.760

epoch 2, loss 0.6004, train_acc 0.789,test_acc 0.788

epoch 3, loss 0.5185, train_acc 0.819,test_acc 0.824

epoch 4, loss 0.4783, train_acc 0.833,test_acc 0.830

epoch 5, loss 0.4521, train_acc 0.842,test_acc 0.832

A concise implementation of the multilayer perceptron

Importing the required modules

import torch
import torch.nn as nn
import torch.nn.init as init
import numpy as np
import sys
import torchvision
import torchvision.transforms as transforms

Getting and reading the data

batch_size = 256
num_workers = 4  # number of worker processes that load data in parallel

def load_data(batch_size, num_workers):
    mnist_train = torchvision.datasets.FashionMNIST(root='/home/sc/disk/keepgoing/learn_pytorch/Datasets/FashionMNIST',
                                                    train=True, download=True,
                                                    transform=transforms.ToTensor())
    mnist_test = torchvision.datasets.FashionMNIST(root='/home/sc/disk/keepgoing/learn_pytorch/Datasets/FashionMNIST',
                                                   train=False, download=True,
                                                   transform=transforms.ToTensor())
    train_iter = torch.utils.data.DataLoader(
        mnist_train, batch_size=batch_size, shuffle=True, num_workers=num_workers)
    test_iter = torch.utils.data.DataLoader(
        mnist_test, batch_size=batch_size, shuffle=False, num_workers=num_workers)
    return train_iter, test_iter

train_iter, test_iter = load_data(batch_size, num_workers)

Defining the model and initializing the parameters

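Here we use the implementations that ship with torch.nn. Because the loss function defined later, nn.CrossEntropyLoss, already includes the softmax operation, the network returns raw scores and we no longer need to define softmax ourselves; relu is likewise replaced by nn.ReLU.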
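The claim that nn.CrossEntropyLoss contains the softmax step can be verified on a small example. The sketch below uses made-up scores and labels: it compares the built-in loss on raw scores with an explicit log-softmax followed by the negative log-likelihood of the true class, averaged over the batch.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

logits = torch.randn(4, 10)            # raw scores for 4 samples and 10 classes (made up)
targets = torch.tensor([3, 0, 9, 1])   # arbitrary class labels

# nn.CrossEntropyLoss takes raw scores and, by default, averages over the batch.
built_in = nn.CrossEntropyLoss()(logits, targets)

# The same value in two explicit steps: log-softmax, then negative log-likelihood.
log_probs = F.log_softmax(logits, dim=1)
manual = -log_probs.gather(1, targets.view(-1, 1)).mean()

print(built_in.item(), manual.item())
```

This is why the network's forward pass should return raw scores: applying softmax in the model and then passing the result to nn.CrossEntropyLoss would apply softmax twice.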

class Net(nn.Module):
    def __init__(self, num_inputs, num_outputs, num_hiddens):
        super(Net, self).__init__()
        self.l1 = nn.Linear(num_inputs, num_hiddens)
        self.relu1 = nn.ReLU()
        self.l2 = nn.Linear(num_hiddens, num_outputs)

    def forward(self, X):
        X = X.view(X.shape[0], -1)  # flatten each image into a vector
        o1 = self.relu1(self.l1(X))
        o2 = self.l2(o1)            # raw scores; softmax is applied inside the loss
        return o2

    def init_params(self):
        # draw every parameter (weights and biases) from a normal distribution with std 0.01
        for param in self.parameters():
            init.normal_(param, mean=0, std=0.01)

num_inputs, num_outputs, num_hiddens = 28*28, 10, 256
net = Net(num_inputs, num_outputs, num_hiddens)
net.init_params()

Defining the loss function

loss = nn.CrossEntropyLoss()

Defining the optimizer

optimizer = torch.optim.SGD(net.parameters(), lr=0.5)

Training the model

def evaluate_accuracy(data_iter, net):
    acc_sum, n = 0.0, 0
    with torch.no_grad():  # gradients are not needed for evaluation
        for X, y in data_iter:
            acc_sum += (net(X).argmax(dim=1) == y).float().sum().item()
            n += y.shape[0]
    return acc_sum / n

num_epochs = 5

def train():
    for epoch in range(num_epochs):
        train_l_sum, train_acc_sum, n = 0.0, 0.0, 0
        for X, y in train_iter:
            y_hat = net(X)         # forward pass
            l = loss(y_hat, y)     # compute the loss: already a scalar, the batch mean
            l.backward()           # backward pass
            optimizer.step()       # update the parameters
            optimizer.zero_grad()  # clear the gradients

            train_l_sum += l.item()
            train_acc_sum += (y_hat.argmax(dim=1) == y).sum().item()
            n += y.shape[0]
        test_acc = evaluate_accuracy(test_iter, net)
        print('epoch %d, loss %.4f, train_acc %.3f,test_acc %.3f'
              % (epoch + 1, train_l_sum / n, train_acc_sum / n, test_acc))

train()

The output is as follows:

epoch 1, loss 0.0031, train_acc 0.709,test_acc 0.785

epoch 2, loss 0.0019, train_acc 0.823,test_acc 0.831

epoch 3, loss 0.0016, train_acc 0.844,test_acc 0.830

epoch 4, loss 0.0015, train_acc 0.855,test_acc 0.854

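epoch 5, loss 0.0014, train_acc 0.866,test_acc 0.836

As you can see, the loss here is much smaller than the loss from our own implementation. This is because torch returns the average loss over the batch when it computes the loss, whereas our own loss function does not take the average.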
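The difference in scale can be reproduced on a single made-up batch. The sketch below assumes random scores and labels, and uses the `reduction` argument of nn.CrossEntropyLoss to switch between the batch mean (the default) and the batch sum, which corresponds to the from-scratch version. Passing `reduction='sum'` is an option for making the two printed losses comparable, not something the code above does.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

batch_size = 256
logits = torch.randn(batch_size, 10)            # made-up raw scores
targets = torch.randint(0, 10, (batch_size,))   # made-up labels

mean_loss = nn.CrossEntropyLoss(reduction='mean')(logits, targets)  # default: batch average
sum_loss = nn.CrossEntropyLoss(reduction='sum')(logits, targets)    # batch sum

# Dividing by the number of samples mimics `train_l_sum / n` for a single batch.
print(sum_loss.item() / batch_size)   # per-sample loss
print(mean_loss.item() / batch_size)  # smaller by a factor of batch_size
```

With the default reduction, dividing the accumulated loss by the number of batches rather than by `n` would also give a per-sample figure.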