Detailed Tutorial | Highly Available Kubernetes Monitoring with Prometheus and Thanos

阿新 • Published: 2020-09-10

This article is reposted from [Rancher Labs](https://mp.weixin.qq.com/s/E7fF0SKdrQhTESBD3cYTmw "Rancher Labs")

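## Introduction

### Why Prometheus needs high availability

Kubernetes adoption has grown several-fold over the past few years, and it is clearly the default choice for container orchestration. Over the same period, Prometheus has become a widely accepted choice for monitoring both containerized and non-containerized workloads. Monitoring is a core concern for any infrastructure, so the monitoring setup itself should be highly available and scalable enough to keep up with a growing infrastructure, especially when Kubernetes is involved.

In this tutorial we deploy a clustered Prometheus setup that not only tolerates node failures but also archives data properly for later reference. The setup is scalable enough to span multiple Kubernetes clusters under a single monitoring umbrella.

### The current approach

Most Prometheus deployments run as pods with persistent volumes and scale out through federation. However, not all data can be aggregated through federation, and as you add servers you usually need some mechanism to manage the Prometheus configuration.

### The solution

Thanos aims to solve these problems. With Thanos we can run multiple replicas of Prometheus, deduplicate data across them, and archive the data to long-term storage such as GCS or S3.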

## Implementation

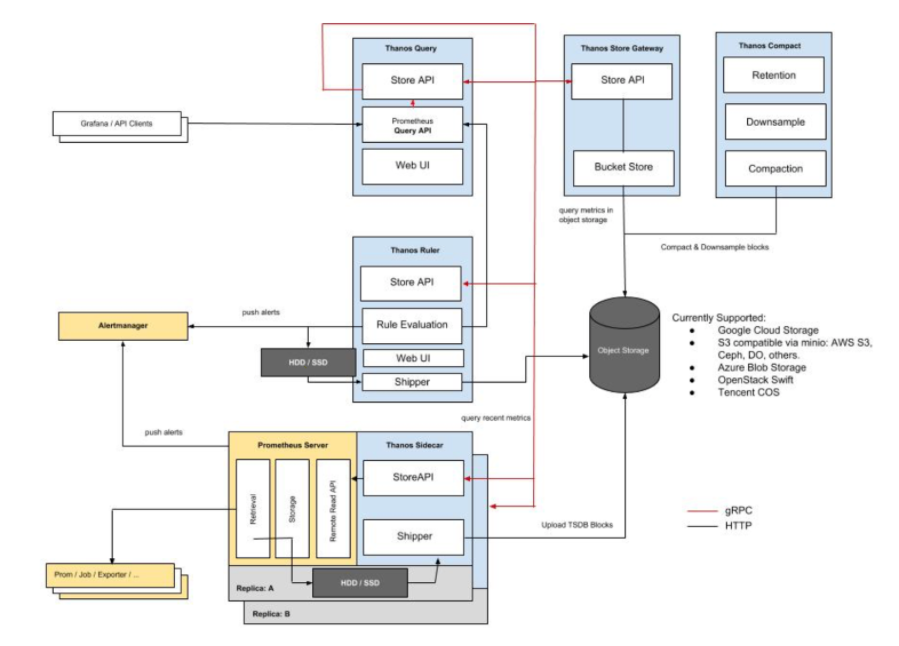

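### Thanos architecture

Image source: https://thanos.io/quick-tutorial.md/

Thanos consists of the following components:

- **Thanos Sidecar**: the main component, which runs alongside Prometheus. It reads Prometheus data and archives it to object storage. It also manages Prometheus's configuration and lifecycle. To distinguish Prometheus instances from one another, the sidecar injects external labels into the Prometheus configuration. It can run queries against the Prometheus server's PromQL interface, and it listens on the Thanos gRPC protocol, translating queries between gRPC and REST.

- **Thanos Store**: implements the Store API on top of the historical data in an object storage bucket. It acts mainly as an API gateway, so it does not need much local disk space. It joins a Thanos cluster on startup and advertises the data it can access. It keeps a small amount of information about all remote blocks on local disk and keeps it in sync with the bucket. This data can usually be deleted safely across restarts, at the cost of a longer startup time.

- **Thanos Query**: listens on HTTP and translates queries into the Thanos gRPC format. It aggregates query results from different sources and can read data from both Sidecar and Store. In an HA setup it also deduplicates the query results.

#### Run-time deduplication of HA groups

Prometheus is stateful and does not allow its database to be replicated, so running several Prometheus replicas for high availability is not straightforward. Simple load balancing does not work: after a crash, a replica may come back up, but querying it shows a small gap for the period it was down. A second replica may have been up during that time, but it can be down at some other moment (for example during a rolling restart), so load balancing across the replicas does not give a complete picture.

- Thanos Querier instead pulls data from both replicas and deduplicates those signals, filling the gaps for whoever consumes the Querier.

- Thanos Compact applies the compaction procedure of the Prometheus 2.0 storage engine to the block data stored in object storage. It is generally not semantically concurrency-safe and must be deployed as a singleton against a bucket. It is also responsible for downsampling: 5m downsampling after 40 hours and 1h downsampling after 10 days.

- Thanos Ruler does essentially the same job as Prometheus rules; the only difference is that it can communicate with the Thanos components.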
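The run-time deduplication described above can be illustrated with a minimal Python sketch. It is not the algorithm Thanos actually uses (the real Querier applies a more careful heuristic); it only shows, under simplified assumptions, how samples from two replicas that each have a gap merge into one continuous series once the `replica` label is ignored. The function name `dedup_replicas` and the sample data are hypothetical.

```python
from typing import Dict, List, Tuple

Sample = Tuple[int, float]  # (timestamp in seconds, value)


def dedup_replicas(replicas: Dict[str, List[Sample]]) -> List[Sample]:
    """Merge samples from several replicas of the same series.

    For every timestamp the first value seen is kept; replicas are visited
    in sorted name order so the result is deterministic.
    """
    merged: Dict[int, float] = {}
    for name in sorted(replicas):
        for ts, value in replicas[name]:
            merged.setdefault(ts, value)
    return sorted(merged.items())


# Hypothetical data: prometheus-0 missed the scrape at t=20,
# prometheus-1 missed the scrape at t=40.
series = {
    "prometheus-0": [(0, 1.0), (10, 2.0), (30, 4.0), (40, 5.0)],
    "prometheus-1": [(0, 1.0), (10, 2.0), (20, 3.0), (30, 4.0)],
}
result = dedup_replicas(series)
print(result)
```

Neither replica alone covers every timestamp, but the merged series does, which is the effect the Querier provides when `--query.replica-label` names the label that distinguishes the replicas.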

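## Configuration

### Prerequisites

To follow this tutorial in full, you will need:

1. Working knowledge of Kubernetes and `kubectl`.

2. A running Kubernetes cluster with at least 3 nodes (this demo uses a GKE cluster).

3. An Ingress controller and Ingress objects (this demo uses the Nginx Ingress Controller). This is not mandatory, but it is strongly recommended in order to reduce the number of external endpoints you create.

4. Credentials that the Thanos components use to access object storage (a GCS bucket in this case).

5. Two GCS buckets, named `prometheus-long-term` and `thanos-ruler`.

6. A service account with the Storage Object Admin role.

7. The key file downloaded as JSON credentials and named `thanos-gcs-credentials.json`.

8. A Kubernetes secret created from the credentials. The pods that mount it run in the `monitoring` namespace, so the secret has to live there too; create that namespace first if it does not exist yet.

`kubectl create secret generic thanos-gcs-credentials --from-file=thanos-gcs-credentials.json -n monitoring`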
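To make it clearer what the command above stores, the following Python sketch builds a roughly equivalent Secret object: `kubectl create secret generic --from-file` places the file contents, base64-encoded, under a `data` key named after the file. The helper `build_secret` and the placeholder credential content are hypothetical, and the `monitoring` namespace is an assumption based on where the Thanos pods run later in this tutorial.

```python
import base64
import json


def build_secret(name: str, namespace: str, filename: str, content: bytes) -> dict:
    """Return a Secret manifest similar to what `kubectl create secret generic` produces."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "type": "Opaque",
        "metadata": {"name": name, "namespace": namespace},
        # Secret values are stored base64-encoded, keyed by file name.
        "data": {filename: base64.b64encode(content).decode("ascii")},
    }


# Placeholder content only; a real key file is downloaded from Google Cloud.
fake_key = json.dumps({"type": "service_account"}).encode("utf-8")
secret = build_secret(
    "thanos-gcs-credentials",
    "monitoring",
    "thanos-gcs-credentials.json",
    fake_key,
)
print(json.dumps(secret, indent=2))
```

When the secret is mounted as a volume, each key becomes a file, which is why the manifests later point `GOOGLE_APPLICATION_CREDENTIALS` at `/etc/secret/thanos-gcs-credentials.json`.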

### Deploying the components

#### Deploy the Prometheus service account, `ClusterRole`, and `ClusterRoleBinding`

```
apiVersion: v1
kind: Namespace
metadata:
  name: monitoring
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: monitoring
  namespace: monitoring
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  # ClusterRole is cluster-scoped, so it takes no namespace.
  name: monitoring
rules:
- apiGroups: [""]
  resources:
  - nodes
  - nodes/proxy
  - services
  - endpoints
  - pods
  verbs: ["get", "list", "watch"]
- apiGroups: [""]
  resources:
  - configmaps
  verbs: ["get"]
- nonResourceURLs: ["/metrics"]
  verbs: ["get"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: monitoring
subjects:
- kind: ServiceAccount
  name: monitoring
  namespace: monitoring
roleRef:
  kind: ClusterRole
  name: monitoring
  apiGroup: rbac.authorization.k8s.io
```

The manifest above creates the `monitoring` namespace together with the service account, `ClusterRole`, and `ClusterRoleBinding` that Prometheus needs.

#### Deploy the Prometheus configuration ConfigMap

```
apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-server-conf
  labels:
    name: prometheus-server-conf
  namespace: monitoring
data:
  prometheus.yaml.tmpl: |-
    global:
      scrape_interval: 5s
      evaluation_interval: 5s
      external_labels:
        cluster: prometheus-ha
        # Each Prometheus has to have unique labels.
        replica: $(POD_NAME)

    rule_files:
    - /etc/prometheus/rules/*rules.yaml

    alerting:
      # We want our alerts to be deduplicated
      # from different replicas.
      alert_relabel_configs:
      - regex: replica
        action: labeldrop

      alertmanagers:
      - scheme: http
        path_prefix: /
        static_configs:
        - targets: ['alertmanager:9093']

    scrape_configs:
    - job_name: kubernetes-nodes-cadvisor
      scrape_interval: 10s
      scrape_timeout: 10s
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      kubernetes_sd_configs:
      - role: node
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      # Only for Kubernetes ^1.7.3.
      # See: https://github.com/prometheus/prometheus/issues/2916
      - target_label: __address__
        replacement: kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+)
        target_label: __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor
      metric_relabel_configs:
      - action: replace
        source_labels: [id]
        regex: '^/machine\.slice/machine-rkt\\x2d([^\\]+)\\.+/([^/]+)\.service$'
        target_label: rkt_container_name
        replacement: '${2}-${1}'
      - action: replace
        source_labels: [id]
        regex: '^/system\.slice/(.+)\.service$'
        target_label: systemd_service_name
        replacement: '${1}'

    - job_name: 'kubernetes-pods'
      kubernetes_sd_configs:
      - role: pod
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_pod_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_pod_name]
        action: replace
        target_label: kubernetes_pod_name
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scheme]
        action: replace
        target_label: __scheme__
        regex: (https?)
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      # The port comes from the pod's prometheus.io/port annotation.
      - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
        action: replace
        target_label: __address__
        regex: ([^:]+)(?::\d+)?;(\d+)
        replacement: $1:$2

    - job_name: 'kubernetes-apiservers'
      kubernetes_sd_configs:
      - role: endpoints
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
        action: keep
        regex: default;kubernetes;https

    - job_name: 'kubernetes-service-endpoints'
      kubernetes_sd_configs:
      - role: endpoints
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_service_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_service_name]
        action: replace
        target_label: kubernetes_name
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
        action: replace
        target_label: __scheme__
        regex: (https?)
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
        action: replace
        target_label: __address__
        regex: (.+)(?::\d+);(\d+)
        replacement: $1:$2
```

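The ConfigMap above creates the Prometheus configuration file template. The Thanos sidecar reads this template and generates the actual configuration file, which is then consumed by the Prometheus container running in the same pod. The `external_labels` section is essential: it is what lets the Querier deduplicate data across replicas.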
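The templating step can be pictured with a short Python sketch. It is only an illustration of the idea, not the sidecar's code: it replaces every `$(NAME)` placeholder with the value of the corresponding environment variable, so each replica ends up with its own `replica` label. The function `render_template` and the shortened template string are hypothetical.

```python
import re
from typing import Mapping

# Matches placeholders of the form $(NAME), as used in the template above.
_PLACEHOLDER = re.compile(r"\$\(([A-Za-z_0-9]+)\)")


def render_template(template: str, env: Mapping[str, str]) -> str:
    """Replace every $(NAME) placeholder with the value of env[NAME]."""

    def substitute(match):
        name = match.group(1)
        if name not in env:
            # Fail loudly rather than emit a config with a dangling placeholder.
            raise KeyError(f"unset environment variable: {name}")
        return env[name]

    return _PLACEHOLDER.sub(substitute, template)


template = (
    "global:\n"
    "  external_labels:\n"
    "    cluster: prometheus-ha\n"
    "    replica: $(POD_NAME)\n"
)
for pod in ("prometheus-0", "prometheus-1"):
    print(render_template(template, {"POD_NAME": pod}))
```

Because `POD_NAME` comes from the pod's own `metadata.name`, the rendered files differ only in the `replica` label, which is the label the Querier is later told to ignore when deduplicating.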

#### Deploy the Prometheus rules ConfigMap

This creates our alerting rules. Alerts that fire are forwarded to Alertmanager for delivery.

```
apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-rules
  labels:
    name: prometheus-rules
  namespace: monitoring
data:
  alert-rules.yaml: |-
    groups:
      - name: Deployment
        rules:
        - alert: Deployment at 0 Replicas
          annotations:
            summary: Deployment {{$labels.deployment}} in {{$labels.namespace}} is currently having no pods running
          expr: |
            sum(kube_deployment_status_replicas{pod_template_hash=""}) by (deployment,namespace) < 1
          for: 1m
          labels:
            team: devops

        - alert: HPA Scaling Limited
          annotations:
            summary: HPA named {{$labels.hpa}} in {{$labels.namespace}} namespace has reached scaling limited state
          expr: |
            (sum(kube_hpa_status_condition{condition="ScalingLimited",status="true"}) by (hpa,namespace)) == 1
          for: 1m
          labels:
            team: devops

        - alert: HPA at MaxCapacity
          annotations:
            summary: HPA named {{$labels.hpa}} in {{$labels.namespace}} namespace is running at Max Capacity
          expr: |
            ((sum(kube_hpa_spec_max_replicas) by (hpa,namespace)) - (sum(kube_hpa_status_current_replicas) by (hpa,namespace))) == 0
          for: 1m
          labels:
            team: devops

      - name: Pods
        rules:
        - alert: Container restarted
          annotations:
            summary: Container named {{$labels.container}} in {{$labels.pod}} in {{$labels.namespace}} was restarted
          expr: |
            sum(increase(kube_pod_container_status_restarts_total{namespace!="kube-system",pod_template_hash=""}[1m])) by (pod,namespace,container) > 0
          for: 0m
          labels:
            team: dev

        - alert: High Memory Usage of Container
          annotations:
            summary: Container named {{$labels.container}} in {{$labels.pod}} in {{$labels.namespace}} is using more than 75% of Memory Limit
          expr: |
            ((( sum(container_memory_usage_bytes{image!="",container_name!="POD", namespace!="kube-system"}) by (namespace,container_name,pod_name) / sum(container_spec_memory_limit_bytes{image!="",container_name!="POD",namespace!="kube-system"}) by (namespace,container_name,pod_name) ) * 100 ) < +Inf ) > 75
          for: 5m
          labels:
            team: dev

        - alert: High CPU Usage of Container
          annotations:
            summary: Container named {{$labels.container}} in {{$labels.pod}} in {{$labels.namespace}} is using more than 75% of CPU Limit
          expr: |
            ((sum(irate(container_cpu_usage_seconds_total{image!="",container_name!="POD", namespace!="kube-system"}[30s])) by (namespace,container_name,pod_name) / sum(container_spec_cpu_quota{image!="",container_name!="POD", namespace!="kube-system"} / container_spec_cpu_period{image!="",container_name!="POD", namespace!="kube-system"}) by (namespace,container_name,pod_name) ) * 100) > 75
          for: 5m
          labels:
            team: dev

      - name: Nodes
        rules:
        - alert: High Node Memory Usage
          annotations:
            summary: Node {{$labels.kubernetes_io_hostname}} has more than 80% memory used. Plan Capacity.
          expr: |
            (sum (container_memory_working_set_bytes{id="/",container_name!="POD"}) by (kubernetes_io_hostname) / sum (machine_memory_bytes{}) by (kubernetes_io_hostname) * 100) > 80
          for: 5m
          labels:
            team: devops

        - alert: High Node CPU Usage
          annotations:
            summary: Node {{$labels.kubernetes_io_hostname}} has more than 80% allocatable cpu used. Plan Capacity.
          expr: |
            (sum(rate(container_cpu_usage_seconds_total{id="/", container_name!="POD"}[1m])) by (kubernetes_io_hostname) / sum(machine_cpu_cores) by (kubernetes_io_hostname) * 100) > 80
          for: 5m
          labels:
            team: devops

        - alert: High Node Disk Usage
          annotations:
            summary: Node {{$labels.kubernetes_io_hostname}} has more than 85% disk used. Plan Capacity.
          expr: |
            (sum(container_fs_usage_bytes{device=~"^/dev/[sv]d[a-z][1-9]$",id="/",container_name!="POD"}) by (kubernetes_io_hostname) / sum(container_fs_limit_bytes{container_name!="POD",device=~"^/dev/[sv]d[a-z][1-9]$",id="/"}) by (kubernetes_io_hostname)) * 100 > 85
          for: 5m
          labels:
            team: devops
```
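The `High Memory Usage of Container` expression above chains two comparisons, which is easy to misread. The following Python sketch mimics it for a single container, under the assumption that a container without a memory limit reports a limit of 0, so the division yields `+Inf` and the `< +Inf` filter drops it. The function `memory_alert_value` is hypothetical and ignores the `for: 5m` pending period.

```python
import math
from typing import Optional


def memory_alert_value(usage_bytes: float, limit_bytes: float) -> Optional[float]:
    """Mimic the 'High Memory Usage of Container' expression for one container.

    Returns the usage percentage when the alert condition holds, else None.
    """
    # PromQL float division by zero yields +Inf rather than raising an error.
    percent = math.inf if limit_bytes == 0 else usage_bytes / limit_bytes * 100
    # `< +Inf` drops containers without a limit; `> 75` is the alert threshold.
    if percent < math.inf and percent > 75:
        return percent
    return None


print(memory_alert_value(896, 1024))  # above the threshold
print(memory_alert_value(512, 1024))  # below the threshold
print(memory_alert_value(896, 0))     # no limit set, filtered out
```

Without the `< +Inf` filter, every container that has no memory limit would match `> 75` and fire the alert permanently.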

#### Deploy the Prometheus StatefulSet

```
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  # StorageClass is cluster-scoped, so it takes no namespace.
  name: fast
provisioner: kubernetes.io/gce-pd
allowVolumeExpansion: true
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: prometheus
  namespace: monitoring
spec:
  replicas: 3
  serviceName: prometheus-service
  # apps/v1 requires an explicit selector that matches the pod template labels.
  selector:
    matchLabels:
      app: prometheus
  template:
    metadata:
      labels:
        app: prometheus
        thanos-store-api: "true"
    spec:
      serviceAccountName: monitoring
      containers:
        - name: prometheus
          image: prom/prometheus:v2.4.3
          args:
            - "--config.file=/etc/prometheus-shared/prometheus.yaml"
            - "--storage.tsdb.path=/prometheus/"
            - "--web.enable-lifecycle"
            - "--storage.tsdb.no-lockfile"
            - "--storage.tsdb.min-block-duration=2h"
            - "--storage.tsdb.max-block-duration=2h"
          ports:
            - name: prometheus
              containerPort: 9090
          volumeMounts:
            - name: prometheus-storage
              mountPath: /prometheus/
            - name: prometheus-config-shared
              mountPath: /etc/prometheus-shared/
            - name: prometheus-rules
              mountPath: /etc/prometheus/rules
        - name: thanos
          image: quay.io/thanos/thanos:v0.8.0
          args:
            - "sidecar"
            - "--log.level=debug"
            - "--tsdb.path=/prometheus"
            - "--prometheus.url=http://127.0.0.1:9090"
            - "--objstore.config={type: GCS, config: {bucket: prometheus-long-term}}"
            - "--reloader.config-file=/etc/prometheus/prometheus.yaml.tmpl"
            - "--reloader.config-envsubst-file=/etc/prometheus-shared/prometheus.yaml"
            - "--reloader.rule-dir=/etc/prometheus/rules/"
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: GOOGLE_APPLICATION_CREDENTIALS
              value: /etc/secret/thanos-gcs-credentials.json
          ports:
            - name: http-sidecar
              containerPort: 10902
            - name: grpc
              containerPort: 10901
          livenessProbe:
            httpGet:
              port: 10902
              path: /-/healthy
          readinessProbe:
            httpGet:
              port: 10902
              path: /-/ready
          volumeMounts:
            - name: prometheus-storage
              mountPath: /prometheus
            - name: prometheus-config-shared
              mountPath: /etc/prometheus-shared/
            - name: prometheus-config
              mountPath: /etc/prometheus
            - name: prometheus-rules
              mountPath: /etc/prometheus/rules
            - name: thanos-gcs-credentials
              mountPath: /etc/secret
              readOnly: false
      securityContext:
        fsGroup: 2000
        runAsNonRoot: true
        runAsUser: 1000
      volumes:
        - name: prometheus-config
          configMap:
            defaultMode: 420
            name: prometheus-server-conf
        - name: prometheus-config-shared
          emptyDir: {}
        - name: prometheus-rules
          configMap:
            name: prometheus-rules
        - name: thanos-gcs-credentials
          secret:
            secretName: thanos-gcs-credentials
  volumeClaimTemplates:
  - metadata:
      name: prometheus-storage
      namespace: monitoring
    spec:
      accessModes: [ "ReadWriteOnce" ]
      storageClassName: fast
      resources:
        requests:
          storage: 20Gi
```

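A few points about the manifest above are important to understand:

1. Prometheus is deployed as a StatefulSet with 3 replicas, and each replica dynamically provisions its own persistent volume.

2. The Prometheus configuration is generated by the Thanos sidecar container from the template file we created above.

3. Thanos handles data compaction, so we need to set `--storage.tsdb.min-block-duration=2h` and `--storage.tsdb.max-block-duration=2h`. Setting both to the same value disables Prometheus's own local compaction.

4. The Prometheus pods are labeled `thanos-store-api: "true"` so that each pod is discovered by the headless service we create next. Thanos Querier uses this headless service to query data from every Prometheus instance. We apply the same label to the Thanos Store and Thanos Ruler components, so the Querier discovers them as well and can query their metrics.

5. The path to the GCS bucket credentials is supplied through the `GOOGLE_APPLICATION_CREDENTIALS` environment variable, and the credentials file is mounted from the secret we created in the prerequisites.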
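The label-based discovery in point 4 can be sketched in a few lines of Python. This is a simplified model of equality-based label selection, not Kubernetes code; the function `select_pods` and the pod names (in particular the Querier pod name) are hypothetical.

```python
from typing import Dict, List

Labels = Dict[str, str]


def select_pods(pods: Dict[str, Labels], selector: Labels) -> List[str]:
    """Return the names of pods whose labels contain every selector key/value pair."""
    return sorted(
        name
        for name, labels in pods.items()
        if all(labels.get(key) == value for key, value in selector.items())
    )


pods = {
    "prometheus-0": {"app": "prometheus", "thanos-store-api": "true"},
    "prometheus-1": {"app": "prometheus", "thanos-store-api": "true"},
    "prometheus-2": {"app": "prometheus", "thanos-store-api": "true"},
    "thanos-store-gateway-0": {"app": "thanos-store-gateway", "thanos-store-api": "true"},
    "thanos-ruler-0": {"app": "thanos-ruler", "thanos-store-api": "true"},
    "thanos-querier-abc": {"app": "thanos-querier"},  # hypothetical pod name
}
print(select_pods(pods, {"thanos-store-api": "true"}))
```

Five pods match: the three Prometheus replicas plus the Store and Ruler pods. The Querier itself carries no `thanos-store-api` label, so it is not selected.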

#### Deploy the Prometheus services

```
apiVersion: v1
kind: Service
metadata:
  name: prometheus-0-service
  annotations:
    prometheus.io/scrape: "true"
    prometheus.io/port: "9090"
  namespace: monitoring
  labels:
    name: prometheus
spec:
  selector:
    statefulset.kubernetes.io/pod-name: prometheus-0
  ports:
    - name: prometheus
      port: 8080
      targetPort: prometheus
---
apiVersion: v1
kind: Service
metadata:
  name: prometheus-1-service
  annotations:
    prometheus.io/scrape: "true"
    prometheus.io/port: "9090"
  namespace: monitoring
  labels:
    name: prometheus
spec:
  selector:
    statefulset.kubernetes.io/pod-name: prometheus-1
  ports:
    - name: prometheus
      port: 8080
      targetPort: prometheus
---
apiVersion: v1
kind: Service
metadata:
  name: prometheus-2-service
  annotations:
    prometheus.io/scrape: "true"
    prometheus.io/port: "9090"
  namespace: monitoring
  labels:
    name: prometheus
spec:
  selector:
    statefulset.kubernetes.io/pod-name: prometheus-2
  ports:
    - name: prometheus
      port: 8080
      targetPort: prometheus
---
# This service creates an SRV record so the querier can find the Store API endpoints.
apiVersion: v1
kind: Service
metadata:
  name: thanos-store-gateway
  namespace: monitoring
spec:
  type: ClusterIP
  clusterIP: None
  ports:
    - name: grpc
      port: 10901
      targetPort: grpc
  selector:
    thanos-store-api: "true"
```

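Besides the approach above, you can also [read this article](https://mp.weixin.qq.com/s/4doQMdxEcNMiOF-O2mFIwg "點選這篇文章") to learn how to quickly deploy and configure Prometheus on Rancher.

We created a separate service for each Prometheus pod in the StatefulSet, although this is not strictly necessary; these services exist only for debugging. The purpose of the `thanos-store-gateway` headless service was explained above. Later we will expose the Prometheus services with an Ingress object.

#### Deploy Thanos Querier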

```
apiVersion: v1
kind: Namespace
metadata:
  name: monitoring
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: thanos-querier
  namespace: monitoring
  labels:
    app: thanos-querier
spec:
  replicas: 1
  selector:
    matchLabels:
      app: thanos-querier
  template:
    metadata:
      labels:
        app: thanos-querier
    spec:
      containers:
      - name: thanos
        image: quay.io/thanos/thanos:v0.8.0
        args:
        - query
        - --log.level=debug
        - --query.replica-label=replica
        - --store=dnssrv+thanos-store-gateway:10901
        ports:
        - name: http
          containerPort: 10902
        - name: grpc
          containerPort: 10901
        livenessProbe:
          httpGet:
            port: http
            path: /-/healthy
        readinessProbe:
          httpGet:
            port: http
            path: /-/ready
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: thanos-querier
  name: thanos-querier
  namespace: monitoring
spec:
  ports:
  - port: 9090
    protocol: TCP
    targetPort: http
    name: http
  selector:
    app: thanos-querier
```

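This is one of the key pieces of the Thanos deployment. Note the following:

1. The container argument `--store=dnssrv+thanos-store-gateway:10901` discovers all the components that should be queried for metric data.

2. The `thanos-querier` service provides a web interface for running PromQL queries. It can optionally deduplicate data across Prometheus clusters.

3. This is the endpoint we give Grafana as the data source for all dashboards.

#### Deploy Thanos Store Gateway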
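As a rough illustration of how a client such as Grafana, or a script, might address this endpoint, the Python sketch below builds an instant-query URL for the Prometheus-compatible HTTP API and sets the Thanos-specific `dedup` parameter. The in-cluster hostname `thanos-querier.monitoring.svc` is an assumption derived from the Service name and namespace above, and `build_query_url` is a hypothetical helper; the sketch only constructs the URL and does not send a request.

```python
from urllib.parse import urlencode


def build_query_url(base_url: str, promql: str, dedup: bool = True) -> str:
    """Build an instant-query URL for the Prometheus-compatible HTTP API."""
    params = {"query": promql, "dedup": "true" if dedup else "false"}
    return f"{base_url}/api/v1/query?{urlencode(params)}"


# Assumed in-cluster address of the thanos-querier Service defined above.
BASE = "http://thanos-querier.monitoring.svc:9090"
print(build_query_url(BASE, 'up{cluster="prometheus-ha"}'))
print(build_query_url(BASE, "up", dedup=False))
```

With `dedup=false` the same query returns one series per replica, which can be handy when checking that every Prometheus instance is actually scraping.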

```
apiVersion: v1
kind: Namespace
metadata:
  name: monitoring
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: thanos-store-gateway
  namespace: monitoring
  labels:
    app: thanos-store-gateway
spec:
  replicas: 1
  selector:
    matchLabels:
      app: thanos-store-gateway
  serviceName: thanos-store-gateway
  template:
    metadata:
      labels:
        app: thanos-store-gateway
        thanos-store-api: "true"
    spec:
      containers:
        - name: thanos
          image: quay.io/thanos/thanos:v0.8.0
          args:
          - "store"
          - "--log.level=debug"
          - "--data-dir=/data"
          - "--objstore.config={type: GCS, config: {bucket: prometheus-long-term}}"
          - "--index-cache-size=500MB"
          - "--chunk-pool-size=500MB"
          env:
            - name: GOOGLE_APPLICATION_CREDENTIALS
              value: /etc/secret/thanos-gcs-credentials.json
          ports:
          - name: http
            containerPort: 10902
          - name: grpc
            containerPort: 10901
          livenessProbe:
            httpGet:
              port: 10902
              path: /-/healthy
          readinessProbe:
            httpGet:
              port: 10902
              path: /-/ready
          volumeMounts:
            - name: thanos-gcs-credentials
              mountPath: /etc/secret
              readOnly: false
      volumes:
        - name: thanos-gcs-credentials
          secret:
            secretName: thanos-gcs-credentials
```

This creates the Store component, which serves metrics from object storage to the Querier.

#### Deploy Thanos Ruler

```
apiVersion: v1
kind: Namespace
metadata:
  name: monitoring
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: thanos-ruler-rules
  namespace: monitoring
data:
  alert_down_services.rules.yaml: |
    groups:
    - name: metamonitoring
      rules:
      - alert: PrometheusReplicaDown
        annotations:
          message: Prometheus replica in cluster {{$labels.cluster}} has disappeared from Prometheus target discovery.
        expr: |
          sum(up{cluster="prometheus-ha", instance=~".*:9090", job="kubernetes-service-endpoints"}) by (job,cluster) < 3
        for: 15s
        labels:
          severity: critical
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  labels:
    app: thanos-ruler
  name: thanos-ruler
  namespace: monitoring
spec:
  replicas: 1
  selector:
    matchLabels:
      app: thanos-ruler
  serviceName: thanos-ruler
  template:
    metadata:
      labels:
        app: thanos-ruler
        thanos-store-api: "true"
    spec:
      containers:
        - name: thanos
          image: quay.io/thanos/thanos:v0.8.0
          args:
            - rule
            - --log.level=debug
            - --data-dir=/data
            - --eval-interval=15s
            - --rule-file=/etc/thanos-ruler/*.rules.yaml
            - --alertmanagers.url=http://alertmanager:9093
            - --query=thanos-querier:9090
            - "--objstore.config={type: GCS, config: {bucket: thanos-ruler}}"
            - --label=ruler_cluster="prometheus-ha"
            - --label=replica="$(POD_NAME)"
          env:
            - name: GOOGLE_APPLICATION_CREDENTIALS
              value: /etc/secret/thanos-gcs-credentials.json
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
          ports:
            - name: http
              containerPort: 10902
            - name: grpc
              containerPort: 10901
          livenessProbe:
            httpGet:
              port: http
              path: /-/healthy
          readinessProbe:
            httpGet:
              port: http
              path: /-/ready
          volumeMounts:
            - mountPath: /etc/thanos-ruler
              name: config
            - name: thanos-gcs-credentials
              mountPath: /etc/secret
              readOnly: false
      volumes:
        - configMap:
            name: thanos-ruler-rules
          name: config
        - name: thanos-gcs-credentials
          secret:
            secretName: thanos-gcs-credentials
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: thanos-ruler
  name: thanos-ruler
  namespace: monitoring
spec:
  ports:
    - port: 9090
      protocol: TCP
      targetPort: http
      name: http
  selector:
    app: thanos-ruler
```

Now, if you start an interactive shell in the same namespace as our workloads and check which pods `thanos-store-gateway` resolves to, you will see something like this:

```
root@my-shell-95cb5df57-4q6w8:/# nslookup thanos-store-gateway
Server: 10.63.240.10
Address: 10.63.240.10#53

Name: thanos-store-gateway.monitoring.svc.cluster.local
Address: 10.60.25.2
Name: thanos-store-gateway.monitoring.svc.cluster.local
Address: 10.60.25.4
Name: thanos-store-gateway.monitoring.svc.cluster.local
Address: 10.60.30.2
Name: thanos-store-gateway.monitoring.svc.cluster.local
Address: 10.60.30.8
Name: thanos-store-gateway.monitoring.svc.cluster.local
Address: 10.60.31.2

root@my-shell-95cb5df57-4q6w8:/# exit
```

The IPs returned above correspond to our Prometheus pods, `thanos-store`, and `thanos-ruler`. This can be verified with:

```

$ kubectl get pods -o wide -l thanos-store-api="true"

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

prometheus-0 2/2 Running 0 100m 10.60.31.2 gke-demo-1-pool-1-649cbe02