ZooKeeper: Setting Up a Pseudo-Distributed Cluster and a Truly Distributed Cluster

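ZooKeeper cluster setup:

- A ZooKeeper cluster: master and slave nodes, heartbeat mechanism (election mode)

- Configure the data file myid: the values 1/2/3 correspond to server.1/2/3

- Use the zkCli.sh -server [ip]:[port] command to check whether the cluster is configured correctly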
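The myid rule in the list above can be checked mechanically. The following is a minimal sketch, not part of ZooKeeper itself: the function name check_myid is hypothetical, and it assumes zoo.cfg contains a plain `dataDir=` line without surrounding spaces.

```shell
#!/bin/bash
# check_myid (hypothetical helper): verify that the id stored in <dataDir>/myid
# has a matching server.<id> line in the given zoo.cfg.
# Prints the matching server line on success, returns 1 otherwise.
check_myid() {
    local cfg="$1" data_dir id
    # Take dataDir from the config file (first match only).
    data_dir=$(sed -n 's/^dataDir=//p' "$cfg" | head -n 1)
    if [ ! -f "$data_dir/myid" ]; then
        echo "missing $data_dir/myid" >&2
        return 1
    fi
    # Strip whitespace and the trailing newline from the id.
    id=$(tr -d '[:space:]' < "$data_dir/myid")
    if ! grep "^server\.$id=" "$cfg"; then
        echo "no server.$id entry in $cfg" >&2
        return 1
    fi
}
```

For example, `check_myid /usr/local/zookeeper00/conf/zoo.cfg` would print the server.1 line once the first node described later in this article is configured.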

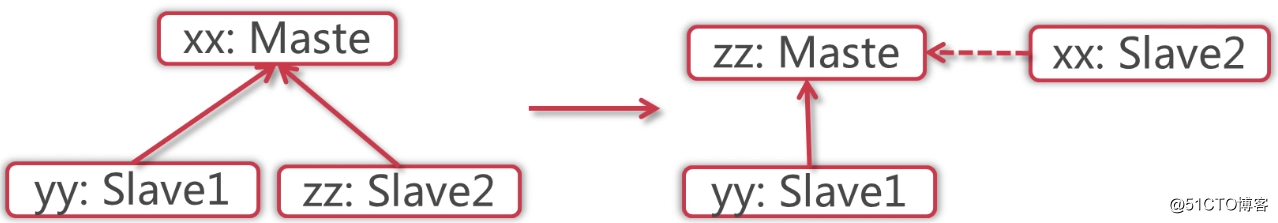

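Like most cluster architectures, a ZooKeeper cluster has a master/slave structure (in ZooKeeper's own terms, one leader and several followers). A cluster should have at least three machines. Electing a master requires a majority of the configured servers, so a three-node cluster can lose one node and still hold an election, whereas a two-node cluster cannot tolerate any failure. An election takes place when the master node goes down: the two remaining machines vote and one of them becomes the new master. When the failed master recovers, it rejoins the cluster, but as a slave node rather than as the master.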
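ZooKeeper needs a majority of the configured servers to be running before a leader can be elected. The arithmetic behind the three-machine recommendation can be sketched as follows; the function names are hypothetical and used only for illustration.

```shell
#!/bin/bash
# Majority needed for an ensemble of n voting servers: floor(n/2) + 1.
quorum_size() { echo $(( $1 / 2 + 1 )); }

# Servers that can fail while a majority is still available: n - quorum.
tolerated_failures() { echo $(( $1 - ($1 / 2 + 1) )); }

for n in 1 2 3 4 5; do
    echo "servers=$n quorum=$(quorum_size "$n") tolerated_failures=$(tolerated_failures "$n")"
done
```

With two servers the quorum is two, so losing either one stops the cluster. Three servers is the smallest size that survives one failure, and four servers tolerate no more failures than three.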

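Setting up a pseudo-distributed ZooKeeper cluster on a single machine

This section covers installing ZooKeeper in pseudo-distributed mode on a single machine. The official download address is: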

https://archive.apache.org/dist/zookeeper/

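I am using version 3.4.11, so find that version, open its directory, copy the download link of the .tar.gz file, and download it on the Linux machine with the following commands: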

[root@study-01 ~]# cd /usr/local/src/

[root@study-01 /usr/local/src]# wget https://archive.apache.org/dist/zookeeper/zookeeper-3.4.11/zookeeper-3.4.11.tar.gz

After the download completes, extract the archive into /usr/local/:

[root@study-01 /usr/local/src]# tar -zxvf zookeeper-3.4.11.tar.gz -C /usr/local/

[root@study-01 /usr/local/src]# cd ../zookeeper-3.4.11/

[root@study-01 /usr/local/zookeeper-3.4.11]# ls

bin dist-maven lib README_packaging.txt zookeeper-3.4.11.jar.asc

build.xml docs LICENSE.txt recipes zookeeper-3.4.11.jar.md5

conf ivysettings.xml NOTICE.txt src zookeeper-3.4.11.jar.sha1

contrib ivy.xml README.md zookeeper-3.4.11.jar

[root@study-01 /usr/local/zookeeper-3.4.11]#

Then rename the directory:

[root@study-01 ~]# cd /usr/local/

[root@study-01 /usr/local]# mv zookeeper-3.4.11/ zookeeper00

Next, configure the node:

[root@study-01 /usr/local]# cd zookeeper00/

[root@study-01 /usr/local/zookeeper00]# cd conf/

[root@study-01 /usr/local/zookeeper00/conf]# cp zoo_sample.cfg zoo.cfg # copy the sample configuration file shipped with ZooKeeper

[root@study-01 /usr/local/zookeeper00/conf]# vim zoo.cfg # add or change the following settings

tickTime=2000

initLimit=10

syncLimit=5

dataDir=/usr/local/zookeeper00/dataDir

dataLogDir=/usr/local/zookeeper00/dataLogDir

clientPort=2181

4lw.commands.whitelist=*

# server.<myid>=<ip>:<quorum port>:<election port>; the leader is decided by election, not by this file

server.1=192.168.190.129:2888:3888

server.2=192.168.190.129:2889:3889

server.3=192.168.190.129:2890:3890

[root@study-01 /usr/local/zookeeper00/conf]# cd ../

[root@study-01 /usr/local/zookeeper00]# mkdir {dataDir,dataLogDir}

[root@study-01 /usr/local/zookeeper00]# cd dataDir/

[root@study-01 /usr/local/zookeeper00/dataDir]# vim myid # set this node's id

1

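[root@study-01 /usr/local/zookeeper00/dataDir]#

Once this node is configured, make copies of its directory. Because this is a pseudo-distributed setup, several ZooKeeper instances have to be installed on the same machine: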

[root@study-01 /usr/local]# cp zookeeper00 zookeeper01 -rf

[root@study-01 /usr/local]# cp zookeeper00 zookeeper02 -rf

Configure zookeeper01:

[root@study-01 /usr/local]# cd zookeeper01/conf/

[root@study-01 /usr/local/zookeeper01/conf]# vim zoo.cfg # change the contents as follows

tickTime=2000

initLimit=10

syncLimit=5

dataDir=/usr/local/zookeeper01/dataDir

dataLogDir=/usr/local/zookeeper01/dataLogDir

# the client port must be changed, because every instance on this machine needs its own port

clientPort=2182

4lw.commands.whitelist=*

server.1=192.168.190.129:2888:3888

server.2=192.168.190.129:2889:3889

server.3=192.168.190.129:2890:3890

[root@study-01 /usr/local/zookeeper01/conf]# cd ../dataDir/

[root@study-01 /usr/local/zookeeper01/dataDir]# vim myid

2

[root@study-01 /usr/local/zookeeper01/dataDir]#

Configure zookeeper02:

[root@study-01 /usr/local]# cd zookeeper02/conf/

[root@study-01 /usr/local/zookeeper02/conf]# vim zoo.cfg

tickTime=2000

initLimit=10

syncLimit=5

dataDir=/usr/local/zookeeper02/dataDir

dataLogDir=/usr/local/zookeeper02/dataLogDir

# the client port must be changed, because every instance on this machine needs its own port

clientPort=2183

4lw.commands.whitelist=*

server.1=192.168.190.129:2888:3888

server.2=192.168.190.129:2889:3889

server.3=192.168.190.129:2890:3890

[root@study-01 /usr/local/zookeeper02/conf]# cd ../dataDir/

[root@study-01 /usr/local/zookeeper02/dataDir]# vim myid

3

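[root@study-01 /usr/local/zookeeper02/dataDir]#

That completes the configuration of three ZooKeeper cluster nodes on a single machine. Now let's test whether this pseudo-distributed ZooKeeper cluster runs properly: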

[root@study-01 ~]# cd /usr/local/zookeeper00/bin/

[root@study-01 /usr/local/zookeeper00/bin]# ./zkServer.sh start # start the first node

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper00/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

[root@study-01 /usr/local/zookeeper00/bin]# netstat -lntp |grep java # list the listening ports

tcp6 0 0 192.168.190.129:3888 :::* LISTEN 3191/java # port used for cluster communication (leader election)

tcp6 0 0 :::44793 :::* LISTEN 3191/java

tcp6 0 0 :::2181 :::* LISTEN 3191/java

[root@study-01 /usr/local/zookeeper00/bin]# cd ../../zookeeper01/bin/

[root@study-01 /usr/local/zookeeper01/bin]# ./zkServer.sh start # start the second node

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper01/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

[root@study-01 /usr/local/zookeeper01/bin]# cd ../../zookeeper02/bin/

[root@study-01 /usr/local/zookeeper02/bin]# ./zkServer.sh start # start the third node

[root@study-01 /usr/local/zookeeper02/bin]# netstat -lntp |grep java # list the listening ports

tcp6 0 0 192.168.190.129:2889 :::* LISTEN 3232/java

tcp6 0 0 :::48463 :::* LISTEN 3232/java

tcp6 0 0 192.168.190.129:3888 :::* LISTEN 3191/java

tcp6 0 0 192.168.190.129:3889 :::* LISTEN 3232/java

tcp6 0 0 192.168.190.129:3890 :::* LISTEN 3286/java

tcp6 0 0 :::44793 :::* LISTEN 3191/java

tcp6 0 0 :::60356 :::* LISTEN 3286/java

tcp6 0 0 :::2181 :::* LISTEN 3191/java

tcp6 0 0 :::2182 :::* LISTEN 3232/java

tcp6 0 0 :::2183 :::* LISTEN 3286/java

[root@study-01 /usr/local/zookeeper02/bin]# jps # list the Java processes

3232 QuorumPeerMain

3286 QuorumPeerMain

3191 QuorumPeerMain

3497 Jps

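[root@study-01 /usr/local/zookeeper02/bin]#

As shown above, all three nodes started successfully. Next, we connect with the client and create a znode to see whether it is synchronized to the other nodes in the cluster:

[root@study-01 /usr/local/zookeeper02/bin]# ./zkCli.sh -server localhost:2181 # connect to the first node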

[zk: localhost:2181(CONNECTED) 0] ls /

[zookeeper]

[zk: localhost:2181(CONNECTED) 1] create /data test-data

Created /data

[zk: localhost:2181(CONNECTED) 2] ls /

[zookeeper, data]

[zk: localhost:2181(CONNECTED) 3] quit

[root@study-01 /usr/local/zookeeper02/bin]# ./zkCli.sh -server localhost:2182 # connect to the second node

[zk: localhost:2182(CONNECTED) 0] ls / # the znode created on the first node is visible, so the nodes in the cluster synchronize data correctly

[zookeeper, data]

[zk: localhost:2182(CONNECTED) 1] get /data # the data is identical as well

test-data

cZxid = 0x100000002

ctime = Tue Apr 24 18:35:56 CST 2018

mZxid = 0x100000002

mtime = Tue Apr 24 18:35:56 CST 2018

pZxid = 0x100000002

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 9

numChildren = 0

[zk: localhost:2182(CONNECTED) 2] quit

[root@study-01 /usr/local/zookeeper02/bin]# ./zkCli.sh -server localhost:2183 # connect to the third node

[zk: localhost:2183(CONNECTED) 0] ls / # the third node also sees the znode created on the first node

[zookeeper, data]

[zk: localhost:2183(CONNECTED) 1] get /data # the data is identical as well

test-data

cZxid = 0x100000002

ctime = Tue Apr 24 18:35:56 CST 2018

mZxid = 0x100000002

mtime = Tue Apr 24 18:35:56 CST 2018

pZxid = 0x100000002

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 9

numChildren = 0

[zk: localhost:2183(CONNECTED) 2] quit

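[root@study-01 /usr/local/zookeeper02/bin]#

The ./zkServer.sh status command shows the state of the cluster and the role of each node. Checking several nodes one at a time is tedious, so we write a simple shell script that runs the command for all of them:

[root@study-01 ~]# vim checked.sh # script contents below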

#!/bin/bash

/usr/local/zookeeper00/bin/zkServer.sh status

/usr/local/zookeeper01/bin/zkServer.sh status

/usr/local/zookeeper02/bin/zkServer.sh status

[root@study-01 ~]# sh ./checked.sh # run the script

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper00/bin/../conf/zoo.cfg

Mode: follower # slave node

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper01/bin/../conf/zoo.cfg

Mode: leader # master node

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper02/bin/../conf/zoo.cfg

Mode: follower

[root@study-01 ~]#

At this point, the single-machine pseudo-distributed ZooKeeper cluster is set up and has been tested successfully.
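The three per-node configurations in this section differ only in the directory paths, the client port, and the myid value, so they can be generated in a loop. The sketch below is one way to do that, not the procedure used above. It writes only the conf and data directories, and it uses a scratch directory /tmp/zk-pseudo (an assumption) rather than /usr/local, so the ZooKeeper distribution itself still has to be copied into each node directory.

```shell
#!/bin/bash
# Generate zoo.cfg and myid for three pseudo-distributed nodes under $BASE.
# BASE and HOST are assumptions; override them through the environment.
BASE="${BASE:-/tmp/zk-pseudo}"
HOST="${HOST:-192.168.190.129}"

for i in 0 1 2; do
    node="$BASE/zookeeper0$i"
    mkdir -p "$node/conf" "$node/dataDir" "$node/dataLogDir"

    # myid must equal the N of this node's server.N line.
    echo $((i + 1)) > "$node/dataDir/myid"

    # Each instance gets its own client port: 2181, 2182, 2183.
    cat > "$node/conf/zoo.cfg" <<EOF
tickTime=2000
initLimit=10
syncLimit=5
dataDir=$node/dataDir
dataLogDir=$node/dataLogDir
clientPort=$((2181 + i))
4lw.commands.whitelist=*
server.1=$HOST:2888:3888
server.2=$HOST:2889:3889
server.3=$HOST:2890:3890
EOF
done
```

The server.N lines are identical in every file. Only dataDir, dataLogDir, clientPort, and myid change per node, which matches the manual edits shown earlier.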

Setting up a distributed ZooKeeper cluster

Next, we use three virtual machines to build a truly distributed ZooKeeper cluster. Their IP addresses are:

- 192.168.190.128

- 192.168.190.129

- 192.168.190.130

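Note: all three machines must have a Java runtime environment. The firewall must either be turned off (or have its rules flushed) or, if you prefer to keep it running, be configured with rules that allow the ZooKeeper ports.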
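If the firewall stays on, the ports used in this article have to be opened on every machine. The article does not say which firewall is in use. The sketch below assumes firewalld and only prints the commands so they can be reviewed first; the function name zk_firewall_cmds is hypothetical.

```shell
#!/bin/bash
# Print (do not run) firewalld commands that open the ZooKeeper ports used here.
# Assumption: firewalld is the active firewall on the machines.
# 2181 = client port, 2888 = quorum port, 3888 = leader election port.
zk_firewall_cmds() {
    local port
    for port in 2181 2888 3888; do
        echo "firewall-cmd --permanent --add-port=${port}/tcp"
    done
    echo "firewall-cmd --reload"
}

zk_firewall_cmds
```

After reviewing the output, it could be applied as root with `zk_firewall_cmds | sh` on each of the three machines.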

First, configure the system hosts file:

[root@localhost ~]# vim /etc/hosts

192.168.190.128 zk000

192.168.190.129 zk001

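192.168.190.130 zk002

On the machine used for the pseudo-distributed experiment, delete the other ZooKeeper directories and use rsync to copy the remaining ZooKeeper directory to the other machines: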

[root@zk001 ~]# cd /usr/local/

[root@zk001 /usr/local]# rm -rf zookeeper01

[root@zk001 /usr/local]# rm -rf zookeeper02

[root@zk001 /usr/local]# mv zookeeper00/ zookeeper

[root@zk001 /usr/local]# rsync -av /usr/local/zookeeper/ 192.168.190.128:/usr/local/zookeeper/

[root@zk001 /usr/local]# rsync -av /usr/local/zookeeper/ 192.168.190.130:/usr/local/zookeeper/

Then set the environment variables on each of the three machines:

[root@zk001 ~]# vim .bash_profile # add the following lines

export ZOOKEEPER_HOME=/usr/local/zookeeper

PATH=$PATH:$HOME/bin:$JAVA_HOME/bin:$ZOOKEEPER_HOME/bin

export PATH

[root@zk001 ~]# source .bash_profile

Edit the configuration file on each machine in turn. zk000:

[root@zk000 ~]# cd /usr/local/zookeeper/conf/

[root@zk000 /usr/local/zookeeper/conf]# vim zoo.cfg

tickTime=2000

initLimit=10

syncLimit=5

dataDir=/usr/local/zookeeper/dataDir

dataLogDir=/usr/local/zookeeper/dataLogDir

clientPort=2181

4lw.commands.whitelist=*

# server.<myid>=<ip>:<quorum port>:<election port>; the leader is decided by election, not by this file

server.1=192.168.190.128:2888:3888

server.2=192.168.190.129:2888:3888

server.3=192.168.190.130:2888:3888

[root@zk000 /usr/local/zookeeper/conf]# cd ../dataDir/

[root@zk000 /usr/local/zookeeper/dataDir]# vim myid

1

[root@zk000 /usr/local/zookeeper/dataDir]#

zk001:

[root@zk001 ~]# cd /usr/local/zookeeper/conf/

[root@zk001 /usr/local/zookeeper/conf]# vim zoo.cfg

tickTime=2000

initLimit=10

syncLimit=5

dataDir=/usr/local/zookeeper/dataDir

dataLogDir=/usr/local/zookeeper/dataLogDir

clientPort=2181

4lw.commands.whitelist=*

# server.<myid>=<ip>:<quorum port>:<election port>; the leader is decided by election, not by this file

server.1=192.168.190.128:2888:3888

server.2=192.168.190.129:2888:3888

server.3=192.168.190.130:2888:3888

[root@zk001 /usr/local/zookeeper/conf]# cd ../dataDir/

[root@zk001 /usr/local/zookeeper/dataDir]# vim myid

2

[root@zk001 /usr/local/zookeeper/dataDir]#

zk002:

[root@zk002 ~]# cd /usr/local/zookeeper/conf/

[root@zk002 /usr/local/zookeeper/conf]# vim zoo.cfg

tickTime=2000

initLimit=10

syncLimit=5

dataDir=/usr/local/zookeeper/dataDir

dataLogDir=/usr/local/zookeeper/dataLogDir

clientPort=2181

4lw.commands.whitelist=*

# server.<myid>=<ip>:<quorum port>:<election port>; the leader is decided by election, not by this file

server.1=192.168.190.128:2888:3888

server.2=192.168.190.129:2888:3888

server.3=192.168.190.130:2888:3888

[root@zk002 /usr/local/zookeeper/conf]# cd ../dataDir/

[root@zk002 /usr/local/zookeeper/dataDir]# vim myid

3

[root@zk002 /usr/local/zookeeper/dataDir]#

After the configuration is done, start the ZooKeeper service on all three machines:

[root@zk000 ~]# zkServer.sh start

[root@zk001 ~]# zkServer.sh start

[root@zk002 ~]# zkServer.sh start

After a successful start, check the cluster status on the three machines:

[root@zk000 ~]# zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg

Mode: leader

[root@zk000 ~]#

[root@zk001 ~]# zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg

Mode: follower

[root@zk001 ~]#

[root@zk002 ~]# zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg

Mode: follower

[root@zk002 ~]#

Next, test whether a newly created znode is synchronized. Connect to the server on 192.168.190.128:

[root@zk000 ~]# zkCli.sh -server 192.168.190.128:2181

[zk: 192.168.190.128:2181(CONNECTED) 0] ls /

[zookeeper, data]

[zk: 192.168.190.128:2181(CONNECTED) 1] create /real-culster real-data

Created /real-culster

[zk: 192.168.190.128:2181(CONNECTED) 2] ls /

[zookeeper, data, real-culster]

[zk: 192.168.190.128:2181(CONNECTED) 3] get /real-culster

real-data

cZxid = 0x300000002

ctime = Tue Apr 24 20:48:32 CST 2018

mZxid = 0x300000002

mtime = Tue Apr 24 20:48:32 CST 2018

pZxid = 0x300000002

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 9

numChildren = 0

[zk: 192.168.190.128:2181(CONNECTED) 4] quit

Connect to the server on 192.168.190.129:

[root@zk000 ~]# zkCli.sh -server 192.168.190.129:2181

[zk: 192.168.190.129:2181(CONNECTED) 0] ls /

[zookeeper, data, real-culster]

[zk: 192.168.190.129:2181(CONNECTED) 1] get /real-culster

real-data

cZxid = 0x300000002

ctime = Tue Apr 24 20:48:32 CST 2018

mZxid = 0x300000002

mtime = Tue Apr 24 20:48:32 CST 2018

pZxid = 0x300000002

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 9

numChildren = 0

[zk: 192.168.190.129:2181(CONNECTED) 2] quit

Connect to the server on 192.168.190.130:

[root@zk000 ~]# zkCli.sh -server 192.168.190.130:2181

[zk: 192.168.190.130:2181(CONNECTED) 0] ls /

[zookeeper, data, real-culster]

[zk: 192.168.190.130:2181(CONNECTED) 1] get /real-culster

real-data

cZxid = 0x300000002

ctime = Tue Apr 24 20:48:32 CST 2018

mZxid = 0x300000002

mtime = Tue Apr 24 20:48:32 CST 2018

pZxid = 0x300000002

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 9

numChildren = 0

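[zk: 192.168.190.130:2181(CONNECTED) 2] quit

The tests above show that in a distributed ZooKeeper cluster, a znode created on any node is synchronized to the other nodes in the cluster, together with its data. Our distributed ZooKeeper cluster is now set up successfully.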
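Connecting to each server by hand gets repetitive, so the same check can be sketched as a loop. zkCli.sh accepts a command after its options and exits once the command has run. The function name check_znode and the ZKCLI variable are hypothetical, and the sketch assumes zkCli.sh is on the PATH. The client's own log lines may appear in the output alongside the znode data.

```shell
#!/bin/bash
# Read the same znode from every listed server.
# ZKCLI is overridable; by default it assumes zkCli.sh is on the PATH.
ZKCLI="${ZKCLI:-zkCli.sh}"

check_znode() {
    local path="$1"
    shift
    local server
    for server in "$@"; do
        echo "== $server"
        # Run a single "get" against this server, then exit the client.
        $ZKCLI -server "$server" get "$path"
    done
}
```

For the cluster in this article, the call would be `check_znode /real-culster 192.168.190.128:2181 192.168.190.129:2181 192.168.190.130:2181`, and every server should report the value real-data.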

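Testing cluster roles and election

So far we have only tested znode synchronization, not the election among the nodes of the cluster. In this section we test whether a slave node is elected master after the master node goes down. First, stop the ZooKeeper service on the master node: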

[root@zk001 ~]# zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg

Mode: leader

[root@zk001 ~]# zkServer.sh stop

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg

Stopping zookeeper ... STOPPED

[root@zk001 ~]#

Now check the other two machines. One of them has become the master node:

[root@zk002 ~]# zkServer.sh status # zk002 has become the master node

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg

Mode: leader

[root@zk002 ~]#

[root@zk000 ~]# zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg

Mode: follower

[root@zk000 ~]#

Then start the stopped node again. It rejoins the cluster, but as a slave rather than as the master:

[root@zk001 ~]# zkServer.sh start

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

[root@zk001 ~]# zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg

Mode: follower

[root@zk001 ~]#

zk002 is still the master and has not been replaced, so nodes in the cluster only change roles when an election takes place:

[root@zk002 ~]# zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg

Mode: leader

[root@zk002 ~]#
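When repeating this election test, it helps to reduce the status output to the role alone. The following is a minimal sketch with a hypothetical helper name, zk_mode, which extracts the value of the Mode line from text read on standard input.

```shell
#!/bin/bash
# zk_mode (hypothetical helper): print only the role from status output on stdin.
zk_mode() { sed -n 's/^Mode: *//p'; }

# Sample input copied from the zkServer.sh status output in this article.
printf '%s\n' \
    'ZooKeeper JMX enabled by default' \
    'Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg' \
    'Mode: leader' | zk_mode
# prints: leader
```

On a machine in the cluster, `zkServer.sh status 2>/dev/null | zk_mode` gives the local role. Assuming nc is installed, `echo srvr | nc zk000 2181 | zk_mode` queries a remote node through the srvr four-letter command, which the 4lw.commands.whitelist=* setting in zoo.cfg permits.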