Deploying the mimic release of the Ceph distributed storage system

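Ceph: an open-source distributed storage system. It provides three main services: object storage, block device storage, and a file system. The core Ceph components are Ceph OSDs, Monitors, Managers, and MDSs. A Ceph storage cluster requires at least one Ceph Monitor, one Ceph Manager, and one Ceph OSD (object storage daemon). A Ceph Metadata Server is also required when running Ceph Filesystem clients.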

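Ceph OSDs: a Ceph OSD daemon (ceph-osd) stores data, handles data replication, recovery, backfilling, and rebalancing, and provides monitoring information to the Ceph Monitors by checking other OSD daemons for a heartbeat.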

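Monitors: a Ceph Monitor (ceph-mon) maintains the maps that describe the cluster state, including the monitor map, the OSD map, the placement group (PG) map, and the CRUSH map. Ceph keeps a history (called an epoch) of every state change in the Monitors, OSDs, and PGs. Monitors are also responsible for managing authentication between daemons and clients. At least three monitors are normally required for redundancy and high availability.

Managers: a Ceph Manager daemon (ceph-mgr) keeps track of runtime metrics and the current state of the Ceph cluster, including storage utilization, current performance metrics, and system load. The Ceph Manager daemons also host Python-based plugins that manage and expose Ceph cluster information, including the web-based Ceph Manager Dashboard and a REST API. At least two managers are normally required for high availability.

MDSs: a Ceph Metadata Server (MDS) stores metadata on behalf of the Ceph file system (that is, Ceph block devices and Ceph object storage do not use MDS). Metadata servers let POSIX file system users run basic commands such as ls and find without placing a burden on the Ceph storage cluster.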

2. Preparation

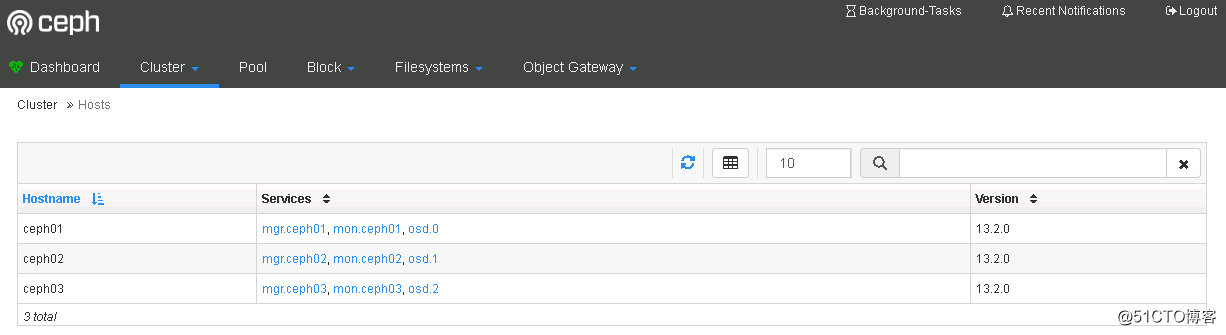

Here ceph-deploy is used to deploy a three-node Ceph cluster. The nodes are:

192.168.100.116 ceph01

192.168.100.117 ceph02

192.168.100.118 ceph03

All operations are performed on the ceph01 node.

A. Configure hosts

# cat /etc/hosts

192.168.100.116 ceph01

192.168.100.117 ceph02

192.168.100.118 ceph03
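Instead of editing the hosts file by hand, the entries can be appended in a way that is safe to re-run. The following is only a minimal sketch: the helper name `add_host_entry` and the `HOSTS_FILE` variable are assumptions introduced here for illustration and are not part of the original procedure.

```shell
# Hypothetical helper: append "IP NAME" to the hosts file only when NAME
# is not already listed, so the step can be repeated without duplicates.
HOSTS_FILE="${HOSTS_FILE:-/etc/hosts}"

add_host_entry() {
    ip="$1"
    name="$2"
    # -w matches NAME as a whole word; -q suppresses output.
    if grep -qw -- "$name" "$HOSTS_FILE" 2>/dev/null; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$name" >> "$HOSTS_FILE"
}

# Usage (as root on ceph01):
#   add_host_entry 192.168.100.116 ceph01
#   add_host_entry 192.168.100.117 ceph02
#   add_host_entry 192.168.100.118 ceph03
```

The whole-word match is deliberately simple; it would also treat a name that appears in a comment as already present.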

B. Set up passwordless SSH to the other nodes

# ssh-keygen -t rsa -P ''

# ssh-copy-id -i .ssh/id_rsa.pub [email protected]

# ssh-copy-id -i .ssh/id_rsa.pub [email protected]

C. Install Ansible

# yum -y install ansible

# cat /etc/ansible/hosts | grep -v ^# | grep -v ^$

[node]

192.168.100.117

192.168.100.118

D. Disable SELinux and the firewall

# ansible node -m copy -a 'src=/etc/hosts dest=/etc/'

# sed -i "s/SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

# ansible node -m copy -a 'src=/etc/selinux/config dest=/etc/selinux/'

# systemctl stop firewalld

# systemctl disable firewalld

# ansible node -a 'systemctl stop firewalld'

# ansible node -a 'systemctl disable firewalld'
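Editing /etc/selinux/config only changes the mode used at the next boot, so it can help to confirm the file and to switch the running system to permissive mode in the meantime. The sketch below assumes a hypothetical helper name, `selinux_config_mode`; `setenforce` and `getenforce` are the standard SELinux utilities.

```shell
# Hypothetical helper: print the SELINUX= value from a config file
# (defaults to /etc/selinux/config). Comment lines and the
# SELINUXTYPE= line do not match the pattern.
selinux_config_mode() {
    file="${1:-/etc/selinux/config}"
    sed -n 's/^SELINUX=\([a-z]*\).*$/\1/p' "$file" | tail -n 1
}

# Usage on each node, after the sed command has been applied:
#   selinux_config_mode     # prints the configured mode
#   setenforce 0            # permissive until the next reboot
#   getenforce              # prints the mode currently in effect
```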

E. Install NTP

# yum -y install ntp ntpdate ntp-doc

# systemctl start ntpdate

# systemctl start ntpd

# systemctl enable ntpd ntpdate

# ansible node -a 'yum -y install ntp ntpdate ntp-doc'

# ansible node -a 'systemctl start ntpdate'

# ansible node -a 'systemctl start ntpd'

# ansible node -a 'systemctl enable ntpdate'

# ansible node -a 'systemctl enable ntpd'

F. Install the required repositories

# wget -O /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo ### install the EPEL repository

# ansible node -m copy -a 'src=/etc/yum.repos.d/epel.repo dest=/etc/yum.repos.d/'

# yum -y install yum-plugin-priorities

# yum -y install snappy leveldb gdisk python-argparse gperftools-libs

# rpm --import 'https://mirrors.aliyun.com/ceph/keys/release.asc'

# vim /etc/yum.repos.d/ceph.repo ### add the Aliyun Ceph repository

[Ceph]

name=Ceph packages for $basearch

baseurl=https://mirrors.aliyun.com/ceph/rpm-mimic/el7/$basearch

enabled=1

gpgcheck=1

priority=1

gpgkey=https://mirrors.aliyun.com/ceph/keys/release.asc

[Ceph-noarch]

name=Ceph noarch packages

baseurl=https://mirrors.aliyun.com/ceph/rpm-mimic/el7/noarch

enabled=1

gpgcheck=1

priority=1

gpgkey=https://mirrors.aliyun.com/ceph/keys/release.asc

[ceph-source]

name=Ceph source packages

baseurl=https://mirrors.aliyun.com/ceph/rpm-mimic/el7/SRPMS

enabled=1

gpgcheck=1

priority=1

gpgkey=https://mirrors.aliyun.com/ceph/keys/release.asc

# yum makecache
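The repository file can also be written without an interactive editor, which makes the step repeatable. This is a sketch under stated assumptions: the function name `write_ceph_repo` and its arguments are hypothetical, while the mirror URLs and repository sections are the ones used in this step.

```shell
# Hypothetical helper: write the Ceph yum repository file for one release.
# Arguments: RELEASE (for example mimic) and an optional output path.
write_ceph_repo() {
    release="$1"
    out="${2:-/etc/yum.repos.d/ceph.repo}"
    base="https://mirrors.aliyun.com/ceph"
    # \$basearch is escaped so that yum, not the shell, expands it.
    cat > "$out" <<EOF
[Ceph]
name=Ceph packages for \$basearch
baseurl=${base}/rpm-${release}/el7/\$basearch
enabled=1
gpgcheck=1
priority=1
gpgkey=${base}/keys/release.asc

[Ceph-noarch]
name=Ceph noarch packages
baseurl=${base}/rpm-${release}/el7/noarch
enabled=1
gpgcheck=1
priority=1
gpgkey=${base}/keys/release.asc

[ceph-source]
name=Ceph source packages
baseurl=${base}/rpm-${release}/el7/SRPMS
enabled=1
gpgcheck=1
priority=1
gpgkey=${base}/keys/release.asc
EOF
}

# Usage:
#   write_ceph_repo mimic && yum makecache
```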

3. Deploy the Ceph cluster

A. Install ceph-deploy

# yum -y install ceph-deploy

B. Start a new Ceph cluster (the official documentation recommends creating a dedicated user for deploying the cluster)

# mkdir /etc/ceph && cd /etc/ceph

# ceph-deploy new ceph{01,02,03}

# ls

ceph.conf ceph-deploy-ceph.log ceph.log ceph.mon.keyring

# ceph-deploy new --cluster-network 192.168.100.0/24 --public-network 192.168.100.0/24 ceph{01,02,03}

C. Deploy the mimic release of Ceph and inspect the configuration file

# ceph-deploy install --release mimic ceph{01,02,03}

# ceph --version

ceph version 13.2.0 (79a10589f1f80dfe21e8f9794365ed98143071c4) mimic (stable)

# ls

ceph.conf ceph-deploy-ceph.log ceph.log ceph.mon.keyring rbdmap

# cat ceph.conf

[global]

fsid = afd8b41f-f9fa-41da-85dc-e68a1612eba9

public_network = 192.168.100.0/24

cluster_network = 192.168.100.0/24

mon_initial_members = ceph01, ceph02, ceph03

mon_host = 192.168.100.116,192.168.100.117,192.168.100.118

auth_cluster_required = cephx

auth_service_required = cephx

auth_client_required = cephx

D. Deploy the initial monitors

# ceph-deploy mon create-initial

# ls

ceph.bootstrap-mds.keyring ceph.bootstrap-osd.keyring ceph.client.admin.keyring ceph-deploy-ceph.log ceph.mon.keyring ceph.bootstrap-mgr.keyring ceph.bootstrap-rgw.keyring ceph.conf ceph.log rbdmap

E. Check cluster health

# ceph health

HEALTH_OK
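Right after the monitors are created the cluster may need a moment to settle, so a script can poll `ceph health` instead of checking once. The following is a minimal sketch; the function name `wait_for_health_ok` and its default retry count and interval are assumptions made for illustration.

```shell
# Hypothetical helper: poll `ceph health` until it reports HEALTH_OK or
# the retry budget runs out. Returns 0 on success and 1 on timeout.
wait_for_health_ok() {
    retries="${1:-30}"    # number of attempts (assumed default)
    interval="${2:-2}"    # seconds between attempts (assumed default)
    i=0
    status=""
    while [ "$i" -lt "$retries" ]; do
        status="$(ceph health 2>/dev/null)"
        case "$status" in
            HEALTH_OK*) echo "$status"; return 0 ;;
        esac
        i=$((i + 1))
        sleep "$interval"
    done
    echo "timed out; last status: ${status:-unknown}" >&2
    return 1
}

# Usage:
#   wait_for_health_ok 60 5 || exit 1
```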

# ceph -s

cluster:

id: afd8b41f-f9fa-41da-85dc-e68a1612eba9

health: HEALTH_OK

services:

mon: 3 daemons, quorum ceph01,ceph02,ceph03

mgr: no daemons active

osd: 0 osds: 0 up, 0 in

data:

pools: 0 pools, 0 pgs

objects: 0 objects, 0 B

usage: 0 B used, 0 B / 0 B avail

pgs:

F. Copy the admin keyring to every node

# ceph-deploy admin ceph{01,02,03}

# ansible node -a 'ls /etc/ceph/'

192.168.100.117 | SUCCESS | rc=0 >>

ceph.client.admin.keyring

ceph.conf

rbdmap

tmpUxAfBs

192.168.100.118 | SUCCESS | rc=0 >>

ceph.client.admin.keyring

ceph.conf

rbdmap

tmp5Sx_4n

G. Create the Ceph manager daemons

# ceph-deploy mgr create ceph{01,02,03}

# ceph -s

cluster:

id: afd8b41f-f9fa-41da-85dc-e68a1612eba9

health: HEALTH_OK

services:

mon: 3 daemons, quorum ceph01,ceph02,ceph03

mgr: ceph01(active), standbys: ceph02, ceph03

osd: 0 osds: 0 up, 0 in

data:

pools: 0 pools, 0 pgs

objects: 0 objects, 0 B

usage: 0 B used, 0 B / 0 B avail

pgs:

H. Create the OSDs

# ceph-deploy osd create --data /dev/sdb ceph01

# ceph-deploy osd create --data /dev/sdb ceph02

# ceph-deploy osd create --data /dev/sdb ceph03
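When every node uses the same data device, the three commands can be folded into a loop. The sketch below is illustrative only: the wrapper name `create_osds` and the `DRY_RUN` switch are assumptions added here, and the `ceph-deploy osd create --data` invocation is the one shown in this step.

```shell
# Hypothetical wrapper: create one OSD per node on the same device.
# With DRY_RUN=1 the commands are printed instead of executed.
create_osds() {
    device="$1"
    shift
    for node in "$@"; do
        if [ "${DRY_RUN:-0}" = "1" ]; then
            echo "ceph-deploy osd create --data $device $node"
        else
            ceph-deploy osd create --data "$device" "$node" || return 1
        fi
    done
}

# Usage:
#   DRY_RUN=1 create_osds /dev/sdb ceph01 ceph02 ceph03   # preview only
#   create_osds /dev/sdb ceph01 ceph02 ceph03             # create the OSDs
```

Stopping at the first failure keeps a broken node from being silently skipped.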

# ceph -s

cluster:

id: afd8b41f-f9fa-41da-85dc-e68a1612eba9

health: HEALTH_OK

services:

mon: 3 daemons, quorum ceph01,ceph02,ceph03

mgr: ceph01(active), standbys: ceph02, ceph03

osd: 3 osds: 3 up, 3 in

data:

pools: 0 pools, 0 pgs

objects: 0 objects, 0 B

usage: 3.0 GiB used, 57 GiB / 60 GiB avail

pgs:

3. View cluster information

A. Check service status

# systemctl status ceph-mon.target

# systemctl status ceph\*.service

# systemctl status | grep ceph

● ceph01

│ └─4349 grep --color=auto ceph

├─system-ceph\x2dosd.slice

│ └─[email protected]

│ └─4131 /usr/bin/ceph-osd -f --cluster ceph --id 0 --setuser ceph --setgroup ceph

├─system-ceph\x2dmgr.slice

│ └─[email protected]

│ └─3715 /usr/bin/ceph-mgr -f --cluster ceph --id ceph01 --setuser ceph --setgroup ceph

├─system-ceph\x2dmon.slice

│ └─[email protected]

│ └─3219 /usr/bin/ceph-mon -f --cluster ceph --id ceph01 --setuser ceph --setgroup ceph

B. Check Ceph storage usage

# ceph df

GLOBAL:

SIZE AVAIL RAW USED %RAW USED

60 GiB 57 GiB 3.0 GiB 5.01

POOLS:

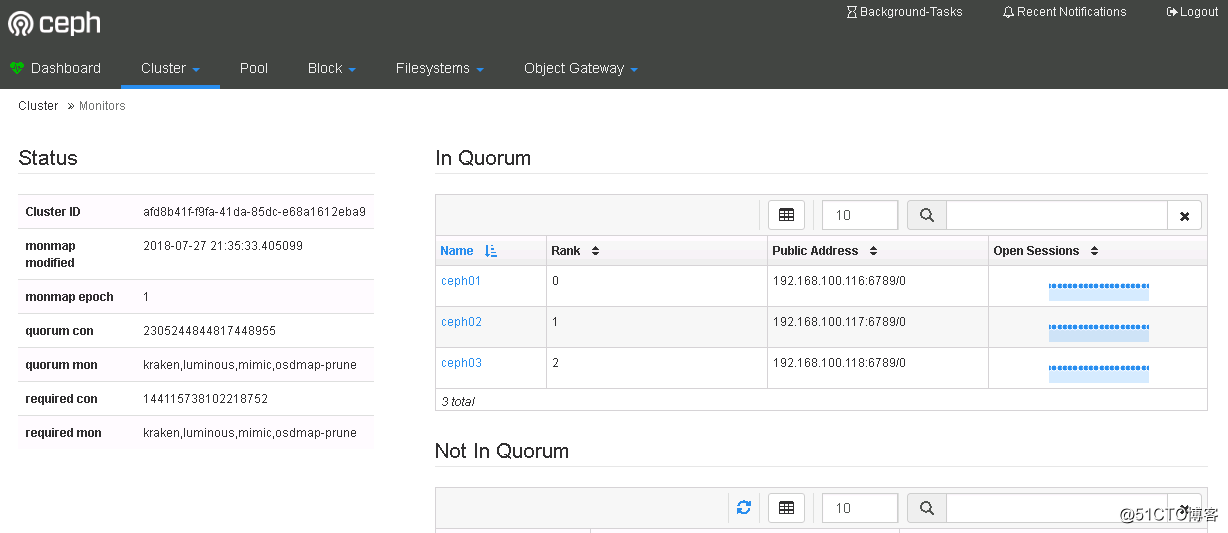

NAME ID USED %USED MAX AVAIL OBJECTS

C. View monitor information

# ceph mon stat ## show monitor status

e1: 3 mons at {ceph01=192.168.100.116:6789/0,ceph02=192.168.100.117:6789/0,ceph03=192.168.100.118:6789/0}, election epoch 10, leader 0 ceph01, quorum 0,1,2 ceph01,ceph02,ceph03

# ceph quorum_status ## show the monitor election status

# ceph mon dump ## show the monitor map

dumped monmap epoch 1

epoch 1

fsid afd8b41f-f9fa-41da-85dc-e68a1612eba9

last_changed 2018-07-27 21:35:33.405099

created 2018-07-27 21:35:33.405099

0: 192.168.100.116:6789/0 mon.ceph01

1: 192.168.100.117:6789/0 mon.ceph02

2: 192.168.100.118:6789/0 mon.ceph03

# ceph daemon mon.ceph01 mon_status ## show detailed monitor status

D. View OSD information

# ceph osd stat ## show OSD status

3 osds: 3 up, 3 in

# ceph osd dump ## show the OSD map

# ceph osd perf ## show OSD latency

# ceph osd df ## show detailed disk usage for each OSD in the cluster

ID CLASS WEIGHT REWEIGHT SIZE USE AVAIL %USE VAR PGS

0 hdd 0.01949 1.00000 20 GiB 1.0 GiB 19 GiB 5.01 1.00 0

1 hdd 0.01949 1.00000 20 GiB 1.0 GiB 19 GiB 5.01 1.00 0

2 hdd 0.01949 1.00000 20 GiB 1.0 GiB 19 GiB 5.01 1.00 0

TOTAL 60 GiB 3.0 GiB 57 GiB 5.01

MIN/MAX VAR: 1.00/1.00 STDDEV: 0

# ceph osd tree ## show the OSD tree

# ceph osd getmaxosd ## show the maximum number of OSDs

max_osd = 3 in epoch 60

E. View PG information

# ceph pg dump ## show the PG map

# ceph pg stat ## show PG status

0 pgs: ; 0 B data, 3.0 GiB used, 57 GiB / 60 GiB avail

# ceph pg dump --format plain ## show statistics for all PGs in the cluster; available formats are plain (the default) and json
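The one-line summary printed by `ceph osd stat` in the OSD section is easy to check from a script. The following sketch assumes the summary has the form "N osds: N up, N in" as shown in this walkthrough; the function name `osds_all_up_in` is hypothetical.

```shell
# Hypothetical helper: succeed only when every OSD in a summary line
# such as "3 osds: 3 up, 3 in" is both up and in.
osds_all_up_in() {
    line="$1"
    total="$(printf '%s\n' "$line" | sed -n 's/^\([0-9][0-9]*\) osds:.*/\1/p')"
    up="$(printf '%s\n' "$line" | sed -n 's/.* \([0-9][0-9]*\) up.*/\1/p')"
    in_count="$(printf '%s\n' "$line" | sed -n 's/.* \([0-9][0-9]*\) in.*/\1/p')"
    # An empty cluster (0 OSDs) is treated as not ready.
    [ -n "$total" ] && [ "$total" -gt 0 ] &&
        [ "$up" = "$total" ] && [ "$in_count" = "$total" ]
}

# Usage:
#   osds_all_up_in "$(ceph osd stat)" && echo "all OSDs are up and in"
```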

4. Enable the dashboard

Enable the dashboard module with the following command:

# ceph mgr module enable dashboard

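By default, all HTTP connections to the dashboard are secured with SSL/TLS.

To get the dashboard up and running quickly, generate and install a self-signed certificate with the following built-in command:

# ceph dashboard create-self-signed-cert

Self-signed certificate created

Create a user with the administrator role:

# ceph dashboard set-login-credentials admin admin

Username and password updated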
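The admin/admin pair is convenient for a test cluster, but anything reachable by other people is better served by a random password. The following is a small sketch, not part of the original procedure; the helper name `gen_password` is an assumption.

```shell
# Hypothetical helper: print a random alphanumeric password.
# It reads 1024 random bytes, keeps only letters and digits, and
# truncates to the requested length (default 16). This yields roughly
# 250 usable characters, so it suits lengths up to a few dozen.
gen_password() {
    len="${1:-16}"
    head -c 1024 /dev/urandom | LC_ALL=C tr -dc 'A-Za-z0-9' | cut -c "1-${len}"
}

# Usage:
#   pass="$(gen_password 20)"
#   ceph dashboard set-login-credentials admin "$pass"
#   echo "dashboard password: $pass"
```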

Check the ceph-mgr services:

# ceph mgr services

{

"dashboard": "https://ceph01:8080/",

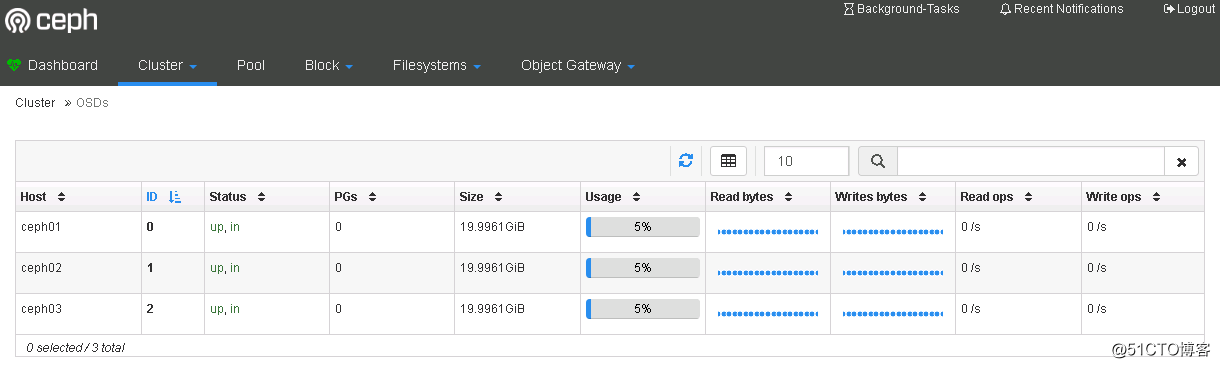

}

Open https://ceph01:8080 in a browser and log in with the username admin and the password admin to view the dashboard:

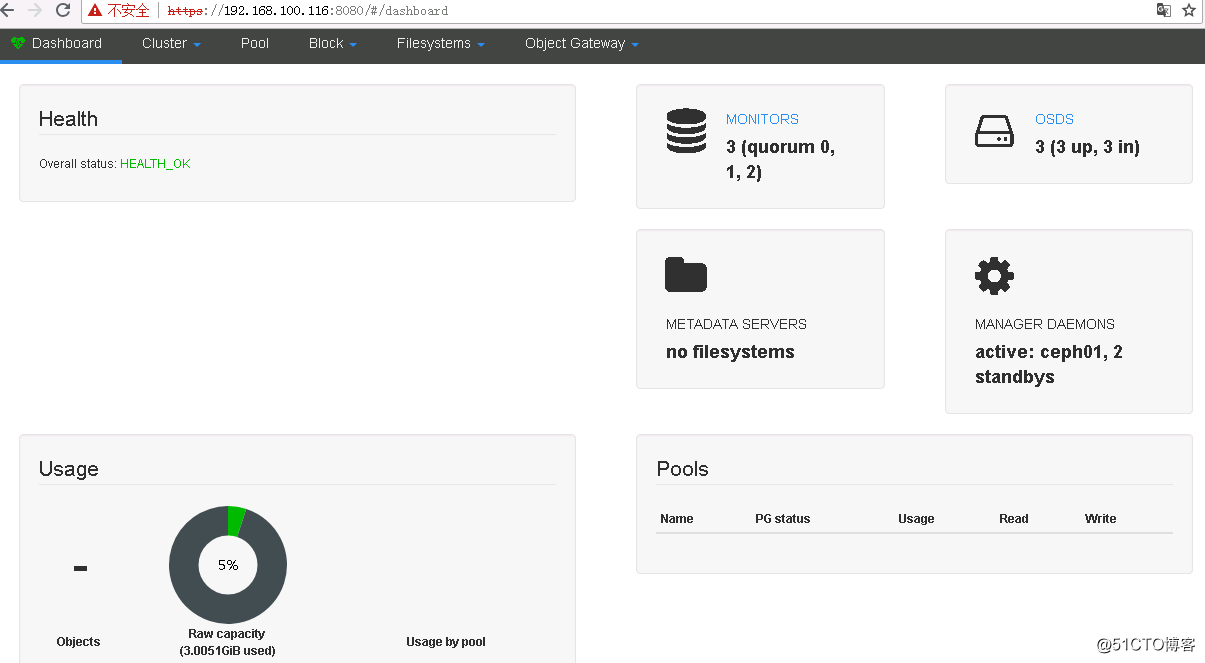

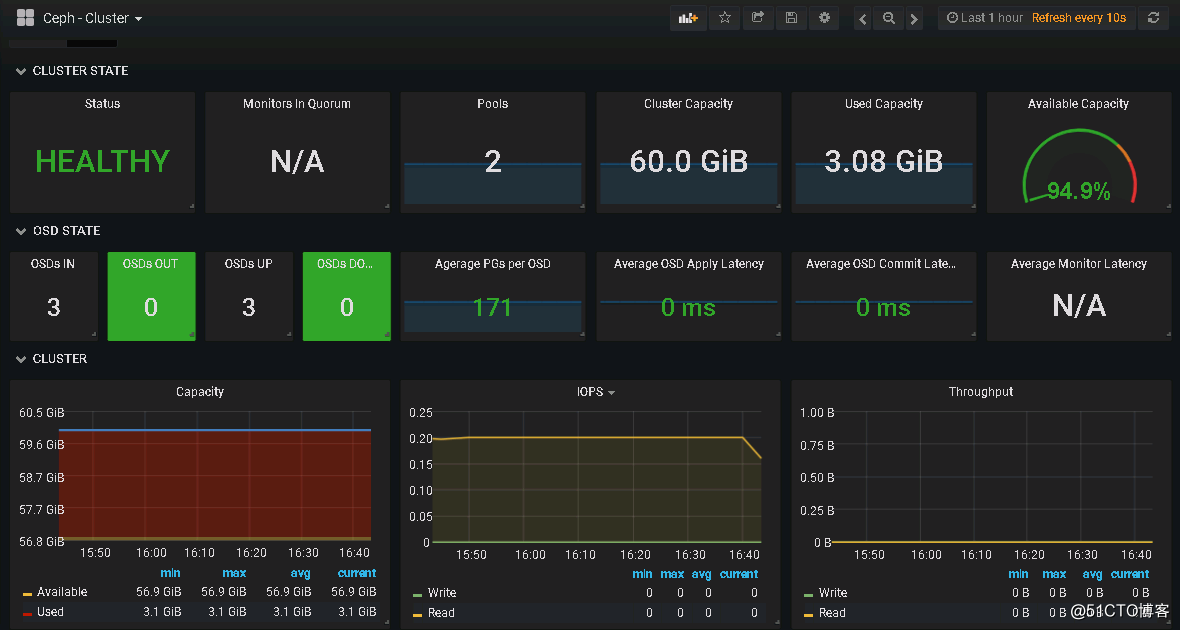

View the cluster status:

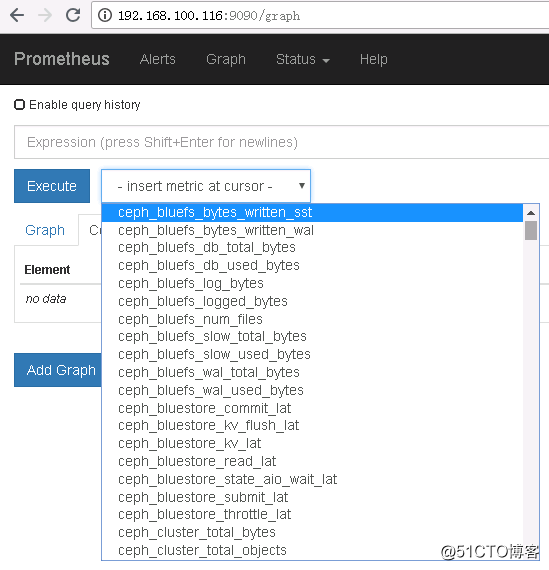

5. Enable the Prometheus module

Enable the Prometheus monitoring module:

# ceph mgr module enable prometheus

# ss -tlnp|grep 9283

LISTEN 0 5 :::9283 :::* users:(("ceph-mgr",pid=3715,fd=70))
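Beyond confirming that the port is listening, the exporter can be queried directly. The sketch below reads Prometheus text-format metrics and extracts a single value. The helper name `metric_value` is hypothetical, and the metric name `ceph_health_status` (where 0 is taken to mean HEALTH_OK) is an assumption about what the module exports, so check the actual /metrics output first.

```shell
# Hypothetical helper: read Prometheus text-format metrics on stdin and
# print the value of one unlabelled metric (first match only).
# Lines starting with "# HELP" or "# TYPE" never match because their
# first field is "#".
metric_value() {
    name="$1"
    awk -v m="$name" '$1 == m { print $2; exit }'
}

# Usage (metric name is an assumption; inspect /metrics to confirm it):
#   curl -s http://ceph01:9283/metrics | metric_value ceph_health_status
```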

# ceph mgr services

{

"dashboard": "https://ceph01:8080/",

"prometheus": "http://ceph01:9283/"

}

Install Prometheus:

# tar -zxvf prometheus-*.tar.gz

# cd prometheus-*

# cp prometheus promtool /usr/local/bin/

# prometheus --version

prometheus, version 2.3.2 (branch: HEAD, revision: 71af5e29e815795e9dd14742ee7725682fa14b7b)

build user: root@5258e0bd9cc1

build date: 20180712-14:02:52

go version: go1.10.3

# mkdir /etc/prometheus && mkdir /var/lib/prometheus

# vim /usr/lib/systemd/system/prometheus.service ### create the systemd unit

[Unit]

Description=Prometheus

Documentation=https://prometheus.io

[Service]

Type=simple

WorkingDirectory=/var/lib/prometheus

ExecStart=/usr/local/bin/prometheus --config.file /etc/prometheus/prometheus.yml --storage.tsdb.path /var/lib/prometheus/

[Install]

WantedBy=multi-user.target

# vim /etc/prometheus/prometheus.yml ## edit the configuration file

global:

  scrape_interval: 15s

  evaluation_interval: 15s

scrape_configs:

  - job_name: 'prometheus'

    static_configs:

      - targets: ['192.168.100.116:9090']

  - job_name: 'ceph'

    static_configs:

      - targets:

          - 192.168.100.116:9283

          - 192.168.100.117:9283

          - 192.168.100.118:9283
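Because YAML indentation is significant, it can be less error-prone to generate the ceph scrape job from the node list than to type it. This is a sketch only; the function name `render_ceph_job` is hypothetical, and the output is meant to sit under the `scrape_configs:` key.

```shell
# Hypothetical helper: print a Prometheus scrape job named "ceph" with
# one target per address, indented to nest under "scrape_configs:".
render_ceph_job() {
    port="$1"
    shift
    printf "  - job_name: 'ceph'\n"
    printf "    static_configs:\n"
    printf "      - targets:\n"
    for addr in "$@"; do
        printf "          - %s:%s\n" "$addr" "$port"
    done
}

# Usage:
#   render_ceph_job 9283 192.168.100.116 192.168.100.117 192.168.100.118
#   promtool check config /etc/prometheus/prometheus.yml   # validate the file
```

The promtool binary was copied to /usr/local/bin earlier in this section, so the validation step needs nothing extra.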

# systemctl daemon-reload

# systemctl start prometheus

# systemctl status prometheus

Install Grafana:

# wget https://s3-us-west-2.amazonaws.com/grafana-releases/release/grafana-5.2.2-1.x86_64.rpm

# yum -y localinstall grafana-5.2.2-1.x86_64.rpm

# systemctl start grafana-server

# systemctl status grafana-server

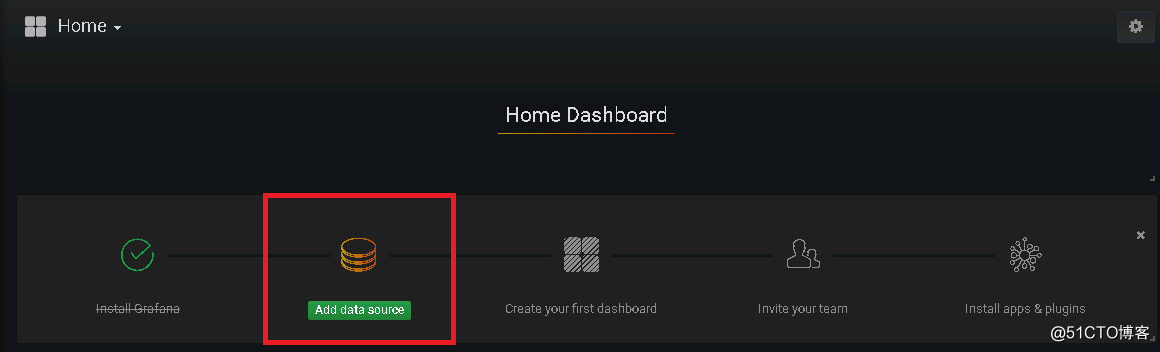

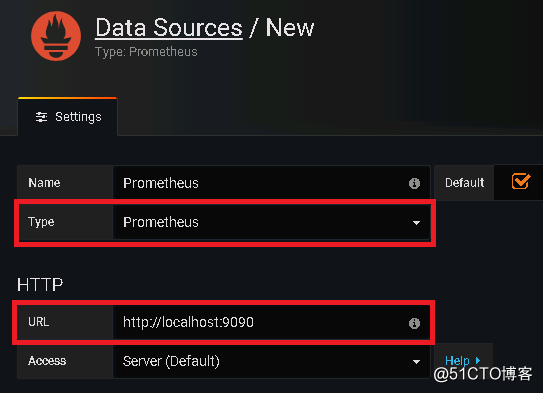

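Open Grafana in a browser (it listens on port 3000 by default) and log in with the default username and password, both admin. You will be prompted to change the default password; once that is done, add a data source:

Set the data source name, type, and URL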

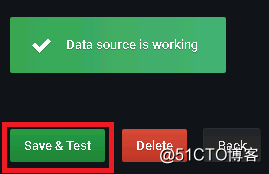

Then click "Save & Test" at the bottom. The message "Data source is working" means the data source is connected.
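As an alternative to clicking through the web UI, Grafana 5 can load data sources from provisioning files at startup. The sketch below writes such a file. The function name `write_grafana_datasource`, the data source name, and the output path are assumptions; the path in the usage comment presumes the default layout of the RPM package.

```shell
# Hypothetical helper: write a Grafana data source provisioning file
# that points at a Prometheus server.
# Arguments: data source name, Prometheus URL, output file.
write_grafana_datasource() {
    ds_name="$1"
    ds_url="$2"
    out="$3"
    cat > "$out" <<EOF
apiVersion: 1
datasources:
  - name: ${ds_name}
    type: prometheus
    access: proxy
    url: ${ds_url}
    isDefault: true
EOF
}

# Usage (path assumes the default RPM layout):
#   write_grafana_datasource Prometheus http://192.168.100.116:9090 \
#       /etc/grafana/provisioning/datasources/prometheus.yaml
#   systemctl restart grafana-server
```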

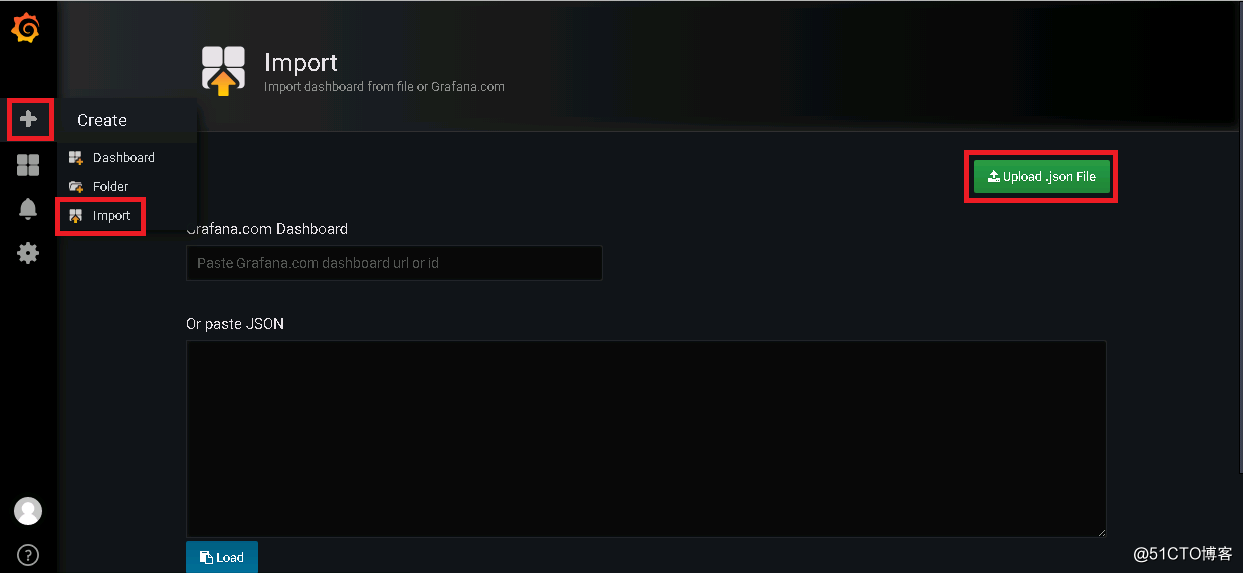

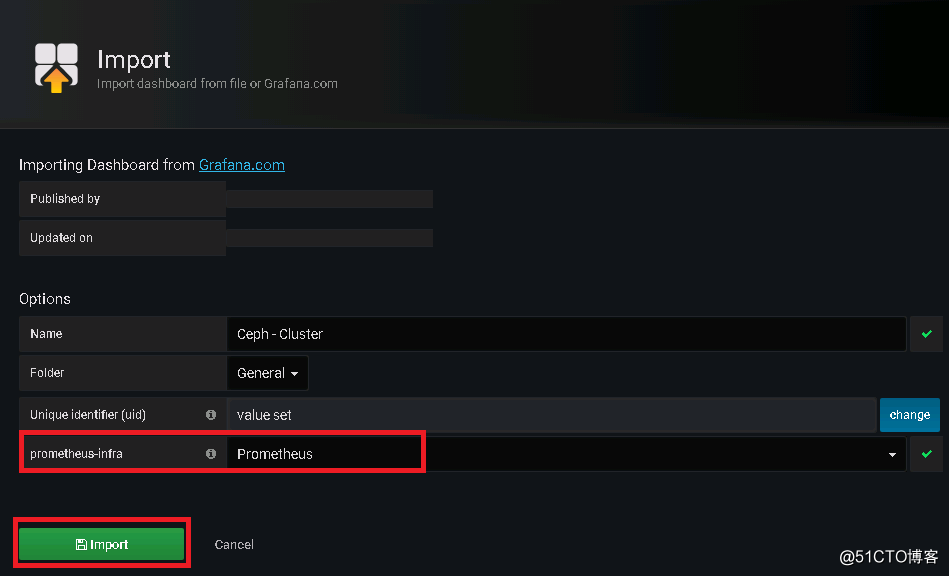

Download a suitable dashboard file from the Grafana website and import it into Grafana.

The final result looks like this:
