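Reading and Writing OSS with Spark and Using OSS Select to Accelerate Queries

Reading and Writing OSS with Spark

Building on the CDH6 cluster and configuration set up in an earlier article (see the references at the end), we now enable Spark to read and write OSS. The procedure is similar for other Spark versions and is not repeated here.

By default, Spark does not include the OSS support package on its CLASSPATH, so we need to run the commands below.

The following steps must be performed on every CDH node.

Go to the $CDH_HOME/lib/spark directory and run the following commands: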

[[email protected] spark]# cd jars/

[[email protected] jars]# ln -s ../../../jars/hadoop-aliyun-3.0.0-cdh6.0.1.jar hadoop-aliyun.jar
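Once the symlink is in place, a quick way to check that the OSS file system class is visible is to try loading it from spark-shell. The following is a minimal sketch rather than part of the original setup: the helper name `classAvailable` is hypothetical, and the class name `org.apache.hadoop.fs.aliyun.oss.AliyunOSSFileSystem` is assumed to be the one shipped in the hadoop-aliyun jar.

```scala
// Returns true when the named class can be loaded (without initializing it)
// from the current thread's context class loader.
def classAvailable(className: String): Boolean =
  try {
    Class.forName(className, false, Thread.currentThread().getContextClassLoader)
    true
  } catch {
    case _: ClassNotFoundException | _: LinkageError => false
  }

// Assumed class name of the OSS FileSystem implementation in hadoop-aliyun.
val ossFsClass = "org.apache.hadoop.fs.aliyun.oss.AliyunOSSFileSystem"

println(s"$ossFsClass available: ${classAvailable(ossFsClass)}")
```

If this prints `false` inside spark-shell, the symlink or the jar path is the first thing to re-check on that node.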

Go to the $CDH_HOME/lib/spark directory and run a query:

[[email protected] spark]# ./bin/spark-shell
WARNING: User-defined SPARK_HOME (/opt/cloudera/parcels/CDH-6.0.1-1.cdh6.0.1.p0.590678/lib/spark) overrides detected (/opt/cloudera/parcels/CDH/lib/spark).
WARNING: Running spark-class from user-defined location.
Setting default log level to "WARN".
To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).
Spark context Web UI available at http://x.x.x.x:4040
Spark context available as 'sc' (master = yarn, app id = application_1540878848110_0004).
Spark session available as 'spark'.
Welcome to
      ____              __
     / __/__  ___ _____/ /__
    _\ \/ _ \/ _ `/ __/  '_/
   /___/ .__/\_,_/_/ /_/\_\   version 2.2.0-cdh6.0.1
      /_/

Using Scala version 2.11.8 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_152)
Type in expressions to have them evaluated.
Type :help for more information.

scala> val myfile = sc.textFile("oss://{your-bucket-name}/50/store_sales")
myfile: org.apache.spark.rdd.RDD[String] = oss://{your-bucket-name}/50/store_sales MapPartitionsRDD[1] at textFile at <console>:24

scala> myfile.count()
res0: Long = 144004764

scala> myfile.map(line => line.split('|')).filter(_(0).toInt >= 2451262).take(3)
res15: Array[Array[String]] = Array(Array(2451262, 71079, 20359, 154660, 284233, 6206, 150579, 46, 512, 2160001, 84, 6.94, 11.38, 9.33, 681.83, 783.72, 582.96, 955.92, 5.09, 681.83, 101.89, 106.98, -481.07), Array(2451262, 71079, 26863, 154660, 284233, 6206, 150579, 46, 345, 2160001, 12, 67.82, 115.29, 25.36, 0.00, 304.32, 813.84, 1383.48, 21.30, 0.00, 304.32, 325.62, -509.52), Array(2451262, 71079, 55852, 154660, 284233, 6206, 150579, 46, 243, 2160001, 74, 32.41, 34.67, 1.38, 0.00, 102.12, 2398.34, 2565.58, 4.08, 0.00, 102.12, 106.20, -2296.22))

scala> myfile.map(line => line.split('|')).filter(_(0) >= "2451262").map(_.mkString("|")).saveAsTextFile("oss://{your-bucket-name}/spark-oss-test.1")
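The per-line logic of the session above (split on `|`, filter on the first column, write text back out) can be tried on plain Scala collections without a cluster. This is only a sketch: the sample lines are shortened, illustrative values, and the helper name `keepFrom` is hypothetical. Because `saveAsTextFile` writes each element's `toString`, an `RDD[Array[String]]` generally needs its fields joined back into a line before saving, which the last step shows.

```scala
// Illustrative pipe-delimited lines (first three fields only; values are made up for the sketch).
val lines = Seq(
  "2451261|71079|20359",
  "2451262|71079|26863",
  "2451300|71079|55852"
)

// Same steps as the RDD pipeline: split each line, then keep rows whose
// first column, parsed as an Int, is >= the threshold.
def keepFrom(threshold: Int)(rows: Seq[String]): Seq[Array[String]] =
  rows.map(_.split('|')).filter(fields => fields(0).toInt >= threshold)

val kept = keepFrom(2451262)(lines)

// Join the fields back into text lines, as one would before saving them.
val output = kept.map(_.mkString("|"))
output.foreach(println)
```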

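OSS Select Support in Spark

An earlier article (see the references at the end) introduces OSS Select. OSS Select is currently commercially available in the Shenzhen region, so the experiments below use the OSS endpoint oss-cn-shenzhen.aliyuncs.com. They are based on CDH6; the procedure is similar for other Spark versions.

Deployment

The following steps must be performed on every CDH node.

Download the Spark support package for OSS Select (the package is still in testing), unpack it, and place the jars it contains under $CDH_HOME/jars:

http://gosspublic.alicdn.com/hadoop-spark/spark-2.2.0-oss-select-0.1.0-SNAPSHOT.tar.gz

Go to $CDH_HOME/lib/spark/jars: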

[[email protected] jars]# pwd

/opt/cloudera/parcels/CDH/lib/spark/jars

[[email protected] jars]# rm -f aliyun-sdk-oss-2.8.3.jar

[[email protected] jars]# ln -s ../../../jars/aliyun-oss-select-spark_2.11-0.1.0-SNAPSHOT.jar aliyun-oss-select-spark_2.11-0.1.0-SNAPSHOT.jar

[[email protected] jars]# ln -s ../../../jars/aliyun-java-sdk-core-3.4.0.jar aliyun-java-sdk-core-3.4.0.jar

[[email protected] jars]# ln -s ../../../jars/aliyun-java-sdk-ecs-4.2.0.jar aliyun-java-sdk-ecs-4.2.0.jar

[[email protected] jars]# ln -s ../../../jars/aliyun-java-sdk-ram-3.0.0.jar aliyun-java-sdk-ram-3.0.0.jar

[[email protected] jars]# ln -s ../../../jars/aliyun-java-sdk-sts-3.0.0.jar aliyun-java-sdk-sts-3.0.0.jar

[[email protected] jars]# ln -s ../../../jars/aliyun-sdk-oss-3.3.0.jar aliyun-sdk-oss-3.3.0.jar

[[email protected] jars]# ln -s ../../../jars/jdom-1.1.jar jdom-1.1.jar

Comparison Test

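The tests run Spark on YARN with 4 NodeManager nodes. Each node can run at most 4 containers, and each container is allocated 1 core and 2 GB of memory.

The test data totals 630 MB and has 3 columns: name, company, and age.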

[[email protected] jars]# hadoop fs -ls oss://select-test-sz/people/

Found 10 items

-rw-rw-rw- 1 63079930 2018-10-30 17:03 oss://select-test-sz/people/part-00000

-rw-rw-rw- 1 63079930 2018-10-30 17:03 oss://select-test-sz/people/part-00001

-rw-rw-rw- 1 63079930 2018-10-30 17:05 oss://select-test-sz/people/part-00002

-rw-rw-rw- 1 63079930 2018-10-30 17:05 oss://select-test-sz/people/part-00003

-rw-rw-rw- 1 63079930 2018-10-30 17:06 oss://select-test-sz/people/part-00004

-rw-rw-rw- 1 63079930 2018-10-30 17:12 oss://select-test-sz/people/part-00005

-rw-rw-rw- 1 63079930 2018-10-30 17:14 oss://select-test-sz/people/part-00006

-rw-rw-rw- 1 63079930 2018-10-30 17:14 oss://select-test-sz/people/part-00007

-rw-rw-rw- 1 63079930 2018-10-30 17:15 oss://select-test-sz/people/part-00008

-rw-rw-rw- 1 63079930 2018-10-30 17:16 oss://select-test-sz/people/part-00009

Go to $CDH_HOME/lib/spark/ and start spark-shell:

[[email protected] spark]# ./bin/spark-shell

WARNING: User-defined SPARK_HOME (/opt/cloudera/parcels/CDH-6.0.1-1.cdh6.0.1.p0.590678/lib/spark) overrides detected (/opt/cloudera/parcels/CDH/lib/spark).

WARNING: Running spark-class from user-defined location.

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

Spark context Web UI available at http://x.x.x.x:4040

Spark context available as 'sc' (master = yarn, app id = application_1540887123331_0008).

Spark session available as 'spark'.

Welcome to
      ____              __
     / __/__  ___ _____/ /__
    _\ \/ _ \/ _ `/ __/  '_/
   /___/ .__/\_,_/_/ /_/\_\   version 2.2.0-cdh6.0.1
      /_/

Using Scala version 2.11.8 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_152)

Type in expressions to have them evaluated.

Type :help for more information.

scala> val sqlContext = spark.sqlContext

sqlContext: org.apache.spark.sql.SQLContext = [email protected]

scala> sqlContext.sql("CREATE TEMPORARY VIEW people USING com.aliyun.oss " +

| "OPTIONS (" +

| "oss.bucket 'select-test-sz', " +

| "oss.prefix 'people', " + // objects with this prefix belong to this table

| "oss.schema 'name string, company string, age long'," + // like 'column_a long, column_b string'

| "oss.data.format 'csv'," + // we only support csv now

| "oss.input.csv.header 'None'," +

| "oss.input.csv.recordDelimiter '\r\n'," +

| "oss.input.csv.fieldDelimiter ','," +

| "oss.input.csv.commentChar '#'," +

| "oss.input.csv.quoteChar '\"'," +

| "oss.output.csv.recordDelimiter '\n'," +

| "oss.output.csv.fieldDelimiter ','," +

| "oss.output.csv.quoteChar '\"'," +

| "oss.endpoint 'oss-cn-shenzhen.aliyuncs.com', " +

| "oss.accessKeyId 'Your Access Key Id', " +

| "oss.accessKeySecret 'Your Access Key Secret')")

res0: org.apache.spark.sql.DataFrame = []

scala> val sql: String = "select count(*) from people where name like 'Lora%'"

sql: String = select count(*) from people where name like 'Lora%'

scala> sqlContext.sql(sql).show()

+--------+

|count(1)|

+--------+

| 31770|

+--------+

scala> val textFile = sc.textFile("oss://select-test-sz/people/")

textFile: org.apache.spark.rdd.RDD[String] = oss://select-test-sz/people/ MapPartitionsRDD[8] at textFile at <console>:24

scala> textFile.map(line => line.split(',')).filter(_(0).startsWith("Lora")).count()

res3: Long = 31770

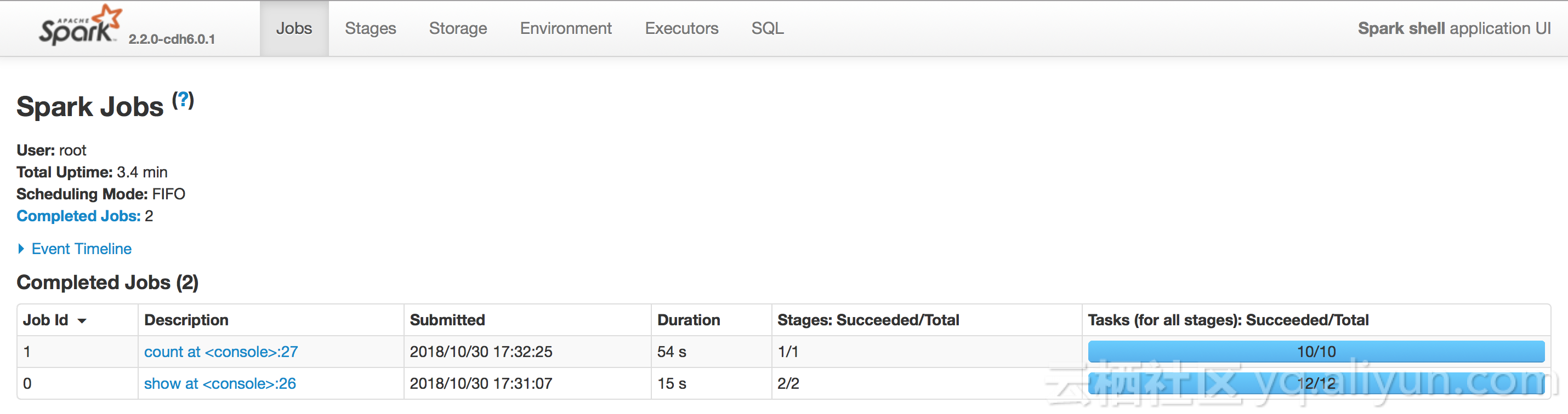

Next we compare the elapsed time with and without OSS Select. As shown above, the query that uses OSS Select takes about a quarter of the time of the query that does not.
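The article reports the timings from a screenshot and does not show how they were measured. One way to reproduce such a comparison interactively is to wrap each query in a small timing helper. The sketch below is an assumption of this kind: the helper name `timed` is hypothetical, and the summation is only a stand-in workload so that the block runs without a cluster.

```scala
// Runs `body` once and returns its result together with the elapsed
// wall-clock time in milliseconds.
def timed[T](body: => T): (T, Long) = {
  val start = System.nanoTime()
  val result = body
  val elapsedMs = (System.nanoTime() - start) / 1000000L
  (result, elapsedMs)
}

// Stand-in workload. In spark-shell this could instead be, for example,
// sqlContext.sql(sql).collect() or the textFile(...).filter(...).count() call.
val (sum, ms) = timed { (1L to 1000000L).sum }
println(s"result=$sum, elapsed=$ms ms")
```

In spark-shell, timing the OSS Select query and the plain `textFile` query with the same helper gives directly comparable numbers. Spark 2.1 and later also provide `spark.time { ... }`, which prints the elapsed time of a block.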

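Implementation of the Spark Support Package for OSS Select

We implement the Spark integration with OSS Select by extending Spark's DataSource API. By implementing PrunedFilteredScan, we can push the required columns and the filter conditions down to OSS Select for execution. The support package is still under development; the specification defined so far is as follows: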

scala> sqlContext.sql("CREATE TEMPORARY VIEW people USING com.aliyun.oss " +

| "OPTIONS (" +

| "oss.bucket 'select-test-sz', " +

| "oss.prefix 'people', " + // objects with this prefix belong to this table

| "oss.schema 'name string, company string, age long'," + // like 'column_a long, column_b string'

| "oss.data.format 'csv'," + // we only support csv now

| "oss.input.csv.header 'None'," +

| "oss.input.csv.recordDelimiter '\r\n'," +

| "oss.input.csv.fieldDelimiter ','," +

| "oss.input.csv.commentChar '#'," +

| "oss.input.csv.quoteChar '\"'," +

| "oss.output.csv.recordDelimiter '\n'," +

| "oss.output.csv.fieldDelimiter ','," +

| "oss.output.csv.quoteChar '\"'," +

| "oss.endpoint 'oss-cn-shenzhen.aliyuncs.com', " +

| "oss.accessKeyId 'Your Access Key Id', " +

| "oss.accessKeySecret 'Your Access Key Secret')")

| Field | Description |
|---|---|
| oss.bucket | Bucket that holds the data |
| oss.prefix | All objects with this prefix belong to the TEMPORARY VIEW being defined |
| oss.schema | Schema of this TEMPORARY VIEW; currently given as a string, with file-based schema definition planned |
| oss.data.format | Format of the data; CSV is supported for now, and other formats will be added over time |
| oss.input.csv.* | CSV input format parameters |
| oss.output.csv.* | CSV output format parameters |
| oss.endpoint | Endpoint of the bucket |
| oss.accessKeyId | Your Access Key Id |
| oss.accessKeySecret | Your Access Key Secret |

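Only the basic parameters are defined so far; see the OSS Select API documentation for reference. Support for the remaining parameters is in progress.

Supported filter conditions: =, <, >, <=, >=, ||, or, not, and, in, like (StringStartsWith, StringEndsWith, StringContains). For filter conditions that cannot be pushed down (such as arithmetic operations and string concatenation, which are not exposed through PrunedFilteredScan), only the required columns are pushed down to OSS Select.

OSS Select itself supports further filter conditions beyond these; see the OSS Select API documentation.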
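To make the pushdown behaviour described above concrete, here is a minimal, self-contained sketch of how pruned columns and filters could be turned into a query string. It is not the support package's actual code. The `Filter` case classes are simplified stand-ins that mirror the names in Spark's `org.apache.spark.sql.sources` package, `Unsupported`, `literal`, `compile` and `buildQuery` are hypothetical names, and the `select ... from ossobject` shape of the generated statement is an assumption about OSS Select's SQL dialect.

```scala
// Simplified stand-ins for Spark's org.apache.spark.sql.sources filters.
sealed trait Filter
case class EqualTo(attr: String, value: Any) extends Filter
case class GreaterThan(attr: String, value: Any) extends Filter
case class LessThanOrEqual(attr: String, value: Any) extends Filter
case class StringStartsWith(attr: String, prefix: String) extends Filter
case class In(attr: String, values: Seq[Any]) extends Filter
case class And(left: Filter, right: Filter) extends Filter
case class Or(left: Filter, right: Filter) extends Filter
case class Not(child: Filter) extends Filter
// Hypothetical marker for any condition this sketch cannot translate.
case class Unsupported(description: String) extends Filter

// Renders a literal: strings are single-quoted with embedded quotes doubled,
// everything else uses toString.
def literal(v: Any): String = v match {
  case s: String => "'" + s.replace("'", "''") + "'"
  case other     => other.toString
}

// Translates one filter into a WHERE fragment, or None if it cannot be pushed down.
// A compound filter is pushed down only when all of its children can be.
def compile(f: Filter): Option[String] = f match {
  case EqualTo(a, v)          => Some(s"$a = ${literal(v)}")
  case GreaterThan(a, v)      => Some(s"$a > ${literal(v)}")
  case LessThanOrEqual(a, v)  => Some(s"$a <= ${literal(v)}")
  case StringStartsWith(a, p) => Some(s"$a like ${literal(p + "%")}")
  case In(a, vs)              => Some(s"$a in (${vs.map(literal).mkString(", ")})")
  case And(l, r)              => for (x <- compile(l); y <- compile(r)) yield s"($x and $y)"
  case Or(l, r)               => for (x <- compile(l); y <- compile(r)) yield s"($x or $y)"
  case Not(c)                 => compile(c).map(x => s"not ($x)")
  case Unsupported(_)         => None
}

// Builds the pushed-down query: columns are always pruned, and only the
// translatable top-level filters (which are implicitly ANDed) are added.
def buildQuery(columns: Seq[String], filters: Seq[Filter]): String = {
  val where = filters.flatMap(compile)
  val projection = if (columns.isEmpty) "*" else columns.mkString(", ")
  val base = s"select $projection from ossobject"
  if (where.isEmpty) base else base + " where " + where.mkString(" and ")
}

val q1 = buildQuery(Seq("name"), Seq(StringStartsWith("name", "Lora")))
val q2 = buildQuery(Seq("name", "age"), Seq(Unsupported("age + 1 > 30")))
println(q1)
println(q2)
```

The second query illustrates the fallback case: when a condition cannot be translated, only the column pruning is pushed down and Spark evaluates the remaining condition itself on the returned rows.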

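Comparison on a TPC-H Query

The comparison focuses on the performance of query1.sql from TPC-H against the lineitem table. So that OSS Select can filter out more data, we change the where condition from l_shipdate <= '1998-09-16' to l_shipdate > '1997-09-16'. The test data is 2.27 GB.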

[[email protected] ~]# hadoop fs -ls oss://select-test-sz/data/lineitem.csv

-rw-rw-rw- 1 2441079322 2018-10-31 11:18 oss://select-test-sz/data/lineitem.csv

The comparison is as follows:

scala> import org.apache.spark.sql.types.{IntegerType, LongType, StringType, StructField, StructType, DoubleType}

import org.apache.spark.sql.types.{IntegerType, LongType, StringType, StructField, StructType, DoubleType}

scala> import org.apache.spark.sql.{Row, SQLContext}

import org.apache.spark.sql.{Row, SQLContext}

scala> val sqlContext = spark.sqlContext

sqlContext: org.apache.spark.sql.SQLContext = [email protected]

scala> val textFile = sc.textFile("oss://select-test-sz/data/lineitem.csv")

textFile: org.apache.spark.rdd.RDD[String] = oss://select-test-sz/data/lineitem.csv MapPartitionsRDD[1] at textFile at <console>:26

scala> val dataRdd = textFile.map(_.split('|'))

dataRdd: org.apache.spark.rdd.RDD[Array[String]] = MapPartitionsRDD[2] at map at <console>:28

scala> val schema = StructType(

| List(

| StructField("L_ORDERKEY",LongType,true),

| StructField("L_PARTKEY",LongType,true),

| StructField("L_SUPPKEY",LongType,true),

| StructField("L_LINENUMBER",IntegerType,true),

| StructField("L_QUANTITY",DoubleType,true),

| StructField("L_EXTENDEDPRICE",DoubleType,true),

| StructField("L_DISCOUNT",DoubleType,true),

| StructField("L_TAX",DoubleType,true),

| StructField("L_RETURNFLAG",StringType,true),

| StructField("L_LINESTATUS",StringType,true),

| StructField("L_SHIPDATE",StringType,true),

| StructField("L_COMMITDATE",StringType,true),

| StructField("L_RECEIPTDATE",StringType,true),

| StructField("L_SHIPINSTRUCT",StringType,true),

| StructField("L_SHIPMODE",StringType,true),

| StructField("L_COMMENT",StringType,true)

| )

| )

schema: org.apache.spark.sql.types.StructType = StructType(StructField(L_ORDERKEY,LongType,true), StructField(L_PARTKEY,LongType,true), StructField(L_SUPPKEY,LongType,true), StructField(L_LINENUMBER,IntegerType,true), StructField(L_QUANTITY,DoubleType,true), StructField(L_EXTENDEDPRICE,DoubleType,true), StructField(L_DISCOUNT,DoubleType,true), StructField(L_TAX,DoubleType,true), StructField(L_RETURNFLAG,StringType,true), StructField(L_LINESTATUS,StringType,true), StructField(L_SHIPDATE,StringType,true), StructField(L_COMMITDATE,StringType,true), StructField(L_RECEIPTDATE,StringType,true), StructField(L_SHIPINSTRUCT,StringType,true), StructField(L_SHIPMODE,StringType,true), StructField(L_COMMENT,StringType,true))

scala> val dataRowRdd = dataRdd.map(p => Row(p(0).toLong, p(1).toLong, p(2).toLong, p(3).toInt, p(4).toDouble, p(5).toDouble, p(6).toDouble, p(7).toDouble, p(8), p(9), p(10), p(11), p(12), p(13), p(14), p(15)))

dataRowRdd: org.apache.spark.rdd.RDD[org.apache.spark.sql.Row] = MapPartitionsRDD[3] at map at <console>:30

scala> val dataFrame = sqlContext.createDataFrame(dataRowRdd, schema)

dataFrame: org.apache.spark.sql.DataFrame = [L_ORDERKEY: bigint, L_PARTKEY: bigint ... 14 more fields]

scala> dataFrame.createOrReplaceTempView("lineitem")

scala> spark.sql("select l_returnflag, l_linestatus, sum(l_quantity) as sum_qty, sum(l_extendedprice) as sum_base_price, sum(l_extendedprice * (1 - l_discount)) as sum_disc_price, sum(l_extendedprice * (1 - l_discount) * (1 + l_tax)) as sum_charge, avg(l_quantity) as avg_qty, avg(l_extendedprice) as avg_price, avg(l_discount) as avg_disc, count(*) as count_order from lineitem where l_shipdate > '1997-09-16' group by l_returnflag, l_linestatus order by l_returnflag, l_linestatus").show()

+------------+------------+-----------+--------------------+--------------------+--------------------+------------------+------------------+-------------------+-----------+

|l_returnflag|l_linestatus| sum_qty| sum_base_price| sum_disc_price| sum_charge| avg_qty| avg_price| avg_disc|count_order|

+------------+------------+-----------+--------------------+--------------------+--------------------+------------------+------------------+-------------------+-----------+

| N| O|7.5697385E7|1.135107538838699...|1.078345555027154...|1.121504616321447...|25.501957856643052|38241.036487881756|0.04999335309103123| 2968297|

+------------+------------+-----------+--------------------+--------------------+--------------------+------------------+------------------+-------------------+-----------+

scala> sqlContext.sql("CREATE TEMPORARY VIEW item USING com.aliyun.oss " +

| "OPTIONS (" +

| "oss.bucket 'select-test-sz', " +

| "oss.prefix 'data', " +

| "oss.schema 'L_ORDERKEY long, L_PARTKEY long, L_SUPPKEY long, L_LINENUMBER int, L_QUANTITY double, L_EXTENDEDPRICE double, L_DISCOUNT double, L_TAX double, L_RETURNFLAG string, L_LINESTATUS string, L_SHIPDATE string, L_COMMITDATE string, L_RECEIPTDATE string, L_SHIPINSTRUCT string, L_SHIPMODE string, L_COMMENT string'," +

| "oss.data.format 'csv'," + // we only support csv now

| "oss.input.csv.header 'None'," +

| "oss.input.csv.recordDelimiter '\n'," +

| "oss.input.csv.fieldDelimiter '|'," +

| "oss.input.csv.commentChar '#'," +

| "oss.input.csv.quoteChar '\"'," +

| "oss.output.csv.recordDelimiter '\n'," +

| "oss.output.csv.fieldDelimiter ','," +

| "oss.output.csv.commentChar '#'," +

| "oss.output.csv.quoteChar '\"'," +

| "oss.endpoint 'oss-cn-shenzhen.aliyuncs.com', " +

| "oss.accessKeyId 'Your Access Key Id', " +

| "oss.accessKeySecret 'Your Access Key Secret')")

res2: org.apache.spark.sql.DataFrame = []

scala> sqlContext.sql("select l_returnflag, l_linestatus, sum(l_quantity) as sum_qty, sum(l_extendedprice) as sum_base_price, sum(l_extendedprice * (1 - l_discount)) as sum_disc_price, sum(l_extendedprice * (1 - l_discount) * (1 + l_tax)) as sum_charge, avg(l_quantity) as avg_qty, avg(l_extendedprice) as avg_price, avg(l_discount) as avg_disc, count(*) as count_order from item where l_shipdate > '1997-09-16' group by l_returnflag, l_linestatus order by l_returnflag, l_linestatus").show()

+------------+------------+-----------+--------------------+--------------------+--------------------+------------------+-----------------+-------------------+-----------+

|l_returnflag|l_linestatus| sum_qty| sum_base_price| sum_disc_price| sum_charge| avg_qty| avg_price| avg_disc|count_order|

+------------+------------+-----------+--------------------+--------------------+--------------------+------------------+-----------------+-------------------+-----------+

| N| O|7.5697385E7|1.135107538838701E11|1.078345555027154...|1.121504616321447...|25.501957856643052|38241.03648788181|0.04999335309103024| 2968297|

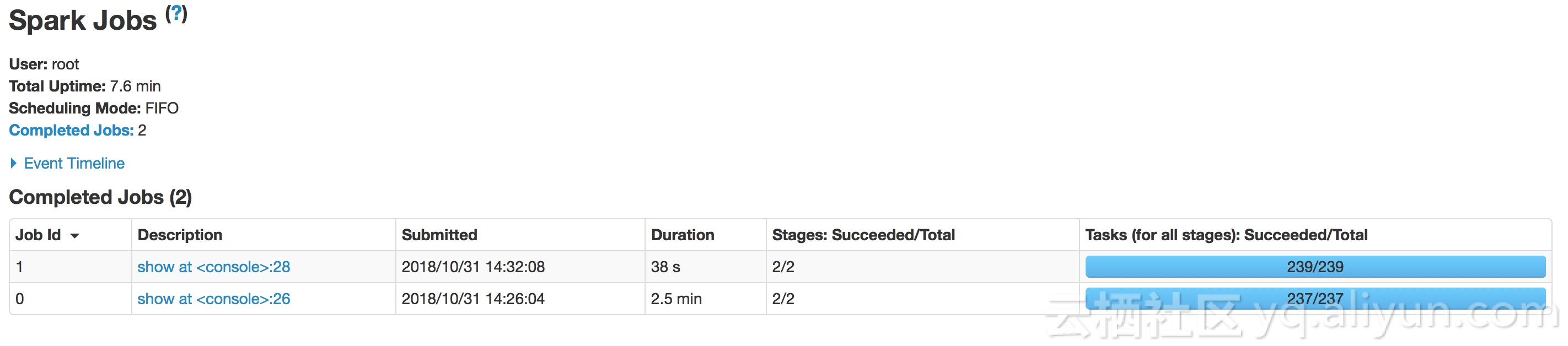

+------------+------------+-----------+--------------------+--------------------+--------------------+------------------+-----------------+-------------------+-----------+耗時對比如下

其中使用Spark SQL與在Spark SQL上使用OSS Select耗時分別是2.5分鐘和38秒。

References

https://yq.aliyun.com/articles/593910

https://yq.aliyun.com/articles/659735

https://yq.aliyun.com/articles/658473

https://mapr.com/blog/spark-data-source-api-extending-our-spark-sql-query-engine/