Saving and Calling a Regression Model with TensorFlow Model Server

Installing TensorFlow Model Server:

Install the dependencies:

sudo apt-get update && sudo apt-get install -y \
    build-essential \
    curl \
    libcurl3-dev \
    git \
    libfreetype6-dev \
    libpng12-dev \
    libzmq3-dev \
    pkg-config \
    python-dev \
    python-numpy

Install tensorflow-serving-api:

pip install tensorflow-serving-api

Install the server:

sudo apt-get update && sudo apt-get install tensorflow-model-server

Saving the model

Set the export path and the model version:

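# Export inference model. Use tf.saved_model.utils.build_tensor_info to convert the model's
# input and output tensors into the form the server expects, then save.
import os
import tensorflow as tf

# pix2pix is the author's model class; see the GitHub repository linked at the end.
export_path = os.path.join('pix2pix_model', '1')  # <export path>/<version>, as in this article
image_size = 512
sess = tf.Session()
images = tf.placeholder(tf.float32, [None, image_size, image_size, 3])  # model input
model = pix2pix()
# Run inference.
outputs = model.sampler(images)  # model output
saver = tf.train.Saver()
saver.restore(sess, 'checkpoint-0')  # load the trained model parameters
inputs_tensor_info = tf.saved_model.utils.build_tensor_info(images)
outputs_tensor_info = tf.saved_model.utils.build_tensor_info(outputs)
prediction_signature = (
    tf.saved_model.signature_def_utils.build_signature_def(
        inputs={'images': inputs_tensor_info},
        outputs={'outputs': outputs_tensor_info},
        method_name=tf.saved_model.signature_constants.REGRESS_METHOD_NAME))
# The builder setup is not shown in the original excerpt; this is the usual SavedModelBuilder pattern.
builder = tf.saved_model.builder.SavedModelBuilder(export_path)
builder.add_meta_graph_and_variables(
    sess, [tf.saved_model.tag_constants.SERVING],
    signature_def_map={
        tf.saved_model.signature_constants.DEFAULT_SERVING_SIGNATURE_DEF_KEY:
            prediction_signature})
builder.save()  # save

Because I am using a regression model here, method_name=tf.saved_model.signature_constants.**REGRESS**_METHOD_NAME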

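For a classification model, which can use the generic prediction method name (TensorFlow also defines a dedicated CLASSIFY_METHOD_NAME), change it to: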

method_name=tf.saved_model.signature_constants.**PREDICT**_METHOD_NAME
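The method-name constants mentioned here are plain strings. A minimal sketch of choosing one by model type, assuming the string values defined in TensorFlow 1.x's `signature_constants` module; the helper `method_name_for` and the `METHOD_NAMES` table are hypothetical and not part of TensorFlow:

```python
# String values of tf.saved_model.signature_constants.*_METHOD_NAME in TensorFlow 1.x.
METHOD_NAMES = {
    "regress": "tensorflow/serving/regress",    # REGRESS_METHOD_NAME
    "predict": "tensorflow/serving/predict",    # PREDICT_METHOD_NAME
    "classify": "tensorflow/serving/classify",  # CLASSIFY_METHOD_NAME
}


def method_name_for(kind):
    """Hypothetical helper: return the signature method name for a model kind."""
    try:
        return METHOD_NAMES[kind]
    except KeyError:
        raise ValueError("unknown model kind: %r" % (kind,))
```

With this helper, `method_name_for("regress")` gives the value used in this article's export code.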

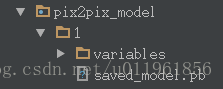

儲存之後,便可以在對應的路徑下得到對應版本的模型檔案,例如,本文中,儲存路徑為pix2pix_model,版本為1,則有,
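To make the layout concrete, the sketch below builds the versioned export directory and lists the numeric version folders under a base path. It assumes the usual SavedModel layout, `<base>/<version>/saved_model.pb` plus a `variables/` folder; all three helper names are hypothetical.

```python
import os


def export_dir(base_path, version):
    """Return <base_path>/<version>, the directory one model version is written to."""
    return os.path.join(base_path, str(int(version)))


def list_versions(base_path):
    """Return the numeric version sub-directories of base_path, sorted ascending."""
    versions = []
    for name in os.listdir(base_path):
        if name.isdigit() and os.path.isdir(os.path.join(base_path, name)):
            versions.append(int(name))
    return sorted(versions)


def looks_like_saved_model(version_dir):
    """Check for the files a SavedModel export normally contains."""
    return (os.path.isfile(os.path.join(version_dir, "saved_model.pb"))
            and os.path.isdir(os.path.join(version_dir, "variables")))
```

For the example in this article, `export_dir('pix2pix_model', 1)` is the folder the export writes to, and `looks_like_saved_model` can be used as a quick check before starting the server.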

Usage

After saving the model as described above, start the server with the following command:

tensorflow_model_server --port=9000 --model_name=pix2pix --model_base_path=/home/detection/tensorflow_serving/example/data/pix2pix_model/

Note that model_base_path must be an absolute path; otherwise the server reports an error.
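One way to avoid the relative-path mistake is to resolve the path before building the command. The following is only a sketch: `server_command` is a hypothetical helper, and it uses just the three flags shown in the command above.

```python
import os


def server_command(model_name, model_base_path, port=9000):
    """Build the tensorflow_model_server argument list with an absolute base path."""
    abs_path = os.path.abspath(os.path.expanduser(model_base_path))
    return [
        "tensorflow_model_server",
        "--port=%d" % port,
        "--model_name=%s" % model_name,
        "--model_base_path=%s" % abs_path,
    ]


if __name__ == "__main__":
    # Print the command; pass the list to subprocess.Popen to actually start the server.
    print(" ".join(server_command("pix2pix", "pix2pix_model")))
```

Returning a list rather than a single string lets the arguments go to `subprocess` without shell quoting concerns.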

Calling the model from the client:

python pix2pix_client.py --num_tests=1000 --server=localhost:9000

pix2pix_client.py is defined as follows:

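import cv2
import numpy as np
import tensorflow as tf
from grpc.beta import implementations
from scipy.misc import imread  # assumed source of imread (returns RGB); not shown in the original
from tensorflow_serving.apis import predict_pb2
from tensorflow_serving.apis import prediction_service_pb2

# Flag definitions are assumed here; the original excerpt only shows main().
tf.app.flags.DEFINE_integer('num_tests', 100, 'Number of test images')
tf.app.flags.DEFINE_string('server', 'localhost:9000', 'PredictionService host:port')
tf.app.flags.DEFINE_string('image', '', 'Path to the input image')
FLAGS = tf.app.flags.FLAGS

def main(_):
    host, port = FLAGS.server.split(':')
    channel = implementations.insecure_channel(host, int(port))
    stub = prediction_service_pb2.beta_create_PredictionService_stub(channel)
    # Load the image and scale pixel values from [0, 255] to [-1, 1].
    data = imread(FLAGS.image)
    data = data / 127.5 - 1.
    image_size = 512
    sample = []
    sample.append(data)
    sample_image = np.asarray(sample).astype(np.float32)
    request = predict_pb2.PredictRequest()
    request.model_spec.name = 'pix2pix'
    request.model_spec.signature_name = tf.saved_model.signature_constants.DEFAULT_SERVING_SIGNATURE_DEF_KEY
    request.inputs['images'].CopyFrom(
        tf.contrib.util.make_tensor_proto(sample_image, shape=[1, image_size, image_size, 3]))
    result_future = stub.Predict.future(request, 5.0)  # 5 seconds
    response = np.array(
        result_future.result().outputs['outputs'].float_val)
    # Map the output from [-1, 1] back to [0, 255].
    out = (response.reshape((512, 512, 3)) + 1) * 127.5
    out = cv2.cvtColor(out.astype(np.float32), cv2.COLOR_BGR2RGB)
    cv2.imwrite('data/test_result/' + '1.jpg', out)

if __name__ == '__main__':
    tf.app.run()

The complete code is available on my GitHub: https://github.com/qinghua2016/pix2pix_server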
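The pre- and post-processing steps in the client can be isolated and checked without a running server. The sketch below assumes NumPy only; `preprocess` and `postprocess` are hypothetical names, and the rounding, clipping, and `uint8` cast are additions that the original client does not perform.

```python
import numpy as np


def preprocess(image_uint8):
    """Scale pixel values from [0, 255] to [-1, 1] and add a batch dimension."""
    scaled = image_uint8.astype(np.float32) / 127.5 - 1.0
    return scaled[np.newaxis, ...]


def postprocess(flat_values, height, width, channels=3):
    """Reshape the flat float list from the response and map [-1, 1] back to [0, 255]."""
    out = np.asarray(flat_values, dtype=np.float32).reshape((height, width, channels))
    out = (out + 1.0) * 127.5
    # Round and clip so the result is a valid 8-bit image (not done in the original client).
    return np.clip(np.rint(out), 0, 255).astype(np.uint8)
```

The response's `float_val` field is a flat sequence, which is why `postprocess` takes the target height and width explicitly; in this article both are 512.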

For calling from C++, see:

https://github.com/tensorflow/serving/blob/master/tensorflow_serving/example/inception_client.cc

For calling from Java, see:

https://github.com/foxgem/how-to/blob/master/tensorflow/clients/src/main/java/foxgem/Launcher.java