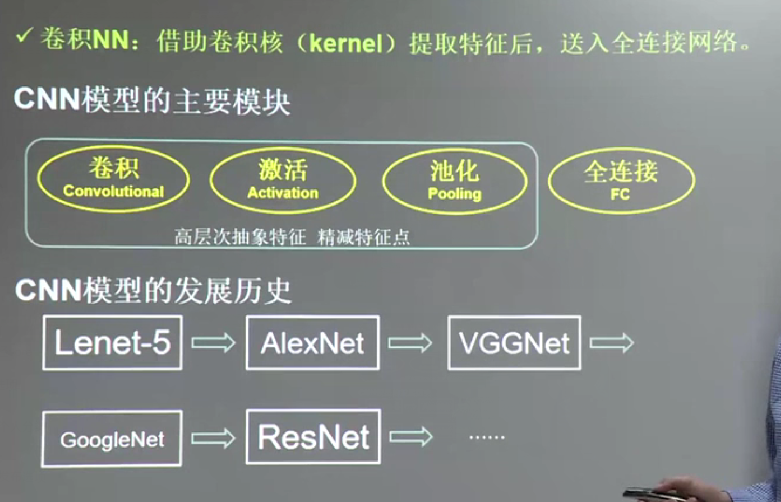

Neural Networks: CNN

阿新 · Published: 2018-11-26

```python
# encoding=utf-8
import tensorflow as tf  # TensorFlow 1.x API (placeholders, sessions)
import numpy as np

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets('MNIST_data', one_hot=True)


def weight_variable(shape):
    # Weights are initialized with tf.truncated_normal, standard deviation 0.1
    initial = tf.truncated_normal(shape, stddev=0.1)
    return tf.Variable(initial)


def bias_variable(shape):
    # Biases are initialized to the constant 0.1 with tf.constant
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)
```
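`tf.truncated_normal` samples from a normal distribution. Any value more than two standard deviations from the mean is discarded and drawn again, so with `stddev=0.1` every initial weight lies in [-0.2, 0.2]. The following is a rough plain-Python sketch of that rule. The function `truncated_normal` is a hypothetical stand-in, not the TensorFlow API.

```python
import random


def truncated_normal(shape, mean=0.0, stddev=0.1, seed=None):
    """Hypothetical stand-in for tf.truncated_normal (not the TensorFlow API).

    Draws from N(mean, stddev**2) and re-draws any value that lies more than
    two standard deviations from the mean. Returns a flat list whose length
    is the product of the dimensions in `shape`.
    """
    rng = random.Random(seed)
    count = 1
    for dim in shape:
        count *= dim
    values = []
    while len(values) < count:
        v = rng.gauss(mean, stddev)
        if abs(v - mean) <= 2 * stddev:  # reject values outside mean +/- 2 * stddev
            values.append(v)
    return values


w = truncated_normal([5, 5, 1, 32], stddev=0.1, seed=0)
print(len(w))                         # 800 = 5 * 5 * 1 * 32
print(all(abs(v) <= 0.2 for v in w))  # True
```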

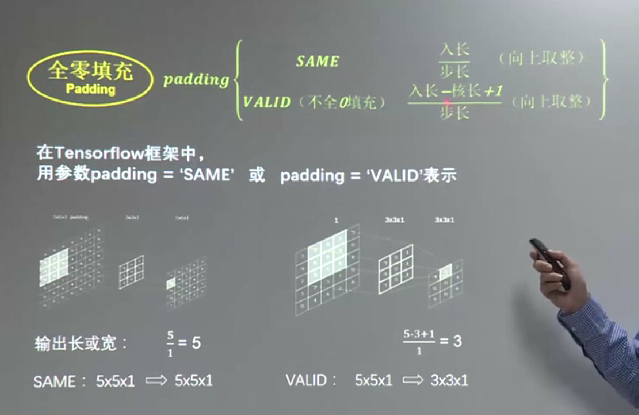

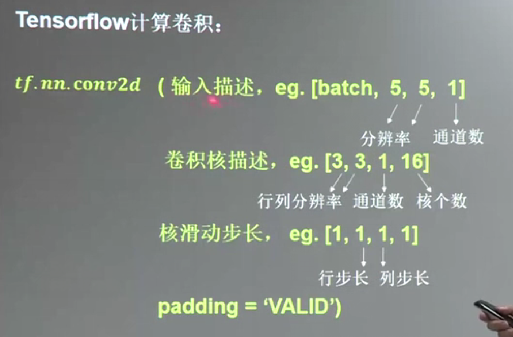

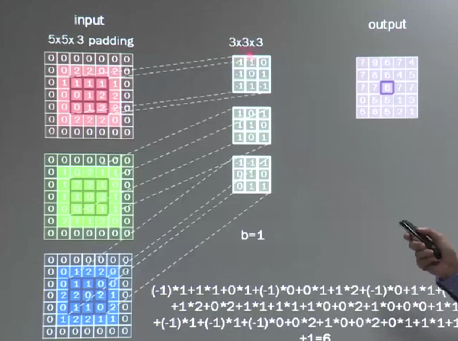

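```python
def conv2d(x, W):
    # Convolution layer. strides[0] and strides[3] must be 1;
    # strides[1] and strides[2] are the vertical and horizontal step sizes.
    return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')
```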
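With stride 1 and `padding='SAME'`, the input is zero-padded so that the output keeps the input's height and width. The hypothetical `conv2d_same` below sketches that computation in plain Python for one input channel and one kernel. A real layer also sums over all input channels and produces one output map per kernel. Like `tf.nn.conv2d`, the sketch computes a cross-correlation, meaning the kernel is not flipped.

```python
def conv2d_same(image, kernel):
    """Hypothetical single-channel 2-D convolution, stride 1, 'SAME' padding.

    `image` and `kernel` are lists of lists. Pixels outside the image are
    treated as zero, so the output has the same size as the input.
    """
    h, w = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    # For stride 1, 'SAME' pads (k - 1) // 2 before; any extra goes after.
    pad_top, pad_left = (kh - 1) // 2, (kw - 1) // 2
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            total = 0.0
            for u in range(kh):
                for v in range(kw):
                    r, c = i + u - pad_top, j + v - pad_left
                    if 0 <= r < h and 0 <= c < w:  # zero padding outside the image
                        total += image[r][c] * kernel[u][v]
            out[i][j] = total
    return out


img = [[1, 2, 3],
       [4, 5, 6],
       [7, 8, 9]]
box = [[1, 1, 1],
       [1, 1, 1],
       [1, 1, 1]]
print(conv2d_same(img, box))  # [[12.0, 21.0, 16.0], [27.0, 45.0, 33.0], [24.0, 39.0, 28.0]]
```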

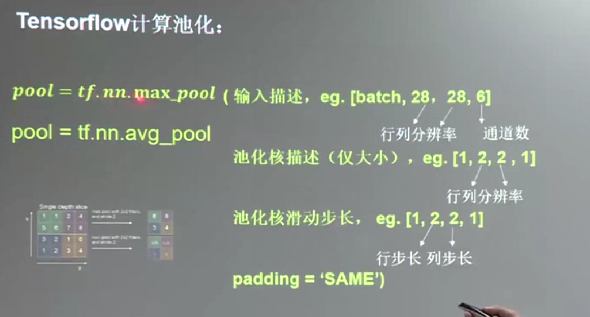

```python
def max_pool_2x2(x):
    # Max-pooling layer. Arguments as above; ksize is the size of the pooling window (2x2).
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')
```
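A 2x2 window with stride 2 makes each output cell the largest value in a non-overlapping 2x2 block, so a 28x28 map becomes 14x14. The sketch below does this in plain Python for one channel. The name `max_pool_2x2_py` is hypothetical. With `'SAME'` padding an odd size n gives ceil(n / 2) outputs, and the last window simply covers fewer pixels.

```python
import math


def max_pool_2x2_py(image):
    """Hypothetical 2x2 max pooling with stride 2 and 'SAME' padding (one channel).

    Each output cell is the maximum of a 2x2 window; the output size is
    ceil(n / 2) in each dimension.
    """
    h, w = len(image), len(image[0])
    out_h, out_w = math.ceil(h / 2), math.ceil(w / 2)
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Windows at the bottom/right edge are clipped to the image
            window = [image[r][c]
                      for r in range(2 * i, min(2 * i + 2, h))
                      for c in range(2 * j, min(2 * j + 2, w))]
            row.append(max(window))
        out.append(row)
    return out


fmap = [[1, 3, 2, 4],
        [5, 6, 7, 8],
        [3, 2, 1, 0],
        [1, 2, 3, 4]]
print(max_pool_2x2_py(fmap))  # [[6, 8], [3, 4]]
```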

x = tf.placeholder(tf.float32,[None,784])

y_ = tf.placeholder(tf.float32, [None, 10])

# 影象轉化為一個四維張量,第一個引數代表樣本數量,-1表示不定,第二三引數代表影象尺寸,最後一個引數代表影象通道數

#x_image = tf.reshape(x, [-1, 28, 28, 1])

# 第一層卷積加池化

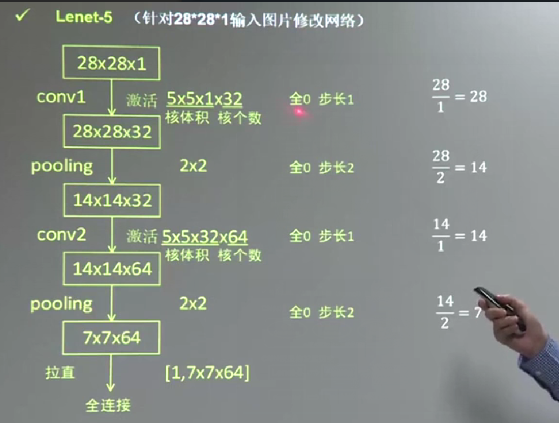

w_conv1 = weight_variable([5, 5, 1, 32]) # 第一二引數值得卷積核尺寸大小,即patch,第三個引數是影象通道數,第四個引數是卷積核的數目,代表會出現多少個卷積特徵 其實就是輸出的尺寸

b_conv1 = bias_variable([32])

h_conv1 = tf.nn.relu(conv2d(x, w_conv1) + b_conv1)

h_pool1 = max_pool_2x2(h_conv1)

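Second layer: convolution plus pooling.

```python
w_conv2 = weight_variable([5, 5, 32, 64])  # multi-channel convolution producing 64 feature maps
b_conv2 = bias_variable([64])
h_conv2 = tf.nn.relu(conv2d(h_pool1, w_conv2) + b_conv2)
h_pool2 = max_pool_2x2(h_conv2)

# The input image is 28x28. After the first round it shrinks to 14x14 with
# 32 feature maps; after the second round it shrinks to 7x7 with 64 feature maps.
```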
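These sizes follow from the padding rules used in `conv2d` and `max_pool_2x2`. A stride-1 `'SAME'` convolution keeps the spatial size, and each 2x2 pooling with stride 2 gives ceil(n / 2). The short plain-Python sketch below tracks the sizes and the flattened length `7 * 7 * 64` used by the next layer. As a side calculation, it also counts the trainable weights and biases, with layer sizes taken from this code. The helper `trace_shapes` is hypothetical.

```python
import math


def trace_shapes(size=28, conv_layers=((5, 1, 32), (5, 32, 64)), fc_units=1024, classes=10):
    """Track feature-map size and parameter counts through conv+pool layers.

    Each entry of conv_layers is (kernel size, input channels, output channels).
    A 'SAME' convolution with stride 1 keeps the spatial size; a 2x2 max pool
    with stride 2 and 'SAME' padding gives ceil(size / 2).
    """
    sizes, params = [size], 0
    for k, c_in, c_out in conv_layers:
        size = math.ceil(size / 2)              # only the pooling changes the size
        sizes.append(size)
        params += k * k * c_in * c_out + c_out  # weights + biases
    flat = size * size * conv_layers[-1][2]     # length after flattening
    params += flat * fc_units + fc_units        # fully connected layer
    params += fc_units * classes + classes      # output layer
    return sizes, flat, params


sizes, flat, params = trace_shapes()
print(sizes)   # [28, 14, 7]
print(flat)    # 3136
print(params)  # 3274634
```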

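```python
# Fully connected layer with 1024 units
w_fc1 = weight_variable([7 * 7 * 64, 1024])
b_fc1 = bias_variable([1024])
# Flatten; the first dimension is the number of samples (-1 = unknown)
h_pool2_flat = tf.reshape(h_pool2, [-1, 7 * 7 * 64])
f_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, w_fc1) + b_fc1)
```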
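The fully connected layer multiplies the flattened 3136-element vector by a 3136 x 1024 weight matrix, adds the bias, and applies ReLU. For one sample, that computation looks roughly like the plain-Python sketch below. The helper `dense_relu` and the tiny numbers are made up for illustration.

```python
def dense_relu(inputs, weights, biases):
    """Hypothetical relu(inputs @ weights + biases) for a single sample.

    `inputs` has length n, `weights` is an n x m list of lists and `biases`
    has length m; the result has length m.
    """
    out = []
    for j in range(len(biases)):
        z = sum(inputs[i] * weights[i][j] for i in range(len(inputs))) + biases[j]
        out.append(max(0.0, z))  # ReLU: negative pre-activations become 0
    return out


x_flat = [1.0, 2.0]
W = [[0.5, -1.0, 0.0],
     [0.25, 0.0, 1.0]]
b = [0.1, 0.1, -3.0]
print(dense_relu(x_flat, W, b))  # [1.1, 0.0, 0.0]
```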

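```python
# Dropout to reduce overfitting; keep_prob is the probability of keeping
# each unit and is fed in at run time
keep_prob = tf.placeholder(tf.float32)
h_fc1_drop = tf.nn.dropout(f_fc1, keep_prob)
```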
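`tf.nn.dropout` keeps each element with probability `keep_prob` and scales it by `1 / keep_prob`. All other elements are set to 0, so the expected value of each activation is unchanged. With `keep_prob = 1.0` the input passes through untouched. A minimal plain-Python sketch of that rule follows. The helper `dropout` is hypothetical, not the TensorFlow function.

```python
import random


def dropout(values, keep_prob, rng=None):
    """Hypothetical plain-Python version of the rule tf.nn.dropout applies.

    Each element is kept with probability keep_prob and scaled by 1 / keep_prob;
    otherwise it becomes 0. The expected value of every element is unchanged.
    """
    if rng is None:
        rng = random.Random()
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]


out = dropout([1.0] * 10000, keep_prob=0.5, rng=random.Random(42))
print(sorted(set(out)))     # [0.0, 2.0]
print(sum(out) / len(out))  # close to the original mean of 1.0
print(dropout([1.0, 2.0, 3.0], keep_prob=1.0))  # [1.0, 2.0, 3.0]: nothing dropped
```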

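```python
# Output layer: softmax probabilities over the 10 digit classes
w_fc2 = weight_variable([1024, 10])
b_fc2 = bias_variable([10])
y_conv = tf.nn.softmax(tf.matmul(h_fc1_drop, w_fc2) + b_fc2)

# Cross-entropy as the loss; clipping keeps tf.log away from log(0), which would give NaN
cross_entropy = -tf.reduce_sum(y_ * tf.log(tf.clip_by_value(y_conv, 1e-10, 1.0)))
train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)  # optimize with Adam
```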
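For a single sample, the cross-entropy loss is -sum(y * log(p)), where p is the softmax of the output layer. `tf.reduce_sum` then also sums this over the batch. A probability that underflows to 0 makes `log` return -inf, so the sketch below first clips probabilities to a small epsilon; the value 1e-10 is an assumption. It also computes the softmax in the numerically stable way, by subtracting the largest logit. It is plain Python, not TensorFlow.

```python
import math


def softmax(logits):
    """Numerically stable softmax: subtract the max logit before exponentiating."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(labels, probs, eps=1e-10):
    """-sum(y * log(p)) for one sample; p is clipped to [eps, 1] to avoid log(0)."""
    return -sum(y * math.log(min(max(p, eps), 1.0)) for y, p in zip(labels, probs))


probs = softmax([2.0, 1.0, 0.1])
print([round(p, 3) for p in probs])
print(cross_entropy([1, 0, 0], probs))  # loss when the true class is index 0
```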

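```python
def compute_accuracy(v_xs, v_ys):
    # Compare the position of the 1 in the predicted y and in the true y;
    # the result is a boolean tensor
    correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y_, 1))
    # Cast to float32 with tf.cast, then take the mean to get the accuracy
    accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
    # Dropout is disabled during evaluation (keep_prob = 1.0)
    result = sess.run(accuracy, feed_dict={x: v_xs, y_: v_ys, keep_prob: 1.0})
    return result
```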
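`compute_accuracy` compares the index of the largest predicted probability with the index of the 1 in each one-hot label. It then casts the booleans to float and averages them. The same computation in plain Python looks like this; the helper names are hypothetical.

```python
def argmax(row):
    """Index of the largest value (the first one if there are ties)."""
    return max(range(len(row)), key=lambda i: row[i])


def compute_accuracy_py(predictions, one_hot_labels):
    """Hypothetical plain-Python version of the accuracy computed above."""
    correct = [argmax(p) == argmax(y) for p, y in zip(predictions, one_hot_labels)]
    return sum(correct) / len(correct)  # booleans count as 0/1, like tf.cast + reduce_mean


preds = [[0.1, 0.7, 0.2], [0.8, 0.1, 0.1], [0.3, 0.3, 0.4]]
labels = [[0, 1, 0], [0, 0, 1], [0, 0, 1]]
print(compute_accuracy_py(preds, labels))  # 0.6666666666666666
```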

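```python
sess = tf.InteractiveSession()
sess.run(tf.global_variables_initializer())  # replaces the deprecated tf.initialize_all_variables()

for i in range(2000):
    batch = mnist.train.next_batch(50)
    if i % 100 == 0:
        # compute_accuracy already returns a number, so print it directly
        train_accuracy = compute_accuracy(batch[0], batch[1])
        print(i, train_accuracy)
    # One training step; dropout keeps 50% of the fully connected units
    train_step.run(feed_dict={x: batch[0], y_: batch[1], keep_prob: 0.5})

# Accuracy on the first 50 test images
print(compute_accuracy(mnist.test.images[0:50], mnist.test.labels[0:50]))
```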

Computing the number of output feature channels, with respect to pooling.