Spark Streaming Real-Time Stream Processing Notes (5): Kafka API Programming

阿新 • Published: 2018-12-06

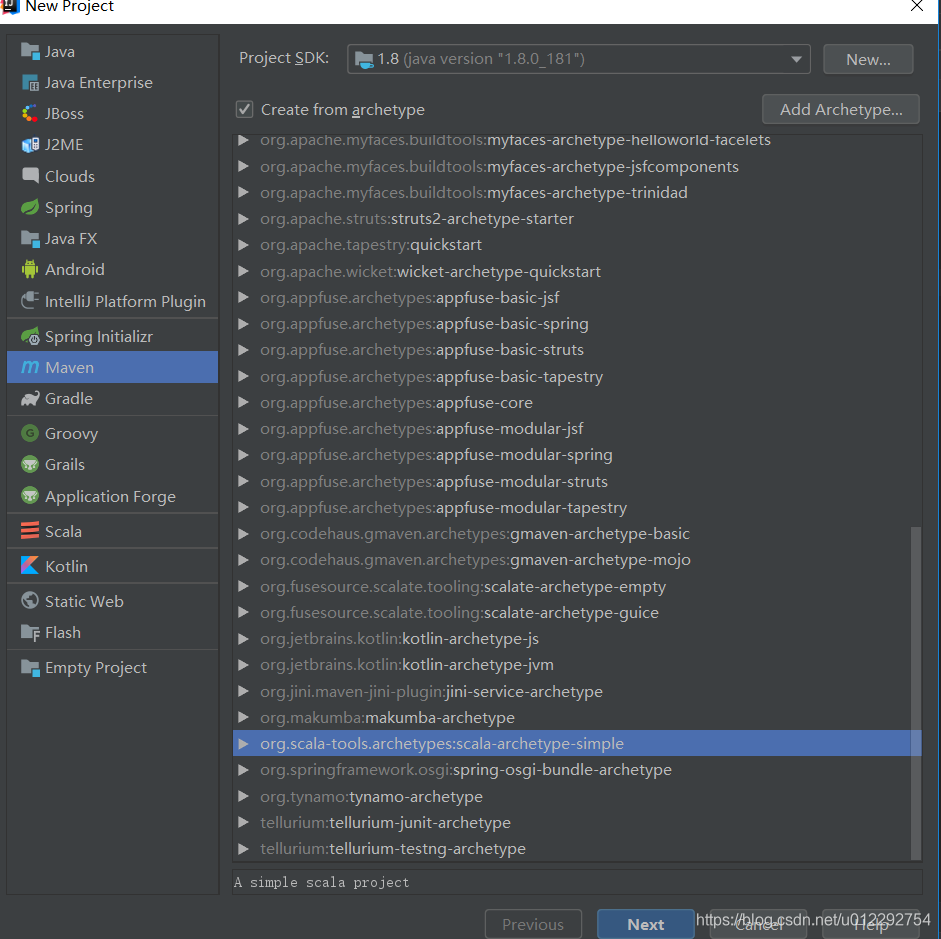

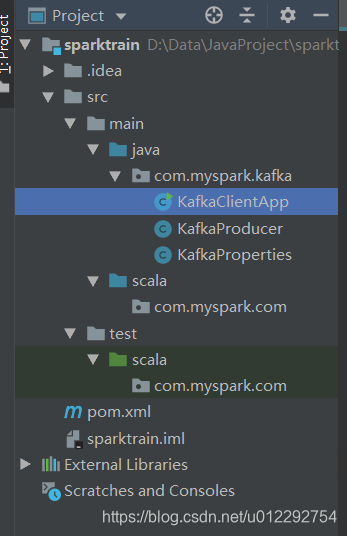

1 Create a Maven project

pom file

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/maven-v4_0_0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.myspark.com</groupId>

2 Producer source code

KafkaProperties.java

package com.myspark.kafka;

/*

*

* Kafka configuration constants

* */

public class KafkaProperties {

public static final String ZK = "192.168.30.131:2181";

public static final String TOPIC = "hello_topic";

public static final String BROKER_LIST = "192.168.30.131:9092";

}
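The constants above hard-code the addresses of one machine. As a minimal sketch, assuming you would like to override them at launch time without recompiling, each value can be read from a JVM system property with the value used in this note as the fallback. The class name `KafkaSettings` and the property names `kafka.zk`, `kafka.topic` and `kafka.brokers` are hypothetical; Kafka itself does not read them.

```java
// Hypothetical alternative to KafkaProperties: same defaults, overridable with -D flags.
final class KafkaSettings {

    private KafkaSettings() {
    }

    // The property names below are made up for this sketch; Kafka does not read them.
    static String zk() {
        return System.getProperty("kafka.zk", "192.168.30.131:2181");
    }

    static String topic() {
        return System.getProperty("kafka.topic", "hello_topic");
    }

    static String brokerList() {
        return System.getProperty("kafka.brokers", "192.168.30.131:9092");
    }
}
```

Starting the JVM with `-Dkafka.brokers=node1:9092`, for example, would make `KafkaSettings.brokerList()` return `node1:9092`, while the other two methods keep their defaults.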

KafkaProducer.java

package com.myspark.kafka;

import kafka.javaapi.producer.Producer;

import kafka.producer.KeyedMessage;

import kafka.producer.ProducerConfig;

import java.util.Properties;

public class KafkaProducer extends Thread {

private String topic;

private Producer<Integer, String> producer;

public KafkaProducer(String topic) {

this.topic = topic;

Properties properties = new Properties();

properties.put("metadata.broker.list", KafkaProperties.BROKER_LIST);

properties.put("serializer.class", "kafka.serializer.StringEncoder");

/*

* The number of acknowledgments the producer requires the leader to have received before considering a request complete.

* This controls the durability of the messages sent by the producer.

*

* request.required.acks = 0 - means the producer will not wait for any acknowledgement from the leader.

* request.required.acks = 1 - means the leader will write the message to its local log and immediately acknowledge

* request.required.acks = -1 - means the leader will wait for acknowledgement from all in-sync replicas before acknowledging the write

*/

properties.put("request.required.acks", "1");

producer = new Producer<Integer, String>(new ProducerConfig(properties));

}

@Override

public void run() {

int messageNo = 1;

while (true) {

String message = "message_" + messageNo;

producer.send(new KeyedMessage<Integer, String>(topic, message));

System.out.println("Sent: " + message);

messageNo++;

try{

Thread.sleep(2000);

}catch (Exception e){

e.printStackTrace();

}

}

}

}
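The three `request.required.acks` values described in the comment trade latency against durability. As a minimal sketch, the producer `Properties` can be built by a small helper that takes the acknowledgement level as a parameter and rejects anything other than those three values. The class `ProducerProps` and its `build` method are hypothetical helpers; only the three property keys come from the listing above.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Properties;

// Hypothetical helper: builds the Properties that the listing passes to ProducerConfig.
final class ProducerProps {

    // The three values documented in the producer's comment.
    private static final List<String> VALID_ACKS = Arrays.asList("0", "1", "-1");

    private ProducerProps() {
    }

    static Properties build(String brokerList, String acks) {
        if (!VALID_ACKS.contains(acks)) {
            throw new IllegalArgumentException("acks must be 0, 1 or -1, got: " + acks);
        }
        Properties properties = new Properties();
        properties.put("metadata.broker.list", brokerList);
        properties.put("serializer.class", "kafka.serializer.StringEncoder");
        properties.put("request.required.acks", acks);
        return properties;
    }
}
```

With this in place, `new ProducerConfig(ProducerProps.build(KafkaProperties.BROKER_LIST, "-1"))` would ask for acknowledgement from all in-sync replicas, at the cost of higher send latency.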

KafkaClientApp.java

package com.myspark.kafka;

/*

* Kafka Java API test

* */

public class KafkaClientApp {

public static void main(String[] args) {

new KafkaProducer(KafkaProperties.TOPIC).start();

}

}
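The producer thread started here loops forever, and its `run()` method catches every `Exception` thrown by `Thread.sleep`, so there is no way to ask it to stop. A minimal sketch of the same loop that exits when the thread is interrupted follows. The `Consumer<String>` sink is a stand-in for `producer.send(...)` so the example runs without a broker, and the class name `StoppableSender` is hypothetical.

```java
import java.util.function.Consumer;

// Hypothetical variant of the producer thread; `sink` stands in for producer.send(...).
final class StoppableSender extends Thread {

    private final Consumer<String> sink;
    private final long intervalMillis;

    StoppableSender(Consumer<String> sink, long intervalMillis) {
        this.sink = sink;
        this.intervalMillis = intervalMillis;
    }

    @Override
    public void run() {
        int messageNo = 1;
        // Check the interrupt flag on every pass instead of looping unconditionally.
        while (!isInterrupted()) {
            sink.accept("message_" + messageNo);
            messageNo++;
            try {
                Thread.sleep(intervalMillis);
            } catch (InterruptedException e) {
                // interrupt() arrived while sleeping: leave the loop instead of continuing.
                return;
            }
        }
    }
}
```

Calling `interrupt()` on the thread from `main` then ends the loop, either at the next flag check or by waking it from `sleep`.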

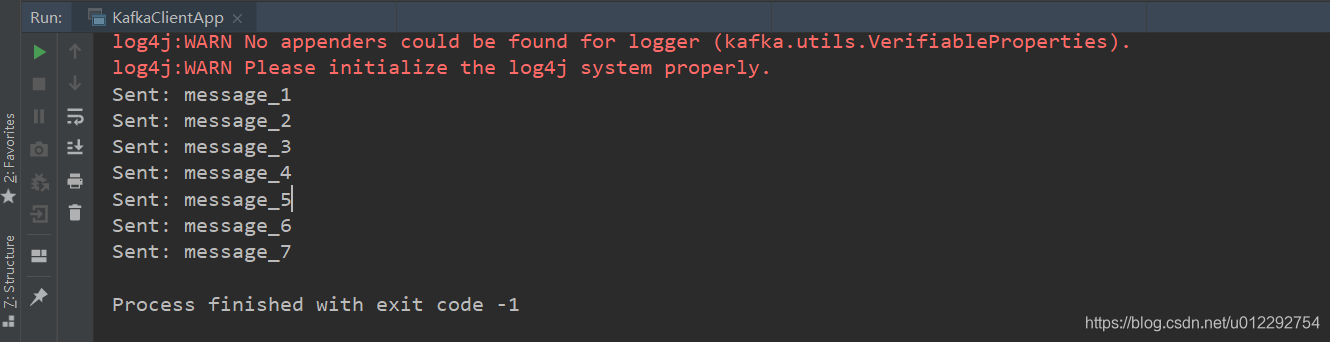

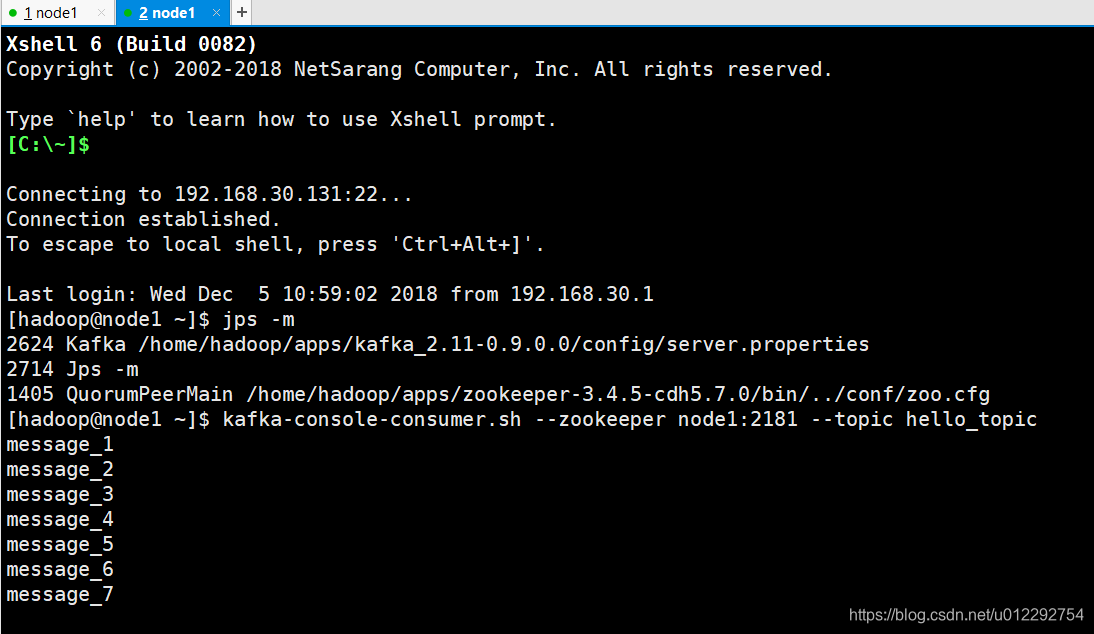

Test

Start ZooKeeper first, then start Kafka:

kafka-server-start.sh $KAFKA_HOME/config/server.properties

$ jps -m

2624 Kafka /home/hadoop/apps/kafka_2.11-0.9.0.0/config/server.properties

2714 Jps -m

1405 QuorumPeerMain /home/hadoop/apps/zookeeper-3.4.5-cdh5.7.0/bin/../conf/zoo.cfg


Start the console consumer:

kafka-console-consumer.sh --zookeeper node1:2181 --topic hello_topic

Then run KafkaClientApp.

3 Consumer

KafkaProperties.java

package com.myspark.kafka;

/*

*

* Kafka configuration constants

* */

public class KafkaProperties {

public static final String ZK = "192.168.30.131:2181";

public static final String TOPIC = "hello_topic";

public static final String BROKER_LIST = "192.168.30.131:9092";

public static final String GROUP_ID = "test_group1";

}

KafkaConsumer.java

package com.myspark.kafka;

import kafka.consumer.Consumer;

import kafka.consumer.ConsumerConfig;

import kafka.consumer.ConsumerIterator;

import kafka.consumer.KafkaStream;

import kafka.javaapi.consumer.ConsumerConnector;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

import java.util.Properties;

public class KafkaConsumer extends Thread {

private String topic;

public KafkaConsumer(String topic) {

this.topic = topic;

}

private ConsumerConnector createConnector() {

Properties properties = new Properties();

properties.put("zookeeper.connect", KafkaProperties.ZK);

properties.put("group.id",KafkaProperties.GROUP_ID);

return Consumer.createJavaConsumerConnector(new ConsumerConfig(properties));

}

@Override

public void run() {

ConsumerConnector consumer = createConnector();

Map<String, Integer> topicCountMap = new HashMap<String, Integer>();

topicCountMap.put(topic, 1);

// Map key (String): the topic name

// Map value: the list of data streams for that topic

Map<String, List<KafkaStream<byte[], byte[]>>> messageStream = consumer.createMessageStreams(topicCountMap);

KafkaStream<byte[], byte[]> stream = messageStream.get(topic).get(0); // the single stream requested for this topic

ConsumerIterator<byte[], byte[]> iterator = stream.iterator();

while(iterator.hasNext()){

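// Decode explicitly as UTF-8 (the StringEncoder default) rather than the platform charset
String message = new String(iterator.next().message(), java.nio.charset.StandardCharsets.UTF_8);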

System.out.println("receive: "+message);

}

}

}
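The message payload arrives as `byte[]`, so the consumer has to choose a charset when turning it back into a `String`. On the producer side, `kafka.serializer.StringEncoder` encodes as UTF-8 unless `serializer.encoding` is set to something else. A minimal sketch of decoding with the charset named explicitly, so the result does not depend on the JVM's default; `MessageDecoder` is a hypothetical helper name.

```java
import java.nio.charset.StandardCharsets;

// Hypothetical helper: decode a Kafka message payload as UTF-8 text.
final class MessageDecoder {

    private MessageDecoder() {
    }

    static String decode(byte[] payload) {
        // Name the charset explicitly rather than relying on the platform default.
        return new String(payload, StandardCharsets.UTF_8);
    }
}
```

Inside the consumer loop this would be called as `MessageDecoder.decode(iterator.next().message())`.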

KafkaClientApp.java

package com.myspark.kafka;

/*

* Kafka Java API test

* */

public class KafkaClientApp {

public static void main(String[] args) {

new KafkaProducer(KafkaProperties.TOPIC).start();

new KafkaConsumer(KafkaProperties.TOPIC).start();

}

}