Installing Kubernetes (k8s) 1.10 from binaries

First, the environment:

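| Host | Spec | IP |
|---|---|---|
| K8S | 2 cores, 4 GB RAM, 40 GB disk | 192.168.3.121 |
| node1 | 2 cores, 4 GB RAM, 40 GB disk | 192.168.3.193 |
| node2 | 2 cores, 4 GB RAM, 40 GB disk | 192.168.3.219 |

| Component | Version / package |
|---|---|
| kubernetes | 1.10.7 |
| flannel | flannel-v0.10.0-linux-amd64.tar |
| ETCD | etcd-v3.3.8-linux-amd64.tar |
| CNI | cni-plugins-amd64-v0.7.1 |
| docker | 18.03.1-ce |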

Download link: pan.baidu.com/s/1vhlUkQjI8hMSBM7EJbuPbA

Extraction code: t1z1

Because of Google Translate, a few headings may be imprecise, but the content itself is fine.

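1: Mutual hostname resolution, firewall off, swap partition off, and synchronized time on all three servers (do the following on all three)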

1.1: Mutual hostname resolution
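The original listing for this step did not survive, so what follows is only a minimal sketch. The hostnames are assumptions: node1 and node2 are the names used by the scp commands later on, and k8s is a guess for the master. The commented commands for the firewall and swap are the usual CentOS ones, not taken from the original.

```shell
#!/usr/bin/env bash
# hosts_entries is a hypothetical helper: it prints the /etc/hosts lines
# that let the three machines resolve each other by name.
# Hostnames are assumptions (node1/node2 appear later in scp commands; k8s is a guess).
hosts_entries() {
  cat <<'EOF'
192.168.3.121 k8s
192.168.3.193 node1
192.168.3.219 node2
EOF
}

# Print the entries; as root on each machine they could be appended with:
#   hosts_entries >> /etc/hosts
hosts_entries

# The other items in the heading are typically handled with (assumed, run as root):
#   systemctl stop firewalld && systemctl disable firewalld
#   swapoff -a
```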

2: Install Docker (on all three servers)

2.1: Remove any existing version

[[email protected] ~]# yum remove docker docker-common docker-selinux docker-engine

2.2: Install the packages Docker depends on

[[email protected] ~]# yum install -y yum-utils device-mapper-persistent-data lvm2

2.3: Add the yum repository

[[email protected] ~]# yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

2.4: List the available Docker versions to choose from

[[email protected] ~]# yum list docker-ce --showduplicates | sort -r

2.5: Install version 18.03.1.ce

[[email protected] ~]# yum -y install docker-ce-18.03.1.ce

2.6: Start Docker

[[email protected] ~]# systemctl start docker

3: Install etcd

[[email protected] ~]# tar -zxvf etcd-v3.3.8-linux-amd64.tar.gz

[[email protected] ~]# cd etcd-v3.3.8-linux-amd64

[[email protected] etcd-v3.3.8-linux-amd64]# cp etcd etcdctl /usr/bin

[[email protected] etcd-v3.3.8-linux-amd64]# mkdir -p /var/lib/etcd /etc/etcd

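Perform the steps above on every node.

etcd configuration files

All three machines need them, with slight differences. There are two files:

/usr/lib/systemd/system/etcd.service and /etc/etcd/etcd.conf

The leader/follower relationship inside the etcd cluster is not the same thing as the master/node relationship inside the Kubernetes cluster.

The etcd configuration files only declare three etcd members; the etcd cluster elects its own leader at startup and while running.

So the configuration files simply describe three members: etcd-i, etcd-ii, and etcd-iii.

Once the configuration files are in place on all three nodes, the etcd cluster can be started.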

K8S

[[email protected] ~]# cat /usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

[Service]

Type=notify

WorkingDirectory=/var/lib/etcd/

EnvironmentFile=/etc/etcd/etcd.conf

ExecStart=/usr/bin/etcd

[Install]

WantedBy=multi-user.target

[[email protected] ~]# cat /etc/etcd/etcd.conf

# [member]

# Member name

ETCD_NAME=etcd-i

# Data directory

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

# URLs to listen on for traffic from the other etcd members

ETCD_LISTEN_PEER_URLS="http://192.168.3.121:2380"

# URLs to listen on for client traffic

ETCD_LISTEN_CLIENT_URLS="http://192.168.3.121:2379,http://127.0.0.1:2379"

#[cluster]

# Peer URLs advertised to the other etcd members

ETCD_INITIAL_ADVERTISE_PEER_URLS="http://192.168.3.121:2380"

# Initial list of cluster members (must be a single line)

ETCD_INITIAL_CLUSTER="etcd-i=http://192.168.3.121:2380,etcd-ii=http://192.168.3.193:2380,etcd-iii=http://192.168.3.219:2380"

# Initial cluster state; "new" means a newly created cluster

ETCD_INITIAL_CLUSTER_STATE="new"

# Initial cluster token

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster-token"

# Client URLs advertised to clients

ETCD_ADVERTISE_CLIENT_URLS="http://192.168.3.121:2379,http://127.0.0.1:2379"

node1

[[email protected] ~]# cat /usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

[Service]

Type=notify

WorkingDirectory=/var/lib/etcd/

EnvironmentFile=/etc/etcd/etcd.conf

ExecStart=/usr/bin/etcd

[Install]

WantedBy=multi-user.target

[[email protected] ~]# cat /etc/etcd/etcd.conf

# [member]

ETCD_NAME=etcd-ii

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="http://192.168.3.193:2380"

ETCD_LISTEN_CLIENT_URLS="http://192.168.3.193:2379,http://127.0.0.1:2379"

#[cluster]

ETCD_INITIAL_ADVERTISE_PEER_URLS="http://192.168.3.193:2380"

ETCD_INITIAL_CLUSTER="etcd-i=http://192.168.3.121:2380,etcd-ii=http://192.168.3.193:2380,etcd-iii=http://192.168.3.219:2380"

ETCD_INITIAL_CLUSTER_STATE="new"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster-token"

ETCD_ADVERTISE_CLIENT_URLS="http://192.168.3.193:2379,http://127.0.0.1:2379"

node2

[[email protected] ~]# cat /usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

[Service]

Type=notify

WorkingDirectory=/var/lib/etcd/

EnvironmentFile=/etc/etcd/etcd.conf

ExecStart=/usr/bin/etcd

[Install]

WantedBy=multi-user.target

[[email protected] ~]# cat /etc/etcd/etcd.conf

# [member]

ETCD_NAME=etcd-iii

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="http://192.168.3.219:2380"

ETCD_LISTEN_CLIENT_URLS="http://192.168.3.219:2379,http://127.0.0.1:2379"

#[cluster]

ETCD_INITIAL_ADVERTISE_PEER_URLS="http://192.168.3.219:2380"

ETCD_INITIAL_CLUSTER="etcd-i=http://192.168.3.121:2380,etcd-ii=http://192.168.3.193:2380,etcd-iii=http://192.168.3.219:2380"

ETCD_INITIAL_CLUSTER_STATE="new"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster-token"

ETCD_ADVERTISE_CLIENT_URLS="http://192.168.3.219:2379,http://127.0.0.1:2379"
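The three etcd.conf files differ only in the member name and the node's own IP. As a rough sketch, they could be rendered from one template; render_etcd_conf is a hypothetical helper name, and the values are the ones used in this walkthrough.

```shell
#!/usr/bin/env bash
# Member list shared by all three nodes (values from this walkthrough).
CLUSTER="etcd-i=http://192.168.3.121:2380,etcd-ii=http://192.168.3.193:2380,etcd-iii=http://192.168.3.219:2380"

# render_etcd_conf NAME IP
# Hypothetical helper: prints an etcd.conf for one member to stdout.
render_etcd_conf() {
  local name="$1" ip="$2"
  cat <<EOF
# [member]
ETCD_NAME=${name}
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="http://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="http://${ip}:2379,http://127.0.0.1:2379"
# [cluster]
ETCD_INITIAL_ADVERTISE_PEER_URLS="http://${ip}:2380"
ETCD_INITIAL_CLUSTER="${CLUSTER}"
ETCD_INITIAL_CLUSTER_STATE="new"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster-token"
ETCD_ADVERTISE_CLIENT_URLS="http://${ip}:2379,http://127.0.0.1:2379"
EOF
}

# Example: print the file for node1.
# To install it, redirect the output to /etc/etcd/etcd.conf on that node.
render_etcd_conf etcd-ii 192.168.3.193
```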

3.2: Start the etcd cluster and check it

[[email protected] ~]# systemctl daemon-reload

[[email protected] ~]# systemctl start etcd.service

[[email protected] ~]# etcdctl member list

1f6e47d3e5c09902: name=etcd-i peerURLs=http://192.168.3.121:2380 clientURLs=http://127.0.0.1:2379,http://192.168.3.121:2379 isLeader=true

8059b18c36b2ba6b: name=etcd-ii peerURLs=http://192.168.3.193:2380 clientURLs=http://127.0.0.1:2379,http://192.168.3.193:2379 isLeader=false

ad715b003d53f3e6: name=etcd-iii peerURLs=http://192.168.3.219:2380 clientURLs=http://127.0.0.1:2379,http://192.168.3.219:2379 isLeader=false

[[email protected] ~]# etcdctl cluster-health

member 1f6e47d3e5c09902 is healthy: got healthy result from http://127.0.0.1:2379

member 8059b18c36b2ba6b is healthy: got healthy result from http://127.0.0.1:2379

member ad715b003d53f3e6 is healthy: got healthy result from http://127.0.0.1:2379

cluster is healthy
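To see at a glance which member was elected leader, the member list output can be filtered. This is a small sketch that assumes the v2-style output format shown above; find_leader is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# find_leader: reads `etcdctl member list` output (format as shown above) on stdin
# and prints the name of the member marked isLeader=true.
find_leader() {
  awk '/isLeader=true/ {
    for (i = 1; i <= NF; i++)
      if ($i ~ /^name=/) { sub(/^name=/, "", $i); print $i }
  }'
}

# Example with the output captured above; on a live node use:
#   etcdctl member list | find_leader
find_leader <<'EOF'
1f6e47d3e5c09902: name=etcd-i peerURLs=http://192.168.3.121:2380 clientURLs=http://127.0.0.1:2379,http://192.168.3.121:2379 isLeader=true
8059b18c36b2ba6b: name=etcd-ii peerURLs=http://192.168.3.193:2380 clientURLs=http://127.0.0.1:2379,http://192.168.3.193:2379 isLeader=false
ad715b003d53f3e6: name=etcd-iii peerURLs=http://192.168.3.219:2380 clientURLs=http://127.0.0.1:2379,http://192.168.3.219:2379 isLeader=false
EOF
```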

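4: Install flannel

Do this on every machine in the cluster.

The flannel service depends on etcd, so etcd must be installed first. flanneld is pointed at the etcd service with -etcd-endpoints, and -etcd-prefix is the key prefix under which flannel's network configuration is stored in etcd.

4.1: Install flannel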

[[email protected] ~]# mkdir -p /opt/flannel/bin/

[[email protected] ~]# tar -xzvf flannel-v0.10.0-linux-amd64.tar.gz -C /opt/flannel/bin/

[[email protected] ~]# cat /usr/lib/systemd/system/flannel.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network.target

After=network-online.target

Wants=network-online.target

After=etcd.service

Before=docker.service

[Service]

Type=notify

ExecStart=/opt/flannel/bin/flanneld -etcd-endpoints=http://192.168.3.121:2379,http://192.168.3.193:2379,http://192.168.3.219:2379 -etcd-prefix=coreos.com/network

ExecStartPost=/opt/flannel/bin/mk-docker-opts.sh -d /etc/docker/flannel_net.env -c

Restart=on-failure

[Install]

WantedBy=multi-user.target

RequiredBy=docker.service

Run the following command to set the flannel network configuration (the IP ranges and other values can be adjusted).

[[email protected] ~]# etcdctl mk /coreos.com/network/config '{"Network":"172.18.0.0/16", "SubnetMin": "172.18.1.0", "SubnetMax": "172.18.254.0", "Backend": {"Type": "vxlan"}}'

To delete it: etcdctl rm /coreos.com/network/config (note that later steps fail once it has been deleted).
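If the address ranges need to change, building the JSON from variables avoids quoting mistakes. A minimal sketch, with flannel_config as a hypothetical helper that reproduces the same keys as the command above:

```shell
#!/usr/bin/env bash
# flannel_config NETWORK SUBNET_MIN SUBNET_MAX
# Hypothetical helper: prints the flannel network configuration as one JSON line,
# always with the vxlan backend used in this walkthrough.
flannel_config() {
  local network="$1" min="$2" max="$3"
  printf '{"Network":"%s", "SubnetMin": "%s", "SubnetMax": "%s", "Backend": {"Type": "vxlan"}}\n' \
    "$network" "$min" "$max"
}

# Prints the same JSON as the etcdctl command above.
flannel_config 172.18.0.0/16 172.18.1.0 172.18.254.0

# On a node with etcd running, it could be stored with:
#   etcdctl mk /coreos.com/network/config "$(flannel_config 172.18.0.0/16 172.18.1.0 172.18.254.0)"
```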

4.2: Pull the flannel image

The flannel service depends on the flannel image, so pull it first. Run the following commands to pull it from Alibaba Cloud and tag it:

[[email protected] ~]# docker pull registry.cn-beijing.aliyuncs.com/k8s_images/flannel:v0.10.0-amd64

[[email protected] ~]# docker tag registry.cn-beijing.aliyuncs.com/k8s_images/flannel:v0.10.0-amd64 quay.io/coreos/flannel:v0.10.0

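Configure Docker

The flannel unit file contains this line:

ExecStartPost=/opt/flannel/bin/mk-docker-opts.sh -d /etc/docker/flannel_net.env -c

After flannel starts, it runs mk-docker-opts.sh, which generates the file /etc/docker/flannel_net.env.

flannel changes Docker's networking, and flannel_net.env holds the Docker options that flannel generated, so the Docker unit file also has to be modified:

/usr/lib/systemd/system/docker.service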

[[email protected] ~]# cat /usr/lib/systemd/system/docker.service

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

# After=network-online.target firewalld.service

After=network-online.target flannel.service

Wants=network-online.target

[Service]

Type=notify

# the default is not to use systemd for cgroups because the delegate issues still

# exists and systemd currently does not support the cgroup feature set required

# for containers run by docker

EnvironmentFile=/etc/docker/flannel_net.env

# Pass the options flannel wrote to flannel_net.env (DOCKER_OPTS)

ExecStart=/usr/bin/dockerd $DOCKER_OPTS

ExecReload=/bin/kill -s HUP $MAINPID

ExecStartPost=/usr/sbin/iptables -P FORWARD ACCEPT

# Having non-zero Limit*s causes performance problems due to accounting overhead

# in the kernel. We recommend using cgroups to do container-local accounting.

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

# Uncomment TasksMax if your systemd version supports it.

# Only systemd 226 and above support this version.

#TasksMax=infinity

TimeoutStartSec=0

# set delegate yes so that systemd does not reset the cgroups of docker containers

Delegate=yes

# kill only the docker process, not all processes in the cgroup

KillMode=process

# restart the docker process if it exits prematurely

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

[Install]

WantedBy=multi-user.target

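After: start Docker only after flannel has started

EnvironmentFile: Docker's startup parameters, generated by flannel

ExecStart: adds the Docker startup parameters

ExecStartPost: runs after Docker has started and sets the host's iptables FORWARD policy to ACCEPT.

4.3: Start flannel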

[[email protected] ~]# systemctl daemon-reload

[[email protected] ~]# systemctl start flannel.service

[[email protected] ~]# systemctl restart docker.service
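Once flannel is up, the generated env file shows which bridge address it picked for this node. The sketch below assumes the file holds a single DOCKER_OPTS line containing a --bip option, which is how mk-docker-opts.sh writes it with -c; docker_bip is a hypothetical helper and the sample address is illustrative only.

```shell
#!/usr/bin/env bash
# docker_bip FILE
# Hypothetical helper: prints the value of --bip found in a flannel-generated env file.
docker_bip() {
  grep -o -- '--bip=[^ "]*' "$1" | head -n 1 | cut -d= -f2
}

# Demonstration on a sample file (the address is an illustrative value, not real output).
sample="$(mktemp)"
echo 'DOCKER_OPTS=" --bip=172.18.19.1/24 --ip-masq=true --mtu=1450"' > "$sample"
docker_bip "$sample"
rm -f "$sample"

# On a real node:
#   docker_bip /etc/docker/flannel_net.env
```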

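5: Install CNI

Do this on every machine in the cluster.

CNI (Container Network Interface) is a set of specifications and libraries for configuring Linux container networking; container network plugins are developed against these specifications and libraries.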

[[email protected] ~]# mkdir -p /opt/cni/bin /etc/cni/net.d

[[email protected] ~]# tar -xzvf cni-plugins-amd64-v0.7.1.tgz -C /opt/cni/bin

[[email protected] ~]# cat /etc/cni/net.d/10-flannel.conflist

{
  "name": "cni0",
  "cniVersion": "0.3.1",
  "plugins": [
    {
      "type": "flannel",
      "delegate": {
        "forceAddress": true,
        "isDefaultGateway": true
      }
    },
    {
      "type": "portmap",
      "capabilities": {
        "portMappings": true
      }
    }
  ]
}

6: Install the K8S cluster

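CA certificates

| Purpose | File names |
|---|---|
| Root certificate and private key | ca.crt, ca.key |
| kube-apiserver certificate and private key | apiserver.pem, apiserver-key.pem |
| kube-controller-manager/kube-scheduler certificate and private key | cs_client.crt, cs_client.key |
| kubelet/kube-proxy certificate and private key | kubelet_client.crt, kubelet_client.key |

master

Create the certificate directory

6.1: Generate the root certificate and private key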

[[email protected] ~]# mkdir -p /etc/kubernetes/ca

[[email protected] ~]# cd /etc/kubernetes/ca/

[[email protected] ca]# openssl genrsa -out ca.key 2048

[[email protected] ca]# openssl req -x509 -new -nodes -key ca.key -subj "/CN=k8s" -days 5000 -out ca.crt

6.2: Generate the kube-apiserver certificate and private key

[[email protected] ca]# cat master_ssl.conf

[req]

req_extensions = v3_req

distinguished_name = req_distinguished_name

[req_distinguished_name]

[ v3_req ]

basicConstraints = CA:FALSE

keyUsage = nonRepudiation, digitalSignature, keyEncipherment

subjectAltName = @alt_names

[alt_names]

DNS.1 = kubernetes

DNS.2 = kubernetes.default

DNS.3 = kubernetes.default.svc

DNS.4 = kubernetes.default.svc.cluster.local

DNS.5 = k8s

IP.1 = 172.18.0.1

IP.2 = 192.168.3.121

[[email protected] ca]# openssl genrsa -out apiserver-key.pem 2048

[[email protected] ca]# openssl req -new -key apiserver-key.pem -out apiserver.csr -subj "/CN=k8s" -config master_ssl.conf

[[email protected] ca]# openssl x509 -req -in apiserver.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out apiserver.pem -days 365 -extensions v3_req -extfile master_ssl.conf

6.3: Generate the kube-controller-manager/kube-scheduler certificate and private key

[[email protected] ca]# openssl genrsa -out cs_client.key 2048

[[email protected] ca]# openssl req -new -key cs_client.key -subj "/CN=k8s" -out cs_client.csr

[[email protected] ca]# openssl x509 -req -in cs_client.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out cs_client.crt -days 5000

6.4: Copy the certificates to the nodes

[[email protected] ca]# scp ca.crt ca.key node1:/etc/kubernetes/ca/

[[email protected] ca]# scp ca.crt ca.key node2:/etc/kubernetes/ca/

----------------------------------------------------------------

If any of the files below is missing at this point, go back and work out which step was skipped.

[[email protected] ca]# ls

apiserver.csr apiserver.pem ca.key cs_client.crt cs_client.key

apiserver-key.pem ca.crt ca.srl cs_client.csr master_ssl.conf

----------------------------------------------------------------
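The same check can be scripted instead of eyeballing the ls output. A small sketch; check_ca_files is a hypothetical helper that looks only for the files the later steps actually reference.

```shell
#!/usr/bin/env bash
# check_ca_files DIR
# Hypothetical helper: reports every expected certificate/key file that is
# missing or empty in DIR. Returns 0 if all are present, 1 otherwise.
check_ca_files() {
  local dir="$1" missing=0 f
  for f in ca.crt ca.key apiserver.pem apiserver-key.pem cs_client.crt cs_client.key; do
    if [ ! -s "$dir/$f" ]; then
      echo "missing: $f"
      missing=1
    fi
  done
  return "$missing"
}

# Example: check the directory used in this walkthrough.
check_ca_files /etc/kubernetes/ca || echo "some files are missing; re-run the step that creates them"
```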

6.5: Certificate setup on node1

[[email protected] ca]# openssl genrsa -out kubelet_client.key 2048

[[email protected] ca]# openssl req -new -key kubelet_client.key -subj "/CN=192.168.3.193" -out kubelet_client.csr

[[email protected] ca]# openssl x509 -req -in kubelet_client.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out kubelet_client.crt -days 5000

Certificate setup on node2

[[email protected] ca]# openssl genrsa -out kubelet_client.key 2048

[[email protected] ca]# openssl req -new -key kubelet_client.key -subj "/CN=192.168.3.219" -out kubelet_client.csr

[[email protected] ca]# openssl x509 -req -in kubelet_client.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out kubelet_client.crt -days 5000

7: Install k8s

7.1: Install

[[email protected] bin]# tar -zxvf kubernetes-server-linux-amd64.tar.gz -C /opt

[[email protected] bin]# cd /opt/kubernetes/server/bin

[[email protected] bin]# cp -a $(ls | grep -Ev '\.tar$|_tag$') /usr/bin

[[email protected] bin]# mkdir -p /var/log/kubernetes

7.2: Configure kube-apiserver

[[email protected] bin]# cat /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=etcd.service

Wants=etcd.service

[Service]

EnvironmentFile=/etc/kubernetes/apiserver.conf

ExecStart=/usr/bin/kube-apiserver $KUBE_API_ARGS

Restart=on-failure

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

7.3: Configure apiserver.conf

[[email protected] bin]# cat /etc/kubernetes/apiserver.conf

KUBE_API_ARGS="\

--storage-backend=etcd3 \

--etcd-servers=http://192.168.3.121:2379,http://192.168.3.193:2379,http://192.168.3.219:2379 \

--bind-address=0.0.0.0 \

--secure-port=6443 \

--service-cluster-ip-range=172.18.0.0/16 \

--service-node-port-range=1-65535 \

--kubelet-port=10250 \

--advertise-address=192.168.3.121 \

--allow-privileged=false \

--anonymous-auth=false \

--client-ca-file=/etc/kubernetes/ca/ca.crt \

--tls-private-key-file=/etc/kubernetes/ca/apiserver-key.pem \

--tls-cert-file=/etc/kubernetes/ca/apiserver.pem \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,NamespaceExists,SecurityContextDeny,ServiceAccount,DefaultStorageClass,ResourceQuota \

--logtostderr=true \

--log-dir=/var/log/kubernetes \

--v=2"

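#############################

# Notes

--etcd-servers # connect to the etcd cluster

--secure-port # enable the secure port 6443

--client-ca-file, --tls-private-key-file, --tls-cert-file # CA certificate settings

--enable-admission-plugins # enable the admission control plugins

--anonymous-auth=false # reject anonymous requests (true would accept them); set to false here to make dashboard access easier

7.4: Configure kube-controller-manager (the service file references the conf, and the conf references the yaml)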

[[email protected] bin]# cat /etc/kubernetes/kube-controller-config.yaml

apiVersion: v1
kind: Config
users:
- name: controller
  user:
    client-certificate: /etc/kubernetes/ca/cs_client.crt
    client-key: /etc/kubernetes/ca/cs_client.key
clusters:
- name: local
  cluster:
    certificate-authority: /etc/kubernetes/ca/ca.crt
contexts:
- context:
    cluster: local
    user: controller
  name: default-context
current-context: default-context

7.5: Configure kube-controller-manager.service

[[email protected] bin]# cat /usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=kube-apiserver.service

Requires=kube-apiserver.service

[Service]

EnvironmentFile=/etc/kubernetes/controller-manager.conf

ExecStart=/usr/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_ARGS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

7.6: Configure controller-manager.conf

[[email protected] bin]# cat /etc/kubernetes/controller-manager.conf

KUBE_CONTROLLER_MANAGER_ARGS="\

--master=https://192.168.3.121:6443 \

--service-account-private-key-file=/etc/kubernetes/ca/apiserver-key.pem \

--root-ca-file=/etc/kubernetes/ca/ca.crt \

--cluster-signing-cert-file=/etc/kubernetes/ca/ca.crt \

--cluster-signing-key-file=/etc/kubernetes/ca/ca.key \

--kubeconfig=/etc/kubernetes/kube-controller-config.yaml \

--logtostderr=true \

--log-dir=/var/log/kubernetes \

--v=2"

#######################

--master # connect to the master node

--service-account-private-key-file, --root-ca-file, --cluster-signing-cert-file, --cluster-signing-key-file # CA certificate settings

--kubeconfig # the kubeconfig file

7.7: Configure kube-scheduler

[[email protected] bin]# cat /etc/kubernetes/kube-scheduler-config.yaml

apiVersion: v1
kind: Config
users:
- name: scheduler
  user:
    client-certificate: /etc/kubernetes/ca/cs_client.crt
    client-key: /etc/kubernetes/ca/cs_client.key
clusters:
- name: local
  cluster:
    certificate-authority: /etc/kubernetes/ca/ca.crt
contexts:
- context:
    cluster: local
    user: scheduler
  name: default-context
current-context: default-context
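The controller-manager and scheduler kubeconfig files are identical apart from the user name, and YAML indentation is easy to lose when copying. As a sketch, both can be rendered from one template; render_client_kubeconfig is a hypothetical helper, not a Kubernetes tool.

```shell
#!/usr/bin/env bash
# render_client_kubeconfig USER
# Hypothetical helper: prints a kubeconfig that authenticates USER with the
# shared cs_client certificate, using the paths from this walkthrough.
render_client_kubeconfig() {
  local user="$1"
  cat <<EOF
apiVersion: v1
kind: Config
users:
- name: ${user}
  user:
    client-certificate: /etc/kubernetes/ca/cs_client.crt
    client-key: /etc/kubernetes/ca/cs_client.key
clusters:
- name: local
  cluster:
    certificate-authority: /etc/kubernetes/ca/ca.crt
contexts:
- context:
    cluster: local
    user: ${user}
  name: default-context
current-context: default-context
EOF
}

# Example: print the scheduler's file.
# Redirect to /etc/kubernetes/kube-scheduler-config.yaml to install it.
render_client_kubeconfig scheduler
```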

[[email protected] bin]# cat /usr/lib/systemd/system/kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=kube-apiserver.service

Requires=kube-apiserver.service

[Service]

User=root

EnvironmentFile=/etc/kubernetes/scheduler.conf

ExecStart=/usr/bin/kube-scheduler $KUBE_SCHEDULER_ARGS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

[[email protected] bin]# cat /etc/kubernetes/scheduler.conf

KUBE_SCHEDULER_ARGS="\

--master=https://192.168.3.121:6443 \

--kubeconfig=/etc/kubernetes/kube-scheduler-config.yaml \

--logtostderr=true \

--log-dir=/var/log/kubernetes \

--v=2"

7.8: Start the master

[[email protected] bin]# systemctl daemon-reload

[[email protected] bin]# systemctl start kube-apiserver.service # if this fails, the problem is in the configuration files above

[[email protected] bin]# systemctl start kube-controller-manager.service

[[email protected] bin]# systemctl start kube-scheduler.service

7.9: View the logs

[[email protected] bin]# journalctl -xeu kube-apiserver --no-pager

[[email protected] bin]# journalctl -xeu kube-controller-manager --no-pager

[[email protected] bin]# journalctl -xeu kube-scheduler --no-pager

# Add -f to follow the logs in real time

Install K8S on the nodes (do the following on both nodes)

[[email protected] ~]# tar -zxvf kubernetes-server-linux-amd64.tar.gz -C /opt

[[email protected] ~]# cd /opt/kubernetes/server/bin

[[email protected] bin]# cp -a kubectl kubelet kube-proxy /usr/bin/

[[email protected] bin]# mkdir -p /var/log/kubernetes

[[email protected] bin]# cat /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

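# Kernel parameters so that iptables rules apply to bridged traffic; can be skipped if not needed

[[email protected] bin]# sysctl -p /etc/sysctl.d/k8s.conf # apply the settings

Node 1: configure kubelet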

[[email protected] bin]# cat /etc/kubernetes/kubelet-config.yaml

apiVersion: v1
kind: Config
users:
- name: kubelet
  user:
    client-certificate: /etc/kubernetes/ca/kubelet_client.crt
    client-key: /etc/kubernetes/ca/kubelet_client.key
clusters:
- cluster:
    certificate-authority: /etc/kubernetes/ca/ca.crt
    server: https://192.168.3.121:6443
  name: local
contexts:
- context:
    cluster: local
    user: kubelet
  name: default-context
current-context: default-context
preferences: {}

[[email protected] bin]# cat /usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubelet Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=/etc/kubernetes/kubelet.conf

ExecStart=/usr/bin/kubelet $KUBELET_ARGS

Restart=on-failure

[Install]

WantedBy=multi-user.target

[[email protected] bin]# cat /etc/kubernetes/kubelet.conf

KUBELET_ARGS="\

--kubeconfig=/etc/kubernetes/kubelet-config.yaml \

--pod-infra-container-image=registry.aliyuncs.com/archon/pause-amd64:3.0 \

--hostname-override=192.168.3.193 \

--network-plugin=cni \

--cni-conf-dir=/etc/cni/net.d \

--cni-bin-dir=/opt/cni/bin \

--logtostderr=true \

--log-dir=/var/log/kubernetes \

--v=2"

---------------------------------------------------------------------

###################

--hostname-override # node name; using the node's IP is recommended

#--pod-infra-container-image=gcr.io/google_containers/pause-amd64:3.0 \

--pod-infra-container-image # the pod infrastructure (pause) image; the default is hosted by Google, so switch to a domestic mirror or use a proxy

Alternatively, pull the image locally and retag it:

docker pull registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0

docker tag registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0 gcr.io/google_containers/pause-amd64:3.0

--kubeconfig # the kubeconfig file

Configure kube-proxy

[[email protected] bin]# cat /etc/kubernetes/proxy-config.yaml

apiVersion: v1
kind: Config
users:
- name: proxy
  user:
    client-certificate: /etc/kubernetes/ca/kubelet_client.crt
    client-key: /etc/kubernetes/ca/kubelet_client.key
clusters:
- cluster:
    certificate-authority: /etc/kubernetes/ca/ca.crt
    server: https://192.168.3.121:6443
  name: local
contexts:
- context:
    cluster: local
    user: proxy
  name: default-context
current-context: default-context
preferences: {}

[[email protected] bin]# cat /usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kube-Proxy Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

Requires=network.service

[Service]

EnvironmentFile=/etc/kubernetes/proxy.conf

ExecStart=/usr/bin/kube-proxy $KUBE_PROXY_ARGS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

[[email protected] bin]# cat /etc/kubernetes/proxy.conf

KUBE_PROXY_ARGS="\

--master=https://192.168.3.121:6443 \

--hostname-override=192.168.3.193 \

--kubeconfig=/etc/kubernetes/proxy-config.yaml \

--logtostderr=true \

--log-dir=/var/log/kubernetes \

--v=2"

Node 2: configure kubelet

[[email protected] bin]# cat /etc/kubernetes/kubelet-config.yaml

apiVersion: v1
kind: Config
users:
- name: kubelet
  user:
    client-certificate: /etc/kubernetes/ca/kubelet_client.crt
    client-key: /etc/kubernetes/ca/kubelet_client.key
clusters:
- cluster:
    certificate-authority: /etc/kubernetes/ca/ca.crt
    server: https://192.168.3.121:6443
  name: local
contexts:
- context:
    cluster: local
    user: kubelet
  name: default-context
current-context: default-context
preferences: {}

[[email protected] bin]# cat /usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubelet Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=/etc/kubernetes/kubelet.conf

ExecStart=/usr/bin/kubelet $KUBELET_ARGS

Restart=on-failure

[Install]

WantedBy=multi-user.target

[[email protected] bin]# cat /etc/kubernetes/kubelet.conf

KUBELET_ARGS="\

--kubeconfig=/etc/kubernetes/kubelet-config.yaml \

--pod-infra-container-image=registry.aliyuncs.com/archon/pause-amd64:3.0 \

--hostname-override=192.168.3.219 \

--network-plugin=cni \

--cni-conf-dir=/etc/cni/net.d \

--cni-bin-dir=/opt/cni/bin \

--logtostderr=true \

--log-dir=/var/log/kubernetes \

--v=2"

---------------------------------------------------------------------------------

###################

--hostname-override # node name; using the node's IP is recommended

#--pod-infra-container-image=gcr.io/google_containers/pause-amd64:3.0 \

--pod-infra-container-image # the pod infrastructure (pause) image; the default is hosted by Google, so switch to a domestic mirror or use a proxy

Alternatively, pull the image locally and retag it:

docker pull registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0

docker tag registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0 gcr.io/google_containers/pause-amd64:3.0

--kubeconfig # the kubeconfig file

Configure kube-proxy

[[email protected] bin]# cat /etc/kubernetes/proxy-config.yaml

apiVersion: v1
kind: Config
users:
- name: proxy
  user:
    client-certificate: /etc/kubernetes/ca/kubelet_client.crt
    client-key: /etc/kubernetes/ca/kubelet_client.key
clusters:
- cluster:
    certificate-authority: /etc/kubernetes/ca/ca.crt
    server: https://192.168.3.121:6443
  name: local
contexts:
- context:
    cluster: local
    user: proxy
  name: default-context
current-context: default-context
preferences: {}

[[email protected] bin]# cat /usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kube-Proxy Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

Requires=network.service

[Service]

EnvironmentFile=/etc/kubernetes/proxy.conf

ExecStart=/usr/bin/kube-proxy $KUBE_PROXY_ARGS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

[[email protected] bin]# cat /etc/kubernetes/proxy.conf

KUBE_PROXY_ARGS="\

--master=https://192.168.3.121:6443 \

--hostname-override=192.168.3.219 \

--kubeconfig=/etc/kubernetes/proxy-config.yaml \

--logtostderr=true \

--log-dir=/var/log/kubernetes \

--v=2"
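The kubelet.conf and proxy.conf files on node1 and node2 differ only in the --hostname-override value. A sketch of rendering kubelet.conf per node, assuming the same flags as above; render_kubelet_conf and the @NODE_IP@ placeholder are hypothetical names, and proxy.conf could be handled the same way.

```shell
#!/usr/bin/env bash
# render_kubelet_conf NODE_IP
# Hypothetical helper: prints kubelet.conf with --hostname-override set to NODE_IP.
# The heredoc is quoted so the trailing backslashes are kept literally;
# sed then fills in the @NODE_IP@ placeholder.
render_kubelet_conf() {
  local node_ip="$1"
  sed "s/@NODE_IP@/${node_ip}/" <<'EOF'
KUBELET_ARGS="\
--kubeconfig=/etc/kubernetes/kubelet-config.yaml \
--pod-infra-container-image=registry.aliyuncs.com/archon/pause-amd64:3.0 \
--hostname-override=@NODE_IP@ \
--network-plugin=cni \
--cni-conf-dir=/etc/cni/net.d \
--cni-bin-dir=/opt/cni/bin \
--logtostderr=true \
--log-dir=/var/log/kubernetes \
--v=2"
EOF
}

# Example: print the file for node2.
# Redirect to /etc/kubernetes/kubelet.conf on that node to install it.
render_kubelet_conf 192.168.3.219
```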

---------------------------------------------------

--hostname-override # node name; must match the kubelet's: if kubelet sets it, kube-proxy must set it too

--master # connect to the master

--kubeconfig # the kubeconfig file

Start the nodes and view the logs (on both nodes)

[[email protected] bin]# systemctl daemon-reload

[[email protected] bin]# systemctl start kubelet.service

[[email protected] bin]# systemctl start kube-proxy.service

[[email protected] bin]# journalctl -xeu kubelet --no-pager

[[email protected] bin]# journalctl -xeu kube-proxy --no-pager

# Add -f to follow the logs in real time

Check the nodes from the master

[[email protected] ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

192.168.3.193 Ready <none> 19m v1.10.7

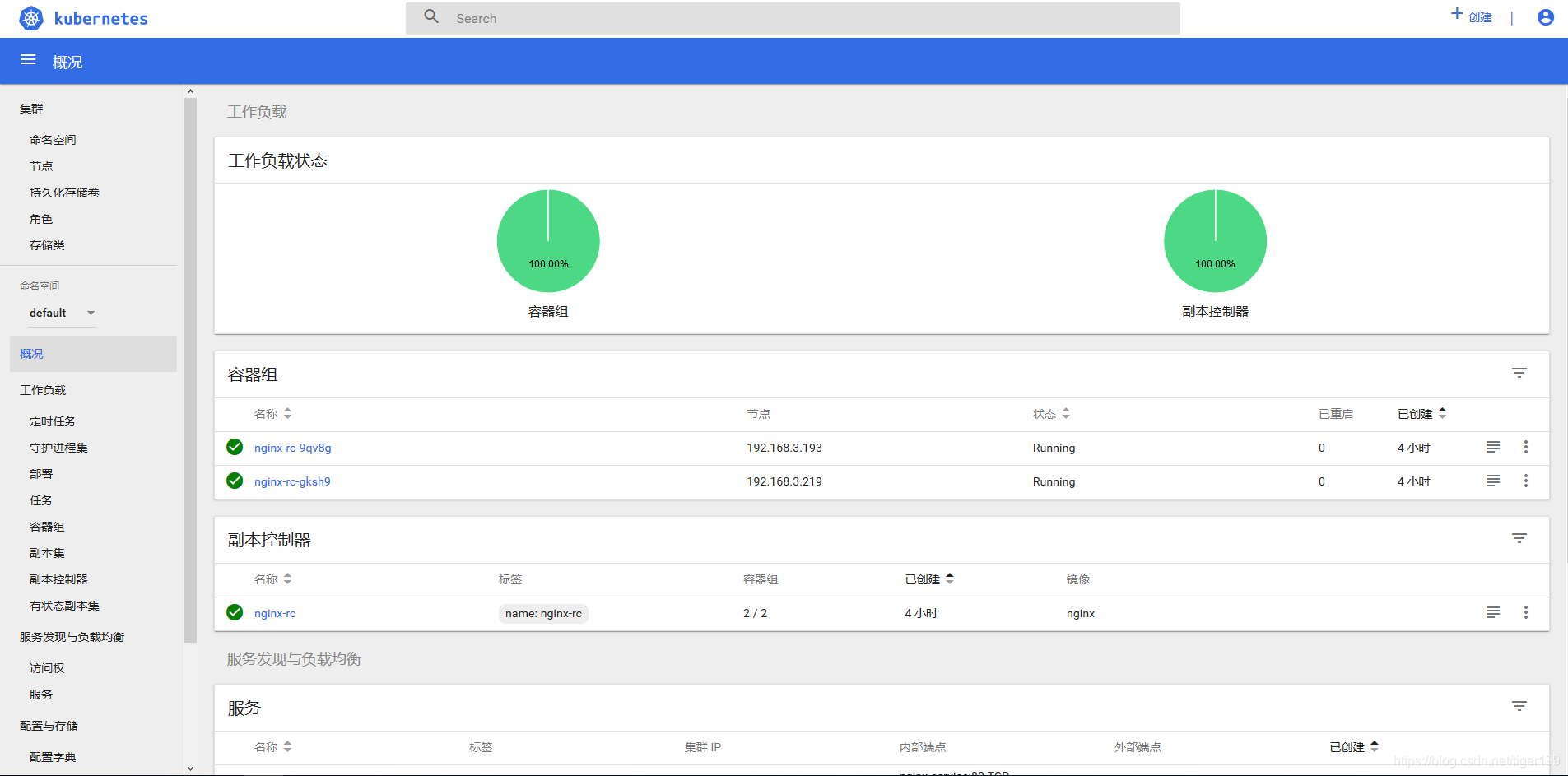

192.168.3.219 Ready <none> 19m v1.10.7叢集測試(配置nginx測試檔案(master doing))

[[email protected] bin]# cat nginx-rc.yaml

apiVersion: v1
kind: ReplicationController
metadata:
  name: nginx-rc
  labels:
    name: nginx-rc
spec:
  replicas: 2
  selector:
    name: nginx-pod
  template:
    metadata:
      labels:
        name: nginx-pod
    spec:
      containers:
      - name: nginx
        image: nginx
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 80

[[email protected] bin]# cat nginx-svc.yaml

apiVersion: v1
kind: Service
metadata:
  name: nginx-service
  labels:
    name: nginx-service
spec:
  type: NodePort
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
    nodePort: 30081
  selector:
    name: nginx-pod

Apply the YAML files

[[email protected] bin]# kubectl create -f nginx-rc.yaml

[[email protected] bin]# kubectl create -f nginx-svc.yaml

# Check how pod creation is going:

[[email protected] bin]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE

nginx-rc-9qv8g 0/1 ContainerCreating 0 6s <none> 192.168.3.193

nginx-rc-gksh9 0/1 ContainerCreating 0 6s <none> 192.168.3.219
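Right after creation the pods are still in ContainerCreating, so it helps to list which ones are not yet Running before testing in the browser. A sketch that assumes the column layout shown above (STATUS is the third column, no namespace column); pods_not_running is a hypothetical helper.

```shell
#!/usr/bin/env bash
# pods_not_running: reads `kubectl get pod` output on stdin and prints the
# name of every pod whose STATUS column is not "Running". Skips the header row.
pods_not_running() {
  awk 'NR > 1 && $3 != "Running" { print $1 }'
}

# Example with the output captured above; on the master use:
#   kubectl get pod -o wide | pods_not_running
pods_not_running <<'EOF'
NAME READY STATUS RESTARTS AGE IP NODE
nginx-rc-9qv8g 0/1 ContainerCreating 0 6s <none> 192.168.3.193
nginx-rc-gksh9 0/1 ContainerCreating 0 6s <none> 192.168.3.219
EOF
```

An empty result means every listed pod has reached Running.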

Open http://node-ip:30081/ in a browser.

If the NGINX welcome page appears, everything works.

The following commands delete the service and the nginx deployment:

[[email protected] bin]# kubectl delete -f nginx-svc.yaml

[[email protected] bin]# kubectl delete -f nginx-rc.yaml

8: Install the Kubernetes web UI (dashboard)

Download the dashboard YAML

[[email protected] ~]# wget https://raw.githubusercontent.com/kubernetes/dashboard/master/src/deploy/recommended/kubernetes-dashboard.yaml

Edit kubernetes-dashboard.yaml

The image line needs to be changed, because the default registry is blocked.

#image: k8s.gcr.io/kubernetes-dashboard-amd64:v1.10.0

image: mirrorgooglecontainers/kubernetes-dashboard-amd64:v1.8.3

---------------------------------------------------------------

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system
spec:
  ports:
  - port: 443
    targetPort: 8443
  selector:
    k8s-app: kubernetes-dashboard

############## After modification #############

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system
spec:
  type: NodePort
  ports:
  - port: 443
    targetPort: 8443
    nodePort: 30000
  selector:
    k8s-app: kubernetes-dashboard

Add type: NodePort

Expose port 30000

Create the access-control YAML

[[email protected] ~]# cat dashboard-admin.yaml

apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRoleBinding
metadata:
  name: kubernetes-dashboard
  labels:
    k8s-app: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: kubernetes-dashboard
  namespace: kube-system

Create and verify

[[email protected] ~]# kubectl create -f kubernetes-dashboard.yaml

[[email protected] ~]# kubectl create -f dashboard-admin.yaml

[[email protected] ~]# kubectl get pods --all-namespaces -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE

default nginx-rc-9qv8g 1/1 Running 0 26m 172.18.19.2 192.168.3.193

default nginx-rc-gksh9 1/1 Running 0 26m 172.18.58.2 192.168.3.219

kube-system kubernetes-dashboard-66c9d98865-k84kb 1/1 Running 0 23m 172.18.58.3 192.168.3.219

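Access

Once everything is running, the dashboard can be reached at https://NODE_IP:<configured port>.

In my case that is https://192.168.3.193:30000

You will be prompted to log in; we use token login.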

[[email protected] ~]# kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep kubernetes-dashboard-token | awk '{print $1}') | grep token
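The grep above also matches lines such as the secret's name and type, so the token still has to be picked out by hand. A sketch that prints only the token value, assuming the usual "token:" line in the describe output; extract_token is a hypothetical helper and the sample token below is fake.

```shell
#!/usr/bin/env bash
# extract_token: reads `kubectl describe secret` output on stdin and prints
# only the value on the "token:" line.
extract_token() {
  awk '$1 == "token:" { print $2 }'
}

# Example on sample text (the token is a fake placeholder). On the master,
# pipe the describe command above into extract_token instead of grep:
#   kubectl -n kube-system describe secret <secret-name> | extract_token
extract_token <<'EOF'
Name:         example-token-abcde
Type:  kubernetes.io/service-account-token
ca.crt:     1025 bytes
namespace:  11 bytes
token:      FAKE.TOKEN.VALUE
EOF
```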